
Featured Content

-

How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

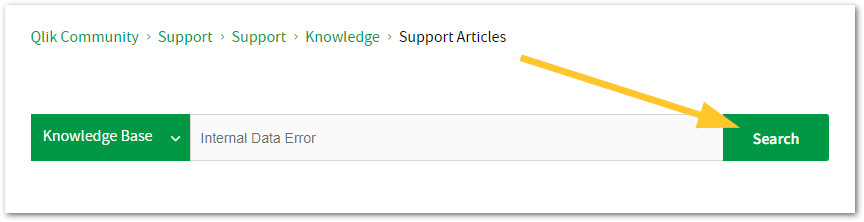

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

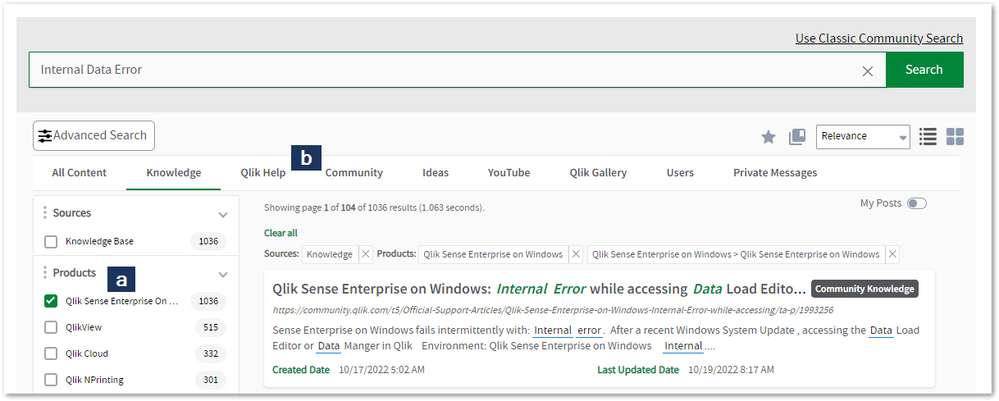

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our Youtube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar to facilitate knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to: qlikid.qlik.com/register

- You must enter your company name exactly as it appears on your license or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat, by clicking Chat Now on any Support Page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- Create a case on your behalf, using step-by-step intake questions, for items that need further investigation

3. Qlik Support Case Portal

Log in to manage and track your active cases in Manage Cases.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter problem type and issue level. Definitions shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not Severity 1 Error or Severity 2 Issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents

-

Qlik Sense Utility - Functions and Features

The QlikSenseUtil comes bundled with Qlik Sense Enterprise on Windows. Its executable is stored by default in:

%Program Files%\Qlik\Sense\Repository\Util\QlikSenseUtil\QlikSenseUtil.exe

Qlik Sense Util is no longer supported as a backup and restore tool. For the officially supported backup and Restore Process, see Backup and Restore.

It serves as a:

- Qlik Sense Port Checker: Pings every active port Qlik Sense is using for every node. Note that some ports can be for internal use and might not be exposed to the node you are pinging from.

- Data structure checker: Mostly applicable for a synchronized persistence deployment. Checks that the data references are not broken.

- Duplicate server nodes: Mostly applicable for a synchronized persistence deployment. Checks there are no duplicate server nodes in the database.

- Remove old app binaries: Remove deleted app binaries from the selected folder.

- Get system configuration: Returns the system configuration from the database.

- Connection string editor: A tool for editing the connection string.

- Service Cluster: Functionality for updating the service cluster in the database.

- Log Fetcher: A tool for fetching logs from the system. If the time span is shorter than 24 hours, all logs are summarized in a single file. This makes troubleshooting easier if you are looking for events that occurred within a short time frame.

- Fetching logs with a time span longer than 24 hours copies the logs and pastes them in their logical structure. The filter functionality selects every row with the specified keyword and creates log files based on the rows selected.

Note: Qlik Support prefers using the Log Collector included in How To Collect Qlik Sense Log Files

- Service type checker: Mostly applicable for a synchronized persistence deployment. Checking for duplicated service types in the database.

-

Qlik Sense PostgreSQL connector cut off strings containing more than 255 characte...

The native Qlik PostgreSQL connector (ODBC package) does not import strings longer than 255 characters. Such strings are cut off, and the remaining characters are not shown in the applications. No warnings are thrown during script execution.

The problem affects the Qlik connector but not all the DSN drivers.

Environment

Qlik Sense February 2023 and higher versions

Qlik Cloud

Resolution

When setting up the PostgreSQL connection, set the parameter TextAsLongVarchar with value 1 in the connector Advanced settings.

When TextAsLongVarchar is set, and the Max String Length is set to 4096, 4096 characters are loaded.

Note that there are still limitations to this functionality, related to the data type used in the database. Data types like text[] are currently not supported by Simba and are affected by the 255-character limitation even when the TextAsLongVarchar parameter is applied.

Qlik has opened an improvement request with Simba to support them.

As a workaround, it is possible to test a custom connector using a DSN driver to deal with these data types.

Internal Investigation ID

QB-21497

-

Qlik Replicate DB2 Z/OS Source Endpoint Date column not loading to Target

When the DB2 Z/OS source endpoint loads a table with date columns, the date data arrives blank. The source data has valid dates, but they are not loaded to the target in the Qlik Replicate task.

Resolution

Install version 11.5.6 or 11.5.8 of the ODBC driver for DB2 Z/OS on the Qlik Replicate Server.

Cause

ODBC driver 11.5.9, or another version, is installed.

Related Content

z/OS Prerequisites | Qlik Replicate Help

Environment

Qlik Replicate 2023.5

-

Qlik Replicate - MySQL source defect and fix (2023.11)

After an upgrade or fresh installation of Qlik Replicate 2023.11 (builds GA, PR01, and PR02), Qlik Replicate reports errors for MySQL or MariaDB source endpoints. The task repeatedly retries the source capture process but fails; Resume and Startup from timestamp lead to the same result:

[SOURCE_CAPTURE ]T: Read next binary log event failed; mariadb_rpl_fetch error 0 () [1020403] (mysql_endpoint_capture.c:1060)

[SOURCE_CAPTURE ]T: Error reading binary log. [1020414] (mysql_endpoint_capture.c:3998)

Environment:

- Replicate 2023.11 (GA, PR01, PR02)

- MySQL source database, any version

- MariaDB source database, any version

Fix Version & Resolution:

Upgrade to Replicate 2023.11 PR03 (coming soon)

Workaround:

If you are running 2022.11, keep running it.

There is no workaround for 2023.11 (GA, PR01, or PR02).

Cause & Internal Investigation ID(s):

Jira: RECOB-8090, Description: MySQL source fails after upgrade from 2022.11 to 2023.11

There is a bug in MariaDB library version 3.3.5, which Replicate started using in 2023.11.

The bug is fixed in MariaDB library version 3.3.8, which will be shipped with Qlik Replicate 2023.11 PR03 and later versions.

Related Content:

support case #00139940, #00156611

Replicate - MySQL source defect and fix (2022.5 & 2022.11)

-

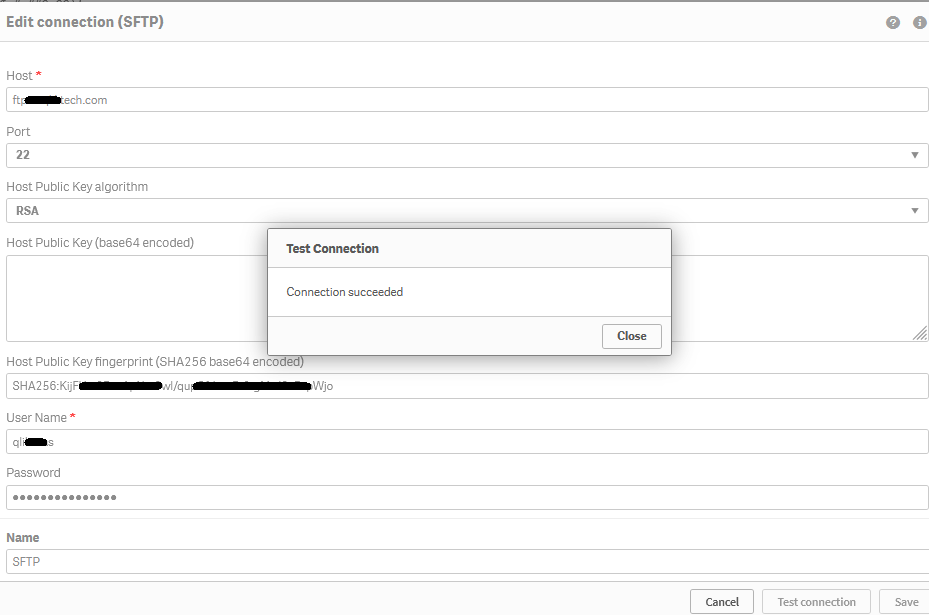

How to create an SFTP data connection in a Qlik Cloud Application using 'fingerp...

Steps

- Retrieve the SFTP server fingerprint (this example uses port 22)

- Open a Windows command prompt

- Run the following command:

sftp -P 22 username@ftptest.test.com

Where 'username' is the username and 'ftptest.test.com' is the SFTP hostname.

- The response received will be similar to:

C:\Windows\System32>sftp -P 22 username@ftptest.test.com

The authenticity of host 'ftptest.test.com (18x.6x.15x.21x)' can't be established.

RSA key fingerprint is SHA256:KijFUxxxxxxxxxxxxxx/xxxxxxxxxxxxxxxxxapWjo

If the above does not retrieve your fingerprint, contact your SFTP server administrator, who can provide fingerprint or public key details for you.

- Copy the RSA fingerprint

- Create a Qlik Cloud Data Connection

- Open your Qlik Cloud Application and navigate to the data load editor

- Under Data Connection choose Create new connection

- Search and select SFTP

- Enter your credentials and fingerprint information

- Hostname

- (e.g. myftpserver.domain.com; do not prepend ftp:// or sftp:// to the address)

- Username

- Password

- Insert fingerprint


- Click Test connection

If there are connectivity issues, to request data from your data connections through a firewall, you need to add the underlying (all three) IP addresses for your region to your firewall's allow list. These addresses are static and will not change. See Allowlisting domain names and IP addresses.
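If the SFTP server administrator supplies a public key instead of a fingerprint, the SHA256 fingerprint format shown in the sftp output above can be derived from it. The following Python sketch assumes an OpenSSH-style public key line (key type, base64 blob, optional comment). The function name sha256_fingerprint and the sample key material are hypothetical and are not part of any Qlik tooling.

```python
import base64
import hashlib


def sha256_fingerprint(public_key_line: str) -> str:
    """Return the OpenSSH-style SHA256 fingerprint of a public key line.

    Expects the '<key-type> <base64-blob> [comment]' layout used by
    OpenSSH *.pub files.
    """
    fields = public_key_line.split()
    if len(fields) < 2:
        raise ValueError("expected '<key-type> <base64-blob> [comment]'")
    key_blob = base64.b64decode(fields[1], validate=True)
    digest = hashlib.sha256(key_blob).digest()
    # OpenSSH prints the digest base64-encoded without '=' padding.
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")


if __name__ == "__main__":
    # Hypothetical key material for illustration only (not a real RSA key).
    sample = "ssh-rsa " + base64.b64encode(b"example key blob").decode("ascii")
    print(sha256_fingerprint(sample))
```

Compare the result with the value reported by the server before entering it in the connection dialog; if the two differ, the key most likely belongs to a different host or key type.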

Other Diagnostics:

Error in SFTP connector

- Error shows public key mismatch error in Qlik SFTP connector

Diagnostic

- Open Command Prompt and enter "ssh-keyscan -t rsa <host ip or name> | ssh-keygen -lf -"

Example: (insert a valid SFTP server host IP)

- C:\Windows\System32>ssh-keyscan -t rsa 192.168.xxx.255 | ssh-keygen -lf -

Result:

- "(stdin) is not a public key file"

In case of this or other SFTP connection errors, work with the SFTP server administrator or SFTP server vendor to enable the generation of a publicly available fingerprint.

Related Information:

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

QMC Reload Failure Despite Successful Script in Qlik Sense Nov 2023 and above

Reload fails in the QMC even though the script part is successful in Qlik Sense Enterprise on Windows November 2023 and above.

When you are using NetApp-based storage, you might see an error when trying to publish and replace or reload a published app. In the QMC, you will see that the script load itself finished successfully, but the task failed after that.

ERROR QlikServer1 System.Engine.Engine 228 43384f67-ce24-47b1-8d12-810fca589657

Domain\serviceuser QF: CopyRename exception:

Rename from \\fileserver\share\Apps\e8d5b2d8-cf7d-4406-903e-a249528b160c.new

to \\fileserver\share\Apps\ae763791-8131-4118-b8df-35650f29e6f6

failed: RenameFile failed in CopyRenameExtendedException: Type '9010' thrown in file

'C:\Jws\engine-common-ws\src\ServerPlugin\Plugins\PluginApiSupport\PluginHelpers.cpp'

in function 'ServerPlugin::PluginHelpers::ConvertAndThrow'

on line '149'. Message: 'Unknown error' and additional debug info:

'Could not replace collection

\\fileserver\share\Apps\8fa5536b-f45f-4262-842a-884936cf119c] with

[\\fileserver\share\Apps\Transactions\Qlikserver1\829A26D1-49D2-413B-AFB1-739261AA1A5E],

(genericException)'

<<< {"jsonrpc":"2.0","id":1578431,"error":{"code":9010,"parameter":

"Object move failed.","message":"Unknown error"}}

ERROR Qlikserver1 06c3ab76-226a-4e25-990f-6655a965c8f3

20240218T040613.891-0500 12.1581.19.0

Command=Doc::DoSave;Result=9010;ResultText=Error: Unknown error

0 0 298317 INTERNAL sa_scheduler b3712cae-ff20-4443-b15b-c3e4d33ec7b4

9c1f1450-3341-4deb-bc9b-92bf9b6861cf Taskname Engine Not available

Doc::DoSave Doc::DoSave 9010 Object move failed.

06c3ab76-226a-4e25-990f-6655a965c8f3

Resolution

Potential workarounds

- Roll back your upgrade to a version earlier than November 2023

- Change the storage to a file share on a Windows server

Cause

The most plausible cause currently is that the specific engine version has issues releasing File Lock operations. We are actively investigating the root cause, but there is no fix available yet.

An update will be provided as soon as there is more information to share.

Internal Investigation ID(s)

QB-25096

QB-26125

Environment

- Qlik Sense Enterprise on Windows November 2023 and above

-

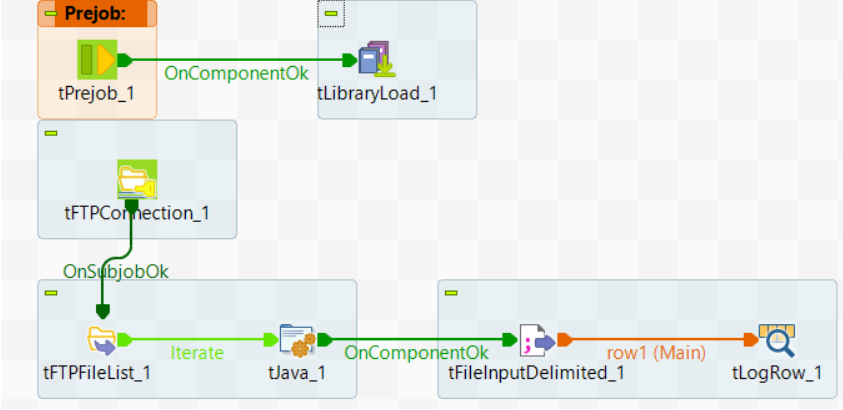

Talend Data Integration: Reading FTP files directly without downloading them to ...

The dedicated tFTPxxx components do not support reading files directly from FTP servers. Usually the file is obtained from the FTP server to the local system first and then the file is read. However, this can cause performance issues when many files or large files need to be read.

Resolution

One solution is to use the JSch library, which allows the creation of an InputStream object from a file via SFTP. Then, use the tFileInputDelimited component to read data from the InputStream object. This avoids downloading the file to the local system.

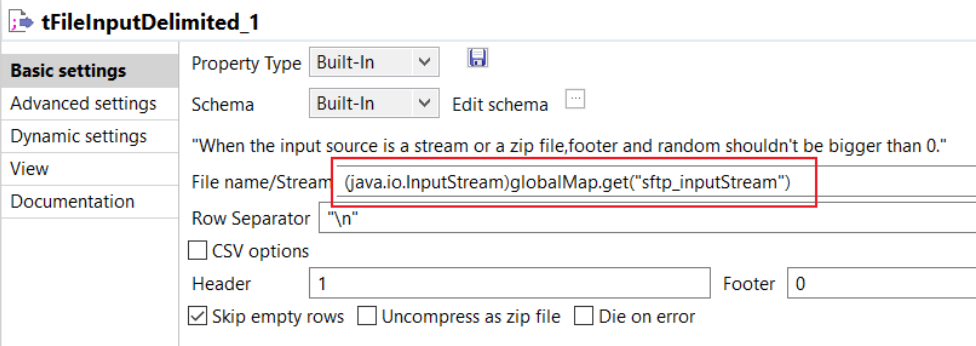

For example, in the following Job, the Java code iterates over the FTP files one by one, using a JSch library file and a tFileInputDelimited component to read the file directly.

The tLibraryLoad component loads the jsch-0.1.55.jar JSch library file, then the tJava component uses the following code to create the InputStream object for the current FTP file.

// Assumes com.jcraft.jsch.* is imported (for example, in the tJava component's Advanced settings).
JSch jsch = new JSch();
Session session = null;
try {
    session = jsch.getSession("username", "localhost", 22);
    // Host key checking is disabled in this example.
    session.setConfig("StrictHostKeyChecking", "no");
    session.setPassword("password");
    session.connect();
    Channel channel = session.openChannel("sftp");
    channel.connect();
    ChannelSftp sftpChannel = (ChannelSftp) channel;
    // Open the current file as a stream instead of downloading it.
    java.io.InputStream in = sftpChannel.get(
        ((String) globalMap.get("tFTPFileList_1_CURRENT_FILE")));
    // Make the stream available to the tFileInputDelimited component.
    globalMap.put("sftp_inputStream", in);
} catch (JSchException e) {
    e.printStackTrace();
} catch (SftpException e) {
    e.printStackTrace();
}

The tFileInputDelimited component reads the data from the InputStream object:

Environment

-

Qlik Talend Products: Java 17 Migration Guide

From R2024-05, Java 17 will become the only supported version to start most Talend modules, enforcing the improved security of Java 17 and eliminating concerns about Java's end-of-support for older versions. In 2025, Java 17 will become the only supported version for all operations in Talend modules.

Starting from v2.13, Talend Remote Engine requires Java 17 to run. If some of your artifacts, such as Big Data Jobs, require other Java versions, see Specifying a Java version to run Jobs or Microservices.

Content

- Prerequisites

- Procedure

- Windows

- Linux

- MAC OS

- Multiple JDK versions

- Studio

- Remote Engine

- ESB - Runtime

- Studio

- Talend Administration Center (TAC)

- CICD

- Windows Users

- Linux Users

- Jenkins Users

- Additional Notes

- Specifying a Java version to run Jobs or Microservices

Prerequisites

Qlik Talend Module: Patch Level and Version

- Studio: Supported from R2023-10 onwards

- Remote Engine: 2.13 or later

- Runtime: 8.0.1-R2023-10 or later

Procedure

Windows

For Windows users, please follow the JDK installation guide (docs.oracle.com).

Linux

For Linux users, please follow the JDK installation guide (docs.oracle.com).

MAC OS

For MAC OS users, please follow the JDK installation guide (docs.oracle.com).

Multiple JDK versions

When working with software that supports multiple versions of Java, it's important to be able to specify the exact Java version you want to use. This ensures compatibility and consistent behavior across your applications. Here is how you can specify a specific Java version on the following products (such as build servers, shared application server, and similar):

Studio

For Studio users who are using multiple JDKs, follow the appropriate instructions listed above, then the following additional steps:

- Back up and edit the <Studio Home>\Talend-Studio-win-x86_64.ini file

- Prepend:

-vm

<JDK17 HOME>\bin\server\jvm.dll

Remote Engine

For Remote Engine (RE) users who are using multiple JDKs, follow the appropriate instructions listed above, then the following additional steps.

- Back up and edit the <RE HOME>/etc/talend-remote-engine-wrapper.conf file

- Modify the set.default.JAVA_HOME= property to point to the <JDK 17 HOME> path.

Note 1: If Remote Engine is not installed as a service, the JDK path is set in the <RE HOME>/bin/setenv file.

Note 2: When it comes to running Jobs or Microservices, you retain the flexibility to either use the default Java 17 version or choose older Java versions, through straightforward configuration of the engine.

How to modify?

In the etc folder, check the following configuration files and set the listed property to the path of the installed JDK/JRE:

- org.talend.ipaas.rt.dsrunner.cfg: ms.custom.jre.path

- org.talend.remote.jobserver.server.cfg: org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH

ESB - Runtime

For Runtime users who are using multiple JDKs, follow the appropriate instructions listed above, then the following additional steps.

- Back up and edit the <Runtime home>/etc/<TALEND-8-CONTAINER service>-wrapper.conf file

- Modify the set.default.JAVA_HOME=C:\<JDK 17 HOME> path

If Runtime is not running as a service:

- Back up and edit the <Runtime home>/bin/setenv.sh file

- Modify the SET JAVA_HOME= line to point to the <JDK 17 HOME> path

Studio

- Data Integration (DI): After installing the 8.0 R2023-10 Talend Studio monthly update or a later one, if you switch the Java version to 17 and relaunch your Talend Studio with Java 17, you must enable your project settings for Java 17 compatibility.

- Go to Studio

- Go to File

- Edit Project properties

- Go to Build

- Go to Java Version

- Activate "Enable Java 17 compatibility"

With the Enable Java 17 compatibility option activated, any Job built by Talend Studio cannot be executed with Java 8. For this reason, verify the Java environment on your Job execution servers before activating the option.

- Big Data Users: Do not enable Java 17 compatibility unless your Spark Cluster supports Java 17.

Talend Administration Center (TAC)

To use Talend Administration Center with Java 17, you need to open the <tac_installation_folder>/apache-tomcat/bin/setenv.sh file and add the following commands:

# export modules
export JAVA_OPTS="$JAVA_OPTS --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Windows users use <tac_installation_folder>\apache-tomcat\bin\setenv.bat

CICD

Windows Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Back up and edit <Maven_home>\bin\mvn.cmd

- Modify to:

set "MAVEN_OPTS=%MAVEN_OPTS% --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Linux Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Back up and edit <Maven_home>/bin/mvn

- Modify to:

export MAVEN_OPTS="$MAVEN_OPTS \
--add-opens=java.base/java.net=ALL-UNNAMED \
--add-opens=java.base/sun.security.x509=ALL-UNNAMED \
--add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"
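Before running a CI build, it can help to confirm that all three --add-opens flags actually reached MAVEN_OPTS. The following Python sketch assumes the required flags are exactly the three listed in the Maven options above. The helper name missing_add_opens is hypothetical and is not part of Talend or Maven.

```python
import os
import shlex

# The three flags listed in the Maven options above.
REQUIRED_ADD_OPENS = (
    "--add-opens=java.base/java.net=ALL-UNNAMED",
    "--add-opens=java.base/sun.security.x509=ALL-UNNAMED",
    "--add-opens=java.base/sun.security.pkcs=ALL-UNNAMED",
)


def missing_add_opens(maven_opts: str) -> list[str]:
    """Return the required flags that are absent from a MAVEN_OPTS string."""
    present = set(shlex.split(maven_opts))
    return [flag for flag in REQUIRED_ADD_OPENS if flag not in present]


if __name__ == "__main__":
    missing = missing_add_opens(os.environ.get("MAVEN_OPTS", ""))
    if missing:
        print("MAVEN_OPTS is missing:", " ".join(missing))
    else:
        print("MAVEN_OPTS contains all required --add-opens flags")
```

Run it in the same shell or build agent session that launches Maven, since MAVEN_OPTS set inside the mvn script itself is not visible to other processes.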

Jenkins Users

- Back up and edit the jenkins_pipeline_simple.xml

- Include the following in the TALEND_CI_RUN_CONFIG parameter:

<name>TALEND_CI_RUN_CONFIG</name>
<description>Define the Maven parameters to be used by the product execution, such as:
- Studio location
- debug flags
These parameters will be put to maven 'mavenOpts'.
If Jenkins is using Java 17, add:
--add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED
</description>

Additional Notes

Specifying a Java version to run Jobs or Microservices

Overview

Enable your Remote Engine to run Jobs or Microservices using a specific Java version.

By default, a Remote Engine uses the Java version of its environment to execute Jobs or Microservices. With Remote Engine v2.13 and onwards, Java 17 is mandatory for engine startup. However, when it comes to running Jobs or Microservices, you can specify a different Java version. This feature allows you to use a newer engine version to run artifacts designed with older Java versions, without the need to rebuild them. One example is Big Data Jobs, which rely on Java 8 only.

When developing new Jobs or Microservices that do not exclusively rely on Java 8, that is to say, they are not Big Data Jobs, consider building them with the add-opens option to ensure compatibility with Java 17. This option opens the necessary packages for Java 17 compatibility, making your Jobs or Microservices directly runnable on the newer Remote Engine version, without having to go through the procedure explained in this section for defining a specific Java version. For further information about how to use this add-opens option and its limitation, see Setting up Java in Talend Studio.

Procedure

- Stop the engine.

- Browse to the <RemoteEngineInstallationDirectory>/etc directory.

- Depending on the type of the artifacts you need to run with a specific Java version, do the following:

For both artifact types, use backslashes to escape characters specific to a Windows path, such as colons, whitespace, and directory separators, while keeping in mind that directory separators are also backslashes on Windows.

Example:

c:\\Program\ Files\\Java\\jdk11.0.18_10\\bin\\java.exe

- For Jobs, in the <RemoteEngineInstallationDirectory>/etc/org.talend.remote.jobserver.server.cfg file, add the path to the Java executable file.

Example:

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=c:\\jdks\\jdk11.0.18_10\\bin\\java.exe

- For Microservices, in the <RemoteEngineInstallationDirectory>/etc/org.talend.ipaas.rt.dsrunner.cfg, add the path to the Java executable file.

Example:

ms.custom.jre.path=C\:/Java/jdk/bin

Make this modification before deploying your Microservices to ensure that these changes are correctly taken into account.


- Restart the engine.
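The escaping rule described in the procedure above (backslash-escape colons, whitespace, and directory separators) can be sketched in Python as follows. This is a minimal illustration that assumes the .cfg files are read as Java-properties-style files, where an escaped colon and a plain colon yield the same value. The function name escape_properties_path is hypothetical.

```python
def escape_properties_path(path: str) -> str:
    """Backslash-escape colons, spaces, and backslashes in a path."""
    escaped = []
    for ch in path:
        if ch in "\\: ":
            # Prefix each special character with a backslash.
            escaped.append("\\" + ch)
        else:
            escaped.append(ch)
    return "".join(escaped)


if __name__ == "__main__":
    # Example path only; point it at the Java executable you actually use.
    java_exe = r"c:\Program Files\Java\jdk11.0.18_10\bin\java.exe"
    key = ("org.talend.remote.jobserver.commons.config."
           "JobServerConfiguration.JOB_LAUNCHER_PATH")
    print(f"{key}={escape_properties_path(java_exe)}")
```

Paths that use forward slashes and contain no colons or spaces pass through unchanged, so the same helper can be applied to Linux paths.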

-

Qlik Replicate and MS-CDC source endpoint: CDC Data Replication stopped

The Qlik Replicate task log (with source_capture and trace logging enabled) shows LSN information similar to:

00023576: 2024-03-08T17:46:03 [SOURCE_CAPTURE ]T: MS-CDC capture loop. SQL Server start time '2024-03-06 22:41:29.170', start LSN '000A9B8100000050000D', end time '2024-03-08 19:46:04', end LSN '' (sqlserver_mscdc.c:3684)

00023576: 2024-03-08T17:46:08 [SOURCE_CAPTURE ]T: MS-CDC capture loop. SQL Server start time '2024-03-06 22:41:29.170', start LSN '000A9B8100000050000D', end time '2024-03-08 19:46:09', end LSN '' (sqlserver_mscdc.c:3684)

This indicates the LSN is looping and that the LSN was purged.

Resolution

- If you can restore the LSN, the task can be resumed.

- If the LSN cannot be restored, the task will require a full reload.
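To spot the looping pattern described above in a long task log, a small script can look for consecutive capture-loop entries that repeat the same start LSN with an empty end LSN. The Python sketch below assumes the log line layout shown in this article. The function name find_stalled_lsn and the min_repeats threshold are hypothetical choices, and a match only flags a candidate for manual review.

```python
import re
from typing import Iterable, Optional

# Matches the capture-loop trace lines shown in this article.
CAPTURE_LOOP = re.compile(
    r"MS-CDC capture loop\..*?start LSN '(?P<start>[0-9A-Fa-f]*)'"
    r".*?end LSN '(?P<end>[0-9A-Fa-f]*)'"
)


def find_stalled_lsn(lines: Iterable[str], min_repeats: int = 2) -> Optional[str]:
    """Return a start LSN that repeats with an empty end LSN, else None."""
    previous_start = None
    repeats = 0
    for line in lines:
        match = CAPTURE_LOOP.search(line)
        if match is None:
            continue  # not a capture-loop entry
        start, end = match.group("start"), match.group("end")
        if end == "" and start == previous_start:
            repeats += 1
        elif end == "":
            previous_start, repeats = start, 1
        else:
            previous_start, repeats = None, 0  # capture is advancing
        if repeats >= min_repeats:
            return start
    return None


if __name__ == "__main__":
    sample = [
        "00023576: 2024-03-08T17:46:03 [SOURCE_CAPTURE ]T: MS-CDC capture loop. "
        "SQL Server start time '2024-03-06 22:41:29.170', start LSN "
        "'000A9B8100000050000D', end time '2024-03-08 19:46:04', end LSN '' "
        "(sqlserver_mscdc.c:3684)",
        "00023576: 2024-03-08T17:46:08 [SOURCE_CAPTURE ]T: MS-CDC capture loop. "
        "SQL Server start time '2024-03-06 22:41:29.170', start LSN "
        "'000A9B8100000050000D', end time '2024-03-08 19:46:09', end LSN '' "
        "(sqlserver_mscdc.c:3684)",
    ]
    print(find_stalled_lsn(sample))
```

Raising min_repeats reduces false positives on sources that are simply idle for a few polling intervals.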

Environment

-

Qlik Replicate and Oracle target: ORA-03156: OCI call timed out

A Qlik Replicate task with an Oracle target fails with the following in the task log:

[TARGET_LOAD ]T: ORA-03156: OCI call timed out

This can be expected with long-running executions during a full load.

Resolution

To disable the timeout, set the Internal Parameter executeTimeout on the Oracle target endpoint.

- Go to the Oracle Endpoint connection

- Switch to the Advanced tab

- Click Internal Parameters

- Add executeTimeout with a value of 0

For more information about Internal Parameters, see Qlik Replicate: How to set Internal Parameters and what are they for?

Environment

-

SQL MS-Replication: How to find tables (articles) in a Publication

This article briefly explains how to find what tables (articles) are used in a Publication. This method is useful to identify issues when a table does not capture updates.

Resolution

Run the following query from SQL Server Management Studio or any database utility (such as DBeaver):

SELECT

msp.publication AS PublicationName,

msa.publisher_db AS DatabaseName,

msa.article AS ArticleName,

msa.source_owner AS SchemaName,

msa.source_object AS TableName

FROM distribution.dbo.MSarticles msa

JOIN distribution.dbo.MSpublications msp ON msa.publication_id = msp.publication_id

ORDER BY

msp.publication,

msa.article;

Environment

-

Qlik Compose Data Warehouse - Incremental Load Patterns for Non-Replicate Data S...

Qlik’s Data Integration Platform provides functionality to support near real-time data delivery from traditional sources, files, and some SaaS environ... Show MoreQlik’s Data Integration Platform provides functionality to support near real-time data delivery from traditional sources, files, and some SaaS environments via Qlik Replicate. Qlik Compose for Data Warehouses is integrated with Qlik Replicate to automate the incremental load process of the data warehouse based on change data capture (CDC) from the source system.

However, there are certain scenarios where the automated incremental load processes cannot be leveraged, for example SAP HANA log-based replication, third-party data ingestion, or cloud data sharing programs.

In those instances, there are extract, transform, and load (ETL) patterns that can be implemented in Qlik Compose to support incremental data loads. This paper describes these scenarios and shows how to implement custom incremental load patterns in Qlik Compose and how those patterns can be applied to query-based or view-based mappings.

-

QlikView HSTS (HTTP Strict-Transport-Security response header)

HSTS (HTTP Strict-Transport-Security response header) security check failed.

HTTP Strict Transport Security (HSTS) is a policy mechanism that helps to protect websites against man-in-the-middle attacks such as protocol downgrade attacks and cookie hijacking. It allows web servers to declare that web browsers (or other complying user agents) should automatically interact with it using only HTTPS connections, which provide Transport Layer Security (TLS/SSL), unlike the insecure HTTP used alone.

Resolution

Before adding HSTS to either the QlikView AccessPoint or the QlikView Management Console (QMC), set both up to use HTTPS. See QlikView AccessPoint and QMC with HTTPS and a custom SSL certificate for instructions.

HSTS for the QlikView AccessPoint

Custom response headers can be set in both the QlikView WebServer (beginning with 12.30) and Microsoft IIS (all QlikView versions).

The custom header needed for HSTS is: Strict-Transport-Security

- Run a text editor (e.g. Notepad) as Administrator

- Edit the QlikView WebServer configuration file. The default path is C:\ProgramData\QlikTech\WebServer\config.xml

- Locate the CustomHeaders element within the config file. For more information, see QlikView WebServer: Custom HTTP Header.

- Add the custom response header as a <Header> element with sub-elements defining Strict-Transport-Security as the name and your desired max-age= as the value.

Example:

<Config>
  ...
  <Web>
    ...
    <CustomHeaders>
      <Header>
        <Name>Strict-Transport-Security</Name>
        <Value>max-age=31536000</Value>
      </Header>
    </CustomHeaders>
  </Web>
</Config>

- Restart the QlikView WebServer service

For information on how to configure custom headers with Microsoft IIS, see Setting Custom HTTP Headers in IIS for QlikView. The site https://https.cio.gov/hsts/ gives information on how to set up the web server to enable HSTS.
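As a quick local check after restarting the service, the header value can be fetched and parsed with a short script. The following is a minimal Python sketch, not part of QlikView: the function names parse_hsts and fetch_hsts are hypothetical, and fetch_hsts assumes you pass the HTTPS address of your own AccessPoint.

```python
import urllib.request


def parse_hsts(header_value):
    """Parse a Strict-Transport-Security header value into a dict.

    Directive names are case-insensitive, so they are lower-cased.
    Valueless directives such as includeSubDomains map to True.
    """
    directives = {}
    for part in header_value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, sep, value = part.partition("=")
        name = name.strip().lower()
        if sep:
            value = value.strip().strip('"')
            # max-age is a number of seconds; other values stay as text.
            directives[name] = int(value) if name == "max-age" else value
        else:
            directives[name] = True
    return directives


def fetch_hsts(url):
    """Return the parsed HSTS header for url, or None if it is absent.

    Hypothetical helper: url is assumed to be your AccessPoint's HTTPS URL.
    """
    with urllib.request.urlopen(url) as response:
        value = response.headers.get("Strict-Transport-Security")
    return parse_hsts(value) if value is not None else None


if __name__ == "__main__":
    # Offline demonstration using the value from the config.xml example.
    print(parse_hsts("max-age=31536000"))
```

If fetch_hsts returns None for the AccessPoint URL, the custom header is not being sent, which usually points back to the CustomHeaders element or a service that has not been restarted.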

Testing can be achieved using any number of third-party sites.

HSTS for the QlikView Management Console (QMC)

This setting was introduced with QlikView 12.70 (May 2022) SR1.

QVManagementService.exe.Config Changes:

- Stop the QlikView Management Services

- Go to Program Files => QlikTech => Management Service and open QVManagementService.exe.config in a text editor (e.g. Notepad) run as Administrator

- Set the following value to true:

<add key="UseHSTS" value="true" />

- For the HSTS header to be enabled, the following value must also be set to true:

<add key="UseHTTPS" value="true" />

Environment:


-

Connector reply error: Executing non-SELECT queries is disabled. Please contact ...

Qlik ODBC connector package (database connector built-in Qlik Sense) fails to reload with error Connector reply error:

Executing non-SELECT queries is disabled. Please contact your system administrator to enable it.

The issue is observed when the statement following the SQL keyword is not SELECT but another statement, such as INSERT, UPDATE, WITH .. AS, or a stored procedure call.

Environment:

- Qlik Sense Enterprise on Windows

- Qlik Sense Enterprise SaaS

- Qlik Sense Desktop

- QlikView Server

- QlikView Desktop

Cause

See the Qlik Sense February 2019 Release Notes for details on item QVXODBC-1406.

Resolution

By default, non-SELECT queries are disabled in the Qlik ODBC Connector Package, and users get this error message if such a query is present in the load script. To enable non-SELECT queries, the Qlik administrator must set the allow-nonselect-queries setting to True.

To enable non-SELECT queries:

- Modify the QvOdbcConnectorPackage.exe.config found in the locations mentioned below.

Set the parameter allow-nonselect-queries to True

This is case-sensitive. true will not work.

In a multi-node environment, the changes need to be applied to all nodes.

Configuration file QvOdbcConnectorPackage.exe.config locations:

- Qlik Sense Enterprise: C:\Program Files\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage

- Qlik Sense Desktop: C:\Users\user-name\AppData\Local\Programs\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage

- QlikView: C:\Program Files\Common Files\QlikTech\Custom Data\QvOdbcConnectorPackage

The configuration files are overwritten during an upgrade, so this change must be made again after each upgrade.

- Non-select statements are now enabled and generally this is sufficient to resolve the issue.

If, however, you need to run SQL statements without any data table returned (INSERT/ UPDATE/ DELETE/ DROP), you can optionally add the keyword !EXECUTE_NON_SELECT_QUERY at the end of the query. Other queries that return data (such as WITH ... AS in PostgreSQL) do not need this keyword.

The !EXECUTE_NON_SELECT_QUERY keyword signals to the script processing engine that the query may not return any data.

Only apply !EXECUTE_NON_SELECT_QUERY if you use the default connector settings (such as bulk reader enabled and reading strategy "connector"). Applying !EXECUTE_NON_SELECT_QUERY to non-default settings may lead to unexpected reload results and/or error messages.
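The rule above (only statements that return no data table need the keyword) can be illustrated with a small helper. This is a minimal Python sketch under stated assumptions: the function names are hypothetical, the keyword list is limited to the four statements named in this article, and where the load script's terminating semicolon goes is left to the script author (see the connector help site).

```python
# Statements the article lists as returning no data table.
NO_RESULT_KEYWORDS = ("INSERT", "UPDATE", "DELETE", "DROP")
MARKER = "!EXECUTE_NON_SELECT_QUERY"


def returns_no_data(sql):
    """True if the statement starts with one of the listed keywords."""
    stripped = sql.lstrip()
    first_word = stripped.split(None, 1)[0].upper() if stripped else ""
    return first_word in NO_RESULT_KEYWORDS


def with_marker(sql):
    """Append the marker to statements that return no data table.

    Statements that return data (SELECT, WITH ... AS) are passed
    through unchanged apart from trimming a trailing semicolon.
    """
    statement = sql.strip().rstrip(";").rstrip()
    if returns_no_data(statement) and not statement.endswith(MARKER):
        return f"{statement} {MARKER}"
    return statement


if __name__ == "__main__":
    # Table names below are placeholders for illustration only.
    for query in ("DELETE FROM staging_table WHERE id = 1;",
                  "WITH x AS (SELECT 1) SELECT * FROM x"):
        print(with_marker(query))
```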

More details are documented in the Qlik ODBC Connector package help site.

Related Content:

Feature Request Delivered: Executing non-SELECT queries with Qlik Sense Business

Execute SQL Set statements or Non Select Queries

-

How to enable SAP HANA CTS Mode support in Qlik Replicate

Qlik Replicate can take advantage of the SAP HANA CTS mode feature.

CTS mode must be enabled on the SAP HANA Source.

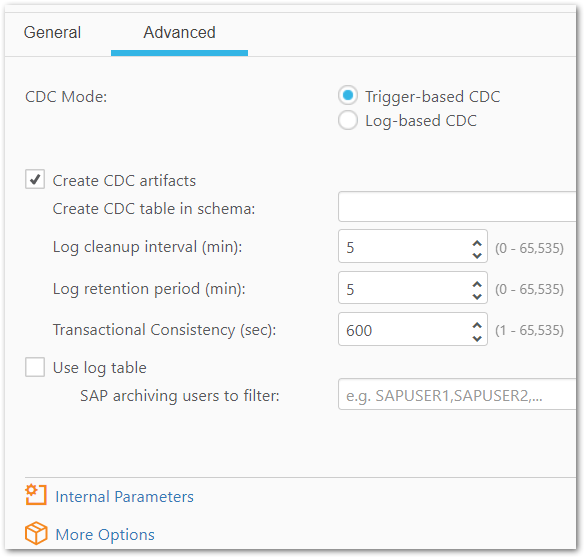

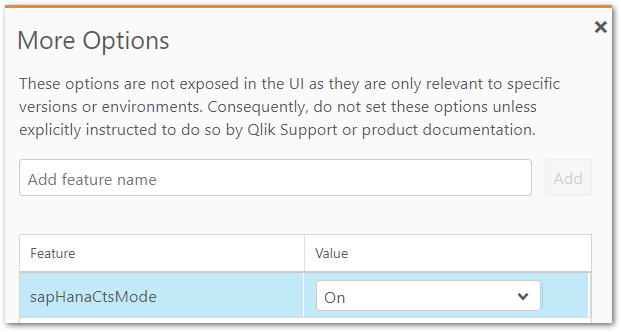

To enable the feature in Qlik Replicate:

- In the Qlik Replicate console, navigate to your SAP HANA Source Endpoint

- Open the Advanced tab.

You need to manually drop the current triggers, because with CTS enabled the change table is named attrep_cdc_changes_cts, not attrep_cdc_changes. By default, attrep_cdc_changes_cts is partitioned with a default value of 100,000,000 rows. SAP HANA allows a maximum of 16K partitions. Updating the threshold for each partition can impact performance and should therefore be thoroughly tested in a non-production environment. These steps do not cover the migration to CTS mode, where you can stop and/or resume the tasks by enabling this feature.

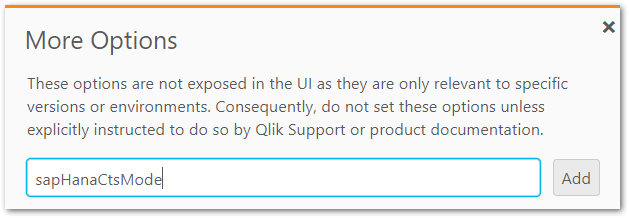

- Click More Options

- Input the feature sapHanaCtsMode and click Add

- Set the value to On

- The new settings will take effect when you stop and restart the task from the endpoint.

Environment

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Related Content

Change CTS Configuration | SAP HANA Help Portal

-

Qlik Replicate Log Stream task error: End of time-line reached and all records w...

When a reload target is performed on the Log Stream staging task (parent), the following error appears in every Log Stream remote task (child):

[SOURCE_CAPTURE ]W: End of timeline, no more records to read (ar_cdc_channel.c:1210)

[SORTER ]E: End of time-line reached and all records were applied, task will stop [1020101] (sorter_transaction.c:3486)

The task stops replication to the remote task.

Environment

- Qlik Replicate with Log Stream tasks

Resolution

There are two ways to resolve this issue:

Option 1: Reload the Log Stream remote (child) task

Option 2: Start the Log Stream staging task (parent) from the timestamp before its reload. To do this, follow the steps below:

- Find the log of the Log Stream staging task (parent) from just before the reload was performed, i.e. the file reptask_TASKNAMEof_STAGING_TASK?????.log with a date just before the reload.

- Go to the end of that log and find the line that says Final saved task state. The timestamp is at the end of the line.

- Convert the timestamp from epoch time to server time.

- Use that time to start the task.
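The epoch conversion in the third step can be done with any tool; the following is a minimal Python sketch. The function name epoch_to_datetime is hypothetical, and the unit of the timestamp in the log (seconds, milliseconds, or microseconds) is an assumption you need to check against the number of digits in your own log line.

```python
from datetime import datetime, timezone

# Divisors that turn each supported unit into whole seconds.
UNIT_DIVISORS = {"s": 1, "ms": 1_000, "us": 1_000_000}


def epoch_to_datetime(value, unit="s", tz=None):
    """Convert an epoch value to a timezone-aware datetime.

    unit is "s", "ms", or "us". With tz=None the result is expressed
    in the local time zone of the machine running the script, so run
    it on the Replicate server to get server time.
    """
    divisor = UNIT_DIVISORS[unit]
    seconds, remainder = divmod(int(value), divisor)
    microseconds = remainder * (1_000_000 // divisor)
    utc_time = datetime.fromtimestamp(seconds, tz=timezone.utc)
    utc_time = utc_time.replace(microsecond=microseconds)
    return utc_time.astimezone(tz)


if __name__ == "__main__":
    # Example value only; replace it with the timestamp from your log.
    print(epoch_to_datetime(1700000000).isoformat())
```

Passing an explicit time zone, for example tz=timezone.utc, is useful when the script runs on a machine other than the Replicate server.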

-

Qlik Sense ODBC database connector performance increase: useBulkReader

Qlik has introduced an update with Qlik Cloud and Qlik Sense Enterprise on Windows which significantly increases the performance of any ODBC database Connector when working with larger datasets.

The change was rolled out to Qlik Cloud in October 2022 and is available in any Qlik Sense Enterprise on Windows version beginning November 2022.

All new connections automatically have a Bulk Reader feature that uses larger portions of data in the iterations within a load (instead of loading data row by row). This can result in faster load times for larger datasets.

To add this feature to an existing connection, open the connection properties window by selecting Edit and then click Save. The Bulk Reader feature is now turned on. You don’t have to change any connection properties to invoke this feature.

While we believe that most customers will want all their ODBC connections to use this new capability, it is possible to turn off the Bulk Reader feature for a particular connector. To do this, add the parameter useBulkReader with a value of False to the Advanced section of the connector properties window.

Environment

Qlik Cloud

Qlik Sense Enterprise on Windows

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Related Content

-

Qlik Sense : Reloads failing with error "General Script Error in statement handl...

Many reloads are failing on different schedulers with General Script Error in statement handling. The issue is intermittent.

The connection to the data source goes through the Qlik ODBC connectors. The Script Execution log shows a “General Script Error in statement handling” message. Nevertheless, the same execution succeeds if restarted after some time without modifying the script, which appears to be correct.

The engine logs show:

CGenericConnection: ConnectNamedPipe(\\.\pipe\data_92df2738fd3c0b01ac1255f255602e9c16830039.pip) set error code 232

Environment

- Qlik Sense Enterprise on Windows May 2021

- Qlik Sense Enterprise on Windows September 2020 and higher (less frequent occurrence)

Resolution

Change the reading strategy within the current connector:

Set reading-strategy value=engine in C:\Program Files\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage\QvODBCConnectorPackage.exe.config

reading-strategy value=connector should be removed

Switching the strategy to engine may impact non-SELECT queries. See Connector reply error: Executing non-SELECT queries is disabled. Please contact your system administrator to enable it. for details.

Internal Investigation ID(s):

QB-8346

-

Wrong content node response type when previewing Qlik NPrinting reports or execu...

The error message Wrong content node response type and other report failures can be caused by invalid applied filters or a cycle that tries to select an invalid value for the field (a value that doesn't exist in the field).

Note: By default, 'verify filter' is enforced programmatically in NPrinting 17. If a filter produces empty values in a chart, the report is not produced and a Wrong Content Node Type error is shown.

Environment:

Example 1:

Your source contains the Year field that contains the values 2012, 2013 and 2014 but you added a filter with Year = 2015 to the report resulting in an empty data set.

Example 2:

You added to the report the filter Year = 2014 and the Country field into the Levels node. Remember that adding a field in the Levels node is like a filter. When the report is created it is possible that a value of Country has an empty data set for the year selected. For example, there are no sales in Italy in 2014. This generates the error.

-

Qlik Application Automation: How to automatically rerun a failed automation

An automation will not automatically rerun or retry if it fails. You can, however, rerun a failed automation by using an additional automation.

Caution should be taken when implementing this solution to prevent running endless loops or reruns.

Considerations

Before a solution can be implemented you must decide if the rerun should:

- Retry the failed automation run using the same, if any, input.

- Initiate an entirely new run of the automation.

Retry Automation Run

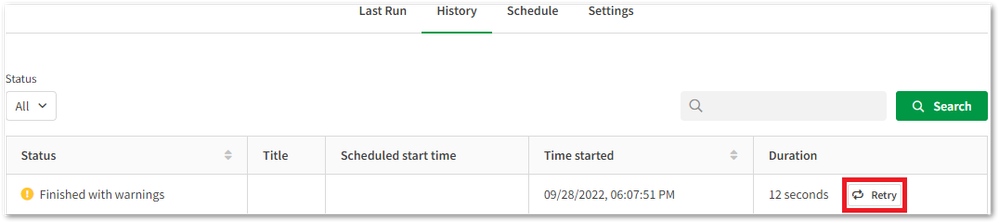

The block Retry Automation Run can be used to retry a specific run of the automation using the same, if any, inputs. This block is useful if the monitored automation has its run mode set to Webhook or Triggered. It is also possible to manually rerun these runs using the Retry button in the automation's run history.

Run Automation

The block Run Automation can be used to initiate a new run of the automation. This block is useful if the automation that fails has the run mode set to Scheduled, and the inputs of the failed run wouldn’t be unique.

Rerun example

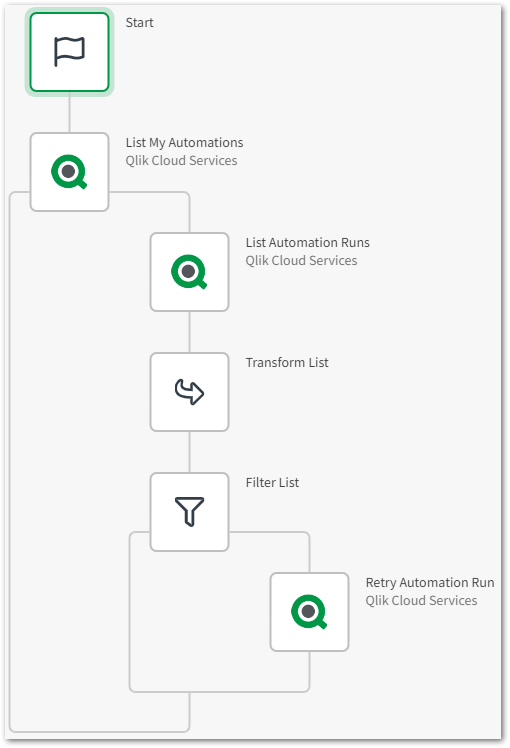

The following example shows how a failed automation can be retried using the Retry Automation Run. Depending on the specifics of the automation you want to rerun, you may want to use the Run Automation block instead.

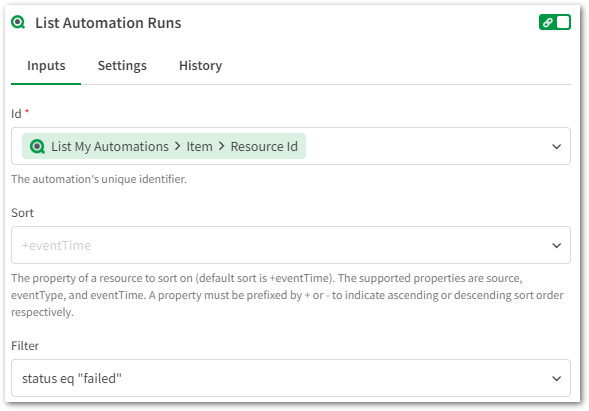

- Use the block List My Automations to get all of your automations.

- Then use the block List Automation Runs to only get the runs that have failed by filtering on status:

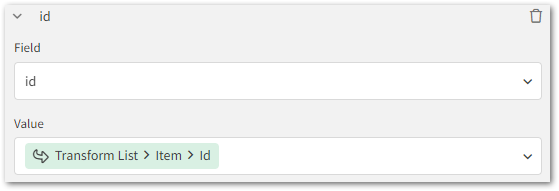

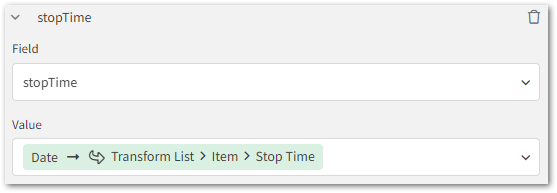

- Use the block Transform List to create a list of failed runs. Add the two fields id and stopTime to this list and configure them as follows:

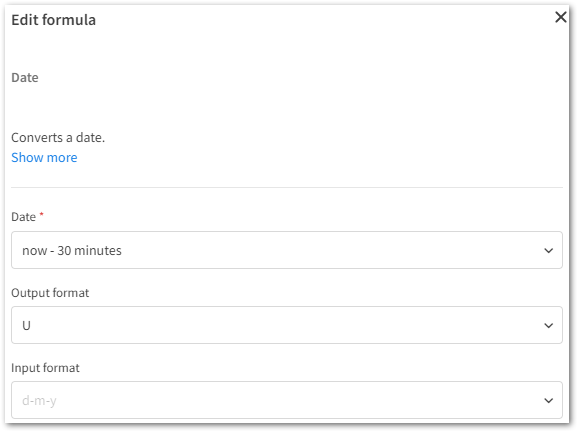

The value for stopTime must be transformed using the Date formula so that the value may be used for comparisons. The output format must be changed to Unix format (U):

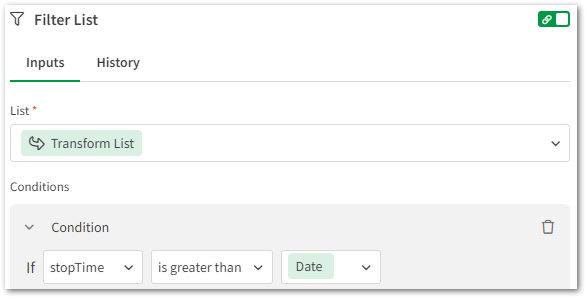

- Now use the block Filter List to only get the most recently failed runs.

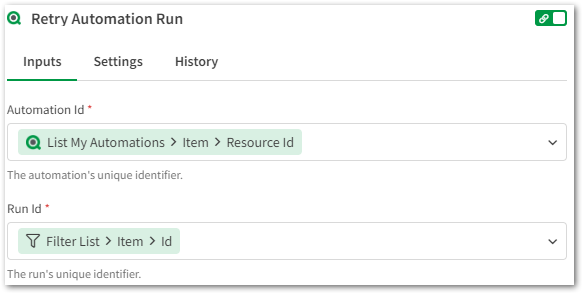

The condition that controls how recently a run must have failed to be retried can be configured according to your needs by modifying the "Date" formula. Ensure that you configure the output format to be in Unix format (U). In this case, runs that have failed within the last 30 minutes will be rerun.

- The final step is to include one of the blocks Retry Automation Run or Run Automation, depending on what you are trying to achieve. This is an example of how the Retry Automation Run should be configured:

In case the Run Automation block is used, only the "Automation Id" is needed as input.
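The filtering logic of the steps above can be summarized outside the automation editor. The following is a minimal Python sketch of that logic only, not of the Qlik Application Automation blocks themselves: the function name recently_failed is hypothetical, and each run is assumed to be a record with the id and stopTime fields produced by the Transform List step, with stopTime already in Unix format.

```python
import time


def recently_failed(runs, window_minutes=30, now=None):
    """Return the ids of failed runs that stopped within the window.

    runs is a list of dicts with "id" and "stopTime" (Unix seconds).
    now defaults to the current time and can be fixed for testing.
    """
    now = time.time() if now is None else now
    cutoff = now - window_minutes * 60
    return [run["id"] for run in runs if run["stopTime"] >= cutoff]


if __name__ == "__main__":
    # Sample data for illustration only.
    sample_runs = [
        {"id": "run-old", "stopTime": 1_000},
        {"id": "run-new", "stopTime": 4_000},
    ]
    print(recently_failed(sample_runs, now=4_600))
```

Keeping the window no longer than the interval at which the monitoring automation runs helps avoid retrying the same failed run more than once, in line with the caution about endless reruns.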

Full Automation

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.