Qlik Sense not using all CPU cores

Hi,

We recently started performance testing ahead of our final deployment. We ran stress tests on a large application with more than 300M rows in the fact table. It runs on a server with dual E5-2667 v4 CPUs (3.2 GHz, 8 cores each) and 128 GB of RAM. We are running in a dedicated VMware environment (no other VMs on the host).

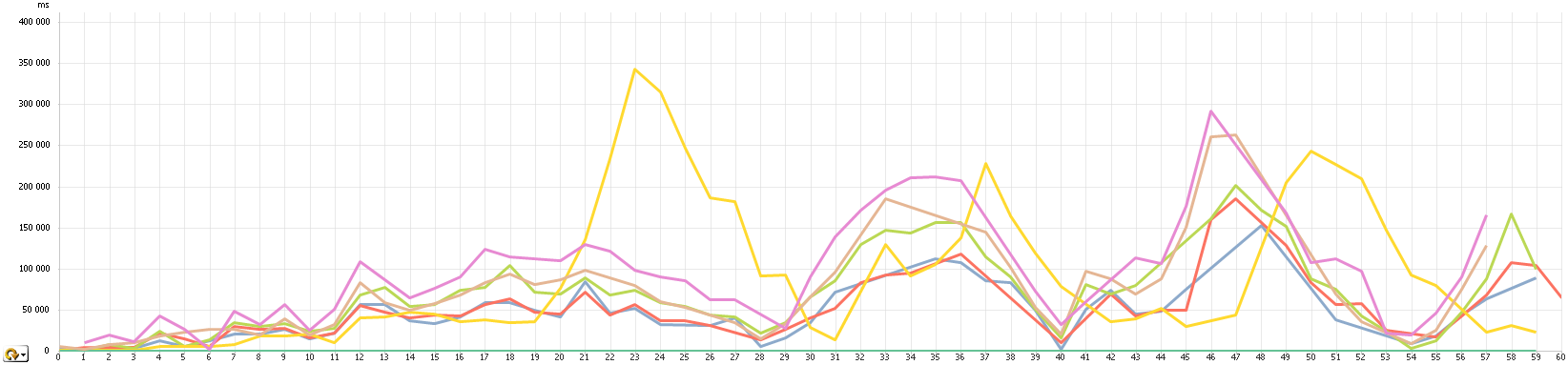

We simulated up to 50 users working concurrently on the server and realized that the hardware was not large enough for such a project: response times ran up to 6 minutes. In the following chart, users are added every 30 seconds.

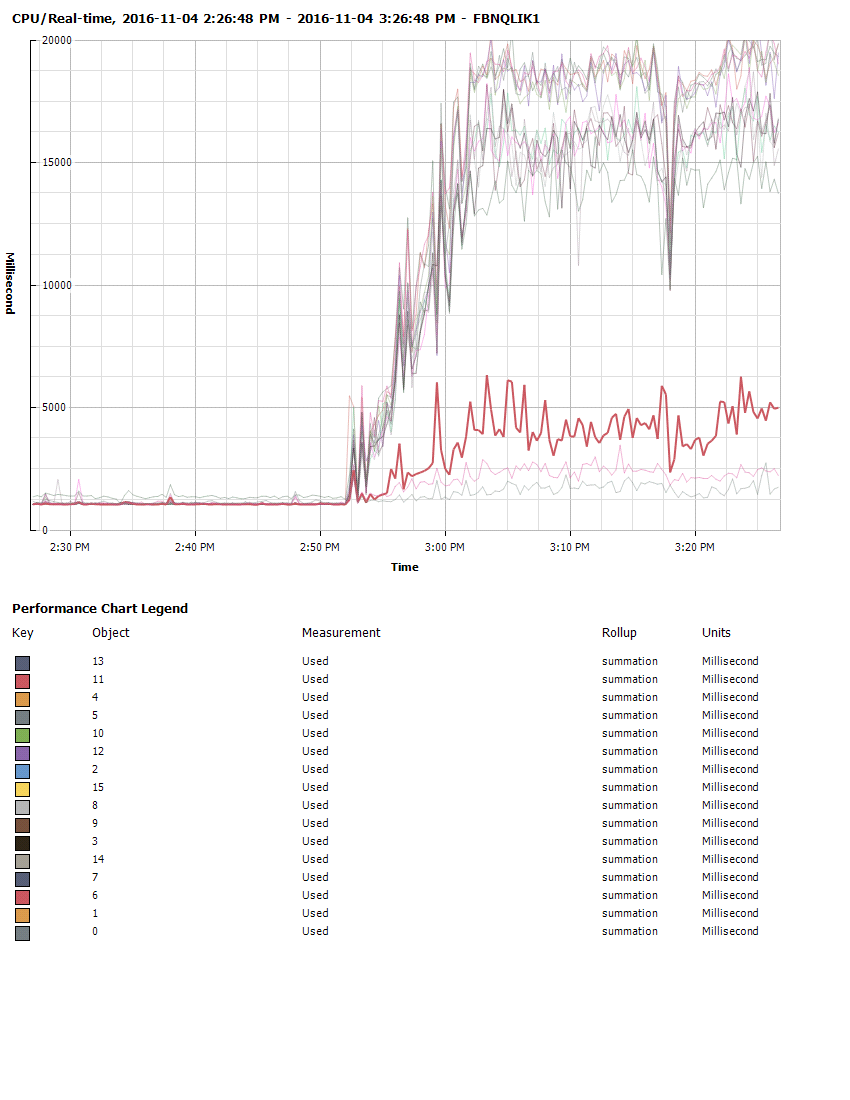

However, overall CPU utilization barely reaches 65%, and some of the CPU cores are never used.

Memory capacity is not an issue in this case: we barely use half of it. Disk and network show little usage. We suspect the bottleneck is either memory speed or the CPU. Any idea why some cores are left idle?
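To pin down which cores stay idle, one option is to log per-core utilization during the test and summarize it afterwards. Below is a minimal sketch in Python, assuming the samples have already been exported as lists of per-core percentages. The function names `idle_cores` and `overall_ceiling` and the 5% threshold are illustrative assumptions, not part of any Qlik or VMware tooling.

```python
from typing import List


def idle_cores(samples: List[List[float]], threshold: float = 5.0) -> List[int]:
    """Return indices of cores whose peak utilization never exceeds `threshold` percent.

    `samples` holds one list per sampling interval, each with one
    percentage per logical core (hypothetical export format).
    """
    if not samples:
        return []
    n_cores = len(samples[0])
    peaks = [0.0] * n_cores
    for sample in samples:
        if len(sample) != n_cores:
            raise ValueError("every sample must list the same number of cores")
        for core, pct in enumerate(sample):
            # Track the highest utilization seen on each core.
            peaks[core] = max(peaks[core], pct)
    return [core for core, peak in enumerate(peaks) if peak <= threshold]


def overall_ceiling(n_cores: int, n_idle: int) -> float:
    """Upper bound on total CPU percent if `n_idle` cores are never scheduled."""
    return 100.0 * (n_cores - n_idle) / n_cores
```

For example, `overall_ceiling(16, 4)` gives 75.0: if 4 of 16 cores never receive work, the aggregate CPU figure cannot exceed 75% even when the remaining cores are saturated.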

Ultimately, we will need to run 300-500M rows in a relatively complex application with up to 200 concurrent users. After load, memory usage is around 40 GB. The QVDs on disk total 26.3 GB. Any idea what hardware this would require?

Thanks!

I'll keep you updated. Interestingly, this is the case here: the smallest charts (in terms of data size, and even those built on fully separate small tables) are the last to arrive.
