
Welcome to

Qlik Community!

Recent Discussions

-

reference line on x-axis -line chart

Hi,

I want to add a reference line on the x-axis of my line chart.

My x-axis is ItemID.

My y-axis is cumulative margin. I am trying to create a whale chart, and I want the reference line to mark the point where the whale curve starts to descend.

This is my line chart so far. I tried to add the reference line with this expression:

Aggr(If(Rangesum(above(total ROUND(sum(margin)),0,RowNo())) = Max(total Rangesum(above(total ROUND(sum(margin)),0,RowNo()))), ItemID), ItemID)

But I don't see any line on my x-axis. Can someone help me, please?
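As a language-neutral illustration of the calculation the expression is aiming at, the sketch below (in Python, not Qlik) accumulates margin over the items in chart order and returns the item at which the cumulative total peaks, which is the point where a whale curve starts to descend. The function name `whale_peak` and the sample data are hypothetical, and the sketch assumes the items are already sorted the way they appear on the x-axis.

```python
from itertools import accumulate

def whale_peak(items):
    """Return (item_id, cumulative_margin) at the peak of the whale curve.

    `items` is a list of (item_id, margin) pairs, already in x-axis order.
    """
    if not items:
        raise ValueError("items must not be empty")
    ids = [item_id for item_id, _ in items]
    # Running total of margin, one value per item.
    running = list(accumulate(margin for _, margin in items))
    # Index of the first occurrence of the maximum running total.
    peak_index = max(range(len(running)), key=lambda i: (running[i], -i))
    return ids[peak_index], running[peak_index]

# Hypothetical sample data: margin per item, sorted from best to worst.
sample = [("A", 50), ("B", 30), ("C", 10), ("D", -5), ("E", -20)]
peak_item, peak_value = whale_peak(sample)
print(peak_item, peak_value)
```

The item returned here is the value a reference line on the dimension axis would need to resolve to: a single ItemID rather than one value per item.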

-

Which feature consumes more resources, Window function or Group by?

Hi,

I have been making good use of the Window function since it was added.

I wonder whether the Window function or Group By consumes more resources.

And which of the two performs faster when loading data?

I can't tell the difference myself because my test data set is small.

Please reply. Thanks!
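For readers comparing the two, the conceptual difference can be sketched outside Qlik. The Python example below is only an illustration with hypothetical names and data: a group-by style aggregation returns one row per key, while a window style aggregation keeps every input row and attaches the partition total to it. It says nothing about how Qlik implements either feature or which one uses more resources; that would need to be measured on a realistic data volume.

```python
from collections import defaultdict

# Hypothetical sample rows.
rows = [
    {"customer": "A", "amount": 10},
    {"customer": "A", "amount": 15},
    {"customer": "B", "amount": 7},
]

def group_by_sum(rows, key, value):
    """Group-by style: one output entry per distinct key."""
    totals = defaultdict(int)
    for row in rows:
        totals[row[key]] += row[value]
    return dict(totals)

def window_sum(rows, key, value):
    """Window style: every input row is kept and gets its partition total."""
    totals = group_by_sum(rows, key, value)
    return [{**row, "partition_total": totals[row[key]]} for row in rows]

grouped = group_by_sum(rows, "customer", "amount")
windowed = window_sum(rows, "customer", "amount")
print(grouped)
print(len(windowed))
```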

-

Qlik Replicate - Using UPSERT mode when writing to Snowflake

Hi Qlik Support,

When using an UPSERT error handling policy (as a result of enabling the "Apply changes using SQL MERGE" option) in a Replicate task that writes to Snowflake, is Replicate able to correctly prevent duplicate records from being written to the Snowflake target, even though Snowflake is known not to enforce the uniqueness of primary keys?

Apologies if this question has already been addressed in a different community post.

Thanks,

Nak
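For context, key-matched merge logic in general can be sketched as follows. This is a generic Python illustration with hypothetical names, not a description of how Replicate or Snowflake implement it: a change whose key already exists updates that row, and a change with a new key inserts one, so the apply step itself produces no second row for the same key. Whether duplicates can still arise on a target that does not enforce keys is exactly the open question in this post.

```python
def merge_upsert(target, changes, key="id"):
    """Apply changes to target with MERGE-like semantics.

    target:  dict mapping key value -> row dict (stands in for the target table)
    changes: iterable of row dicts coming from the source
    """
    for change in changes:
        key_value = change[key]
        if key_value in target:
            target[key_value].update(change)   # matched: update existing row
        else:
            target[key_value] = dict(change)   # not matched: insert new row
    return target

# Hypothetical data: one existing row, then an update and two changes for a new key.
table = {1: {"id": 1, "name": "old"}}
merge_upsert(table, [
    {"id": 1, "name": "new"},
    {"id": 2, "name": "added"},
    {"id": 2, "name": "added again"},
])
print(table)
```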

-

Moving data from oracle to SQL

I am moving data from Oracle to SQL Server. I need to move data for only 110 of the 500 Oracle tables, and I have to run this job every day to capture the deltas as well. I can read a context file that lists the tables to read from Oracle and then, using dynamic schema, upsert the data into SQL Server. I am not sure whether this is an efficient way to meet this business requirement. I would love to hear from the experts if there is a better way to do this.

Thanks

Ravi

-

About Google Cloud Storage

Hi! The documentation for the Qlik Web Storage Provider 'Google Cloud Storage' says that you can only store data in a bucket on Qlik Sense SaaS. Are there any plans to offer this in Qlik Sense Enterprise?

Storing data from your Qlik Sense app in Google Cloud Storage

You can store table data into your Google Cloud Storage bucket in the Data load editor. You can either create a new load script or edit the existing script.

Information note: The data storage feature is currently only available on Qlik Sense SaaS.

-

SAP hana endpoint

Hi Team,

We are facing the issue below. The task is still running, but changes are being captured slowly.

Source: SAP HANA

Target: Azure SQL DB

00028240: 2024-04-22T01:28:14 [SOURCE_CAPTURE ]T: RetCode: SQL_ERROR SqlState: S1000 NativeError: 146 Message: [SAP AG][LIBODBCHDB DLL][HDBODBC] General error;146 Resource busy and NOWAIT specified: (lock table failed on vid=3, owner's lockMode: EXCLUSIVE, transID: 24627011275) [1022502] (ar_odbc_stmt.c:2816)

00028240: 2024-04-22T01:28:15 [SOURCE_CAPTURE ]T: Failed (retcode -1) to execute statement: 'LOCK TABLE "XXATT"."attrep_cdc_log" IN EXCLUSIVE MODE NOWAIT' [1022502] (ar_odbc_stmt.c:2810)

Could you please let us know what can be done?

Thanks.

-

Facing anywhere existence error

Source: On-Prem SQL Server

Target: Azure SQL Server

We are copying 196 tables from source to target. The task ran fine all night: full load and change processing both worked without problems.

Suddenly we encountered this error.

-

Expression or data model adjustment?

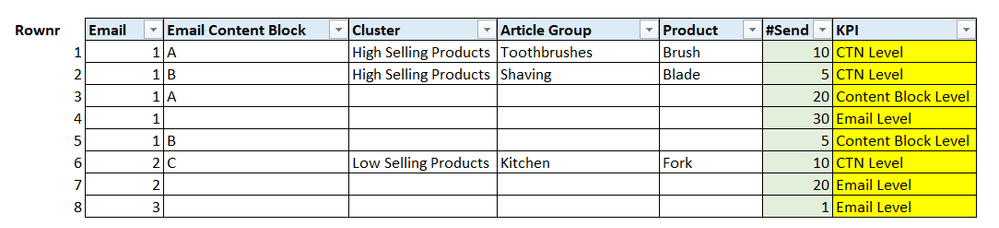

I've simplified my data model in the following table with some sample data.

To give some context, the data consists of 3 concatenated data sets:

1. Email

2. Email + Email Content Block

3. Email + Email Content Block + Product

An email can have multiple content blocks.

Content blocks can contain multiple products.

When I select email 1, I get a nice overview of the included content blocks and products.

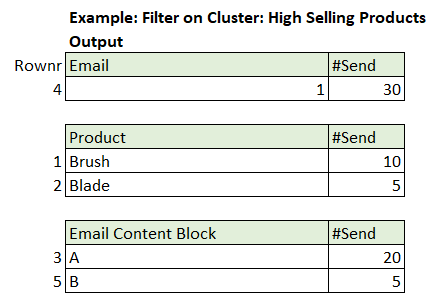

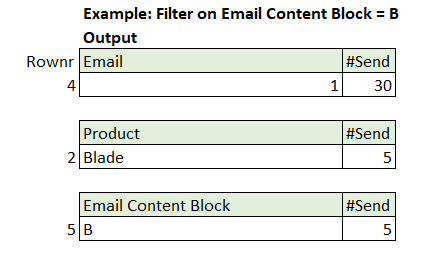

However, I also want to do this the other way around: when I select a CTN, I want to see the linked content blocks and emails and their respective #Send values.

See below for the desired output:

When I select Brush, I want to see the sends from row 4, because this email (1) includes this product.

I also want to see the sends from row 3, because this content block (A) also includes this product.

And I also want to see the sends from row 1 (which will work anyway), because those are the sends for this product.

The same applies when I filter on the Cluster.

And the same when I filter on the Email Content Block.

-

Full load task

Hi, I have a quick question about full load. Say I start my task and it runs for 2 hours. Will any new changes that arrive on that DB2 table in the meantime also be replicated?

Thanks

-

Compare a time against a range

Hello, I am trying to create a range every 15 minutes, where I receive a time and check whether it is greater than Desde (from) and less than Hasta (to).

The function is definitely not working.

Below is an example of how it should work:

| Hora_RangoHora | Desde | Hasta | if(Hora_RangoHora>=Desde,1,0) | if(Hora_RangoHora<=Hasta,1,0) |
|---|---|---|---|---|
| 00:00:48 | 00:00:00 | 00:14:59 | 1 | 1 |
| 00:00:48 | 00:15:00 | 00:29:59 | 0 | 1 |
| 00:00:48 | 00:30:00 | 00:44:59 | 0 | 1 |
| 00:00:48 | 00:45:00 | 00:59:59 | 0 | 1 |
| 00:00:48 | 01:00:00 | 01:14:59 | 0 | 1 |
| 00:00:48 | 01:15:00 | 01:29:59 | 0 | 1 |
| 00:00:48 | 01:30:00 | 01:44:59 | 0 | 1 |
| 00:00:48 | 01:45:00 | 01:59:59 | 0 | 1 |
| 00:00:48 | 02:00:00 | 02:14:59 | 0 | 1 |
| 00:00:48 | 02:15:00 | 02:29:59 | 0 | 1 |
| 00:00:48 | 02:30:00 | 02:44:59 | 0 | 1 |
| 00:00:48 | 02:45:00 | 02:59:59 | 0 | 1 |
| 00:00:48 | 03:00:00 | 03:14:59 | 0 | 1 |
| 00:00:48 | 03:15:00 | 03:29:59 | 0 | 1 |
| 00:00:48 | 03:30:00 | 03:44:59 | 0 | 1 |
| 00:00:48 | 03:45:00 | 03:59:59 | 0 | 1 |
| 00:00:48 | 04:00:00 | 04:14:59 | 0 | 1 |
| 00:00:48 | 04:15:00 | 04:29:59 | 0 | 1 |
| 00:00:48 | 04:30:00 | 04:44:59 | 0 | 1 |
| 00:00:48 | 04:45:00 | 04:59:59 | 0 | 1 |
| 00:00:48 | 05:00:00 | 05:14:59 | 0 | 1 |
| 00:00:48 | 05:15:00 | 05:29:59 | 0 | 1 |
| 00:00:48 | 05:30:00 | 05:44:59 | 0 | 1 |
| 00:00:48 | 05:45:00 | 05:59:59 | 0 | 1 |
| 00:00:48 | 06:00:00 | 06:14:59 | 0 | 1 |
| 00:00:48 | 06:15:00 | 06:29:59 | 0 | 1 |
| 00:00:48 | 06:30:00 | 06:44:59 | 0 | 1 |
| 00:00:48 | 06:45:00 | 06:59:59 | 0 | 1 |
| 00:00:48 | 07:00:00 | 07:14:59 | 0 | 1 |
| 00:00:48 | 07:15:00 | 07:29:59 | 0 | 1 |
| 00:00:48 | 07:30:00 | 07:44:59 | 0 | 1 |
| 00:00:48 | 07:45:00 | 07:59:59 | 0 | 1 |
| 00:00:48 | 08:00:00 | 08:14:59 | 0 | 1 |
| 00:00:48 | 08:15:00 | 08:29:59 | 0 | 1 |
| 00:00:48 | 08:30:00 | 08:44:59 | 0 | 1 |
| 00:00:48 | 08:45:00 | 08:59:59 | 0 | 1 |
| 00:00:48 | 09:00:00 | 09:14:59 | 0 | 1 |
| 00:00:48 | 09:15:00 | 09:29:59 | 0 | 1 |
| 00:00:48 | 09:30:00 | 09:44:59 | 0 | 1 |
| 00:00:48 | 09:45:00 | 09:59:59 | 0 | 1 |
| 00:00:48 | 10:00:00 | 10:14:59 | 0 | 1 |
| 00:00:48 | 10:15:00 | 10:29:59 | 0 | 1 |
| 00:00:48 | 10:30:00 | 10:44:59 | 0 | 1 |
| 00:00:48 | 10:45:00 | 10:59:59 | 0 | 1 |
| 00:00:48 | 11:00:00 | 11:14:59 | 0 | 1 |
| 00:00:48 | 11:15:00 | 11:29:59 | 0 | 1 |
| 00:00:48 | 11:30:00 | 11:44:59 | 0 | 1 |
| 00:00:48 | 11:45:00 | 11:59:59 | 0 | 1 |
| 00:00:48 | 12:00:00 | 12:14:59 | 0 | 1 |
| 00:00:48 | 12:15:00 | 12:29:59 | 0 | 1 |
| 00:00:48 | 12:30:00 | 12:44:59 | 0 | 1 |
| 00:00:48 | 12:45:00 | 12:59:59 | 0 | 1 |
| 00:00:48 | 13:00:00 | 13:14:59 | 0 | 1 |
| 00:00:48 | 13:15:00 | 13:29:59 | 0 | 1 |
| 00:00:48 | 13:30:00 | 13:44:59 | 0 | 1 |
| 00:00:48 | 13:45:00 | 13:59:59 | 0 | 1 |
| 00:00:48 | 14:00:00 | 14:14:59 | 0 | 1 |
| 00:00:48 | 14:15:00 | 14:29:59 | 0 | 1 |
| 00:00:48 | 14:30:00 | 14:44:59 | 0 | 1 |
| 00:00:48 | 14:45:00 | 14:59:59 | 0 | 1 |
| 00:00:48 | 15:00:00 | 15:14:59 | 0 | 1 |
| 00:00:48 | 15:15:00 | 15:29:59 | 0 | 1 |
| 00:00:48 | 15:30:00 | 15:44:59 | 0 | 1 |
| 00:00:48 | 15:45:00 | 15:59:59 | 0 | 1 |
| 00:00:48 | 16:00:00 | 16:14:59 | 0 | 1 |
| 00:00:48 | 16:15:00 | 16:29:59 | 0 | 1 |
| 00:00:48 | 16:30:00 | 16:44:59 | 0 | 1 |
| 00:00:48 | 16:45:00 | 16:59:59 | 0 | 1 |
| 00:00:48 | 17:00:00 | 17:14:59 | 0 | 1 |
| 00:00:48 | 17:15:00 | 17:29:59 | 0 | 1 |
| 00:00:48 | 17:30:00 | 17:44:59 | 0 | 1 |
| 00:00:48 | 17:45:00 | 17:59:59 | 0 | 1 |
| 00:00:48 | 18:00:00 | 18:14:59 | 0 | 1 |
| 00:00:48 | 18:15:00 | 18:29:59 | 0 | 1 |
| 00:00:48 | 18:30:00 | 18:44:59 | 0 | 1 |
| 00:00:48 | 18:45:00 | 18:59:59 | 0 | 1 |
| 00:00:48 | 19:00:00 | 19:14:59 | 0 | 1 |
| 00:00:48 | 19:15:00 | 19:29:59 | 0 | 1 |
| 00:00:48 | 19:30:00 | 19:44:59 | 0 | 1 |
| 00:00:48 | 19:45:00 | 19:59:59 | 0 | 1 |
| 00:00:48 | 20:00:00 | 20:14:59 | 0 | 1 |
| 00:00:48 | 20:15:00 | 20:29:59 | 0 | 1 |
| 00:00:48 | 20:30:00 | 20:44:59 | 0 | 1 |
| 00:00:48 | 20:45:00 | 20:59:59 | 0 | 1 |
| 00:00:48 | 21:00:00 | 21:14:59 | 0 | 1 |
| 00:00:48 | 21:15:00 | 21:29:59 | 0 | 1 |
| 00:00:48 | 21:30:00 | 21:44:59 | 0 | 1 |
| 00:00:48 | 21:45:00 | 21:59:59 | 0 | 1 |
| 00:00:48 | 22:00:00 | 22:14:59 | 0 | 1 |
| 00:00:48 | 22:15:00 | 22:29:59 | 0 | 1 |
| 00:00:48 | 22:30:00 | 22:44:59 | 0 | 1 |
| 00:00:48 | 22:45:00 | 22:59:59 | 0 | 1 |
| 00:00:48 | 23:00:00 | 23:14:59 | 0 | 1 |
| 00:00:48 | 23:15:00 | 23:29:59 | 0 | 1 |
| 00:00:48 | 23:30:00 | 23:44:59 | 0 | 1 |
| 00:00:48 | 23:45:00 | 23:59:59 | 0 | 1 |

Load script:

MaestroFecha:
LOAD Distinct
    ID_Fecha,
    Date(Floor(ID_Fecha),'DD/MM/YYYY') as Fecha,
    Year(ID_Fecha) as Año,
    Month(ID_Fecha) as Mes,
    Dual(Year(ID_Fecha)&'-'&Month(ID_Fecha), monthstart(ID_Fecha)) AS AñoMes,
    Dual('Q'&Num(Ceil(Num(Month(ID_Fecha))/3)),Num(Ceil(NUM(Month(ID_Fecha))/3),00)) AS Quarter,
    time(ID_Fecha) as Hora
Resident Consolidado;

Maestro_RangoHora_TEMP:
LOAD
    HoraDesde as HDesde,
    HoraHasta as HHasta
FROM [lib://Aldesa:DataFiles/Maestros Aldesa Excel.xlsx]
(ooxml, embedded labels, table is [Maestro Hora]);

Maestro_RangoHora_TEMP2:
LOAD
    Time(MakeDate(1,1,2024)&' '&HDesde) as Desde,
    Time(MakeDate(1,1,2024)&' '&HHasta) as Hasta
Resident Maestro_RangoHora_TEMP;

Drop table Maestro_RangoHora_TEMP;

Join (Maestro_RangoHora_TEMP2)
Load Distinct
    time(ID_Fecha) as Hora_RangoHora
Resident MaestroFecha;

Maestro_RangoHora_Temp3:
LOAD Distinct
    Hora_RangoHora,
    Desde as HoraDesde,
    Hasta as HoraHasta,
    IF(Hora>=HoraDesde and Hora<=HoraHasta,HoraDesde&'-'&HoraHasta,'Vacio') as RangoHorario
Resident Maestro_RangoHora_TEMP2;

Drop table Maestro_RangoHora_TEMP2;

Maestro_RangoHora:
NoConcatenate
LOAD
    Hora_RangoHora as Hora,
    HoraDesde,
    HoraHasta,
    RangoHorario
Resident Maestro_RangoHora_Temp3
Where RangoHorario<>'Vacio';

Drop table Maestro_RangoHora_Temp3;
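As an illustration of the intended result, the 15-minute range that contains a given time can also be computed directly from the time itself, without comparing it against every range. The sketch below is in Python rather than Qlik script, and the function names `quarter_hour_range` and `label` are hypothetical; it assumes ranges that start on the quarter hour and end one second before the next one, as in the example table.

```python
from datetime import time

def quarter_hour_range(t, minutes=15):
    """Return (start, end) of the range containing t, e.g. 00:00:00 to 00:14:59."""
    if 60 % minutes != 0:
        raise ValueError("minutes must divide 60 evenly")
    # Round the minute down to the start of its range.
    start_minute = (t.minute // minutes) * minutes
    start = time(t.hour, start_minute, 0)
    end = time(t.hour, start_minute + minutes - 1, 59)
    return start, end

def label(t):
    """Format the range in the same 'Desde-Hasta' style the load script builds."""
    start, end = quarter_hour_range(t)
    return f"{start:%H:%M:%S}-{end:%H:%M:%S}"

print(label(time(0, 0, 48)))
```

For the sample time 00:00:48 this yields the first range only, which matches the single row in the table where both conditions are 1.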

Lots of Qlik Talend Data Integration Sessions!

Wondering about Qlik Talend Data Integration sessions? There are 11, in addition to all of the Data & Analytics sessions. So meet us in Orlando, June 3-5.

Qlik Community How To's

Browse our helpful how-to's to learn more about navigating Qlik Community and updating your profile.

Do More with Qlik - Delivering Real-Time, Analytics-Ready Data

Join us on April 24th at 10 AM ET for the next Do More with Qlik webinar focusing on Qlik’s Data Integration & Quality solutions.


Customer Story

Qlik Data Integration & Qlik Replicate story

Qlik enables a frictionless migration to AWS cloud by Empresas SB, a group of Chilean health and beauty retail companies employing 10,000 people with 600 points of sale.

Customer Story

Building a Collaborative Analytics Space

Qlik Luminary Stephanie Robinson of JBS USA, the US arm of the global food company employing 70,000 in the US, and over 270,000 people worldwide.

Location and Language Groups

Choose a Group

Join one of our Location and Language groups. Find one that suits you today!

Healthcare User Group


A private group for healthcare organizations, partners, and Qlik healthcare staff to collaborate and share insights.

Japan Group

Japan

This is the Japanese-language group of Qlik Community. You can download Japanese-language materials about Qlik products and post questions in Japanese.

Brasil Group

Brazil

Welcome to the group for Brazil users. All discussions will be in Portuguese.

Blogs

Community News

Hear from your Community team as they tell you about updates to the Qlik Community Platform and more!