

Recent Documents

-

How to create an SFTP data connection in a Qlik Cloud Application using 'fingerp...

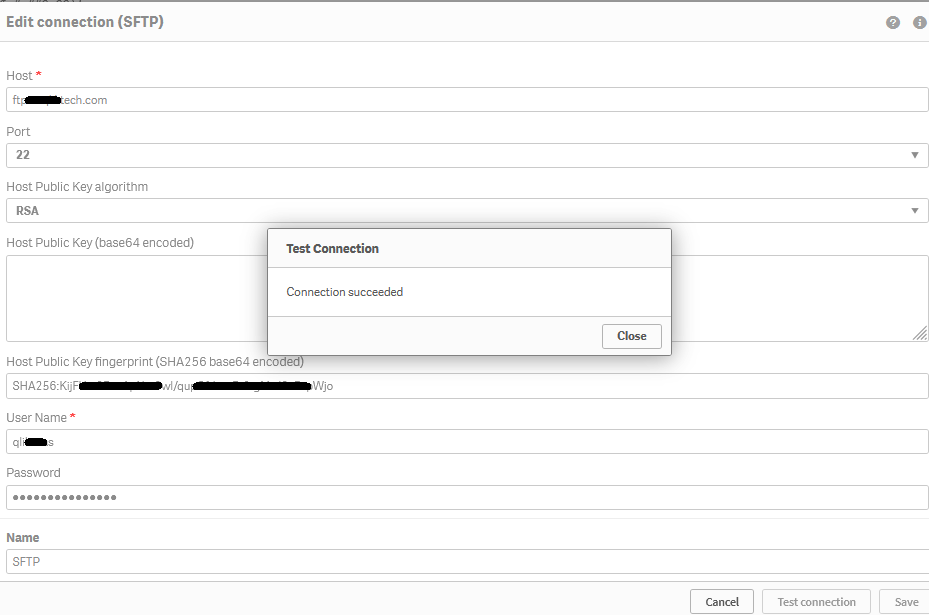

Steps

- Retrieve the SFTP server fingerprint (this example uses port 22)

- Open a Windows command prompt

- Run the following command:

sftp -P 22 username@ftptest.test.com

Where username is your SFTP user name and ftptest.test.com is the SFTP hostname.

- The response will be similar to:

C:\Windows\System32>sftp -P 22 username@ftptest.test.com
The authenticity of host 'ftptest.test.com (18x.6x.15x.21x)' can't be established.
RSA key fingerprint is SHA256:KijFUxxxxxxxxxxxxxx/xxxxxxxxxxxxxxxxxapWjo

If the above does not retrieve your fingerprint, contact your SFTP server administrator, who can provide the fingerprint or public key details.

- Copy the RSA fingerprint

- Create a Qlik Cloud Data Connection

- Open your Qlik Cloud Application and navigate to the data load editor

- Under Data Connection choose Create new connection

- Search and select SFTP

- Enter your credentials and fingerprint information

- Hostname

- (e.g., myftpserver.domain.com; do not prepend the address with ftp:// or sftp://)

- Username

- Password

- Insert fingerprint


- Click Test connection

If there are connectivity issues: to request data from your data connections through a firewall, you must add all three underlying IP addresses for your region to your firewall's allow list. These addresses are static and do not change. See Allowlisting domain names and IP addresses.

Other Diagnostics:

Error in SFTP connector

- Error shows public key mismatch error in Qlik SFTP connector

Diagnostic

- Open a command prompt and run: ssh-keyscan -t rsa <host ip or name> | ssh-keygen -lf -

Example (replace the address with a valid SFTP server host IP):

- C:\Windows\System32>ssh-keyscan -t rsa 192.168.xxx.255 | ssh-keygen -lf -

Result:

- "(stdin) is not a public key file"
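The ssh-keygen -lf - step computes the SHA256 fingerprint of the key that ssh-keyscan returns. To cross-check the value you paste into the connector, the same computation can be sketched in Python: an OpenSSH SHA256 fingerprint is the Base64 encoding, without trailing padding, of the SHA-256 digest of the decoded key blob. The function name and the sample input are illustrative assumptions, not part of any Qlik tool.

```python
import base64
import hashlib

def fingerprint_from_keyscan_line(line: str) -> str:
    """Return an OpenSSH-style SHA256 fingerprint for one ssh-keyscan output line.

    Expected input (hypothetical example): "host ssh-rsa AAAAB3NzaC1yc2E..."
    Comment lines starting with '#' should be filtered out before calling this.
    """
    parts = line.split()
    if len(parts) < 3:
        raise ValueError("expected '<host> <key-type> <base64-key>'")
    key_blob = base64.b64decode(parts[2])
    digest = hashlib.sha256(key_blob).digest()
    # OpenSSH prints the digest as Base64 with the '=' padding removed.
    return "SHA256:" + base64.b64encode(digest).decode("ascii").rstrip("=")
```

If ssh-keyscan returns no key line at all (the "(stdin) is not a public key file" case), there is nothing to fingerprint, which is why the server administrator's help is needed.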

If you see this or other SFTP connection errors, work with the SFTP server administrator or SFTP server vendor to enable the generation of a publicly available fingerprint.

Related Information:

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.


-

Qlik Replicate and SAP ODP Endpoint: row counts do not match between Qlik Replic...

When comparing the number of rows between the backend SAP ODP environment and the Qlik Replicate Full Load task, Qlik Replicate does not show the correct value.

Example:

Qlik Replicate shows 357,462 rows loaded, while the SAP ODP (ODQMON) job log shows 1.5 million rows.

Resolution

Qlik is investigating this as RECOB-8294. No workaround is currently available.

Information on this defect is provided as-is at the time of documenting. For up-to-date information, review the most recent Release Notes, or contact Support and reference RECOB-8294.

Cause

Product Defect ID: RECOB-8294

Environment

-

Qlik Replicate SAP ODP Log Stream Source error SDK endpoint encountered a recove...

A Qlik Replicate task reading from an SAP ODP source endpoint through a Log Stream staging task fails with:

Failed during unload

SDK endpoint encountered a recoverable error [1024719]

Resolution

This error is under investigation as QB-26198. However, no resolution is available, because the SAP ODP source endpoint does not support the Log Stream staging task setup.

Information on this defect is provided as-is at the time of documenting. For up-to-date information, review the most recent Release Notes, or contact Support and reference QB-26198.

Cause

Product Defect ID: QB-26198

Environment

-

Qlik Sense PostgreSQL connector cuts off strings containing more than 255 characte...

The native Qlik PostgreSQL connector (ODBC Connector Package) does not import strings longer than 255 characters. Such strings are truncated, and the remaining characters do not appear in the apps. No warnings are raised during script execution.

The problem affects the Qlik connector, but not all DSN drivers.

Environment

Qlik Sense February 2023 and higher versions

Qlik Cloud

Resolution

When setting up the PostgreSQL connection, set the parameter TextAsLongVarchar to 1 in the connector's Advanced settings.

With TextAsLongVarchar set and Max String Length set to 4096, up to 4096 characters are loaded.

Note that limitations remain, depending on the data type used in the database. Data types such as text[] are currently not supported by Simba and remain subject to the 255-character limit even when the TextAsLongVarchar parameter is applied.

Qlik has opened an improvement request with Simba to support them.

As a workaround, you can test a custom connector that uses a DSN driver to handle these data types.
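To confirm whether a reload still truncates long strings, one option is to compare the length of each loaded value with the length computed in the database (for example with PostgreSQL's length() function). The Python sketch below assumes you have exported both lists in the same row order; the function name and the export step are hypothetical.

```python
def truncated_rows(loaded_values, source_lengths):
    """Return (row index, loaded length, source length) for every row whose
    loaded string is shorter than the same value in the database.

    loaded_values:  strings as they arrived in the app (hypothetical export)
    source_lengths: character lengths from the database, e.g. SELECT length(col)
    """
    return [
        (i, len(value), src_len)
        for i, (value, src_len) in enumerate(zip(loaded_values, source_lengths))
        if len(value) < src_len
    ]
```

Rows reported with a loaded length of exactly 255 point to the limitation described above.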

Internal Investigation ID

QB-21497

-

Qlik Replicate DB2 Z/OS Source Endpoint Date column not loading to Target

When a DB2 Z/OS source endpoint loads a table with date columns, the date values arrive blank. The source data contains valid dates, but they are not loaded to the target by the Qlik Replicate task.

Resolution

The DB2 Z/OS source endpoint requires ODBC driver version 11.5.6 or 11.5.8 to be installed on the Qlik Replicate server.

Cause

ODBC driver 11.5.9, or another unsupported version, is installed.
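If you manage several Replicate servers, a small check of the installed driver version against the two versions named above can help. The Python sketch below is hypothetical: it only compares a dotted version string you have already obtained (how you read it from the server is outside its scope), and the supported set reflects this article only.

```python
# Driver versions this article names as working for the DB2 Z/OS source endpoint.
SUPPORTED_DRIVER_VERSIONS = {(11, 5, 6), (11, 5, 8)}

def parse_version(text: str) -> tuple:
    """Parse a dotted version such as '11.5.8.0' into (11, 5, 8)."""
    parts = text.strip().split(".")
    return tuple(int(p) for p in parts[:3])

def is_supported_driver(version_text: str) -> bool:
    """True if the major.minor.patch part matches a supported driver version."""
    return parse_version(version_text) in SUPPORTED_DRIVER_VERSIONS
```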

Related Content

z/OS Prerequisites | Qlik Replicate Help

Environment

Qlik Replicate 2023.5

-

Qlik Replicate - MySQL source defect and fix (2023.11)

After an upgrade or a fresh installation of Qlik Replicate 2023.11 (builds GA, PR01, and PR02), Qlik Replicate reports errors for MySQL or MariaDB source endpoints. The task repeatedly retries the source capture process but fails; resuming the task or starting it from a timestamp leads to the same result:

[SOURCE_CAPTURE ]T: Read next binary log event failed; mariadb_rpl_fetch error 0 () [1020403] (mysql_endpoint_capture.c:1060)

[SOURCE_CAPTURE ]T: Error reading binary log. [1020414] (mysql_endpoint_capture.c:3998)

Environment:

- Replicate 2023.11 (GA, PR01, PR02)

- MySQL source database, any version

- MariaDB source database, any version

Fix Version & Resolution:

Upgrade to Replicate 2023.11 PR03 (coming soon)

Workaround:

If you are running 2022.11, continue running it.

There is no workaround for 2023.11 GA, PR01, or PR02.

Cause & Internal Investigation ID(s):

Jira: RECOB-8090, Description: MySQL source fails after upgrade from 2022.11 to 2023.11

There is a bug in version 3.3.5 of the MariaDB library, which Replicate started using in 2023.11.

The bug was fixed in MariaDB library version 3.3.8, which ships with Qlik Replicate 2023.11 PR03 and later versions.

Related Content:

Support cases #00139940 and #00156611

Replicate - MySQL source defect and fix (2022.5 & 2022.11)

-

Qlik Replicate and MS-CDC source endpoint: CDC Data Replication stopped

The Qlik Replicate task log (with SOURCE_CAPTURE logging set to Trace) shows LSN information similar to:

00023576: 2024-03-08T17:46:03 [SOURCE_CAPTURE ]T: MS-CDC capture loop. SQL Server start time '2024-03-06 22:41:29.170', start LSN '000A9B8100000050000D', end time '2024-03-08 19:46:04', end LSN '' (sqlserver_mscdc.c:3684)

00023576: 2024-03-08T17:46:08 [SOURCE_CAPTURE ]T: MS-CDC capture loop. SQL Server start time '2024-03-06 22:41:29.170', start LSN '000A9B8100000050000D', end time '2024-03-08 19:46:09', end LSN '' (sqlserver_mscdc.c:3684)

This indicates that the capture loop keeps restarting from the same start LSN and that this LSN has been purged.
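In a long trace log, this pattern can be spotted by counting how often the same start LSN appears with an empty end LSN. The Python sketch below does that with a regular expression written against the two sample lines above; it is a heuristic, not a Qlik tool, and the repeat threshold is an arbitrary assumption.

```python
import re
from collections import Counter

# Matches "MS-CDC capture loop" trace lines and captures the start and end LSN.
CAPTURE_LOOP = re.compile(
    r"MS-CDC capture loop\..*?start LSN '([0-9A-Fa-f]*)'.*?end LSN '([0-9A-Fa-f]*)'"
)

def stuck_start_lsns(log_lines, min_repeats=2):
    """Return start LSNs seen at least min_repeats times with an empty end LSN."""
    counts = Counter()
    for line in log_lines:
        match = CAPTURE_LOOP.search(line)
        if match and match.group(2) == "":
            counts[match.group(1)] += 1
    return sorted(lsn for lsn, n in counts.items() if n >= min_repeats)
```

A non-empty result only suggests looping; confirm with your DBA whether the LSN is still available before choosing between resuming and a full reload.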

Resolution

- If you can restore the LSN, the task can be resumed.

- If the LSN cannot be restored, the task will require a full reload.

Environment

-

Qlik Replicate and an Oracle target: ORA-03156: OCI call timed out

A Qlik Replicate task with an Oracle target fails with the following in the task log:

[TARGET_LOAD ]T: ORA-03156: OCI call timed out

This can occur with long-running executions during a full load.

Resolution

To disable the timeout, set the Internal Parameter executeTimeout on the Oracle target endpoint.

- Go to the Oracle Endpoint connection

- Switch to the Advanced tab

- Click Internal Parameters

- Add executeTimeout with a value of 0

For more information about Internal Parameters, see Qlik Replicate: How to set Internal Parameters and what are they for?

Environment

-

SQL MS-Replication: How to find tables (articles) in a Publication

This article briefly explains how to find which tables (articles) are used in a Publication. This method is useful for identifying issues when a table does not capture updates.

Resolution

Run the following query from SQL Server Management Studio (SSMS) or any database utility (such as DBeaver):

SELECT
    msp.publication AS PublicationName,
    msa.publisher_db AS DatabaseName,
    msa.article AS ArticleName,
    msa.source_owner AS SchemaName,
    msa.source_object AS TableName
FROM distribution.dbo.MSarticles msa
JOIN distribution.dbo.MSpublications msp ON msa.publication_id = msp.publication_id
ORDER BY
    msp.publication,
    msa.article;

Environment

-

Qlik Compose Data Warehouse - Incremental Load Patterns for Non-Replicate Data S...

Qlik’s Data Integration Platform provides functionality to support near real-time data delivery from traditional sources, files, and some SaaS environments via Qlik Replicate. Qlik Compose for Data Warehouses is integrated with Qlik Replicate to automate the incremental load process of the data warehouse based on change data capture (CDC) from the source system.

However, there are certain scenarios where the automated incremental load processes cannot be leveraged, for example SAP HANA log-based replication, third-party data ingestion, or cloud data-sharing programs.

In those instances, extract, transform, and load (ETL) patterns can be implemented in Qlik Compose to support incremental data loads. This paper describes these scenarios, shows how to implement custom incremental load patterns in Qlik Compose, and explains how those patterns can be applied to query-based or view-based mappings.
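One common custom incremental pattern is a high-water mark: each run loads only the rows whose change timestamp is later than the largest timestamp loaded previously, then stores the new maximum for the next run. The Python sketch below illustrates that generic idea only; it is not Qlik Compose code, and the updated_at column name is a hypothetical assumption.

```python
from datetime import datetime

def incremental_batch(rows, last_watermark):
    """Select rows changed after last_watermark and compute the next watermark.

    rows: iterable of dicts with a hypothetical 'updated_at' datetime column.
    """
    new_rows = [r for r in rows if r["updated_at"] > last_watermark]
    # Keep the old watermark when nothing new arrived.
    next_watermark = max((r["updated_at"] for r in new_rows), default=last_watermark)
    return new_rows, next_watermark
```

The strict comparison avoids reloading the last row, but it can miss late-arriving rows that share the watermark timestamp; production patterns often re-read a small overlap window and de-duplicate.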

-

Connector reply error: Executing non-SELECT queries is disabled. Please contact ...

The Qlik ODBC Connector Package (the database connector built into Qlik Sense) fails to reload with the error Connector reply error:

Executing non-SELECT queries is disabled. Please contact your system administrator to enable it.

The issue occurs when the statement following the SQL keyword is not a SELECT but another statement, such as INSERT, UPDATE, WITH ... AS, or a stored procedure call.

Environment:

- Qlik Sense Enterprise on Windows

- Qlik Sense Enterprise SaaS

- Qlik Sense Desktop

- QlikView Server

- QlikView Desktop

Cause

See the Qlik Sense February 2019 Release Notes for details on item QVXODBC-1406.

Resolution

By default, non-SELECT queries are disabled in the Qlik ODBC Connector Package, and users get an error message if such a query is present in the load script. To enable non-SELECT queries, a Qlik administrator must set allow-nonselect-queries to True.

To enable non-SELECT queries:

- Modify the QvOdbcConnectorPackage.exe.config file found in the locations listed below.

Set the parameter allow-nonselect-queries to True

The value is case-sensitive: true (all lowercase) will not work.

In a multi-node environment, the changes must be applied to all nodes.

Configuration file QvOdbcConnectorPackage.exe.config locations:

- Qlik Sense Enterprise: C:\Program Files\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage

- Qlik Sense Desktop: C:\Users\user-name\AppData\Local\Programs\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage

- QlikView: C:\Program Files\Common Files\QlikTech\Custom Data\QvOdbcConnectorPackage

Because these changes are made in configuration files, the files are overwritten during an upgrade and the changes must be reapplied.

- Non-SELECT statements are now enabled, which is generally sufficient to resolve the issue.

If, however, you need to run SQL statements that do not return a data table (INSERT/UPDATE/DELETE/DROP), you can optionally add the keyword !EXECUTE_NON_SELECT_QUERY at the end of the query. Other queries that return data (such as WITH ... AS in PostgreSQL) do not need this keyword.

The !EXECUTE_NON_SELECT_QUERY keyword signals to the script processing engine that the query may not return any data.

Only apply !EXECUTE_NON_SELECT_QUERY if you use the default connector settings (such as bulk reader enabled and reading strategy "connector"). Applying !EXECUTE_NON_SELECT_QUERY to non-default settings may lead to unexpected reload results and/or error messages.
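If load-script statements are generated programmatically, the keyword rule can be applied automatically. The Python sketch below is a hypothetical helper that builds the script text only; it treats INSERT, UPDATE, DELETE, and DROP as the no-data statements listed above, leaves everything else (including WITH ... AS) untouched, and does not handle stored procedure calls. Use its output only with the default connector settings, as noted above.

```python
# Statements that return no data table, per the list in this article.
NO_DATA_KEYWORDS = {"INSERT", "UPDATE", "DELETE", "DROP"}

def to_load_script_statement(query: str) -> str:
    """Wrap a query as a load-script SQL statement, appending
    !EXECUTE_NON_SELECT_QUERY when it starts with a no-data keyword."""
    body = query.strip().rstrip(";").strip()
    first_word = body.split(None, 1)[0].upper() if body else ""
    if first_word in NO_DATA_KEYWORDS:
        body += " !EXECUTE_NON_SELECT_QUERY"
    return f"SQL {body};"
```

For example, to_load_script_statement("DELETE FROM staging_orders") returns SQL DELETE FROM staging_orders !EXECUTE_NON_SELECT_QUERY; (staging_orders is a made-up table name).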

More details are documented in the Qlik ODBC Connector package help site.

Related Content:

Feature Request Delivered: Executing non-SELECT queries with Qlik Sense Business

Execute SQL Set statements or Non Select Queries

-

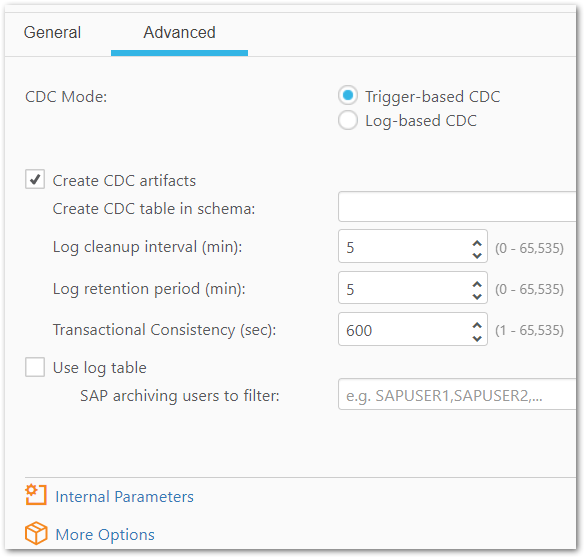

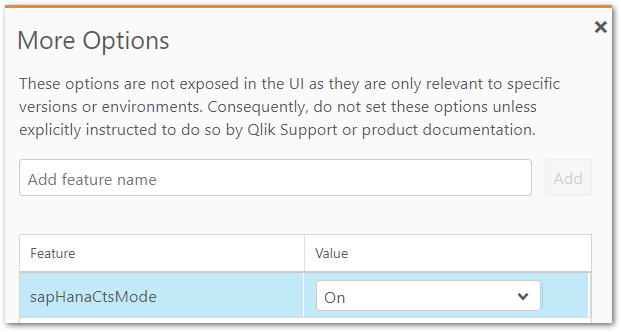

How to enable SAP HANA CTS Mode support in Qlik Replicate

Qlik Replicate can take advantage of the SAP HANA CTS mode feature.

CTS mode must be enabled on the SAP HANA Source.

To enable the feature in Qlik Replicate:

- In the Qlik Replicate console, navigate to your SAP HANA Source Endpoint

- Open the Advanced tab.

You need to manually drop the current triggers, because with CTS enabled the new table name is attrep_cdc_changes_cts, not attrep_cdc_changes. By default, attrep_cdc_changes_cts is partitioned with a default value of 100,000,000 rows per partition. SAP HANA allows a maximum of 16K partitions. Changing the threshold for each partition can impact performance and should therefore be thoroughly tested in a non-production environment. These steps do not cover migrating existing tasks to CTS mode, that is, stopping and/or resuming tasks after enabling this feature.

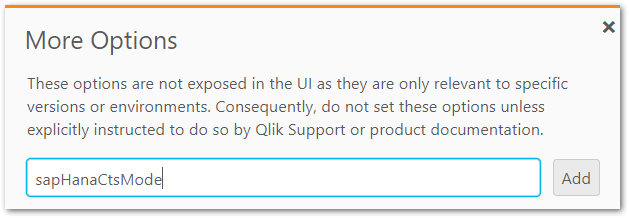

- Click More Options

- Enter sapHanaCtsMode and click Add

- Set the value to On

- The new settings take effect when you stop and restart the task that uses this endpoint.
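The partitioning note comes down to simple arithmetic: the table's capacity is the per-partition row threshold multiplied by the partition limit, so lowering the threshold lowers total capacity, and the partition count grows as the row count divided by the threshold, rounded up. The Python sketch below assumes "16K partitions" means 16,000; check the SAP HANA documentation for the exact limit on your version.

```python
DEFAULT_ROWS_PER_PARTITION = 100_000_000  # default stated in this article
MAX_PARTITIONS = 16_000  # assumption: "16K" read as 16,000

def max_rows(rows_per_partition=DEFAULT_ROWS_PER_PARTITION, max_partitions=MAX_PARTITIONS):
    """Upper bound on the rows attrep_cdc_changes_cts can hold at a given threshold."""
    return rows_per_partition * max_partitions

def partitions_needed(total_rows, rows_per_partition=DEFAULT_ROWS_PER_PARTITION):
    """Partitions needed for total_rows, using integer ceiling division."""
    return -(-total_rows // rows_per_partition)
```

With the defaults, max_rows() is 1,600,000,000,000 rows; at a threshold of 10,000,000 rows the ceiling drops to 160,000,000,000.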

Environment

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Related Content

Change CTS Configuration | SAP HANA Help Portal

-

Qlik Replicate Log Stream task error: End of time-line reached and all records w...

When a Reload Target is performed on the Log Stream staging task (parent), the following error appears in every Log Stream remote task (child):

[SOURCE_CAPTURE ]W: End of timeline, no more records to read (ar_cdc_channel.c:1210)

[SORTER ]E: End of time-line reached and all records were applied, task will stop [1020101] (sorter_transaction.c:3486)

Replication to the remote task stops.

Environment

- Qlik Replicate with Log Stream tasks

Resolution

There are two ways to resolve this issue:

Option 1: Reload the Log Stream remote (child) task.

Option 2: Start the Log Stream staging task (parent) from a timestamp just before its reload. To do this, follow the steps below:

- Find the log file of the Log Stream staging task (parent) dated just before the reload was performed, i.e. reptask_TASKNAMEof_STAGING_TASK?????.log.

- Go to the end of the log file from the previous step and find the line that contains Final saved task state. Copy the timestamp at the end of that line.

- Convert this epoch timestamp to the server time.

- Use that time to start the task.
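The conversion step can be sketched in Python. The exact layout of the Final saved task state line is not shown in this article, so the sketch assumes the timestamp is the last number on the line and guesses its unit (seconds, milliseconds, or microseconds) from its magnitude; check both assumptions against your own log before using the result.

```python
import re
from datetime import datetime, timedelta, timezone

def trailing_number(line: str):
    """Return the integer at the end of a log line, or None (assumed layout)."""
    match = re.search(r"(\d+)\s*$", line)
    return int(match.group(1)) if match else None

def epoch_to_datetime(value: int, tz=None) -> datetime:
    """Convert an epoch value to an aware datetime in tz (None = server's local zone).

    The unit is guessed from the magnitude (assumption): values of 10**14 or more
    are treated as microseconds, 10**11 or more as milliseconds, else seconds.
    """
    if value >= 10**14:
        delta = timedelta(microseconds=value)
    elif value >= 10**11:
        delta = timedelta(milliseconds=value)
    else:
        delta = timedelta(seconds=value)
    utc_time = datetime(1970, 1, 1, tzinfo=timezone.utc) + delta
    return utc_time.astimezone(tz)
```

Run it on the Replicate server itself (or pass that server's time zone) so the result matches the server time the task expects.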

-

Qlik Sense ODBC database connector performance increase: useBulkReader

Qlik has introduced an update with Qlik Cloud and Qlik Sense Enterprise on Windows which significantly increases the performance of any ODBC database Connector when working with larger datasets.

The change was rolled out to Qlik Cloud in October 2022 and is available in any Qlik Sense Enterprise on Windows version beginning November 2022.

All new connections automatically have the Bulk Reader feature, which reads data in larger batches during each iteration of a load instead of row by row. This can result in faster load times for larger datasets.
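The general idea of reading in batches rather than row by row can be sketched with the Python DB-API fetchmany() call, using an in-memory SQLite table as a stand-in data source. This illustrates the concept only; it is not how the Qlik connector is implemented, and the batch size of 4 is arbitrary.

```python
import sqlite3

def read_in_batches(conn, query, batch_size):
    """Yield lists of rows, fetching up to batch_size rows per fetchmany() call."""
    cursor = conn.cursor()
    cursor.execute(query)
    while True:
        batch = cursor.fetchmany(batch_size)
        if not batch:
            break
        yield batch

# Stand-in data source: an in-memory SQLite table with ten rows.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (id INTEGER)")
conn.executemany("INSERT INTO t VALUES (?)", [(i,) for i in range(10)])
batches = list(read_in_batches(conn, "SELECT id FROM t ORDER BY id", 4))
```

Here the ten rows arrive in three batches of 4, 4, and 2 rows, instead of ten separate single-row fetches.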

To add this feature to an existing connection, open the connection properties window by selecting Edit and then click Save. The Bulk Reader feature is now turned on. You don’t have to change any connection properties to invoke this feature.

While we believe that most customers will want all their ODBC connections to use this new capability, it is possible to turn off the Bulk Reader feature for a particular connector. To do this, add the parameter useBulkReader with a value of False to the Advanced section of the connector properties window.

Environment

Qlik Cloud

Qlik Sense Enterprise on Windows

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Related Content

-

Qlik Sense: Reloads failing with error "General Script Error in statement handl...

Many reloads are failing on different schedulers with General Script Error in statement handling. The issue is intermittent.

The connection to the data source goes through the Qlik ODBC connectors. The Script Execution log shows a “General Script Error in statement handling” message. However, the same reload succeeds when restarted some time later without any change to the script, which appears to be correct.

The engine logs show:

CGenericConnection: ConnectNamedPipe(\\.\pipe\data_92df2738fd3c0b01ac1255f255602e9c16830039.pip) set error code 232

Environment

- Qlik Sense Enterprise on Windows May 2021

- Qlik Sense Enterprise on Windows September 2020 and higher (less frequent occurrence)

Resolution

Change the reading strategy within the current connector:

Set reading-strategy value=engine in C:\Program Files\Common Files\Qlik\Custom Data\QvOdbcConnectorPackage\QvOdbcConnectorPackage.exe.config

The existing reading-strategy value=connector setting should be removed.

Switching the strategy to engine may impact non-SELECT queries. See Connector reply error: Executing non-SELECT queries is disabled. Please contact your system administrator to enable it. for details.
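If you prefer to script this change across nodes, the Python sketch below updates a key in a .NET-style appSettings section. It assumes the file stores settings as add elements with key and value attributes under configuration/appSettings; verify that against your actual QvOdbcConnectorPackage.exe.config and back the file up first. Note that ElementTree drops XML comments when it rewrites a document.

```python
import xml.etree.ElementTree as ET

def set_app_setting(config_xml: str, key: str, value: str) -> str:
    """Set <add key=... value=...> under <appSettings>, adding it if absent.

    Assumes a .NET-style <configuration><appSettings> layout (unverified).
    """
    root = ET.fromstring(config_xml)
    app_settings = root.find("appSettings")
    if app_settings is None:
        app_settings = ET.SubElement(root, "appSettings")
    for entry in app_settings.findall("add"):
        if entry.get("key") == key:
            entry.set("value", value)  # replace the old value, e.g. "connector"
            break
    else:
        ET.SubElement(app_settings, "add", {"key": key, "value": value})
    return ET.tostring(root, encoding="unicode")
```

Under the same assumption, the helper could also set allow-nonselect-queries to True (capital T), the setting discussed in the non-SELECT queries article.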

Internal Investigation ID(s):

QB-8346

-

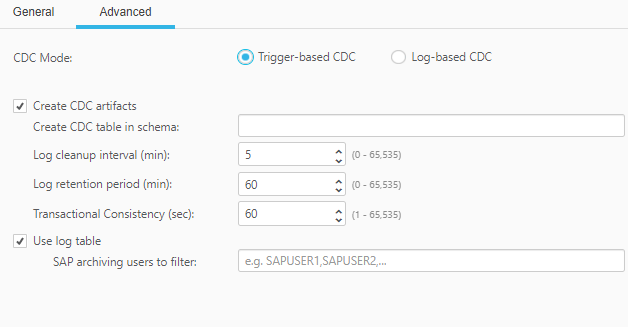

Qlik Replicate and SAP HANA Trigger based CDC

This article provides more details on how the trigger-based CDC method works for SAP HANA source endpoints.

Environment

When working with an SAP HANA source endpoint, there are two CDC options (on the Advanced tab):

- Log Based CDC

- Trigger Based CDC

Log-based CDC is the recommended approach. It reads the data from the online logs, the backup logs, or a combination of both, giving preference to the backup logs. However, it can be inefficient when the redo log is very large (depending on the database size and number of tables) and the Replicate task does not include most of the database's tables. In these scenarios, the Replicate sorter has to read and store all data from the redo log before it can identify and process the required data.

Trigger-based CDC avoids this drawback: it focuses only on the tables included in the task and ignores everything else that happens in the database.

This is how it works:

- Replicate creates three triggers (INSERT, UPDATE, and DELETE) on the source tables.

- The triggers write the data of each of these three operations to the attrep_cdc_changes table.

- The task then reads from attrep_cdc_changes and processes the records.

- Depending on the settings, you can also choose to use a log table, which adds one more table: attrep_cdc_log.

- When the Use log table option in the SAP HANA endpoint's Advanced tab is enabled, changes are copied from the attrep_cdc_changes table to the attrep_cdc_log table. During the task, Replicate reads the changes from the attrep_cdc_log table and deletes old data according to the cleanup settings (specified in the endpoint's Advanced tab).
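The trigger mechanism described above can be simulated with Python's built-in sqlite3 module, which supports AFTER INSERT/UPDATE/DELETE triggers. This is a sketch under clear assumptions: SQLite stands in for SAP HANA, the orders and not_in_task tables are made up, the column layout of attrep_cdc_changes is hypothetical (not Replicate's real schema), and the optional attrep_cdc_log table and cleanup are not modelled.

```python
import sqlite3

def run_trigger_cdc_demo():
    """Simulate trigger-based CDC in SQLite and return the captured change rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL);
        CREATE TABLE not_in_task (id INTEGER PRIMARY KEY);  -- no triggers: not captured
        -- Change table; its columns are hypothetical, not Replicate's real schema.
        CREATE TABLE attrep_cdc_changes (
            seq INTEGER PRIMARY KEY AUTOINCREMENT,
            table_name TEXT, operation TEXT, row_id INTEGER, amount REAL
        );
        CREATE TRIGGER orders_ins AFTER INSERT ON orders BEGIN
            INSERT INTO attrep_cdc_changes (table_name, operation, row_id, amount)
            VALUES ('orders', 'INSERT', NEW.id, NEW.amount);
        END;
        CREATE TRIGGER orders_upd AFTER UPDATE ON orders BEGIN
            INSERT INTO attrep_cdc_changes (table_name, operation, row_id, amount)
            VALUES ('orders', 'UPDATE', NEW.id, NEW.amount);
        END;
        CREATE TRIGGER orders_del AFTER DELETE ON orders BEGIN
            INSERT INTO attrep_cdc_changes (table_name, operation, row_id, amount)
            VALUES ('orders', 'DELETE', OLD.id, OLD.amount);
        END;
    """)
    conn.execute("INSERT INTO orders VALUES (1, 10.0)")
    conn.execute("UPDATE orders SET amount = 12.5 WHERE id = 1")
    conn.execute("DELETE FROM orders WHERE id = 1")
    conn.execute("INSERT INTO not_in_task VALUES (7)")  # ignored: table has no triggers
    # Reader side: consume changes in the order the triggers wrote them.
    return conn.execute(
        "SELECT table_name, operation, row_id, amount FROM attrep_cdc_changes ORDER BY seq"
    ).fetchall()
```

Only the three changes to orders are captured; the insert into not_in_task never reaches the change table, which is the selectivity advantage described above.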

For details on the permissions required to enable this option, see the user guide: Permissions

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

Old orphan tasks visible in Qlik Enterprise Manager

Qlik Enterprise Manager lists old orphaned tasks, sometimes displaying them in recovering mode. These tasks do not exist in Qlik Replicate and are not shown in the Qlik Replicate UI.

How can we remove the orphaned tasks from Qlik Enterprise Manager?

Resolution

The orphaned task still exists as a folder (orphantaskname in the path below) on the Qlik Replicate server. This folder can be deleted.

On the Qlik Replicate server:

- Locate and open the data folder:

~\data\tasks\orphantaskname

- Delete the folder

Environment


-

Qlik Replicate and Oracle as Source: To use CDC, the Oracle database must be con...

After implementing an Oracle Database Failover or otherwise switching to a new database, Qlik Replicate tasks may not start processing CDC and repeat the message:

[SOURCE_CAPTURE ]I: Start processing new RESETLOGS_ID '1077621340' that does not still have archived Redo logs

This is the same error message as in Unable to resume Qlik Replicate tasks due to the error Start processing new RESETLOGS_ID 'xxxxxx' that does not still have archived Redo logs, but applying the solution from that article has no effect.

Creating and running a new task reveals a different error:

[SOURCE_CAPTURE ]E: To use CDC, the Oracle database must be configured to archived Redo logs [1022313]

Resolution

The database must be in ARCHIVELOG mode to enable CDC. See Prerequisites | Qlik Replicate with Oracle as source.

In addition, note that Qlik Replicate performs these checks only when a task is started fresh, not on every resume.

Environment

-

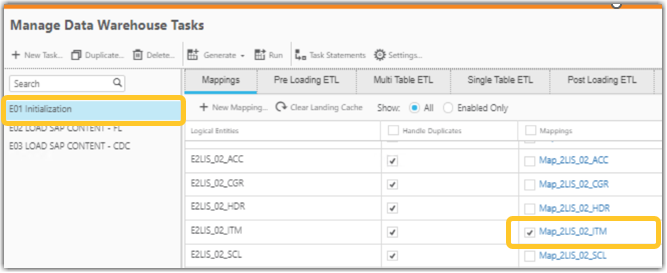

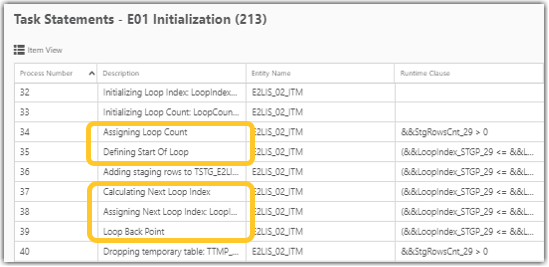

Loop Statement in Qlik Compose, tasks take longer than expected to load data

Qlik Compose logs statements such as:

2024-03-11 18:38:24.056 [engine ] [INFO ] [] PI# 36 RUN : Adding staging rows to TSTG_E2LIS_02_ITM_P

18:38:30.431 [engine ] [INFO ] [] PI# 39 RUN : Loop Back Point.

pool-2-thread-3 2024-03-11 18:38:30.431 [engine ] [INFO ] [] Loop on 35,36,37,38

Loop statements run iteratively, which can lead to performance issues and tasks taking longer to load data.

Resolution

Compose runs the process step (Adding staging rows to TSTG_E2LIS_02_ITM_P) iteratively when any of the columns are not mapped. To avoid this, either map the column or define an expression for it, and then regenerate the statements. The process step then no longer runs in a loop.

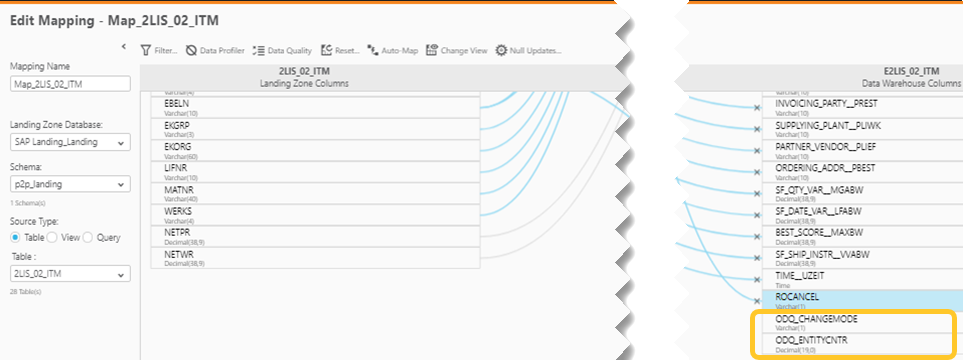

- In the example below (Job E01 Initialization Map_2LIS_02_ITM), some of the columns are not mapped

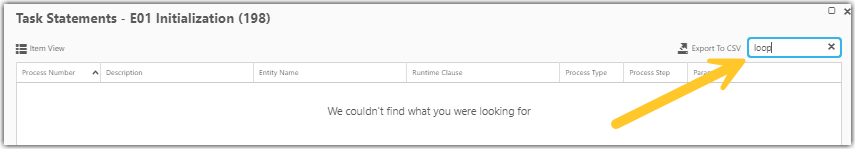

- The Task Statements show the loop statements

- Once the columns are mapped and the task statements regenerated, the loop statements are removed

After the missing columns are mapped, the task loads the data faster.

Cause

Compose runs the process step (Adding staging rows to TSTG_E2LIS_02_ITM_P) iteratively when any of the columns are not mapped.

Internal Investigation ID(s)

QB-21468

QB-25783

Environment


-

Splitting up GeoAnalytics connector operations

Many GeoAnalytics operations can be executed with the input (indata) table split into parts. This article explains which operations can be split and how to modify the code that the connector produces. The operations that cannot be split are mostly aggregating operations. When loadable tables are used as input, inline tables are created in loops, which offers a quick way to split the input. It is, of course, also possible to write custom code to do the splitting.

Calls with large input tables often cause timeouts on the server side; splitting is a way around that.
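The generated script builds tab-separated inline text, escapes six characters as \uXXXX sequences, and processes rows in chunks of chunkSize. The Python sketch below mirrors that logic to show how one large input becomes several smaller payloads; the function names are illustrative, and it does not call GeoAnalytics.

```python
# Same substitutions the generated script applies with nested Replace() calls:
# Chr(39) ', Chr(34) ", Chr(91) [, Chr(47) /, Chr(42) *, Chr(59) ;
ESCAPES = {
    "'": "\\u0027",
    '"': "\\u0022",
    "[": "\\u005b",
    "/": "\\u002f",
    "*": "\\u002a",
    ";": "\\u003b",
}

def escape(value) -> str:
    """Escape one field value the way the generated script does."""
    return "".join(ESCAPES.get(ch, ch) for ch in str(value))

def inline_chunks(header, rows, chunk_size):
    """Yield one tab-separated inline table (header + up to chunk_size rows) per chunk."""
    for start in range(0, len(rows), chunk_size):
        lines = ["\t".join(header)]
        lines += ["\t".join(escape(v) for v in row) for row in rows[start:start + chunk_size]]
        yield "\n".join(lines)
```

Unlike the generated For n = 0 to chunks loop, which appears to produce an empty final chunk when the row count is an exact multiple of chunkSize, range() here never yields an empty chunk.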

Environment:

Splittable operations:

- AddressPointLookup

- Intersects

- IpLookup

- NamedAreaLookup

- NamedPointLookup

- PointToAddressLookup

- Routes

- TravelAreas

- Within

Non-splittable operations:

- Closest

- Cluster

- Dissolve

- Load

- IntersectsMost

- Simplify

Special operations (splittable):

- Binning

- SpatialIndex

Binning and SpatialIndex

Binning and SpatialIndex differ from other operations: they do not make any call to the server if the input consists of internal geometries, i.e. lat/long points. These operations also produce the same type of results, so the resulting tables can be concatenated.

Resolution:

Example: a Within operation

Before the edit

The code as the connector produces it:

/* Generated by Idevio GeoAnalytics for operation Within ---------------------- */
Let [EnclosedInlineTable] = 'POSTCODE' & Chr(9) & 'Postal.Latitude' & Chr(9) & 'Postal.Longitude';
Let numRows = NoOfRows('PostalData');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'POSTCODE', 'Postal.Latitude', 'Postal.Longitude'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'PostalData'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [EnclosedInlineTable] = [EnclosedInlineTable] & chunkText;
Next
chunkText = '';
Let [EnclosingInlineTable] = 'ClubCode' & Chr(9) & 'Car5mins_TravelArea';
Let numRows = NoOfRows('TravelAreas5');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'ClubCode', 'Car5mins_TravelArea'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'TravelAreas5'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [EnclosingInlineTable] = [EnclosingInlineTable] & chunkText;
Next
chunkText = '';
[WithinAssociations]:
SQL SELECT [POSTCODE], [ClubCode] FROM Within(enclosed='Enclosed', enclosing='Enclosing')
DATASOURCE Enclosed INLINE tableName='PostalData', tableFields='POSTCODE,Postal.Latitude,Postal.Longitude', geometryType='POINTLATLON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosedInlineTable)}
DATASOURCE Enclosing INLINE tableName='TravelAreas5', tableFields='ClubCode,Car5mins_TravelArea', geometryType='POLYGON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosingInlineTable)}
SELECT [POSTCODE], [Enclosed_Geometry] FROM Enclosed
SELECT [ClubCode], [Car5mins_TravelArea] FROM Enclosing;
[EnclosedInlineTable] = '';
[EnclosingInlineTable] = '';
/* End Idevio GeoAnalytics operation Within ----------------------------------- */

After edit

The header and the call are moved inside the loop. chunkSize determines how large each split is.

Note that the code for the first inline table (EnclosedInlineTable) now comes after the second one (EnclosingInlineTable), so that the call can be placed inside the iteration.

/* Generated by Idevio GeoAnalytics for operation Within ---------------------- */
Let [EnclosingInlineTable] = 'ClubCode' & Chr(9) & 'Car5mins_TravelArea';
Let numRows = NoOfRows('TravelAreas5');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'ClubCode', 'Car5mins_TravelArea'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'TravelAreas5'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [EnclosingInlineTable] = [EnclosingInlineTable] & chunkText;
Next
chunkText = '';
Let numRows = NoOfRows('PostalData');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    // Start each chunk with only the header row
    Let [EnclosedInlineTable] = 'POSTCODE' & Chr(9) & 'Postal.Latitude' & Chr(9) & 'Postal.Longitude';
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'POSTCODE', 'Postal.Latitude', 'Postal.Longitude'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'PostalData'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [EnclosedInlineTable] = [EnclosedInlineTable] & chunkText;
    // One server call per chunk
    [WithinAssociations]:
    SQL SELECT [POSTCODE], [ClubCode] FROM Within(enclosed='Enclosed', enclosing='Enclosing')
    DATASOURCE Enclosed INLINE tableName='PostalData', tableFields='POSTCODE,Postal.Latitude,Postal.Longitude', geometryType='POINTLATLON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosedInlineTable)}
    DATASOURCE Enclosing INLINE tableName='TravelAreas5', tableFields='ClubCode,Car5mins_TravelArea', geometryType='POLYGON', loadDistinct='NO', suffix='', crs='Auto' {$(EnclosingInlineTable)}
    SELECT [POSTCODE], [Enclosed_Geometry] FROM Enclosed
    SELECT [ClubCode], [Car5mins_TravelArea] FROM Enclosing;
    [EnclosedInlineTable] = '';
Next
chunkText = '';
// Clear the enclosing table only after the last chunk; clearing it inside the loop would send an empty Enclosing dataset from the second chunk on
[EnclosingInlineTable] = '';
/* End Idevio GeoAnalytics operation Within ----------------------------------- */

Example: an AddressPointLookup operation

Before the edit

The code as the connector produces it:

```
/* Generated by GeoAnalytics for operation AddressPointLookup ---------------------- */
Let [DatasetInlineTable] = 'id' & Chr(9) & 'STREET_NAME' & Chr(9) & 'STREET_NUMBER';
Let numRows = NoOfRows('data');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'id', 'STREET_NAME', 'STREET_NUMBER'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'data'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [DatasetInlineTable] = [DatasetInlineTable] & chunkText;
Next
chunkText=''
[AddressPointLookupResult]:
SQL SELECT [id], [Dataset_Address], [Dataset_Geometry], [CountryIso2], [Dataset_Adm1Code], [Dataset_City], [Dataset_PostalCode], [Dataset_Street], [Dataset_HouseNumber], [Dataset_Match]
FROM AddressPointLookup(searchTextField='', country='"Canada"', stateField='', cityField='"Toronto"', postalCodeField='', streetField='STREET_NAME', houseNumberField='STREET_NUMBER', matchThreshold='0.5', service='default', dataset='Dataset')
DATASOURCE Dataset INLINE tableName='data', tableFields='id,STREET_NAME,STREET_NUMBER', geometryType='NONE', loadDistinct='NO', suffix='', crs='Auto' {$(DatasetInlineTable)}
;
[DatasetInlineTable] = '';
/* End GeoAnalytics operation AddressPointLookup ----------------------------------- */
```

After the edit

The header line and the SQL call are moved inside the loop, so each pass sends one chunk of rows to the connector in a separate call. `chunkSize` sets how many rows each chunk contains.

```
/* Generated by GeoAnalytics for operation AddressPointLookup ---------------------- */
Let numRows = NoOfRows('data');
Let chunkSize = 1000;
Let chunks = numRows/chunkSize;
For n = 0 to chunks
    // Moved inside the loop: the header is rebuilt for each chunk
    Let [DatasetInlineTable] = 'id' & Chr(9) & 'STREET_NAME' & Chr(9) & 'STREET_NUMBER';
    Let chunkText = '';
    Let chunk = n*chunkSize;
    For i = 0 To chunkSize-1
        Let row = '';
        Let rowNr = chunk+i;
        Exit for when rowNr >= numRows;
        For Each f In 'id', 'STREET_NAME', 'STREET_NUMBER'
            row = row & Chr(9) & Replace(Replace(Replace(Replace(Replace(Replace(Peek('$(f)', $(rowNr), 'data'), Chr(39), '\u0027'), Chr(34), '\u0022'), Chr(91), '\u005b'), Chr(47), '\u002f'), Chr(42), '\u002a'), Chr(59), '\u003b');
        Next
        chunkText = chunkText & Chr(10) & Mid('$(row)', 2);
    Next
    [DatasetInlineTable] = [DatasetInlineTable] & chunkText;
    // Moved inside the loop: one AddressPointLookup call per chunk
    [AddressPointLookupResult]:
    SQL SELECT [id], [Dataset_Address], [Dataset_Geometry], [CountryIso2], [Dataset_Adm1Code], [Dataset_City], [Dataset_PostalCode], [Dataset_Street], [Dataset_HouseNumber], [Dataset_Match]
    FROM AddressPointLookup(searchTextField='', country='"Canada"', stateField='', cityField='"Toronto"', postalCodeField='', streetField='STREET_NAME', houseNumberField='STREET_NUMBER', matchThreshold='0.5', service='default', dataset='Dataset')
    DATASOURCE Dataset INLINE tableName='data', tableFields='id,STREET_NAME,STREET_NUMBER', geometryType='NONE', loadDistinct='NO', suffix='', crs='Auto' {$(DatasetInlineTable)}
    ;
    [DatasetInlineTable] = '';
Next
chunkText=''
/* End GeoAnalytics operation AddressPointLookup ----------------------------------- */
```
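You can model the edited loop outside Qlik to check how `chunkSize` splits the rows before you run a reload. The sketch below is Python. It is not Qlik script and not connector code, and it only illustrates the logic under some assumptions:

- `escape`, `build_chunks` and the sample rows are hypothetical names.
- Each row is assumed to be a dictionary keyed by field name.

It follows the script step by step:

- The tab-separated header is rebuilt on every pass.
- Each value gets the same six character escapes as the nested `Replace()` calls.
- A pass stops at `Exit for when rowNr >= numRows`.

```python
"""Python model of the chunked inline-table loop in the edited script.

Illustration only: not Qlik script and not part of the GeoAnalytics connector.
Rows are assumed (hypothetically) to be dicts keyed by field name.
"""

# Same order as the nested Replace() calls in the generated script:
# ' " [ / * ;  become the literal text \u0027 \u0022 \u005b \u002f \u002a \u003b
ESCAPES = [("'", "\\u0027"), ('"', "\\u0022"), ("[", "\\u005b"),
           ("/", "\\u002f"), ("*", "\\u002a"), (";", "\\u003b")]


def escape(value):
    """Apply the six replacements to one field value, like the Replace() chain."""
    text = str(value)
    for char, code in ESCAPES:
        text = text.replace(char, code)
    return text


def build_chunks(rows, fields, chunk_size=1000):
    """Return the inline-table text that each loop pass would send."""
    num_rows = len(rows)
    chunks = num_rows / chunk_size             # Let chunks = numRows/chunkSize
    tables = []
    n = 0
    while n <= chunks:                         # For n = 0 to chunks (inclusive)
        header = "\t".join(fields)             # header rebuilt inside the loop
        chunk_text = ""
        start = n * chunk_size                 # Let chunk = n*chunkSize
        for i in range(chunk_size):            # For i = 0 To chunkSize-1
            row_nr = start + i
            if row_nr >= num_rows:             # Exit for when rowNr >= numRows
                break
            row = "\t".join(escape(rows[row_nr][f]) for f in fields)
            chunk_text += "\n" + row           # chunkText & Chr(10) & row
        tables.append(header + chunk_text)     # the script runs one SQL call here
        n += 1
    return tables


fields = ["id", "STREET_NAME", "STREET_NUMBER"]
data = [{"id": i, "STREET_NAME": "Main St", "STREET_NUMBER": str(i)}
        for i in range(2500)]
tables = build_chunks(data, fields)
print(len(tables))                             # 3
print([t.count("\n") for t in tables])         # [1000, 1000, 500]
print(escape("O'Neil/3*;"))                    # O\u0027Neil\u002f3\u002a\u003b
```

With 2,500 rows and `chunkSize = 1000`, the model produces three passes of 1,000, 1,000 and 500 rows. `For n = 0 to chunks` includes its upper bound. As a result, a row count that is an exact multiple of `chunkSize` (2,000 rows, for instance) ends with a pass that contains only the header. In the edited script, that pass still runs the SQL call.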