Recent Documents

-

Using Kafka with Qlik Replicate

This Techspert Talks session covers:

- How Replicate works with Kafka

- Kafka Terminology

- Configuration best practices

How to: automation monitoring app for tenant admins with Qlik Application Automa...

This article shows how to use the Qlik Application Automation Monitoring App. It explains how to set up the load script and how to use the app for monitoring Qlik Application Automation usage statistics for a cloud tenant.

Index:

- How to configure the load script

- Qlik Application Automation Monitoring app content

- Loading data

- Limitations

The app included is an example and not an official app. The app is provided as-is.

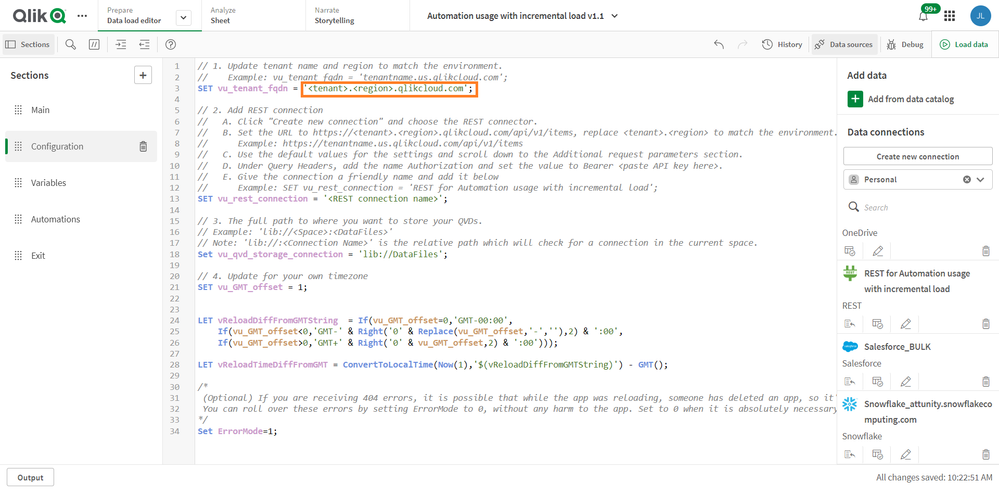

How to configure the load script

There are four steps for the configuration of the load script:

- Configure your tenant by typing the name of your tenant and region here:

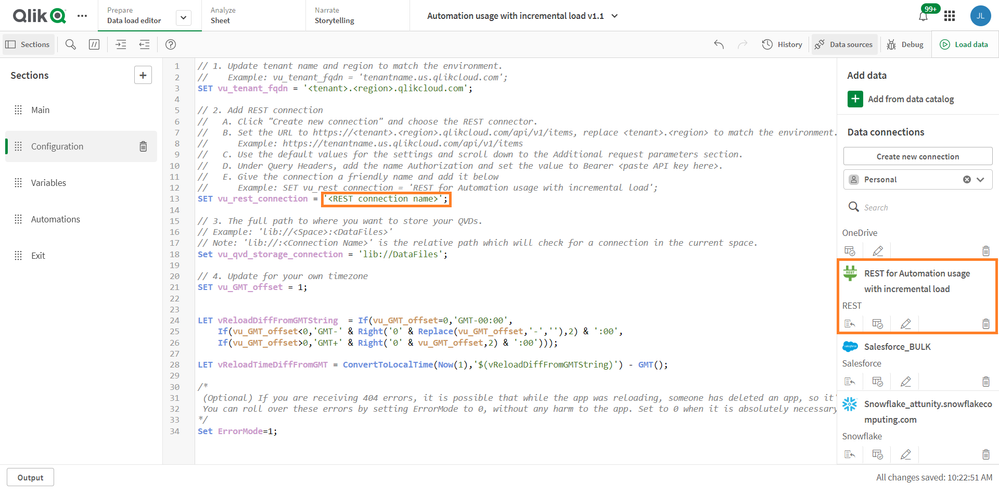

- Configure your data connection by typing the name of an existing data connection. The data connection must be configured in the pane to the right, see picture. If you do not have one, you must create one.

- Configure your data folder location here:

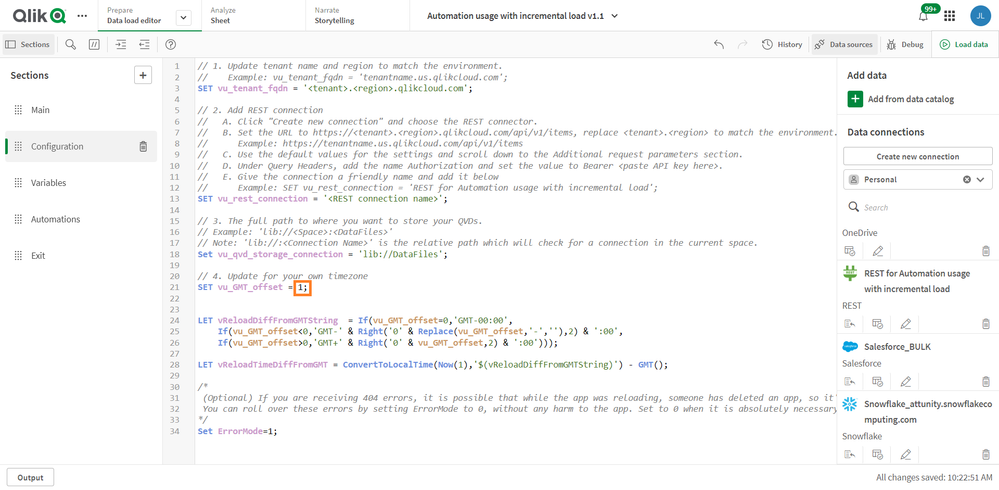

- Configure your time zone as a deviation from GMT, for example, +1 for Central European Time:

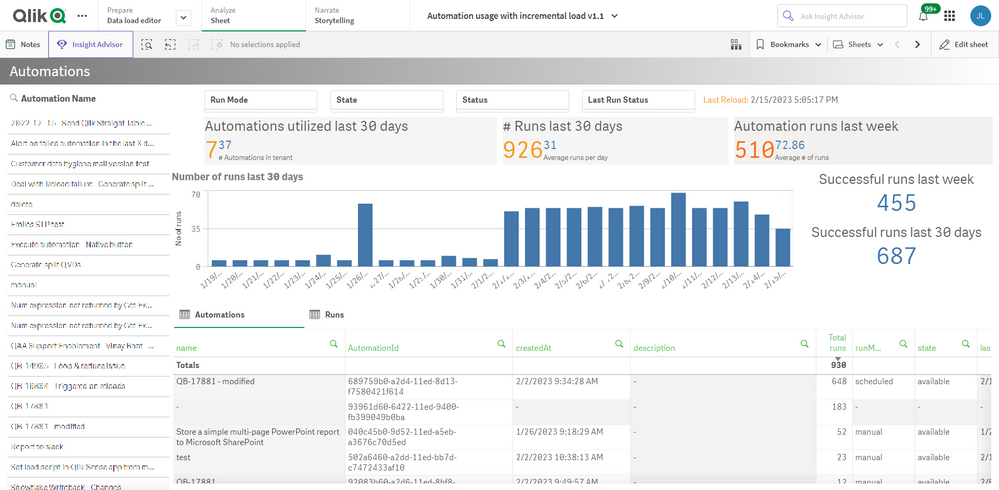

Qlik Application Automation Monitoring app content

The monitoring app includes four sheets that present various information on the Qlik Application Automation usage in the current tenant.

Automations

Filtering is available based on Automation Name, Last Run Status, Run Mode, State & Status.

- # Runs last 30 days

- Automation runs last week

- Automations in tenant

- Automations utilized last 30 days

- Average # of runs

- Average runs per day

- Successful runs last 30 days

- Successful runs last week

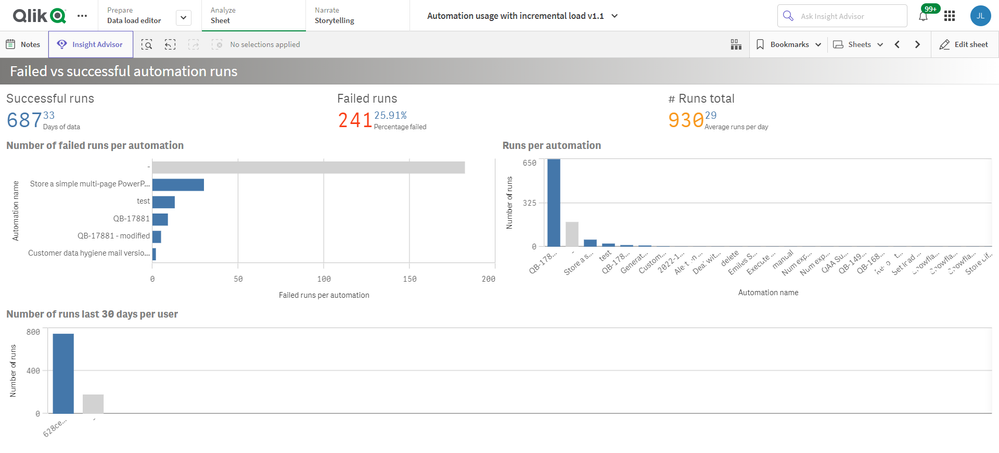

Failed vs successful automation runs

- # Runs total

- Average runs per day

- Days of data

- Failed runs

- Number of failed runs per automation

- Number of runs last 30 days per user

- Percentage failed

- Runs per automation

- Successful runs

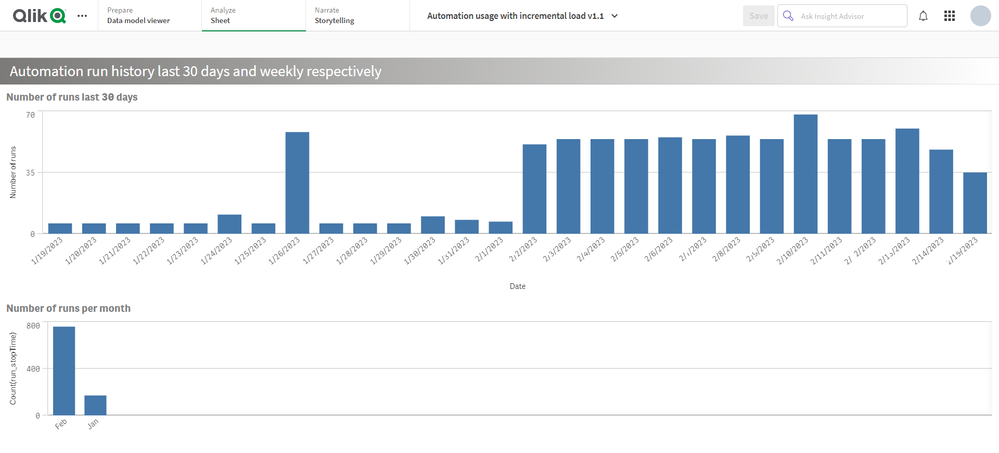

Automation run history last 30 days and weekly respectively

- Number of runs last 30 days

- Number of runs per month

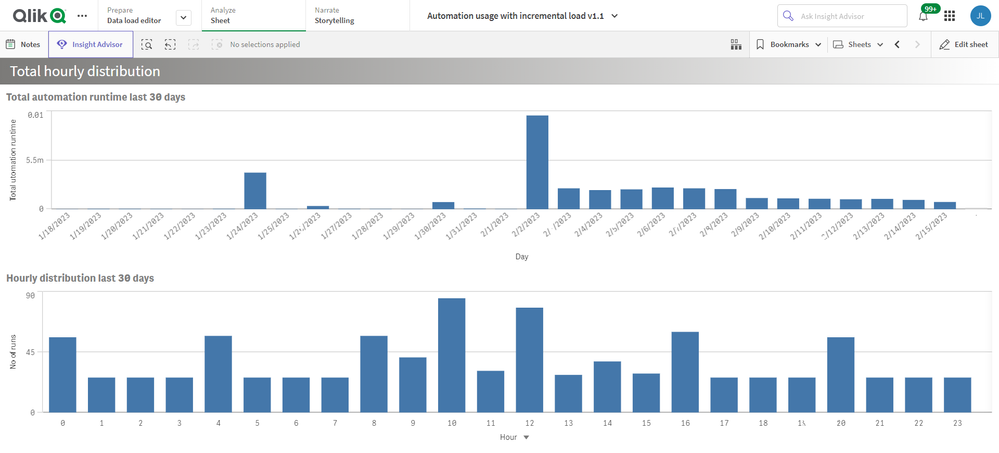

Total hourly distribution

- Hourly distribution last 30 days

- Total automation runtime last 30 days

Loading data

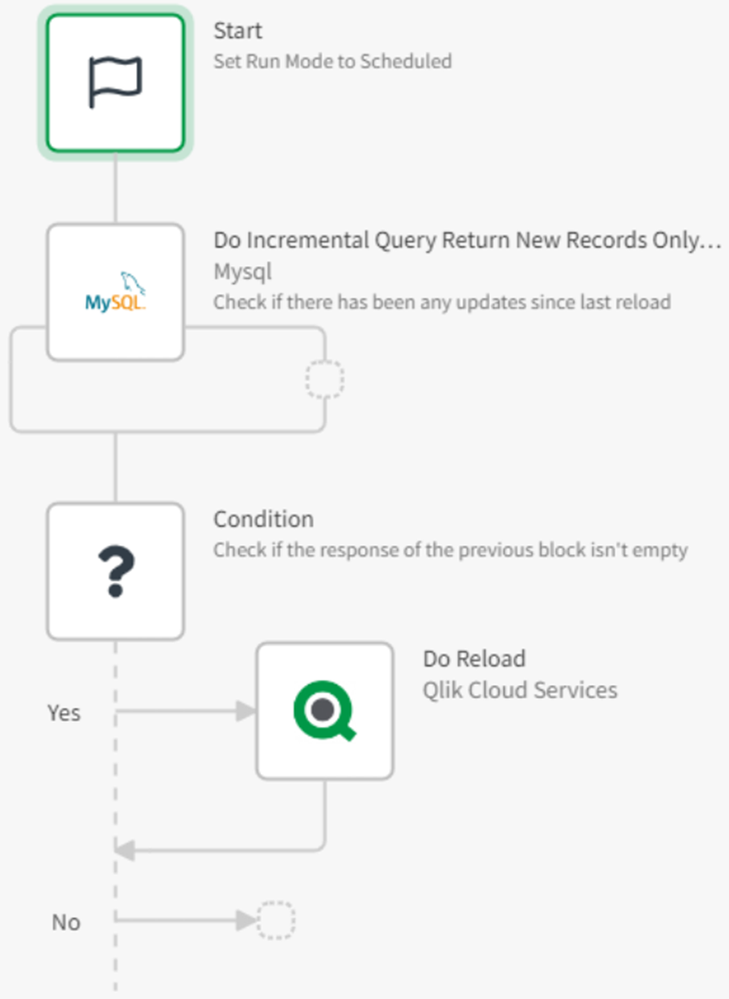

The Qlik Application Automation Monitoring app supports incremental load, that is, only newly added data is loaded into the app.

Important: Qlik Application Automation runs are only stored for 30 days. When data is loaded into the app for the first time, only 30 days of history is loaded, thus only 30 days of history will be available in the app. After this initial data load, data older than 30 days will be available in the app thanks to the incremental data load. If data is loaded at least once every 30 days, continuous data will be available in the app.
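The incremental behaviour described above can be pictured as a merge keyed on a run identifier. The following is a minimal Python sketch, not the app's actual load script: the `id` and `start_time` field names and the `merge_runs` and `has_gap` helpers are assumptions made for illustration only.

```python
from datetime import datetime, timedelta

RETENTION = timedelta(days=30)  # runs are stored for 30 days, per the note above


def merge_runs(stored, fetched):
    """Merge newly fetched runs into previously stored runs, keyed by run id.

    Runs that were stored earlier are kept even after they age out of the
    source, which is how history older than 30 days accumulates in the app.
    """
    merged = {run["id"]: run for run in stored}
    for run in fetched:
        merged[run["id"]] = run  # new runs are added, known runs are refreshed
    return sorted(merged.values(), key=lambda run: run["start_time"])


def has_gap(last_reload, now):
    """True when more time has passed since the last reload than is retained."""
    return now - last_reload > RETENTION


# Hypothetical data: one run already stored, two runs returned by a reload.
stored = [{"id": "r1", "start_time": datetime(2024, 1, 1)}]
fetched = [
    {"id": "r1", "start_time": datetime(2024, 1, 1)},
    {"id": "r2", "start_time": datetime(2024, 1, 20)},
]
history = merge_runs(stored, fetched)
```

Keying on an identifier means a run that appears in two consecutive reloads is stored once, and `has_gap` flags the case where reloads were too far apart for the data to stay continuous.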

Limitations

- At the initial load, only 30 days of history are loaded. This is because Qlik Application Automation history is only stored for 30 days.

- For continuous data in the app, data must be loaded at least every 30 days.

- The API can only return the last 5000 runs for every automation. Please keep this in mind when scheduling the reloads of this app.
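To make the scheduling advice concrete, here is a rough Python sketch of how the two limits interact. It assumes a steady number of runs per day for the busiest automation; the function name is hypothetical and the result is an estimate, not a guarantee.

```python
MAX_RUNS_RETURNED = 5000  # per automation, as stated in the limitations
RETENTION_DAYS = 30       # run history is only stored for 30 days


def max_reload_interval_days(runs_per_day):
    """Longest reload interval (in days) that should not drop any runs.

    The interval is bounded by the 30-day retention and by how quickly the
    busiest automation produces 5000 runs.
    """
    if runs_per_day <= 0:
        return float(RETENTION_DAYS)
    return min(RETENTION_DAYS, MAX_RUNS_RETURNED / runs_per_day)


# An automation running 500 times a day fills the 5000-run window in 10 days.
busy_interval = max_reload_interval_days(500)
quiet_interval = max_reload_interval_days(100)
```

In practice the reload schedule would be set comfortably below the computed value, since run volume is rarely constant.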

Environment

Qlik Cloud

Qlik Application Automation

The information in this article is provided as-is and is to be used at your own discretion. Depending on tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

DataRobot Connector unavailable in Qlik Cloud data connections

When attempting to create a DataRobot connection in the Qlik Cloud tenant hub, the connection cannot be found.

Navigating to Add New > Data connection > and searching for DataRobot does not find the data connection option.

Resolution

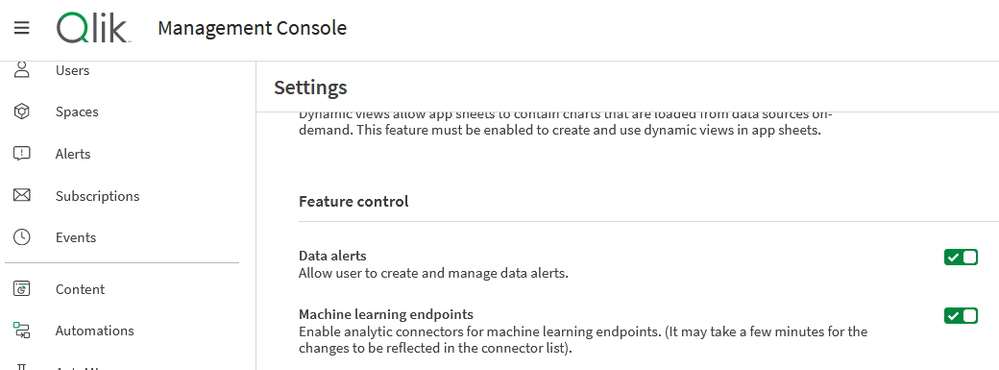

- Go to your TMC (tenant management console) settings

- Scroll down to the Feature control section

- Enable the following switch: Machine learning endpoints

- Return to the tenant hub

- Now go to Add New

- Click Data connection

- Type DataRobot and select it to continue with creating the DataRobot connection

Environment

Qlik Cloud

The information in this article is provided as-is and will be used at your discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

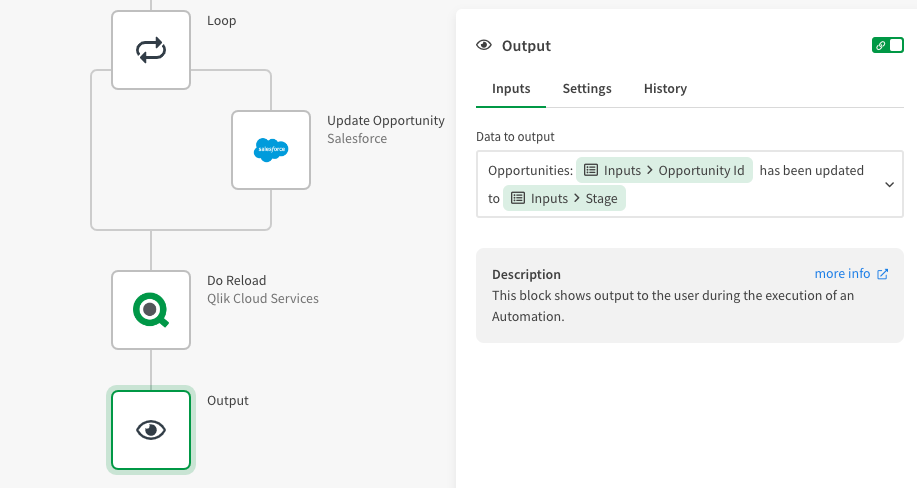

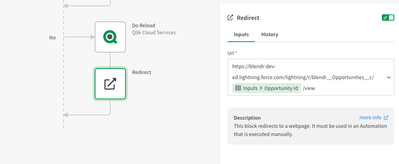

Make your Qlik Sense Sheet interactive with writeback functionality powered by Q...

Table of contents:

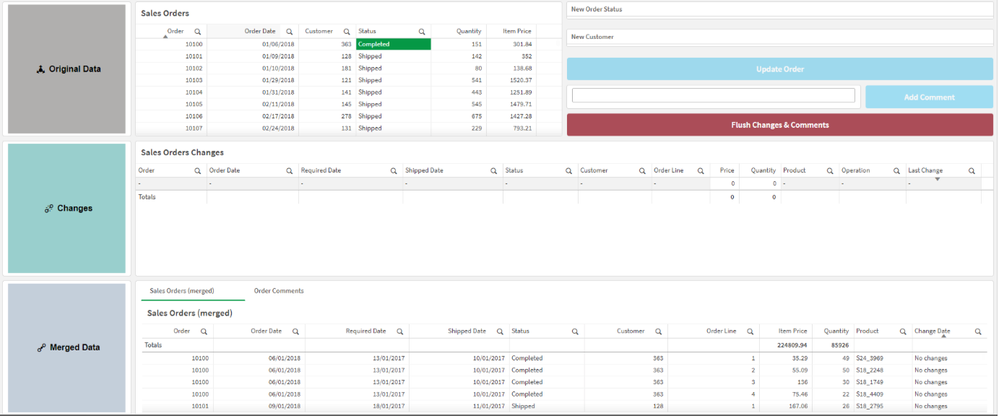

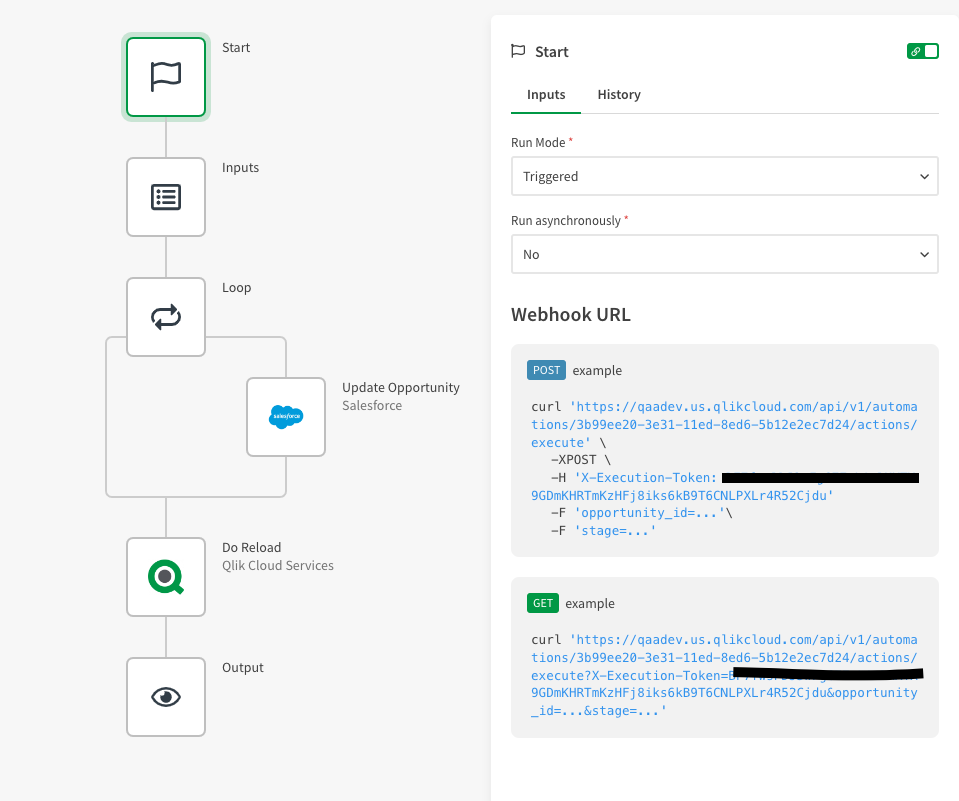

- Writeback use case

- Two ways to execute an automation from a Qlik Sense Sheet

- 1. Native 'execute automation' action in the Qlik button

- 2. Link a triggered automation from your Qlik Sense Sheet.

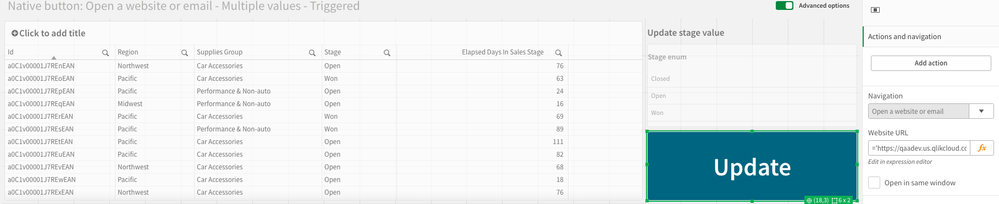

- Example 1: Open website or URL (button)

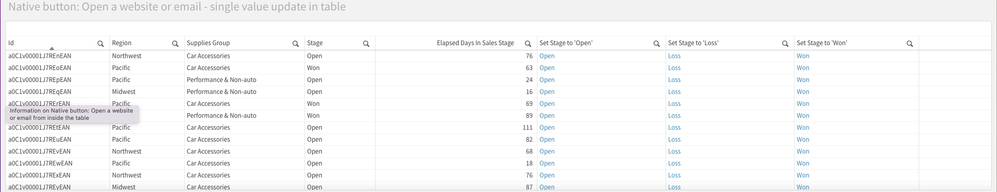

- Example 2: Add an action link in your straight table

- Example 2: Extension with input forms

- Additional Notes

- Demo application with automations

-

Tool to troubleshoot Talend Studio connection to Talend Management Console

The TestConnectivity.jar command line tool assists in troubleshooting Studio connections to the Talend Management Console (TMC). It provides output of connection information from simple to detailed through debug logging.

Content:

Example Usage

java <Optional parameter(s)> -jar TestConnectivity.jar <TMC location or URL> <Optional URL>

example: java -Djava.security.debug=certpath -Djavax.net.debug=all -jar TestConnectivity.jar US |& tee -a testconnectivity.out
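When the tool is called from a script, the usage pattern above can be assembled as an argument list. This is a minimal Python sketch: `build_command` is a hypothetical helper, and it only uses the keywords and JVM options that appear in this article (US, EU, AP, URL, DEBUG).

```python
import shlex


def build_command(target, *urls, debug=False, jvm_options=()):
    """Assemble the argument list for TestConnectivity.jar.

    target: a location keyword from this article, e.g. "US", "EU", "AP" or "URL".
    urls: optional URLs to test, placed after the target.
    """
    args = ["java", *jvm_options, "-jar", "TestConnectivity.jar"]
    if debug:
        args.append("DEBUG")  # keyword precedes the target, as in the samples
    args.append(target)
    args.extend(urls)
    return args


cmd = build_command(
    "US",
    jvm_options=["-Djava.security.debug=certpath", "-Djavax.net.debug=all"],
)
print(shlex.join(cmd))
```

The resulting list can be passed to `subprocess.run` as-is, which avoids shell quoting problems when proxy passwords or paths contain special characters.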

Sample Commands

java -jar TestConnectivity.jar HELP

java -jar TestConnectivity.jar DEBUG

java -jar TestConnectivity.jar US

java -jar TestConnectivity.jar EU

java -jar TestConnectivity.jar AP

java -jar TestConnectivity.jar URL http://mywebserver.com/

java -jar TestConnectivity.jar DEBUG URL http://mywebserver.com/ ftp://speedtest.tele2.net/

Java Debug Settings

-Djava.security.debug=certpath

-Djavax.net.debug=ssl

-Djavax.net.debug=all (Use this for default truststore information)

Additional Parameters

-Dhttps.proxySet=true

-Dhttps.proxyHost=MYPROXYHOST

-Dhttps.proxyPort=9090

-Dhttps.proxyUser=ME

-Dhttps.proxyPassword=MYPASSWORD

-Dhttps.nonProxyHosts=127.0.0.1|localhost|*.MYCOMPANY.com

-Djavax.net.ssl.trustStoreType=JKS

-Djavax.net.ssl.trustStore="C:/certs/cacerts"

-Djavax.net.ssl.trustStorePassword="changeit"

-Djavax.net.ssl.keyStoreType=JKS

-Djavax.net.ssl.keyStore="C:/certs/cacerts"

-Djavax.net.ssl.keyStorePassword="changeit"

Environment

- Talend Studio 7.3

- Talend Studio 8.0.1

-

Qlik Talend Products: Java 17 Migration Guide

From R2024-05, Java 17 will become the only supported version to start most Talend modules, enforcing the improved security of Java 17 and eliminating concerns about Java's end-of-support for older versions. In 2025, Java 17 will become the only supported version for all operations in Talend modules.

Starting from v2.13, Talend Remote Engine requires Java 17 to run. If some of your artifacts, such as Big Data Jobs, require other Java versions, see Specifying a Java version to run Jobs or Microservices.

Content

- Prerequisites

- Procedure

- Windows

- Linux

- MAC OS

- Multiple JDK versions

- Studio

- Remote Engine

- ESB - Runtime

- Studio

- Talend Administration Center (TAC)

- CICD

- Windows Users

- Linux Users

- Jenkins Users

- Additional Notes

- Specifying a Java version to run Jobs or Microservices

Prerequisites

| Qlik Talend Module | Patch Level and Version |
| --- | --- |
| Studio | Supported from R2023-10 onwards |
| Remote Engine | 2.13 or later |
| Runtime | 8.0.1-R2023-10 or later |

Procedure

Windows

For Windows users, please follow the JDK installation guide (docs.oracle.com).

Linux

For Linux users, please follow the JDK installation guide (docs.oracle.com).

MAC OS

For MAC OS users, please follow the JDK installation guide (docs.oracle.com).

Multiple JDK versions

When working with software that supports multiple versions of Java, it's important to be able to specify the exact Java version you want to use. This ensures compatibility and consistent behavior across your applications. Here is how to specify a Java version for the following products on machines with several JDKs installed (such as build servers, shared application servers, and similar):

Studio

For Studio users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps:

- Back up and edit the <Studio Home>\Talend-Studio-win-x86_64.ini file

- Prepend:

-vm

<JDK17 HOME>\bin\server\jvm.dll

Remote Engine

For Remote Engine (RE) users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps.

- Back up and edit the <RE HOME>/etc/talend-remote-engine-wrapper.conf file

- Modify the set.default.JAVA_HOME= property to point to the <JDK 17 HOME> path.

Note 1: If Remote Engine is not installed as a service, the JDK path is set in the <RE HOME>/bin/setenv file instead.

Note 2: When it comes to running Jobs or Microservices, you retain the flexibility to either use the default Java 17 version or choose older Java versions, through straightforward configuration of the engine.

How to modify?

In the etc folder, check the following configuration files and set the listed property to the path of the installed JDK/JRE:

- org.talend.ipaas.rt.dsrunner.cfg -> ms.custom.jre.path

- org.talend.remote.jobserver.server.cfg -> org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH

ESB - Runtime

For Runtime users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps.

- Back up and edit the <Runtime home>/etc/<TALEND-8-CONTAINER service>-wrapper.conf

- Modify the set.default.JAVA_HOME=C:\<JDK 17 HOME> path

If Runtime is not running as a service:

- Back up and edit the <Runtime home>/bin/setenv.sh

- Modify the SET JAVA_HOME= <JDK 17 HOME> path

Studio

- Data Integration (DI): After installing the 8.0 R2023-10 Talend Studio monthly update or a later one, if you switch the Java version to 17 and relaunch your Talend Studio with Java 17, you must enable your project settings for Java 17 compatibility.

- Go to Studio

- Go to File

- Edit Project properties

- Go to Build

- Go to Java Version

- Activate "Enable Java 17 compatibility"

With the Enable Java 17 compatibility option activated, any Job built by Talend Studio cannot be executed with Java 8. For this reason, verify the Java environment on your Job execution servers before activating the option.

- Big Data Users: Do not enable Java 17 compatibility unless your Spark Cluster supports Java 17.

Talend Administration Center (TAC)

To use Talend Administration Center with Java 17, you need to open the <tac_installation_folder>/apache-tomcat/bin/setenv.sh file and add the following commands:

# export modules
export JAVA_OPTS="$JAVA_OPTS --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Windows users use <tac_installation_folder>\apache-tomcat\bin\setenv.bat

CICD

Windows Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Back up and edit <Maven_home>\bin\mvn.cmd

- Modify to:

set "MAVEN_OPTS=%MAVEN_OPTS% --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Linux Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Back up and edit <Maven_home>/bin/mvn

- Modify to:

export MAVEN_OPTS="$MAVEN_OPTS \
--add-opens=java.base/java.net=ALL-UNNAMED \
--add-opens=java.base/sun.security.x509=ALL-UNNAMED \
--add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"
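If these options are applied by a provisioning script rather than by hand, it helps to append only the flags that are not already set, so repeated runs do not duplicate them. A minimal Python sketch follows; the helper names are hypothetical, and the package list is the one given in this article.

```python
ADD_OPENS_PACKAGES = (
    "java.base/java.net",
    "java.base/sun.security.x509",
    "java.base/sun.security.pkcs",
)


def add_opens_flags(packages=ADD_OPENS_PACKAGES):
    """Return one --add-opens flag per package, opened to unnamed modules."""
    return [f"--add-opens={package}=ALL-UNNAMED" for package in packages]


def extend_opts(existing, packages=ADD_OPENS_PACKAGES):
    """Append only the flags that are missing from an existing options string."""
    present = existing.split()
    missing = [flag for flag in add_opens_flags(packages) if flag not in present]
    return " ".join(present + missing)


# Hypothetical starting value that already contains one of the three flags.
maven_opts = extend_opts("-Xmx1g --add-opens=java.base/java.net=ALL-UNNAMED")
```

Calling `extend_opts` a second time on its own output returns the string unchanged, which makes the step safe to repeat.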

Jenkins Users

- Back up and edit the jenkins_pipeline_simple.xml

- Include the following in the TALEND_CI_RUN_CONFIG parameter:

<name>TALEND_CI_RUN_CONFIG</name>
<description>Define the Maven parameters to be used by the product execution, such as:
- Studio location
- debug flags
These parameters will be put to maven 'mavenOpts'.
If Jenkins is using Java 17, add:
--add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED
</description>

Additional Notes

Specifying a Java version to run Jobs or Microservices

Overview

Enable your Remote Engine to run Jobs or Microservices using a specific Java version.

By default, a Remote Engine uses the Java version of its environment to execute Jobs or Microservices. With Remote Engine v2.13 and onwards, Java 17 is mandatory for engine startup. However, when it comes to running Jobs or Microservices, you can specify a different Java version. This feature allows you to use a newer engine version to run the artifacts designed with older Java versions, without the need to rebuild these artifacts, such as the Big Data Jobs, which rely on Java 8 only.

When developing new Jobs or Microservices that do not exclusively rely on Java 8, that is to say, they are not Big Data Jobs, consider building them with the add-opens option to ensure compatibility with Java 17. This option opens the necessary packages for Java 17 compatibility, making your Jobs or Microservices directly runnable on the newer Remote Engine version, without having to go through the procedure explained in this section for defining a specific Java version. For further information about how to use this add-opens option and its limitation, see Setting up Java in Talend Studio.

Procedure

- Stop the engine.

- Browse to the <RemoteEngineInstallationDirectory>/etc directory.

- Depending on the type of the artifacts you need to run with a specific Java version, do the following:

For both artifact types, use backslashes to escape characters specific to a Windows path, such as colons, whitespace, and directory separators, while keeping in mind that directory separators are also backslashes on Windows.

Example:

c:\\Program\ Files\\Java\\jdk11.0.18_10\\bin\\java.exe

- For Jobs, in the <RemoteEngineInstallationDirectory>/etc/org.talend.remote.jobserver.server.cfg file, add the path to the Java executable file.

Example:

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=c:\\jdks\\jdk11.0.18_10\\bin\\java.exe

- For Microservices, in the <RemoteEngineInstallationDirectory>/etc/org.talend.ipaas.rt.dsrunner.cfg, add the path to the Java executable file.

Example:

ms.custom.jre.path=C\:/Java/jdk/bin

Make this modification before deploying your Microservices to ensure that these changes are correctly taken into account.

- Restart the engine.
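The escaping rule for Windows paths in the procedure above is easy to get wrong by hand. The following Python sketch shows one way to generate the escaped value; `escape_cfg_path` is a hypothetical helper, and the optional colon escaping reflects that the examples in this article show the drive-letter colon both escaped and unescaped.

```python
def escape_cfg_path(path, escape_colon=True):
    """Escape a Windows path for use as a value in the .cfg files above."""
    escaped = path.replace("\\", "\\\\")   # directory separators first
    escaped = escaped.replace(" ", "\\ ")  # then whitespace
    if escape_colon:
        escaped = escaped.replace(":", "\\:")  # then the drive-letter colon
    return escaped


java_exe = r"c:\Program Files\Java\jdk11.0.18_10\bin\java.exe"
job_launcher_line = (
    "org.talend.remote.jobserver.commons.config.JobServerConfiguration."
    "JOB_LAUNCHER_PATH=" + escape_cfg_path(java_exe, escape_colon=False)
)
print(job_launcher_line)
```

Backslashes are doubled before any other replacement so that the backslashes introduced for spaces and colons are not doubled again.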

-

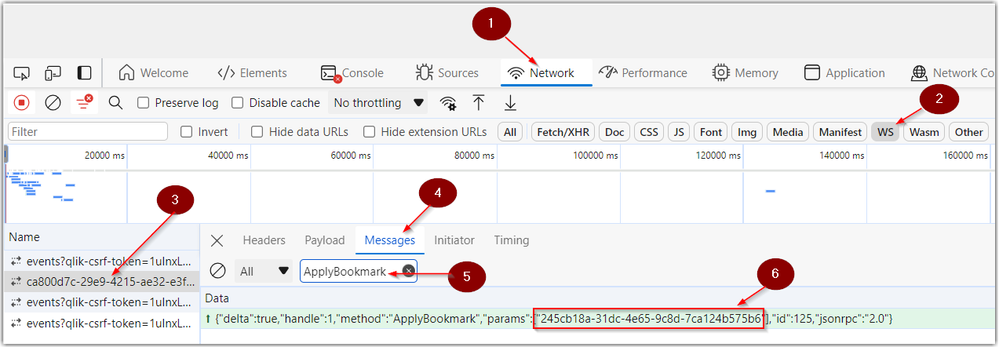

How to determine a bookmark ID in Qlik Cloud

Looking for the bookmark ID in Qlik Cloud? Follow the steps below to obtain the ID using Chrome Developer Tools:

- Open Chrome Developer Tools and click on the Network tab

- Click on the filter WS (for WebSockets)

- Reload the page to make sure you see your connection in the Name column

- Click on Messages

- Enter ApplyBookmark in the messages filter pane

- Locate the bookmark ID in the highlighted message
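If you copy the highlighted message out of the Messages pane, the ID can also be extracted programmatically. This Python sketch assumes the message is a JSON-RPC request whose `method` is `ApplyBookmark` and whose `params` carry the ID either positionally or under a `qId` key; that frame shape and the sample ID are assumptions, so compare them with what your browser actually shows.

```python
import json


def bookmark_id_from_frame(frame_text):
    """Return the bookmark ID from an ApplyBookmark request frame, else None."""
    try:
        message = json.loads(frame_text)
    except json.JSONDecodeError:
        return None
    if not isinstance(message, dict) or message.get("method") != "ApplyBookmark":
        return None
    params = message.get("params")
    if isinstance(params, list) and params:
        return params[0]          # positional form: ["<id>"]
    if isinstance(params, dict):
        return params.get("qId")  # named form: {"qId": "<id>"}
    return None


# Hypothetical frame text; the ID below is made up for illustration.
sample = '{"jsonrpc": "2.0", "id": 5, "handle": 1, "method": "ApplyBookmark", "params": ["abc-123"]}'
bookmark_id = bookmark_id_from_frame(sample)
```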

Environment

-

Snowflake - How to get started with Snowflake in Automations

Note: We recently (May 25th) released a new version of the Snowflake connector. If you had automations using Snowflake prior to that date, the connector will show as Snowflake - deprecated. To use the new version, simply replace those blocks with blocks from the current Snowflake connector.

This article gives an overview of the available blocks in the Snowflake connector in Qlik Application Automation. It will also go over some basic examples of retrieving data from a Snowflake database and creating a record in a database.

Connector overview

This connector has the following blocks:

- List Tables: returns a list of the tables from the connected database.

- List Records: returns a list of records from the specified table

- Insert Record: create one record in the specified table.

- Upsert Record: create or update one record in the specified table.

- Insert Bulk: create multiple records in the specified table.

- Upsert Bulk: create or update multiple records in the specified table.

- Do Query: do a generic SQL query against the connected Snowflake database.

- Update Record by One Field: update a single record in the specified table.

- Delete Record: delete one record in the specified table.

- List Schemas: returns a list of schemas from the connected database.

Authentication

To create a new connection to Snowflake, the following parameters are required:

- account_name -> your Snowflake account/tenant name, 'ABC' if your Snowflake URL is abc.snowflakecomputing.com.

- username -> username for the user that has remote access to the Snowflake database.

- password -> password used to authenticate the above username

- dbname -> the name of the database you want to use for this connection. If you want to connect to multiple databases in the same automation, you'll need to create multiple connections.

- warehouse -> the name of the warehouse you want to use for this connection.

Examples

Insert a new record into a table

- Add the Insert Record block from the Snowflake connector to your automation.

- Configure the block to point to a table in the database you're currently connected to. Feel free to use the do lookup function for this.

- Run the automation. This will insert a new record in your Snowflake table.

Use the Do Query block to create a new table

The Do Query block can be used to perform actions in Snowflake that aren't supported by the other blocks. See the below example on creating a new table.

- Create a new automation

- Search for the Snowflake connector in the left-hand side menu and find the Do Query block. Drag this block inside the automation. Highlight the Do Query block by clicking it and configure it in the right-hand side menu.

- Add your query to create a new table in the Query input field. Here is a query example that creates a table:

- Run the automation. This will create a new table with the specified structure within the selected database.
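The query from the original article is not reproduced in this copy. As an illustration only, the Python sketch below holds a CREATE TABLE statement of the kind that could be pasted into the Query input field. The table name, column names, and types are hypothetical, and the statement is executed against in-memory SQLite purely as a syntax check; SQLite stands in for Snowflake here and does not reproduce Snowflake's type system.

```python
import sqlite3

# Hypothetical table and columns; adjust names and types for your own schema.
CREATE_TABLE_QUERY = """
CREATE TABLE IF NOT EXISTS customers (
    id INTEGER,
    name VARCHAR(100),
    created_at TIMESTAMP
)
"""

# Syntax check only, using SQLite as a local stand-in for Snowflake.
connection = sqlite3.connect(":memory:")
connection.execute(CREATE_TABLE_QUERY)
columns = [row[1] for row in connection.execute("PRAGMA table_info(customers)")]
connection.close()
```

Only the text of `CREATE_TABLE_QUERY` would go into the Do Query block; the surrounding Python exists to validate the statement locally.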

-

How to import and export master items using Microsoft Excel with Qlik Applicatio...

This article explains how to import and export master items to and from a Qlik Sense app using the Microsoft Excel connector in Qlik Application Automation.

Content:

- Export master items to a Microsoft Excel sheet

- Import master items from a Microsoft Excel sheet

- Edge cases & next steps

The first part of this article will explain how to export all of your master items configured in your Qlik Sense App to a Microsoft Excel sheet. The second part will explain how to import those master items from the Microsoft Excel sheet back to a Qlik Sense App.

Export master items to a Microsoft Excel sheet

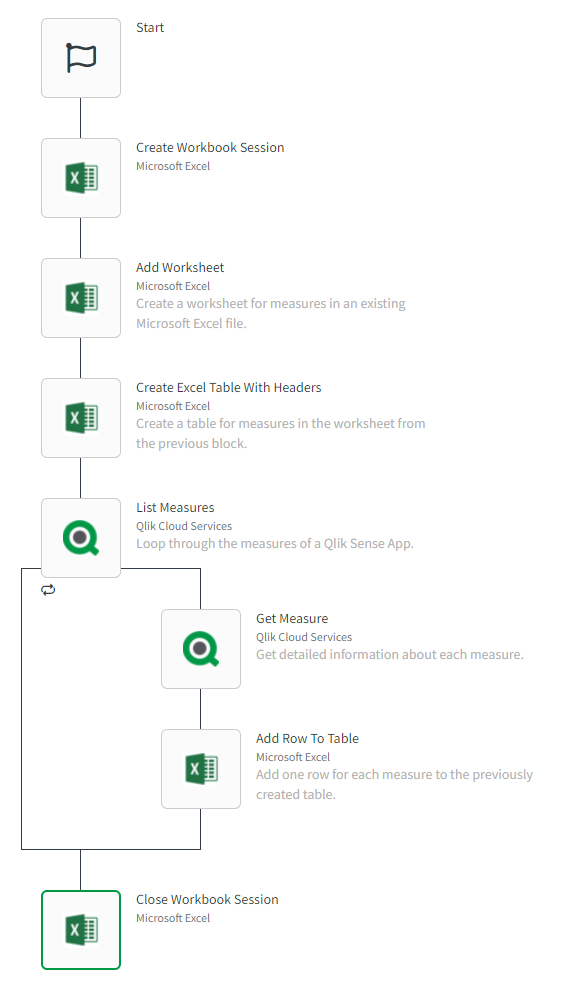

For this, you will need a Qlik Sense app in your tenant that contains measures, dimensions, and variables you want to export. You'll also need an empty Microsoft Excel file. The image below contains a basic example on exporting master items.

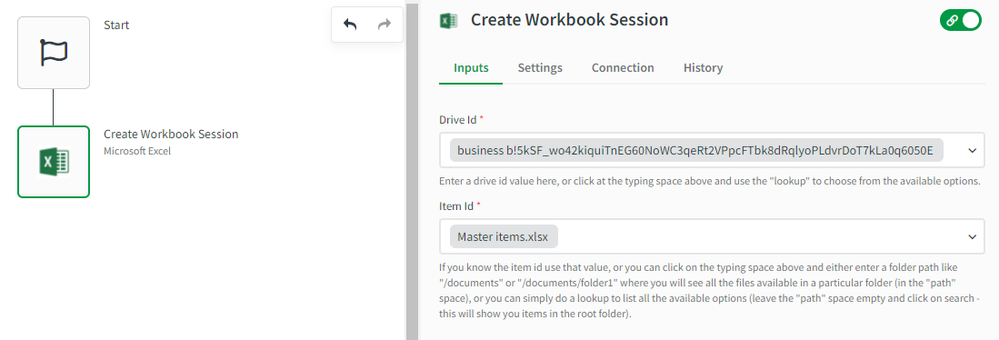

The following steps will guide you through recreating the above automation:

- Add the Create Workbook Session block from the Microsoft Excel connector. Configure it with the following settings:

- Drive id -> use do lookup

- Item id -> use do lookup to find the empty destination file, if you don't know the path of your file, you can do an empty search (if it isn't located in a folder)

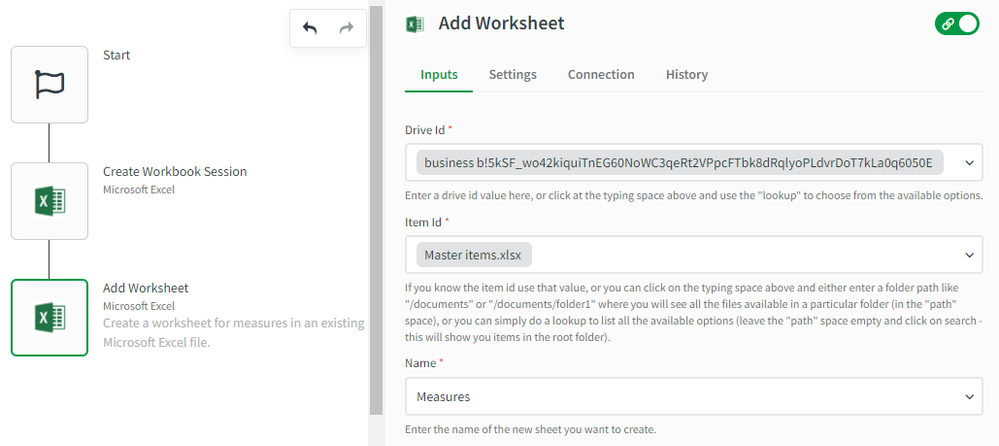

- Add the Add Worksheet block from the Microsoft Excel connector. Use the same Drive Id & Item Id from the previous step and configure the Name parameter to a string of your choice. In this example, we'll use Measures.

- Add the Create Excel Table With Headers block from the Microsoft Excel connector. This block will create a table inside the sheet from the previous step. Specify the following in the block's configuration:

- Start Row -> 1

- Start Column -> A

- End Column -> E

- Headers -> Field,Name,Label Expression,Description,Tags,Measure Color, Segment Color, Number Format

- Name -> Measures

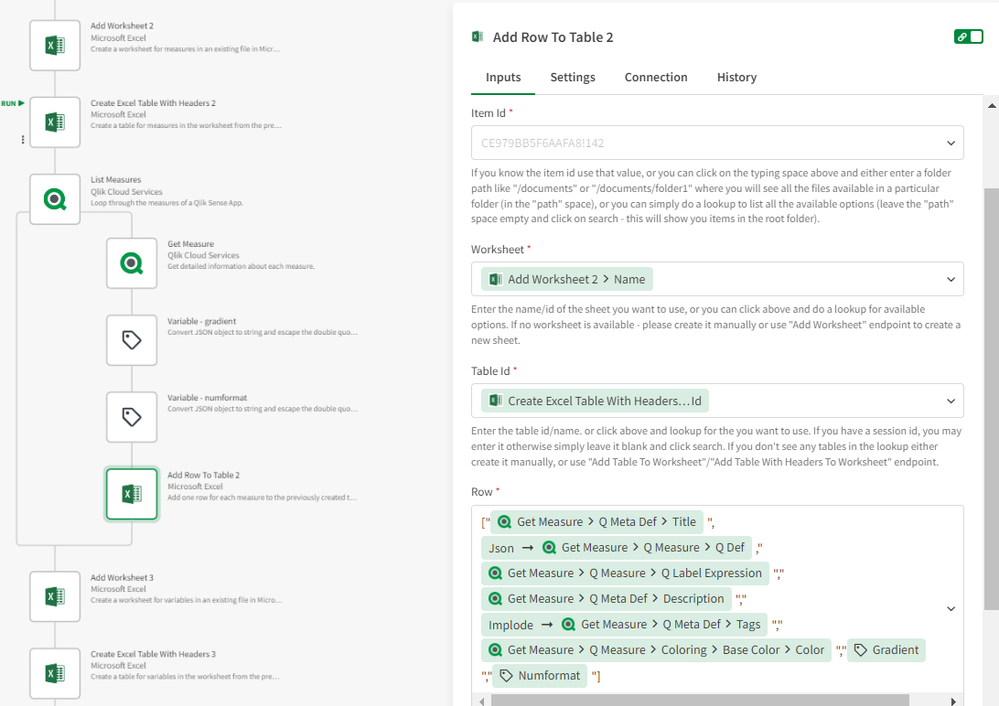

- Add a List Measures block from the Qlik Cloud Services connector, then add a Get Measure block inside the loop created by the List Measures block and configure it to get the current item in the loop. Add two variables to convert the Gradient and NumFormat JSON objects to strings.

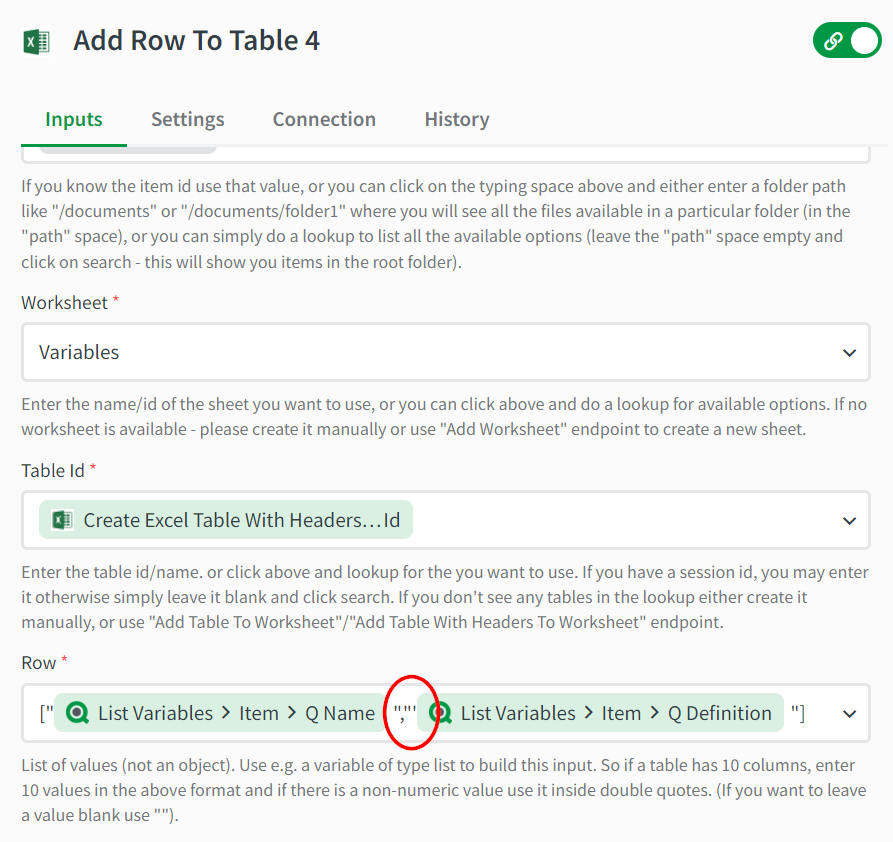

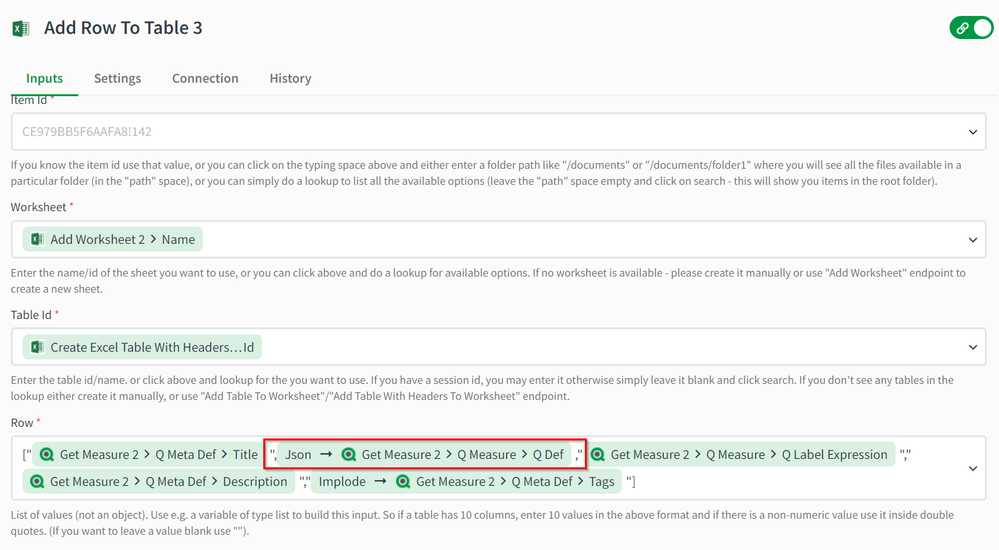

- Add an Add Row To Table block from the Microsoft Excel connector inside the loop after the Get Measure block. This will add every measure's information to the table one by one. Set the Drive Id, Item Id, Worksheet, and Table Id to the corresponding values from the previous blocks.

The Row parameter should be an array of values for every header we specified in the Create Excel Table With Headers block (and in the same order). Title, Label expression, and Description should be encapsulated in double-quotes. Apply the JSON encode formula to the value for the Definition (qDef) and apply the Implode formula to the value for the Tags.

- Add a Close Workbook Session block from the Microsoft Excel connector. Specify the same Drive Id & Item Id as in the previous blocks and configure the Session Id to the Id returned by the Create Workbook Session block.

An export of the above automation can be found at the end of this article as Export master items to a Microsoft Excel sheet.json

Import master items from a Microsoft Excel sheet

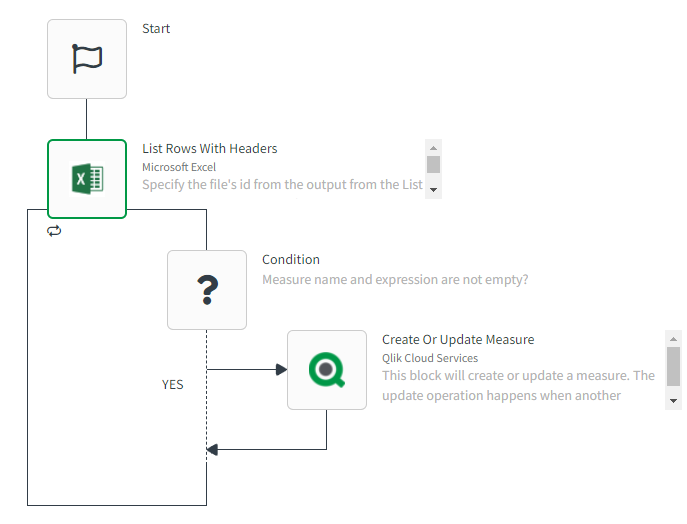

For this example, you'll first need a Microsoft Excel file with sheets configured for each master item type (dimensions, measures, and variables). Use the above example to generate this file. The image below contains a basic example on importing master items from Microsoft Excel to a Qlik Sense app.

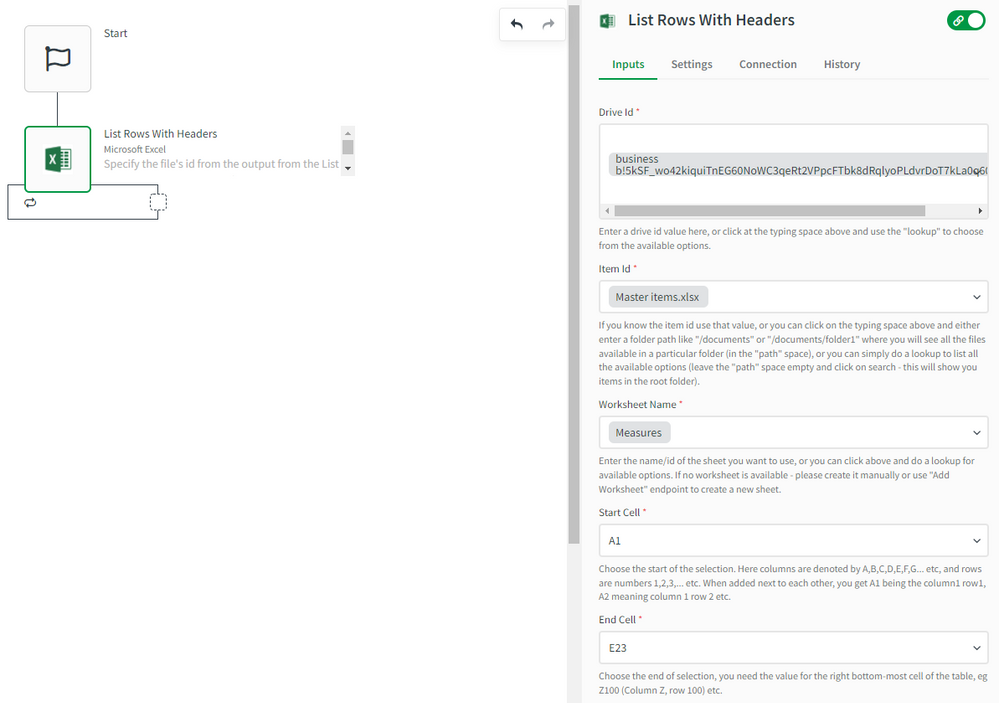

- Add the List Rows With Headers block from the Microsoft Excel connector to read master items from the Excel file. Configure the block with the following settings:

- Drive Id -> use do lookup

- Item Id -> use do lookup to find the empty destination file, if you don't know the path of your file, you can do an empty search (if it isn't located in a folder)

- Worksheet Name -> the name of the sheet that contains the measures. Feel free to use do lookup

- Start Cell -> the upper-left cell of the measures table, this should include the header row, for example, A1

- End Cell -> the bottom right cell of the measures table, for example, E23

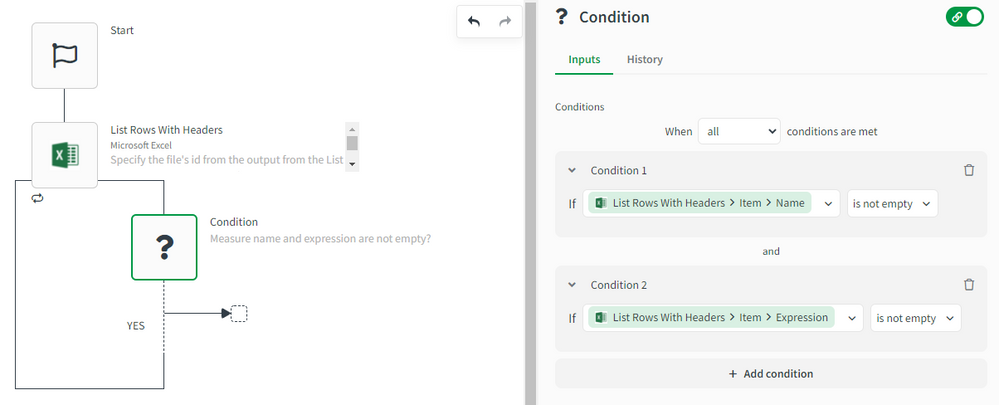

- Add a Condition block inside the loop created by the List Rows With Headers block. The condition will verify that every measure row has the required information to create a measure (name and expression).

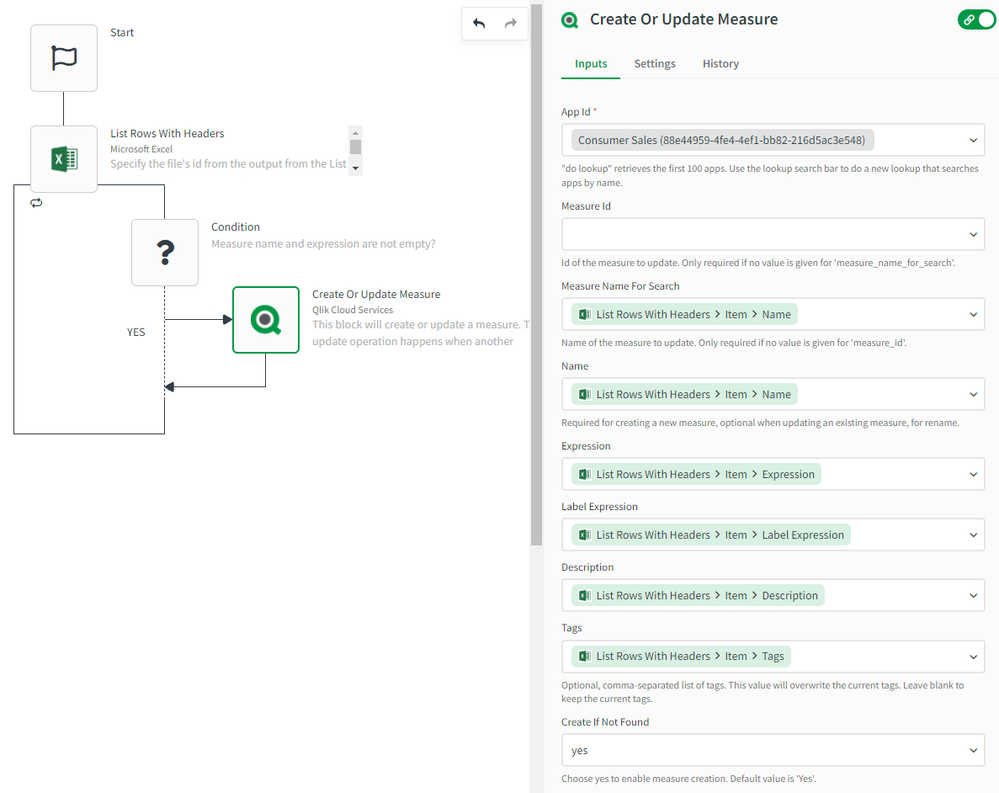

- Add the Create Or Update Measure block from the Qlik Cloud Services connector to create or update the measure in the destination app. Map each field from the row in the Excel table to the corresponding input field in the Create Or Update Measure block. Measure Id can be left empty since we're matching measures by Name (Measure Ids can be different across apps).

An export of the above automation can be found at the end of this article as Import master items from a Microsoft Excel Sheet.json

Follow the same steps to build automations that import/export dimensions and variables.
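The check performed by the Condition block in the import steps can be sketched in Python as follows. This is an illustration, not the automation itself: it assumes each row is a mapping keyed by the header names, and that the expression sits in the Field column. Both are assumptions, so the keys are passed as parameters.

```python
def is_valid_measure_row(row, name_key="Name", expression_key="Field"):
    """A row qualifies only when both name and expression are non-empty."""
    name = (row.get(name_key) or "").strip()
    expression = (row.get(expression_key) or "").strip()
    return bool(name) and bool(expression)


# Hypothetical rows as they might come out of List Rows With Headers.
rows = [
    {"Field": "Sum(Sales)", "Name": "Total Sales"},
    {"Field": "", "Name": "Missing expression"},
    {"Field": "Avg(Margin)", "Name": None},
]
valid_rows = [row for row in rows if is_valid_measure_row(row)]
```

Rows that fail the check are skipped, so a partially filled sheet does not create empty or unnamed measures in the destination app.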

Edge cases & next steps

Let's go over some edge cases when exporting information to Microsoft Excel:

- Fields that start with an equals-sign '=' (for example, some variables' definitions) are treated as Excel functions and can be deemed invalid by the Excel API. You can resolve this by adding a single quote before the input field's mapping in the automation.

- Fields that contain newlines (for example measure expressions that contain comments) are invalidated by the Excel API. The solution here is to use the JSON formula to encode the string.
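Both edge cases can be expressed as a small pre-processing step. The Python sketch below mirrors the two fixes described above; `excel_safe` is a hypothetical helper, and `json.dumps` stands in for the JSON formula available in the automation editor.

```python
import json


def excel_safe(value):
    """Prepare a string so the Excel API is less likely to reject it."""
    if "\n" in value or "\r" in value:
        return json.dumps(value)  # encode newlines, as the JSON formula would
    if value.startswith("="):
        return "'" + value        # leading single quote stops formula parsing
    return value


variable_definition = excel_safe("=Sum(Sales)")
commented_expression = excel_safe("Sum(Sales)\n// total sales")
```

A JSON-encoded value starts with a double quote, so a multi-line expression that also begins with an equals sign does not need the extra single quote.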

Please check the following articles for more information about working with master items in Qlik Application Automation and also uploading data to Microsoft Excel.

- How to get started with Microsoft Excel

- Distribution of Master items using Qlik Application Automation

- Uploading data to Microsoft Excel

Follow the steps in the article How to import & export automations to import the automation from the shared JSON file.

-

How to add a list of values in a selection to a report in Qlik Application Autom...

This article explains how a list can be used as values for the Add Selection To Report and Add Selection To Sheet blocks in the Qlik Reporting connector in Qlik Application Automation.

You might have noticed that the Values input field in these blocks only allows you to specify values one by one. But in some scenarios, you'll want to specify a list of field values instead of adding them one by one.

If you're new to reporting, please read our Reporting tutorial first.

Preparation

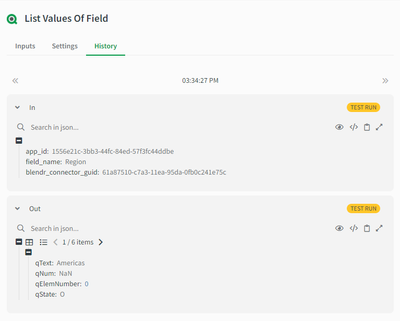

The source of these values can either be the List Values Of Field block from the Qlik Cloud Services connector or any List ... block from a 3rd party storage tool like Microsoft Excel. In this example, we'll use the List Values Of Field block.

The example automation used in this article looks like this:

And this is what the example output of the List Values Of Field block looks like:

Solution

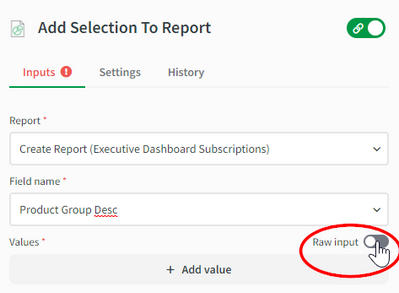

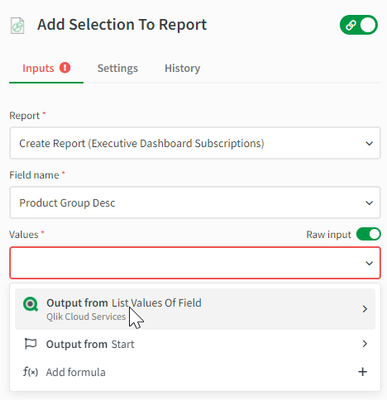

In this case, the qText parameter is required as the value to make selections. Go to the Add Selection To Report block, make sure to specify the same field name as the one used in the List Values Of Field block, and enable the "Raw input" mode:

Remove the square brackets from the input field and click it to select the "Output from List Values Of Field" as the input for the Values input field:

This takes you to the output of the List Values Of Field block. Click the qText parameter, then choose "Select all qText(s) from list ListValuesOfField" on the next screen.

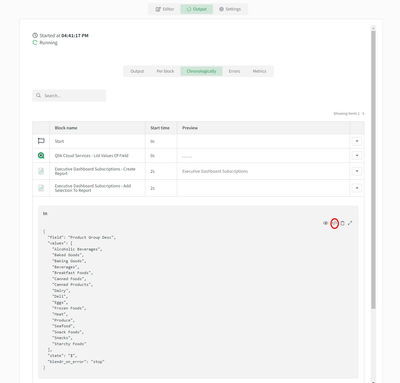

That's it! When the automation now runs, a list of strings is mapped as the value for this selection. You can verify this by toggling the view mode in the automation's chronological output view:

If you want to use multiple selections for this report, add additional Add Selection To Report or Add Selection To Sheet blocks.
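Conceptually, the "Select all qText(s)" choice turns a list of objects into a flat list of strings. A minimal Python sketch of that mapping follows; the shape of the sample output, including the `qNum` key and the values, is an assumption made for illustration.

```python
def select_all_qtext(field_values):
    """Collect the qText of every item, skipping items that lack one."""
    return [item["qText"] for item in field_values if "qText" in item]


# Hypothetical output of a List Values Of Field block.
list_values_of_field = [
    {"qText": "Germany", "qNum": "NaN"},
    {"qText": "Sweden", "qNum": "NaN"},
    {"qNum": "NaN"},
]
selection_values = select_all_qtext(list_values_of_field)
```

The resulting list of strings is what the Values input field receives when raw input mode is enabled.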

-

Optimizing Performance for Qlik Sense Enterprise

This Techspert Talks session addresses:

- Understanding Back-end Infrastructure

- Measure

- Monitor

- Troubleshoot

Tip: Download the LogAnalyzer app here: LogAnalysis App: The Qlik Sense app for troubleshooting Qlik Sense Enterprise on Windows logs.

00:00 - Intro

01:22 - Multi-Node Architecture Overview

04:10 - Common Performance Bottlenecks

05:38 - Using iPerf to measure connectivity

09:58 - Performance Monitor Article

10:30 - Setting up Performance Monitor

12:17 - Using Relog to visualize Performance

13:33 - Quick look at Grafana

14:45 - Qlik Scalability Tools

15:23 - Setting up a new scenario

18:26 - Look at QSST Analyzer App

19:21 - Optimizing the Repository Service

21:38 - Adjusting the Page File

22:08 - The Sense Admin Playbook

23:10 - Optimizing PostgreSQL

24:29 - Log File Analyzer

27:06 - Summary

27:40 - Q&A: How to evaluate an application?

28:30 - Q&A: How to fix engine performance?

29:25 - Q&A: What about PostgreSQL 9.6 EOL?

30:07 - Q&A: Troubleshooting performance on Azure

31:22 - Q&A: Which nodes consume the most resources?

31:57 - Q&A: How to avoid working set breaches on engine nodes?

34:03 - Q&A: What do QRS log messages mean?

35:45 - Q&A: What about QlikView performance?

36:22 - Closing

Resources:

LogAnalysis App: The Qlik Sense app for troubleshooting Qlik Sense Enterprise on Windows logs

Qlik Help – Deployment examples

Using Windows Performance Monitor

PostgreSQL Fine Tuning starting point

Qlik Sense Shared Storage – Options and Requirements

Qlik Help – Performance and Scalability

Q&A:

Q: Recently I'm facing Qlik Sense proxy servers RAM overload, although there are 4 nodes and each node it is 16 CPUs and 256G. We have done App optimazation, like delete duplicate app, remove old data, remove unused field...but RAM status still not good, what is next to fix the performace issue? Apply more nodes?

A: Depends on what you mean by “RAM status still not good”. Qlik Data Analytics software will allocate and use memory within the limits established and does not release this memory unless the Low Memory Limit has been reached and cache needs cleaning. If RAM consumption remains high but no other effects, your system is working as expected.

Q: Similar to other database, do you think we need to perform finetuning, cleaning up bad records within PostgresQL , e.g.: once per year?

A: Periodic cleanup, especially in a rapidly changing environment, is certainly recommended. A good starting point: set your Deleted Entity Log table cleanup settings to appropriate values, and avoid clean-up tasks kicking in before user morning rampup.

Q: Does QlikView Server perform similarly to Qlik Sense?

A: It uses the same QIX Engine for data processing. There may be performance differences to the extent that QVW Documents and QVF Apps are completely different concepts.

Q: Is there a simple way (better than restarting QS services) to clean the cache, because cache at around 90% slows down QS?

A: It’s not quite as simple. Qlik Data Analytics software (and by extension, your users) benefits from keeping data cached as long as possible. This way, users consume pre-calculated results from memory instead of computing the same results over and over. Active cache clearing is detrimental to performance. High RAM usage is entirely normal, based on the Memory Limits defined in QMC. You should not expect Qlik Sense (or QlikView) to manage memory like regular software. If work stops, this does not mean memory consumption will go down; we expect to receive and serve more requests, so we keep as much cached as possible. Long-winded, but I hope this sets better expectations when considering “bad performance” without the full technical context.

Q: When CPU hits 100%, how do we know what the culprit is, for example too many concurrent users loading apps/datasets or multiple apps and QVDs reloading? Can we see that anywhere?

A: We will provide links to the Log Analysis app I demoed during the webinar; this is a great place to start. Set Repository Performance logs to DEBUG for the QRS performance part, start analysing service resource usage trends and get to know your user patterns.

Q: Can there be repository connectivity issues with too many nodes?

A: You can only grow an environment so far before hitting physical limits to communication. As a best practice, with every new node added, QRS Connection Pools and DB connectivity should be reviewed and increased where necessary. The most common problem here is that you have added more nodes than the DB or Repository Services allow connections for. This will almost guarantee communication issues.

Q: Do the Qlik Scalability Tools measure browser rendering time as well, or do they only work at the API layer?

A: Excellent question, it only evaluates at the API call/response level. For results that include browser-side rendering, other tools are required (LoadRunner, complex to set up, expert help needed).

Transcript:

Hello everyone and welcome to the November edition of Techspert Talks. I’m Troy Raney and I’ll be your host for today's session. Today's presentation is Optimizing Performance for Qlik Sense Enterprise with Mario Petre. Mario why don't you tell us a little bit about yourself?

Hi everyone; good to be here with everybody once again. My name is Mario Petre. I’m a Principal Technical Engineer in the Signature Support Team. I’ve been with Qlik over six years now and since the beginning, I’ve focused on Qlik Sense Enterprise backend services, architecture and performance from the very inception of the product. So, there's a lot of historical knowledge that I want to share with you and hopefully it's an interesting springboard to talk about performance.

Great! Today we're going to be talking about how a Qlik Sense site looks from an architectural perspective; what are things that should be measured when talking about performance; what to monitor after going live; how to troubleshoot and we'll certainly highlight plenty of resources and where to find more details at the end of the session. So Mario, we're talking about performance for Qlik Sense Enterprise on Windows; but ultimately, it's software on a machine.

That's right.

So, first we need to understand what Qlik Sense services are and what type of resources they use. Can you show us an overview from what a multi-node deployment looks like?

Sure. We can take a look at how a large Enterprise environment should be set up.

And I see all the services have been split out onto different nodes. Would you run through the acronyms quickly for us?

Yep. On a consumer node this is where your users come into the Hub. They will come in via the Qlik Proxy Service and consume applications via the Qlik Engine Service, that ultimately connects to the central node and everything else via the Qlik Repository Service.

Okay.

The green box is your front-end services. This is what end users tap into to consume data, but what facilitates that in the background is always the Repository Service.

And what's the difference between the consumer nodes on the top and the bottom?

These two nodes have a Proxy Service that balances against their own engines as well as other engines; while the consumer nodes at the bottom are only there for crunching data.

Okay.

And then we can take a look at the backend side of things. Resources are used to the extent that you're doing reloads. You will have an engine there, as well as the primary role for the central node (active and failover), which is the Repository Service coordinating communication between all the rest of the services. You can also have a separate node for development work. And ultimately, for an environment of this size, we also expect a dedicated storage solution and a dedicated central Repository Database host, either locally managed or in one of the cloud providers, like AWS RDS for example.

Between the front-end and back-end services where's the majority of resource consumption, and what resources do they consume?

Most of the resource allocation here is going to go to the Engine Service; and that will consume CPU and RAM to the extent that it's allocated to the machine. And that is done at the QMC level where you set your Working Set Limits. But in the case of the top nodes, the Proxy Service also has a compute cost as it is managing session connectivity between the end user's browser and the Engine Service on that particular server. And the Repository Service is constantly checking the authorization and permissions. So, ultimately front-end servers make use of both front-end and back-end resources. But you also need to think about connectivity. There is the data streaming from storage to the node where it will be consumed and then loading from that into memory. And these are three different groups of resources: you have compute; you have memory, and you have network connectivity. And all three have to be well suited for the task for this environment to work well.

And we're talking about speed and performance like, how fast is a fast network? How can we even measure that?

So, for any Enterprise environment, we would start at a 10 Gb network speed, and ultimately we expect a response time of 4 ms between any node and the storage back end.

Okay. So, what are some common bottlenecks and issues that might arise?

All right. So, let's take a look at some examples. The Repository Service failing to communicate with rim nodes or with local services: I would immediately try to verify that the Repository Service connection pool and network connectivity are stable. Let's say apps load very slowly the first time. This is where network speed really comes into play. Another example: the QMC or the Hub takes a very long time to load. For that, we would have to look into the communication between the Repository Service and the Database, because that's where we store all of the metadata that we use to calculate your permissions.

And could that also be related to the rules that people have set up and the number of users accessing?

Absolutely. You can hurt user experience by writing complex rules.

What about lag in the app itself?

This is now being consumed by the Engine Service on the consumer node. So, I would immediately try to evaluate resource consumption on that node, primarily CPU. Another great example is high Page File usage. We prefer memory for working with applications. So, as soon as we try to cache and pull those results again from disk, performance will suffer. And ultimately, the direct connectivity. How good and stable is the network between the end user's machine and the Qlik Sense infrastructure? The symptom will be on the end user side, but the root cause will almost always (I mean 99.9% of the time) be down to some effect in the environment.

So, to get an understanding of how well the machine works and establish that baseline, what can we use?

Of these (CPU, RAM, disk, network), one simple way to measure the network part is this neat little tool called iPerf.

Okay. And what are we looking at here?

This is my central node.

Okay. And iPerf will measure what exactly?

How fast data transfer is between this central node and a client machine or another server.

And where can people find iPerf?

Great question. iPerf.fr

And it's a free utility, right?

Absolutely.

So, I see you've already got it downloaded there.

Right. You will have to download this package, both on the server and the client machine that you want to test between. We'll run this “As Admin.” We call out the command; we specify that we want it to start in “server mode.” This will be listening for connection attempts.

Okay.

We can define the port. I will use the default one. Those ports can be found in Qlik Help.

Okay.

The format for the output is megabytes, and for the refresh interval 5 seconds is perfectly fine. And then, we want as much output as possible.

Okay.

First, we need to run this. There we go. It started listening. Now, I’m going to switch to my client machine.

So, iPerf is now listening on the server machine and you're moving over to the client machine to run iPerf from there?

Right. Now, we've opened a PowerShell window into iPerf on the client machine. Then we call the iPerf command. This time, we're going to tell it to launch in “Client Mode.” We need to specify an IP address for it to connect to.

And that's the IP address of the server machine?

Right. Again, the port; the format so that every output is exactly the same. And here, we want to update every second.

Okay.

And this is a super cool option: if we use the bytes flag, we can specify the size of the data payload. I’m going to go with a 1 GB file (1024 MB). You can also define parallel connections. I want 5 for now.

So, that's like 5 different users or parallel streams of activity of 1 GB each between the server machine and this client machine?

Right. So, we actually want to measure how fast can we acquire data from the Qlik Sense server onto this client machine. We need to reverse the test. So, we can just run this now and see how fast it performs.

Okay. And did the server machine react the same way?

You can see that it produced output on the listening screen. This is where we started. And then it received and it's displaying its own statistics. And if you want to automate this, so that you have a spot check of throughput capacity between these servers, we need to use the log file option. And then we give it a path. So, I’m going to call this “iperf_serverside…” and launch it. And now, no output is produced.
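The server and client invocations described in this demo can be sketched as argument lists. This is a minimal illustration, assuming the iperf3 build of the tool (whose default port is 5201); the helper names, the IP address `192.0.2.10` and the log file name `iperf_server.log` are hypothetical and do not come from the session.

```python
from typing import List, Optional


def iperf_server_args(port: int = 5201, interval_s: int = 5,
                      logfile: Optional[str] = None) -> List[str]:
    """Arguments for a listening iperf3 server, as described in the demo."""
    # -s: server mode, -f M: report in MBytes, -i: refresh interval, -V: verbose
    args = ["iperf3", "-s", "-p", str(port), "-f", "M", "-i", str(interval_s), "-V"]
    if logfile:
        # With --logfile, output goes to the file instead of the console.
        args += ["--logfile", logfile]
    return args


def iperf_client_args(server_ip: str, port: int = 5201, interval_s: int = 1,
                      payload: str = "1024M", streams: int = 5,
                      reverse: bool = True) -> List[str]:
    """Arguments for an iperf3 client run against the server above."""
    # -n: bytes to transfer per stream, -P: number of parallel streams
    args = ["iperf3", "-c", server_ip, "-p", str(port), "-f", "M",
            "-i", str(interval_s), "-n", payload, "-P", str(streams)]
    if reverse:
        # -R reverses the test so the server sends and the client receives.
        args.append("-R")
    return args


if __name__ == "__main__":
    # Hypothetical log file name and server address, for illustration only.
    print(" ".join(iperf_server_args(logfile="iperf_server.log")))
    print(" ".join(iperf_client_args("192.0.2.10")))
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; the printed lines can be pasted into an elevated PowerShell window on each machine.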

Okay.

So, we can switch back to the client machine.

Okay. So, you're performing the exact same test again, just storing everything in a log file.

The test finished.

Okay. So, that can help you compare between what's being sent to what's being received, and see?

Absolutely. You can definitely have results presented in a way that is easy to compare across machines and across time. And initial results gave us a throughput per file of around 43.6 to 46 megabytes per second.

So, what about for an end user who's experiencing issues? Can you use iPerf to test the connectivity from a user machine on a different network?

Yep. So, in the background we will have our server; it's running and waiting for connections. And let's run this connection now from the client machine. We will make sure that the IP address is correct; default port; the output format in megabytes; we want it refreshed every second; and we are transferring 1 GB; and 5 parallel streams in reverse order. Meaning: we are copying from the server to the client machine. And let's run it.

Just seeing those numbers, they seem to be smaller than what we're seeing from the other machine.

Right. Indeed. I have some stuff in between to force it to talk a little slower. But this is one quick way to identify a spotty connection. This is where a baseline becomes gold: being able to demonstrate that your platform is experiencing a problem. Being able to quantify and specify what that problem is will reduce the time that you spend on outages and make you more effective as an admin.
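Comparing a new measurement against a stored baseline comes down to simple arithmetic. The sketch below is only an illustration: the function names and the 20% tolerance are assumptions, and the numbers in the usage line are examples rather than results from the session.

```python
def throughput_drop_pct(baseline_mb_s: float, measured_mb_s: float) -> float:
    """Percentage by which a measurement falls below the baseline."""
    if baseline_mb_s <= 0:
        raise ValueError("baseline must be positive")
    return (baseline_mb_s - measured_mb_s) / baseline_mb_s * 100.0


def is_degraded(baseline_mb_s: float, measured_mb_s: float,
                tolerance_pct: float = 20.0) -> bool:
    """True when the drop exceeds the (assumed) tolerance."""
    return throughput_drop_pct(baseline_mb_s, measured_mb_s) > tolerance_pct


if __name__ == "__main__":
    # Example values only: a 45 MB/s baseline against a 30 MB/s spot check.
    print(round(throughput_drop_pct(45.0, 30.0), 1), is_degraded(45.0, 30.0))
```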

Okay. That was network. How can admins monitor all the other performance aspects of a deployment? What tools are available and what metrics should they be measuring?

Right. That's a great question. The very basic is just Performance Monitor from Windows.

Okay.

The great thing about that is that we provide templates that also include metrics from our services.

Can you walk us through how to set up the Performance Monitor using one of those templates?

Sure thing. So, we're going to switch over first to the central node. So, the first thing that I want to do is create a folder where all of these logs will be stored.

Okay. So, that's a shared folder, good.

And this article is a great place to start. So, we can just download this attachment.

So, now it's time to set up a Performance Monitor proper. We need to set up a new Data Collector Set.

Giving it a name.

And create from template. Browse for it, and finish.

Okay. So it’s got the template. That's our new one Qlik Sense Node Monitor, right?

Yep. You'll have multiple servers all writing to the same location. The first thing is to define the name of each individual collector; and you do that here. And you can also provide a subdirectory for these collectors, and I suggest having one per node name. I will call this Central Node.

Everything that comes from this node, yeah.

Correct. You can also select a schedule for when to start these. We have an article on how to make sure that Data Collectors are started when Windows starts. And then a stop condition.

Now, setting up monitors like this; could this actually impact performance negatively?

There is always an overhead to collecting and saving these metrics to a file. But the overhead is negligible.

Okay.

I am happy with how this is defined. Now, this static collector on one of the nodes is already set up. There is an option here that's called Data Manager. What's important to define here is a Minimum Free Disk. We could go with 10 GB, for example; and you can also define a Resource Policy. The important bit is Minimum Free Disk. We want to Delete the Oldest (not the largest). In the Data Collector itself, we should change that directory and make sure that it points to our central location instead of locally; and we'll have to do this for every single node where we set this up.

Okay. So, that's that shared location?

Yep.

And you run the Data Collector there. And it creates a CSV file with all those performance counters. Cool.

So, here we have it now. If we just take a very quick look inside, we'll see a whole bunch of metrics. And if you want to visualize these really really quick, I can show you a quick tip that wasn't on the agenda but since we're here: on Windows, there is a built-in tool called Relog that is specifically designed for reformatting Performance Monitor counters. So, we can use Relog; we'll give it the name of this file; the format will be Binary; the output will be the same, but we'll rename it to BLG; and let's run it.
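The Relog conversion just described (CSV in, binary BLG out) can be sketched as a command line built in Python. The helper name and the example path are hypothetical; `-f BIN` and `-o` are the Relog options for output format and output file.

```python
from pathlib import PureWindowsPath
from typing import List


def relog_to_binary_args(csv_path: str) -> List[str]:
    """Build a relog command that converts a counter CSV to a .blg file."""
    source = PureWindowsPath(csv_path)
    # Same folder and file name, only the extension changes to .blg.
    target = source.with_suffix(".blg")
    return ["relog", str(source), "-f", "BIN", "-o", str(target)]


if __name__ == "__main__":
    # Hypothetical path; use the CSV written by your own Data Collector Set.
    print(" ".join(relog_to_binary_args(r"C:\PerfLogs\CentralNode.csv")))
```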

And now it created a copy in Binary format. Cool thing about this Troy is that: you can just double click on it.

It's already formatted to be a little more readable. Wow! Check that out.

There we go. Another quick tip: since we're here, first thing to do is: select everything and Scale; just to make sure that you're not missing any of the metrics. And this is also a great way to illustrate which service counters and system counters we collect. As you can see, there's quite a few here.

Okay. So, that Performance Monitor is, it's set up; it's running; we can see how it looks; and that is going to run all the time or just when we manually trigger it?

You can definitely configure it to run all the time, and that would be my advice. Its value is really realized as a baseline.

Yeah. Exactly. That was pretty cool seeing how that worked, using all the built-in utilities. And that Relog formatting for the Performance Monitor was new to me. Are there any other tools you'd like to highlight?

Yeah. So, Performance Monitor is built-in. For larger Enterprises that may already be monitoring resources in a centralized way, there's no reason why you shouldn't expect to include the Sense resources into that live monitoring. And this could be done via different solutions out there. A few come to mind like: Grafana, Datadog, Butler SOS, for example from one of our own Qlik luminaries.

Can we take a quick look at Grafana? I’ve heard of that but never seen it.

Sure thing. This is my host monitor sheet. It's nowhere near built to a corporate standard, but you can see here I’m looking at resources for the physical host where these VMs are running as well as the domain controller, and the main server where we've been running our CPU tests. And the great part about this is I have historical data as far back as, I believe, 90 days.

So, this is a cool tool that lets you like take a look at the performance and zoom-in and find the processes that might be causing some peaks or anything you want to investigate?

Right. Exactly. At least come up with a narrow time frame for you to look into the other tools and again narrow down the window of your investigation.

Yeah, that could be really helpful. Now I wanted to move on to the Qlik Sense Scalability Tools. Are those available on Qlik community?

That's right. Let me show you where to find them. You can see that we support all current versions including some of the older ones. You will have to go through and download the package and the applications used for analysis afterwards. There is a link over here. So, once the package is downloaded, you will get an installer. And the other cool thing about Scalability Tools is that you can use it to pre-warm the cache on certain applications since Qlik Sense Enterprise doesn't support application pre-loading.

Oh, cool. So, you can throttle up applications into memory like in QlikView. Can we take a look at it?

Yes, absolutely. This is the first thing that you'll see. We'll have to create a new connection. So, I’ll open a simple one that I’ve defined here and we can take a look at what's required just to establish a quick connection to your Qlik Sense site.

Okay, but basically the scenario that you're setting up will simulate activity on a Qlik Sense site to test its performance?

Exactly. You'll need to define your server hostname. This can be any of your proxy nodes in the environment. The virtual proxy prefix. I’ve defined it as Header and authentication method is going to be WebSocket.

Okay.

And then, if we want to look at how virtual users are going to be injected into the system, scroll over here to the user section. Just for this simple test, I’ve set it up for User List where you can define a static list of users like so: User Directory and UserName.

Okay. So, it's going to be taking a look at those 2 users you already predefined and their activity?

Exactly. We need to test the connection to make sure that we can connect to the system. Connection Successful. And then we can proceed with the scenario. This is very simple but let me show you how I got this far. So, the very first thing that we should do is to Open an App.

So, you're dragging away items?

Yep. I’m removing actions from this list. Let's try to change the sheet. A very simple action. And now we have four sheets, and we'll go ahead and select one of them.

Okay, so far, we have Opening the App and immediately changing to a sheet?

Yep. That's right. This will trigger actions in sequence exactly how you define them. It will not take into consideration things like Think Time. I will just define a static wait of 15 seconds, and then you can make selections.

But this is an amazing tool for being able to kind of stress test your system.

It's very very useful and it also provides a huge amount of detail within the results that it produces. One other quick tip: while defining your scenario, use easy to read labels, so that you can identify these in the Results Application. Let's assume that the scenario is defined. We will go ahead and add one last action and that is: to close, to Disconnect the app. We'll call this “OpenApp.” We'll call this “SheetChange.” Make sure you Save. The connection we've tested; we've defined our list of users. First, let's run the scenario. There is one more step to define and that is: to configure an Executor that will use this scenario file to launch a workload against our system. Create a New Sequence.

This is just where all these settings you're defining here are saved?

Correct. This is simply a mapping between the execution job that you're defining and which script scenario should be used. We'll go ahead and grab that. Save it again; and now we can start it. And now in the background if we were to monitor the Qlik Sense environment, we would see some amount of load coming in. We see that we had some kind of issue here: empty ObjectID. Apparently I left something in the script editor; but yeah, you kind of get the idea.

So, all this performance information would then be loaded into an app that is part of the package downloaded from Qlik community. How does that look?

So, here you will see each individual result set, and you can look at multiple exerciser runs in a single application. Unfortunately, we don't have more than one here to showcase that, but you would see multiple colored lines. There are metrics for a little bit of everything: your session ramp, your throughput by minute; you can change these.

CPU, RAM. This is great.

Exactly. CPU and RAM. These are not connected. We don't have those logs, but you would have them for a setup run on your system. These come from Performance Monitor as well, so you could just use those logs provided that the right template is in place. We see Response Time Distribution by Action, and these are the ones that I’ve asked you to change and name so that they're easy to understand.

Once your deployment is large enough to need to be multi-node and the default settings are no longer the best ones for you, what needs to be adjusted with the Repository Service to keep it from choking or to improve its performance?

That's a great question Troy. So, the first thing that we should take a look at is how the Repository communicates with the backend Database and vice versa. The connection pool for the Repository is always based on core count on the machine. And the best rule of thumb that we have to date is to take your core count on that machine, multiply it by 5, and that will be the max connection pool for the Repository Service for that node.

Can you show us where that connection pool setting can be changed?

Yes. So, we will go ahead and take a look. Here we are on the central node of my environment. You'll have to find your Qlik installation folder. We'll navigate to the Repository folder, Util, QlikSenseUtil, and we'll have to launch this “As Admin.”

Okay.

We'll have to come to the Connection String Editor. Make sure that the path matches. We just have to click on Read so that we get the contents of these files. And the setting that we are about to change is this one.

Okay. So, the maximum number of connections that the Repository can make?

Yes. And this is (again) for each node going towards the Repository Database.

Okay.

Again, this should be a factor of CPU cores multiplied by 5. If 90 is higher than that result, leave 90 in place. Never decrease it.
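The rule of thumb stated here (core count multiplied by 5, never going below the value already in place) is easy to express as a small calculation. This is a sketch only; the function name is hypothetical, and 90 is taken from the value mentioned in the session.

```python
def repository_pool_size(cpu_cores: int, current_setting: int = 90) -> int:
    """Max connection pool for one node: cores x 5, never lower than current."""
    if cpu_cores < 1:
        raise ValueError("cpu_cores must be at least 1")
    # Never decrease the existing value; only raise it when cores x 5 is higher.
    return max(cpu_cores * 5, current_setting)


if __name__ == "__main__":
    for cores in (8, 16, 24):
        print(cores, "cores ->", repository_pool_size(cores))
```

With 24 cores this gives 120, while 8 or 16 cores stay at 90 because cores x 5 does not exceed it.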

Okay, that's a good tip.

Right. I change this to 120. I have to Save. What I like to do here is: clear the screen and hit Read again; just to make sure that the changes have been persisted in the file.

Okay.

Once that's done, we can close this. We can restart the environment. We can get out of here.

So, there you adjusted the setting of how many connections this node can make to the QSR. Then assuming we do the same on all nodes, where do we adjust the total number of connections the Repository itself can receive?

That should be a sum of all of the connection strings from all of your nodes plus 110 extra for the central node. By default, here is where you can find that config file: Repository, PostgreSQL, and we'll have to open this one, PostgreSQL. Towards the end of the file…

Just going all the way to the bottom.

Here we have my Max Connections is 300.
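The sizing rule for the database side (the sum of every node's connection pool plus 110 extra for the central node) can be sketched the same way. The function name and the example pool sizes are hypothetical, not the values of the environment shown in the demo.

```python
from typing import Iterable

CENTRAL_NODE_EXTRA = 110  # extra connections for the central node, per the session


def postgres_max_connections(node_pool_sizes: Iterable[int]) -> int:
    """Sum of all per-node Repository connection pools plus the central extra."""
    return sum(node_pool_sizes) + CENTRAL_NODE_EXTRA


if __name__ == "__main__":
    # Hypothetical site: one node with a pool of 120 and two nodes with 90 each.
    print("max_connections =", postgres_max_connections([120, 90, 90]))
```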

Okay. One other setting you mentioned was the Page File and something to be considered. How would we make changes or adjust that setting?

Right. So, this is a Windows level setting that's found in Advanced System Settings; Advanced tab; Performance; and then again Advanced; and here we have Virtual Memory.

Okay.

We have to hit Change. We'll have to leave it at System Managed or understand exactly which values we are choosing and why. If you're not sure, the default should always be System Managed.

Now, I want to know what resources are available for Qlik Sense admins; specifically, what is the Admin Playbook?

It's a great starting place for understanding what duties and responsibilities one should be thinking about when administering a Qlik Sense site.

So, these are a bunch of tools built by Qlik to help analyze your deployment in different ways. I see weekly, monthly, quarterly, yearly, and a lot of different things are available there.

Yeah. So, we can take a look at Task Analysis, for example. The first time you run it, it's going to take about 20 minutes; thereafter about 10. The benefits: it shows you really in depth how to get to the data and then how to tweak the system to work better based on what you have.

Yeah, that's great.

Right? So, not only do we put the tools in your hands, but we also show how to build these tools, as you can see here. We have instructions on how to come up with these objects from scratch. An absolute must-read for every system admin out there.

Mario, we've talked about optimizing the Qlik Sense Repository Service, but not about Postgres? Do larger Enterprise level deployments affect its performance?

Sure. The thing about Postgres is again: we have to configure it by default for compatibility and not performance. So, it's another component that has to be targeted for optimization.

The detail there, that anything over 1 GB from Postgres might get paged, sounds like it could certainly impact performance.

Right, because the buffer setting that we have by default is set to 1 GB, and that means only 1 GB of physical memory will be allocated to Postgres work. Now, we're talking about a large environment: 500 to maybe 5,000 apps. We're talking thousands of users, with about 1,000 of them at peak concurrency per hour.

So, can we increase that Shared Buffer setting?

Absolutely. And in fact, I want to direct you to a really good article on performance optimization for PostgreSQL. And when we talk about fine-tuning, this article is where I’d like to get started. We talk about certain important factors like the Shared Buffers. So, this is what we set to 1 GB by default. Their recommendation is to start with 1/4 of physical memory in your system. 1 GB is definitely not one quarter of the memory of the machines out there. So, it needs tweaking.
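The starting point of one quarter of physical memory can be worked out as follows. This is a minimal sketch: the function name and the 64 GB example are assumptions, and the result is a starting value to tune from, not a final recommendation.

```python
def suggested_shared_buffers_mb(physical_ram_gb: float) -> int:
    """Starting value for shared_buffers: one quarter of physical memory, in MB."""
    if physical_ram_gb <= 0:
        raise ValueError("physical_ram_gb must be positive")
    return int(physical_ram_gb * 1024 / 4)


if __name__ == "__main__":
    # Hypothetical database host with 64 GB of RAM.
    print(f"shared_buffers = {suggested_shared_buffers_mb(64)}MB")
```

On a host where the default of 1 GB would be exactly one quarter, the machine would have only 4 GB of RAM, which illustrates why the default needs tweaking on larger servers.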

And again these are settings to be changed on the machine that's hosting the Repository Database, right?

That's correct. That's correct.

Now, is there an app that you're aware of that would be good to kind of look at all these logs and analyze what's going on with the performance?

Absolutely. This is an application that was developed to better understand all of the transactions happening in a particular environment. It reads the log files collected with the Log Collector either via the tool or the QMC itself.

Okay.

It's not built for active monitoring, but rather to enhance troubleshooting.

Sure. So, basically it's good for looking at a short period of time to help troubleshooting?

Right. The Repository itself communicates over APIs between all the nodes and keeps track of all of the activities in the system; and these translate to API calls. If we want to focus on Repository API calls, we can start by looking at transactions.

Okay.

So, this will give us detail about cost. For example, per REST call or API call, we can see which endpoints take the most, duration per user, and this gives you an opportunity to start at a very high level and slowly drill in both in message types and timeframe. Another sheet is the Threads Endpoints and Users; and here you have performance information about how many worker-threads the Repository Service is able to start, what is the Repository CPU consumption, so you can easily identify one. For example, here just by this count, we can see that the preview privileges call for objects is called…

Yeah, a lot.

Over half a million times, right? And represents 73% of the CPU compute cost.

Wow, nice insights.

And then if we look here at the bottom, we can start evaluating time-based patterns and select specific time frames and go into greater detail.

So, I’m assuming this can also show resource consumption as well?

Right. CPU, memory in gigabytes and memory in percent. One neat trick is to go to the QMC, look at how you've defined your Working Set Limits, and then pre-define reference lines in this chart, so that it's easier to visualize when those thresholds are close to being reached or breached. And you do that via the add-ons reference lines, and you can define them like this.
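To place reference lines on a chart that shows memory in gigabytes, the percentage limits from the QMC have to be converted into GB for the node in question. The sketch below assumes example limits of 70% and 90% and a 256 GB node; the function name is hypothetical, and the real percentages should be read from your own QMC settings.

```python
from typing import Dict


def working_set_reference_lines(total_ram_gb: float, low_pct: float,
                                high_pct: float) -> Dict[str, float]:
    """Convert Working Set Limit percentages into GB values for reference lines."""
    if not 0 < low_pct <= high_pct <= 100:
        raise ValueError("expected 0 < low_pct <= high_pct <= 100")
    return {
        "low_gb": round(total_ram_gb * low_pct / 100, 1),
        "high_gb": round(total_ram_gb * high_pct / 100, 1),
    }


if __name__ == "__main__":
    # Example values only; replace them with the limits defined in your QMC.
    print(working_set_reference_lines(256, low_pct=70, high_pct=90))
```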

That's just to sort of set that to match what's in the QMC?

Exactly.

Makes a powerful visualization. So, you can really map it.

Absolutely. And you can always drill down into specific points in time. We can go and check the log details on the Engine Focus sheet; this will allow us to browse over time, select things like errors and warnings alone, and then we will have all of the messages that are coming from the log files and what their sources are.

Yeah. That's great to have it all kind of collected here in one app, that's great.

Indeed.

To summarize things: we've talked about how, to understand system performance, a baseline needs to be established. That involves setting up some monitoring. There are lots of options and tools available to do that; and it's really about understanding how the system performs, so that measurement and comparison are possible if things don't perform as expected.

And to begin to optimize as well.

Okay, great. Well now, it's time for Q&A. Please submit your questions through the Q&A panel on the left side of your On24 console. Mario, which question would you like to address first?

We have some great questions already. So, let's see - first one is: how can we evaluate our existing Qlik Sense applications?

This is not something that I’ve covered today, but it's a great question. We have an application on community called App Metadata Analyzer. You can import this into your system and use it to understand the memory footprint of applications and objects within those applications and how they scale inside your system. It will very quickly illustrate if you are shipping applications with extremely large data files (for example) that are almost never used. You can use that as a baseline both for optimizing local applications and in your efforts to migrate to SaaS. If you feel like you don't want to bother with all of this Performance Monitoring and optimization, you can always choose to use our services and we'll take care of that for you.

Okay, next question.

So, the next question: worker schedulers errors and engine performance. How to fix?

I think I would definitely point you back to this Log Analysis application. Load the time frame where you think something bad happened, and see what kind of insights you can get by playing with the data, by exploring the data. Then narrow that search down if you find a specific pattern that suggests the product is misbehaving. Talk to Qlik support. We'll evaluate that with you and determine whether this is a defect or just a quirk of how your system is set up. But that Sense Log Analysis app is a great place to start. And going back to the sheet that I showed: Repository and Engine metrics are all collected there. These come from the performance logs that Qlik Sense already produces. You don't need to load any additional performance counters to get those details.

Okay.

All right. So, there is a question here about Postgres 9.6 and the fact that it's soon coming to end of life. And I think this is a great moment to talk about this. Qlik Sense client-managed, or Qlik Sense Enterprise for Windows, supports Postgres 12.5 for new installations since the May 2021 release. If you have an existing installation, 9.6 will continue to be used; but there is an article on community on how to in-place upgrade that to 12.5 as a standalone component. So, you don't have to continue using 9.6 if your IT policy is complaining about the fact that it's soon coming to the end of life. As we say, we are aware of this fact; and in fact, we are shipping a new version as of the May 2021 release.

Oh, great.

So, here's an interesting question. If we have Qlik Sense in Azure on a virtual machine, why is the performance so sluggish? How do you fine-tune it? I guess first we need to understand what you mean by sluggish. But the first thing that I want to point to is different instance types. Virtual machines from cloud providers are optimized for different workloads, and the same is true for AWS, Azure and Google Cloud Platform. You will have virtual machines that are optimized for storage; ones that are optimized for compute tasks or application analytics; some that are optimized for memory. Make sure that you've chosen the right instance type and the right level of provisioned IOPS for this application. If you feel that your performance is sluggish, start increasing those resources. Go one tier up and reevaluate until you find an instance type that works for you. If you wish to have these results beforehand, you will have to consider using the Scalability Tools together with some of your applications against different instance types in Azure to determine which ones work best.

Just to kind of follow up on that question, if we're looking at that multi-node example from Qlik help, which nodes would you consider to require more resources?

Worker nodes in general. And those would be front and back-end.

So, a worker node is something with an engine, right?

Exactly. Something with an engine. It can either be front-facing together with a proxy to serve content, or back-end together with a scheduler service to perform reload tasks. These will consume all the resources available on a given machine.

Okay.

And this is how the Qlik Sense engine is developed to work. These resources are almost never released unless there is a reason for it, because keeping those results cached is what makes the product fast.

Okay.

Oh, here's a great one about avoiding working set breaches on engine nodes. The question says: do you have any tips for avoiding the max memory threshold from the QIX engine? We didn't really cover this aspect, but as you know, the engine allows you to configure both a lower and a higher memory limit. To understand how these work, I want to point you back to that QIX engine white paper. The system will perform certain actions when these thresholds are reached. The first prompt that I have for you in this situation is: understand if these limits are far away from your physical memory limit. By default, Qlik Sense (I believe) uses 70 / 90 as the low and high working sets on a machine. With a lot of RAM, let's say 256 GB to half a terabyte, if you leave that low working set limit at 70 percent, that means that by default 30 percent of your physical RAM will not be used by Qlik Sense. So always keep in mind that these percentages are based on the physical amount of RAM available on the machine, and as soon as you deploy large machines (large: I’m talking 128 GB and up) you have to redefine these parameters. Raise them so that you utilize almost all of the resources available on the machine. You should be able to visualize that very easily in the Log Analysis App by going to the Engine Load sheet and inserting those reference lines based on where your current working sets are. Of course, the only way really to avoid a working set limit issue is to make sure that you have enough resources and that the system is configured to utilize those resources. That means raising the limit and allowing the product to use as much RAM as it can without, of course, interfering with Windows operations (which is why you should never set these to something like 98 or 99). Windows needs RAM to operate by itself, and if we let Qlik Sense take all of it, it will break things. If you've done that and you're still having performance issues, that means you need more resources.
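The arithmetic behind that point can be sketched in a few lines of Python. This is only an illustration of how a percentage-based limit scales with machine size; the function name `ram_above_limit` is made up for this example and is not part of any Qlik tooling, and the 70 / 90 values are the defaults as recalled in the answer above.

```python
def ram_above_limit(total_ram_gb: float, limit_pct: float) -> float:
    """Return how many GB of physical RAM sit above a percentage-based limit.

    Hypothetical helper for illustration only: it does plain percentage
    arithmetic and knows nothing about how the engine manages memory.
    """
    if not 0 <= limit_pct <= 100:
        raise ValueError("limit_pct must be between 0 and 100")
    return total_ram_gb * (100 - limit_pct) / 100


if __name__ == "__main__":
    # 70 / 90 are the low / high working set values mentioned in the session.
    for total_gb in (64, 128, 256, 512):
        above_low = ram_above_limit(total_gb, 70)
        above_high = ram_above_limit(total_gb, 90)
        print(f"{total_gb} GB machine: {above_low:.1f} GB above the low limit, "
              f"{above_high:.1f} GB above the high limit")
```

On a 64 GB machine a 70 percent limit leaves 19.2 GB above it, while on a 256 GB machine the same percentage leaves 76.8 GB, which is why the percentages are worth revisiting on large machines.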

Yeah. It makes sense.

Oh, so here is another interesting question about understanding what certain Qlik Repository Service (QRS) log messages say. There is a question here that says: we try to meet the recommendation for network and persistence that the network latency should be less than 4 ms, but consistently in our logs we are seeing the QRS security management retrieved privileges in so many milliseconds. Could this be a Repository Service issue, or where would you suggest we investigate first? This is an info level message that you are reporting. And it's simply telling you how long it took for the Repository Service to compute the result for that request. That doesn't mean that this is how long it took to talk to the Database and back, or how long it took for the request to travel from client to server; only how long it took for the Repository Service to look up the metadata, look up the security rules, and then return a result based on that. And I would say this coming back in 384 milliseconds is rather quick. It depends on how you've defined these security rules. If these security rules are super simple and you are still getting slow responses, we would definitely have to look at resource consumption. But if you want to know how these calls affect resource consumption on the Repository and Postgres side, go back to that Log Analysis App. Raise your Repository performance logs in the QMC to Debug level so that you get all of the performance information on how long each call took to execute. And try to establish some patterns. See if you have calls that take longer to execute than others, and where those are coming from: any specific apps, any specific users? All of these answers come from drilling down into the data via that app that I demoed.

Okay Mario, we have time for one last question.

Right. And I think this is an excellent one to end on. We talked a whole bunch here about Qlik Sense, but all of this also applies to QlikView environments. We are always looking at taking a step back and considering all of the resources that are in play in the ecosystem, not just the product itself. And the question asks: is QlikView Server performance similar to how Qlik Sense handles resources? The answer is: yes. The engine is exactly the same in both products. If you read that white paper, you will understand how it works in both QlikView and Qlik Sense. And the things that you should do to prepare for performance and optimization are exactly the same in both products. Excellent question.

Great. Well, thank you very much Mario!

Oh, it's been my pleasure Troy. That was it for me today. Thank you all for participating. Thank you all for showing up. Thank you Troy for helping me through this very very complicated topic. It's been a blast as always. And to our customers and partners, looking forward to seeing your questions and deeper dives into logs and performance on community.

Okay, great! Thank you everyone! We hope you enjoyed this session. Thank you to Mario for presenting. We appreciate getting experts like Mario to share with us. Here's our legal disclaimer and thank you once again. Have a great rest of your day.

-

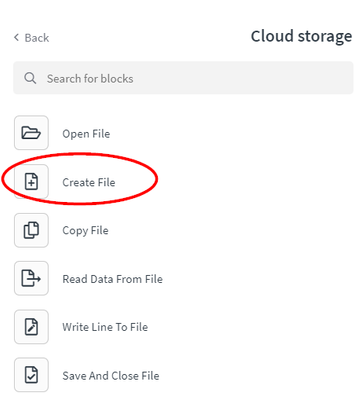

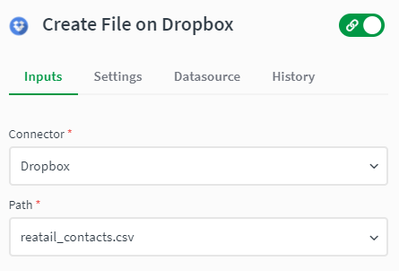

How to create a CSV file with the Cloud Storage connector in Qlik Application Au...

This article explains how the Cloud Storage connector can be used to create CSV files. Note that this is a generic connector that can be used with mu...

-

Understanding Set Analysis

This Techspert Talks session covers: How Set Analysis works, Possible and Excluded selections, Using Advanced Expressions. Chapters: 01:02 - What...

-

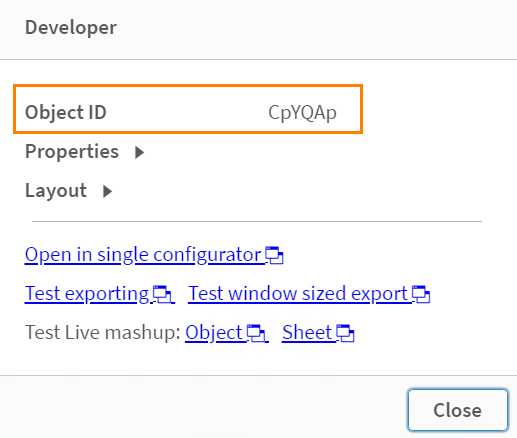

How to identify an object in a Qlik Sense app by object id

You may need to locate a specific Qlik Sense App object which has been identified as causing errors in the Qlik Sense log files or as using large amounts of RAM in the Telemetry dashboard.

How do you isolate this object and locate it in the Qlik Sense App?

- Access the Qlik Sense App in the hub and navigate to the desired sheet

- Append /options/developer/ to the end of the URL

- Make sure Touch Screen mode is disabled

- Right-click on the object. Choose the developer option

- The object ID is visible in the new pop up
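As a small illustration of the URL step, the Python sketch below appends the /options/developer/ suffix to a sheet URL. The helper name `with_developer_option`, the host name and the placeholder IDs are all hypothetical. Placing the suffix at the end of the path, ahead of any query string, is an assumption of this sketch; the article only says to append it at the end of the URL.

```python
from urllib.parse import urlsplit, urlunsplit

DEVELOPER_SUFFIX = "/options/developer/"


def with_developer_option(sheet_url: str) -> str:
    """Return sheet_url with /options/developer/ appended to its path.

    Hypothetical helper: the suffix comes from the steps above, everything
    else (name, handling of query strings) is an illustrative choice.
    """
    parts = urlsplit(sheet_url)
    path = parts.path.rstrip("/")
    # Avoid adding the suffix twice if the URL already carries it.
    if not path.endswith(DEVELOPER_SUFFIX.rstrip("/")):
        path += DEVELOPER_SUFFIX.rstrip("/")
    path += "/"
    return urlunsplit((parts.scheme, parts.netloc, path, parts.query, parts.fragment))


if __name__ == "__main__":
    # Example URL with placeholder host, app ID and sheet ID.
    url = "https://qlik.example.com/sense/app/APP_ID/sheet/SHEET_ID/state/analysis"
    print(with_developer_option(url))
```

Opening the resulting URL in the browser and right-clicking an object should then offer the developer option described in the steps.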

Environment:

-

Configure Qlik Cloud with an AWS Cognito IDP

The attached document guides the reader through adding the necessary application configuration in AWS Cognito and Qlik Sense Enterprise SaaS (Qlik Cloud) identity provider configuration so that Qlik Sense Enterprise SaaS users may log into a tenant using their AWS Cognito credentials.

Content of the document:

- Prerequisites

- Considerations when using AWS Cognito with Qlik Cloud

- AWS Cognito Configuration

- Qlik Cloud Tenant Configuration

This customization is provided as is. Qlik Support cannot provide continued support of the solution. For assistance, reach out to our Professional Services or engage in our active Integrations forum.

Environment

-

How to disable Task Log Data Encryption in Qlik Replicate

Environment

- Qlik Replicate May 2023 (Version 2023.5 and later)

What is Replicate log file encryption?

Starting from version "November 2021" (Version 2021.11), Qlik Replicate introduced support for log data encryption to safeguard customer data snippets from being exposed in verbose task log files. However, this enhancement also presented its own set of challenges: it makes the logs very difficult to read and complicates troubleshooting, and the steps for Decrypting Qlik Replicate Verbose Task Log Files take time.

How to disable Task Log Data Encryption?

In response to customer feedback and feature requests, we implemented a new feature allowing users the flexibility to disable log encryption in Qlik Replicate. This enhancement was rolled out with the release of Qlik Replicate version 2023.5 GA. This article serves as a guide on how to effectively disable Task Log Data Encryption.

Steps

- Stop the Replicate Server services.

- Open <REPLICATE_INSTALL_DIR>\bin\repctl.cfg and add the line "disable_log_encryption": true, for example:

    {
        "port": 3552,
        "plugins_load_list": "repui",
        ...
        ...
        "enable_data_logging": true,
        "disable_log_encryption": true
    }

- Save the repctl.cfg file and start the Replicate Server services.

- Now the task log files display database data, sample lines like:

    00029680: 2024-03-01T18:30:26:105005 [TARGET_LOAD ]V: Column name: ID value: 44
    1260d008490: 2600012C000000000000000000000000 | &..,............
    1260d0084a0: 000000 | ... (sqlserver_endpoint_imp.c:2787)
    00029680: 2024-03-01T18:30:26:105005 [TARGET_LOAD ]V: Column name: NAME value: trx222
    1260d0084a8: 747278323232 | trx222 (sqlserver_endpoint_imp.c:2787)

Here the table's "ID" column has the value "44" and the "NAME" column has the value "trx222"; both values appear in plain text as well as in hexadecimal format.
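To check the hexadecimal part of such a line by hand, a short Python sketch can help. It assumes the dump lines follow the `<address>: <hex bytes> | <preview>` layout seen in the sample above; the function name `decode_dump_line` is made up for this illustration and is not a Replicate utility.

```python
def decode_dump_line(line: str) -> tuple[str, bytes]:
    """Split '<address>: <hex bytes> | <preview>' into (address, raw bytes).

    Assumes the layout of the sample dump lines shown above; other log
    formats are not handled.
    """
    address, rest = line.split(":", 1)
    hex_part = rest.split("|", 1)[0]
    return address.strip(), bytes.fromhex(hex_part.strip())


if __name__ == "__main__":
    # Dump line for the NAME column, copied from the sample log output.
    line = "1260d0084a8: 747278323232 | trx222 (sqlserver_endpoint_imp.c:2787)"
    address, raw = decode_dump_line(line)
    print(address, raw.decode("ascii"))  # prints "1260d0084a8 trx222"
```

The bytes 74 72 78 32 32 32 are the ASCII codes for "trx222", which matches the plain-text value in the log line. In the dump for the ID column, bytes without a printable character appear as dots in the preview.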

Important Note:

Please exercise caution as verbose task logs may contain sensitive end-user data, and disabling encryption could potentially lead to data leakage. Always ensure appropriate measures are taken to protect sensitive information.

Related Content

How to Decrypt Qlik Replicate Verbose Task Log Files

-

How to export more than 100000 cells using the Get Straight Table Data block in ...

Currently, in Qlik Application Automation it is not possible to export more than 100,000 cells using the Get Straight Table Data block.

Content: