Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- Qlik NPrinting

- :

- Re: How to store multiple values in a single Qlik ...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

How to store multiple values in a single Qlik Variable?

I have a requirement of storing multiple email addresses of users in a single variable so that the variable can be used in NPrinting Task to distribute the mail. I have data in this format-

Employee_Detail:

load * inline [

EmpId,User,Email_id

1,Garima,'garima.kul@abc.com,gar.kuls@abc.com'

2,Kunal,kunal.dhawan@abc.com

3,Naresh,naresh.kr@abc.com

4,Durgesh,durgesh.pl@abc.com

5,Subodh,subodh.chn@abc.com

6,Sayali,sayali.ra@abc.com

];

so the variable should be able to store multiple addresses against a single user., eg for EmpId 1 there are 2 email addresses so variable should store both the mail addresses.

Thanking in anticipation.

- « Previous Replies

-

- 1

- 2

- Next Replies »

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi,

In the loadscript, you add something like this:

tmp:

LOAD concat(distinct Email_id, ',') AS All_Email

resident Employee_Detail;

let vEmails = peek('All_Email', 0, 'tmp');

drop table tmp;

In the layout, you can basically use the same expression: concat(distinct Email_id, ',')

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@fosuzuki is right. My additional comments below to clarify:

What you have to remember is that you either have to concatenate all emails in script - this will not be a dynamic solution though.

On the other hand if you want to have dynamic emails selected based on user filter you need to create a variable as expression starting with "=" sign in your variable definition.

So your variable should be:

LET vEmails = '=concat(distinct Email_id, ',')';

The reasons for equal sign are explained here: https://help.qlik.com/en-US/nprinting/June2020/Content/NPrinting/Troubleshooting/Variables-dont-expa...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

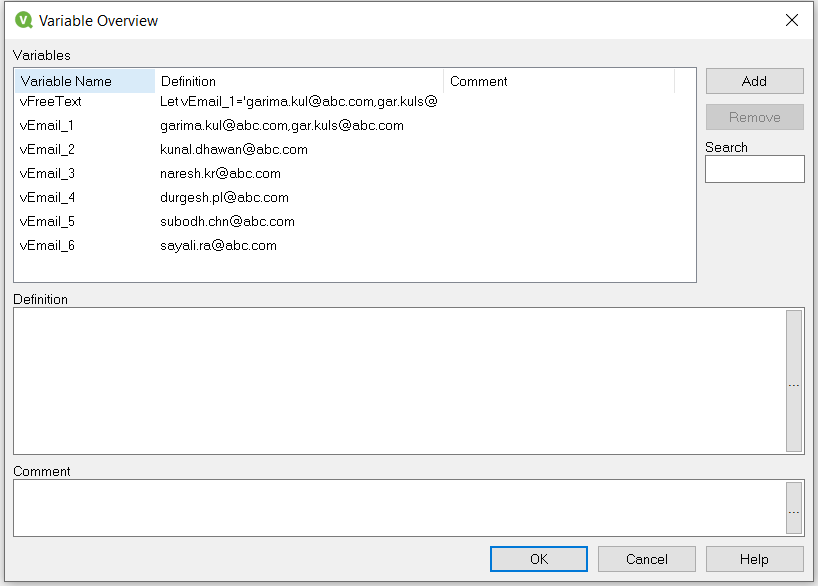

Check this code:

Employee_Detail:

load * inline [

EmpId,User,Email_id

1,Garima,'garima.kul@abc.com,gar.kuls@abc.com'

2,Kunal,kunal.dhawan@abc.com

3,Naresh,naresh.kr@abc.com

4,Durgesh,durgesh.pl@abc.com

5,Subodh,subodh.chn@abc.com

6,Sayali,sayali.ra@abc.com

];

tab1:

LOAD Concat('Let vEmail_'&EmpId&'='&Chr(39)&Email_id&Chr(39),';') As FreeText

Resident Employee_Detail;

Let vFreeText=Peek('FreeText');

$(vFreeText);

Drop Table tab1;- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

For each EmpId, a variable will be created and stores the Email IDs associated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I think your solution although it shows how to dynamically create various variables for each row it does not make sense in this context.

Please note that the topic is in regards to NPrinting. Based on the context I can see that this variable will be used for report distribution in email (variable). So if you create a new variable for each EmpId there will be no easy way of referencing this variable in dynamic email (in NPrinting). The typical and one feasible solution is the one where you have an expression as variable definition (look up mine previous comment ) and you simply reference a variable in your dynamic email FROM, CC or BCC.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I agree with you @Lech_Miszkiewicz

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

I just created a Nprinting test and it is working good using a small twist on the above information from Lech and company. Instead of inline I am building off a excel sample of some employee data with a new field called special. Inside of the qlik app I placed the following in the variables creator:

=Concat(IF(Special='Y', Testemail), '; ')

You could designate this by dept., role, special designation, etc.

In the Nprinting Task, email section, I just placed the Qlik Variable in the To section. This worked wonderfully and would be easy to change on the fly. Will be using this for special purpose reports!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

When working with NPrinting always think about performance!!

your formula

=Concat(IF(Special='Y', Testemail), '; ')

should be replaced with

=Concat({<Special*={'Y'}>} Testemail), '; ')

On large data amount it may have a major impact on report production performance!

https://community.qlik.com/t5/Qlik-Design-Blog/Performance-of-Conditional-Aggregations/ba-p/1463021

"...The bottom line is that Set Analysis is the method you should use for large data amounts. For smaller data amounts, it doesn’t really matter which method you choose: They are all fast enough..."

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

@Lech_MiszkiewiczThat is great point that I overlooked and a wonderful solution. This will ultimately scale up to a very large data set and that would have likely caused performance issues! I will update my notes and keep that handy!

- « Previous Replies

-

- 1

- 2

- Next Replies »