Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

Qlik Sense documentation and resources.

Recent Documents

-

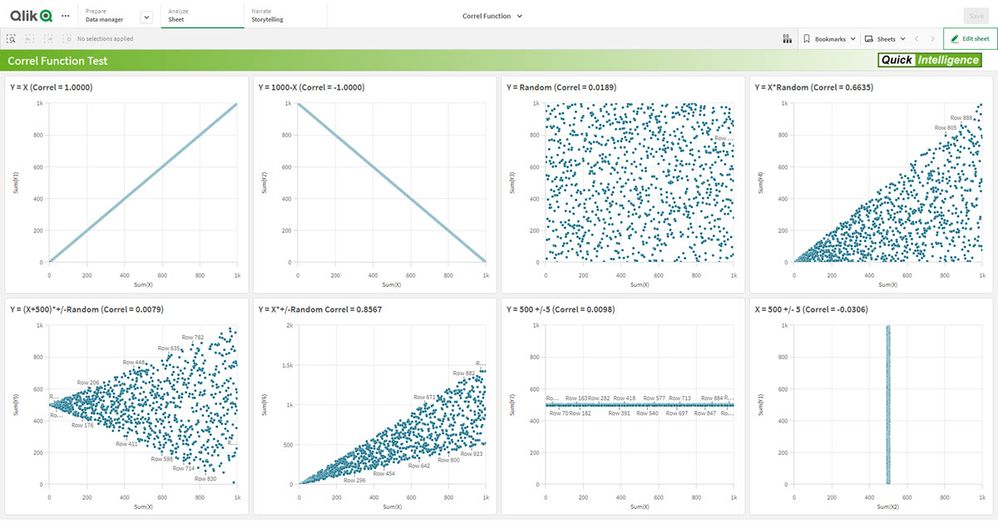

Qlik Sense App: Create Test Data For Correl Function Using AutoGenerate

A common use case in Qlik Sense is to generate some test data in order to demonstrate a function or to run some testing. The AutoGenerate function is ... Show MoreA common use case in Qlik Sense is to generate some test data in order to demonstrate a function or to run some testing. The AutoGenerate function is a great way of getting a large number of rows with some made up data to test or demo over.

Recently I was asked to implement a Correlation Coefficient and having not used the correl function in Qlik before I wanted to check that it gave sensible results before putting it into a live app (spoiler: it works fine). To do this I used AutoGenerate to give me X values from 1 to 1,000 and then various Y values to give positive, negative and non correlated data sets.

This app gives the various views of the correlation coefficient, but more importantly it shows how to generate test data, including random values, random selections of dimensions and generated dummy IDs.

The app is accompanied by a blog post, which can be found here:

https://www.quickintelligence.co.uk/generating-test-data-in-qlik-load-scripts/If you have any comments or suggestions of other ways you can autogenerate test data please comment on this document, or on the comments on the blog post.

Cheers!

Steve

-

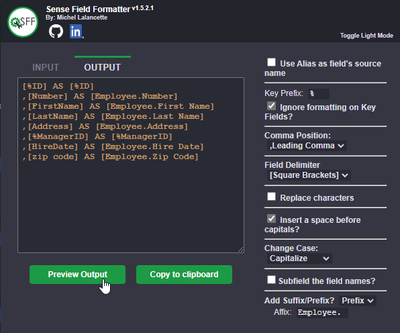

Introducing the Sense Field Formatter

Introducing Sense Field Formatter Version 1.7 - A new feature for the front end! With the latest version, you can now quickly copy the output in expre... Show MoreIntroducing Sense Field Formatter

Version 1.7 - A new feature for the front end!

With the latest version, you can now quickly copy the output in expression editor windows using a button.

Check it out on YouTube : https://www.youtube.com/watch?v=CXPMbQSD9mQ

It is very likely that many of you build your data models so that you can easily reuse certain parts, such as dimensions.

Although some development methods such as the Qlik Deployment Framework (QDF) help speed things up, the task of renaming fields when reusing a QVD is still time-consuming.

Let's imagine that a fact table contains 3 customer dimensions and 2 warehouse dimensions and that for each of these dimensions you add a prefix, or that your fields are written in a multilingual way ([Customer/Customer/Cliente]) and you want to get the middle value. You could also use it to rename fields from an SQL database and format everything for a nice front-end result in Qlik.

It's now easier than ever with Sense Field Formatter.

What it does

(https://youtu.be/k3HQpJivyZM)

Allows a user to format a list of fields, delimited by commas, into an alias and standardized format.

The user has multiple configuration options to choose from.

When the extension icon is clicked, a popup menu is displayed where the formatting takes place and is pasted into the clipboard.

User Settings

Use Alias as the field's source name

Will use the alias name (at the right of "AS") from your source fields as your new source fields.

e.g. [SourceField] AS [SourceAlias] -> [SourceAlias] AS [OutputAlias]

Key Prefix

The character(s) used to identify the key fields in Qlik. Formatting will be handled differently here as the Prefixing will be done AFTER (the right) the key prefix to avoid breaking the key formatting.

e.g. Prefixing a key field with "Customer." will generate this: [%MyKeyField] AS [%Customer.MyKeyField]

Ignore formatting on Key Fields?

Fields starting with the identified key will NOT be formatted.

Comma Position

Places the comma either at the start or end of the line.

Field Delimiter

Should the field delimiters be double quotes or square brackets.

Replace characters

Find what: The character(s) you are looking to replace. Multiple characters can be replaced at once.

Replace with: Replace all occurrences of the character(s) in the fields.

Insert a space before capitals

Will insert a space before each capital letter in the fields, except for the first character.

e.g. [MyNewField] -> [My New Field]

Change Case

Applies a case change to all the fields.

Uppercase: Replaces each lowercase letter with a corresponding uppercase char.

Lowercase: Replaces each uppercase letter with a corresponding lowercase char.

Capitalize: The first letter of each word will be in uppercase.

Subfield the field names?

Extract substring components from a parent string field, where the original record fields consist of two or more parts separated by a delimiter.

The function can be used, for example, to extract the column name in a specific language if the field is multilingual.

*e.g. Applying a subfield of 3 with a delimiter of '/' [Client/Cliente/Customer] -> [Customer]

Delimiter: A character within the input text that divides the string into component parts.

Field no: Specifies which of the substrings of the parent string text is to be returned. Use the value 1 to return the first substring, 2 to return the second substring, and so on.

Add Suffix/Prefix?

Adds an affix, either placed before the field (prefix) or at the end (suffix). Useful when reusing the same dimension over and over for multiple scenarios.

e.g. Using the customer dimension where a customer may be a Ship To or Bill To customer.

Prefix : [Customer Name] -> [ShipTo.Customer Name]

Suffix : [Customer Name] -> [Customer Name.ShipTo]

Affix: The text that will be used as an affix. Make sure to add a space to your affix if you'd like the new string to remain spaced out.

Installing the plugin

Visit the Store:

Visit my GitHub: https://github.com/MichelLalancette/SenseFieldFormatter

-

Variabilizing your Connections

Connecting to data sources is easy right? You simply use data connection manager, or choose a table from an existing connection right. The "wizards" i... Show MoreConnecting to data sources is easy right? You simply use data connection manager, or choose a table from an existing connection right. The "wizards" insert the Connection string into the Load Script and you are off to the races for your queries.

LIB CONNECT TO 'Snowflake_qlik.us-east-1.snowflakecomputing.com (qlikpe_qlikservice)';

Then some yahoo says "Hey Qlik Dork the customer actually has 2 server instances and they needed the data loaded from both." Now you could simply duplicate the section of code and do the same thing for the second instance. It's not that hard after all. A cut here, a paste there, a CONCATENATE before a LOAD there and bingo ... 2 different server instances.

But your Spidey senses start tingling because you know what it really means is "The next customer might have 4, and the next might have 7." And since they will actually be the ones changing the code, you don't know for sure how proficient they are with their cut, paste, concatenate or bingo skills. But what can you possibly do, the connections are hard coded? Right?

Wrong. The compiler needs a connection string but the reality is it doesn't care how it gets it. Which means ... you can use a Variable for it.

Set vInstance = 'Snowflake_qlik.us-east-1.snowflakecomputing.com (qlikpe_qlikservice)';

LIB CONNECT TO '$(vInstance )';

I hear you out there shouting "But that doesn't achieve anything other than making the code more complicated!" But you didn't let me finish. Of course I'm not going to do my Set vDesc on a line by itself, I'm going to LOOP through the connections and set the variable vDesc inside the loop like this.

SnowflakeInstances:

Load * Inline [

SFConx, SFType, SFInstance

SF_AT, NotLive, 'Snowflake_attunity_partner.xxxxx.snowflakecomputing.com '

SF_PE, Live, 'Snowflake_qlik.xxxxx.snowflakecomputing.com'

];Let vNumRows = NoOfRows('SnowflakeInstances')-1;

For i = 0 to $(vNumRows)Let vConx = peek ('SFConx',$(i),'SnowflakeInstances');

Let vInstance = peek ('SFInstance',$(i),'SnowflakeInstances');

Let vType = peek ('SFType', $(i), 'SnowflakeInstances');

LIB CONNECT TO '$(vInstance )';Yeah the customer with 2 different Snowflake instances is covered.

Yeah the customer with 3 different Snowflake instances is covered.

Yeah the customer with ... you get the picture. All customers is covered. 1 to N instances is all the same to you.

Let's say I am reading a Table called SESSIONS. My table header takes advantage of that vConx variable:

[$(vConx)_SESSIONS]:

And when I want to Store the data into a QVD so I can handle incremental loads, that variable is handy again:

Store [$(vConx)_SESSIONS] into [$(vQVDLocation)/$(vConx)_SESSIONS.qvd] (qvd);

What in the world is the vType variable used for? Does Live or NotLive even make sense?

It does in my particular use case. I have some very large instances that I need to read from for "my" testing, that I only have limited access to. Thus I need to connect Live one time, but I want to read the data from my QVD file when it's flagged as NotLive the rest of the time. A simple little IF THEN ELSE and I'm all set. I either choose to read the data from the server or simply read it from the QVD with no incremental loading.

IF (vType = 'Live') Then

If you were observant you will have noticed that I used a variable for the storage location as well as the QVD filename. Because you know the customers that receive this application will all create different Connection paths for the QVD files so I simply let them define it in the beginning of the code rather than requiring them to change it in every single section of the load script. Plus I'm cooking with gas when I deploy to SaaS and my storage is a different space and not just a file location.

I like to variabilize as much of my code as possible. It's either because I'm lazy -OR- because I'm dedicated to easily modifiable code -OR- both. 😀

-

Sub-routines to speed-up your ETL: "Change Data Capture" strategy with Qlik leve...

Hello Qlik Community,Here is a video where I discuss an optimised "Change Data Capture" strategy with Qlik and leveraging its bulk (optimised) insert ... Show MoreHello Qlik Community,

Here is a video where I discuss an optimised "Change Data Capture" strategy with Qlik and leveraging its bulk (optimised) insert for speeding up your ETL process from the source DB through all the transformation parts and into your front-end application.

I include easy-to-use sub-routines also that will help you achieve this process if you have not already implemented it and these are available here and discussed/explained in the video.

The text of the video below, if you prefer reading:

I have seen quite a number of instances where an "incremental load" in Qlik was barely more than fetching the data from the database in what can be called an incremental manner.

Basically a Where-clause in the SQL statement which extracts a limited amount of data which is then apended to a QVD, typically one that gets larger and larger. Since this QVD is one big data file, all subsequent transformation processes have to go through the whole data set to produce the final product. Hence, even though some of the data is historical and unchanged from the previous day, all the corresponding fields need to be recalculated or re-transformed since there is only one QVD file. This process makes ETL processes unnecesarily long, because the whole data set needs to be loaded and transformed rather than just the portion of data that is new - the incremental, "change data capture" part of the data.The "Change Data Capture" strategy I elaborate upon in this video, is a process that will minimise the amount of time the QVD takes to get stored and also minimise the subsequent ETL processes to get to the final data set that is required for the front-end application and analysis.

Here I show the incremental ETL I have so often witnessed. Fetch the change-data from the DB, apend it to the existing QVD resulting in an ever growing QVD, which can in some instances I have seen, take up to 20-25 minutes to write to disk.

The "Change Data Capture" strategy I want to elaborate on, is fetching the data incrementally, and THEN write out a Year-Month QVD, based on a date-time stamp, hopefully on that is akin to a "Last_Modified_Date" for that record.

The key to this strategy is identifying a Primary_Key in the source DB data and a Date-time field upon which the Year-Month QVD slices will be based on.

This will minimise the QVD store time, because the QVD store time will max-out in any given month, on the last day of the month. Then for the next month, the QVD store time will drop and build up to a new maximum for that month, then the month after that drop again.Each subsequent transformation layer can follow this Year-Month QVD logic, thereby ensuring the full ETL takes a minimum amount of time to complete.

Then, if you ensure you have ordered the data reverse chronologically in the QVDs, you can use the WHERE NOT(EXISTS(Primary_Key)) to reverse chronologically LOAD all the QVDs. Hence, if there are any duplicates of that Primary_Key, Qlik will only load the latest record of it. And naturally, since the WHERE-NOT-EXISTS is based on ONE field, the loading will be optimised and execute very quickly.

This is the essence of an Optimised "Change Data Capture" strategy with Qlik and leveraging its bulk (optimised) insert for speeding up your ETL process.

I have included a few-subs that can be used in this process and that might help you quickly set up this sort of dataflow architecture.

1) FullLoad_Oracle(parDB_Connection,parDB_Owner,parDB_Table,parDB_WHERE_Clause)

Sub to load and store on full DB table.

N.B. The WHERE clause you include must start with 'WHERE [....]'.

2) FullyAutomatedIncrementalLoad_Oracle(parDB_Connection,parDB_Owner,parDB_Table,parDB_WHERE_Clause,parPrimaryKey,parDateTimeField,parDBTableFieldsChecker_YES)

Sub to load and store a DB table extracting incrementally and storing only the lastest YYYYMM QVD.

N.B. The WHERE clause you include must start with 'AND [....]'.

Key requirements for this sub is the identification of the PRIMARY-KEY in the table and the DATE-TIME field to use for incremental loading

and which will also be the manner in which the script will store YYYYMM QVDs.

3) QVDFileNamesList(parQVDTargetFolder,parQVDNamePrefix,parLoadLastNFiles)

When a folder has been created full of YYYYMM QVDs, load the LIST of files name in reverse-chronological order.

4) QVDFileNamesLoad(parQVDTargetFolder,parQVDNamePrefix,parLoadLastNFiles,parOPTIONALsubName)

When a folder has been created full of YYYYMM QVDs, load the QVD FILES in reverse-chronological order.

999) QVDCreateYMSlicesFromQVD(parSourceQVDFolder,parSourceQVDFile,parTargetFolder,parDateFieldNameToCreateQVDSlices)

If you have a large QVD with a lot of data, in order to start the process of creating YYYYMM QVDs in an incremental load with the above subs,

use this sub to start the initial slices of YYYYMM QVDs. -

Qlik SAP Connector - Enablement Series

Hello and welcome, SAP is a widely used enterprise software for mapping and supporting business processes in many companies of all sizes. The data fro... Show MoreHello and welcome,

SAP is a widely used enterprise software for mapping and supporting business processes in many companies of all sizes. The data from a SAP ERP and BW landscape is often an important data source for BI analysis. For years, Qlik has offered a connector package to efficiently read, process and visualize SAP data. With this documentation, we want to give you an overview and deep dive of the scope and use cases of the various SAP connectors.

In a collection of enablement videos, we will explain both the basics and best practices of all integration options of SAP interfaces into Qlik - enriched by collaterals such as code snippes and links for additional, helpful resources

It is an ongoing project. The content is constantly being expanded and updated.

The following list shows you the topics that we cover. All topics on which there are already recordings are linked accordingly.

Topic Status Video Collaterals Qlik Connector for SAP - Overview and positioning released Messaging None SAP SQL Connector - Basics released SQL Basics None SAP SQL Connector - Best Practices released SQL Bonus Attachment SAP Extractor Connector - Basics released Extractor Basics None SAP Extractor Connector - Best Practices planned SAP Bex Connector - Basics released Bex Basics None SAP Bex Connector - Best Practices released Bex Bonus None SAP Bapi Connector - Basics planned SAP Bapi Connector - Best Practices planned Custom Connectors - Report planned Custom Connectors - Query planned Custom Connectors - DSO planned Custom Connectors - OLAP planned SAP Security - Reuse BI Analysis Authorization released SAP Security None Installation released Setup None Feel free to discover, comment and ask questions. We look forward to exchanging ideas and discuss challenges with you on the topic of SAP.

Best regards,

Matthias Anders and Stephan J. Mueller

-

How to delete a sheet via Qlik repository API using Postman Desktop

Hi techies,The Qlik Sense Repository Service (QRS) contains all data and configuration information for a Qlik Sense site. The data is normally added a... Show MoreHi techies,

The Qlik Sense Repository Service (QRS) contains all data and configuration information for a Qlik Sense site. The data is normally added and updated using the Qlik Management Console (QMC) or a Qlik Sense client, but it is also possible to communicate directly with the QRS using its API. This enables the automation of a range of tasks, for example:

- Start tasks from an external scheduling tool

- Change license configurations

- Extract data about the system

We will learn how to connect with Qlik Sense via repository API using POSTMAN Desktop tool. For practice we will delete a sample sheet by repository API request.

Sheet deletion is one of the operations we can perform via API request. We have different endpoints which we can use in API request to perform operation.

We can know what are available end points in Qlik API at https://help.qlik.com/en-US/sense-developer/April2020/Subsystems/RepositoryServiceAPI/Content/Sense_RepositoryServiceAPI/RepositoryServiceAPI-QRS-API-Endpoints.htm

Prerequisites:

- Qlik Sense Enterprise

- Postman Desktop tool

- Administrator user for installation of Postman.

- Service user account to send API request.

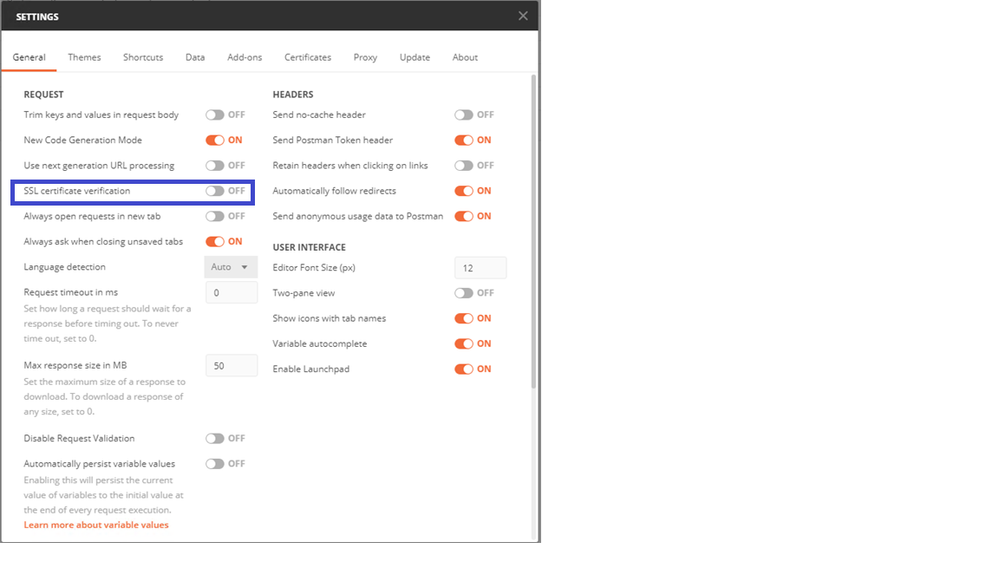

Installation and configuration of Postman:

- Download Postman desktop version and install with administrator user.

- Download Qlik Sense Enterprise certificates from QMC > Certificate section and export a copy of the certificates used in the site.

- Arbitrary machine name (best practice is to use the machine you are making the API calls from)

- No password on the files

- Include secrets key

- PEM Format

- Go to Process with PEM Certificates (Simplest, Doesn't Require OpenSSL), Install Qlik certificates by referring https://support.qlik.com/articles/Basic/How-to-configure-Postman-desktop-app-to-connect-to-Qlik-Sense?articleId=How-to-configure-Postman-desktop-app-to-connect-to-Qlik-Sense

- Open Postman Desktop=> Go to SSL certificate verification and disable SSL certificate verification

4. Certificates verification: Add host name, certificates as per below snapshot.:

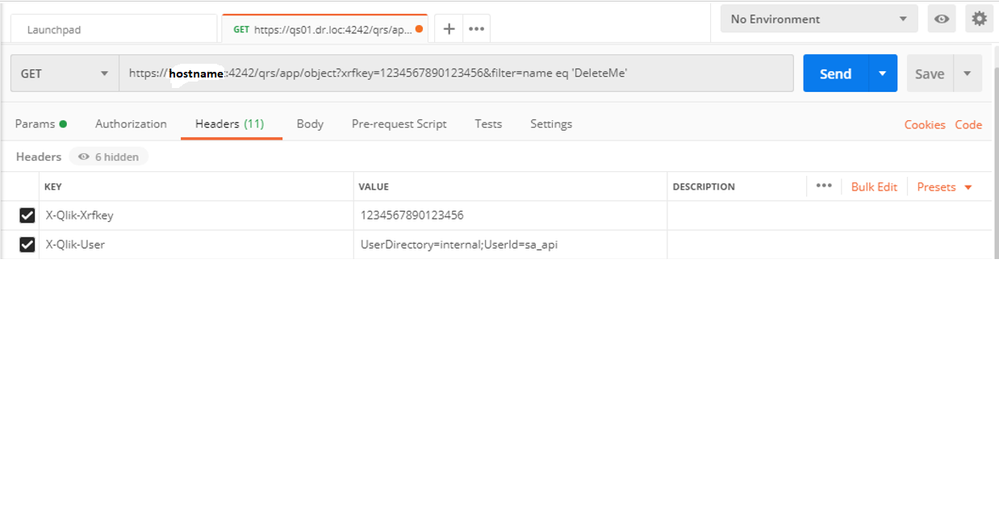

5. Test connectivity of Postman with QSE. A valid test of postman connectivity with service account would be the following.

- Request: https://hostname:4242/qrs/about?xrfkey=1234567890123456

- X-Qlik-Xrfkey = 1234567890123456(Xrfkey string is a random string. The only characters that are allowed for use in the Xrfkey parameter are 0 - 9, a - z, and A - Z.)

- X-Qlik-User= UserDirectory=Domain;UserId=qliksense(qliksense is service user in this article)

If everything is fine then you will see Status 200 OK and build version etc. information of QSE.

How to delete a sheet using repository API

- Go to hub and create a sample sheet in any app i.e. DeleteMe

- Create a request https://hostname:4242/qrs/app/object?xrfkey=1234567890123456

- End point in above request is object.

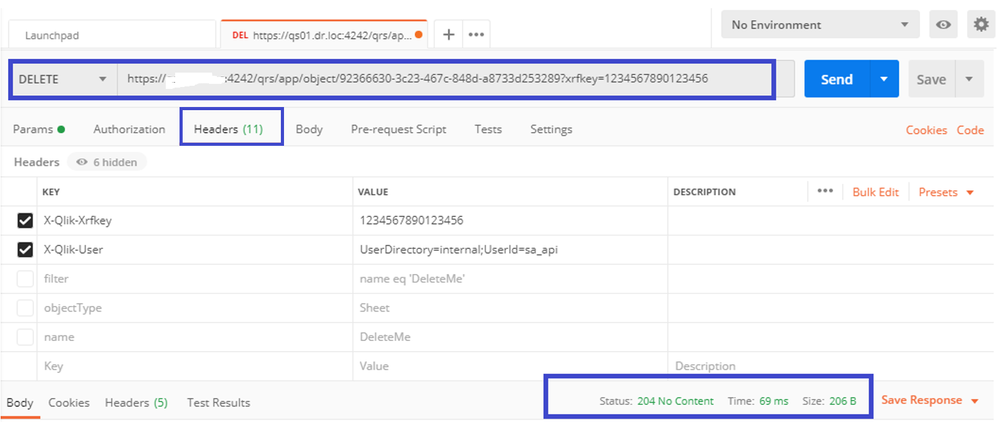

- Go to headers=>set X-Qlik-Xrfkey to any string i.e. 1234567890123456

- X-Qlik-User= UserDirectory=internal;UserId=sa_api

- Note we are using sa_api user to delete a sheet. Here, service user is ineffective to delete any sheet.

Section 2:

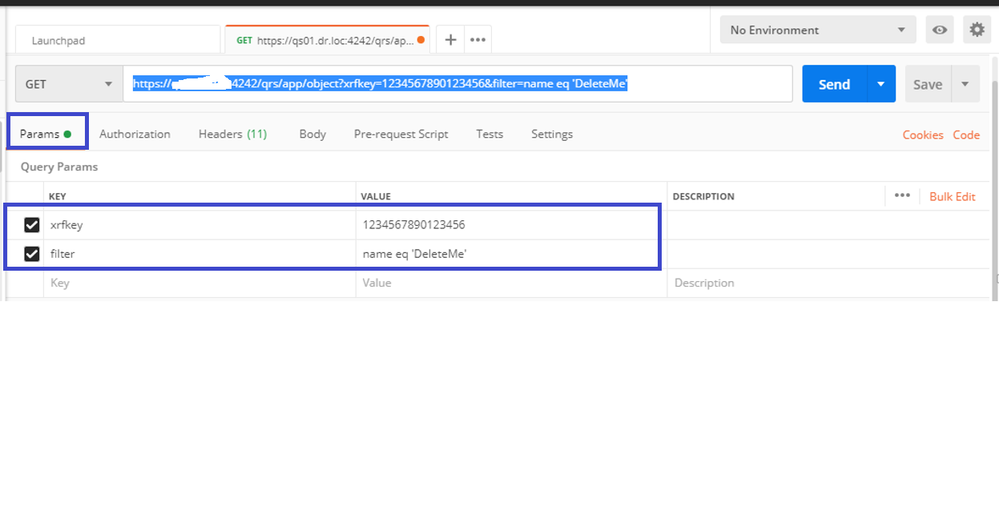

- Go to Params:

- set xrfkey = 1234567890123456

- Filter = name eq 'DeleteMe'

- This will make request as https://hostname:4242/qrs/app/object?xrfkey=1234567890123456&filter=name eq 'DeleteMe'

- press GET button.

- This will bring us sheet details whose name is DeleteMe.

- GET /qrs/app/object?xrfkey=somerandomstring&filter=app.name eq 'SomeApp' and objectType eq 'sheet' and name eq 'deleteMe'. Is case insensitive

- Response:

{

"id": "92366630-3c23-467c-848d-a8733d253289",

"engineObjectType": "",

"description": "",

"objectType": "sheet",

"publishTime": "1753-01-01T00:00:00.000Z",

"published": false,

"name": "DeleteMe",

"engineObjectId": "77dfef2a-6fa4-4edf-bfce-12d07e14c735",

"contentHash": "AP=B$+WA3.Z[_H`@E'[;@C!Y9(95?D\/7S92!>TBLKP",

"privileges": null

}

7. Here we need Id detail from response received by GET Request: "id": "92366630-3c23-467c-848d-a8733d253289".

- This id 92366630-3c23-467c-848d-a8733d253289 we can find in QMC by going to App objects, filter the records by ID column

Check last column

8. Delete a sheet by https://hostname:4242/qrs/app/object/92366630-3c23-467c-848d-a8733d253289?xrfkey=1234567890123456 with keeping X-Qlik-User= UserDirectory=internal;UserId=sa_api

9. Check QMC or HUB, you will find that DeleteMe sheet is vanished.

Note: This solution uses repository service or API which removes entry of sheet from QSR database not the actually file from binary file present in disk. If you need to cleanse the sheet from disk, please refer this beautiful document created by Levi.

https://adminplaybook.qlik-poc.com/docs/asset_management/apps/remove_unused_private_sheets.html

You can do many operations as we used delete of sheet. Please check what are the endpoints available in Qlik. End points means what operation you are trying to do with Qlik using API request.

Reach out to me at kumar.rohit1609@gmail.com if there is need of any clarification or assistance

Connect with me on LinkedIn https://www.linkedin.com/in/rohit-kumar-67ba152b/

To get latest updates and articles, join Qlik community at https://www.facebook.com/QlikIntellectuals

When applicable please mark the appropriate replies as ACCEPT AS SOLUTION and LIKE it. This will help community members and Qlik Employees know which discussions have already been addressed and have a possible known solution. Please mark threads as LIKE if the provided solution is helpful to the problem, but does not necessarily solve the indicated problem. You can mark multiple threads as LIKE if you feel additional info is useful to others.