Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- QlikView App Dev

- :

- Re: Interval match and performace

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Interval match and performace

Hi Guys,

I have the following scenario:

There are different Master data Tables like "Station, Measuringbar, Variable" and the keyfigure table with "HourlyData"

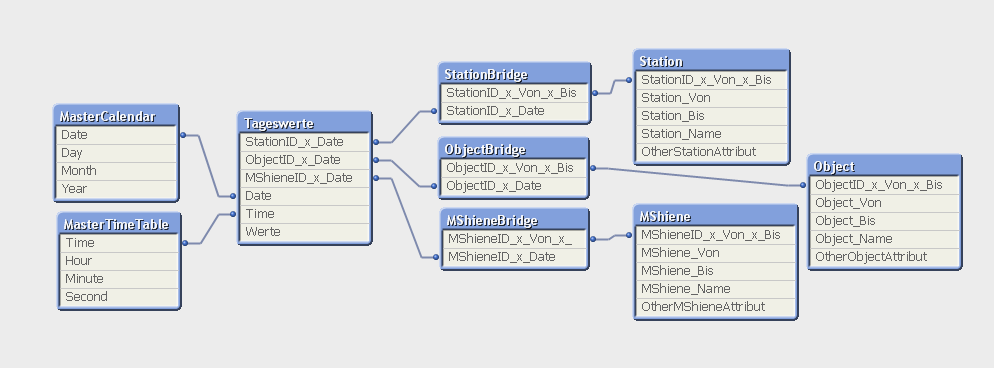

Now each of these master data tables have the following fields, ID, valid from, valid to etc. I made a calendar for two Years which are needed for reporting. My Datamodel looks like this normally without considering the Validity of the dates from valid from, valid to fields.

Station:

Load

Station_Id as %Key_Station_Id,

Valid_From_Station, //YYMMDDHH, Date with Hour Format 2012122106 where 06 is 06 Hrs

Valid_To_Station, //YYMMDDHH, Date with Hour Format

Station_Art_ID,

Station_Art_Txt

;

Variable:

Load

Station_Id & '_' & Measuringbar_Id as %Key_ID_Station_Measuringbar ,

Station_Id as %Key_Station_Id, // Connection to Station Table

Measuringbar_Id,

Upstream,

Downstream,

Valid_From_Variable, //YYMMDDHH, Date with Hour Format

Valid_To_Variable //YYMMDDHH, Date with Hour Format

;

MeasuringBar:

Load

Station_Id & '_' & Measuringbar_Id as %Key_ID_Station_Measuringbar ,

Valid_From_MeasuringBar, //YYMMDDHH, Date with Hour Format

Valid_To_MeasuringBar //YYMMDDHH, Date with Hour Format

;

HourlyData:

Station_Id & '_' & Measuringbar_Id as %Key_ID_Station_Measuringbar ,

Date & Hour as Date_Key,

Hour,

Status,

Volume,

Energy

;

Calendar:

Date & Hour as Date_Key, // Hrs 1 to 24 generated automatically with loop

Year,

Month,

Week,

Day

...

;

So my task is to build the datamodel taking in to count the Interval match validity when I select a Date (Year, Month, Day) of the calendar. Only the valid data from master tables at that particular point of time should be considered when I select a year, month, day etc...

For this I have done the following:

I built a table KeyFigures.

Qualify *;

Unqualify '%KEY*',DATE_VALID_FROM,DATUM_VALID_TO;

KeyFigures:

Load

'#STATION#'& %Key_Station_Id as %KEY_ID_OBJEKT,

'#STATION#' & Valid_From_Station &'#'& Valid_To_Station as %KEY_ID_OBJEKT_VALID_FROM_VALID_TO,

%Key_Station_Id

Valid_From_Station as DATE_VALID_FROM,

Valid_To_Station as DATUM_VALID_TO

from Station

Join (KeyFigures)

Load

'#MeasuringBar#'& Measuringbar_Id as %KEY_ID_WGOBJEKT,

'#MeasuringBar#'& %Measuringbar &'#'& Valid_From_MeasuringBar &'#'& Valid_To_MeasuringBar as %KEY_ID_OBJEKT_VALID_FROM_VALID_TO,

Valid_From_MeasuringBar as DATE_VALID_FROM,

Valid_To_MeasuringBar as DATUM_VALID_TO

from MeasuringBar;

Join (KeyFigures)

Load

'#Variable#'& %Key_ID_Station_Measuringbar as %KEY_ID_WGOBJEKT,

'#Variable#'&Valid_From_Variable &'#'& Valid_To_Variable as %KEY_ID_OBJEKT_VALID_FROM_VALID_TO,

Valid_From_Variable as DATE_VALID_FROM,

Valid_To_Variable as DATE_VALID_TO

from Variable;

UNQUALIFY *;

KeyFigures_Detail:

Load Distinct

%KEY_ID_WGOBJEKT,

%KEY_ID_WGOBJEKT_GUELTIG_VON_GUELTIG_BIS,

DATE_VALID_FROM,

DATE_VALID_TO

Resident KeyFigures;

inner Join IntervalMatch(Date_Key)

Load DATE_VALID_FROM,

DATE_VALID_TO

Resident KeyFigures_Detail;

Join(Variable)

Load Distinct

%KEY_ID_WGOBJEKT,

%KEY_ID_WGOBJEKT_GUELTIG_VON_GUELTIG_BIS,

Date_Key

Resident KeyFigures_Detail;

drop Table KeyFigures_Detail;

Drop Field %KEY_ID_WGOBJEKT from KeyFigures; // to remove the synthetic keys

Variable_MS: //Final Variable_MS as a Facttable

Load *,

%Key_ID_Station_Measuringbar &'#'& Date_Key as %KEY_ID_STATION_MSCHIENE_Date_Key

Resident Variable;

Drop Table Variable;

Drop Field Date_Key from HourlyData;

Now I get a Starschema Datamodel with the correct data and links but I could load only one month. For that the Table "Variable_MS" contains 800 Million datasets which is too much. The data is getting multiplicated due to interval match.

How can I change my Datamodel to be performant using Interval match and still get the 2 Years data.

I hope I will get some positive answers from profis..

Thanks in advance

Sravan

- « Previous Replies

-

- 1

- 2

- Next Replies »

Accepted Solutions

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, I think the best solution is if you have one bridge table per dimension. Your structure should be

HIC

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Although I do not understand your data model completely, I can say that the JOINS you have in the script could cause these problems. I do not think you need them at all. Joining tables is most of the time not necessary, and it often causes tables to explode in size.

Can you post an image of your data model? (ctrl-T)

HIC

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Henric,

thanks for your answer.

The Joins in the script are to get the "Valid From" and "Valid To" fields together from all the Tables so that I can perform the Interval match only once. Otherwise, I dont need the joins.

Thought the table names are different, the data model looks as below..

Hope someone can help...

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

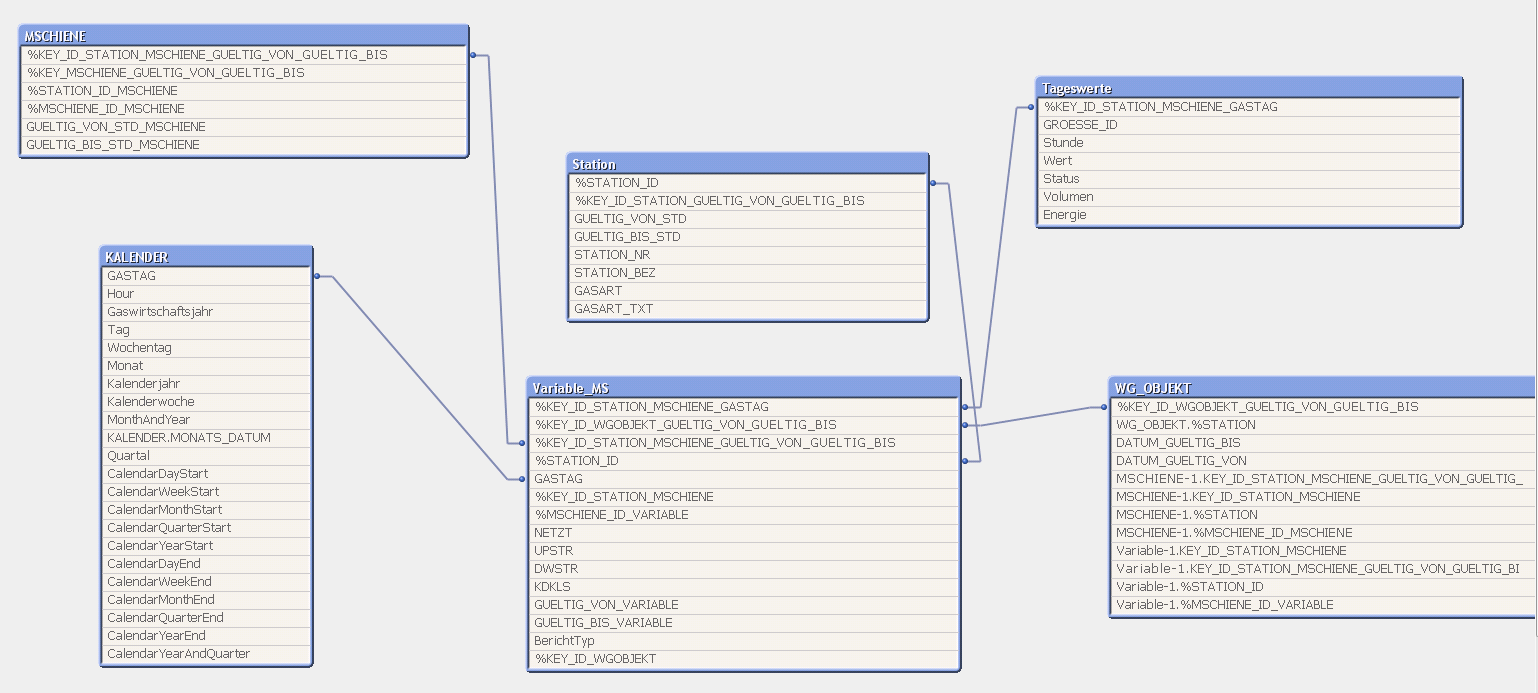

Got it. Slowly changing dimensions.

I would prefer that you aim for the following scheme:

1) Fact table: I don't understand whether the "Variable_MS" or the "Tageswerte" is the fact table, but I assume that it is "Tageswerte" (dass hört mann aus dem Namen...). In this table you should create a composite key consisting of the DimensionID and time/date.

2) Dimension table: All dimension fields that you want, but the only key should be a composite key made from DimensionID, GültigVon and GültigBis

3) Dimensional bridge table: A table created by using Intervalmatch that consists of two fields only - the two composite keys mentioned above. This table links the fact table and the dimensions.

Do this for all three dimensions: Object, Mshiene and station, and I think you will be all set.

I plan to write a blog about Slowling changing dimenisons, but I do not have time to do this before christmas.

HIC

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Henric,

Thanks for the answer and your time.

Facttable is actually the Tageswerte as you said and this table has Date and DimensionID

Tageswerte: //FactTable

Station_Id & '_' & Measuringbar_Id & '_' & Date & Hour as %Key_ID_Station_Measuringbar_Date,

//Composite Key

Station_Id & '_' & Measuringbar_Id as %Key_ID_Station_Measuringbar ,

Date & Hour as Date_Key,

Hour,

Status,

Volume,

Energy

;

Station: //Dim Table

Load

Station_Id & '_' & Valid_From_Station & '_' &Valid_To_Station as %Key_ID_Station_ValidFrom_ValidTo,

Station_Id as %Key_Station_Id,

Valid_From_Station, //YYMMDDHH, Date with Hour Format 2012122106 where 06 is 06 Hrs

Valid_To_Station, //YYMMDDHH, Date with Hour Format

Station_Art_ID,

Station_Art_Txt

;

etc... These composite Keys I have defined in my WG_OBJEKT table to connect. This is actually what you said "Dimensional bridge table" for all the Dimension Tables. This I have created by Joining...

What I did not understand is the "Dimensional bridge table" you have described above. Do you mean for each dimension table I have to create a Dimensional bridge table? This is where I have the problem and Performance problems. Can you please explain it.

Thanks a lot

sravan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, I think the best solution is if you have one bridge table per dimension. Your structure should be

HIC

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hey Henric,

Thanks for the help. I did that way and you confirmed it with the Datamodel. The Performance ist much better now.

Vielen Dank ![]()

sravan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Henric,

I have one more question regarding this.

Their might be the case that the keyfigures Table (Tageswerte) is not filled and still the Masterdata (For Ex. Station) need to be anaylsed. As the calendar hangs on the Tageswerte, it does not show the values (For Example all the valid stations in 2012) when the data is not present in the Tageswerte.

There are ways to solve this like: One is

1) having two Calendar boxes on the layout with FROM and TO Date selections

and the chart which depends show the >= FROM Date and <=TO Date

but again for Example, If I want to analyze the Mschiene, I need to create two calendar boxes in new sheet to analyse the Mschiene. This is not elegant way. Any idea?

Thanks in Advance

Sravan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

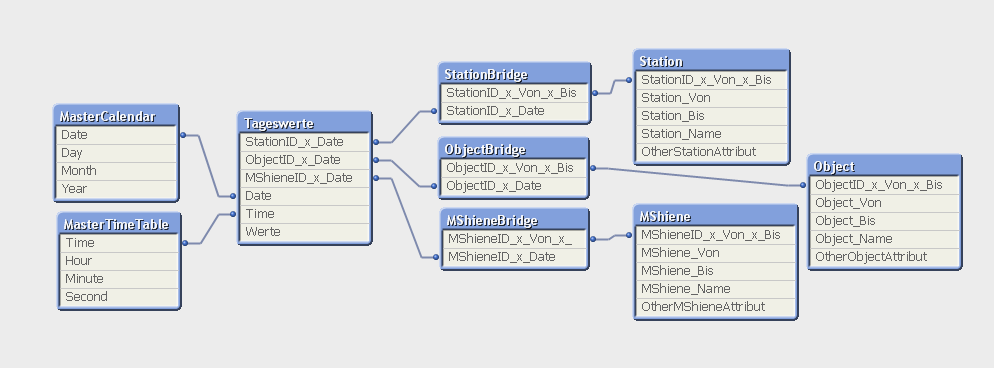

I don't think that additional calendars will be a good solution. Then I think it would be better to populate the Tageswerte table with records where the Werte is zero or NULL, so that you get the link to the calendar. Example:

Tageswerte:

Load ...,

AutoNumber(StationID & '|' & ObjectID & '|' & MSchieneID & '|' & Date) as GlobalID

From TageswerteSource;

DummyRecordsForTageswerte:

Load distinct StationID from Station;

Join Load distinct ObjectID from Object;

Join Load distinct MSchieneID from MSchiene;

Join Load distinct Date from MasterCalendar;

Concatenate (Tageswerte)

Load * where not Exists(GlobalID);

Load

AutoNumber(StationID & '|' & ObjectID & '|' & MSchieneID & '|' & Date) as GlobalID,

StationID & '|' & Date as StationID_x_Date,

ObjectID & '|' & Date as ObjectID_x_Date,

MSchieneID & '|' & Date as MSchieneID_x_Date,

Date,

Null() as Time,

Null() as Werte

resident DummyRecordsForTageswerte;

Drop table DummyRecordsForTageswerte;

// HIC

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Henric,

Thanks for the good suggestion. Actually My "Date" comprises of Date and Hour Field as follows: "YYYYMMDDhh"

That means, My Calendar composes of Date and Hr and for two years, its a big table containing 20000 entries. I don't need any Minutes and Seconds for my App. Only YYYYMMDDhh.

Now when I use distinct for 2 years in the below code the RAM goes up and does not respond as it multiplies the data because of join and hence Qv App does not respond. I cant remove the Hour field as my fields Validfrom and ValidTo from all the tables are in the format "YYYYMMDDhh".

Join Load distinct Date from MasterCalendar;

Need to see and workout. Any suggestions are welcome:)

Regards

Sravan

- « Previous Replies

-

- 1

- 2

- Next Replies »