Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- QlikView App Dev

- :

- Performance of Cross table load vs for loop

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Performance of Cross table load vs for loop

Hello

I am having some issues with the cross table load performance. It is performing worse than a for loop with a where statement producing the same output table.

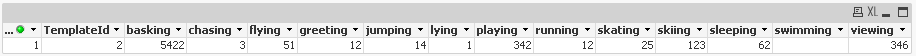

I have a table with columns as follows

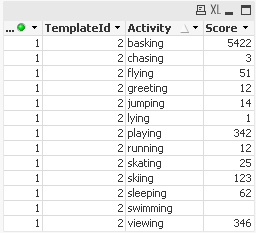

I need to transform it to this:

My problem as I mentioned is that I am getting better performance from a for loop with a where clause than from cross table load.

Here is an example of the code of the for loop that beats the cross table load.

set sFromDate = 2013-01-01;

set sFromDate = 2013-01-01;

set sTodate = 2014-01-01;

let vParameterNames = 'running','skiing','jumping','swimming','flying','skating','playing','sleeping','viewing','greeting','chasing','lying','basking';

for each parametername in $(vParameterNames)

Activity:

load

Key,

Activity.$(parametername) as Activity.Score,

'$(parametername)' as Activity.ParameterName,

applymap('MapParameterToGroup', '$(parametername)-'&TemplateID,'') as Activity.ParameterGroupName

FROM '$(sFileName)' (qvd)

where Activity.$(parametername) >= 0

and date#(BKDate,'YYYY-MM-DD') >= date#('$(sFromDate)','YYYY-MM-DD') and date#(BKDate,'YYYY-MM-DD') < date#('$(sToDate)','YYYY-MM-DD')

and TOTAL_SECONDS > 0;

next

And here is the code for the crosstable loaded table:

set sFromDate = 2013-01-01;

set sTodate = 2014-01-01

let vParameterNames = 'running','skiing','jumping','swimming','flying','skating','playing','sleeping','viewing','greeting','chasing','lying','basking'

Activity_temp:

crosstable (Activity.ParameterName, Activity.Score,2) load *;

load

Key,

TemplateID,

Activity.running as running,

Activity.skiing as skiing,

Activity.jumping as jumping,

Activity.swimming as swimming,

Activity.flying as flying,

Activity.skating as skating,

Activity.playing as playing,

Activity.sleeping as sleeping,

Activity.viewing as viewing,

Activity.greeting as greeting,

Activity.chasing as chasing,

Activity.lying as lying,

Activity.basking as basking

FROM '$(sFileName)' (qvd)

where date#(BKDate,'YYYY-MM-DD') >= date#('$(sFromDate)','YYYY-MM-DD') and date#(BKDate,'YYYY-MM-DD') < date#('$(sToDate)','YYYY-MM-DD')

and TOTAL_SECONDS > 0;

Activity:

load

Key,

Activity.ParameterName,

Activity.Score,

applymap('MapParameterToGroup', Activity.ParameterName & '-'&TemplateID,'') as Activity.ParameterGroupName

resident Activity_temp;

drop table Activity_temp;

The crosstable performs amost equally as the for loop. if needing to split the crosstable in two tables then using the for loop is quicker than the cross table load. In my experiment with 1,000,000 rows and 13 columns the for loop finished in 60 seconds and the crosstable in 72 seconds. For me both are too slow when data size increases.

Unfortunately i need to transpose the table in order to display the data nicely in a straight table with horizontal linear gauge.

Hopefullly i am abusing the cross table load somehow in a way that it can be speeded up! ![]()

My other option is to create an extension object but then i have the problem of not being able to print that in a pdf report which is required.

Has anyone had similar issues?

regards

Johan

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May be you can implement the incremental load on cross table.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Dathu,

thanks for your input.

If an incremental load is possible for the crosstable then I should also be able to do that for the for loop alternative. The performance gain would (probably) be similar for both alternatives and the crosstable load would therefore still be slower. I have tested the 2 alternatives with different sizes of input data which would simulate the lesser amount of source data that an incremental load would give me.

My issue is with the performance of the crosstable load. I just cannot understand why the crosstable load is so slow. The for/where solution to me is a very costly/bad solution which i didn't really think would be quicker than a built in algorithm.