Unexpected loss of rows while using binary load with any further statement in the script

Hi Community,

I am facing a really strange issue with binary load.

Application B does a binary load of application A. In the script of application B, as soon as I put any other statement after the binary statement, the content of one particular table changes, even if that statement is a completely neutral one like TRACE. I don't understand why.

With only the binary statement:

The same with just a neutral statement after it:

Can someone please help me solve this incredible issue?

Thanks in advance,

Stéphane

Did you also check the number of records in the table viewer? Maybe further selections are being triggered by actions or macros.

Do you always check directly after a reload, or was the application saved, closed and re-opened in between? If the differences appear after opening the application, that would point to a data reduction through section access.

- Marcus

Thanks for your reply Marcus.

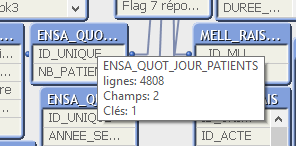

Well, the wrong number of rows is the same in the table-viewer :

I check directly without any saving, closing... Between both pictures of my first post, I have only uncommented the TRACE statement and launch the reload.

I don't really have an idea what might be going wrong, so I would just try to rule out possibilities one by one...

This means that if a prj-folder exists, I would remove it (rename it, copy the qvw, or similar), and do the same with section access (neither should be responsible, but you never know). Also check both versions after closing the document and quitting QlikView itself, to detect whether some caching might be causing this issue.

Further, try it within a completely new qvw, and make sure that both releases (the one that created A and the one used for B) are the same.

- Marcus

Actually, I came here after testing a lot of things like that.

I don't have a prj-folder. Section access should normally come after the binary... If I do the section access in application A, the problem is the same in application B: OK with the binary statement alone, KO with anything else after it.

Application A is a big one, with 60 tables in a star schema. I recently did an optimisation to remove all the unused fields from these tables, thanks to Rob's DocumentAnalyser. In the affected table, I removed a field from the SQL that was actually needed to get the right aggregation. This caused an error in the data. I found my mistake and corrected it, and the data are now OK in application A. What is really strange is that in application B, the wrong data that appear when I add a statement after the binary are those from before this correction.

I'm totally lost. It's not logical at all.

Maybe some kind of caching: are you using BUFFER in any way?

Could you make sure that B is always loading from one and the same A? Maybe there is some kind of caching / load-balancing within your storage / OS.

- Marcus
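One way to check whether B is really reading the same A each time is to fingerprint the file at the exact path used in the binary statement, once before the reload that works and once before the reload that fails. The following is only a minimal sketch, assuming Python is available on the machine; the helper name `fingerprint` and the example path are hypothetical and not taken from this thread.

```python
import hashlib
import os
from datetime import datetime


def fingerprint(path, chunk_size=1024 * 1024):
    """Return (sha256 hex digest, size in bytes, last-modified time) of a file."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # Read in chunks so that a large qvw does not have to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    stat = os.stat(path)
    return digest.hexdigest(), stat.st_size, datetime.fromtimestamp(stat.st_mtime)


# Example call (hypothetical path, use the one from the binary statement in B):
# print(fingerprint(r"C:\QlikView\A.qvw"))
```

If the digest, size or modification time differs between the two runs, or differs from the copy of A that was just reloaded and saved, B is not reading the file you expect. Identical values would only rule out the file itself, not caching inside QlikView.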

I agree with Marcus. Were you able to find something in the end? If so, please consider posting what it was and then marking that post as the solution. I cannot figure out what may have been cached or why, but that is really the only explanation that makes much sense at the moment. What version are you running? The only other thing I can think of is a possible defect, so if you are not on the most current SR for the point release you are running, I would recommend trying the latest SR on that point release, just to check whether things can still be reproduced. Sorry I do not have anything better for you.

Regards,

Brett
