Reloading QVW file is taking more than 3 hours

Good evening everybody

For the past month I've been working on a QlikView project that involves a very large amount of data. Our process uses one QVW file to create QVD files on the server; a second QVW, our Dashboard, then loads its data from those QVDs. We had no issues at the beginning because we were only loading a small amount of test data.

We have now started loading production data into our QVD files, almost 2.5 GB of data per month. We now have data going back to 10/13, and reloading our dashboard has become impossible.

It takes at least 4 hours to reload the Dashboard, which does not work for our business area because they want to see updated information at least every hour.

Creating the QVDs is not the issue; reloading the QVW is. We really need help with this, because the business won't accept the time the reload takes.

Is there any way to do an incremental load for a QVW, just as for QVDs? We have data since 10/13, but the older data no longer changes, so we would like to refresh only the current month and not the old ones. Our QVDs are separated by month.

I would really appreciate any help on this.

Regards

Hi Jorge, You should seriously consider incremental load. Please go through this link.

http://www.learnallbi.com/incremental-load-in-qlikview-part1/

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

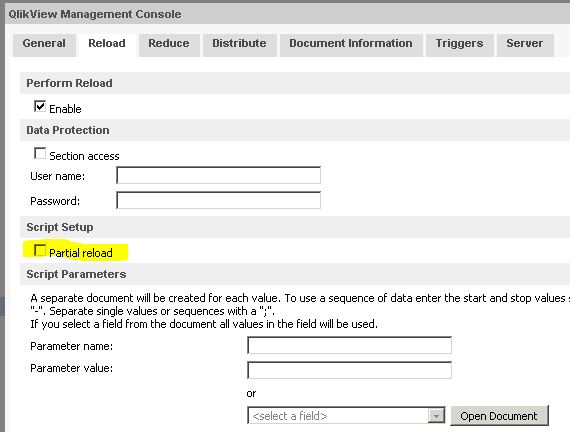

You can look into the partial reload functionality of QlikView.

Hi,

4 hours is unusually long for refreshing a .qvw.

1. How many QVD files are you using in your app, and what is the size of each file?

2. Do you have a QlikMart between the QVD source layer and the application? If not, are you building the dashboard application from QVD files or from other sources such as Excel or a database?

Can you build a QlikMart from all your input QVDs ahead of the application and then do a Binary load into your dashboard application? (A Binary load is quick and should hopefully reduce the reload time.)
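As a minimal sketch of the Binary load suggestion: the dashboard script would start by pulling in the whole data model of the QlikMart document. The file name `QlikMart.qvw` is a hypothetical placeholder, not something from this thread.

```qlikview
// Binary must be the first statement in the script,
// and only one Binary statement is allowed per document.
// QlikMart.qvw is a hypothetical file name for the data-model document.
Binary QlikMart.qvw;

// Any statements below run after the QlikMart data model has been loaded.
```

The heavy lifting then happens when the QlikMart document itself is reloaded, so this only helps if that reload can be made fast or scheduled separately.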

Thanks,

JaswantC

Hi Jorge,

You could set the task properties in the management console to perform a partial reload instead of a full reload of the visualization QVW.

Additionally, you would have to edit your visualization load script to implement the logic for the partial reload (using the "add" prefix in load statements).
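A rough sketch of what the "add" prefix could look like in the dashboard script, following the pattern in the QlikView help. The file names `Facts_*.qvd` and `Facts_Current.qvd` and the key field `TransactionID` are assumptions for illustration only.

```qlikview
// Full reload: load every monthly QVD.
// This statement has no Add/Replace prefix, so a partial reload skips it.
Facts:
LOAD * FROM Facts_*.qvd (qvd);

// "Only" means this statement is disregarded during a full reload.
// During a partial reload it appends to Facts. Add does not check for
// duplicates by itself, so the WHERE clause guards against re-adding
// rows whose (hypothetical) key TransactionID is already in the model.
Add Only LOAD * FROM Facts_Current.qvd (qvd)
WHERE NOT Exists(TransactionID);
```

Note that this only appends new rows. Rows that already exist in the model and have since changed in the source would not be refreshed, so an occasional full reload may still be needed.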

hope this helps

regards

Marco

The most critical thing that you need to get right to ensure your front end document loads quickly is ensuring all QVD loads are optimized.

Loads will be optimized if you load directly from the QVD without changing the structure or content of the data (e.g. adding columns or running functions over fields). You will also need to ensure that the only WHERE clause you have is a single WHERE EXISTS. Things like JOIN and RESIDENT loads will not be optimised either.
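To illustrate the WHERE EXISTS point, here is a small sketch that filters monthly QVDs while keeping the load optimized. The field `MonthKey`, its values, and the file mask `Facts_*.qvd` are all hypothetical.

```qlikview
// Small helper table listing the values we want to keep.
WantedMonths:
LOAD * INLINE [
MonthKey
201401
201402
];

// No new columns, no functions, and a single one-parameter Exists():
// this is the shape of load that can stay optimized.
Facts:
LOAD * FROM Facts_*.qvd (qvd)
WHERE Exists(MonthKey);

// The helper table is no longer needed once the filter has been applied.
DROP TABLE WantedMonths;
```

Whether a given load really ran optimized can be checked in the script execution progress window, which marks such loads as "qvd optimized".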

Once you have all your QVD loads optimised, if things are still slow then you may want to look at reducing the size of your QVD files. Dropping unused columns and reducing the granularity of the data (by rounding numbers to a smaller number of decimal places, for instance) will all help.

I have done a number of blog posts on optimising performance. I shall post them here, but they may take a while to surface due to moderation.

If you Google "qlikview optimised qvd loads" you should find the most relevant article.

Hope that helps,

Steve

Articles that may help with optimising your re-load routines:

http://www.quickintelligence.co.uk/qlikview-optimised-qvd-loads/

http://www.quickintelligence.co.uk/perfect-your-qlikview-data-model/

http://www.quickintelligence.co.uk/qlikview-incremental-load/

Steve

One further thought on this: could you perhaps aggregate your old QVDs so they are not stored with so many rows? You could do this in the following way, using a very simple data model as an example:

    Aggregated:
    LOAD
        Date,
        0 as RowID,
        sum(Value) as Value
    FROM DetailedQVD_200801.qvd (qvd)
    GROUP BY Date
    ;

    STORE Aggregated INTO AggregatedQVD_200801.qvd (qvd);

In this example, where you may have had thousands of individual transaction IDs in your old QVDs, the data would be aggregated to 1 row per day in the new QVD. You could then blend detailed QVDs for recent months with more aggregated QVDs for older months, where the detail is no longer important. You may choose to drop narrative details from old rows, whilst keeping them for more recent months, for example.

Picking the fields you group on and which ones you sum is the key to getting this working well.
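As a sketch of how the blend might look in the front end, assuming the detailed QVDs carry the same three fields as the aggregated ones (Date, RowID, Value). The specific file names and months below are hypothetical.

```qlikview
// Older months: aggregated files, one row per Date (RowID is 0 there).
Facts:
LOAD Date, RowID, Value
FROM AggregatedQVD_2013*.qvd (qvd);

// Most recent month: full detail, appended to the same table.
// The field list is identical, so no columns are added or transformed.
Concatenate (Facts)
LOAD Date, RowID, Value
FROM DetailedQVD_201402.qvd (qvd);
```

Charts that sum Value by Date would then work across both ranges, while anything that depends on RowID is only meaningful for the detailed months.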

If the optimized QVD load works for you though this approach of ditching old data may not be required.

Cheers,

Steve

Thanks all of you guys for your answers.

Jaswant

I'm using only QlikView to build the QVDs and dashboards. My QVDs are "partitioned" by month, and each one is almost 1 GB in size. I then load all of them into my dashboard, and that is where my biggest problem is. Creating the QVDs takes about 15 minutes per month, or about 1 minute per day. But loading them into the Dashboard is what kills my process.

Marco

I will take a look at Partial Reload. This is totally new for me, but it sounds like a great way to load the data, especially new data.

Steve

I have used a lot of Resident loads, so I think I might need to optimize my script. Combined with the other solutions, that might put me on the right path.

I will follow your example and let you know.

Thanks to all.

Cheers

Hi Jorge,

From what you say regarding resident it may be that you could use another layer of QVD production. When you are doing resident loads and joins across all of your historical files they will take a long time to do the join - as a record from this month will be trying to match with records from last October.

If you process each of your existing QVD's into the correct format for loading into the front end in another QVD generator (or attached to the end of your current one) then you will be processing one month at a time and all functions will run much quicker. The goal is then to be able to do an optimised load in the front end.
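A minimal sketch of such an extra QVD generator layer, processing one monthly file at a time. The file prefixes `Raw_` and `Ready_` and the fields `Amount` and `Quantity` are assumptions made for illustration.

```qlikview
// Loop over every monthly input file (hypothetical mask Raw_*.qvd).
FOR EACH vFile IN FileList('Raw_*.qvd')

    // Build the output path by swapping the prefix.
    // Caution: Replace() acts on the full path, so this assumes
    // 'Raw_' does not also appear in a folder name.
    LET vOut = Replace('$(vFile)', 'Raw_', 'Ready_');

    // Transformations happen here, one month at a time,
    // instead of in the front-end document.
    Ready:
    LOAD
        *,
        Amount * Quantity as LineTotal
    FROM [$(vFile)] (qvd);

    STORE Ready INTO [$(vOut)] (qvd);
    DROP TABLE Ready;

NEXT vFile
```

If old months never change, the loop could be restricted to the current month's file so that earlier `Ready_` files are simply left in place.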

To see what difference a single optimised load will make for you, try this:

    LOAD
        1 as NonOptimized,
        *
    FROM *.qvd (qvd);

Followed by:

    LOAD
        *
    FROM *.qvd (qvd);

You should see quite a difference.

Cheers,

Steve