Dataflow example

Hi all!

I'm developing a basic dataflow of a business case.

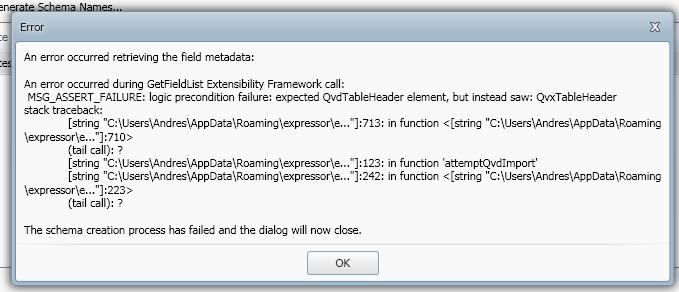

One of the requirements is to write all the data processed in the dataflow to a QVD file. Is this possible? I tried setting the .qvd extension on the output file and tested it in QlikView, where it works. But when I then read the same file in Expressor, it shows me this error.

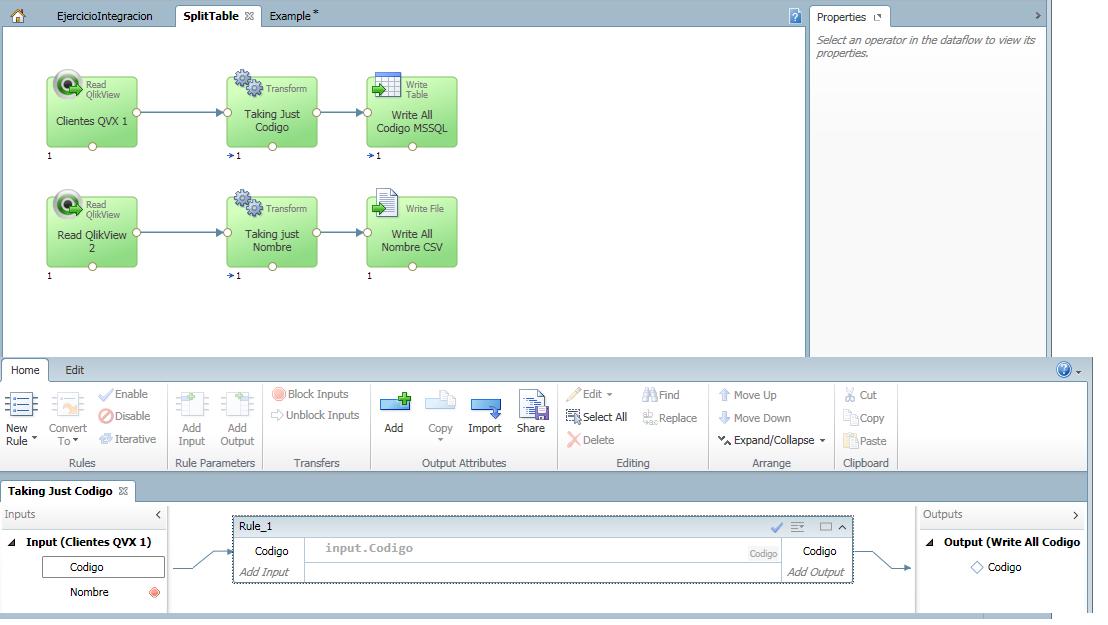

Another requirement is to read a table, take one field and write all of its data to an MSSQL table, and write the other field to a CSV file. The dataflow to do that looks like this.

My first question is: is the rule on the Transform operator (blocking the second field) the only way to do this? My other question is whether I can create a table in my MSSQL database with the Write Table operator in Expressor, or whether I have to create it beforehand on my database server. (Assume that the connection to SQL Server gives me administrator permissions.)

If anyone can help me, thanks

Maria Jose

Accepted Solutions

Hello Maria,

One of the requirements is to write all the data processed on the dataflow to a qvd file. Is this possible?

Not yet. This is on the roadmap for a future release. For now, the extension you must set when using the Write QlikView operator is .qvx.

Currently, QVE can only read .QVX files that were generated by QVE itself. There is discussion about reading .QVX and .QVD files generated by other tools.

Another requirement is to read a table and then split or take 1 field and write all of its data on a MSSQL table and take the other field in a csv file.

My question is: The rule on the transformation operator is the only way to do this (Blocking the second field)

This can be done with a Copy operator and two Transform operators, where each one blocks a different field.
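To make the copy-then-block idea concrete, here is a minimal Python sketch of the same logic outside Expressor. It is only an illustration, not Expressor code: the function name `copy_and_split`, the sample field names `id` and `name`, and the in-memory stand-ins for the database table and the CSV file are all hypothetical.

```python
import csv
import io


def copy_and_split(rows, table_field, csv_field):
    """Send every record to two branches; each branch keeps one field.

    Hypothetical stand-in for a Copy operator feeding two Transform
    operators, where each Transform blocks the field it does not need.
    """
    table_branch = []            # stands in for the rows sent to the table writer
    csv_buffer = io.StringIO()   # stands in for the CSV output file
    writer = csv.writer(csv_buffer, lineterminator="\n")
    writer.writerow([csv_field])  # header line for the CSV branch

    for row in rows:
        # Branch 1: keep only the table field (the CSV field is blocked).
        table_branch.append({table_field: row[table_field]})
        # Branch 2: keep only the CSV field (the table field is blocked).
        writer.writerow([row[csv_field]])

    return table_branch, csv_buffer.getvalue()


# Sample data with assumed field names.
rows = [{"id": 1, "name": "Ana"}, {"id": 2, "name": "Luis"}]
table_rows, csv_text = copy_and_split(rows, "id", "name")
```

Both branches see every input record, which is the role the Copy operator plays; the field selection in each branch corresponds to the blocking rule in each Transform operator.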

If I could create a table on my MSSQL database with the write table operator in Expressor, or I have to do it before on my database server. (Assume that the Connection to SQL server gives me administrator permissions)

Yes. Select the CREATE TABLE check box in the WRITE TABLE operator properties and the operator will create the table for you. As long as you have the required permissions, the DDL to do so will be generated.

Regards,

Mike T

Mike Tarallo

Qlik

See the attached sample (import it into QVE using the Import functionality; DO NOT UNZIP it) and the screenshot.

I created this with QVE 3.8, which should be available by end of day today, but it may also work if you import it into 3.7.1.

The data files will be available here after you import it:

<drive: workspace path>\Metadata\Split.0\dfp\Dataflow1\external

Note that your workspace path will vary.

Regards,

Mike T

Mike Tarallo

Qlik

Hi Mike!

Thanks for answering my questions. I tried your example and it worked.

As I said, setting the .qvd extension on the output file of a write operator worked: the file loaded as a QVD in a QlikView application. Do you still recommend using only the .qvx extension for the output file?

Regards

Yes, as a best practice, so that the file is properly identified.

It probably worked because the extension was ignored. I just created one with the extension .mike and it worked. What matters is what is in the header of the file.
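As a rough illustration of identifying a file by its header rather than its extension, here is a small Python sketch. It rests on an assumption that is not stated in this thread: that a QVX file starts with an XML header whose root element is `QvxTableHeader`, and a QVD file with one whose root element is `QvdTableHeader`. The function names and the 4096-byte read size are hypothetical choices for the example.

```python
def sniff_qlik_format(data: bytes) -> str:
    """Guess the format from the leading bytes of a file.

    Assumption: the XML header near the start of the file names the
    format in its root element (QvxTableHeader or QvdTableHeader).
    """
    head = data[:4096]  # only inspect the beginning of the file
    if b"<QvxTableHeader" in head:
        return "qvx"
    if b"<QvdTableHeader" in head:
        return "qvd"
    return "unknown"


def sniff_qlik_file(path: str) -> str:
    """Read the start of a file and classify it, ignoring its extension."""
    with open(path, "rb") as handle:
        return sniff_qlik_format(handle.read(4096))


# Illustrative header fragment, not taken from a real file.
sample = b'<?xml version="1.0" encoding="utf-8"?><QvxTableHeader>'
kind = sniff_qlik_format(sample)
```

Under that assumption, a file written with the .qvd or .mike extension would still be classified by its content, which matches the behaviour described above.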

Mike Tarallo

Qlik

Okay, I got it.

Great Mike, thanks for your help!