Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- QlikView App Dev

- :

- Re: Left Join Performance Issue

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Left Join Performance Issue

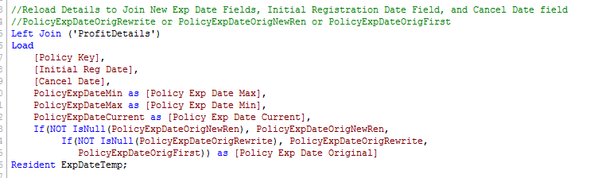

I was hoping someone could help me out and see why my script has major performance issues when left joining 6 fields onto a ~300k row table. It doesn't feel like it should be a major issue. I worked around this using a mapping load, but it seems odd.

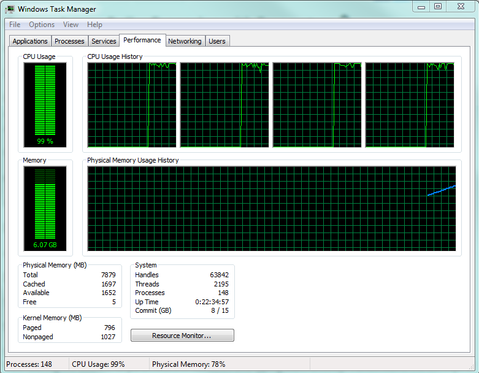

The second it gets to the Join my CPU usage jumps to 100% and the memory slowly builds until I am maxed out and have to abort. Note this still happens without the IsNull portion. I have tested it on a limited load and it does work. It gets stuck/working too hard AFTER it says "ExpDateTemp 285,245 Lines fetched"

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello

I'd need to understand the table you are joining TO because I suspect that this is a combination of the first table and the second table, and not simply to do with the left join itself.

Can you include the script that generates the ProfitDetails table.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks for looking into this for me. Attached is the Profit Details load. 73 Fields.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Sorry, should have mentioned that my company doesn't allow me to access attachments on this site so I can't see that.

Let me take a guess:

I often see massive delays when I'm trying to execute a left join to a table that already has more than one field in my joined table, so in your example maybe the ProfitDetails file already has more than one of the following fields:

- Policy Key

- Initial Reg Date

- Cancel Date

- Policy Exp Date Max

- Policy Exp Date Min

- Policy Exp Date Current

- Policy Exp Date Original

If this is the case then across 300k rows of data it is trying to execute joins across multiple fields and therefore trying to discover what the synthetic key should be in order to join that data.

The way to overcome this problem is to ensure that the two tables have ONE and only one field that is used to join the tables. For example (and to keep this as short as I can) if your actual unique join between these tables is a combination of the Policy Key and Initial Reg Date then what you do is to create a key in the profit details table which is defined as [Policy Key] & [Initial Reg Date] AS KEY_Join1 and then do the same in the left join table load, but crucially do NOT load the individual fields of Policy Key and Initial Reg Date.

The alternative is that the performance issue is really only down to the nested if...then....else aspect of the load, which would be unusual, but if that is the case then perhaps move this statement to the ExpDateTemp table rather than the left join.

Hope this helps.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

It's quite a lot of fields, so I thought it would be too long to paste.

Thanks for the detail that does make sense, but all those fields are new (Except for Policy Key). It should be as simple as attaching extra fields on the end based on policy key. I have heard others mention that mapping load is preferential to join, but I still don't see why this would lag out.

ProfitDetails:

LOAD

RowNo()+Peek([Transaction ID],-1,'AirDetails') as [Transaction ID],

_Policy_Key as [Policy Key],

ACCT_YR as [Account Year],

ACCT_MO as [Account Month],

ACTG_YR_MO as [Account YearMonth],

Autonumber(_Policy_Key&'-'&ACTG_YR_MO,'PolYrMo') as [Policy YrMo Key],

Date(Date#( [ACTG_YR_MO] , 'YYYYMM')) as [Trans Reg Date],

SRCE_POL_N as [Policy Number],

SRCE_POL_EFF_D as [Policy Eff Date],

SRCE_POL_EXP_D as [Policy Exp Date],

INSD_NA as [Insured Name],

UNWTR_NA as [Underwriter Name],

NEW_RNWL_C as [New Renewal Code],

NEW_RNWL_DE as [New Renewal Desc],

SRCE_STUY_CO_C as [Writing Company Code],

SRCE_STUY_CO_NA as [Writing Company],

CMGMT_DIVS_C as [Division Code],

CMGMT_DIVS_DE as [Division Desc],

CMGMT_MKTG_RESP_C as [Marketing Responsibility Code],

CMGMT_MKTG_RESP_DE as [Marketing Responsibility Desc],

CMGMT_MGMT_RESP_C as [Management Responsibility Code],

CMGMT_MGMT_RESP_DE as [Management Responsibility Desc],

CMGMT_PRFY_ANL_C as [Profitabiltiy Analysis Code],

CMGMT_PRFY_ANL_DE as [Profitabiltiy Analysis Desc],

MCC_C as [MCC Code],

MCC_DE as [MCC Desc],

SRCE_GFS_PRDT_C as [Product Code],

SRCE_GFS_PRDT_DE as [Product Desc],

Text(IF(ISNULL(PROD_ID),CUR_PROD_ID,PROD_ID)) as [Producer ID],

IF(NOT ISNULL(PROD_ID) and CUR_PROD_ID = SRCE_SUB_PROD_C, SRCE_SRCE_PROD_NA, Null()) as [Producer Name],

INS_C as [Insurance Type Code],

Capitalize(INS_CD_DE) as [Insurance Type Desc],

Text(IF(ISNULL(RE_TRTY_C), RE_CO_C, RE_TRTY_C)) as [Reinsurance Company/Treaty Code],

IF(ISNULL(SRCE_RE_CO_DE), SRCE_RE_ASMD_CO_DE, SRCE_RE_CO_DE) as [Reinsurance Company Name],

Reinsur_Ident as [Reinsurance Contract Type],

SRCE_ENT_C as [Transaction Code],

SRCE_ENT_CD_DE as [Transaction Desc],

Autonumber(_Policy_Key&'-'&ACTG_YR_MO&'-'&SRCE_ENT_C,'PolYrMoTran') as [Policy YrMo Tran Key], //Mostly for Extract 29

Date(MakeDate(ATTCHNG_CTRY_Y,ATTCHNG_MO,ATTCHNG_DA),'YYYYMMDD') as [Transaction Eff Date],

//Match signs to Air Data for Extract Consistency

If(INS_C='CED',-USD_WP_MTH_A,USD_WP_MTH_A) as [Written Premium],

If(INS_C='DIR' or INS_C='ASM',-USD_COMM_MTH_A,USD_COMM_MTH_A) as Commission,

If(TAX_PLTDV_C='',Null(),TAX_PLTDV_C) as [State Code],

Capitalize(TAX_PLTDV_DE) as State,

BUS_CLSN_C as [Business Class Code],

POL_EFF_YR as [Policy Effective Year],

SRCE_SYS_SRCE_C as [Source System Code],

SRCE_SYS_SRCE_DE as [Source System],

SRCE_GFS_BU_C as [Business Unit Code],

SRCE_GFS_BU_DE as [Business Unit],

TAX_CTRY_ID as [Country ID],

TAX_CTRY_DE as [Country Desc],

INSD_N as [PROFIT Insured Number],

CUR_PROD_ID as [PROFIT Current Producer ID],

SRCE_SRCE_PROD_C as [PROFIT Lchubb Producer ID],

SRCE_SUB_PROD_C as [PROFIT Sub Producer Code],

SRCE_SRCE_PROD_NA as [PROFIT Lchubb Producer Name],

SRCE_RE_ASMD_CO_C as [PROFIT Assumed Reinsurance Comp Code],

SRCE_RE_CO_C as [PROFIT Reinsurance Comp Code],

SRCE_RE_CVR_DE as [PROFIT Reinsurance Coverage Desc],

LAC_WP_MTH_A as [PROFIT Local Written Premium],

LAC_COMM_MTH_A as [PROFIT Local Commission],

SRCE_GFS_DEPT_C as [PROFIT Department Code],

SRCE_GFS_DEPT_DE as [PROFIT Department],

MRKTG_PGM_C as [PROFIT Marketing Program Code],

SRCE_PRDCG_BRCH_C as [PROFIT Producing Branch Code],

SRCE_PRDCG_BRCH_DE as [PROFIT Producing Branch Desc],

SRCE_PRDCG_BRCH_DE as [Writing Branch],

SRCE_SERV_BRCH_C as [PROFIT Service Branch Code],

SRCE_SERV_BRCH_DE as [PROFIT Service Branch Desc],

CURY_C as [PROFIT Currency Code],

SRCE_RE_CVR_C as [PROFIT Reinsurance Coverage Code],

'PROFIT' as Source

FROM

[.\Extract Results\PROFIT Premium Details.del]

(txt, codepage is 1252, embedded labels, delimiter is '|', msq);- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, that's odd.

In terms of mapping load, I would only use it if I only wanted one field to join into the table, pretty inefficient way to join 5 or 6 or more fields from one table to another as you'd have to build mapping tables for each one.

Question: do you drop the ExpDateTemp table after this left join?

Is the left join the last thing to happen in the script?

When you run it with a limited load of 10 rows, what does the data model look like (Ctrl-T) and can you paste a picture of it (don't attach please as I won't be able to see it).

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

In general is a join a quite heavy transformation and should be only applied by rather small datasets. By larger datasets is often a mapping much faster - multiple fields could be string-concatenated and splitted again with subfield() so that really one mapping is enough (even multiple mappings are often more performant as the joins).

Beside this I assume that there are duplicate values in your key-field combination. In this case it's not only doubtful if the data are valid anymore else the creating of additionally records leads to an access of all symbol-tables (each field is a symbol-table with the distinct fieldvalues and a pointer to the datatable). This means the wider the table is the more work needs to be done. This and the combination of multiple key-fields is probably the cause for your experienced delaying.

Further I'm not sure that all of the process-steps are really multi-threaded (even if the task-manager indicates it) and your observed stuck of the routine by n records indicates that a certain process-step couldn't really handle the RAM requirement and maybe swapping it to the harddrive - or something in this way.

Beside this I hink you could optimize your if-loop with the isnull() check with something like this:

alt(date1, date2, date3, 'another default value')

- Marcus