Keep only rows/operations with the latest transfer date (per order)

Hi,

I want to share my solution for this problem and ask the more experienced developers whether there is a more elegant way to solve it. I don't think my solution is the best one, but it is a solution.

I haven't been able to test it against a larger dataset yet, but that will happen shortly.

Situation:

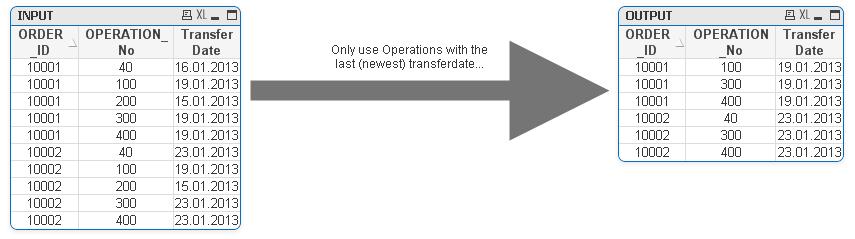

The data warehouse stores our orders. Every order can have multiple operations, so the combination of ORDER_ID and Operation_No is unique. Every operation has a field called "Last transfer date". If an operation is deleted from the operational system (for whatever reason), it stays in the data warehouse with the transfer date it had at that point. All other operations get a new transfer date every time the order or its operations change. The transfer happens daily.

Problem:

If I use the original table, I get too many operations, including deleted ones, and I end up with wrong results. So I have to clean it up in my app, as the picture shows.

At the moment I achieve this by creating a mapping table with ORDER_ID and max(Transferdate) for every order. Then I load only those operations from the original table WHERE the transfer date equals the max date from the mapping table. Please take a look at the attached files...
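To make the logic concrete, here is a minimal sketch in Python rather than QlikView script (so it is not the code from the attached files). The sample rows and the function names are made up for illustration; only ORDER_ID, Operation_No and the transfer date come from the description above. It assumes that an operation is current exactly when its transfer date equals the latest transfer date of its order.

```python
from datetime import date

# Hypothetical sample rows: (ORDER_ID, Operation_No, last transfer date).
operations = [
    (1001, 10, date(2014, 5, 2)),
    (1001, 20, date(2014, 5, 2)),
    (1001, 30, date(2014, 4, 28)),  # deleted upstream, so its date is stale
    (1002, 10, date(2014, 5, 1)),
    (1002, 20, date(2014, 5, 1)),
]

def latest_transfer_per_order(rows):
    """Build the 'mapping table': ORDER_ID -> latest transfer date."""
    max_date = {}
    for order_id, _operation_no, transferred in rows:
        if order_id not in max_date or transferred > max_date[order_id]:
            max_date[order_id] = transferred
    return max_date

def keep_latest(rows):
    """Keep only rows whose transfer date equals the max date of their order."""
    max_date = latest_transfer_per_order(rows)
    return [row for row in rows if row[2] == max_date[row[0]]]

cleaned = keep_latest(operations)
```

The first pass only needs ORDER_ID and the date, so the operation number never has to appear in the grouping; the second pass is a plain lookup per row.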

I found this tricky because the GROUP BY clause requires every field either to have an aggregation expression or to be listed in the GROUP BY. That makes it impossible (for me) to get the max date per order in the same load, since the operation_id has no aggregation expression and can't be included in the GROUP BY. I try to avoid joining...

My solution looks a bit awkward to me, and I appreciate any suggestions for improvement. I think another way would be to flag the relevant operations, but that complicates the expressions in the designer...
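For comparison, the flag variant could look roughly like the following Python sketch (again not QlikView script). The flag name IsCurrent and the sample rows are hypothetical. Every row is kept, and the flag then has to be checked wherever operations are counted or summed, which is the extra work in the expressions mentioned above.

```python
from datetime import date

# Hypothetical sample rows: (ORDER_ID, Operation_No, last transfer date).
rows = [
    (1001, 10, date(2014, 5, 2)),
    (1001, 20, date(2014, 4, 28)),  # deleted upstream, so its date is stale
    (1002, 10, date(2014, 5, 1)),
]

# Latest transfer date per order.
latest = {}
for order_id, _operation_no, transferred in rows:
    latest[order_id] = max(transferred, latest.get(order_id, transferred))

# Keep all rows, but append a hypothetical IsCurrent flag (1 = current, 0 = stale).
flagged = [
    (order_id, operation_no, transferred, 1 if transferred == latest[order_id] else 0)
    for order_id, operation_no, transferred in rows
]

# Any later aggregation has to take the flag into account.
current_operations = sum(is_current for *_fields, is_current in flagged)
```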

Best Regards,

Peter

Yes, it's just once a day.

I just wanted to make sure that I understand it right.

Thank you very much.
