Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- Support

- :

- Support

- :

- Knowledge

- :

- Support Articles

- :

- Building automations for data loading

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

Building automations for data loading

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Building automations for data loading

Aug 26, 2021 10:11:23 AM

Mar 5, 2021 9:49:23 AM

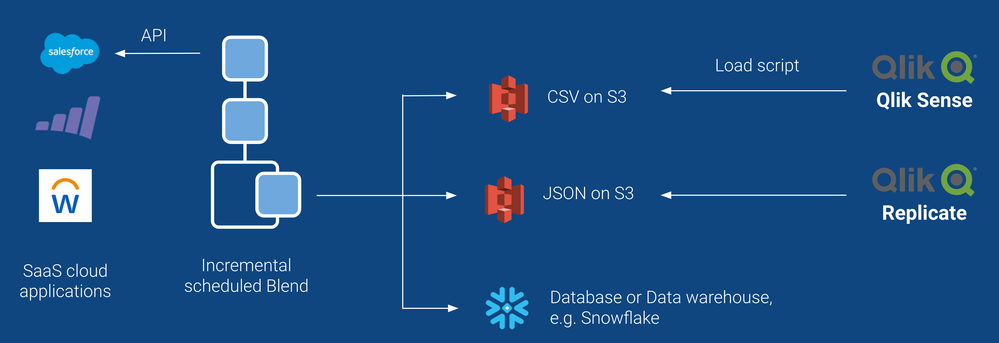

This article describes best practices for building automations in Qlik Application Automation, that load data from a source cloud application into data files on cloud storage. Example: writing data from Marketo or Salesforce into CSV files or JSON files on AWS S3.

The goal of these patterns is to implement automations that are part of an overall ETL (Extract, Transform, Load) or ELT (Extract, Load, Transform) flow. For example an automation could write data to S3 files, which are then loaded into Qlik Sense using a load script.

Qlik Application Automation is not an ETL or ELT tool

Before we dive in, please note that Qlik Application Automation is not an ETL or ELT tool.Qlik Application Automation is an iPaaS that uses APIs of cloud applications to read and write data. Automations process individual records/objects in loops, which is a different approach to batch-oriented CDC (change data capture) solutions such as Qlik Replicate.

That said, you can create an automation that reads data from a source (e.g. Marketo) and writes that data to e.g. CSV files on cloud storage, as we describe in the next paragraphs.

Handling the schema

Hardcoded schema with manual field mapping

The schema is typically hardcoded in the automation, by mapping the desired fields from the source to columns in the destination. This means that if a custom field is added in the source, the field mapping must be added in the automation so that the new column is added to the CSV files. If you are writing to e.g. Snowflake, you would have to add the column in the correct table manually.

Getting the schema from the source (meta data)

Some connectors have endpoints (blocks) to fetch meta data, e.g. "Describe object" in the Salesforce connector allows you to query the schema (standard fields and custom fields) of a given object in Salesforce. You can use this meta data to dynamically set the CSV columns in the automation.

Introspection of the data

Alternatively, you can also perform "introspection" on the data, e.g. by looking at one or more records and using the keys as columns. Note that if a certain key is missing in the record used for introspection, that column will be missing in your CSV file.

1. EXTRACT

1.1. Full data dump

The automation will read all records from the source and write these to a single CSV file.

On the next run, the file is removed and a new full dump file is created.

- Pro: easy to build, single file per object type

- Con: does not scale, e.g. if you have 1 million Accounts in a CRM and you use a daily schedule, you might hit API rate limits and/or the automation could become too slow.

1.2. Incremental data dump

The automation will read records from the source incrementally, and it will create a new CSV file on every run.

The first CSV file will typically contain a full dump of the data.

Subsequent files will contain all new and updated records since the previous run.

Learn more about incremental blocks

1.2.1 Only new data is added in the source (no updates)

Each record will only appear once in your set of CSV files. This makes loading of these CSV files in a final destinaion (e.g. a BI tool) straightforward.

1.2.2 Data is updated in the source (create + update)

An individual record may appear in multiple CSV files. E.g. let's assume John Doe was added to a CRM on Monday and his email was updated on Wednesday. John Doe will now appear twice in your CSV file set:

- CSV file Monday: John Doe, john@acme.com

- CSV file Tuesday:

- CSV file Wednesday: John Doe, john@newcompany.com

This requires attention when loading the CSV files into a final destination such as a BI tool. You want to make sure that John Doe appears only once in your dataset, and you want only the most recent record (from Wednesday) to survive. See below for an example load script in Qlik Sense that solves this issue.

1.2.3 Data is updated and deleted in the source (create + update + delete)

Records that are deleted in the source will simply "no longer show up" in the API. Most APIs do not have a way to query for deleted records. In order to make sure deleted records are also removed in the final destination (e.g. your BI dashboards), you can implement following pattern:

- Full sync on Sunday

- Incremental sync on Monday

- Incremental sync on Tuesday

- ...

- Incremental sync on Saturday

- Full sync on Sunday: delete all files, start from scratch by removing the pointer of the incremental block

This means you will always have a maximum of 7 CSV files per object type on your cloud storage.

1.3 Writing to CSV files

Create a new file and write a header CSV line with your columns.

Next, loop over your data, and use the CSV formula to convert each record (object) to a CSV line.

Learn more about the CSV formula

Pay attention to pitfalls when converting a JSON object to CSV:

- The order of keys in json can vary across records, while the columns are fixed in a CSV file

- Some keys may be missing across records, while missing columns in a line would corrupt your CSV file

The above pitfalls are solved by using the CSV formula with a fixed set of columns that is applied for each line that is written to the CSV file.

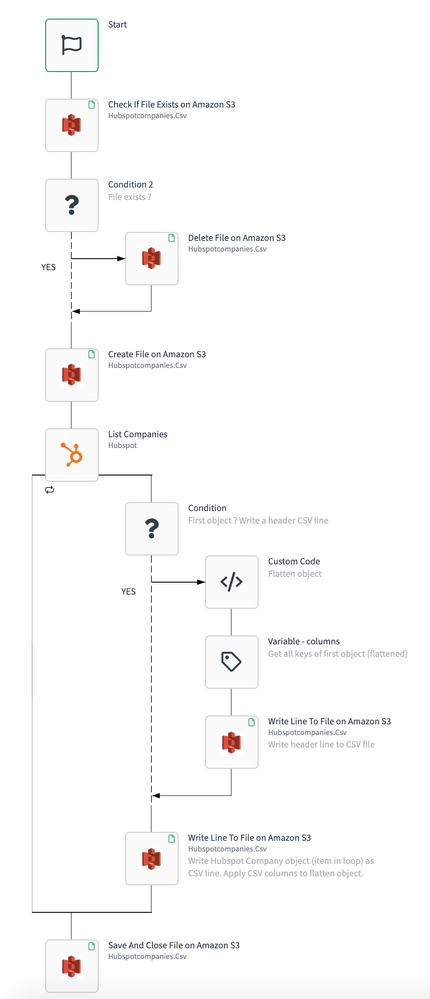

Example automation:

Notes on the above example automation:

- We use the following Condition to check if we are processing the first item (to write the CSV header line): { $.listCompanies.index } = 0

- We are using "introspection" on the first record to set the CSV columns. We flatten the object first with custom code. Coming soon: new formula "flatten". Then we use the formula "getkeys" to use the keys of the first flattened record as column names.

- We apply the CSV columns on each row that we write. This will map the keys of the actual object (Hubspot Company) to the CSV columns. For example, the column name "address.street" will map the nested property to the column with this name.

1.4 Writing to JSON files

Create a file on cloud storage with ".json" extension. Loop over your data and write individual objects to the file. Each object will automatically be converted to a JSON representation in the file.

Note: if your final destination is Qlik Sense, use CSV files instead (see above).

2. TRANSFORM

Transformations in automations are accomplished by using a combination of field mappings, formulas and variables. For example, you could transform an object from a source (e.g. an Account in a CRM) to a new object (e.g. a Customer) by using a variable of type "object" and by applying field mappings on individual fields and optionally using formulas.

Learn more about variables

Learn more about formulas

3. LOAD

The automations described here write the data to CSV or JSON files on cloud storage (e.g. AWS S3 or Dropbox or Google Cloud Storage). The goal however is to eventually load the data into a data warehouse, a data lake or a BI/visualisation tool such as Qlik Sense.

3.1 Writing to a database or data warehouse (e.g. Snowflake)

Instead of using CSV files, you could write directly to a database or even a data warehouse (MySQL or Snowflake), using one of the available connectors.

Note that an automation will write the data record by record using an "Insert" or "Update" block, and depending on the connector you could write in batches of e.g. 10 or 100 records to optimize performance.

As mentioned before, the Schema management is typically not handled in the automation. The automation assumes that the tables exist in the destination with all the required columns.

3.2 Loading data into Qlik Sense

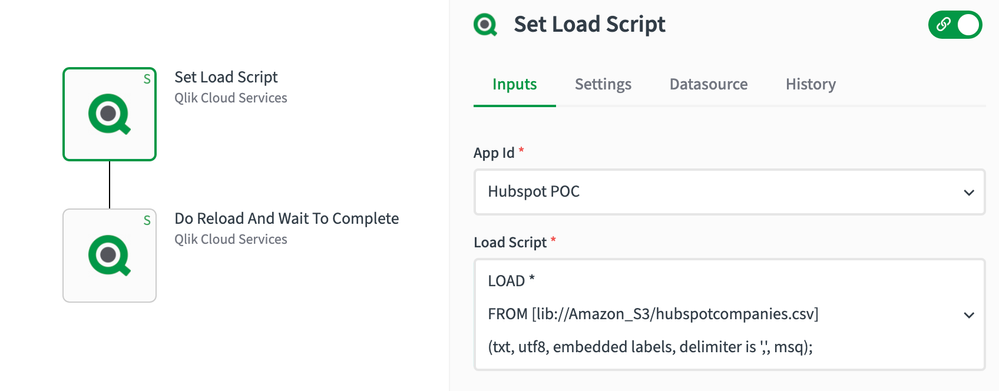

Once the data is written to CSV files on S3, you can load the data into Qlik Sense by setting up a datasource to your S3 bucket and using a load script.

3.2.1 Loading data into Qlik Sense using "full data dump" files

In this scenario you have one CSV file per table (object type) and you simple load these "full data dump" files into Qlik Sense.

Example load script in Qlik Sense:

LOAD *

FROM [lib://Amazon_S3/hubspotcompanies.csv]

(txt, utf8, embedded labels, delimiter is ',', msq);

We can use the QCS connector to set the load script from the automation and do a reload. Example:

3.2.2 Loading data into Qlik Sense using "incremental data dump" files

In case you have built an "incremental data dump" automation, you will have multiple CSV files per table. Example:

- CSV file Monday: initial dump of Account records

- CSV file Tuesday: incremental dump of Account records that were added or updated since Monday

- CSV file Wednesday: incremental dump of Account records that were added or updated since Tuesday

Etc.

As described in the above example with "John Doe", records may appear in multiple CSV files. The below load script will solve this issue in 3 steps:

- Load all CSV files one by one in a loop

- Order the data by most recent records first (we only want the most recent record to survive)

- Remove duplicates (so that only the most recent record survives)

Below is a load script example that loads "Leads" from a folder "Marketo" on S3.

Naming of each file: **Leads_*timestamp*.csv**

Example: Leads_2020-01-20.csv (daily schedule) or Leads_2020-01-20t14:50:00.csv (hourly schedule)

for each file in filelist('lib://Amazon_S3/Marketo/')

If Index('$(file)','csv') > 0 And Index('$(file)','leads_') > 0 Then

Leads_from_all_csv_files:

Load *,

Num(Textbetween(Filename(), '_', '.csv')) As FileTimestampNum

From [$(file)]

(txt, utf8, embedded labels, delimiter is ',');

End If

Next file

Leads_most_recent_first:

NoConcatenate

Load *

Resident Leads_from_all_csv_files

Order By FileTimestampNum Desc;

Leads:

Load Distinct

id As id_temp, *

Resident Leads_most_recent_first

Where Not exists(id_temp, id);

Drop Table Leads_from_all_csv_files, Leads_most_recent_first;

You will have to extend this load script for each object type (or table), e.g. one section for Accounts, one section for Leads and one section for Contacts. Each section will be a copy of the above with "leads" replaced with e.g. "accounts".

Here's another load script example, where the data is stored in a QVD, and on each reload only one new CSV file is processed. The CSV file can be deleted by the automation, once the load script has executed:

//load all data from QVD (if file already exists)

IF (FileSize('lib://DataFiles/Full_Data.qvd')>0) THEN

Full_Data:

LOAD

*

FROM [lib://DataFiles/Full_Data.qvd] (qvd);

END IF;

//append new data from one CSV file (contains new & updated records)

//add column with row number, needed in sorting, see below

Full_Data:

LOAD

*,

rowno() as rowno

FROM [lib://DataFiles/new_data.csv]

(txt, codepage is 28591, embedded labels, delimiter is ',', msq);

//sort by newest records first, needed to dedupe and keep only most recent version of a record

Full_Data_Sorted:

NoConcatenate

Load *

Resident Full_Data

Order By rowno Desc;

//remove duplicate records, this will keep most recent version of each record

Full_Data_Deduped:

Load Distinct

name As name_temp, *

Resident Full_Data_Sorted

Where Not exists(name_temp, name);

Drop field name_temp;

Drop Table Full_Data_Sorted, Full_Data;

//Store new dataset in QVD

Store Full_Data_Deduped into lib://DataFiles/Full_Data.qvd (qvd);