Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- Forums

- :

- Data Integration

- :

- Products & Topics

- :

- Qlik Replicate

- :

- Parallel Load - Not All Segments Used

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Parallel Load - Not All Segments Used

We use he parallel load feature of Qlik Replicate for our larger tables.

Our source is DB2 for LUW and target is MS SQL.

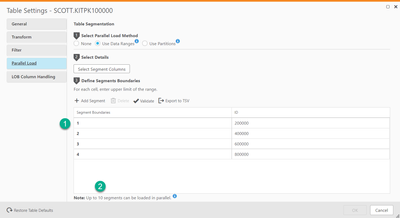

I created a new task with only one table and defined 4 segments (specified 3 data range values) in Parallel Load config area.

The table started loading but I could see on the SQL server side that it was only using 3 processes and not the expected 4.

Is there anyway to make it use/allocate all 4 processes ?

(I'd like to create 10 processes for this very large table - as it takes about 30 hours even with 4 segments.)

Thanks,

Martin

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Martin11 ,

You can increase or decrease the number of segments that will be loaded in parallel. For example, if you selected the Use all table partitions option and the source table has 20 partitions, increasing the default number of concurrent tasks (5) may improve performance.

For example: If you select a table with 6 partitions and load the table using the Use Partitions method, 5 partitions will be loaded in parallel, corresponding with the default number of concurrent tasks (5). When one of the sub-tasks completes its run, it will be assigned to loading the sixth partition.

The currently set value is displayed at the bottom of the Parallel Load tab. You can modify this value in the Maximum number of tables to load in parallel field in the Full Load Tuning tab.

Let us know in case if you need additional information.

Regards,

Sachin B

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello Sachin,

My source table does not have partitions.

The number of concurrent tasks is set to 10.

I've used Data Ranges to define 4 parallel segments for this table.

My question is: WHY DOES THE RELOAD OF THIS TABLE (which is the only table in the task) NOT ALWAYS USE ALL 4 DEFINED SEGMENTS/PROCESSES?

This is a very large table (630,000,000 rows); we are currently in the midst of the 2nd reload attempt.

The first reload attempt only used 3 threads - which I could see by viewing active processes on the target SQL server.

The first load attempt restarted after about 30 hours; no error message was given.

The 2nd reload attempt which is going now has been using all 4 threads / processes for parallel load.

Other than obvious restriction of number of concurrent processes, WHY does Replicate not always use all defined parallel load segments ?

Thanks,

Martin

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Occam's razor suggests that in fact it did start 4 threads but one failed or thought it was done.

Carefully study the reptask log file for started/completed and rows transferred messages.

Hint... once you start recognizing good per-thread search words, use 'find all' (Notepad++) or similar to see all 4 (or 3 🙂 and also do some find all using the load/unload thread number which you'll find as the first number on each log line before the timestamp. Using that you'll get a good picture of the steps to expect.

Maybe one of the 4 filters was incorrect or selected a much smaller dataset and it is simply done?

Hein.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hello @Martin11 , copy @Heinvandenheuvel , @SachinB ,

I'm not very sure how you monitor the processes in SQL Server side, however in my labs test, I can see the maximum 5 processes (SPID=67/74/76/78/80) running concurrently (total 4 segments defined in table setting; 5 different unload SQL are running), see the script and screen copy below.

BTW, some factors may impact the max processes, eg the max connections allowed, or other resource limitation etc. You may set SOURCE_UNLOAD to Trace and study the task log file to see if some clues there.

SELECT

p.spid, p.status, p.hostname, p.loginame, p.cpu, r.start_time, r.command,

p.program_name, text

FROM

sys.dm_exec_requests AS r,

master.dbo.sysprocesses AS p

CROSS APPLY sys.dm_exec_sql_text(p.sql_handle)

WHERE

p.status NOT IN ('sleeping', 'background')

AND r.session_id = p.spid Hope this helps.

Regards,

John.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Thanks all, I'll try these out.