Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- QlikView App Dev

- :

- Re: How To Remove Duplicates and Count Rows?

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

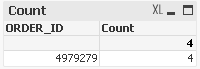

How To Remove Duplicates and Count Rows?

Hello:

I have a dataset that has duplicate entries in the data for LineItemId and LineItemFactId.

As always, any and all help is appreciated. Thanks in advance.

Accepted Solutions

You can load only the distinct combinations of those fields, add a flag of 1 to each row, and then sum the flag, like:

Load distinct Order_id,
    ParentLineItemId,
    LineItemId,
    1 as lineitemidcount
where not isnull(ParentLineItemId)

Then use sum(lineitemidcount) to get the count.
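As a rough, self-contained sketch of that approach: the inline sample rows and the table names Orders and LineItems below are made up for illustration, since the post does not show the source table. The sketch also swaps the IsNull() test for a length check, which is an assumption that suits inline data.

```qlik
// Hypothetical sample data; the rows and table names are illustrative only.
Orders:
LOAD * INLINE [
Order_id, ParentLineItemId, LineItemId
1, 10, 100
1, 10, 100
1, 10, 101
2, , 200
];

// Keep one row per unique combination and flag each surviving row with 1.
// Empty inline cells load as empty strings rather than nulls, so this sketch
// tests the length instead of using IsNull() as in the post above.
LineItems:
NoConcatenate
LOAD DISTINCT
    Order_id,
    ParentLineItemId,
    LineItemId,
    1 AS lineitemidcount
RESIDENT Orders
WHERE Len(Trim(ParentLineItemId)) > 0;

// Drop the raw table so the duplicates (and a synthetic key) do not remain.
DROP TABLE Orders;
```

With these sample rows, the exact duplicate and the row without a parent should both be dropped, so Sum(lineitemidcount) would be expected to come to 2.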

Hi Perry,

I hope the solution below helps solve your issue:

Count({<ParentLineItemId -= {'-'}>} DISTINCT LineItemId)

Thanks & Regards,

Neelima
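A commonly used alternative for limiting a count to rows where a field has a value is the {"*"} search in set analysis. This is only a sketch using the field names from this thread, not a tested replacement for the expression above:

```qlik
// Count each LineItemId once, considering only rows where
// ParentLineItemId has a value (the "*" search matches any non-null value).
Count({<ParentLineItemId = {"*"}>} DISTINCT LineItemId)
```

Either expression only changes what the chart counts; it does not remove duplicate rows from the loaded data.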

Thank you for the reply, Neelima, and sorry for the delay in responding. Your solution will definitely give me the count, but it does not remove the duplicates. As it turns out, the duplicate problem was due to issues with the data itself. I was verifying that, which is why it took me a while to respond.

Hello Ravi:

Sorry for the delay in replying. As it turns out, there's a problem with the data itself that's causing the duplicates. Your solution did remove some of the duplicate lines (where ParentLineItemId is null), and I suspect that if not for the data issues, it would remove the others as well. Thanks for your reply; I'll be marking your answer as the correct answer. Thanks again.