
Qlik Catalog Release Notes - November 2021 Initial Release to Service Release 3


Table of Contents

- What's new in Qlik Catalog November 2021 Service Release 3

- Noteworthy Enhancements with the November 2021 Initial Release (details later)

- Noteworthy Newly Resolved Issues with this Release (details later)

- Schema Change Detection for JDBC Addressed and Registered Entities

- Resolved Defects

- November 2021 SR3 (4.12.3)

- Fix Google BigQuery Loads Concurrency Issue

- November 2021 SR2 (4.12.2)

- Address log4j2 Zero-Day Exploits, CVE-2021-45046 and CVE-2021-45105

- November 2021 SR1 (4.12.1)

- Address log4j2 Zero-Day Exploit, CVE-2021-44228

- Publish to Qlik Sense User Validation Support


- Publishing to QVD Format When Base Directory Contains Symlink

- Non-ASCII Foreign Characters no Longer Escaped in Data Distribution

- Extended Support for Fields in Prepare Dataflows That Are Also Pig Reserved Words

- API Change to Correctly Filter Sources Listed in Sense Embedded "QVD Catalog"

- November 2021 Initial Release (4.12)

- JDBC Drivers Now Fully Isolated from Catalog Libraries

- Fix for Load Failures When Parquet Source Data Contains Ugly Records

- Fix for Inconsistent Record Counts If Ugly Data Present in Prepare Dataflow

- Append Load Option No Longer Available for Registered Entities

- Fixed Source Search Dialogs

- Fixed Failure when AD User Checks for Permission to Promote Entity

- Open Issues

- Issue Creating Linux Services that Require Podman on RHEL 8 and Ubuntu

- Upgrade notes

- Migrating to or Upgrading Tomcat 9

- Process if Upgrading From June 2020 or Earlier

- Downloads

- Qlik Catalog November 2021 SR3 - Application

- Qlik Catalog November 2021 SR3 - Installer

The following release notes cover the versions of Qlik Catalog released in November 2021.

What's new in Qlik Catalog November 2021 Service Release 3

Noteworthy Enhancements with the November 2021 Initial Release (details later)

- Schema Change Detection for JDBC Addressed and Registered Entities

- AWS EMR 6.4.0 Support

Noteworthy Newly Resolved Issues with this Release (details later)

- QDCB-1160 - Fix Google BigQuery Loads Concurrency Issue

Schema Change Detection for JDBC Addressed and Registered Entities

Schema change detection has always been supported for addressed entities sourced from JDBC (relational) connections. However, business metadata such as business name and description was not preserved. As of the November 2021 release, business metadata is preserved.

As of the November 2021 release, schema change detection is supported for registered entities sourced from JDBC (relational) connections. New installations will have this feature automatically enabled. Upgraded installations will require the addition of a new property in file core_env.properties:

    # If true, for ADDRESSED and REGISTERED entities loaded from JDBC relational sources, column additions
    # and deletions will be detected and applied. If true, business metadata and tags will be preserved
    # and not overwritten. Set to false for legacy behavior. Default: false (default to false so it is
    # disabled for legacy customers that upgrade)
    support.schema.change.detection=true

Set the above property to false to restore legacy behavior.
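For upgraded installations, the property can be added by hand or with a small helper. The following is a minimal sketch, not a Qlik-provided tool: the function name `ensure_property` is hypothetical, and it assumes core_env.properties is a plain key=value file. It appends the property only when no uncommented line for that key already exists, so an existing setting (for example, an explicit false) is left untouched.

```shell
# ensure_property FILE KEY VALUE   (hypothetical helper, for illustration only)
# Prints "unchanged" if an uncommented KEY= line already exists,
# otherwise appends KEY=VALUE to FILE and prints "added".
ensure_property() {
  props_file="$1"
  key="$2"
  value="$3"
  # Escape the dots in the key so they match literally in the regex.
  key_regex="$(printf '%s' "$key" | sed 's/\./\\./g')"
  if grep -q "^[[:space:]]*${key_regex}[[:space:]]*=" "$props_file"; then
    echo "unchanged"
  else
    # Add a newline first if the file does not already end with one.
    if [ -n "$(tail -c 1 "$props_file")" ]; then
      printf '\n' >> "$props_file"
    fi
    printf '%s=%s\n' "$key" "$value" >> "$props_file"
    echo "added"
  fi
}
```

For example, assuming core_env.properties is in the Tomcat conf directory, `ensure_property "$TOMCAT_HOME/conf/core_env.properties" support.schema.change.detection true` prints either `added` or `unchanged`.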

If this feature is enabled, all entity and field properties will survive reload, except entity properties "src.file.glob" and "header.validation.pattern", which must be updated to reflect column additions and deletions. This applies to both external and internal entities and fields.

All field data will survive reload, except for the following elements (as seen in the UI) which are directly derived from the JDBC column: Data Type, Index, Required (NOT NULL), Primary Key and Foreign Key.

The Load Log history of all prior loads will be kept. However, the sample data for prior loads is deleted, as are distribution database table references to the prior loads (e.g., from the Discover Query window). Note also that when initially defining an entity, if not all columns are selected to become fields, the unselected columns are automatically detected on first load. This feature ensures the full set of columns is always captured as fields.

AWS EMR 6.4.0

The multi-node version of Catalog may now be deployed on proper edge nodes of EMR 6.4.0 Hadoop cluster environments. Please see the multi-node installation guide for instructions on building such a node.

Resolved Defects

November 2021 SR3 (4.12.3)

Fix Google BigQuery Loads Concurrency Issue

Jira ID: QDCB-1160

When multiple simultaneous Google BigQuery JDBC loads were run, loads would sometimes fail. A message like the following was logged: FileNotFoundException: <OAuthPvtKeyPath from JDBC URI> (No such file or directory).

This issue was fixed by making the management of the private key file thread-safe.

November 2021 SR2 (4.12.2)

Address log4j2 Zero-Day Exploits, CVE-2021-45046 and CVE-2021-45105

Jira ID: QDC-1285

Single-node Catalog has been upgraded to use log4j2 version 2.17.0.
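To confirm which log4j2 version a deployment actually carries after applying a service release, the web application's library directory can be inspected. This is a sketch under an assumption: that log4j2 is shipped as a file named log4j-core-<version>.jar in WEB-INF/lib. The release notes do not describe the jar layout, and the function name `log4j_core_versions` is made up for illustration.

```shell
# log4j_core_versions LIB_DIR   (hypothetical helper)
# Prints the version part of every log4j-core-<version>.jar found under LIB_DIR,
# one per line. Assumes the standard log4j-core-<version>.jar file naming.
log4j_core_versions() {
  lib_dir="$1"
  find "$lib_dir" -name 'log4j-core-*.jar' -exec basename {} \; |
    sed -e 's/^log4j-core-//' -e 's/\.jar$//' |
    sort
}
```

A possible invocation is `log4j_core_versions "$TOMCAT_HOME/webapps/qdc/WEB-INF/lib"`. More than one line of output would suggest that an older jar is still present next to the upgraded one.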

November 2021 SR1 (4.12.1)

Address log4j2 Zero-Day Exploit, CVE-2021-44228

Jira ID: QDC-1285

Single-node Catalog has been upgraded to use log4j2 version 2.15.0 rather than version 2.13.2.

Publish to Qlik Sense User Validation Support

Jira ID: QDCB-1007

When "Publish to Qlik Sense" is initiated, Catalog authenticates itself to Qlik Sense using the certificates copied from the Sense server at setup (e.g., those in /usr/local/qdc/qlikpublish/certs). However, the user who is impersonated is the logged-in Catalog user (or the user specified in core_env property "podium.qlik.username"). It is assumed that both Catalog and Sense have been configured to use the same Active Directory (AD) users.

However, until this release, if a local Catalog user (that did not exist in Sense) was used for Publish to Qlik Sense, a user record would inadvertently be created in Sense, even if the user did not exist in AD.

This release now validates that the Catalog user has previously logged into Sense and exists there. Publish to Qlik Sense will not succeed if the user is unknown to Sense. For this validation to occur, the following core_env properties must be set:

    # Enter the directory and user name of a Sense 'RootAdmin' user.
    # Used to validate that the domain user being used for Publish to Qlik Sense has previously logged into the
    # Sense server. This prevents users known only to Catalog being inadvertently created in Sense.
    # Mandatory if multiple directories were specified in property 'qlik.sense.active.directory.name'.
    qlik.sense.root.admin.directory.name=AD
    qlik.sense.root.admin.user.name=sense-service

If these properties are not set and Publish to Qlik Sense occurs, a WARN message is logged, but the publish does proceed:

2021-11-24 11:07:46,341 WARN Cannot validate that user AD/xxx exists on Sense server sense2.ad.podiumdata.net. A user record will be created if it does not exist, this may be undesired. Please set core_env properties qlik.sense.root.admin.directory.name and qlik.sense.root.admin.user.name [QSRestClientSvcImpl[https-jsse-nio-8443-exec-6]]
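Because the publish still succeeds when the properties are missing, it can be useful to check the configuration up front rather than wait for the WARN message. The following is a minimal sketch with hypothetical function names (`has_property`, `sense_user_validation_configured`). It assumes a plain key=value properties file and only checks that both keys have a non-empty, uncommented value.

```shell
# has_property FILE KEY   (hypothetical helper)
# Succeeds if FILE contains an uncommented KEY= line with a non-empty value.
has_property() {
  key_regex="$(printf '%s' "$2" | sed 's/\./\\./g')"
  grep -Eq "^[[:space:]]*${key_regex}[[:space:]]*=[[:space:]]*[^[:space:]]" "$1"
}

# sense_user_validation_configured FILE   (hypothetical helper)
# Succeeds only if both RootAdmin properties named in these notes are set.
sense_user_validation_configured() {
  has_property "$1" qlik.sense.root.admin.directory.name &&
    has_property "$1" qlik.sense.root.admin.user.name
}
```

A script could then run `sense_user_validation_configured "$TOMCAT_HOME/conf/core_env.properties" || echo "Sense user validation is not configured"`.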

Publishing to QVD Format When Base Directory Contains Symlink

Jira ID: QDCB-1088

When publishing to QVD format, the publish job would fail with "code: 4, parameter: 'Invalid path'" if the Catalog base directory path contained a symlink. For example, if the "data" element in the path "/usr/local/qdc/data" was a symlink referencing "/app_data", then the above error was seen. The engine container cannot process paths on the host OS that contain symlinks.

This issue has been addressed by the use of the "readlink" command line utility. It is used to normalize the path prior to passing it to the engine container. Using the above example, instead of passing a path parameter of "/usr/local/qdc/data", a path of "/app_data" is passed to the engine container.
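The normalization can be reproduced on the host to see what path the engine container would receive. This is a sketch only: the release notes name the readlink utility but not its options, so the `-f` (canonicalize) flag used here is an assumption, as is the function name `normalize_path`.

```shell
# normalize_path PATH   (hypothetical helper)
# Prints PATH with every symlink resolved, using readlink's canonicalize mode.
# The -f flag is an assumption; the release notes only say "readlink" is used.
normalize_path() {
  readlink -f "$1"
}
```

With the example above, `normalize_path /usr/local/qdc/data` would print `/app_data` when "data" is a symlink to that directory.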

Non-ASCII Foreign Characters no Longer Escaped in Data Distribution

Jira ID: QDCB-1101

When viewing a Field's Data Distribution (aka profile) in the Discover module, non-ASCII foreign characters were displayed using their Unicode escape sequences. For example, "São Miguel" was displayed as "S\u00E3o Miguel". This same issue was seen when viewing Load Log details for ugly data.

This issue has been addressed. Only control characters are now escaped in these situations. Non-ASCII foreign characters are no longer escaped.

Extended Support for Fields in Prepare Dataflows That Are Also Pig Reserved Words

Jira ID: QDCB-1107

Prepare Dataflows use Apache Pig and its dataflow language Pig Latin. Pig Latin has reserved words such as LOAD, STORE and REGISTER. Catalog applies special logic to Entity Fields, used in Prepare Dataflows, that are named the same as Pig Latin reserved words (e.g., a relational table has a "LOAD" column, which becomes an Entity Field in Catalog). This special logic is applied to a subset of the Pig Latin language.

With this release, the "REGISTER" reserved word is now included in the special handling, and Fields named "REGISTER" can be used in Prepare Dataflows.

This release also introduces the ability to register additional reserved words for special handling. A core_env property is used:

    # Entity fields used in Prepare Dataflows may also be Pig reserved words (e.g., STORE). Frequently used reserved words
    # are correctly handled if they are field names. This property may be used to augment the set of known reserved words
    # with unanticipated words. Words must be comma separated. Default: not used
    #pig.reserved.words.additional=register,CASE

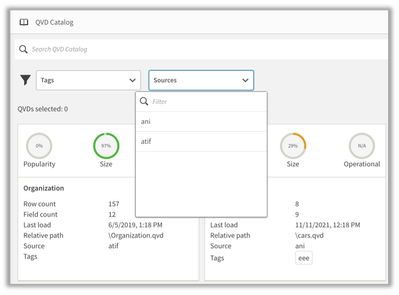

API Change to Correctly Filter Sources Listed in Sense Embedded "QVD Catalog"

Jira ID: QDC-1253

In Qlik Sense, data may be added to an app by using "QVD Catalog", a simplified, embedded version of Catalog which runs directly in Sense. It displays QVDs that have been discovered and loaded by Catalog, filtered to only display the QVDs to which the Sense user has access. However, there is a "Sources" drop-down filter which incorrectly listed all Catalog sources instead of only those to which the user has access. This issue has been addressed by a Catalog API functionality change. The list of Sources is now correctly filtered.

November 2021 Initial Release (4.12)

JDBC Drivers Now Fully Isolated from Catalog Libraries

Jira ID: QDCB-752, QDCB-1078

The following description is specific to Google BigQuery. However, it is applicable to all JDBC drivers used by Qlik Catalog to load data from relational database Sources.

The Google BigQuery JDBC driver consists of 50+ library jar files. Catalog (single-node) consists of 300+ library jar files. They each include different versions of the same libraries (e.g., guava, http-client, gson, etc.). Incompatibilities result, such as driver hangs, and "class not found" or "method not found" errors. These are collectively known as "classpath issues". This fix fully isolates the JDBC drivers used by Catalog, including the Google BigQuery driver, into their own class loader(s). The Google BigQuery JDBC driver is now isolated from the Catalog libraries, thereby ensuring only the correct libraries are loaded and run.

Here is a reminder on deploying JDBC drivers:

Any JDBC driver for your licensed RDBMS should be placed in the directory called out by the following core_env property. This directory is preferred over placing drivers in $TOMCAT_HOME/webapps/qdc/WEB-INF/lib, where they will be overwritten on upgrade and where they may interfere with other libraries.

    # An alternate directory to WEB-INF/lib for JDBC driver jars.
    # May also be set directly, for a given driver, on table
    # podium_core.pd_jdbc_source_info, column alt_classpath.
    # Restart required. Default: not set
    jdbc.alternate.classpath.dir=/usr/local/qdc/jdbcDrivers

If a JDBC driver is particularly complicated and consists of multiple jars (e.g., the Simba Google BigQuery driver has 50+ jars), it can be further isolated into its own sub-directory. If you do this, you must run a SQL statement as follows (default password is “nvs2014!”; update path and name):

    psql podium_md -U podium_md -c "update podium_core.pd_jdbc_source_info set alt_classpath = '/usr/local/qdc/jdbcDrivers/simbaBigQuery' where sname = 'BIGQUERY';"
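Moving a multi-jar driver into its own sub-directory can be scripted before the SQL statement is run. The following is a minimal sketch: the function name `stage_driver_jars` is hypothetical, and it assumes the driver jars have already been unpacked into a single source directory. It copies only *.jar files and prints how many were copied.

```shell
# stage_driver_jars SRC_DIR DEST_DIR   (hypothetical helper)
# Copies every *.jar directly under SRC_DIR into DEST_DIR (created if needed)
# and prints the number of jars copied.
stage_driver_jars() {
  src_dir="$1"
  dest_dir="$2"
  mkdir -p "$dest_dir" || return 1
  count=0
  for jar in "$src_dir"/*.jar; do
    [ -f "$jar" ] || continue   # skip the unexpanded pattern when no jar matches
    cp "$jar" "$dest_dir"/ || return 1
    count=$((count + 1))
  done
  echo "$count"
}
```

For instance, `stage_driver_jars ./simba-unpacked /usr/local/qdc/jdbcDrivers/simbaBigQuery` (the source directory name is illustrative) would populate the sub-directory that the alt_classpath value points to.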

Fix for Load Failures When Parquet Source Data Contains Ugly Records

Jira ID: QDCB-1072

Data loads were failing if Parquet format source data files contained characters that caused a record to be declared ugly. For example, by default, the presence of non-ASCII characters (e.g., Chinese characters) will cause a record to be marked as ugly.

The error occurred when the code attempted to calculate the absolute offset of the "ugly" character(s). Because Parquet uses columnar storage, this offset could not be calculated, and a NullPointerException occurred.

This issue has been fixed. For Parquet sources, the record offset is now represented as -1.

A workaround for some data was to add Source (or Entity) properties "default.field.allow.non.ascii.chars" and "default.field.allow.control.chars", and set them to true. This prevented the records from being marked as ugly and avoided the issue.

Fix for Inconsistent Record Counts If Ugly Data Present in Prepare Dataflow

Jira ID: QDCB-1065

If load-controlling properties (e.g., "default.field.allow.non.ascii.chars") are not appropriately set on Discover target entities, ugly data may be detected during the "Profiling and Sampling" phase when a Prepare Dataflow is executed. In this case, the resulting Total, Good and Ugly record counts were inconsistent. This issue has been addressed.

Generally, whichever properties were previously added to the input entities in the Source module to enable "good" processing must also be added to the target entities in the Discover module.

Note that it may be difficult to discern why records are ugly, as there is no Load Log for the "Profiling and Sampling" phase of a Prepare Dataflow. However, the ugly records are present on disk, in a directory like the following: <base directory>/receiving/<source>/<entity>/<timestamp>/ugly.
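Since there is no Load Log to consult, locating the ugly directories on disk is the practical starting point. The sketch below assumes exactly the directory layout quoted above (receiving/<source>/<entity>/<timestamp>/ugly) and uses a hypothetical function name, `list_ugly_dirs`. The `-mindepth` and `-maxdepth` options are find extensions available in GNU and BSD find rather than strict POSIX.

```shell
# list_ugly_dirs BASE_DIR   (hypothetical helper)
# Prints every <BASE_DIR>/receiving/<source>/<entity>/<timestamp>/ugly directory,
# sorted by path.
list_ugly_dirs() {
  base_dir="$1"
  find "$base_dir/receiving" -mindepth 4 -maxdepth 4 -type d -name ugly | sort
}
```

The output can be narrowed to one entity with grep, and the files inside each directory can then be inspected to see which characters caused the records to be rejected.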

Append Load Option No Longer Available for Registered Entities

Jira ID: QDCB-1069

The Append load option is no longer available for Registered Entities. Loads for Registered Entities only maintain representative sample and profile data. The underlying data is not retained. Each load is representative of a point-in-time, to which data cannot be appended.

Fixed Source Search Dialogs

Jira ID: QDCB-1082

Three Source search dialogs were broken -- an error similar to the following was generated: "pgui.error.code.DYNAMIC_ERROR - No handler found for GET /podium-trunk/source/internal/<id>/<search text>".

The following dialogs now function correctly:

- Security module, Source search on Group modification wizard

- Prepare module, Source search on Add Source wizard

- Explore module, Source search on Add Source wizard

Fixed Failure when AD User Checks for Permission to Promote Entity

Jira ID: QDCB-1089

When Entities need to be promoted, Catalog looks up the user and checks if the user is allowed to promote Entities. However, the lookup was performed using the user's simple name (e.g., jdoe) and not the login name (e.g., jdoe@ad.podiumdata.net). Therefore, when Active Directory (AD) users were logged in, the lookup failed and a NullPointerException resulted. Three scenarios have been fixed:

- Prepare Dataflow validation of registered Entity

- Prepare Dataflow execution of registered Entity, requiring promotion

- Publish of registered Entity, requiring promotion

Open Issues

Issue Creating Linux Services that Require Podman on RHEL 8 and Ubuntu

Jira ID: QDCB-1092

On RHEL 8 and Ubuntu, Qlik Catalog requires podman in order to run two pairs of containers: (1) a licenses service and engine pair; and (2) a DCaaS and connector pair for NextGen XML. There are launch and shutdown shell scripts for each pair (see installation guide). The startup of these containers may be automated using systemd services.

Issue: the service files (i.e., /etc/systemd/system/qlikContainers.service and /etc/systemd/system/nextgen-xml.service) currently assume Docker, rather than podman, is used. The references to "docker.service" must be removed. Edit each service file: (1) remove line "Requires=docker.service"; and (2) remove "docker.service" from the line beginning "After=".
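The two manual edits can also be applied with sed. This is a sketch, not a supported script: the function name `strip_docker_dependency` is hypothetical, and the exact contents of the shipped service files are not shown in these notes, so the patterns assume a line that reads exactly "Requires=docker.service" and an "After=" line that lists docker.service among its units. Back up each file before editing.

```shell
# strip_docker_dependency UNIT_FILE   (hypothetical helper)
# 1. Deletes a line consisting solely of "Requires=docker.service".
# 2. Removes "docker.service" from the line beginning "After=" and trims
#    any trailing whitespace left behind on that line.
strip_docker_dependency() {
  unit_file="$1"
  tmp_file="$(mktemp)" || return 1
  sed -e '/^Requires=docker\.service[[:space:]]*$/d' \
      -e '/^After=/s/docker\.service[[:space:]]*//' \
      -e '/^After=/s/[[:space:]]*$//' \
      "$unit_file" > "$tmp_file" &&
    cat "$tmp_file" > "$unit_file"   # overwrite in place, keeping file ownership and mode
  status=$?
  rm -f "$tmp_file"
  return "$status"
}
```

It would be run as root against each file, for example `strip_docker_dependency /etc/systemd/system/qlikContainers.service`, followed by `systemctl daemon-reload` so that systemd picks up the changed unit files.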

Upgrade notes

Migrating to or Upgrading Tomcat 9

Beginning with the May 2021 release, only Apache Tomcat 9 is supported. The installer will prohibit other versions. If using Tomcat 7, first migrate to Tomcat 9 before installing this release. Then, when installing, do NOT use the upgrade option (-u).

These instructions may also be used to upgrade from an older version of Tomcat 9 to a newer version.

| Step | Sample Commands |
| --- | --- |
| Shutdown and rename old Tomcat 7 or 9 | `cd /usr/local/qdc` (or `cd /usr/local/podium`)<br>`./apache-tomcat-<OLD_VERSION>/bin/shutdown.sh`<br>`mv apache-tomcat-<OLD_VERSION> old-apache-tomcat` |
| Download and expand Tomcat 9 - NOTE: adjust version 9.0.54 to use latest 9.0.x series | `wget https://archive.apache.org/dist/tomcat/tomcat-9/v9.0.54/bin/apache-tomcat-9.0.54.tar.gz`<br>`tar -xf apache-tomcat-9.0.54.tar.gz`<br>`rm apache-tomcat-9.0.54.tar.gz` |
| Copy core_env.properties from old Tomcat to new Tomcat 9 | `cp old-apache-tomcat/conf/core_env.properties apache-tomcat-9.0.54/conf/` |
| If migrating from Tomcat 7: Extract server.xml from podium.zip and copy to new Tomcat | `unzip -j podium-4.10-<BUILD>.zip podium/config/tomcat9-server.xml -d .`<br>`mv ./tomcat9-server.xml apache-tomcat-9.0.54/conf/server.xml` |
| If upgrading Tomcat 9: Copy server.xml from old Tomcat 9 to new Tomcat 9 | `cp old-apache-tomcat/conf/server.xml apache-tomcat-9.0.54/conf/`<br>If the old Tomcat 9 was configured for HTTPS, and the keystore (jks file) was stored in the old Tomcat directory, migrate it to the new Tomcat directory, and update conf/server.xml to reference it. Consider placing the keystore file in a non-Tomcat directory such as /usr/local/qdc/keystore. |
| Configure QDCinstaller.properties for Tomcat 9 | Whether using an existing QDCinstaller.properties file from a previous install, or configuring one for the first time, ensure that it is updated to point to Tomcat 9:<br>`TOMCAT_HOME=/usr/local/podium/apache-tomcat-9.0.54` |
| Finally, when the installer is run, do NOT specify upgrade mode (-u), as some files should be created as if it were a first-time install. | `./QDCinstaller.sh` |

At this point, Tomcat 9, if newly installed, will support only HTTP on port 8080.

Verify successful Qlik Catalog startup and basic functionality.

Additional configuration will be required to configure HTTPS on port 8443, apply security headers, etc. If Tomcat 7 used HTTPS, the keystore (jks file) containing the public-private keypair should be copied to Tomcat 9 and conf/server.xml updated.

In addition, Tomcat 7 may have been configured as a service. It should be disabled. Tomcat 9 may be configured as a service to automatically start.

Please see the install guide for guidance on both of these.

Process if Upgrading From June 2020 or Earlier

Do not attempt to upgrade until the following is understood.

If upgrading from a version of Qlik Catalog prior to September 2020 (4.7) there are utilities that MUST be run after Catalog is upgraded. Once run, the utilities need never be run again.

The server may not start until the first two utilities have been run and will log a WARN at startup until the third is run. Do NOT upgrade the server until familiar with these utilities and the information required to run them. It will take time to gather this information. Gathering the information BEFORE Catalog is upgraded will minimize downtime.

Run the utilities in this order:

- jwt2CertsUtility -- please review readme.txt
  - This will be required if Qlik Sense Connectors have been defined in order to load QVDs.
  - Will need to gather networking info and certificate files from Qlik Sense servers.
  - May be run from any directory.

- singleNodeUpgradeForEntitiesWithBadOrUglyData.sh -- please review comment in script
  - This will be required only if the installation is single-node.
  - Will need podium_dist database info if defaults altered.
  - May be run from any directory.

- singleNodeUpgradeToGrantReadOnlyUserAccessToDistSchemas.sh -- please review comment in script
  - This will be required only if the installation is single-node.
  - Will need podium_dist database info if defaults altered.
  - May be run from any directory.

Downloads

Qlik Catalog November 2021 SR3 - Application

Qlik Catalog November 2021 SR3 - Installer
