Store new rows into QVD without loading QVD? (Qlik Sense)

Hi,

I am wondering whether, in Qlik Sense, there is a way to store new rows into a QVD without needing to load the QVD.

For example:

- I am doing an incremental load based on a date, and there are 100 new rows of data.

- The QVD that I need to store those 100 new rows into contains over 60 million rows.

Is there a way to store those 100 new rows into the QVD with 60 million rows without having to load the 60 million rows?

Current script:

```qlik
Incremental_Table:
NoConcatenate
Load
    *
Resident Table1
Where IsNull(deletedDateTime) // Only loading rows that do not have a deleted timestamp
;

// Table names are case-sensitive, so this must match "Table1" exactly
Drop Table Table1;

// QVD with over 60 million rows
Concatenate (Incremental_Table)
LOAD
    *
FROM [lib://QlikData/Fact.qvd] (qvd);

Store Incremental_Table into [lib://QlikData/Fact.qvd] (qvd);

Drop Table Incremental_Table;
```

Thank you!

---

Hello.

Sorry for my English.

Maybe this is helpful:

https://www.analyticsvidhya.com/blog/2014/09/qlikview-incremental-load/

This article is about QlikView, but you can do the same in Qlik Sense without any problems.

---

Thanks for the article. I am currently doing an incremental load.

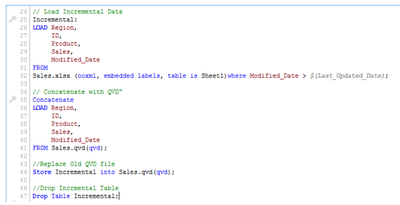

In the article's example (lines 34-41), they concatenate the existing QVD to the new rows. My issue is that it is a large QVD (over 60 million rows), and I was wondering whether there is a way to store the new rows into the QVD without having to load the existing QVD into the app. I think the answer is no, but I figured I would ask.

---

It's not possible to simply append records to a QVD without loading it, because Qlik doesn't store data row by row. A QVD uses a column-based format: it stores only the distinct values of each field and creates bit-stuffed pointers that link those values to the records. This structure has to be re-created whenever the data change.

Usually this isn't a problem, because an optimized load from a QVD is very fast even for large datasets. No extra processing is needed; the data are simply transferred from storage or the network into RAM, so the load time depends almost entirely on the performance of the storage and network.
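A small Qlik script sketch of the difference, reusing the QVD path and the `deletedDateTime` field from the question; the table names `Fact` and `Fact_Active` are hypothetical. As a rule of thumb, a QVD load stays optimized only when the fields are read unchanged (renaming with `as` is allowed) and the only filter is a single-field `Where Exists(...)`. A calculated field or any other `Where` condition makes Qlik unpack and process every record.

```qlik
// Optimized: all fields are read unchanged and there is no Where clause,
// so the QVD data can be moved more or less directly into memory.
Fact:
LOAD *
FROM [lib://QlikData/Fact.qvd] (qvd);

Drop Table Fact;

// Not optimized: IsNull() must be evaluated for every record,
// so Qlik has to unpack and process the data row by row.
Fact_Active:
LOAD *
FROM [lib://QlikData/Fact.qvd] (qvd)
Where IsNull(deletedDateTime);

Drop Table Fact_Active;
```

In the reload progress output, the first load is typically reported as `(qvd optimized)` while the second is not, which is a quick way to check which path a script actually takes.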

Besides this, I suggest reversing the order of your incremental approach: load the larger historical QVD data first, and then add the new records.
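A minimal sketch of the reversed order, reusing the names from the question (`Table1`, `deletedDateTime`, `lib://QlikData/Fact.qvd`) and assuming `Table1` has already been loaded with the same fields as the QVD; the table name `Fact` is hypothetical. `NoConcatenate` is there because Qlik would otherwise automatically append the QVD rows to `Table1` when both tables have an identical set of fields.

```qlik
// 1) Load the large historical QVD first, as a plain (optimizable) load.
//    NoConcatenate prevents automatic concatenation into Table1.
Fact:
NoConcatenate
LOAD *
FROM [lib://QlikData/Fact.qvd] (qvd);

// 2) Append only the new rows that have no deleted timestamp.
Concatenate (Fact)
LOAD *
Resident Table1
Where IsNull(deletedDateTime);

Drop Table Table1;

// 3) Overwrite the QVD with historical + new rows, then free the memory.
Store Fact into [lib://QlikData/Fact.qvd] (qvd);

Drop Table Fact;
```

This still reads all 60 million rows, since a QVD cannot be appended to in place; the point is only to keep the big load as simple as possible and apply the filter to the small set of new rows.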

- Marcus