loading Multiple Files in Directory

Hi Guys,

I need to load multiple files with the .txt extension from a directory. How is this achieved in Expressor?

Accepted Solutions

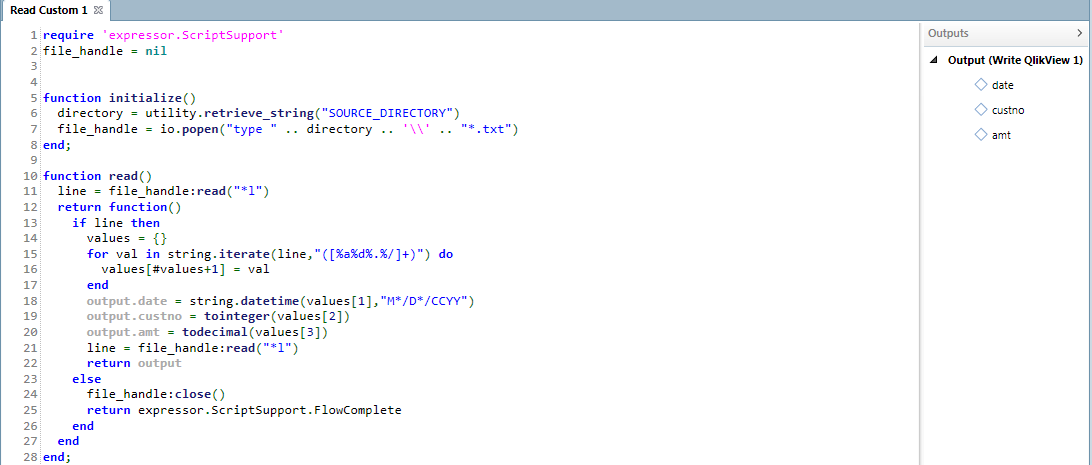

Here is a screen shot showing the code you could use within the Read Custom operator to read all the rows from multiple .txt files in a directory, processing them into a single output file, for example, a .qvx file.

In the initialize function, the code first extracts the directory location from an Expressor persistent variable and then uses the Windows type command-line utility to concatenate the contents of the .txt files into a 'result set'.

In the read function, the code reads the first row from the 'result set'. Then what Expressor terms an iterative return function processes each row in the 'result set': it parses the row into its individual fields, uses each field to initialize an output attribute, and finally emits the record.

Lines 13-22 repeat until all rows have been processed. In lines 18-20, each field (all values are read from files as strings) is converted into its appropriate type. When there are no more rows, the conditional statement on line 13 returns false and the else clause (lines 23-25) executes. The return value expressor.ScriptSupport.FlowComplete indicates to the Expressor engine that processing has completed and the Read Custom operator may be destroyed.
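For readers who cannot see the screenshot, the same read-parse-emit pattern can be sketched outside Expressor. The following is a minimal Python illustration, not the Expressor Datascript from the screenshot. It assumes each row has the three fields described later in this thread (date as dd/mm/yyyy, custno as an integer, amt as a decimal), that the files are comma-delimited with no header row, and the names `Record` and `read_rows` are hypothetical.

```python
import csv
import datetime as dt
from decimal import Decimal
from pathlib import Path
from typing import Iterator, NamedTuple


class Record(NamedTuple):
    """One output record; the field layout is an assumption taken from the thread."""
    date: dt.date
    custno: int
    amt: Decimal


def read_rows(directory: str) -> Iterator[Record]:
    """Yield one typed record per row from every .txt file in `directory`."""
    # Visit the files in a stable order, treating them as one combined row stream.
    for path in sorted(Path(directory).glob("*.txt")):
        with path.open(newline="") as handle:
            for fields in csv.reader(handle):
                if not fields:
                    continue  # skip blank lines
                raw_date, raw_custno, raw_amt = (f.strip() for f in fields)
                # Every value arrives as a string and is converted to its type.
                yield Record(
                    date=dt.datetime.strptime(raw_date, "%d/%m/%Y").date(),
                    custno=int(raw_custno),
                    amt=Decimal(raw_amt),
                )
```

Here the generator simply ends when the last file has been read, which loosely corresponds to returning expressor.ScriptSupport.FlowComplete once no rows remain.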

Yes, this can be done with Expressor, but it does require some coding in a Read Custom operator or in a script.

Do all of the files have the same record structure? That is, the same fields in the same order in each row. Do you want the processed data from each input file to be written to the same output, for example a common file, or to different targets?

Yes, all the files have the same structure, and they will all be read into the same output file after the transforms.

Thanks,

Paresh

Attach a sample file, or a few pseudo rows, and I can develop some sample code. Also, are you using the free download of Expressor, or are you planning to purchase a license? There are, obviously, more options with a licensed version.

I am using the free download of Expressor.

Columns in each file:

date, custno, amt

dd/mm/yyyy, int, decimal

There are around 15 files in CSV format.

This is easily achieved in QlikView script using:

for each file in filelist('$(dir)\*.csv')

Load *

from [$(file)];

next file

I am using Expressor to explore its metadata capabilities.

Hi John,

Is data lineage metadata extraction possible from an Expressor package deployment (using the desktop version)?

For example, I have a very simple .txt file transformed into a .qvx file. In the transformation artifact there is some datatype casting taking place. How do I extract that and present it to an end user?

Regards,

Paresh

Hello Paresh -

Currently this is not available, but as integration between the two products continues, I suspect that it will become part of the QlikView Governance Dashboard and will also be integrated into QlikView Expressor Desktop. For your information, the QlikView Governance Dashboard will be a free tool (available shortly) that gives QlikView customers visibility into their QlikView deployments. In its current form, the QlikView Governance Dashboard does contain data lineage information for QlikView-created and related data sources: it shows which applications use which data sources, as well as the expressions derived from those sources in the QlikView applications. So stay tuned.

Regards,

Mike T

Mike Tarallo

Qlik

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

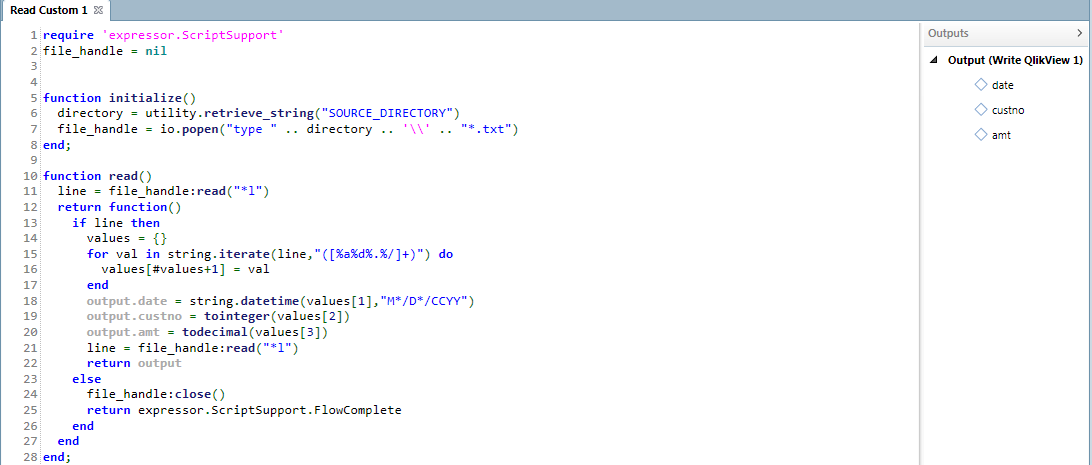

Here is a screen shot showing the code you could use within the Read Custom operator to read all the rows from multiple .txt files in a directory, processing them into a single output file, for example, a .qvx file.

In the initialize function, the code first extracts the directory location from an Expressor persistent variable and then uses the Windows type command line utility to concatentate the contents of the .txt files into a 'result set'.

In the read function, the code reads the first row from the 'result set'. Then, what is termed an Expressor iterative return function, processes each row in the 'result set', parsing it into its individual fields, using each field to initialize an output attribute, and finally emitting the record.

Lines 13-22 repeat until all rows have been processed. In lines 18-20, each field (all values are read from files as strings) is converted into its appropriate type. When there are no more rows, the conditional statement on line 13 returns false and the else clause (lines 23-25) executes. The return value expressor.ScriptSupport.FlowComplete indicates to the Expressor engine that processing has completed and the Read Custom operator may be destroyed.