High Availability questions

Hello everyone,

I have some questions regarding High Availability in a Qlik Sense deployment. I'll describe the scenario first:

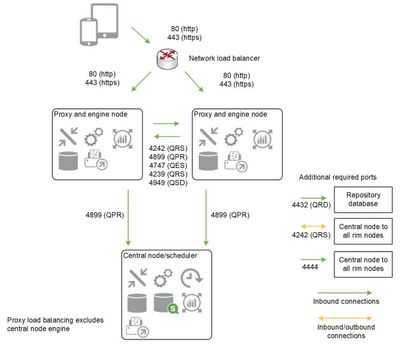

- We have 5 servers:

- 2 HA Proxy for load balancing requests to Consumer Nodes

- 1 Central Node

- 2 Consumer Nodes

- We Followed this diagram:

Given this scenario, I have some questions, because my implementation partner is giving me guidance that feels very wrong. So here I go...

- In this scenario, I understand that if the Central Node goes down completely, the Consumer Nodes should keep working by themselves, serving apps to our visualization-only clients. Am I correct?

- If I'm not correct, my partner tells me that for this to work, we need to introduce a PostgreSQL farm. Seriously, this feels really wrong, right? I know this can be done (moving PostgreSQL to another server), but I don't think it needs to be a "must" for having true High Availability.

- Am I going to be able to upgrade these machines without losing service to our visualization-only clients?

- What are the correct steps for upgrading multinode installations?

In my understanding, High Availability should bring us peace of mind that even when we accidentally lose an asset, the application will continue serving.

I'm sorry if this is too simple, but seriously, my implementation partner is giving me some serious headaches and now I'm really confused.

Accepted Solutions

Hi Marcelo,

I won't draw any conclusions, but just describe how the system works...

1. All settings and configurations are, as you probably know, stored in the Repository Database, which is based on PostgreSQL; treat it as a key component of your environment. If the QSR (Qlik Sense Repository) database is corrupted, the rest of the software is useless...

2. Using the data from the QSR database, the Central node (Central repository), being the brain of the Qlik Sense Enterprise environment, manages all communication between RIM nodes and services, so if it's down, the RIM nodes won't be able to serve your users.

3. Having two Proxy nodes provides an additional entry point to the Qlik Sense environment compared with a single Proxy node, so availability is higher in this case, but it protects the environment only against a "Proxy" going down.
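The three points above can be restated as a small dependency check. This is only a sketch of the description given in this reply, not verified Qlik Sense behavior; the `Deployment` class and `can_serve_users` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """State of the pieces mentioned above (all names are illustrative)."""
    repository_db_up: bool  # QSR database (PostgreSQL)
    central_node_up: bool   # Central node / Central repository
    proxies_up: int         # entry points (proxy nodes) still alive
    rim_nodes_up: int       # consumer (RIM) nodes still alive


def can_serve_users(d: Deployment) -> bool:
    """True only if every dependency described in points 1-3 is available."""
    return (
        d.repository_db_up
        and d.central_node_up
        and d.proxies_up >= 1
        and d.rim_nodes_up >= 1
    )


healthy = Deployment(True, True, proxies_up=2, rim_nodes_up=2)
one_proxy_lost = Deployment(True, True, proxies_up=1, rim_nodes_up=2)
central_lost = Deployment(True, False, proxies_up=2, rim_nodes_up=2)

print(can_serve_users(healthy))         # True
print(can_serve_users(one_proxy_lost))  # True: the second proxy covers it
print(can_serve_users(central_lost))    # False: per point 2 above
```

Under this model, doubling the proxies and consumer nodes removes only those two single points of failure; the Central node and the repository database remain.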

A few thoughts:

1. For heavily loaded environments, I wouldn't use the Central node as a "Slave" scheduler (Master only), so that the Central node does ONLY its primary "managing" job;

2. All nodes need to run the same version of Qlik Sense, so the upgrade has to be done on all machines together (not literally simultaneously, but Central first and then the RIM nodes), which means your system will be down for your users (of any type) for some time. Moreover, after the upgrade the "Apps migration process" has to finish before you allow users into the apps;

3. A PostgreSQL farm with configured DR and failover provides high availability for your database ONLY, not for the full software suite;

4. So, if you'd like to protect yourself from every possible disruption with 99.9999999999% availability, the only way to provide this is to introduce a fully mirrored DR (disaster recovery) environment, to which users can be pointed while the main Production is down. (Even in this case users will notice the change as soon as apps/visualizations need to be refreshed from the new environment, assuming authentication goes smoothly.) Is this a real requirement? Is it worth it?
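To put an availability figure like the one in point 4 into perspective, it can be converted into a yearly downtime budget. The helper below is a minimal sketch; `downtime_seconds_per_year` is a hypothetical name, and a 365-day year is assumed.

```python
from fractions import Fraction

SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # 31,536,000, leap years ignored


def downtime_seconds_per_year(availability_percent: str) -> Fraction:
    """Allowed downtime per year, in seconds, for a given availability target.

    The percentage is passed as a string so that Fraction keeps it exact.
    """
    unavailable_share = 1 - Fraction(availability_percent) / 100
    return unavailable_share * SECONDS_PER_YEAR


for target in ("99.9", "99.99", "99.9999999999"):
    seconds = downtime_seconds_per_year(target)
    print(f"{target}% -> {float(seconds):.6g} seconds of downtime per year")
```

For comparison, 99.9% allows 31,536 seconds (8.76 hours) per year, while twelve nines allow only a few tens of microseconds, which is why the question of whether it is a real requirement matters.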

Hope this helps you and in case of any questions feel free to ask.

Kind regards,

Andrei

Hello Andrei,

I have a question on the fully mirrored DR environment.

1. What configuration do we need for automatic failover? (For example: when Production is down, DR should serve the users automatically, without any manual intervention, using a single URL.)

2. How do we keep DR up to date with respect to apps and other files without replicating deployments manually? Is there any option to keep Prod and DR in sync automatically? (For example: when a new app is published in Production, it should automatically be reflected in DR too.)

Regards,

Vinoth

Hello Qlik Experts,

Can anyone please give me a DR plan for Qlik Sense?

We are using Qlik Sense August 2022 (patch 6) on Windows Server 2019.

Currently we have two environments:

1. DC - a Central node and 2 RIM nodes, with the QSR database hosted externally on a server

2. DR - same configuration as DC

At the time of DR activity we take a backup of DC, restore QSR, Licenses, QSMQ and SenseServices, then redistribute the certificates to bring DR up and running.

But we want to skip the manual steps involved in DR activity and use a DNS alias and QSR replication instead.

Can anyone please let us know how to achieve this?

Thanks in advance,

-Amit
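The DNS alias idea raised above comes down to one decision: which site the shared name should point to. The sketch below shows only that decision, assuming health checks for each site already exist and that database and file replication are handled separately. The hostnames and the `alias_target` function are hypothetical, and nothing here calls a Qlik Sense or DNS API.

```python
from typing import Optional

# Hypothetical entry points for the two sites, for illustration only.
DC_ENTRY = "qlik-dc.example.com"
DR_ENTRY = "qlik-dr.example.com"


def alias_target(dc_healthy: bool, dr_healthy: bool) -> Optional[str]:
    """Return the host the shared DNS alias should point to.

    The primary site (DC) is preferred; DR is used only when DC is down.
    None means neither site can take traffic.
    """
    if dc_healthy:
        return DC_ENTRY
    if dr_healthy:
        return DR_ENTRY
    return None


print(alias_target(dc_healthy=True, dr_healthy=True))    # qlik-dc.example.com
print(alias_target(dc_healthy=False, dr_healthy=True))   # qlik-dr.example.com
print(alias_target(dc_healthy=False, dr_healthy=False))  # None
```

Whether switching the alias is enough depends on how current the replicated QSR data and app files are on the DR side at the moment of failover.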