Qlik Replicate: Many-to-One Replication Configuration

Environment

- Qlik Replicate, all versions

Overview

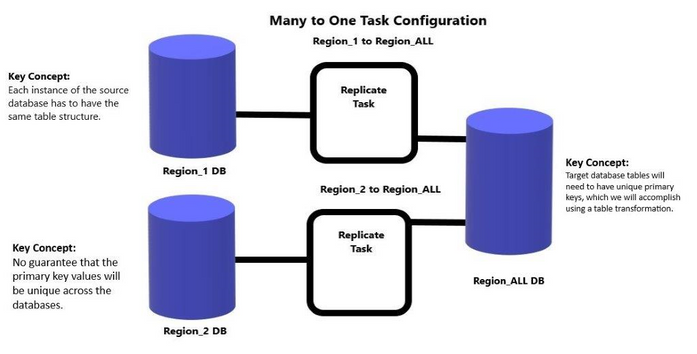

One popular configuration for Replicate tasks is the Many-to-One scenario. Imagine regional sales source databases that you want to consolidate into a single target database to simplify reporting across regions. This article describes how to set up and configure Replicate tasks for this scenario and introduces the key concepts that make it work.

Source Database

Let's start with the source databases.

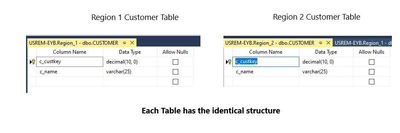

- Key Concept: The table structure must be identical across all of the source databases.

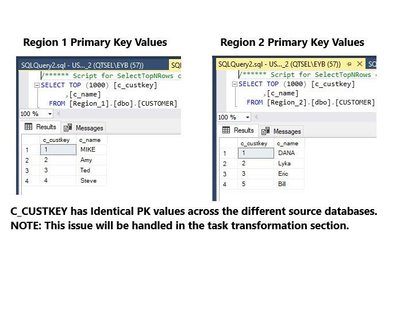

- Key Concept: There is no guarantee that primary key values will be unique across the source databases.

Within each source table the primary key values are unique. If the same values also appear in the corresponding tables of other source databases, they will conflict in the target database as the sources are replicated to it (duplicate primary key errors).

NOTE: This potential conflict is taken care of in the task design, through a transformation.
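To make the conflict concrete, here is a minimal sketch in Python using an in-memory SQLite database. It is not how Replicate applies data; the sample rows, column names, and the `merge_without_region_key` helper are assumptions made up for illustration. It only shows what happens when two sources that reuse the same key values are loaded into one table keyed on the original primary key alone.

```python
import sqlite3

# Hypothetical sample rows: both regions reuse customer ids 1 and 2.
region_1 = [(1, "Alice"), (2, "Bob")]
region_2 = [(1, "Carlos"), (2, "Dana")]


def merge_without_region_key(sources):
    """Load every source into one table keyed on id only.

    Returns the rows the target rejected with a duplicate key error.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT)")
    rejected = []
    for rows in sources:
        for row in rows:
            try:
                conn.execute("INSERT INTO customer (id, name) VALUES (?, ?)", row)
            except sqlite3.IntegrityError:
                # Same primary key value already loaded from another source.
                rejected.append(row)
    conn.close()
    return rejected


rejected = merge_without_region_key([region_1, region_2])
print(rejected)  # [(1, 'Carlos'), (2, 'Dana')]
```

Every row from the second region is rejected because its key value was already taken by the first region.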

Task configuration

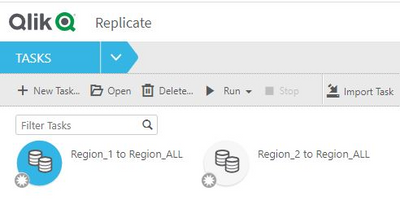

When configuring Replicate tasks for a Many-to-One scenario, you need one task for each source database. Each task performs Full Load and CDC to the same target database, creating a merged database that contains all the tables and values from every source. In my example, the tasks contain only one table, called customer.

My example has two tasks configured: (Region_1 to Region_ALL) and (Region_2 to Region_ALL).

Each task you define has its own unique source endpoint, and all tasks share the same target endpoint.

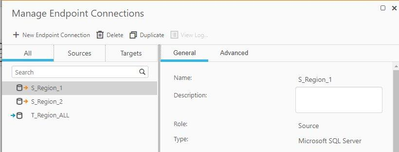

There are two source endpoints and only one target endpoint defined, as each task uses the same target endpoint.
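The task layout can be summarized as a small data structure. The sketch below is only an illustration in Python, not Replicate's task definition format; the `Task` class, the `is_many_to_one` check, and the endpoint names (assumed here to match the task names) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    source_endpoint: str
    target_endpoint: str


# Endpoint names are assumed from the task names used in this article.
tasks = [
    Task("Region_1 to Region_ALL", "Region_1", "Region_ALL"),
    Task("Region_2 to Region_ALL", "Region_2", "Region_ALL"),
]


def is_many_to_one(tasks):
    """True when every task has its own source and all share one target."""
    sources = [t.source_endpoint for t in tasks]
    targets = {t.target_endpoint for t in tasks}
    return len(set(sources)) == len(sources) and len(targets) == 1


print(is_many_to_one(tasks))  # True
```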

Key Concept: Each task must be set to Do Nothing on the Full Load/Full Load Settings/Target Table Preparation screen. This setting ensures that when each task runs its full load, it does not drop the tables or delete records loaded by a previous task's full load. NOTE: It is okay to set this during task creation, as the task will still create the tables if they do not already exist.
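The effect of this setting can be sketched with a small simulation. This is not Replicate code: the `full_load` function and the `"do_nothing"` / `"drop_and_create"` labels are hypothetical stand-ins, and the sample ids are deliberately chosen not to collide so that only the table preparation behavior is shown.

```python
import sqlite3


def full_load(conn, rows, table_prep):
    """Simulate one task's full load into the shared customer table."""
    if table_prep == "drop_and_create":
        # Wipes whatever a previous task already loaded.
        conn.execute("DROP TABLE IF EXISTS customer")
    # With "do_nothing" the table is still created when it does not exist.
    conn.execute(
        "CREATE TABLE IF NOT EXISTS customer (id INTEGER PRIMARY KEY, name TEXT)"
    )
    conn.executemany("INSERT INTO customer (id, name) VALUES (?, ?)", rows)


def rows_after_both_loads(table_prep):
    """Run two simulated tasks in sequence and count the surviving rows."""
    conn = sqlite3.connect(":memory:")
    full_load(conn, [(1, "Alice")], table_prep)   # first task
    full_load(conn, [(2, "Carlos")], table_prep)  # second task
    count = conn.execute("SELECT COUNT(*) FROM customer").fetchone()[0]
    conn.close()
    return count


print(rows_after_both_loads("do_nothing"))       # 2
print(rows_after_both_loads("drop_and_create"))  # 1
```

With the drop behavior, the second load leaves only its own row in the target.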

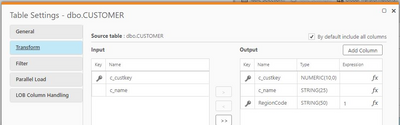

Key Concept: A table-level transformation adds a new field to the target-side table, and that field becomes part of the table's primary key. You can see the table transformation for the Customer table in this image.

The field RegionCode has been added to the target table Customer. Click on the "Key" column to add it to the PK on the target. For each task, hard-code a unique number for the region code, e.g. 1, 2, 3, etc.

NOTE: This does not impact the source table; it is target side only.
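The result of this transformation can be sketched outside Replicate with an in-memory SQLite table. The `replicate` helper, the `id` and `name` columns, and the sample rows are assumptions for illustration; only the RegionCode field and its role in the primary key come from the article.

```python
import sqlite3


def replicate(conn, region_code, source_rows):
    """Stand-in for one task: stamp each source row with its hard-coded region code."""
    conn.executemany(
        "INSERT INTO customer (RegionCode, id, name) VALUES (?, ?, ?)",
        [(region_code, cid, name) for cid, name in source_rows],
    )


conn = sqlite3.connect(":memory:")
# RegionCode is part of the target primary key, alongside the source key.
conn.execute(
    "CREATE TABLE customer ("
    "RegionCode INTEGER NOT NULL, id INTEGER NOT NULL, name TEXT, "
    "PRIMARY KEY (RegionCode, id))"
)

# Both regions reuse ids 1 and 2, yet no duplicate key error occurs.
replicate(conn, 1, [(1, "Alice"), (2, "Bob")])
replicate(conn, 2, [(1, "Carlos"), (2, "Dana")])

merged = conn.execute(
    "SELECT RegionCode, id, name FROM customer ORDER BY RegionCode, id"
).fetchall()
print(merged)
# [(1, 1, 'Alice'), (1, 2, 'Bob'), (2, 1, 'Carlos'), (2, 2, 'Dana')]
```

Because the region code differs per task, rows with the same source key land as distinct rows in the target.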

Target Database

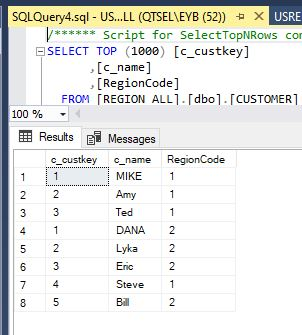

Let's have a look at the target-side database and tables. You can see that the RegionCode field has been added to the table and is part of the PK, thus ensuring there will be no duplicate key errors.

The customer table structure.

The customer table showing the merged records from both Region 1 and Region 2 source databases.

NOTE: Please also watch the accompanying video on how to configure this many to one environment.

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution above may not be provided by Qlik Support.

@Dana_Baldwin Is many to one replication supported for Azure ADLS targets? I'm not sure if there is a mechanism in place to handle writes to the same target file. I'm using Apply Changes which records files with a timestamp and there is the potential for the same filename especially when there are many sources writing to one target.

Alternatively, is there a way to avoid filename conflicts in the target? I haven't found a way to modify the target filename and append with something unique like the name of the source but am interested in any suggestions. There is post-upload processing where the file can be renamed afterwards but that may not help in this case.

Thanks!

@Dana_Baldwin I noticed that the Azure ADLS target docs specify the filename format but the last digit is missing on the files that I'm currently writing to data lake. Note that I'm using parquet rather than CSV but doubt that matters. I'm wondering if perhaps the last digit is some type of counter to handle name collisions if ADLS doesn't actually support many to one replication. If not then perhaps the docs are incorrect.

20141029-1134010000.csv <- docs

20240219-175816530.parquet <- sample file from my data

Thanks again!

Hi @BrianS1

I suspect you are right that this configuration is only feasible with a traditional RDBMS target, not a file-based target (or one that uses a file as a delivery method).

@Michael_Litz would you agree?

Yes, I believe so. I only tested with a SQL Server target.

Thanks,

Michael

@Dana_Baldwin @Michael_Litz thank you. It makes sense that this is supported for RDBMS targets and not for file targets, at least not directly. I'm wondering if perhaps Qlik Replicate resolves filename collisions through some other approach, such as incrementing that last digit that's shown in the docs. Can you clarify?

Alternatively, do you have any suggestions on producing unique filenames? I don't think post-upload processing would resolve the issue because that's after the file is uploaded. Is there a way to do something like include the name of the task in the filename?

I could write the files to different folders but that causes some inefficiencies downstream when I process data from multiple folders.

Thanks again!

@BrianS1 I believe the file names are incremented as you have said. I don't see a way to influence the actual file name used. You might want to submit a feature request here if you would like more options - this goes directly to our Product Managers: https://community.qlik.com/t5/Ideas/idb-p/qlik-ideas

Thanks