
Recent Documents

-

How to create NPrinting GET and POST REST connections

NPrinting has a library of APIs that can be used to customize many native NPrinting functions outside the NPrinting Web Console.

Environment:

Two of the more common capabilities available via the NPrinting APIs are:

- Connection reloads

- Publish Task executions

These and many other public NPrinting APIs can be found here: Qlik NPrinting API

In the data load editor of your Qlik Sense app, two REST connections are required. (These two REST connectors must also be configured in the QlikView Desktop application whose load script uses the APIs. See Nprinting Rest API Connection through QlikView desktop.)

- GET

- POST

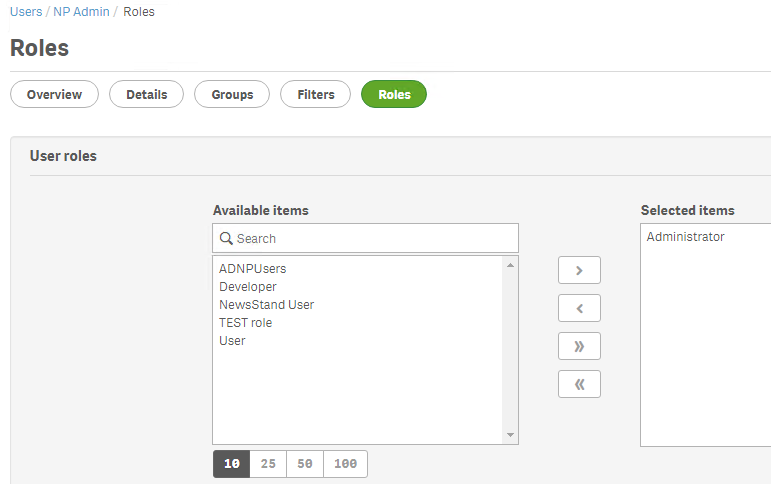

Requirements of REST user account:

- Windows Authentication is required in both these connectors. The required user account is the NPrinting service account (which is also ROOTADMIN on the Qlik Sense server)

- This user account must also be a member of the NPrinting 'Administrators' Security Role on the NPrinting Server.

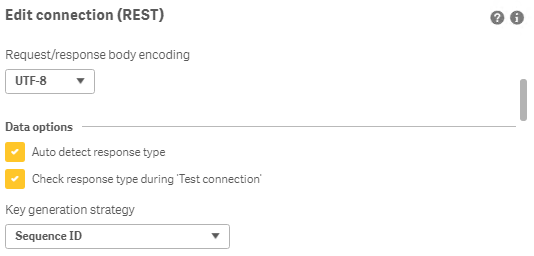

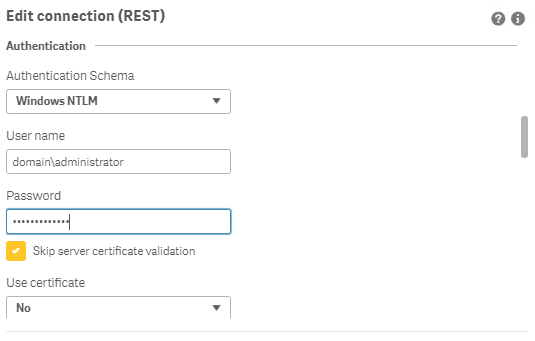

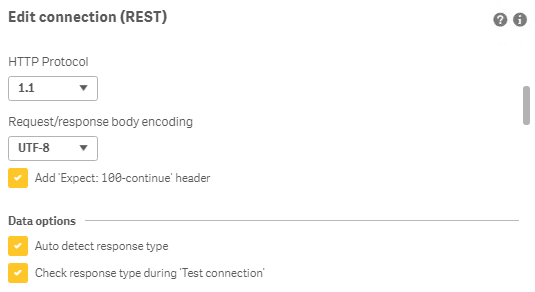

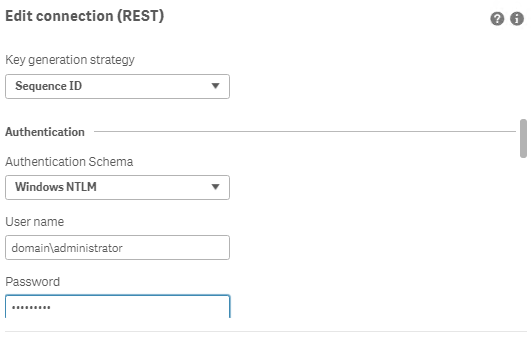

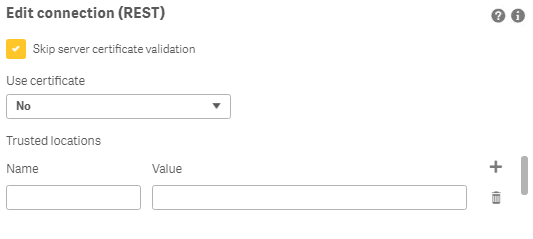

Creating REST "GET" connections

Note: Replace QlikServer3.domain.local with the name and port of your NPrinting Server

NOTE: replace domain\administrator with the domain and user name of your NPrinting service user account

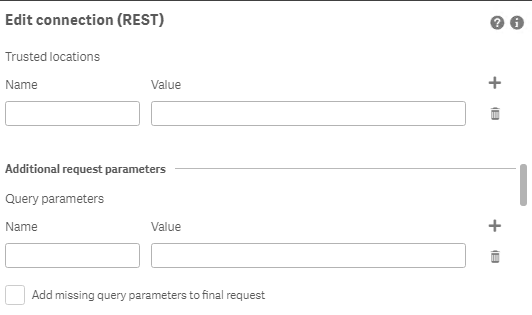

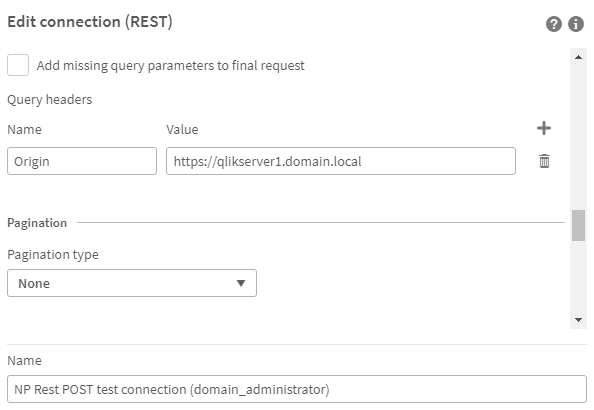

Creating REST "POST" connections

Note: Replace QlikServer3.domain.local with the name and port of your NPrinting Server

NOTE: replace domain\administrator with the domain and user name of your NPrinting service user account

In your POST REST connection only, enter Origin as the 'Name' and the address of your Qlik Sense (or QlikView) server as the 'Value'.

Replace https://qlikserver1.domain.local with your Qlik Sense (or QlikView) server address.

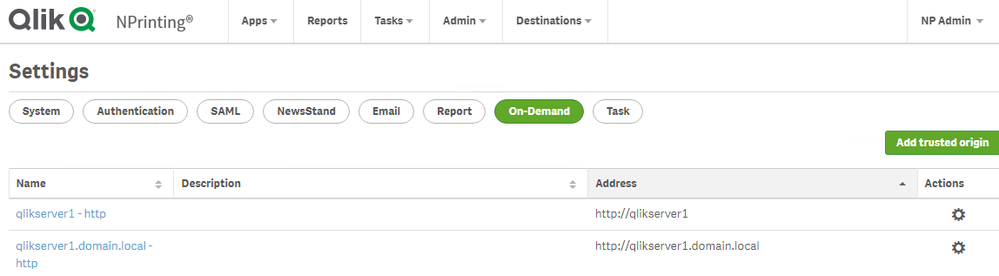

Ensure that the 'Origin' Qlik Sense or QlikView server is added as a 'Trusted Origin' on the NPrinting Server computer.
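Before configuring the connectors, it can help to confirm from a command line that the account and trusted origin work. The following is only a sketch under assumptions: port 4993 and the endpoint paths login/ntlm and tasks/{id}/executions are taken to match the public Qlik NPrinting API reference, and the function names, cookie file, and task ID are hypothetical. Your environment may require additional headers.

```shell
#!/bin/sh
# Sketch only: port, endpoint paths, and all names below are assumptions.

# Build an NPrinting API URL from "server:port" and an endpoint path.
nprinting_url() {
    # $1 = server and port, e.g. QlikServer3.domain.local:4993
    # $2 = endpoint path below /api/v1, e.g. login/ntlm
    printf 'https://%s/api/v1/%s\n' "$1" "$2"
}

# GET: authenticate with Windows (NTLM) credentials and store the session cookie.
nprinting_login() {
    # $1 = server:port, $2 = domain\user (curl prompts for the password),
    # $3 = cookie jar file
    curl --silent --ntlm --user "$2" --cookie-jar "$3" \
        "$(nprinting_url "$1" login/ntlm)"
}

# POST: start a publish task. The Origin header must match a trusted origin
# configured on the NPrinting Server.
nprinting_run_task() {
    # $1 = server:port, $2 = origin URL, $3 = cookie jar file, $4 = task ID
    curl --silent --request POST --cookie "$3" \
        --header "Origin: $2" \
        "$(nprinting_url "$1" "tasks/$4/executions")"
}

# Example calls (not executed here):
# nprinting_login QlikServer3.domain.local:4993 'domain\administrator' cookies.txt
# nprinting_run_task QlikServer3.domain.local:4993 https://qlikserver1.domain.local cookies.txt <task-id>
```

The GET call mirrors the GET connection and the POST call mirrors the POST connection, including the Origin header that only the POST connection needs.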

Related Content

- Distribute NPrinting reports after reloading a Qlik App

- Extending Qlik NPrinting

- Run a Qlik NPrinting API POST command via QlikView reload script

- Troubleshooting Common NPrinting API Errors

NOTE: The information in this article is provided as-is and to be used at own discretion. NPrinting API usage requires developer expertise and usage therein is significant customization outside the turnkey NPrinting Web Console functionality. Depending on tool(s) used, customization(s), and/or other factors ongoing, support on the solution below may not be provided by Qlik Support.

-

Qlik Replicate target Oracle database experienced a transient CPU spike per quer...

During Change Data Capture (CDC) replication, the target Oracle database experienced a transient CPU spike.

Database performance monitoring identified that the high CPU consumption was driven by the following automated bulk query generated by Qlik Replicate:

UPDATE /*+ PARALLEL(tempview) */ ( SELECT /*+ PARALLEL("CDC"."CATB_ENTRIES_DECOUPLING") PARALLEL("QLIK_TARGET"."attrep_changes69C66930_0000001") */ ...

The underlying cause of the resource spike was the execution of this query using aggressive Oracle PARALLEL hints, which exhausted available target DB CPU/memory resources.

Resolution

To prevent future resource contention and CPU/memory alerts in the target database, parallelism hints must be disabled or tuned within Qlik Replicate. This involves modifying two internal parameters across the Full Load and CDC stages: bulkUseParallel and directPathParallelLoad.

bulkUseParallel is for CDC / Change Processing and is enabled by default (true). It instructs Qlik Replicate to inject the Oracle PARALLEL hint into bulk DML statements for better target performance.

Setting it to false stops queries such as UPDATE /*+ PARALLEL(tempview) */ ... from executing in parallel, preventing CPU spikes.

This may cause a slight performance degradation during high-volume CDC processing.

directPathParallelLoad is for Full Load and enabled by default (true). It enables Direct Path loading using parallel processing only during the initial Full Load phase. It has no impact on the daily CDC.

Setting it to false proactively protects the DB during table reloads.

This will likely increase the time required to complete Full Load operations.

How to set the Internal Parameters in Qlik Replicate:

- Navigate to Manage Endpoint Connection → Targets and select your Endpoint

- Open the Advanced tab and select Internal Parameters

- Add bulkUseParallel and directPathParallelLoad

- Untick the Value checkbox to disable them

- Click OK

Environment

- Qlik Replicate

-

Qlik Compose Storage Zone Connection Failure Missing Hive JDBC Driver

After recreating the Amazon EMR cluster and updating the Storage Zone configuration in Qlik Compose with the new EMR master IP, the connection validation failed with the following error:

Storage Zone connection failed

COMPOSE-E-ENGCONFAI

Java connection failed

SYS-E-GNRLERR, Required driver class not found:

com.simba.hive.jdbc41.HS2Driver

This issue caused the Storage Zone connection to fail, impacting data pipeline operations.

Resolution

- Verify that the required Hive JDBC driver (HiveJDBC41.jar) is present in the following directory on the Qlik Compose Agent server:

<Compose Install directory>/java/jdbc/

- Restart the Qlik Compose Agent service to ensure the driver is loaded:

sudo service compose-agent stop

sudo service compose-agent start

- Validate the service is running:

sudo service compose-agent status

- Retest the Storage Zone connection in the Qlik Compose UI. In this case, the connection succeeded after the restart.
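The first check above can be scripted. This is a minimal sketch: the function name is hypothetical, and the directory you pass in is whatever your own Compose install directory resolves to.

```shell
#!/bin/sh
# check_hive_driver DIR: succeed if HiveJDBC41.jar exists in DIR.
check_hive_driver() {
    if [ -f "$1/HiveJDBC41.jar" ]; then
        echo "found: $1/HiveJDBC41.jar"
    else
        echo "missing: $1/HiveJDBC41.jar" >&2
        return 1
    fi
}

# Example call (not executed here); /opt/compose is a hypothetical install path:
# check_hive_driver /opt/compose/java/jdbc \
#     && sudo service compose-agent stop \
#     && sudo service compose-agent start
```

Chaining the restart behind the check avoids restarting the agent when the driver file is still missing.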

Cause

The Hive JDBC driver (HiveJDBC41.jar) required for connecting to EMR HiveServer2 was either:

- Missing from the Compose Agent jdbc directory, or

- Added but not loaded because the Compose Agent service was not restarted

Qlik Compose does not dynamically load new JDBC drivers. The driver becomes available only after restarting the Compose Agent service.

See Prerequisites | Qlik Compose.

Environment

- Qlik Compose


-

Installing Drivers for your Qlik Data Gateway

Purpose

The purpose of this post is to help you install the database drivers necessary to allow your Qlik Data Gateway to communicate with your company's servers once you have completed the Qlik Data Gateway installation itself.

Background

If you are anything like me, perhaps you panicked a bit at the thought of installing the Qlik Data Gateway in a Linux environment. I have a lot of experience with .EXE installations in Windows environments. You know "Next – Next – Next – Finish." But an .RPM file? I had never even seen that extension type before. If you were a Linux connoisseur beforehand, you probably guessed that my image for this post is an homage to the Fedora flavor of Linux. Otherwise you just thought it was an advertisement for the new "Raiders of the Lost Data" movie.

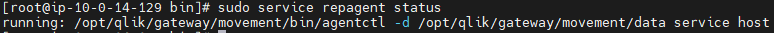

In any event, by now you have created your first Data Gateway, applied the registration key, completed the setup instructions and thankfully the command to check your Data Gateway service shows that it is running.

When you go back to the Data Gateway section of the Management Console and do a refresh, your eyes fill you with happiness because your brand spanking new Data Movement Gateway shows "Connected."

A lesser person would go celebrate right now. But you've decided to try and connect to a source before doing your happy dance. So, you create a new Data Integration project to the destination of your choice. While you will ultimately have many different data sources, let's imagine that you decide to start with a "SQL Server (Log Based)" connection, as your first source test.

You input the server connection details, but your SQL Server doesn't use a standard port for security. Finally, you find information online that you should input your server IP followed by a "comma and the port #". As an example, if your server's IP is 39.30.3.1 and your security port is 12345 you would input "39.30.3.1,12345". Next you input the user and password credentials. Your last step is to choose the database. Easy peasy, lemon squeezy. Right?

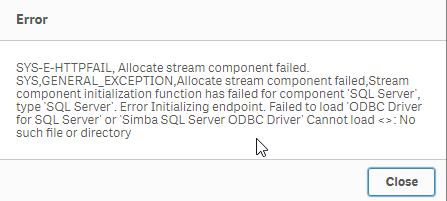

You press the "Load databases" button but suddenly a dialog comes up telling you that the Data Gateway can't connect because it can't find a SQL Server driver.

Driver Installation

Your heart starts beating quickly but naturally as a pro, you remain calm on the outside. Eventually you realize that whether on Windows or Linux, applications have always required drivers to communicate with servers. This is nothing new, we just got excited when we saw that connected message and thought we were done. Upon going back to the setup guide, you realize that there is in fact a link labeled "Setting up Data Gateway – Data Movement source connections."

So, you go ahead and click the link and it takes you to:

Wow, so many sources, and so many additional links to click to ensure the required drivers are in place for the sources your company will need. All the documentation is there, but I know firsthand that it can get a bit overwhelming, especially if Linux isn't your native language, which is the reason for this post.

Obviously every one of you reading this works in an environment that may require different data source connections than the others. Thus, there is no way for me to predict and help with your exact configuration. However, odds are strong that most of you likely require at least: SQL Server, Databricks, Snowflake, Postgres or MySQL, various combinations of them, or perhaps all of them.

As tedious or imposing as it may be, I highly recommend you walk through the documentation for each data source you will need. But thanks to my buddy John Neal, I have attached a Linux shell script that can be executed to configure all 5 of those data sources for you. Given the many flavors, versions, and configurations of Linux I can't ensure that it will work for everyone, but at least it is a start for those that may want to press an easy button, and those that like me may be somewhat or brand new to Linux.

If you choose to take advantage of it, understand that it is only being offered as help, and is not meant to replace the documentation. To utilize it you will need to do the following (Please note in my examples I have changed to the root user. If you are logged in as a normal user account, you may need to use sudo, "super user do"):

- Copy the attached "repldrivers-el7.sh.txt" file to your Linux home directory where you placed the gateway .RPM file.

- Change the file name to simply be "repldrivers-el7.sh" so that it's clear that it is a shell script in case you or others see it in the future.

- Issue the following command from your prompt: "chmod 775 repldrivers-el7.sh" so that the file has the appropriate security allowing it to be executed.

- Issue the following command from your prompt: "./repldrivers-el7.sh"

- Issue the following command from your prompt: "cd /etc"

- Issue the following command from your prompt: "cat /etc/odbcinst.ini"

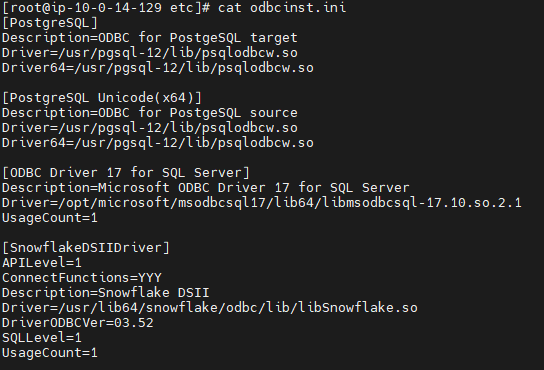

If all went well with the installation, your output should look similar to the following image that was part of my file:

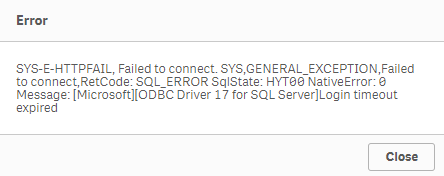
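If you would rather not eyeball the whole file, here is a small sketch that prints only the driver names registered in it. It assumes the usual odbcinst.ini layout where each driver starts with a [Name] section header. The function name is my own invention and is not part of the attached script.

```shell
#!/bin/sh
# list_odbc_drivers [FILE]: print the section names found in an
# odbcinst.ini-style file (defaults to /etc/odbcinst.ini).
list_odbc_drivers() {
    # Section headers look like [Some Driver Name]; strip the brackets.
    sed -n 's/^\[\(.*\)\]$/\1/p' "${1:-/etc/odbcinst.ini}"
}

# Example call (not executed here):
# list_odbc_drivers
```

Each line of output should correspond to one installed driver, which makes it easy to spot a data source that the script did not configure.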

It's almost time to do our happy dance, but let's hold off until we test. In my starting example I asked you to assume we wanted to test against a "SQL Server (Log Based)" connection. When we left off it was because we got an error message saying we had no driver while trying to load the list of databases. I will try that again.

Oh no, the heart rate is going up again.

We have successfully installed the Qlik Data Gateway. We have successfully installed the required drivers. Yet, we are getting this new error message. Let's focus on our breathing and try and digest the situation. What could cause our attempt to connect to our data source to timeout? I got it.

It's likely network security. We know what we want to talk to. We know the location. We know the credentials. But our networks aren't always wide open to do the talking. Resolving your connectivity/firewall issues may or may not be within your abilities and if you are like me, you may need to seek the help of your IT/Networking team.

When I reached out to my friendly IT guru, here within Qlik, he was able to help me get everything in place so that my Linux server could speak with my database servers, including all of the needed ports.

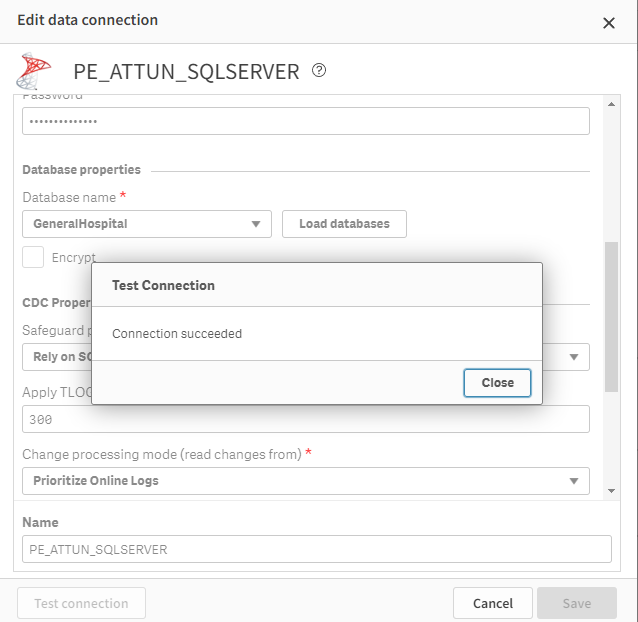

Once they were completed I was able to test and sure enough my data connection succeeded.

Whether or not you do a happy dance, as I did, I hope that this post has helped you get to that sweet smell of success. After all, someone has to be known as the amazing person who got your Qlik Data Gateway going so that others in the Data Engineering team could create all of those lights out Qlik Cloud Data Integration projects that would be feeding data in near real time to all of those wonderful analytics use cases. Hopefully with the help of the documentation and this post, that person is you my friends.

Challenge

One of the things I've long admired about the Qlik Community is their willingness to help each other through this Community site. If you are a Linux guru and are so inclined I would love to see you share other versions of the shell script that I have started. Maybe your organization is using another flavor/version of Linux and you needed to make a few tweaks to my file. Maybe your organization needed Oracle added and you can tweak my file. Whatever the reason, I sure hope you will give back to the community by sharing all of those tweaks here. Who knows, your help might help them be able to do their happy dance. And we all know the world is a better place when more people do their happy dance.

Related Content

Qlik Data Gateway - Data Movement prerequisites and Limitations - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-prerequisites.htm

Setting up the Data Movement gateway - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-setting-up.htm

PS - I created both of the images here using a generative AI solution called MidJourney. I hope they've added to the fun of this post.

-

Qlik Talend Studio: Error tDataStewardshipTaskInput unable to connect to Talend ...

A Qlik Talend Studio job fails to connect to the Data Stewardship Application in Qlik Talend Cloud with the error below:

tDataStewardshipTaskInput_1 Unable to connect to Talend Data Stewardship.

java.io.IOException: Unable to connect to Talend Data Stewardship.

Caused by: java.net.UnknownHostException: No such host is known (tds.eu.cloud.talend.com)

Resolution

To resolve this issue:

- Intermittent UnknownHostException can be caused by regional service outages. Check the Talend Cloud Status page for any ongoing incidents.

- Verify that you are targeting the correct URL for your account's data center.

Example: For the AWS EU region, the valid URL is https://tds.eu.cloud.talend.com

If your account is in a different region (US or Asia), replace eu with the correct code, such as us or ap.

See Talend Cloud Regions documentation to verify your specific data center's URLs.

- Verify your network and proxy configuration:

  - Check Proxy Settings: If you are behind a corporate firewall or proxy, Qlik Talend Studio or the Remote Engine may not be able to "see" the external URL.

  In Qlik Talend Studio, go to Window > Preferences > General > Network Connections and configure your proxy.

  - Allowlist URLs: Ensure your network team has added *.cloud.talend.com to your organization's firewall allowlist. All required allowlist URLs can also be found in the Talend Cloud Regions documentation.

- If you have verified all the above, but are still seeing the issue, you may be seeing other network-related issues, such as with DNS.

  - Test name resolution: Run a command-line test to see if your OS can resolve the host:

  Windows: nslookup tds.eu.cloud.talend.com

  Linux/Mac: dig tds.eu.cloud.talend.com or ping tds.eu.cloud.talend.com

  - Flush DNS Cache: If the manual test fails, try flushing your DNS cache or restarting the application to clear any "bad" cached entries.
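The name-resolution test can be wrapped in a small helper so it is easy to repeat while an intermittent failure is being investigated. This is a sketch only: the function name is hypothetical, and it assumes either getent (common on Linux) or nslookup is installed on the machine running the job.

```shell
#!/bin/sh
# can_resolve HOST: succeed if the OS resolver returns an address for HOST.
can_resolve() {
    if command -v getent >/dev/null 2>&1; then
        # getent uses the same resolver configuration as most applications.
        getent hosts "$1" >/dev/null
    else
        # Fallback for systems without getent (for example macOS).
        nslookup "$1" >/dev/null 2>&1
    fi
}

# Example call (not executed here):
# can_resolve tds.eu.cloud.talend.com && echo "resolves" || echo "does NOT resolve"
```

If the helper fails on the job server but succeeds elsewhere on the network, the problem is more likely local DNS or proxy configuration than a service outage.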

Cause

This error indicates that the Qlik Talend Studio job was unable to resolve the hostname (in this case, that of Qlik Talend Cloud Data Stewardship) to an IP address at that instant.

Environment

- Qlik Talend Studio

- Qlik Talend Cloud

-

-

Qlik Sense Enterprise on Windows May 2025: Slow Data Load Editor

The Data Load Editor in Qlik Sense Enterprise on Windows 2025 experiences noticeable performance issues.

Resolution

Fix Version

Qlik Sense May 2025 SR 6 and higher releases.

Workaround

A workaround is available. It is viable as long as the Qlik SAP Connector is not in use.

- In a Windows file browser, navigate to C:\Program Files\Common Files\Qlik\Custom Data\

- Move the QvSapConnectorPackage directory to a different location

No service restart is required.

Internal Investigation ID(s)

SUPPORT-6006

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Replicate Log Stream Task: Retrieve all source columns on UPDATE with Oracl...

Beginning with Qlik Replicate version 2024.05, a new checkbox was added to Log Stream Staging tasks: Retrieve all source columns on UPDATE

The option is available in Task Settings (A) > Change Processing > Change Processing Tuning (B)

It is enabled (C) by default.

When Retrieve all source columns on UPDATE is enabled and the source database is Oracle, Qlik Replicate issues an ALTER TABLE for every table added to the task to enable supplemental logging on all columns.

Resolution

For high-transaction tables, enable Supplemental Logging on all columns during off-peak hours manually before adding them to the Qlik Replicate task.
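As a minimal sketch of the manual step, the helper below prints a table-level statement in standard Oracle syntax for supplemental logging on all columns. The function name and the schema and table names in the example are hypothetical, and the exact statement Qlik Replicate issues may differ.

```shell
#!/bin/sh
# supplemental_log_sql OWNER TABLE: print an ALTER TABLE statement that
# enables supplemental logging on all columns of one table.
supplemental_log_sql() {
    printf 'ALTER TABLE "%s"."%s" ADD SUPPLEMENTAL LOG DATA (ALL) COLUMNS;\n' "$1" "$2"
}

# Example call (not executed here); SALES and ORDERS are placeholder names:
# supplemental_log_sql SALES ORDERS
```

The printed statements can be reviewed and then run by a DBA during an off-peak window, before the tables are added to the task.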

Cause

In previous Qlik Replicate versions, supplemental logging was not required on all columns and was enabled only on Primary Key columns. With the new checkbox enabled, supplemental logging is required on all columns.

When any new table is added to the Log Stream Staging task, Qlik Replicate issues an ALTER TABLE command to enable Supplemental Logging on all columns. This command can fail on high-transaction or busy tables in the source Oracle DB.

Environment

- Qlik Replicate

-

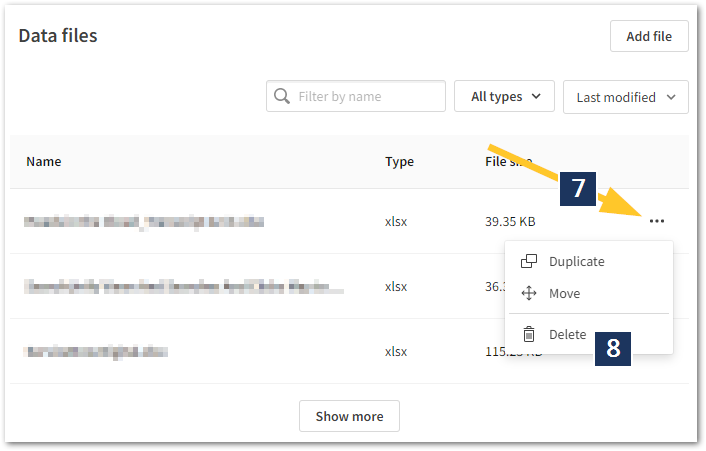

How To Delete Data Files and Datasets from Qlik Cloud Hub and Data Spaces

Method 1: From the Data Sources

- Open the Qlik Analytics Service

- Select Catalog

- Pick your Space

- Click Space Details, then select Data Files from the context menu

- Locate the data you wish to delete

- Click the ellipses to open the next menu

- Click Delete

- Alternatively, add /data at the end of the URL.

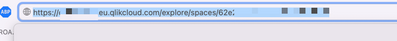

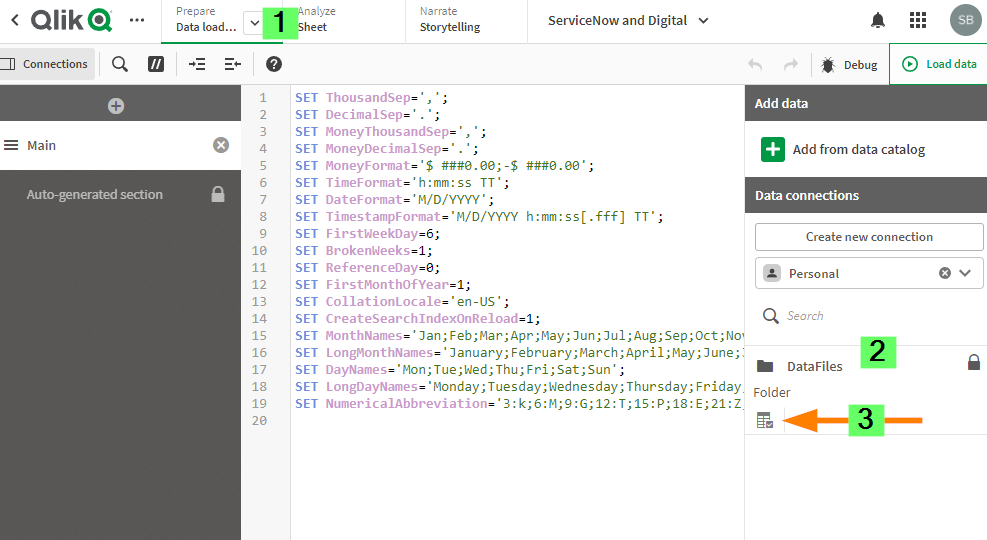

Method 2: From the Data Load Editor

- Open the App with a connection to the data files you wish to edit

- Go to Data Load Editor

- On the right side of the Data Load Editor, under the Add data section, select your data space.

- Click on the table icon under DataFiles.

- Select the Delete option on the right side of each file that you want to delete.

Environment

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

Qlik Talend Cloud: Illegal Argument popup when creating new Snowflake connection

When using Key Pair authentication and creating a new Snowflake connection, you might encounter the following error:

Illegal Argument

Resolution

The provided private key file (.p8) is not in the correct format, or the key file password is invalid.

To get the actual error, install the SnowSQL utility on the Linux machine where the Qlik Data Movement gateway is installed and try to connect to the same account:

snowsql -a <account> -u <username> --private-key-path <path to file/rsa_key.p8>

This will provide the exact error explaining why the connection is failing and help identify which root cause applies.
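A quick first check of the key file format can be done without connecting. This sketch relies only on the standard PEM header lines for PKCS#8 keys; the function name is hypothetical, and it does not validate the key contents or the password.

```shell
#!/bin/sh
# p8_key_type FILE: report the PEM type found on the first line of a key file.
p8_key_type() {
    case "$(head -n 1 "$1")" in
        '-----BEGIN ENCRYPTED PRIVATE KEY-----') echo encrypted ;;
        '-----BEGIN PRIVATE KEY-----')           echo unencrypted ;;
        # Anything else (for example a PKCS#1 "RSA PRIVATE KEY" header)
        # is not a PKCS#8 PEM file.
        *)                                       echo unrecognized; return 1 ;;
    esac
}

# Example call (not executed here):
# p8_key_type /path/to/rsa_key.p8
```

An "encrypted" result means a key file password is required, while "unrecognized" points to the file format rather than the password.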

Cause

Environment

- Qlik Talend Cloud

-

Qlik Replicate: Errors Due to Unsupported SQL Server Versions

Starting from Qlik Replicate versions 2024.5 and 2024.11, Microsoft SQL Server 2012 and 2014 are no longer supported. Supported SQL Server versions include 2016, 2017, 2019, and 2022. For up-to-date information, see Support Source Endpoints for your respective version.

Attempting to connect to unsupported versions, both on-premise and cloud, can result in various errors.

Examples of reported errors:

- Qlik Replicate 2024.5 accessing Microsoft Azure SQL Server 2014

[SOURCE_CAPTURE ]W: Table 'dbo'.'tableName' has encrypted column(s), but the 'Capture data from Always Encrypted database' option is disabled. The table will be suspended (sqlserver_endpoint_capture.c:157)

- Qlik Replicate 2024.11 accessing Microsoft SQL Server 2014

[SOURCE_UNLOAD ]E: RetCode: SQL_ERROR SqlState: 42S02 NativeError: 208 Message: [Microsoft][ODBC Driver 18 for SQL Server][SQL Server]Invalid object name 'sys.column_encryption_keys'. Line: 1 Column: -1 [1022502] (ar_odbc_stmt.c:4067)

Cause

The system view sys.column_encryption_keys is only available starting from SQL Server 2016. Attempting to query this view on earlier versions results in errors.

Reference: sys.column_encryption_keys (Microsoft Docs)

Resolution

Upgrade your SQL Server instances to a supported version (2016 or later) to ensure compatibility with Qlik Replicate 2024.5 and above.

Internal Investigation ID(s)

00375940, 00376089

Environment

- Qlik Replicate versions 2024.5, 2024.11, and higher

- Microsoft SQL Server 2014, 2012, and lower (unsupported)


-

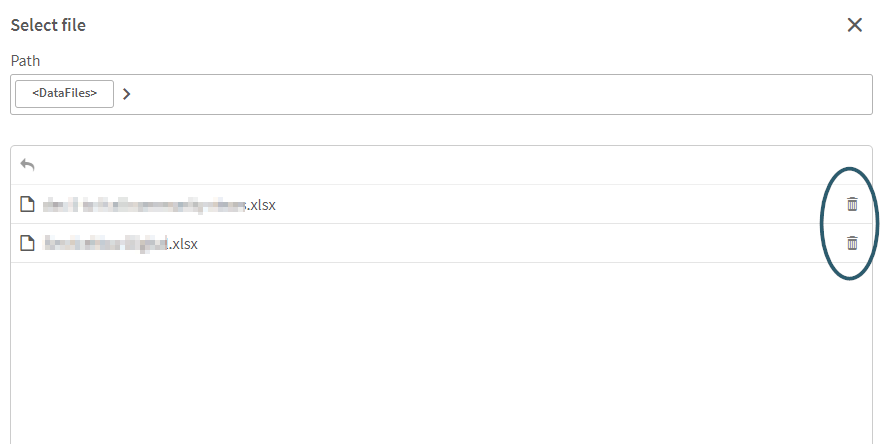

Qlik Sense Data Connectors are missing

Qlik Sense Connectors are missing from the Data source except a few REST connectors.

Resolution

Solution 1

Repair Qlik Sense with the Qlik Sense Setup file (identical version).

Solution 2

- Obtain a copy of the folder "C:\Program Files\Common Files\Qlik\Custom Data\" from a different, working Qlik Sense Enterprise on Windows environment (identical version). A test installation can be set up to obtain it.

- Replace the files/folders with the copied version. This action needs to be carried out on all nodes if it is a multi-node environment.

Solution 3

Encryption keys:

- Verify if the Encryption keys are present in the User's directory.

- Check if you have multiple encryption keys stored in the User's directory.

- Delete and recreate the Encryption keys using Setting an encryption key

Encryption keys will be stored in either "C:\Users\{sense service user}\AppData\Roaming\Qlik\QwcKeys\" or "C:\Users\{sense service user}\AppData\Roaming\Qlik\Keys\"

Cause

- The Connector folders or executables are corrupted or deleted.

- Connector folders or executables are being scanned and removed from their path. Therefore, it is mandatory to exclude Qlik folders if any scanning software is present on Qlik Sense Windows machines. For more information about exclusions, please refer to the document titled Qlik Sense Folder And Files To Exclude From Anti-Virus Scanning

- Encryption Keys are either deleted or corrupted.

Related Content

An error occurred / Failed to load connection error message in Qlik Sense - Server Has No Internet

Environment

-

tS3Connection fails after upgrading Qlik Talend Studio to R2025-08 or later

After upgrading Qlik Talend Studio to patch R2025-08 or later, jobs using the tS3Connection or tS3List components fail with the error:

Exception in component tS3List_1

java.lang.IllegalStateException: Connection pool shut down

Resolution

An additional change with the new SDK version is how the AWS "region" is handled. In Qlik Talend Studio R2025-07 and earlier, the Region and Endpoint field is shown as a dropdown below.

Using the DEFAULT value works for most cases:

With the new SDK 2.x, the region field is less flexible. The region must explicitly be defined to work correctly. To resolve connectivity issues after upgrading, it is best to explicitly define the AWS region used under the new Region text field:

Additional Information

- For all AWS regions, see: AWS service endpoints

- We recommend also reviewing all details for tS3Connection in our help documentation as per tS3Connection | help.qlik.com

Cause

With the release of R2025-08, Qlik Talend Studio migrated AWS component dependencies from Amazon Web Services SDK version 1.x to SDK version 2.x. This move was prompted by SDK 1.x having reached end of life as of December 31, 2025.

The Amazon DynamoDB, Amazon SQS, and Amazon S3 components were all updated. For the full release notes, see R2025-08 Talend Studio 8.0 - New Features.

Environment

- Qlik Talend Studio

-

Qlik Replicate and DB2 for iSeries source: Check if a receiver has been detached

When using IBM DB2 for iSeries as a source in Qlik Replicate, the task may report a warning if journal receiver numbers are not continuous.

A typical warning message looks like:

[SOURCE_CAPTURE ]W: Journal entry sequence '2026' was read from journal receiver 'APSUPDB.QSQJRN0118'. The previous entry was read from receiver 'APSUPDB.QSQJRN0116'. Check if a receiver has been detached. (db2i_endpoint_capture.c:1836)

Resolution

Qlik Replicate reports this condition as a warning only. There is no impact on task execution or data integrity:

- The task continues to run normally

- No journal entries are lost

- No data inconsistency is introduced

This warning can be safely ignored unless accompanied by other errors or abnormal task behavior.

Cause

On the IBM DB2 for iSeries side, 'Check if a receiver has been detached' can occur if, for example, the process is holding or locking the journal. This temporarily prevents the system from creating or attaching the next journal receiver. In such cases, a receiver number may be allocated but never successfully created, resulting in a gap in the receiver numbering.

This behavior is normal on IBM i and does not indicate a defect. The system assigns journal receiver numbers, but sequential continuity is not guaranteed. IBM i only guarantees that receiver numbers increase monotonically, not that every number will exist.

Internal Investigation ID(s)

00420963, 00423959

Environment

- Qlik Replicate

- IBM DB2 for iSeries all versions

-

Qlik Replicate: DB2 LUW as target via ODBC errors

Using DB2 LUW (ODBC) as a target with a replication of a text column, the following error may be encountered:

[TARGET_APPLY ]T: RetCode: SQL_ERROR SqlState: 42846 NativeError: -461 Message: [IBM][CLI Driver][DB2/AIX64] SQL0461N A value with data type "SYSIBM.LONG VARGRAPHIC" cannot be CAST to type "SYSIBM.TIMESTAMP". SQLSTATE=42846 [1022502] (ar_odbc_stmt.c:2864)

Resolution

The May 2026 version of Qlik Replicate contains a native DB2 LUW endpoint capable of processing the datatype correctly to prevent the error.

Workaround

Remove any tables that have multiple Text columns.

Internal Investigation ID(s)

SUPPORT-8188

Environment

- Qlik Replicate

-

Qlik Analytics: Partial reloads failing when Applymap() is used. In-application ...

A partial reload fails when Applymap() is used in a load statement that is not part of the partial reload itself.

This affects any partial reloads.

This limitation can block the distribution list import in In-application reporting. In fact, when a distribution list is added by uploading a source file, a new section (Distribution List) is automatically generated in the application’s load script, and a partial reload for this section starts automatically.

If Applymap() is used anywhere in the application script, the partial reload fails, and the recipient list can't be imported.

Resolution

This is currently considered expected behavior in Qlik Sense Enterprise on Windows and Qlik Cloud Analytics. There are possible workarounds to address partial reload failures.

If the problem is limited to In-application reporting, it is possible to run a full reload of the application from the hub once the Distribution List section is generated in the app script. Note that this may consume resources that can be charged to the tenant.

A more general workaround is to conditionally ensure that certain operations are limited to the partial reload only, using a schema like this in the script:

if IsPartialReload() then

*****

here the script involving mapping and Applymap()

*****

else

***

the rest of the script

***

endif;

A similar approach is to use the partial reload prefix on the mapping table, like:

"Mapping add LOAD".

It may be necessary to work with conditions for operations like "drop field" in this case, dependent on whether the referenced field exists or not in the given reload context.

Here are two example scripts showing two possible methods.

Method One:

if IsPartialReload() then

Replace Load 'IsPartial' as Status autogenerate 1;

else

Load 'IsNormal' as Status autogenerate 1;

// Load mapping table of country codes:

map1:

mapping LOAD *

Inline [

CCode, Country

Sw, Sweden

Dk, Denmark

No, Norway];

// Load list of salesmen, mapping country code to country

// If the country code is not in the mapping table, put Rest of the world

Salespersons:

LOAD *,

ApplyMap('map1', CCode,'Rest of the world') As Country

Inline [

CCode, Salesperson

Sw, John

Sw, Mary

Sw, Per

Dk, Preben

Dk, Olle

No, Ole

Sf, Risttu

] ;

// We don't need the CCode anymore

Drop Field 'CCode';

endif;

Partial_reload_Data:

Add only LOAD * inline [

Salesperson, CCode

Pierre, Sw

Viggo, Sw ];

Method Two:

if IsPartialReload() then

Replace Load 'IsPartial' as Status autogenerate 1;

else

Load 'IsNormal' as Status autogenerate 1;

end if;

// Load mapping table of country codes:

map1:

mapping add LOAD *

Inline [

CCode, Country

Sw, Sweden

Dk, Denmark

No, Norway

] ;

// Load list of salesmen, mapping country code to country

// If the country code is not in the mapping table, put Rest of the world

Salespersons:

LOAD *,

ApplyMap('map1', CCode,'Rest of the world') As Country

Inline [

CCode, Salesperson

Sw, John

Sw, Mary

Sw, Per

Dk, Preben

Dk, Olle

No, Ole

Sf, Risttu

] ;

// We don't need the CCode anymore

if not IsPartialReload() then

Drop Field 'CCode';

end if;

Partial_reload_Data:

Add only LOAD * inline [

Salesperson, CCode

Pierre, Sw

Viggo, Sw ];

Cause

This behavior is due to a known limitation in Qlik Sense Enterprise on Windows and Qlik Cloud Analytics.

Internal Investigation ID(s)

QB-5181

Environment

- Qlik Sense Enterprise on Windows

- Qlik Cloud Analytics

-

Qlik Cloud: Unable to load data as date format using Google Drive and Spreadshee...

When connecting to the Google Drive Spreadsheet connector, some date values are fetched as text.

For example, there is a date table like the following:

In the Data Load editor:

However, in the fetched results, the first two columns are returned as text:

Resolution

This is working as designed when using the Google Drive and Spreadsheet connector.

Two possible workarounds exist.

Use the Qlik Google Drive connector

- Upload the spreadsheet to the Google Drive as an XLSX file, and use the Qlik Google Drive connector instead.

- Use Select Data to fetch the data

Change the DateFormat in the load script

- In the default script, you can find the following parameter set.

- Change the format "M/DD/YYYY" to the format used for the dates in the spreadsheet.

- Reload the script, and you will see the data is fetched in the proper date format.
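The idea behind the second workaround can be illustrated outside of Qlik. The following is a minimal Python sketch, not Qlik script: it assumes that a value is only recognized as a date when it matches the configured format and is otherwise kept as text. The function name `interpret`, the sample value, and the `strptime` patterns are hypothetical and only stand in for the DateFormat setting.

```python
from datetime import date, datetime

def interpret(value: str, fmt: str):
    """Return a date if value matches fmt, otherwise return the text unchanged."""
    try:
        return datetime.strptime(value, fmt).date()
    except ValueError:
        return value

# Hypothetical spreadsheet cell; the real format depends on your sheet.
cell = "2024-03-15"

# The configured format does not match the cell, so the value stays text.
print(repr(interpret(cell, "%m/%d/%Y")))

# The configured format matches the cell, so the value becomes a date.
print(repr(interpret(cell, "%Y-%m-%d")))
```

In the same way, aligning the DateFormat in the load script with the spreadsheet's format lets the values be interpreted as dates.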

Internal Investigation ID(s)

SUPPORT-8842

Environment

- Qlik Cloud

-

Qlik Replicate and Hana source endpoint: How to handle DECIMAL CS_DECIMAL_FLOAT ...

When using SAP HANA as a source in Qlik Replicate, Qlik Replicate does not fully handle the DECIMAL CS_DECIMAL_FLOAT datatype by default. This can lead to a loss of precision during replication.

For example, the value 345.56 in HANA is replicated as 345 in Google BigQuery, a generic File target, or other target endpoints.

Assume we have a table defined as below:

create column table JOHNW.TESTDEC (

ID integer not null primary key,

name varchar(20),

dec1 DECIMAL(38,4) CS_FIXED,

dec2 DECIMAL CS_DECIMAL_FLOAT);

INSERT INTO johnw.testdec VALUES (1,'test',234.45,345.56);

Resolution

There are two possible solutions.

- Use a VIEW with explicit casting, then replicate the VIEW instead of the original table in the Qlik Replicate task:

CREATE OR REPLACE VIEW johnw.testdec_view2 AS

SELECT

id,

name,

dec1,

CAST(dec2 AS DECIMAL(30,4)) AS dec2

FROM johnw.testdec;

This ensures a fixed scale is applied before replication.

This workaround is limited to Full Load only tasks.

- Use source_lookup()

Add a column with datatype NUMERIC(30,4) and use the following expression:

source_lookup('NO_CACHING','JOHNW','TESTDEC','DEC2','ID=:1',$ID)

You may combine this with a CAST to enforce the desired precision or any other formatting.

This workaround is effective for both Full Load and Change Processing; however, it requires the source to have a primary key or a unique index.

The advantage is that no changes are needed in the source HANA database.

Cause

The DEC2 column is not a standard fixed DECIMAL.

- CS_FIXED → fixed precision/scale (e.g., DECIMAL(38,4))

- CS_DECIMAL_FLOAT → floating decimal (variable scale)

Qlik Replicate cannot handle it correctly by default in the current versions.
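The difference between the two column types can be illustrated with a small Python sketch using the standard `decimal` module. It only mimics the observed effect (345.56 arriving as 345) and the effect of an explicit cast to a fixed scale; it does not describe how Qlik Replicate works internally. The helper name `to_fixed_scale` is hypothetical.

```python
from decimal import Decimal, ROUND_DOWN

# Values from the example row inserted into JOHNW.TESTDEC.
dec1 = Decimal("234.45")  # DECIMAL(38,4) CS_FIXED: the scale is declared as 4
dec2 = Decimal("345.56")  # DECIMAL CS_DECIMAL_FLOAT: no declared scale

def to_fixed_scale(value: Decimal, scale: int) -> Decimal:
    """Return value with exactly `scale` decimal places."""
    return value.quantize(Decimal(1).scaleb(-scale))

# Assumption for illustration: with no declared scale, a scale of 0 is applied
# and the fractional part is dropped.
lost = dec2.quantize(Decimal("1"), rounding=ROUND_DOWN)

# Analogue of CAST(dec2 AS DECIMAL(30,4)): the scale is fixed explicitly.
kept = to_fixed_scale(dec2, 4)

print(lost)  # 345
print(kept)  # 345.5600
```

Both workarounds above follow the same principle: the value is given an explicit, fixed scale before it reaches the target.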

Environment

- Qlik Replicate all versions

- SAP HANA all versions

-

Qlik Sense Enterprise on Windows: Error retrieving the URL to authenticate: ENCR...

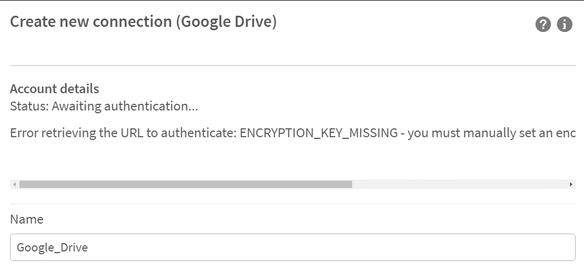

Some connectors require an encryption key before you create or edit a connection. Failing to generate a key will result in:

Error retrieving the URL to authenticate: ENCRYPTION_KEY_MISSING - you must manually set an encryption key before creating new connections.

Environment

Qlik Sense Desktop February 2022 and onwards

Qlik Sense Enterprise on Windows February 2022 and onwards

all Qlik Web Storage Provider Connectors

Google Drive and Spreadsheets Metadata

Resolution

PowerShell demo on how to generate a key:

# Generates a 32 character base 64 encoded string based on a random 24 byte encryption key

function Get-Base64EncodedEncryptionKey {

$bytes = new-object 'System.Byte[]' (24)

(new-object System.Security.Cryptography.RNGCryptoServiceProvider).GetBytes($bytes)

[System.Convert]::ToBase64String($bytes)

}

$key = Get-Base64EncodedEncryptionKey

Write-Output "Get-Base64EncodedEncryptionKey: ""${key}"", Length: $($key.Length)"

Example output:

Get-Base64EncodedEncryptionKey: "muICTp4TwWZnQNCmM6CEj4gzASoA+7xB", Length: 32
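If PowerShell is not available, a key of the same shape can be produced in other ways. The following Python sketch mirrors the PowerShell demo above: 24 random bytes, Base64-encoded into a 32-character string. The function name is simply a Python-style rendering of the PowerShell one, and it is an assumption that the connector accepts any key of this shape regardless of the tool used to generate it.

```python
import base64
import secrets

def get_base64_encoded_encryption_key(num_bytes: int = 24) -> str:
    """Return a Base64 string built from num_bytes cryptographically random bytes."""
    return base64.b64encode(secrets.token_bytes(num_bytes)).decode("ascii")

key = get_base64_encoded_encryption_key()

# 24 bytes encode to exactly 32 Base64 characters, with no padding.
print(f'Key: "{key}", Length: {len(key)}')
```

The key is random, so the printed value differs on every run; only the length stays the same.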

Qlik Sense Desktop

This command must be run by the same user that is running the Qlik Sense Engine Service (Engine.exe). For Qlik Sense Desktop, this should be the currently logged-in user.

Do the following:

-

Open a command prompt and navigate to the directory containing the connector .exe file. For example:

cd "C:\Program Files\Common Files\Qlik\Custom Data\QvWebStorageProviderConnectorPackage"

-

Run the following command:

QvWebStorageProviderConnectorPackage.exe /key {key}

Where {key} is the key you generated. For example, if you used the OpenSSL command, your key might look like: QvWebStorageProviderConnectorPackage.exe /key zmn72XnySfDjqUMXa9ScHaeJcaKRZYF9w3P6yYRr

-

You will receive a confirmation message:

Info: Set key. New key id=qseow_prm_custom.

Info: key set successfully!

Qlik Sense Enterprise on Windows

The {sense service user} must be the name of the Windows account which is running your Qlik Sense Engine Service. You can see this in the Windows Services manager. In this example, the user is: MYCOMPANY\senseserver.

Do the following:

-

Open a command prompt and run:

runas /user:{sense service user} cmd

For example: runas /user:MYCOMPANY\senseserver cmd

-

Run the following two commands to switch to the directory containing the connectors and then set the key:

-

cd "C:\Program Files\Common Files\Qlik\Custom Data\QvWebStorageProviderConnectorPackage"

-

QvWebStorageProviderConnectorPackage.exe /key {key}

Where {key} is the key you generated. For example, if you used the OpenSSL command, your key might look like: QvWebStorageProviderConnectorPackage.exe /key zmn72XnySfDjqUMXa9ScHaeJcaKRZYF9w3P6yYRr

-

-

You should repeat this step, using the same key, on each node in the multinode environment.

-

Encryption keys will be stored in: "C:\Users\{sense service user}\AppData\Roaming\Qlik\QwcKeys\"

For example, encryption keys will be stored in "C:\Users\QvService\AppData\Roaming\Qlik\QwcKeys\"

Always run the command prompt while logged in with the Qlik Sense Service Account which is running your Qlik Sense Engine Service and which has access to all the required folders and files.

Cause

This security requirement came into effect in February 2022. Old connections made before then will still work, but you will not be able to edit them. If you try to create or edit a connection that needs a key, you will receive an error message: Error retrieving the URL to authenticate: ENCRYPTION_KEY_MISSING - you must manually set an encryption key before creating new connections.

Related Content

-

-

QlikView reload error: connector connect error bundled QVConnect not found.

After upgrading to QlikView September 2025 IR, scheduled Publisher reload tasks fail with the following error:

Error: Connector connect error: Bundled QVConnect not found.

The same .qvw document can be reloaded successfully using QlikView Desktop.

Resolution

The issue is caused by QCB-33101, which has been resolved in QlikView September 2025 SR1. Upgrade to the latest available version.

See QlikView September 2025 Release Notes for details.

Internal Investigation ID(s)

QCB-33101

Environment

- QlikView

-

Integrating Salesforce CDC with Qlik Talend Studio or Talend Streaming

Is it possible to integrate Salesforce Change Data Capture (CDC) with Qlik Talend Studio or Talend Streaming?

Not directly. Salesforce Change Data Capture (CDC) enables real-time event-driven integration by publishing data changes (create, update, delete, undelete) from Salesforce objects, which Talend Streaming alone does not natively support.

To consume Salesforce CDC events and integrate them with Talend Streaming, use Talend ESB. It can be deployed as an integration layer to receive CDC events and forward them to downstream streaming or messaging systems.

The typical process flow will be as follows:

- Salesforce publishes CDC events for enabled objects

- Talend ESB subscribes to Salesforce CDC using Apache Camel Salesforce components

- Talend ESB processes and routes events

- Events are delivered to Talend Streaming or another streaming or messaging system

This architecture enables near real-time data processing while keeping Talend Streaming decoupled from Salesforce-specific connectivity.

Talend ESB Capabilities for Salesforce CDC

Talend ESB leverages Apache Camel, including the Salesforce Camel component, to support CDC-based integrations.

Key capabilities include:

- Subscribing to Salesforce CDC events

- Event-driven message routing

- Integration with messaging systems and streaming platforms

- Reliable mediation and transformation of CDC events

For detailed documentation, see:

- Salesforce CDC integration with Talend ESB

- Apache Camel components available in Talend ESB (including Salesforce)

Licensing Requirements

To use Talend ESB/Runtime, you must have a Premium or Enterprise subscription. Talend default licenses, such as Data Integration, do not include Talend ESB/Runtime. See Qlik Talend Cloud® Plans and Pricing for Talend Data Integration and ESB pricing details.

If you are uncertain what your subscription includes, contact your Qlik account representative.

In summary

To integrate Salesforce CDC with Talend Streaming:

- Talend Streaming alone is not sufficient

- Talend ESB is required to consume Salesforce CDC events

- Talend ESB uses Apache Camel Salesforce components

- A Premium or Enterprise license is mandatory

- This approach ensures a scalable, event-driven integration architecture aligned with Talend’s supported capabilities.

Need more direct help? Contact your Qlik account representative for technical and architecture guidance.

Environment

- Qlik Talend Studio

- Talend ESB