- Use Case Reference Architecture - #3: Data Lakehou...


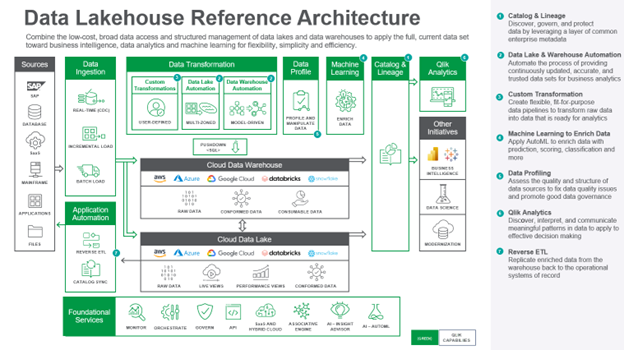

The architecture comprises the following components:

Data is ingested from transactional systems with low latency. Change data capture (CDC) replicates changes in real time without impairing production system performance.
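To make the idea of change data capture concrete, here is a minimal sketch of how a replica might apply a stream of change events. It is not a Qlik API: `ChangeEvent`, `apply_changes`, and the three operation names are hypothetical, and the target is assumed to be a plain in-memory dictionary keyed by primary key.

```python
from dataclasses import dataclass
from typing import Any, Dict, Iterable, Optional


@dataclass
class ChangeEvent:
    """Hypothetical change record, as a log-based CDC reader might emit it."""
    op: str                               # "insert", "update", or "delete"
    key: Any                              # primary key of the affected row
    row: Optional[Dict[str, Any]] = None  # full row image; None for deletes


def apply_changes(
    target: Dict[Any, Dict[str, Any]],
    events: Iterable[ChangeEvent],
) -> Dict[Any, Dict[str, Any]]:
    """Apply change events to the replica in order, mutating it in place."""
    for event in events:
        if event.op in ("insert", "update"):
            if event.row is None:
                raise ValueError(f"{event.op} event for key {event.key!r} has no row")
            # Upsert: inserts and updates both overwrite the row image.
            target[event.key] = dict(event.row)
        elif event.op == "delete":
            # Deleting a key that is already absent is treated as a no-op.
            target.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op!r}")
    return target
```

Because only the changes are shipped, the source system is read once per change rather than rescanned in bulk, which is the property the paragraph above relies on.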

Data Warehouse Automation accelerates the availability of analytics-ready data by automating the entire data warehouse lifecycle.

Data Lake Automation powers the process of providing continuously updated, accurate, and trusted data sets for business analytics.

Custom Transformation allows users to create flexible, fit-for-purpose data pipelines to transform raw data into data that is ready for analytics.

Data Profiling enables users to assess the quality and structure of data sources to fix data quality issues and promote good data governance.
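As an illustration of what a basic profiling pass measures, the sketch below computes null counts, distinct counts, and observed value types per column. The `profile` function and its report layout are hypothetical, not part of any product, and the sketch assumes rows are dictionaries with hashable values.

```python
from typing import Any, Dict, List


def profile(rows: List[Dict[str, Any]]) -> Dict[str, Dict[str, Any]]:
    """Return per-column null count, distinct count, and observed types."""
    # Collect every column name seen in any row, since rows may be ragged.
    columns = sorted({col for row in rows for col in row})
    report: Dict[str, Dict[str, Any]] = {}
    for col in columns:
        # A missing column is counted the same way as an explicit None.
        values = [row.get(col) for row in rows]
        present = [v for v in values if v is not None]
        report[col] = {
            "null_count": len(values) - len(present),
            "distinct_count": len(set(present)),
            "types": sorted({type(v).__name__ for v in present}),
        }
    return report
```

A column with a high null count, or with more than one entry under `types`, is a typical candidate for a data quality fix before the data is published for analytics.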

Machine Learning enriches data with prediction, scoring, classification, and more.

Catalog & Lineage capabilities empower users to discover, govern, and protect data using AI and machine learning built on a layer of common enterprise metadata.

Analytics is used to discover, interpret, and communicate meaningful patterns in data and apply them to effective decision-making.

Reverse ETL replicates enriched data from the warehouse back to the operational systems of record.
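The reverse ETL step can be sketched as a keyed sync from warehouse rows back into operational records. Everything here is an assumption made for illustration: the `reverse_etl` function, the dictionary-based stores, and the choice to skip warehouse rows that have no matching operational record.

```python
from typing import Any, Dict, Iterable, List


def reverse_etl(
    warehouse_rows: Iterable[Dict[str, Any]],
    operational: Dict[Any, Dict[str, Any]],
    key: str,
    fields: List[str],
) -> int:
    """Copy enriched fields onto matching operational records.

    Returns the number of operational records that were changed.
    """
    updated = 0
    for row in warehouse_rows:
        record = operational.get(row[key])
        if record is None:
            # The operational system stays the system of record:
            # this sketch never creates new records there.
            continue
        # Only write fields whose value actually differs.
        changes = {
            f: row[f] for f in fields if f in row and record.get(f) != row[f]
        }
        if changes:
            record.update(changes)
            updated += 1
    return updated
```

Writing only the fields that differ keeps the load on the operational system small, which matters because that system also serves production traffic.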
