How to Build Data Integration Pipelines with Qlik and Databricks

1 – Data Platform Architecture (0:51)

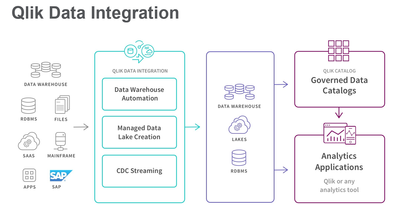

Qlik enables you to build and orchestrate data integration pipelines for Databricks Delta Lakes using the highly automated, GUI-driven Qlik Data Integration platform. With Qlik, all the required code is generated automatically, so you don't have to write it yourself in notebooks.

The Qlik Data Integration platform supports Databricks Delta Lakes on all three major public clouds: Amazon Web Services, Microsoft Azure, and Google Cloud Platform.

In this demonstration, you will see how data is moved from sources to the data lake landing zone using Qlik Replicate, how to build and update the Databricks Delta Lake automatically using Qlik Compose, and how to orchestrate the data pipeline using Qlik Enterprise Manager.

2 – Qlik Replicate (1:05)

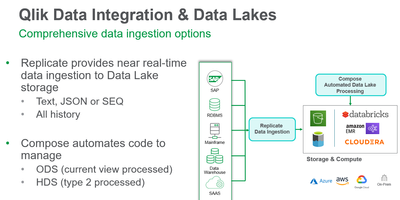

Qlik Replicate moves data from our source systems to the data lake.

We have already defined connections to both our relational source and our Databricks Delta Lake landing zone. Creating a Qlik Replicate task to move the data is simple: you define the task name and options, then select the source and target connections.

In the Store Changes Settings, we define the time interval Qlik Replicate uses to manage partitions in the Databricks landing zone. One of the keys to low latency is Speed partition mode, which registers each partition in Databricks at the start of its time interval. Qlik Replicate then delivers data to the cloud throughout that time window, so Qlik Compose live views can give consumers low-latency access to data as soon as Qlik Replicate has delivered incremental files to the cloud.
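To make the time-interval idea concrete, here is a minimal Python sketch of how change records could be bucketed into fixed partition windows, and when each partition would become visible with and without Speed partition mode. It is a conceptual model only, not Qlik Replicate's implementation; the function names, the `origin` alignment point, and the window arithmetic are assumptions for illustration.

```python
from datetime import datetime, timedelta


def partition_window(ts, interval, origin):
    """Return the [start, end) window of length `interval` containing `ts`.

    Windows are aligned to a hypothetical `origin` timestamp.
    """
    if interval <= timedelta(0):
        raise ValueError("interval must be positive")
    n = (ts - origin) // interval  # number of whole windows since origin
    start = origin + n * interval
    return start, start + interval


def registration_time(ts, interval, origin, speed_mode):
    """When the partition holding `ts` would become visible (conceptual model)."""
    start, end = partition_window(ts, interval, origin)
    # Speed mode registers the partition when its window opens;
    # otherwise it is only exposed once the window has closed.
    return start if speed_mode else end


origin = datetime(2024, 1, 1)
ts = datetime(2024, 1, 1, 0, 37)
print(partition_window(ts, timedelta(minutes=15), origin))  # window from 00:30 to 00:45
```

With a 15-minute interval, a change landing at 00:37 falls in the 00:30 to 00:45 window; in this model it is readable from 00:30 in speed mode but only after 00:45 otherwise.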

Deselecting Speed partition mode results in more transactionally consistent live views, because a live view will not show changes until their partition has been closed. However, this might require you to shorten the partition interval so that changes still reach Compose in a timely manner.
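The trade-off between the two modes can be modeled as a filter over landed change records. In this hypothetical sketch, a live view in speed mode reads every delivered change, while without speed mode it reads only changes whose partition has been closed. The `Change` record and the `closed_partitions` set are illustrative assumptions, not Qlik data structures.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Change:
    key: int                   # primary key of the changed row
    partition_start: datetime  # start of the partition window it landed in


def visible_changes(changes, closed_partitions, speed_mode):
    """Return the changes a live view could read under each mode (conceptual model)."""
    if speed_mode:
        # Partitions are registered when their window opens, so every delivered change is readable.
        return list(changes)
    # Without speed mode, only changes in closed (and therefore registered) partitions are exposed.
    return [c for c in changes if c.partition_start in closed_partitions]


p1 = datetime(2024, 1, 1, 0, 0)   # closed window
p2 = datetime(2024, 1, 1, 0, 15)  # still-open window
changes = [Change(1, p1), Change(2, p2)]
print([c.key for c in visible_changes(changes, {p1}, speed_mode=True)])   # [1, 2]
print([c.key for c in visible_changes(changes, {p1}, speed_mode=False)])  # [1]
```

The change in the still-open window is what speed mode gains in latency and what it gives up in transactional consistency.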

In the Control Tables settings, we ask Qlik Replicate to manage the Change Data Partitions and DDL History tables in the data lake landing zone. The DDL History supports schema evolution in our Delta Lake.
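The DDL History control table is what lets downstream processing follow schema changes. As a rough illustration, the sketch below replays an ordered list of DDL events against a starting schema to get the current column layout. The event fields (`op`, `column`, `type`) and the operation names are hypothetical; the real DDL History table has its own layout.

```python
def replay_ddl(initial_schema, ddl_history):
    """Apply DDL events in order and return the resulting {column: type} schema.

    The event format is hypothetical: {"op": ..., "column": ..., "type": ...}.
    """
    schema = dict(initial_schema)  # don't mutate the caller's schema
    for event in ddl_history:
        op, column = event["op"], event["column"]
        if op == "add_column":
            if column in schema:
                raise ValueError(f"column already exists: {column}")
            schema[column] = event["type"]
        elif op == "alter_column":
            if column not in schema:
                raise KeyError(column)
            schema[column] = event["type"]  # type change keeps the column's position
        elif op == "drop_column":
            del schema[column]  # raises KeyError if the column is unknown
        else:
            raise ValueError(f"unsupported DDL operation: {op}")
    return schema


history = [
    {"op": "add_column", "column": "email", "type": "STRING"},
    {"op": "alter_column", "column": "id", "type": "BIGINT"},
    {"op": "drop_column", "column": "name"},
]
print(replay_ddl({"id": "INT", "name": "STRING"}, history))
# {'id': 'BIGINT', 'email': 'STRING'}
```

Replaying events in recorded order matters: applying the same events in a different order can produce a different schema or fail outright.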

3 – Replicate Landing Notebook (0:13)

Let’s move into a Databricks notebook and view the Customer data in the data lake landing zone.

We can also see the corresponding change processing table, which stores changes waiting to be processed into the data lake storage zone.

4 – Qlik Compose (1:22)

We’ve moved into Qlik Compose, where we will define our automated Databricks Delta Lake. Qlik Compose creates and manages both data lake and data warehouse projects from the same application, making a lakehouse far easier to implement. The sources for our data lake listed here can come from tasks defined on multiple Replicate servers.

Building a data lake in Qlik Compose begins by discovering the objects directly from the Qlik Replicate metadata.

Here we see the entities defined in the Qlik Compose metadata layer, in both logical and physical views.

Then Qlik Compose automatically creates the tables in the data lake storage zone and the ETL tasks to load and process data.

Qlik Compose automatically defines two data storage ETL tasks to load this data set: the first processes bulk loads into the lake, and the second processes change data capture records.
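The two generated tasks correspond to two different operations: a full load that populates the storage table, and CDC processing that applies inserts, updates, and deletes in commit order. The in-memory Python sketch below models that logic, including keeping the prior state of each updated or deleted row in a separate archive list, similar in spirit to the customers archive table shown at the end of the demo. The change-record shape (`seq`, `op` as `I`/`U`/`D`, `row`) is an assumption; Qlik Compose itself generates SparkSQL, not Python.

```python
def full_load(rows, key="id"):
    """Bulk-load rows into a {primary_key: row} table."""
    return {r[key]: dict(r) for r in rows}


def apply_changes(base, archive, changes, key="id"):
    """Apply CDC records to `base` in sequence order, archiving prior states.

    Each change is assumed to look like {"seq": int, "op": "I"|"U"|"D", "row": {...}}.
    Mutates and returns `base` and `archive`.
    """
    for change in sorted(changes, key=lambda c: c["seq"]):
        op, row = change["op"], change["row"]
        if op not in ("I", "U", "D"):
            raise ValueError(f"unknown operation: {op}")
        k = row[key]
        if op in ("U", "D") and k in base:
            archive.append(dict(base[k]))  # keep the state being replaced
        if op == "D":
            base.pop(k, None)
        else:
            base[k] = dict(row)  # insert or update (upsert)
    return base, archive


customers = full_load([{"id": 1, "contact": "Ann"}, {"id": 2, "contact": "Bob"}])
archive = []
apply_changes(customers, archive, [
    {"seq": 2, "op": "D", "row": {"id": 2}},
    {"seq": 1, "op": "U", "row": {"id": 1, "contact": "Cara"}},
    {"seq": 3, "op": "I", "row": {"id": 3, "contact": "Dan"}},
])
print(customers)  # {1: {'id': 1, 'contact': 'Cara'}, 3: {'id': 3, 'contact': 'Dan'}}
print(archive)    # [{'id': 1, 'contact': 'Ann'}, {'id': 2, 'contact': 'Bob'}]
```

Sorting by sequence number before applying is the important design choice: CDC records that arrive out of order would otherwise leave the table in the wrong final state.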

If we look into the full load ETL task, we see a mapping for each of the tables we are managing.

Qlik Compose automatically generates all the code needed to load and maintain our data lake. Every operation runs in dependency order, and steps that can run in parallel are grouped into a process step. Here we see one of the SparkSQL load instructions in detail. The time saved compared with writing your own notebooks is clear.
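Running operations in dependency order while grouping independent ones is essentially a layered topological sort. The sketch below uses Kahn's algorithm, collecting every task whose dependencies are already satisfied into one step so those tasks could run in parallel. The table names and the dependency map are invented for the example; this is not Qlik Compose's scheduler.

```python
def process_steps(deps):
    """Group tasks into sequential steps; tasks within a step have no mutual dependencies.

    `deps` maps each task to the set of tasks it depends on.
    """
    # Include tasks that only appear as dependencies.
    tasks = set(deps) | {d for ds in deps.values() for d in ds}
    remaining = {t: set(deps.get(t, ())) for t in tasks}
    steps = []
    while remaining:
        ready = {t for t, ds in remaining.items() if not ds}
        if not ready:
            raise ValueError("dependency cycle detected")
        steps.append(ready)
        for t in ready:
            del remaining[t]
        for ds in remaining.values():
            ds -= ready  # these dependencies are now satisfied
    return steps


# Hypothetical dependencies between storage-zone load operations.
deps = {
    "customers": set(),
    "products": set(),
    "orders": {"customers"},
    "order_lines": {"orders", "products"},
}
print([sorted(step) for step in process_steps(deps)])
# [['customers', 'products'], ['orders'], ['order_lines']]
```

Each step must finish before the next begins, but the tasks inside a step are independent, which is what allows them to be grouped and run in parallel.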

5 – Qlik Enterprise Manager (1:11, sections 5 through 7)

Qlik Enterprise Manager enables our customers to manage all of their Qlik Replicate and Qlik Compose servers and tasks in a single interface that is secure, audited, and API-accessible.

For example, we see that the Replicate task in our pipeline has processed some changes to the data lake landing already.

6 – Move to Databricks UI

If we look at the Databricks environment for a moment, we can see the results of those changes reflected in the Qlik Compose live view and live history view, even though the changes have not yet been physically processed into the Delta Lake storage zone. The key advantage of Qlik Compose live views is that they reduce latency while also reducing cluster utilization and cost for data processing operations. Qlik Compose live views are part of the Qlik Compose product and should not be confused with Databricks Delta Live Tables. Qlik Compose live views provide low-latency access to data curated by a Qlik Compose data pipeline on any supported data lake platform, whereas Databricks Delta Live Tables is a notebook-based pipeline-building facility within Databricks itself.
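Conceptually, a live view overlays changes that have landed but not yet been processed on top of the storage-zone data, so readers see current values without waiting for a processing run. The hypothetical Python model below computes that overlay at read time without modifying the base table. In Qlik Compose the live views are database views over the target platform; the record shapes used here are assumptions.

```python
def live_view(base, pending, key="id"):
    """Return base rows with pending (unprocessed) changes applied, leaving `base` untouched.

    Each pending change is assumed to look like {"seq": int, "op": "I"|"U"|"D", "row": {...}}.
    """
    view = {k: dict(v) for k, v in base.items()}  # copy: nothing is written back
    for change in sorted(pending, key=lambda c: c["seq"]):
        k = change["row"][key]
        if change["op"] == "D":
            view.pop(k, None)
        else:
            view[k] = dict(change["row"])  # the latest change for a key wins
    return view


base = {1: {"id": 1, "contact": "Ann"}}
pending = [
    {"seq": 1, "op": "U", "row": {"id": 1, "contact": "Cara"}},
    {"seq": 2, "op": "I", "row": {"id": 2, "contact": "Bob"}},
]
print(live_view(base, pending))  # {1: {'id': 1, 'contact': 'Cara'}, 2: {'id': 2, 'contact': 'Bob'}}
print(base)                      # {1: {'id': 1, 'contact': 'Ann'}}
```

Because the overlay is computed on read, no cluster job has to run just to make fresh changes visible, which is the latency and cost benefit described above.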

7 – Return to Enterprise Manager: Run CDC Workflow

Workflows that orchestrate adding and transforming data from multiple sources, tasks, and servers in the data lake can be managed centrally from Qlik Enterprise Manager.

In this example, let’s execute a Compose CDC workflow from Enterprise Manager. In addition to its internal scheduler, Qlik Enterprise Manager provides REST, .NET, and Python APIs, so you can also use a third-party scheduling tool.

8 – Return to Databricks UI (0:45)

Let’s return one final time to our Databricks notebook to see the results of the Change Data Capture processing on the storage zone.

We can see the contact of the first record has been updated in the customers table.

We also see that prior states of changed records have been moved to the customers archive table. These are the only two tables for the customer entity in the storage zone. The other result sets displayed are all views.

First, the up-to-date Qlik Compose live view is now in sync with the processed customer data.

A customer history live view renders a Type 2 history of the changes, with from and to dates derived from the base and archive tables.

The Qlik Compose live history customer view also adds data from any open partitions to the customer history.
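A Type 2 history can be assembled from the pieces described above: archived (closed) versions, current rows as open-ended versions, and, for the live history view, changes from open partitions that close the current version and open a new one. This is a hypothetical in-memory sketch; the `from_date`/`to_date` column names, the `ts` field on pending changes, and the convention that `to_date` is `None` for the current version are assumptions, not Compose's actual column names.

```python
from datetime import date


def type2_history(base, archive, pending=(), key="id"):
    """Build Type 2 history rows sorted by (key, from_date).

    archive rows: closed versions carrying their own from_date and to_date.
    base rows:    current versions carrying from_date (open-ended).
    pending:      optional unprocessed changes {"ts", "op", "row"} (live-history style).
    """
    history = [dict(r) for r in archive]
    current = {k: {**r, "to_date": None} for k, r in base.items()}
    for change in sorted(pending, key=lambda c: c["ts"]):
        k = change["row"][key]
        if k in current:
            closed = current.pop(k)
            closed["to_date"] = change["ts"]  # the change ends the current version
            history.append(closed)
        if change["op"] != "D":
            current[k] = {**change["row"], "from_date": change["ts"], "to_date": None}
    history.extend(current.values())
    history.sort(key=lambda r: (r[key], r["from_date"]))
    return history


base = {1: {"id": 1, "contact": "Bob", "from_date": date(2024, 1, 1)}}
archive = [{"id": 1, "contact": "Ann", "from_date": date(2023, 6, 1), "to_date": date(2024, 1, 1)}]
pending = [{"ts": date(2024, 3, 1), "op": "U", "row": {"id": 1, "contact": "Cara"}}]
for r in type2_history(base, archive, pending):
    print(r["contact"], r["from_date"], r["to_date"])
# Ann 2023-06-01 2024-01-01
# Bob 2024-01-01 2024-03-01
# Cara 2024-03-01 None
```

Called without `pending`, the same function models the plain history view built only from the base and archive tables.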

9 – Exit (0:17)

I hope you enjoyed this brief demonstration of the highly automated Qlik Data Integration platform and saw how simple it is to create a cloud-based data lake using Qlik and Databricks, then keep it hydrated with data from your source systems. Thank you!