Introducing Qlik AutoML

There is no doubt that machine learning (ML) applications have become ubiquitous in today’s world. From solving critical healthcare problems to recommending music and products, we have seen the impact ML can have on our daily lives. However, building ML-based solutions carries a real cost, specifically when:

- dealing with the end-to-end ML pipeline

- having skilled resources (Data Scientists, ML Engineers) to build & deploy models

Typically, an ML pipeline looks like this:

Machine Learning process

Each of these steps is complex and time-consuming. Specific expertise (statistics, software engineering, etc.) is also needed to perform these tasks and ultimately productionize the models so that end users can consume them. These factors have driven interest in automating the pipeline to cut down on manual costs.

Organizations today also need to empower teams who are already data literate and use data for decision making. Consider a BI Engineer who is already part of the analytics process. Wouldn’t it be great if we could enable them to engineer features, train and automatically select a robust model, and deploy it without relying on a team of data scientists and ML engineers? This has given rise to a new role called ‘Citizen Data Scientist’.

These are early steps towards the democratization of machine learning, and they can help organizations get more out of their data and analytics strategy by maturing their analytics teams. This is where Qlik AutoML comes in!

Source: Qlik AutoML

Qlik AutoML is an automated machine learning platform for analytics teams, used to generate models, make predictions, and test business scenarios through a simple, code-free experience. I had the opportunity to get hands-on with it, and the experience has been promising. In this introductory blog, we will quickly walk through some of its features as part of the ML pipeline while solving a binary classification problem.

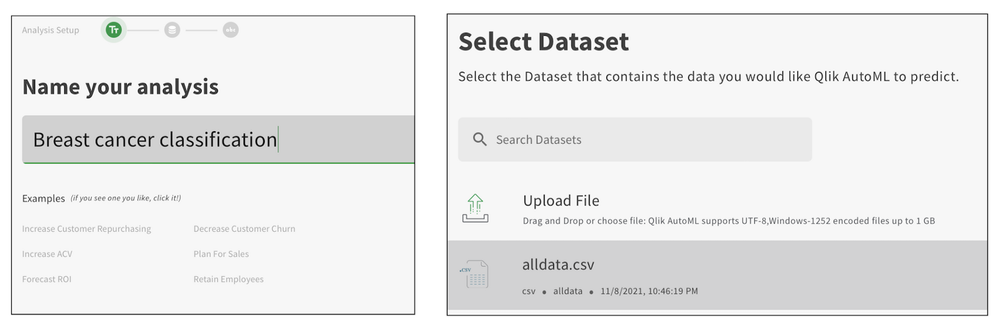

For this use case, we will use the Breast Cancer Wisconsin (Diagnostic) dataset, and our goal is to classify breast tumor samples as ‘benign’ or ‘malignant’. First, we will create our project and load the dataset using the AutoML interface.

Qlik AutoML presents a nice overview of the dataset for exploratory data analysis with information about unique values, null values, min/avg/max, etc.
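For readers who want to see what such an overview contains, here is a rough sketch in Python. It assumes pandas and scikit-learn are available and uses scikit-learn's bundled copy of the dataset, whose column names (for example ‘worst texture’) differ from the ones shown in this blog (‘texture_worst’). It is only an approximation of the profile, not what Qlik AutoML runs internally.

```python
import pandas as pd
from sklearn.datasets import load_breast_cancer

# scikit-learn's bundled copy of the dataset (30 numeric feature columns).
df = load_breast_cancer(as_frame=True).data

# One row per feature: unique values, null values, and min/avg/max.
profile = pd.DataFrame({
    "unique": df.nunique(),
    "nulls": df.isna().sum(),
    "min": df.min(),
    "avg": df.mean(),
    "max": df.max(),
})

print(profile.head())
```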

Since our label is the ‘diagnosis’ field, we will set it as the target.

The interface automatically creates a pipeline that, by default, consists of the preprocessing and validation steps applied by Qlik AutoML, such as null value imputation, encoding of categorical values, feature scaling, and k-fold cross-validation.
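To make these steps concrete, here is a minimal sketch of a comparable pipeline written with scikit-learn. This is an assumption-laden stand-in and not Qlik AutoML's actual implementation: the imputation strategy, the scaler, and the 5 folds are my choices. Categorical encoding is left out because the features in scikit-learn's copy of the dataset are all numeric.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.impute import SimpleImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
# scikit-learn encodes malignant as 0; flip it so malignant is the positive class.
y_malignant = (y == 0).astype(int)

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="mean")),   # null value imputation
    ("scale", StandardScaler()),                  # feature scaling
    ("model", LogisticRegression(max_iter=1000)),
])

# k-fold cross-validation (k=5 here, an arbitrary choice), scored by F1.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y_malignant, cv=cv, scoring="f1")

print("F1 per fold:", scores.round(3))
print("Mean F1:", round(scores.mean(), 3))
```

Wrapping the preprocessing inside the pipeline means the imputer and scaler are refit on each training fold, so nothing leaks from the validation fold into training.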

It also presents a list of algorithms based on the selected target, and you can select or deselect algorithms from this list.

Additionally, you can add hyperparameter optimization to the pipeline, which tells the system to search over multiple parameter settings and models to find the best ones.
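As an illustration of what a hyperparameter search does, the sketch below runs a small grid search with scikit-learn. The search strategy and the grid of `C` values are assumptions chosen for the example; the search Qlik AutoML performs may look quite different.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
y_malignant = (y == 0).astype(int)  # malignant as the positive class

pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("model", LogisticRegression(max_iter=1000)),
])

# Candidate values for the regularization strength (illustrative grid).
param_grid = {"model__C": [0.01, 0.1, 1.0, 10.0]}

# Each candidate is evaluated with 5-fold cross-validation and ranked by F1.
search = GridSearchCV(pipeline, param_grid, scoring="f1", cv=5)
search.fit(X, y_malignant)

print("Best parameters:", search.best_params_)
print("Best mean F1:", round(search.best_score_, 3))
```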

To start training and let Qlik AutoML find the best algorithm (ranked by F1 score), we click Analyze. As the training process runs, the interface looks like this.

After the training is over, the best candidate is automatically selected by the AutoML system. In our case, Logistic Regression is selected as the best model with an F1 score of 0.951. The analysis results are presented for further drill down. There are 4 key components as seen below.

Analysis results after training

Let’s quickly take a look at each of these, as they are crucial in helping citizen data scientists and analysts understand their model and features.

Feature importance

This view presents Permutation importance, i.e. how much the model performance depends on a feature, and SHAP importance, i.e. how each feature contributes to the predicted outcome.

Permutation importance can help us refine our model by dropping some of the less important features. In our case, we see that many features (left image) are not important, so we will drop them later and refine our model to see if that improves performance.

Similarly, SHAP importance can help us understand the most important features. We know now that ‘texture_worst’, ‘radius_worst’, ‘concavity_mean’, etc. are some of the most important features that impact the decisions.
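Permutation importance is simple to reproduce by hand: shuffle one feature at a time and measure how much the score drops. The sketch below does this with scikit-learn's `permutation_importance` on a held-out split. The split size, the number of repeats, and the use of scikit-learn's feature names are my assumptions, and the ranking it prints will not necessarily match the Qlik AutoML screenshots. SHAP values need a separate library and are not shown here.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.inspection import permutation_importance
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

data = load_breast_cancer()
X = data.data
y_malignant = (data.target == 0).astype(int)  # malignant as the positive class

X_train, X_test, y_train, y_test = train_test_split(
    X, y_malignant, test_size=0.25, stratify=y_malignant, random_state=0
)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Shuffle each feature 10 times and record the average drop in F1.
result = permutation_importance(
    model, X_test, y_test, scoring="f1", n_repeats=10, random_state=0
)

ranked = sorted(
    zip(data.feature_names, result.importances_mean),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, score in ranked[:5]:
    print(f"{name:25s} {score:.4f}")
```

Features whose importance is close to zero are candidates for dropping, since shuffling them barely changes the score.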

Correlations

This view shows how the features are correlated with each other in two forms: a correlation matrix and target correlations.
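Both forms can be sketched with pandas. The example assumes Pearson correlation (the pandas default) and scikit-learn's copy of the dataset; I have not confirmed which correlation measure Qlik AutoML uses.

```python
from sklearn.datasets import load_breast_cancer

data = load_breast_cancer(as_frame=True)
df = data.data.copy()
# Add the target as a 0/1 column, with malignant as 1.
df["malignant"] = (data.target == 0).astype(int)

# Correlation matrix: every column against every other column.
corr = df.corr()

# Target correlations: each feature against the target, strongest first.
target_corr = corr["malignant"].drop("malignant")
strongest = target_corr.abs().sort_values(ascending=False)

print(corr.iloc[:3, :3].round(2))
print(strongest.head().round(2))
```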

Fit

Fit shows how well the model's predictions match the historical data. In our case, it looks like the model did pretty well.

Model Stats

The final view gives us a way to evaluate our model. In a classification problem, this is typically done by analyzing a ROC curve and a confusion matrix, and Qlik AutoML presents both plots.

The ROC curve for our Logistic Regression model is shown below. Curves closer to the top-left corner indicate better performance, and by that measure our model does well.

ROC Curve
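To see where the points on a ROC curve come from, here is a small sketch using scikit-learn. The train/test split and the model settings are assumptions, so the numbers will differ from the Qlik AutoML results above.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score, roc_curve
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
y_malignant = (y == 0).astype(int)  # malignant as the positive class

X_train, X_test, y_train, y_test = train_test_split(
    X, y_malignant, test_size=0.25, stratify=y_malignant, random_state=0
)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Predicted probability of the positive (malignant) class.
probabilities = model.predict_proba(X_test)[:, 1]

# Each threshold gives one (false positive rate, true positive rate) point.
fpr, tpr, thresholds = roc_curve(y_test, probabilities)
auc = roc_auc_score(y_test, probabilities)

print("Number of points on the curve:", len(fpr))
print("Area under the curve:", round(auc, 3))
```

An area under the curve close to 1 corresponds to a curve that hugs the top-left corner.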

Next, let’s look at the Confusion matrix.

Confusion Matrix

For our use case, i.e. classifying a cancer diagnosis, it is imperative to know the false negatives (FN), i.e. cases where the prediction incorrectly indicates the absence of a condition that is actually present. We can see that 3 predictions are false negatives.
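The sketch below shows how the four cells of a confusion matrix relate to recall and the F1 score. It uses scikit-learn with an assumed train/test split, so the counts it prints are not the ones from the Qlik AutoML run.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import confusion_matrix, f1_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = load_breast_cancer(return_X_y=True)
y_malignant = (y == 0).astype(int)  # malignant as the positive class

X_train, X_test, y_train, y_test = train_test_split(
    X, y_malignant, test_size=0.25, stratify=y_malignant, random_state=0
)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

# Rows are actual classes, columns are predicted classes (0 = benign, 1 = malignant).
tn, fp, fn, tp = confusion_matrix(y_test, y_pred, labels=[0, 1]).ravel()

# Recall: the share of malignant cases the model caught; false negatives lower it.
recall = tp / (tp + fn)
# F1: harmonic mean of precision and recall, written in terms of the counts.
f1 = 2 * tp / (2 * tp + fp + fn)

print(f"TN={tn} FP={fp} FN={fn} TP={tp}")
print(f"Recall={recall:.3f} F1={f1:.3f}")
```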

If you would like to explore all the models used in the training pipeline, the Model Metrics screen presents all the details. You can also inspect the hyperparameters used by a specific model by clicking on it. Here is an example from our Logistic Regression model.

Now, let’s use this analysis to predict on unseen test data (not used in training the model) and see how the model performs.

The Create Predictions section allows us to load a test dataset and predict.

Here’s our Prediction analysis.

Analysis after prediction on test data

One of the interesting views in this analysis is Scenarios, where you can modify (increase or decrease) your features and see how the change impacts the predictions. Let’s try this in our use case: we will increase the ‘texture_worst’ value and see how the results look.

Qlik AutoML presents a nice visual comparison in the form of grouped bar charts that show how the scenario changed the predictions. It looks like an increase in the ‘texture_worst’ feature leads to more patients being predicted as ‘Malignant’.
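A scenario of this kind boils down to changing one column and predicting again. Here is a rough sketch with scikit-learn, where the 20% increase, the split, and the model are all assumptions made for illustration. Note that scikit-learn's copy of the dataset names the column ‘worst texture’ rather than ‘texture_worst’.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

data = load_breast_cancer(as_frame=True)
X = data.data
y_malignant = (data.target == 0).astype(int)  # malignant as the positive class

X_train, X_test, y_train, y_test = train_test_split(
    X, y_malignant, test_size=0.25, stratify=y_malignant, random_state=0
)
model = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)

# Baseline: number of test rows predicted as malignant.
baseline_malignant = int(model.predict(X_test).sum())

# Scenario: raise 'worst texture' by 20% (an arbitrary amount) and predict again.
scenario = X_test.copy()
scenario["worst texture"] = scenario["worst texture"] * 1.2
scenario_malignant = int(model.predict(scenario).sum())

print("Predicted malignant (baseline):", baseline_malignant)
print("Predicted malignant (scenario):", scenario_malignant)
```

Comparing the two counts is the numeric equivalent of the grouped bar chart.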

Once we are satisfied with both the training and test analysis, the AutoML system lets us easily deploy a production version of the model behind an API (the Prediction API) for inference. You can then integrate it into any workflow or framework that can make HTTPS POST requests.
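To give a feel for what such an integration involves, the sketch below builds an HTTPS POST request using only the Python standard library. Everything specific in it is a placeholder and an assumption on my part: the URL, the bearer-token header, the `rows` payload shape, and the feature values are hypothetical and do not describe the real Prediction API contract. Check the details shown for your own deployment before using anything like this.

```python
import json
import urllib.request

# Hypothetical placeholders, not real Qlik AutoML values.
PREDICTION_URL = "https://example.invalid/automl/predictions"
API_KEY = "YOUR_API_KEY"


def build_prediction_request(rows, url=PREDICTION_URL, api_key=API_KEY):
    """Build (but do not send) a JSON POST request carrying the rows to score."""
    body = json.dumps({"rows": rows}).encode("utf-8")  # assumed payload shape
    headers = {
        "Content-Type": "application/json",
        "Authorization": f"Bearer {api_key}",  # assumed auth scheme
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


# Made-up feature values, purely for illustration.
request = build_prediction_request(
    [{"texture_worst": 25.4, "radius_worst": 16.2}]
)
print(request.get_method(), request.full_url)

# Sending is left commented out because the URL above is a placeholder:
# with urllib.request.urlopen(request, timeout=10) as response:
#     predictions = json.load(response)
```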

This brings us to the end of this introductory blog on Qlik AutoML. My personal experience using the system has been seamless. Here are some key takeaways:

- easy-to-use interface (native Qlik Sense experience)

- quickly train, evaluate & deploy ML models with minimal adjustments

- visualization-assisted analysis

- no-code machine learning

- seamless integration with frameworks using Prediction API

In the next blog, we will deep dive into how to build, deploy and evaluate a Machine Learning model using Qlik AutoML and consume it in Qlik Sense to take advantage of augmented analytics.

~Dipankar, R&D Advocate
