
Recent Documents

-

How to use task chaining with Qlik Automate

This article explains how to perform task chaining for your Qlik Cloud apps using Qlik Automate. It'll start with a simple app reload example and fin...

-

Installing Drivers for your Qlik Data Gateway

Purpose

The purpose of this post is to help you install the database drivers necessary to allow your Qlik Data Gateway to communicate with your company's servers once you have completed the Qlik Data Gateway installation itself.

Background

If you are anything like me, perhaps you panicked a bit at the thought of installing the Qlik Data Gateway in a Linux environment. I have a lot of experience with .EXE installations in Windows environments. You know, "Next – Next – Next – Finish." But an .RPM file? I had never even seen that extension type before. If you were a Linux connoisseur beforehand, you probably guessed that my image for this post is an homage to the Fedora flavor of Linux. Otherwise you just thought it was an advertisement for the new "Raiders of the Lost Data" movie.

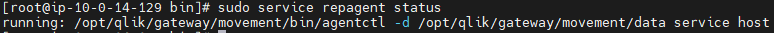

In any event, by now you have created your first Data Gateway, applied the registration key, completed the setup instructions and thankfully the command to check your Data Gateway service shows that it is running.

When you go back to the Data Gateway section of the Management Console and do a refresh, your eyes fill you with happiness because your brand spanking new Data Movement Gateway shows "Connected."

A lesser person would go celebrate right now. But you've decided to try and connect to a source before doing your happy dance. So, you create a new Data Integration project to the destination of your choice. While you will ultimately have many different data sources, let's imagine that you decide to start with a "SQL Server (Log Based)" connection, as your first source test.

You input the server connection details, but for security reasons your SQL Server doesn't use the standard port. Finally, you find information online that you should input your server IP followed by a "comma and the port #". As an example, if your server's IP is 39.30.3.1 and your security port is 12345, you would input "39.30.3.1,12345". Next you input the user and password credentials. Your last step is to choose the database. Easy peasy, lemon squeezy. Right?

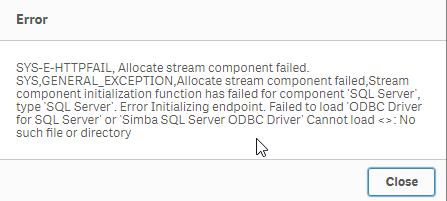

You press the "Load databases" button but suddenly a dialog comes up telling you that the Data Gateway can't connect because it can't find a SQL Server driver.

Driver Installation

Your heart starts beating quickly, but naturally, as a pro, you remain calm on the outside. Eventually you realize that whether on Windows or Linux, applications have always required drivers to communicate with servers. This is nothing new; we just got excited when we saw that connected message and thought we were done. Upon going back to the setup guide, you realize that there is in fact a link labeled "Setting up Data Gateway – Data Movement source connections."

So, you go ahead and click the link and it takes you to:

Wow, so many sources, and so many additional links to click to ensure the required drivers are in place for the sources your company will need. All the documentation is there, but I know firsthand that it can get a bit overwhelming, especially if Linux isn't your native language, which is the reason for this post.

Obviously every one of you reading this works in an environment that may require different data source connections than the others. Thus, there is no way for me to predict and help with your exact configuration. However, odds are strong that most of you likely require at least: SQL Server, Databricks, Snowflake, Postgres or MySQL, various combinations of them, or perhaps all of them.

As tedious or imposing as it may be, I highly recommend you walk through the documentation for each data source you will need. But thanks to my buddy John Neal, I have attached a Linux shell script that can be executed to configure all 5 of those data sources for you. Given the many flavors, versions, and configurations of Linux, I can't ensure that it will work for everyone, but at least it is a start for those who may want to press an easy button, and for those who, like me, may be somewhat or brand new to Linux.

If you choose to take advantage of it, understand that it is only being offered as help, and is not meant to replace the documentation. To utilize it you will need to do the following (please note that in my examples I have changed to the root user; if you are logged in as a normal user account, you may need to prefix the commands with sudo, "super user do"):

- Copy the attached "repldrivers-el7.sh.txt" file to your Linux home directory where you placed the gateway .RPM file.

- Change the file name to simply be "repldrivers-el7.sh" so that it's clear that it is a shell script in case you or others see it in the future.

- Issue the following command from your prompt: "chmod 775 repldrivers-el7.sh" so that the file has the appropriate security allowing it to be executed.

- Issue the following command from your prompt: "./repldrivers-el7.sh"

- Issue the following command from your prompt: "cd /etc"

- Display the driver configuration file "/etc/odbcinst.ini" from your prompt, for example with: "cat odbcinst.ini"

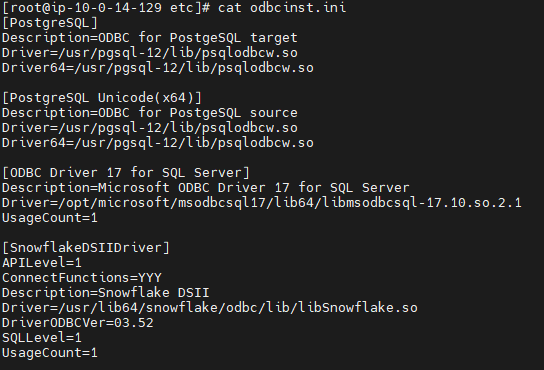

If all went well with the installation, your output should look similar to the following image, which shows part of my file:

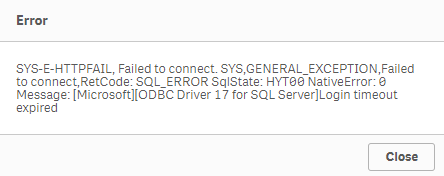
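If you would rather check the file programmatically than by eye, the following is a minimal sketch that lists the driver sections found in odbcinst.ini content. It assumes the file uses the usual INI layout with a "Driver" key per section; the sample text and the paths inside it are illustrative assumptions, not the actual output of the attached script.

```python
import configparser

def list_odbc_drivers(ini_text):
    """Return {driver_name: library_path} parsed from odbcinst.ini content."""
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.optionxform = str  # keep key case, e.g. "Driver"
    parser.read_string(ini_text)
    drivers = {}
    for section in parser.sections():
        # Each section name is a driver name; "Driver" points at the library.
        drivers[section] = parser.get(section, "Driver", fallback=None)
    return drivers

# Hypothetical sample content, for illustration only.
SAMPLE_ODBCINST = """\
[ODBC Driver 18 for SQL Server]
Description=Microsoft ODBC Driver 18 for SQL Server
Driver=/opt/microsoft/msodbcsql18/lib64/libmsodbcsql-18.so

[PostgreSQL]
Description=ODBC for PostgreSQL
Driver=/usr/lib64/psqlodbcw.so
"""

for name, path in list_odbc_drivers(SAMPLE_ODBCINST).items():
    print(f"{name}: {path}")
```

To run it against the real file, read /etc/odbcinst.ini into a string and pass that to list_odbc_drivers instead of the sample.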

It's almost time to do our happy dance, but let's hold off until we test. In my starting example I asked you to assume we wanted to test against a "SQL Server (Log Based)" connection. When we left off, it was because we got an error message saying we had no driver while trying to load the list of databases. I will try that again.

Oh no, the heart rate is going up again.

We have successfully installed the Qlik Data Gateway. We have successfully installed the required drivers. Yet, we are getting this new error message. Let's focus on our breathing and try and digest the situation. What could cause our attempt to connect to our data source to timeout? I got it.

It's likely network security. We know what we want to talk to. We know the location. We know the credentials. But our networks aren't always wide open to do the talking. Resolving your connectivity/firewall issues may or may not be within your abilities, and if you are like me, you may need to seek the help of your IT/Networking team.

When I reached out to my friendly IT guru, here within Qlik, he was able to help me get everything in place so that my Linux server could speak with my database servers, including all of the needed ports.
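Before calling in the network team, it can help to confirm that the timeout really is a connectivity problem. The sketch below is one way to do that from the gateway machine, assuming Python is available there; the function name can_reach is my own, and it only checks that a TCP connection can be opened, not that the database login works.

```python
import socket

def can_reach(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port opens within `timeout` seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names.
        return False
```

Using the example address from earlier in this post, can_reach("39.30.3.1", 12345) returning False would suggest that a firewall or routing rule sits between the gateway and the database server.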

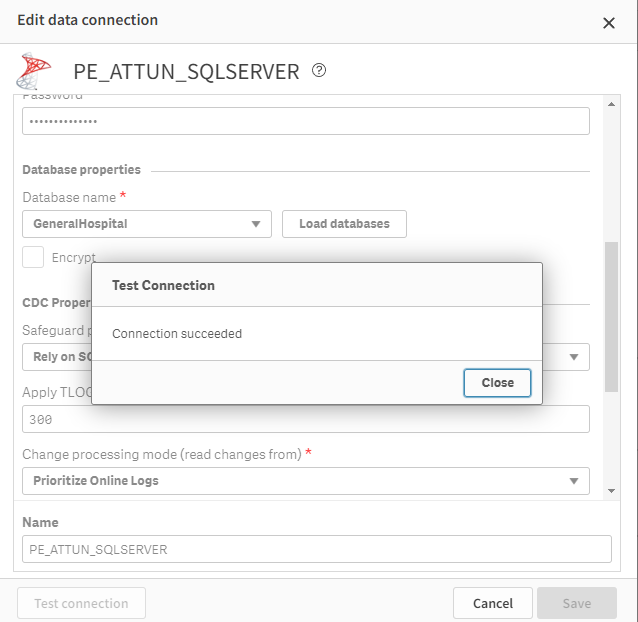

Once they were completed I was able to test and sure enough my data connection succeeded.

Whether or not you do a happy dance, as I did, I hope that this post has helped you get to that sweet smell of success. After all, someone has to be known as the amazing person who got your Qlik Data Gateway going so that others in the Data Engineering team could create all of those lights-out Qlik Cloud Data Integration projects that would be feeding data in near real time to all of those wonderful analytics use cases. Hopefully, with the help of the documentation and this post, that person is you, my friends.

Challenge

One of the things I've long admired about the members of the Qlik Community is their willingness to help each other through this Community site. If you are a Linux guru and are so inclined, I would love to see you share other versions of the shell script that I have started. Maybe your organization is using another flavor/version of Linux and you needed to make a few tweaks to my file. Maybe your organization needed Oracle added and you can tweak my file. Whatever the reason, I sure hope you will give back to the community by sharing all of those tweaks here. Who knows, your help might help them be able to do their happy dance. And we all know the world is a better place when more people do their happy dance.

Related Content

Qlik Data Gateway - Data Movement prerequisites and Limitations - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-prerequisites.htm

Setting up the Data Movement gateway - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-setting-up.htm

PS - I created both of the images here using a generative AI solution called MidJourney. I hope they've added to the fun of this post.

-

How to identify end user Client Browser, OS, Device and IP address in Qlik Sense

Is there any option to find out which browser, OS, device, and IP address our users are using to log in to the Qlik Sense production system? Can this data be found in the Qlik Sense monitoring apps or Qlik Sense logs?

Note that this article covers the historic analysis of data and does not cover identifying access in real time and having Sense react accordingly.

Environments: Qlik Sense, any version

Method 1, using the Qlik Sense Proxy logs

This method requires the Proxy log files' logging level to be increased and Extended Security Environment to be enabled. Extended Security Environment has consequences for the environment, such as preventing sessions from being shared across multiple devices. See the Qlik Online help for details.

Settings to Enable:

- In the Qlik Sense Management Console, select the required Proxy

- Navigate to Logging

- Set the Audit Log level to Debug

- In the Qlik Sense Management Console, select the required Virtual Proxy

- Navigate to Advanced

- Enable "Extended Security Environment"

The proxy will now log additional information in:

C:\ProgramData\Qlik\Sense\Log\Proxy\Trace\[Server_Name]_Audit_Proxy

Example Output:

Audit.Proxy.Proxy.Core.Connection.ConnectionData [X-Qlik-Security, OS=Windows; Device=Default; Browser=Chrome 67.0.3396.99; IP=::ffff:172.16.16.100; ClientOsVersion=10.0; SecureRequest=true; LicenseContext=UserAccess; Context=AppAccess; ] || [X-Qlik-User, UserDirectory=DOMAIN; UserId=administrator]
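For historic analysis you will usually want these values as separate fields. The following is a minimal sketch that pulls the key=value pairs out of the X-Qlik-Security part of a line like the example above. The function name is my own, and the parsing assumes the layout shown in the example output; other log versions may differ.

```python
import re

def parse_security_header(line):
    """Extract the key=value pairs from the [X-Qlik-Security, ...] part of a log line."""
    match = re.search(r"\[X-Qlik-Security,\s*([^\]]*)\]", line)
    if not match:
        return {}
    fields = {}
    for part in match.group(1).split(";"):
        # Split on the first "=" only, so values such as IPv6 addresses stay intact.
        key, sep, value = part.strip().partition("=")
        if sep:
            fields[key] = value
    return fields

# The example output from this article.
SAMPLE_AUDIT_LINE = (
    "Audit.Proxy.Proxy.Core.Connection.ConnectionData [X-Qlik-Security, OS=Windows; "
    "Device=Default; Browser=Chrome 67.0.3396.99; IP=::ffff:172.16.16.100; "
    "ClientOsVersion=10.0; SecureRequest=true; LicenseContext=UserAccess; "
    "Context=AppAccess; ] || [X-Qlik-User, UserDirectory=DOMAIN; UserId=administrator]"
)

info = parse_security_header(SAMPLE_AUDIT_LINE)
print(info["OS"], info["Device"], info["Browser"], info["IP"])
```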

For more information on where to find the logs, see How To Collect Qlik Sense Log Files.

Method 2, using the Qlik Sense HubService logs

A much lighter-weight approach than Method 1 is to parse the HubService logs in C:\ProgramData\Qlik\Sense\Log\HubService. No additional settings are required.

Example Output:

::ffff:192.168.56.1 - - [31/May/2019:12:36:40 +0000] "GET /about HTTP/1.1" 304 - "https://SERVERNAME/hub/?qlikTicket=9mDlmVfE-E1Nc3RT" "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/74.0.3729.169 Safari/537.36"
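The HubService line above resembles the common web server "combined" access log layout, so it can be split with a regular expression. This is a sketch under that assumption, based only on the single example line shown here; the pattern and function names are my own.

```python
import ipaddress
import re

# Assumed layout: ip - - [time] "request" status size "referer" "user agent"
HUB_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+) '
    r'"(?P<referer>[^"]*)" "(?P<agent>[^"]*)"$'
)

def parse_hub_line(line):
    """Return the named parts of a HubService log line, or None if it does not match."""
    match = HUB_LINE.match(line.strip())
    return match.groupdict() if match else None

def client_ip(raw):
    """Convert an IPv4-mapped IPv6 address such as ::ffff:192.168.56.1 to plain IPv4."""
    addr = ipaddress.ip_address(raw)
    mapped = getattr(addr, "ipv4_mapped", None)
    return str(mapped) if mapped else str(addr)

# The example output from this article.
SAMPLE_HUB_LINE = (
    '::ffff:192.168.56.1 - - [31/May/2019:12:36:40 +0000] "GET /about HTTP/1.1" 304 - '
    '"https://SERVERNAME/hub/?qlikTicket=9mDlmVfE-E1Nc3RT" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 '
    '(KHTML, like Gecko) Chrome/74.0.3729.169 Safari/537.36"'
)

record = parse_hub_line(SAMPLE_HUB_LINE)
print(client_ip(record["ip"]), record["agent"])
```

The browser, OS, and device are all encoded in the user agent string, so a dedicated user agent parser would be needed to break that field down further.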

-

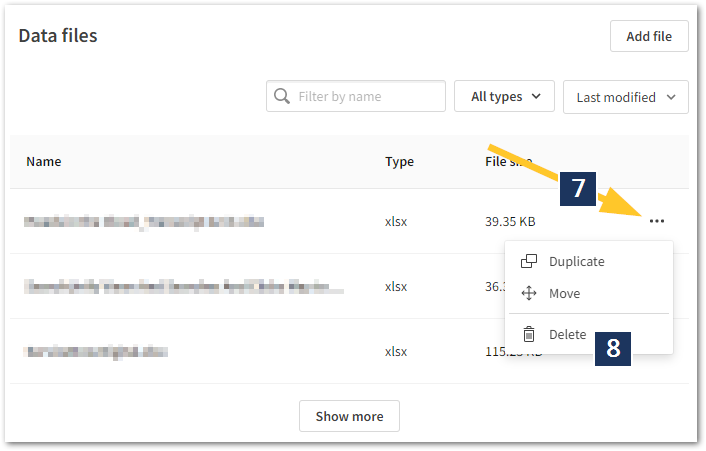

How To Delete Data Files and Datasets from Qlik Cloud Hub and Data Spaces

Method 1: From the Data Sources

- Open the Qlik Analytics Service

- Select Catalog

- Pick your Space

- Click Space Details, then select Data Files from the context menu

- Locate the data you wish to delete

- Click the ellipses to open the next menu

- Click Delete

- Alternatively, add /data at the end of the URL.

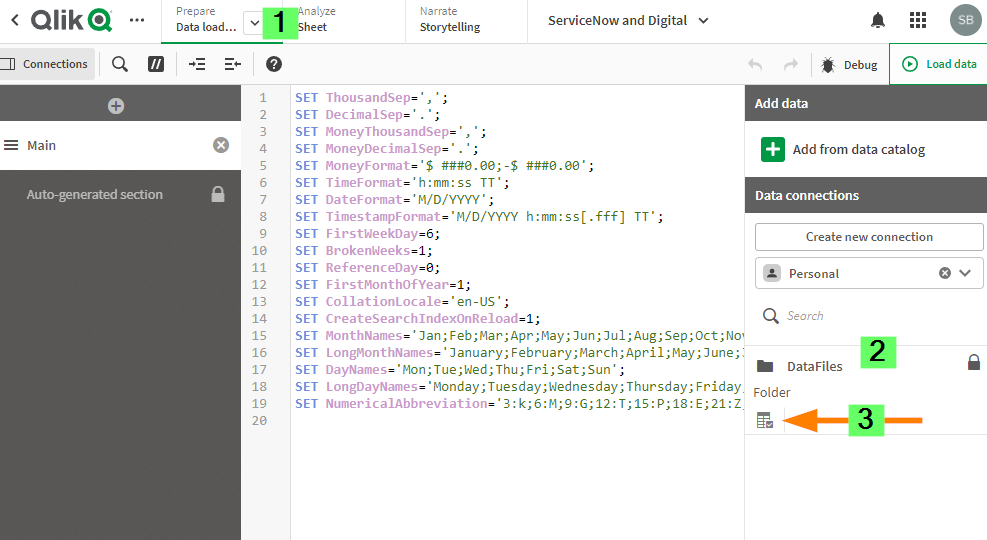

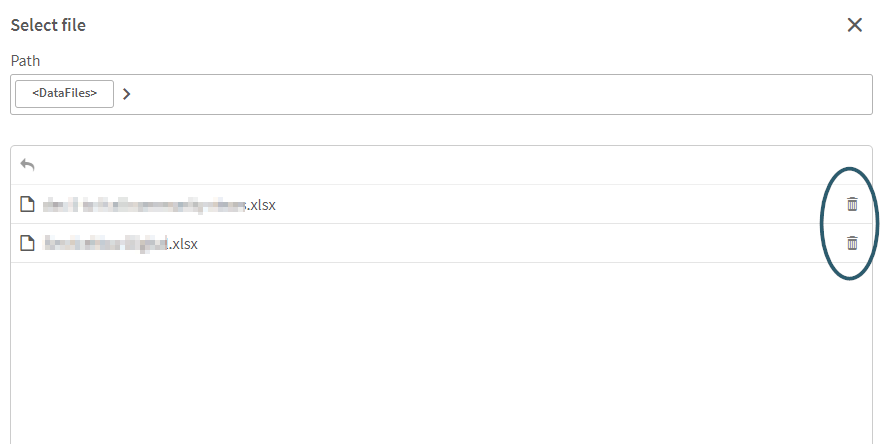

Method 2: From the Data Load Editor

- Open the App with a connection to the data files you wish to edit

- Go to Data Load Editor

- On the right side of the Data Load Editor, under the Add data section, select your data space.

- Click on the table icon under datafiles.

- Select the Delete option on the right side of each file that you want to delete.

Environment

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

How to disable the App Distribution Service (ADS) on a machine in a multinode en...

In a Windows multi-node deployment, the App Distribution Service (ADS) distributes apps from Qlik Sense Enterprise on Windows to Qlik Sense Enterprise SaaS. The service is installed on every node. However, Qlik Sense does not have load balancing for ADS, meaning if not all nodes have access to the apps, distribution may fail. See App Distribution from Qlik Sense Enterprise to Qlik Cloud fails when distributed from RIM NODE.

If you wish to disable app distribution from certain nodes:

- Log on to Qlik Sense node on which you wish to disable App Distribution.

- Stop the Qlik Sense Dispatcher Service.

- Open the "C:\Program Files\Qlik\Sense\ServiceDispatcher\services.conf" file with Notepad or any text editor.

- Find the [appdistributionservice] section and the [hybriddeploymentservice] section.

- Disable both by setting Disabled=true in each section.

[appdistributionservice]
Disabled=true
Identity=Qlik.app-distribution-service
DisplayName=App Distribution Service
ExePath=dotnet\dotnet.exe
UseScript=false

[hybriddeploymentservice]
Disabled=true
Identity=Qlik.hybrid-deployment-service
DisplayName=Hybrid Deployment Service
ExePath=dotnet\dotnet.exe
UseScript=false

- Save the file.

- Restart the Qlik Sense Dispatcher Service.
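If you need to repeat the edit on several nodes, the change to services.conf can be scripted. The following is a minimal sketch, not an official Qlik tool: it rewrites an existing Disabled= line inside the two sections named above and leaves every other line untouched. It assumes each section already contains a Disabled= line, and the [otherservice] section in the sample is hypothetical. You would still stop and restart the Qlik Sense Dispatcher Service around the edit and keep a backup of the file.

```python
TARGET_SECTIONS = ("appdistributionservice", "hybriddeploymentservice")

def disable_services(conf_text, sections=TARGET_SECTIONS):
    """Return conf_text with Disabled=true set inside each named [section]."""
    current = None
    out = []
    for line in conf_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # Track which section the following lines belong to.
            current = stripped[1:-1].lower()
        elif current in sections and stripped.lower().startswith("disabled="):
            line = "Disabled=true"
        out.append(line)
    return "\n".join(out) + "\n"

# Shortened sample; [otherservice] is a hypothetical section that must stay unchanged.
SAMPLE_CONF = """\
[appdistributionservice]
Disabled=false
Identity=Qlik.app-distribution-service

[hybriddeploymentservice]
Disabled=false
Identity=Qlik.hybrid-deployment-service

[otherservice]
Disabled=false
"""

print(disable_services(SAMPLE_CONF))
```

A line-by-line rewrite is used here instead of an INI library so that comments, ordering, and spacing in the rest of the file are preserved.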

Environment

- Qlik Sense multi-cloud environments. Any versions.

-

How to extract changes from the change store (Write table) and store them in a Q...

This article explains how to extract changes from a Change Store and store them in a QVD by using a load script in Qlik Analytics.

The article also includes

- An app example with an incremental load script that will store new changes in a QVD

- Configuration instructions for the examples

Scenario

This example will create an analytics app for Vendor Reviews. The idea is that you, as a company, are working with multiple vendors. Once a quarter, you want to review these vendors.

The example is simplified, but it can be extended with additional data for real-world examples or for other “review” use cases like employee reviews, budget reviews, and so on.

The data model

The app’s data model is a single table “Vendors” that contains a Vendor ID, Vendor Name, and City:

Vendors:
Load * inline [
"Vendor ID","Vendor Name","City"
1,Dunder Mifflin,Ghent
2,Nuka Cola,Leuven
3,Octan, Brussels
4,Kitchen Table International,Antwerp
];

The Write Table

The Write Table contains two data model fields: Vendor ID and Vendor Name. They are both configured as primary keys to demonstrate how this can work for composite keys.

The Write Table is then extended with three editable columns:

- Quarter (Single select)

- Action required? (Single select)

- Comment (Manual user input)

Prerequisites

- A shared space

- A managed space (optional but advised for the tutorial)

- A connection to the Change-stores API, created with the Analytics REST connector in the shared space. A step-by-step guide on creating this connection is available in Extracting write table changes with the REST connector in Qlik Cloud.

Steps

- Upload the attached .QVF file to a shared space

- Open the private sheet Vendor Reviews

- Click the Reload App (A) button and make sure data appears (B) in the top table

- Go to Edit sheet (A) mode

- Drag a Write Table Chart (B) on the top table, and choose the option Convert to: Write Table (C).

This transforms the table into a Write Table with two data model columns Vendor ID and Vendor Name.

- Go to the Data section in the Write Table’s Properties menu and add an editable column

- This prompts you to define a primary key inside the table. Click Define (A) in the table and use both Vendor ID and Vendor Name as primary keys (B).

You can also just use Vendor ID, but we want to show that this also supports composite primary keys.

- Configure the editable column:

- Title: Quarter

- Show content: Single selection

- Add options for Q1Y26 through Q4Y26.

Tip! Also add an empty option by clicking the Add button without specifying a value.

- Add another Editable column with the below configuration

- Title: Action required

- Type: Single select

- Options: Yes and No

- Add another Editable column with the below configuration

- Title: Review

- Type: Single select

- Options: Yes and No

- The Write Table is now set up.

Go to the Write Table’s properties and locate the Change store (A) section. Copy the Change store ID (B).

- Leave the Edit sheet mode. Then add changes for at least two records. Save those changes.

- Go to the app’s load script editor and uncomment the second script section by first selecting all lines in the script section (CTRL+A or CMD+A) (A) and then clicking the comment button (B) in the toolbar.

- Configure the settings in the CONFIGURATION part at the end of the load script

- Update the load script with the IDs of the editable columns.

The easiest solution to get these IDs is to test your connection. Make sure the connection URL is configured to use the /changes/tabular-views endpoint and uses the correct change store ID.

- Copy the example load script (for the editable columns only) and paste it into the SQL Select statement in the app’s load script that starts on line 159:

- Replace the corresponding * symbols in the LOAD statement that starts on line 176:

- Choose which records you want to track in your table by configuring the Exists Key on line 216.

This key will be used to filter the “granularity” on which we want to store changes in the QVD and data model, as the load script will only load unique existing keys (line 235).

- $(vExistsKeyFormula) is a pipe-separated list of the primary keys.

- In this example, Quarter is added as an additional part of the exists key to keep track of changes by Quarter.

- Optionally, this can be extended with createdBy and updatedAt to extend the granularity to every change made:

- Reload the app and verify that the correct change store table is created in your data model. The second table in the sheet should also successfully show vendors and their reviews.
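To make the role of the exists key more concrete, here is a small sketch of the idea in Python rather than in Qlik load script. It is not the script from the attached app: the row layout, the sample values, and the choice to keep the most recently updated row per key are assumptions made for illustration.

```python
KEY_FIELDS = ("Vendor ID", "Vendor Name", "Quarter")

def exists_key(row, fields=KEY_FIELDS):
    """Build a pipe-separated key from the chosen fields of one change record."""
    return "|".join(str(row[field]) for field in fields)

def latest_per_key(changes, fields=KEY_FIELDS):
    """Keep one row per exists key, preferring the newest updatedAt value."""
    seen = set()
    kept = []
    for row in sorted(changes, key=lambda r: r["updatedAt"], reverse=True):
        key = exists_key(row, fields)
        if key not in seen:
            seen.add(key)
            kept.append(row)
    return kept

# Hypothetical change records, for illustration only.
CHANGES = [
    {"Vendor ID": 1, "Vendor Name": "Dunder Mifflin", "Quarter": "Q1Y26",
     "Comment": "first", "updatedAt": "2026-01-10T09:00:00Z"},
    {"Vendor ID": 1, "Vendor Name": "Dunder Mifflin", "Quarter": "Q1Y26",
     "Comment": "revised", "updatedAt": "2026-01-12T09:00:00Z"},
    {"Vendor ID": 2, "Vendor Name": "Nuka Cola", "Quarter": "Q1Y26",
     "Comment": "ok", "updatedAt": "2026-01-11T09:00:00Z"},
]

for row in latest_per_key(CHANGES):
    print(exists_key(row), row["Comment"])
```

With the default key, the two Dunder Mifflin rows collapse into one. Passing KEY_FIELDS + ("updatedAt",) as the fields keeps every change, which mirrors the finer granularity described in the last bullet above.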

Environment

- Qlik Cloud Analytics

-

Qlik Answers Agentic Analytics FAQ

This article provides answers to the most frequent questions asked about Qlik Answers.

For the Qlik MCP FAQ, see Qlik Model Context Protocol (MCP) FAQ.

What is Qlik Answers Agentic Analytics?

In February 2026, we launched our new agentic experience, which will enhance decision-making and improve productivity through a combination of assistants and agents running on a cutting-edge architecture. This initial release includes out-of-the-box agents for structured data analytics, unstructured knowledge, discovery of anomalies, and help and assistance. These agents take advantage of our foundational capabilities, including our data products and unique analytics engine, to execute complex, multi-step tasks in a trusted, scalable, and secure manner.

How will Qlik Answers evolve?

Qlik Answers is the primary AI assistant for people to interface with agentic AI. It will understand the intent of natural language questions and engage the underlying agentic framework to execute tasks, build responses, and take actions.

Qlik Answers now combines structured data analytics with unstructured content and general knowledge and reasoning from LLMs to deliver the most complete and relevant answers and insights, helping our customers improve decisions, productivity, and business outcomes in ways not possible before.

Looking ahead, as we build additional agents, such as prediction agents and pipeline agents, they will all be invoked through Qlik Answers. A broader set of agents is planned, all aimed at helping users get more value from their data and become more productive as Qlik continues to evolve.

What is the value to me as a customer?

With Qlik Answers now able to handle both structured and unstructured data, you can drive hundreds more informed decisions and actions each day. You can drive productivity through automation of a broad range of data and analytics tasks and workflows. And with plug-and-play simplicity, you can quickly deploy assistants in a matter of hours, reducing risk, speeding time-to-value, and future-proofing your investments in AI.

How much does Qlik Answers cost?

For now, Qlik Answers will continue to be priced based on current models for the number of questions asked. You get capacity at corresponding levels in Standard, Premium, and Enterprise editions, as well as Qlik Sense Enterprise SaaS, with additional capacity available for purchase as needed.

There is currently no additional cost for structured data questions or task automation requests; a question is a question.

For additional details, refer to Pricing.

What if I have a valid subscription, but cannot use Qlik Answers yet?

Since launch, Qlik Answers has been rolled out across regions, and the process is still ongoing. If you have a Standard, Premium, or Enterprise edition, check if your region already supports it (see Supported regions).

If it is not yet available to you, then:

- MCP is already available to you regardless, see Deploying and administering Qlik MCP server

- Be on the lookout for an in-product message alerting you once your tenant has access

Do I have to be a Cloud customer to use it?

Yes, you must be a Qlik Cloud customer to use Qlik Answers. Qlik Answers is built on cloud-native technologies, specifically large language models (LLMs) that require significant compute resources and specialized infrastructure, and there is no mechanism to deploy these technologies in an on-premises environment.

However, you don’t have to fully migrate your analytics environment or documents to the cloud to take advantage of Qlik Answers. Analytics apps can be pushed to the cloud as needed to support Qlik Answers. See Qlik Answers and applications distributed from Qlik Sense Enterprise on Windows for details.

Will I get both Qlik Answers and Insight Advisor?

No. You will use either Qlik Answers or Insight Advisor, not both at the same time.

Qlik Answers represents the AI-first experience going forward. When a tenant chooses Qlik Answers, that becomes the primary way users interact with analytics. Insight Advisor is not available in parallel within the same tenant.

This is a deliberate choice to avoid duplicated experiences, inconsistent results, and user confusion.

Will Qlik Answers become available on premises?

No. Qlik Answers is cloud only.

There are no plans to bring Qlik Answers to on-premises environments. The product relies on cloud native AI services, managed infrastructure, and continuous model evolution.

What happens to Insight Advisor? Is it going away?

Insight Advisor is not being discontinued.

If you remain on Insight Advisor, you can continue using it. However, within a tenant, you must choose between Insight Advisor and Qlik Answers. You cannot run both experiences side by side.

What happens to the business logic we already built in Insight Advisor?

The most important and relevant business logic is preserved when moving to Qlik Answers.

That said, Qlik Answers is built for a newer generation of AI-driven analytics. In many cases, customers will find they no longer need to manually build or maintain the same level of logic, because the system handles more of that automatically.

The value is not in recreating everything exactly as it was, but in moving to a simpler, more capable experience.

What is the difference between Qlik Answers and Qlik MCP?

This is essentially a buy vs build decision:

- Qlik Answers is a fully managed, out-of-the-box solution. It features AI agents specifically designed to take advantage of our unique capabilities, including our analytics engine. Everything is preconfigured, secured, monitored, and continuously improved. Customers can start using it immediately without worrying about infrastructure, model selection, or orchestration. It’s an easy, plug-and-play solution.

- Qlik MCP is for customers who want to build and customize themselves. It is aimed at broader AI initiatives and assistants, allowing organizations to build on top of Qlik’s trusted data and analytics foundation. It’s designed for technically advanced teams that want to integrate their own models, customize deeply, and manage their own AI stack. Essentially, it’s “APIs for AI.”

- If you want speed, simplicity, and support, Qlik Answers is the right choice. If you want full control and customization, and to build and operate it yourself, MCP is the right path.

What AI models does Qlik Answers use?

Qlik Answers is built on AWS Bedrock and currently utilizes Anthropic Claude models. The specific model versions vary by agent function and are continuously evaluated and updated based on performance, accuracy, latency, and cost optimization.

Our Model Selection Philosophy:

Qlik maintains flexibility in model selection to continuously improve the user experience as AI technology evolves. Different agents within the Qlik Answers architecture may use different models optimized for their specific tasks (e.g., semantic understanding, code generation, reasoning).

Can I bring my own AI model to Qlik Answers?

No. Not at this stage.

Qlik Answers is a managed experience with curated models and configurations. Customers who want to use their own models or bring custom AI stacks should use MCP instead.

Does Qlik Answers work with my existing Qlik apps?

Yes. Qlik Answers works on top of existing Qlik Sense applications and uses the same data, logic, and security model.

But to get the best experience, apps should be prepared beforehand:

- Best practices for preparing applications for Qlik Answers

- Writing master item descriptions for Qlik Answers

Does it respect master items and business logic?

Yes. Master measures and dimensions are always prioritized. If business logic exists, Qlik Answers uses it rather than creating new calculations.

Does it generate charts automatically?

Yes. Qlik Answers generates appropriate visualizations such as KPIs, bar charts, or time-based charts depending on the question.

How does security work in Qlik Answers?

Qlik Answers inherits and enforces Qlik's established security model without exception. All existing security rules, section access configurations, and row-level security policies apply automatically.

Key security principles:

- Users only see data they are authorized to access

- Section access restrictions are enforced

- Application-level permissions control which apps users can query

- Field-level security (if implemented) is respected in all analyses

No additional security configuration is required. Organizations with complex security requirements can continue using their existing Qlik security implementations with confidence.

Can different users get different answers to the same question?

Yes, if their access rights differ. Answers are always scoped to the user’s permissions.

Do I need to prepare my data differently?

While no special data preparation is required beyond standard Qlik Sense data modelling best practices, the apps themselves should be prepared beforehand to give you the best experience possible:

- Best practices for preparing applications for Qlik Answers

- Writing master item descriptions for Qlik Answers

Does Qlik Answers support follow-up questions?

Yes. Qlik Answers understands conversational context, allowing users to refine or continue their analysis.

Does Qlik Answers support multiple languages?

At its initial GA release, Qlik Answers is optimized and fully supported for English language queries and responses.

While the underlying large language models have multilingual capabilities and may be able to process queries in other languages with varying degrees of accuracy, non-English language support is not officially validated, documented, or supported by Qlik at this time.

Additional language support is planned for future releases based on demand and regional priorities.

Does it replace analysts or data teams?

No. It accelerates analysis and reduces repetitive work but does not replace human expertise or decision-making.

Can I limit which apps are available to Qlik Answers?

Yes. Only enabled and indexed applications are available.

Does it work across multiple apps?

Not in the current GA release. Qlik Answers operates within the context of a single Qlik Sense application per query. Multi-application query capabilities are planned for a future release.

What scopes do I need to be able to ask questions?

If you want to ask questions in an app, you just need the ‘Data analysis’ scope. If you plan on asking questions to an assistant, you need the ‘Data analysis’ and ‘Search knowledge base’ scopes.

What is the risk of Cross-Region Inference?

Cross-region inference has minimal risks as the data still stays within the AWS Virtual Private Network. The only difference here is that the LLM call gets processed in a different region due to GPU availability.

What about the speed of responses?

We have made a deliberate design decision to prioritize the quality of answers and insights over the speed of responses. In general, Qlik provides a far richer reasoning process and answer than competing products, and this results in a longer response time. We are planning to improve and optimize this, as well as introduce a faster mode for simpler questions in the future.

How do I troubleshoot my app if I am not getting the answers I expect?

Qlik Answers always references its sources in detail. To begin troubleshooting, check the citations, which will show:

- The app used

- The fields and expressions used to build the visualization

- The knowledge base used

- The text chunks being referenced

In a case where you do not get the response you expect based on the sources, or you receive an error:

- Ask again in case of an error (see the appendix for possible error codes)

- Rewrite the prompt (see Best practices for chatting with Qlik Answers)

Has your app been prepared for Qlik Answers?

- Best practices for preparing applications for Qlik Answers

- Writing master item descriptions for Qlik Answers

What capacity limitations do we need to consider?

Your Qlik Cloud subscription determines the quota of questions asked by users. If you are licensed for Qlik Answers, both MCP and Qlik Answers will use your monthly question capacity. See Administering Qlik MCP server.

What happens when we reach a capacity limit?

Question capacity quotas are per month and reset every month. When you hit your limit, users can no longer ask questions until the next month, unless your subscription allows overage. For more information, see Qlik MCP server product description.

For more information on overage, see Overage.

What functional limitations do we need to consider?

- See Qlik Answers Limitations.

- Qlik Answers will work with one app or assistant at a time. It does not search through all apps.

How do we turn off features?

Features can be turned off for individual users through user scopes.

- Go to your Administration Center

- Go to Manage Users

- Switch to the Permissions tab

- Click User Defaults and change the scope as required

See Control access to AI features.

If you have previously enabled the feature, the entirety of Qlik’s Agentic Analytics can easily be turned off again by configuring AI features in Qlik:

- Go to your Administration Center

- Go to Settings

- Go to the Tenant tab

- Toggle Enable cross-region inference in AI features in Qlik

See Enable cross-region inference.

Appendix

Error codes

Use these error codes only as a reference for identifying expected errors. Retry if you receive any of these errors.

Retry and Processing Errors

- DAA-001: Retry limit exceeded.

- DAA-002: Maximum duplicate processing attempts exceeded.

App and Document Errors

- DAA-007: App ID not found in swarm.

- DAA-008: Failed to retrieve the document.

- DAA-009: Invalid App ID.

- DAA-010: App not indexed for answers.

Chart and Sheet Errors

- DAA-016: Failed to create chart.

- DAA-017: Unexpected error while adding chart.

- DAA-018: Sheet creation failed.

Expression and Hypercube Errors

- DAA-024: Expression builder (v2) error.

- DAA-025: Hypercube length exceeded.

Semantic Search Errors

- DAA-030: No related data found in semantic search.

- DAA-031: Error while fetching semantic search results.

- DAA-032: Not enough relevant assets found in search.

- DAA-033: Validation error during semantic search.

Access Verification Errors

- DAA-050: Missing access control context.

- DAA-051: HTTP error occurred during access control request.

- DAA-052: Access control request timed out.

- DAA-053: Connection error during access control request.

- DAA-054: Access control privilege denied.

- DAA-055: Unexpected error in access control process.
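When triaging a reported error, it can help to look up a code and its category quickly. The following is a minimal sketch that copies the codes and messages from the appendix above into a lookup table. The function name `lookup` and the table layout are illustrative assumptions, not part of any Qlik API.

```python
# Codes and messages copied from the appendix; category names follow its headings.
ERRORS_BY_CATEGORY = {
    "Retry and Processing": {
        "DAA-001": "Retry limit exceeded.",
        "DAA-002": "Maximum duplicate processing attempts exceeded.",
    },
    "App and Document": {
        "DAA-007": "App ID not found in swarm.",
        "DAA-008": "Failed to retrieve the document.",
        "DAA-009": "Invalid App ID.",
        "DAA-010": "App not indexed for answers.",
    },
    "Chart and Sheet": {
        "DAA-016": "Failed to create chart.",
        "DAA-017": "Unexpected error while adding chart.",
        "DAA-018": "Sheet creation failed.",
    },
    "Expression and Hypercube": {
        "DAA-024": "Expression builder (v2) error.",
        "DAA-025": "Hypercube length exceeded.",
    },
    "Semantic Search": {
        "DAA-030": "No related data found in semantic search.",
        "DAA-031": "Error while fetching semantic search results.",
        "DAA-032": "Not enough relevant assets found in search.",
        "DAA-033": "Validation error during semantic search.",
    },
    "Access Verification": {
        "DAA-050": "Missing access control context.",
        "DAA-051": "HTTP error occurred during access control request.",
        "DAA-052": "Access control request timed out.",
        "DAA-053": "Connection error during access control request.",
        "DAA-054": "Access control privilege denied.",
        "DAA-055": "Unexpected error in access control process.",
    },
}


def lookup(code):
    """Return (category, message) for a listed code, or None if it is not listed."""
    normalized = code.strip().upper()
    for category, codes in ERRORS_BY_CATEGORY.items():
        if normalized in codes:
            return category, codes[normalized]
    return None
```

A code that returns `None` is not in the appendix, so it should not be treated as an expected error.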

-

How to get started with Snowflake in Qlik Application Automation

This article overviews the available blocks in the Snowflake connector in Qlik Application Automation. It will also cover some basic examples of retrieving data from a Snowflake database and creating a record in a database.

Connector overview

The Snowflake connector has the following blocks:

- List Tables: returns a list of the tables from the connected database

- List Records: returns a list of records from the specified table

- Insert Record: create one record in the specified table

- Upsert Record: create or update one record in the specified table

- Insert Bulk: create multiple records in the specified table

- Upsert Bulk: create or update multiple records in the specified table

- Do Query: do a generic SQL query against the connected Snowflake database

- Update Record by One Field: update a single record in the specified table

- Delete Record: delete one record in the specified table

- List Schemas: returns a list of schemas from the connected database

Authentication

To create a new connection to Snowflake, the following parameters are required:

- account_name: your Snowflake account/tenant name. Type 'ABC' if your Snowflake URL is abc.snowflakecomputing.com.

Warning

Account names that include underscores can cause issues for certain features. For this reason, Snowflake also supports a version of the account name that substitutes the hyphen character (-) in place of the underscore character. For example, both of the following URLs are supported:

URL with underscores:

https://acme-marketing_test_account.snowflakecomputing.com

URL with dashes:

https://acme-marketing-test-account.snowflakecomputing.com

More details about the account name can be found in the Snowflake documentation.

- username: username for the user with remote access to the Snowflake database.

- password: password used to authenticate the above username. If the key pair authentication method is being used, use this field to provide the decryption passphrase.

- dbname: the name of the database you want to use for this connection. You'll need to create multiple connections to connect to multiple databases in the same automation.

- warehouse: the name of the warehouse you want to use for this connection.

- keyfile: an optional parameter that is used for the key pair authentication method. Only the encrypted version of the private key is supported as this method offers enhanced authentication security. You must generate a Privacy Enhanced Mail (.pem) file and copy the contents into this field. Use the password field to provide the private key passphrase for decryption. For more information about the key pair authentication method, see Creating a Snowflake Key Pair based connector for Application Automation and Key-pair authentication and key-pair rotation (docs.snowflake).
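The account-name warning above describes a simple substitution: underscores are replaced with hyphens. The sketch below shows that substitution in Python. The function names are hypothetical, and whether the connector itself needs the hyphenated form is not stated in this article, so treat this as a helper for preparing the value by hand.

```python
def hyphenated_account(account_name):
    """Return the account name with underscores replaced by hyphens."""
    return account_name.replace("_", "-")


def account_url(account_name):
    """Build the Snowflake URL for the hyphenated form of the account name."""
    return f"https://{hyphenated_account(account_name)}.snowflakecomputing.com"


print(account_url("acme-marketing_test_account"))
```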

The password field is a required field when configuring a Snowflake connection in Qlik Automate. While Snowflake does permit the use of unencrypted private keys, which do not require a passphrase, Qlik's connector mandates the password field for both username and password authentication and key pair authentication with a password-protected keyfile.

If you have a keyfile without a passphrase, tools such as OpenSSL can be used to add one.

Example OpenSSL command to add a passphrase:

openssl pkcs8 -topk8 -in existing_key.p8 -out encrypted_key.p8

-in existing_key.p8: your current private key file

-out encrypted_key.p8: the output file for the encrypted key

You will be prompted to enter and confirm a new passphrase after running the command.

Examples

Insert a new record into a table

- Add the Insert Record block from the Snowflake connector to your automation.

- Configure the block to point to a table in the database you're currently connected to. You can use the do lookup function for this.

- Run the automation. This will insert a new record in your Snowflake table.

Use the Do Query block to create a new table

The Do Query block can be used to perform actions in Snowflake that aren't supported by the other blocks.

- Create a new automation

- Search for the Snowflake connector in the Block library menu on the left side of the canvas, and find the Do Query block. Drag this block inside the automation. Highlight the Do Query block by clicking it and configure it in the Block configuration menu on the right side of the canvas.

- Add your query to create a new table in the Query input field. Here is a query example that creates a table:

CREATE TABLE "MY_DATABASE"."PUBLIC"."TEST" (ID INT, "Description" varchar (100), "Serial" NUMBER, COST FLOAT, "Create_date" DATE, "Details" VARIANT);

- Run the automation. This will create a new table with the specified structure within the selected database.
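The example query mixes quoted and unquoted identifiers. In Snowflake, unquoted identifiers resolve to uppercase, while double-quoted identifiers are case-sensitive, so "Description" must be quoted the same way in later queries. The sketch below builds a statement with every identifier quoted. The helper names are hypothetical, and it only produces the text you would paste into the Query input field.

```python
def quote_ident(name):
    """Wrap an identifier in double quotes, doubling any embedded double quote."""
    return '"' + name.replace('"', '""') + '"'


def create_table_sql(database, schema, table, columns):
    """Build a CREATE TABLE statement from (column name, type) pairs."""
    qualified = ".".join(quote_ident(part) for part in (database, schema, table))
    column_sql = ", ".join(f"{quote_ident(name)} {sql_type}" for name, sql_type in columns)
    return f"CREATE TABLE {qualified} ({column_sql});"


print(create_table_sql(
    "MY_DATABASE", "PUBLIC", "TEST",
    [("ID", "INT"), ("Description", "VARCHAR(100)"), ("Details", "VARIANT")],
))
```

Quoting an all-uppercase name such as "ID" refers to the same column as the unquoted ID in the original query.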

Upsert multiple records into a table

- Add the Upsert Bulk block from the Snowflake connector to your automation.

- Configure the block to point to a table in the database you're currently connected to. You can use the do lookup function for this.

- The value of the Where key input parameter should be a unique identifier that is used to check the existing records and update them if needed.

- The value of the Data input parameter should be a JSON list that passes multiple records as JSON objects. Each JSON object must include the unique identifier as a key-value pair.
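As an illustration of the Data input described above, the following sketch builds a JSON list of records and checks that each record carries the unique identifier used as the Where key. The function name, the column names, and the sample values are assumptions for illustration only.

```python
import json


def build_upsert_payload(records, where_key):
    """Serialize records to a JSON list after checking each one has the Where key."""
    missing = [index for index, record in enumerate(records) if where_key not in record]
    if missing:
        raise ValueError(f"records at positions {missing} lack the key {where_key!r}")
    return json.dumps(records)


# Hypothetical records for a table whose unique identifier column is ID.
payload = build_upsert_payload(
    [
        {"ID": 1, "Description": "First item", "COST": 9.5},
        {"ID": 2, "Description": "Second item", "COST": 12.0},
    ],
    where_key="ID",
)
print(payload)
```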

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

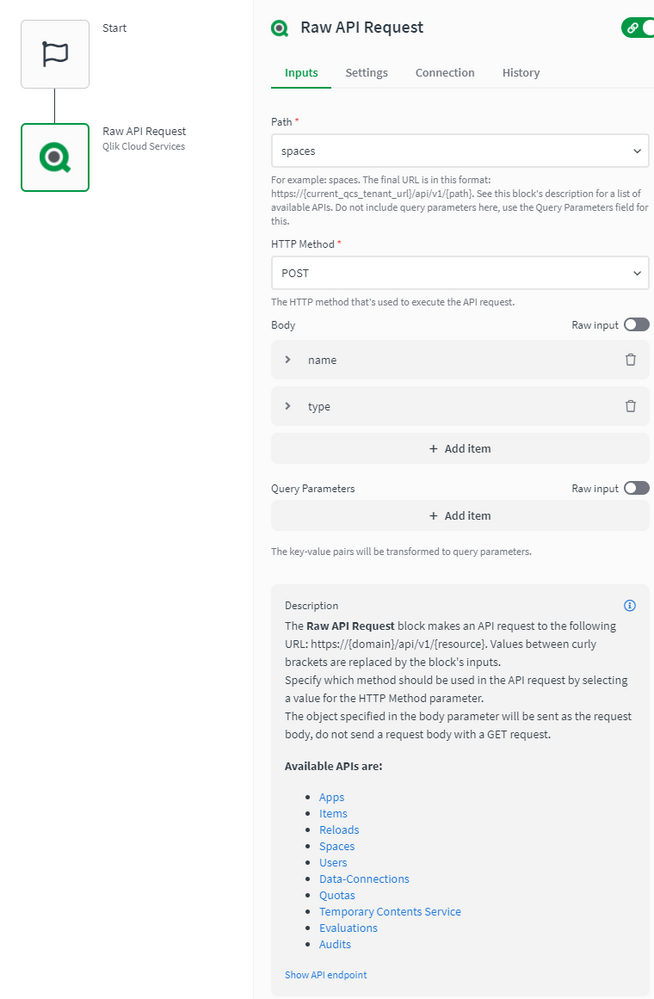

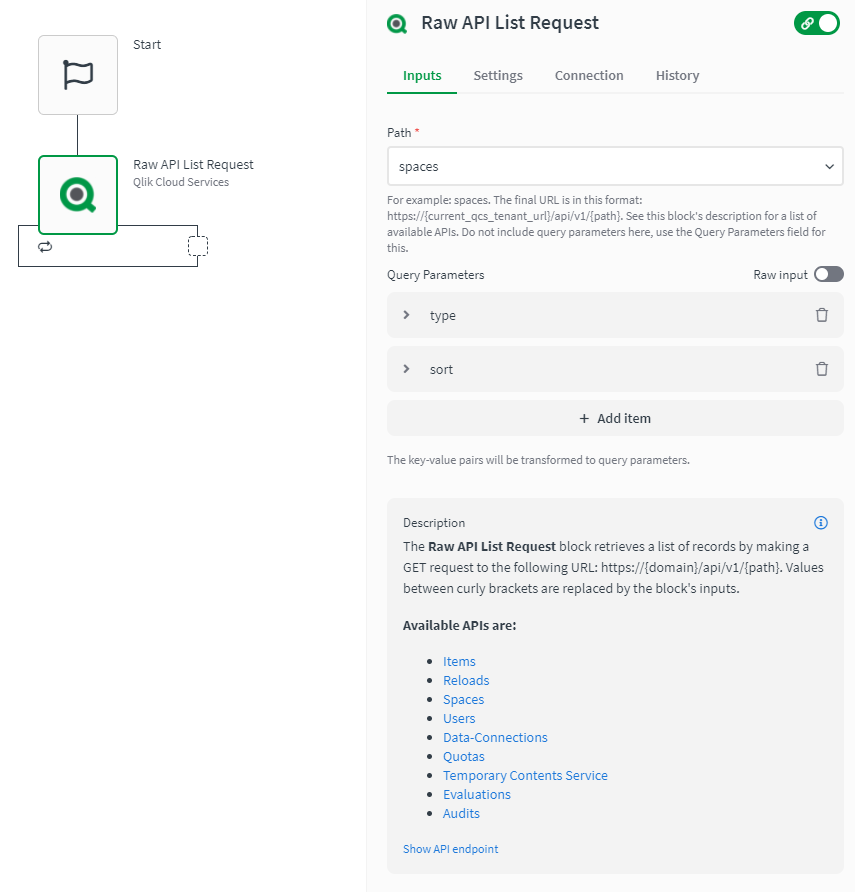

How to use the Raw API Request blocks in Qlik Automate

This article explains how the Raw API Request blocks in Qlik Automate can be used. Most connectors in Qlik Automate have a Raw API Request and a Raw ...

-

NPrinting Installation Fails with Error

NPrinting Initial Installation Fails with Error 0x080070643 (or similar)

Environment

- Qlik NPrinting all supported versions

Resolution

- Ensure you have carefully reviewed and met the installation requirements. See Planning your deployment.

- Ensure you have also carefully reviewed the companion NPrinting Product Release Notes (Release Notes) before installing.

- The downloaded NPrinting install files must be in an 'unblocked' state.

- Ensure you have sufficient space on the C:\ drive (NPrinting can only be installed on the C:\ drive).

- Antivirus software should be disabled.

- The Windows Server hosting the NPrinting Server has internet access (if it is offline, ensure all Windows updates are applied before attempting to install the NPrinting Server software).

- The Windows Server hosting NPrinting has had all Windows updates applied.

- The NPrinting Server computer is dedicated to NPrinting (no other Qlik products or earlier versions of NPrinting, for example NP 16, are installed or have previously been installed).

- The Windows Server computer is a member of the same domain as the NPrinting Server service account

- The NPrinting domain service user account used to install the NPrinting Server software:

- is a member of the local administrators group (see Windows Qlik NPrinting Engine service administrator)

- has the log on as a service user right assigned (see Windows Qlik NPrinting Server services administrator)

- is NOT restricted in any way by Active Directory group policy

- is a member of the same domain as the NPrinting server computer

If any other version of NPrinting (for example, NP 16 or an earlier client/server version) has previously been installed on this server, or other unrelated software is installed, we recommend reinstalling the Windows Server OS to ensure a clean start before installing NPrinting Server. The same applies if the target Windows Server has been repurposed for NPrinting from some other function, as it may already be damaged (for example, damaged registry files).

It is best to start from a clean slate when installing Qlik NPrinting Server for the first time, when you suspect that the underlying Windows Server has become corrupted or damaged, or when Error 0x080070643 appears during an upgrade.

Related Information

-

How to extract changes from the Change Store (Write Table) and store them in a d...

This article explains how to extract changes from a Change Store by using the Qlik Cloud Services connector in Qlik Automate and how to sync them to a database.

The example will use a MySQL database, but can easily be modified to use other database connectors supported in Qlik Automate, such as MSSQL, Postgres, AWS DynamoDB, AWS Redshift, Google BigQuery, Snowflake.

The article also includes:

- Two automation examples you can download and import (see Qlik Automate: How to import and export automations):

- Automation Example to Extract Change Store History to MySQL Incremental.json

- Automation Example to Bulk Extract Change Store History to MySQL Incremental.json

- Configuration instructions for the examples

Content

- Prerequisites

- Creating the automation

- Insert changes in MySQL one by one

- Making this incremental

- Bulk updates

- Attachment configuration instructions

- Bonus!

- Replace field names

- User email instead of user id

- Triggering the automation from a sheet

Prerequisites

- A working Write Table with a set of editable columns and some example values already stored in it. More information about the Write Table chart can be found in Write Table | help.qlik.com.

- A MySQL (or similar) database table with columns that match your editable columns.

- When setting up the database, extend your table with these metadata fields:

- userId: to store the user ID of the user who made a change

- updatedAt: to store the datetime when a change was saved

Here is an example of an empty database table for a change store with:

- a single PK “productId”

- editable columns “AmountToOrder”, “Priority”, and “Note”

- additional columns “userId” and “updatedAt”, which will be used to log user activity and merge separate changes into the same record

Creating the automation

- Create a new automation. See Qlik Automate for details.

- Add the List Change Store History block from the Qlik Cloud Services connector.

- Configure this block with the change store ID. You can copy this from the write table chart.

- Perform a manual run of the automation to make sure some records are returned. The List Change Store History block will return a list of objects for each cell that contains one or more changes. Every object includes the combination of primary key(s), the editable column for that cell, and a list of all values belonging to that cell.

- You have two options on how to perform this sync:

- Insert changes one by one

- Insert changes in bulk (this option is more complex but also more performant)

Insert changes in MySQL one by one

- Add a Loop block (A) inside the List Change Store History block and configure it to loop over the list of changes inside each object returned by the List Change Store History block (B):

- Search for the MySQL connector (A) in the automation block library and drag the Upsert Record block inside the Loop block (B):

- Create a new connection (C) to your MySQL database in the Connection tab of the Upsert Record block and activate it for the block by clicking it once created.

- Configure the Upsert Record block as follows

- Table: the database table you have created for the Write Table

- Where: the key:value mappings for the granularity on which you want to save the changes. In this example, it is a combination of the primary key (productId) with userId and updatedAt.

- productId (primary key): this comes from the cellKey in the List Change Store History block.

- userId and updatedAt: these are defined for each change, so they should be retrieved from the item in the Loop block.

The userId maps to the createdBy parameter.

- Record: this is the key:value mapping for the individual change that should get updated. The Key maps to the columnName that is returned by the List Change Store History block, the Value maps to the cellValue parameter that is returned in the Loop block:

- This is what your automation will look like now:

Run the automation manually by clicking the Run button in the automation editor and review that you have records showing in the MySQL table:
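The one-by-one logic above can be sketched in Python to make the Where and Record mappings concrete. This is a sketch under stated assumptions: the article names cellKey, rowKey, columnName, cellValue, createdBy, and updatedAt, but the exact nesting of the block output and the `changes` list name are assumptions, and a plain dictionary stands in for the MySQL table.

```python
# Hypothetical sample of List Change Store History output (layout is an assumption).
SAMPLE_HISTORY = [
    {
        "cellKey": {"rowKey": "P-100"},
        "columnName": "Priority",
        "changes": [
            {"cellValue": "High", "createdBy": "user-1", "updatedAt": "2025-01-01T10:00:00Z"},
        ],
    },
    {
        "cellKey": {"rowKey": "P-100"},
        "columnName": "Note",
        "changes": [
            {"cellValue": "Rush order", "createdBy": "user-1", "updatedAt": "2025-01-01T10:00:00Z"},
        ],
    },
]


def upsert_one_by_one(history, table=None):
    """Upsert each change into `table`, a dict standing in for the database table.

    The dict key plays the role of the Where mapping: (productId, userId, updatedAt).
    """
    table = {} if table is None else table
    for cell in history:                    # List Change Store History loop
        product_id = cell["cellKey"]["rowKey"]
        for change in cell["changes"]:      # inner Loop block
            where = (product_id, change["createdBy"], change["updatedAt"])
            row = table.setdefault(where, {
                "productId": product_id,
                "userId": change["createdBy"],
                "updatedAt": change["updatedAt"],
            })
            row[cell["columnName"]] = change["cellValue"]  # Record mapping
    return table


for row in upsert_one_by_one(SAMPLE_HISTORY).values():
    print(row)
```

Because both sample changes share the same product, user, and timestamp, they end up in a single row, which is the effect of using that combination as the Where key.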

Making this incremental

There is currently no incremental version of the List Change Store History block. While this is on our roadmap, the automation from this article can be extended to do incremental loads by first retrieving the highest updatedAt value from the MySQL table. The steps below explain how the automation can be extended:

- Add a Do Query block from the MySQL connector to the automation and configure the query as follows:

SELECT MAX(updatedAt) FROM <your database table>

- Run a test run of the automation without the other blocks attached to verify the result in the Do Query block’s History tab:

- Add a Condition block (A) to the automation and configure it to evaluate the MAX(updatedAt) (B) field from the Do Query block.

Because the Do Query block returns the value as part of a list, the automation editor prompts you to specify which item of the list you want to use. Use the default version option Select first item from list (C).

- Configure the operator in the Condition block to is not empty. If an updatedAt timestamp is found, the Yes part of the Condition block will be executed. If no timestamp is found, the No part will be executed.

- Add a Variable block (A) to the Yes part of the Condition block and create a new variable of type String named Filter. The setting is accessed from the Manage variables (B) button in the Variable block.

- Add an operation to the Variable block to Set value of Filter and type updatedAt gt " in the input field.

Click in the input field to add a reference to the timestamp in the Do Query block, mirroring the Condition block configuration.

After the mapping is added, append an additional double quote character:

- Right-click the Variable block and duplicate it, then add the duplicated block to the No part of the Condition (A).

Remove the Set value of Filter step and replace it with the Empty Variable (B) operation.

Right-click each Variable block and add a comment explaining the respective function.

- Reattach the original automation after the Condition block.

Verify that it is attached after the block and not inside the Yes or No sections.

- In the List Change Store History block (A), map the Filter variable to the Filter parameter (B):

- Run the automation and confirm it only picks up new changes on new runs.
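The Condition and Variable blocks above amount to a small piece of string logic, sketched here in Python. The function name is hypothetical and the timestamp value is a made-up example. The filter text follows the form given in the steps: updatedAt gt, followed by the timestamp wrapped in double quotes.

```python
def build_filter(max_updated_at):
    """Return the Filter value: empty when no timestamp exists, else an updatedAt filter."""
    if not max_updated_at:   # No branch of the Condition block: Empty Variable
        return ""
    # Yes branch: Set value of Filter to updatedAt gt "<timestamp>"
    return f'updatedAt gt "{max_updated_at}"'


print(build_filter(None))
print(build_filter("2025-01-01T10:00:00Z"))
```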

Bulk updates

The solution documented in the previous section will execute the Upsert Record block once for each cell with changes in the change store. This may create too much traffic for some use cases. To address this, the automation can be extended to support bulk operations and insert multiple records in a single database operation.

The approach is to transform the output of the List Change Store History block from a nested list of changes into a list of records that contains the changes grouped by primary key, userId, and updatedAt timestamp.

See the attached automation example: Automation Example to Bulk Extract Change Store History to MySQL Incremental.json.

- Drag the Loop and Upsert Record block outside the List Change Store History loop, but do not delete them:

- Add a Variable block inside the List Change Store History block loop (A) and create a new variable partialChangeRecord of type Object (B).

This variable will be used to map each cell value to the primary key(s), userId and updatedAt timestamp.

- Add the first operation to the Variable to Empty it (A).

This is important to ensure that for every item in the loop, we start with an empty partialChangeRecord variable.

Next, add a Set key/values operation to set the primary key(s) (B).

In our example, we have a single primary key, productId, but if you have multiple fields, you should add them one by one.

Set it to the cellKey.rowKey parameter returned in the output from List Change Store History

- Drag the Loop block back into the automation and attach it to the Variable block.

Disconnect the Upsert Record block.

- Add a new Variable block inside the Loop block and configure the Variable parameter to the partialChangeRecord object.

Now set additional key/values in the variable for the userId, updatedAt, and the cellValue:

- userId: createdBy parameter returned in the loop

- updatedAt: updatedAt parameter returned in the loop

- For the Cell value, set the key to the columnName returned by the List Change Store History block and set the Value to the cellValue returned from the Loop block:

- uniqueKey: add a fourth keyValue pair uniqueKey.

This will be used later to merge various cell changes into a single record.

This combines the primary key(s), userId, and updatedAt timestamp, each separated by a pipe (|) symbol.

Click the input field, map the first parameter, then type a | symbol, and click the input field again to map the next parameter:

- Add another Variable block inside the Loop block (A) and create a new list type variable listOfPartialChanges.

Add an Add item operation to add the partialChangeRecord variable (B) to this list.

- Add a Merge Lists block after the List Change Store History block.

The Merge Lists block will be used to merge the partial change records into full records for each change.

Configure both List parameters in the Merge Lists block to use the listOfPartialChanges variable.

- Go to the Settings tab of the Merge Lists block and apply this configuration:

- Item merge strategy: Merge list 1 item and list 2 item in one new item (default)

- List 1 unique key: uniqueKey

- List 2 unique key: uniqueKey

- On duplicate unique key: Merge item from list 2 into item from list 1

- When a property exists on both lists: Keep value from list 1 (default)

Due to an ongoing defect, this parameter is only available after refreshing the automation editor. As the parameters use the default values, this should not impact you.

- Perform a manual run of the automation to verify that the Merge Lists block output is merging.

You will notice that this list contains many duplicates.

- Add a Deduplicate List block (A) and configure the List parameter to use the output from the Merge Lists block, then set the key to uniqueKey (B).

- Perform another manual run and confirm that the Deduplicate List block now only contains unique entries.

- The output still contains the uniqueKey parameter that is not compatible with the database.

There are two options: either extend the database or remove uniqueKey.

To remove it, add a Transform List block and set the Input List parameter to the Deduplicate list block:

- Configure the Fields in output list parameter from the Transform List block.

To make this easier, it is best to have example data from a manual run. If you haven’t performed a manual run yet, do one now.

Click the Add Field button and configure new fields:

- For the Field parameter, click the input field and start by mapping your primary keys

- For the Value parameter, do the same and select the corresponding parameter in the Item in input list option

- Repeat this for all other items in the list except for the uniqueKey

- Add a Loop batch block (A) to the automation and configure it to loop over the Transform List block output in batches of 50 (B).

Adjust this batch size depending on your database and the number of editable columns.

- Add an Insert Bulk block from the MySQL connector within the Loop Batch block loop.

Configure it to use your database table variable (or hardcode your table name) and set the Values parameter to the Batch in Loop Batch parameter.

- Run the automation and confirm that the database gets updated.

- Optionally, collapse all empty loop blocks to clean up the automation and provide comments to explain what the blocks and functions do.

This will help you understand this automation when you revisit it in the future.

To add a comment, right-click on the block and click Edit comment.
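The bulk transformation above (partial records, uniqueKey, merge, deduplicate, transform, batch) can be sketched in Python to show the data flow. This is a sketch under stated assumptions: the nesting of the block output and the `changes` list name are assumptions, all function names are hypothetical, and the merge and deduplicate steps are collapsed into one dictionary pass instead of two separate blocks.

```python
# Hypothetical sample of List Change Store History output (layout is an assumption).
SAMPLE_HISTORY = [
    {"cellKey": {"rowKey": "P-100"}, "columnName": "Priority", "changes": [
        {"cellValue": "High", "createdBy": "user-1", "updatedAt": "2025-01-01T10:00:00Z"}]},
    {"cellKey": {"rowKey": "P-100"}, "columnName": "Note", "changes": [
        {"cellValue": "Rush order", "createdBy": "user-1", "updatedAt": "2025-01-01T10:00:00Z"}]},
    {"cellKey": {"rowKey": "P-100"}, "columnName": "AmountToOrder", "changes": [
        {"cellValue": 12, "createdBy": "user-2", "updatedAt": "2025-01-02T08:30:00Z"}]},
]


def build_partial_records(history):
    """One partial record per change, carrying a pipe-separated uniqueKey."""
    partials = []
    for cell in history:
        product_id = cell["cellKey"]["rowKey"]
        for change in cell["changes"]:
            partials.append({
                "productId": product_id,
                "userId": change["createdBy"],
                "updatedAt": change["updatedAt"],
                cell["columnName"]: change["cellValue"],
                "uniqueKey": f'{product_id}|{change["createdBy"]}|{change["updatedAt"]}',
            })
    return partials


def merge_partials(partials):
    """Merge partial records that share a uniqueKey, keeping the first value per field."""
    merged = {}
    for partial in partials:
        record = merged.setdefault(partial["uniqueKey"], {})
        for field, value in partial.items():
            record.setdefault(field, value)
    return list(merged.values())


def drop_unique_key(records):
    """Remove the helper field that has no matching database column."""
    return [{k: v for k, v in record.items() if k != "uniqueKey"} for record in records]


def in_batches(records, size=50):
    """Yield consecutive slices of at most `size` records."""
    for start in range(0, len(records), size):
        yield records[start:start + size]


rows = drop_unique_key(merge_partials(build_partial_records(SAMPLE_HISTORY)))
for batch in in_batches(rows):
    print(batch)
```

The two changes by user-1 collapse into one full record, while the change by user-2 stays separate because its uniqueKey differs.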

Attachment configuration instructions

The provided automations will require additional configuration after being imported, such as setting the change store, database, and primary key.

Automation Example to Extract Change Store History to MySQL Incremental.json

- Variable - databaseTable -> configure with the name of your database table

- Variable - changeStoreId -> configure with your change store ID

- Upsert Record - MySQL -> replace the productId with your primary key, add additional primary keys if necessary

Automation Example to Bulk Extract Change Store History to MySQL Incremental.json

- Variable - databaseTable -> configure with the name of your database table

- Variable - changeStoreId -> configure with your change store id

- Variable - partialChangeRecord -> replace the productId with your primary key, add additional primary keys if necessary

- Variable - partialChangeRecord in Loop block -> Update the uniqueKey field by replacing the productId with your primary key, add additional primary keys if necessary

- Transform List -> replace the productId with your primary key, add additional primary keys if necessary

Bonus!

Replace field names

If field names in the change store don't match the database (or another destination), the Replace Field Names In List block can be used to translate the field names from one system to another.

- Search for the Replace Field Names In List block and add it to your automation.

- Provide the translations for the field names that need to be changed to match the destination system.
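The effect of the field name translation can be sketched in Python as a key rename over each record. The function name and the example translation are hypothetical, and the sketch only illustrates the idea, not the block's internal behavior.

```python
def replace_field_names(records, translations):
    """Rename record keys using `translations`; keys without a translation stay as-is."""
    return [
        {translations.get(field, field): value for field, value in record.items()}
        for record in records
    ]


# Hypothetical mapping from change store field names to database column names.
renamed = replace_field_names(
    [{"productId": "P-100", "AmountToOrder": 12}],
    {"AmountToOrder": "amount_to_order"},
)
print(renamed)
```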

User email instead of user id

To add a more readable parameter to track the user who made changes, the Get User block from the Qlik Cloud Services connector can be used to map User IDs into email addresses or names.

- Search for the Get User block (A) in the Qlik Cloud Services connector and add it to your automation.

Configure it to use the createdBy parameter (B).

- Update the Upsert Record block to use the output from Get User.

A user's name might not be sufficient as a unique identifier. Instead, combine it with a user ID or user email.

Triggering the automation from a sheet

Add a button chart object to the sheet that contains the Write Table, allowing users to start the automation from within the Qlik app. See How to run an automation with custom parameters through the Qlik Sense button for more information.

Environment

- Qlik Cloud Analytics

- Qlik Automate

-

Qlik Sense authentication keeps prompting for login - How to troubleshoot it

When authenticating, the Windows authentication prompt keeps asking for login credentials.

Environment

- Qlik Sense Enterprise on Windows

Troubleshooting

Check if you also have the issue when setting the QMC --> Virtual Proxy --> Windows authentication pattern to "Form" (instead of "Windows").

If you do not have the issue with the Form mode, the issue is most likely caused by a Browser policy or a Windows local policy.

When you try to authenticate with Qlik Sense, you can check and see the authentication in the audit logs:

C:\ProgramData\Qlik\Sense\Log\Proxy\Trace\SERVER_Audit_Proxy.txt

2319 20210908T153023.213+0200 INFO QlikServer1 Audit.Proxy.Proxy.SessionEstablishment.RedirectionHandler 38 dbc7b6a4-9314-41fb-b683-c70803402fe7 DOMAIN\qvservice Authentication required, redirecting client@http://[::1]:56417/ to https://localhost/internal_windows_authentication/ 0 be39c60e-e41a-4c82-98ee-470d5d685ec2 ::1 1861e8f3aacd2a0e7b02696ffe70d447fe88e659

The above log shows that authentication is required: Authentication required

After entering the login and password, you should see a line like the following:

2325 20210908T153023.468+0200 INFO QlikServer1 Audit.Proxy.Proxy.SessionEstablishment.Authentication.TicketValidator 56 ec875129-8767-4313-9c40-6b38d19f535d DOMAIN\qvservice Issued ticket 'SN30j55B6QToyf8F' for user, valid for 1 minute(s) 0 DOMAIN administrator SN30j55B6QToyf8F b99ddb05183cd238af0af82c843141fd80855f90

If nothing appears after the Authentication required line, something is being blocked outside Qlik, because nothing reaches the logs.

You can also check the Windows security event logs. If nothing is logged there either, check the local security policy.
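The log check described above can be scripted. The following is a minimal sketch that counts the two messages quoted in this article in the lines of an audit log. The function name and the result keys are hypothetical, and it matches on the message text only, without assuming a column layout.

```python
def summarize_auth(lines):
    """Count redirect and ticket messages and flag the 'blocked outside Qlik' pattern."""
    required = sum("Authentication required" in line for line in lines)
    issued = sum("Issued ticket" in line for line in lines)
    return {
        "required": required,
        "issued": issued,
        # Redirects without any issued ticket match the symptom described above.
        "blocked_outside_qlik": required > 0 and issued == 0,
    }


# Usage with the audit log path given in this article (SERVER is the host name):
# with open(r"C:\ProgramData\Qlik\Sense\Log\Proxy\Trace\SERVER_Audit_Proxy.txt",
#           encoding="utf-8", errors="replace") as handle:
#     print(summarize_auth(handle))
```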

Resolution

In this example, we suspect security policies. Compare the local security policy (secpol.msc) of the affected server with that of a server where authentication works.

In the case described in this article, the issue was caused by one specific security option (start secpol.msc --> Local Policies --> Security Options):

Network security: Restrict NTLM: Incoming NTLM traffic

If this is set to "Deny all accounts", it is not possible to authenticate with a Windows prompt.

The solution is to select "Allow all".

Note: In some cases, the incorrect setting (Deny all accounts) caused an HTTP ERROR 500, and the Chrome .har file showed the error net::ERR_HTTP_RESPONSE_CODE_FAILURE.

Cause

A local security policy that prohibits NTLM authentication.

-

How to run an automation with custom parameters through the Qlik Sense button

This article explains how the Qlik Sense app button component can be used to send custom parameters directly to the automation without requiring a temporary bookmark. This can be useful when creating a writeback solution on a big app as creating and applying bookmarks could take a bit longer for big apps which adds delays to the solution. More information on the native writeback solution can be found here: How to build a native write back solution.

Contents

Button configuration

- In this example, we'll use an app that contains car sales opportunities from Salesforce. The straight table, the variable input dropdown, and the native button are important for this example.

- Start editing the sheet and go to the button configuration section, set the action to "Execute automation" and specify the automation that should run when the button is clicked. If your automation isn't shown, you can copy and paste the automation id.

- Additional input parameters can now be added through the "Add parameter" button.

- Parameters require a key and a value, it's possible to use Qlik Expressions as input for the value. These expressions are evaluated when the button is clicked.

- After adding the parameters, use the "Copy input block" button to copy all parameters that will be sent to the automation to your clipboard.

Automation configuration

- If you want anyone who can access the button to be able to run the automation, then set the automation's run mode to "Triggered".

If you want to limit this to a specific group of users, you can leave the automation in Manual run mode and place it in a shared space that this group of users can access. More information about this is available here: Introducing Automation Sharing and Collaboration. Make sure to disable the Run mode: triggered option in the button configuration.

- Right-click in the automation canvas and select "Paste Block(s)" to add the Inputs block to the automation. Connect the new Inputs block to the Start block. Apart from the default parameters, you'll also find your custom parameters as Inputs in the block.

- You can now reference the custom parameters from the button in other blocks in the automation by selecting the output from the Inputs block.

Limitations

- The maximum payload size when triggering an automation is 32kb. When using the GetCurrentSelections expression, every field value will be sent to the automation. This could exceed the 32kb limit. Use the temporary bookmark solution as described in this article instead: Native writeback solutions.

- Other automation limitations are available here: Platform limitations.

- More info on configuring the native button can be found here: Creating buttons.
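To get a rough idea of whether a set of button parameters is likely to approach the 32kb payload limit, you can estimate the size of the parameters when serialized as JSON. This is only an approximation under stated assumptions: the article does not say how the payload is measured, so the JSON encoding, the 32 * 1024 byte interpretation of 32kb, and the function names are all assumptions.

```python
import json

MAX_PAYLOAD_BYTES = 32 * 1024  # assumption: 32kb is read as 32 * 1024 bytes


def payload_size(params):
    """Approximate payload size as the UTF-8 length of the JSON-encoded parameters."""
    return len(json.dumps(params).encode("utf-8"))


def fits_limit(params):
    """Return True when the estimated size is within the assumed limit."""
    return payload_size(params) <= MAX_PAYLOAD_BYTES


# Hypothetical parameters, similar to what a button might send.
print(fits_limit({"opportunityId": "0061x00000ABCDE", "stage": "Closed Won"}))
```

A long list of selected field values, as produced by GetCurrentSelections on a large selection, is the kind of input that would fail this check.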

Related Content

Environment

- Qlik Cloud

- Qlik Application Automation

The information in this article is provided as-is and will be used at your discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

Optimizing Qlik Cloud App Performance

This Techspert Talks session covers: App performance evaluation tools How to improve app performance Development best practices Chapters: 01:5...

-

How to calculate the checksum of a Qlik patch or installer

After downloading a Qlik product installer or patch, you may want to verify the checksum of the file. This article explains how to compute the checksum with the MD5 hash.

This applies to products such as Qlik Talend Studio, Qlik Sense Enterprise on Windows, and similar.

On Windows

With PowerShell, using get-Filehash

Command: get-Filehash -algorithm

Example:get-Filehash .\Patch_20201218_R2020-12_v1-7.3.1.zip -algorithm md5Output:

Algorithm Hash Path

--------- ---- ----

MD5 A18D537FE8F466643FF2B36DC0713D9F C:\tmp\Patch_20201218_R2020-12_v1-7.3.1.zipFrom the command prompt, using certutil

Command: CertUtil

Example:

CertUtil -hashfile Patch_20201218_R2020-12_v1-7.3.1.zip MD5

Output:

MD5 hash of Patch_20201218_R2020-12_v1-7.3.1.zip:

a18d537fe8f466643ff2b36dc0713d9f

CertUtil: -hashfile command completed successfully.

On Linux systems

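Use either cksum or md5sum. Note that cksum prints a CRC checksum and the file's byte count rather than an MD5 hash, so use md5sum when you need to compare against a published MD5 value.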

Command: cksum

Command: md5sum

Example:

# cksum Patch_20201218_R2020-12_v1-7.3.1.zip

Output:

2689783428 702238968 Patch_20201218_R2020-12_v1-7.3.1.zip

Example:

# md5sum Patch_20201218_R2020-12_v1-7.3.1.zip

Output:

a18d537fe8f466643ff2b36dc0713d9f Patch_20201218_R2020-12_v1-7.3.1.zip
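If neither shell is convenient, the same MD5 check can be scripted. This is a minimal sketch using Python's standard hashlib module; the helper names are illustrative, and the expected hash would be whatever value was published for your download.

```python
import hashlib


def md5_of_file(path: str, chunk_size: int = 1024 * 1024) -> str:
    """Compute the MD5 hex digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        # Read fixed-size chunks so large installers do not need to fit in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def checksum_matches(path: str, expected: str) -> bool:
    """Compare case-insensitively: PowerShell prints upper case, md5sum lower case."""
    return md5_of_file(path) == expected.strip().lower()
```

For example, `checksum_matches("Patch_20201218_R2020-12_v1-7.3.1.zip", "A18D537FE8F466643FF2B36DC0713D9F")` would return True for a file whose MD5 matches the output shown above.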

Environment

- All downloadable Qlik Products

-

Github - How to get started with Github in Automations

This article gives an overview of the available blocks in the Github connector in Qlik Application Automation. It will also go over some basic examples of retrieving file/blob contents from your repos as well as other functionalities within a GitHub account.

As with most connectors provided for automations, authentication for this connector is based on the OAuth2 protocol. When connecting, you enter the user name and password of the account directly on the Github platform to grant access, so your password is not entered in the automation itself.

Let's now go over a few basic examples of how to use the Github connector:

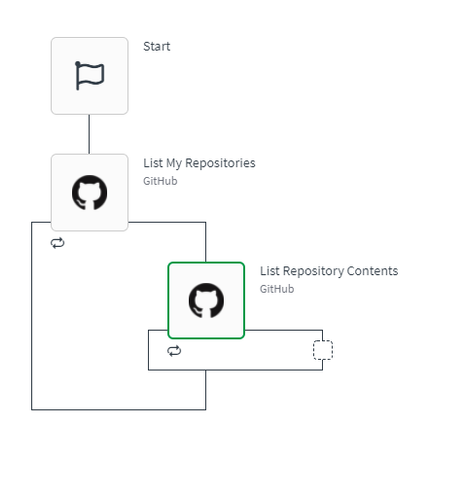

How to list owned repositories and check their contents from your Github account:

- Create an automation.

- From the left menu, select the Github connector.

- Drag and drop the "List my repositories" block.

- Drag and drop the "List repository contents" block inside the loop created by the first block.

The "List my repositories" block offers a few filtering options: you can return all repositories or only the private or public ones, and you can sort the results. These options are optional; leaving them empty returns all repositories by default.

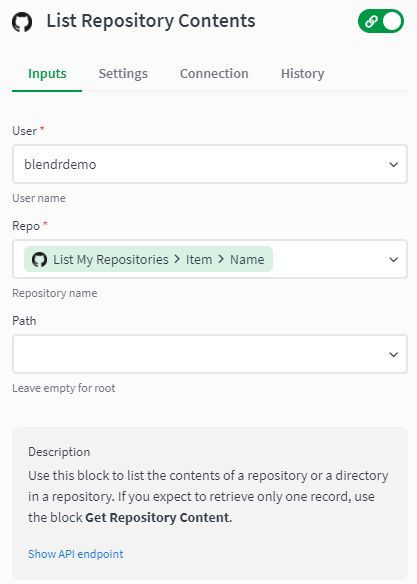

For the "List repository contents" block, fill in the username of your Github account and the repository name, which can be taken from the output of the first block. Leave the path parameter empty to get the contents of the root folder, or specify a path to get the contents of that path.

If you expect to retrieve only one record, the "Get repository content" block is better suited. Also, consider setting the On Error behavior of the "List repository contents" block to either warning or ignore, since the Github API returns a 404 error if one of the queried repositories is empty.

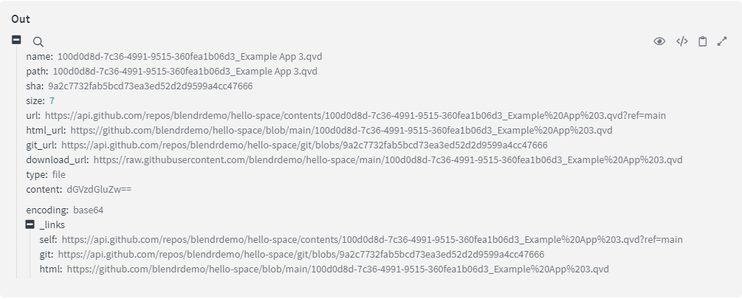

If you plan to use the "Get repository content" block, note that it only works for files or blobs up to 1 MB in size, as per Github's platform limitations. The response of this block should look like:

As you can see, the response contains several pieces of information about the file. The most important is the SHA property, which is a required input parameter of the "Create or update file contents" block when you update a file/blob.
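To illustrate how the content and the SHA relate, here is a minimal sketch. It assumes the block's output mirrors the GitHub REST API contents payload (base64-encoded `content` plus a `sha` field); the `response` dictionary below is a hypothetical sample, not output captured from the block. The SHA is the Git blob hash, which is the SHA-1 of the header `blob <size>` followed by a null byte and the file bytes.

```python
import base64
import hashlib

# Hypothetical response, modeled on the GitHub REST API "contents" payload.
response = {
    "name": "README.md",
    "path": "README.md",
    "size": 12,
    "encoding": "base64",
    "content": "aGVsbG8gd29y\nbGQK\n",  # base64 text may contain line breaks
    "sha": "3b18e512dba79e4c8300dd08aeb37f8e728b8dad",
}


def decode_content(item: dict) -> bytes:
    """Decode the base64 file body returned for a single file."""
    if item.get("encoding") != "base64":
        raise ValueError("unexpected encoding: %r" % item.get("encoding"))
    return base64.b64decode(item["content"])


def git_blob_sha(data: bytes) -> str:
    """Compute the Git blob SHA-1: sha1(b'blob <size>' + NUL + data)."""
    header = b"blob %d\x00" % len(data)
    return hashlib.sha1(header + data).hexdigest()


file_bytes = decode_content(response)
sha_for_update = response["sha"]  # value to pass along when updating the file
print(file_bytes, git_blob_sha(file_bytes) == sha_for_update)
```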

If you plan to update files larger than 1 MB and need the SHA of such a file, we suggest using the "List repository contents" block and searching its result for the required file and SHA.

The Github connector also supports getting and listing the commits or issues in a repository, listing users, and many other requests. If you need a request that isn't available, you can create your own requests to the Github API using the RAW API blocks. These API blocks and their uses are explained in a separate article.

A simple JSON example is attached to this article; you can upload it to your workspace. For a quick example of how to use version control to back up your QCS apps, see the related article.
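The lookup described above amounts to filtering the listing by path. The following is a minimal sketch, assuming each listed item carries `type`, `path`, and `sha` fields as in the GitHub REST API; the `listing` data and the `find_sha` helper are hypothetical.

```python
from typing import List, Optional

# Hypothetical output of a "List repository contents" call.
listing = [
    {"type": "dir", "path": "docs", "sha": "4b825dc642cb6eb9a060e54bf8d69288fbee4904"},
    {"type": "file", "path": "README.md", "sha": "3b18e512dba79e4c8300dd08aeb37f8e728b8dad"},
    {"type": "file", "path": "big-export.qvf", "sha": "e69de29bb2d1d6434b8b29ae775ad8c2e48c5391"},
]


def find_sha(items: List[dict], path: str) -> Optional[str]:
    """Return the SHA of the file at `path`, or None when it is not listed."""
    for item in items:
        if item.get("type") == "file" and item.get("path") == path:
            return item.get("sha")
    return None


print(find_sha(listing, "big-export.qvf"))
```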

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

Related Content

How to: Qlik Application Automation for backing up and versioning Qlik Cloud apps on Github

-

Qlik Write Table FAQ

This document contains frequently asked questions for the Qlik Write Table. Content Data and metadataQ: What happens to changes after 90 days?Q: Whic...

How to take control of your Qlik Cloud subscription: a guide to monitoring and m...

Understanding and managing your Qlik Cloud subscription consumption is essential for maintaining predictable costs, ensuring uninterrupted service, and optimizing resource allocation across your organization. This guide provides you with the tools, strategies, and best practices to gain complete visibility into how your subscription is being consumed and implement proactive controls to stay within your capacity limits.

While Qlik Cloud measures consumption at the tenant level, you can achieve effective governance through strategic monitoring, automated alerting, and space-based management practices. This guide will walk you through the monitoring tools available, how to automate their deployment and refresh, and practical approaches to tracking consumption patterns and implementing controls that align with your organizational needs.

Content:

- Understanding the Administration activity center capacity consumption view

- The Home page capacity consumption dashboard

- How does this view fit into your monitoring strategy?

- Official consumption data: The Data Capacity Reporting App

- Automating the deployment of the consumption app

- Getting estimated usage with Qlik Cloud Monitoring Apps

- Automating Qlik Cloud Monitoring Apps deployment

- Proactive monitoring strategies: What to track and at what levels

- Monitoring automation runs

- Monitoring Data for Analysis

- Monitoring scheduled reloads

- Monitoring reports generated

- Practical approaches to controlling consumption

- 1. User education and communication

- 2. Alert-driven intervention workflow

- 3. Development vs. production separation

- Leveraging Qlik's alerting and distribution capabilities for consumption monitoring

- Available monitoring and distribution tools

- Setting up each monitoring approach

- Examples: Setting up consumption monitoring across key metrics

- Your action plan: From reactive to proactive

- Additional resources

Understanding the Administration activity center capacity consumption view

The Administration activity center Home page provides your first line of visibility into capacity consumption. Understanding what this view offers and how it complements the detailed monitoring apps will help you build an effective monitoring strategy.

The Home page capacity consumption dashboard

Navigate to the Administration activity center → Home to see a real-time dashboard summarizing capacity consumption. This view displays visual bar charts for consumption metrics relevant to your subscription:

Common metrics displayed:

- Data for Analysis: Purchased vs. used with color-coded breakdown (App Import, App Reload, Datafile); not available for user-based subscriptions

- Reports generated (monthly): Purchased count vs. used

- Standard automation runs (monthly): Purchased vs. used

- Third-party automation runs (monthly): Purchased vs. used

- Assistant questions asked (monthly): Purchased vs. used (if Qlik Answers is enabled)

Additional metrics may include Data Moved, Large App consumption, Qlik Predict deployed models, and others, depending on your subscription.

Metrics appear dynamically as features are adopted. If no one has asked an assistant question yet, that metric won't display until first use, keeping the dashboard focused on what you're consuming.

The Data for Analysis chart shows a current snapshot with the last update timestamp. Most metrics update multiple times per hour, providing near real-time visibility into your consumption position.

How does this view fit into your monitoring strategy?

The Administration activity center provides high-level consumption visibility designed for rapid assessment. For detailed analysis, investigation, and proactive monitoring, you'll complement this view with a set of monitoring apps.

Daily quick check (2 minutes):

- Scan consumption bars to identify any metrics approaching capacity

- Review indicators showing current usage levels

- Verify Data for Analysis timestamp shows recent data
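The quick check above boils down to comparing used against purchased capacity for each metric. This is a minimal sketch with hypothetical numbers and an assumed 80% warning threshold; neither the figures nor the threshold come from Qlik.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Metric:
    name: str
    purchased: float
    used: float


def usage_ratio(metric: Metric) -> float:
    """Share of purchased capacity already consumed (0.0 when nothing is purchased)."""
    return metric.used / metric.purchased if metric.purchased else 0.0


def approaching_capacity(metrics: List[Metric], threshold: float = 0.8) -> List[str]:
    """Names of metrics at or above the warning threshold."""
    return [m.name for m in metrics if usage_ratio(m) >= threshold]


# Hypothetical values read off the Home page bars.
snapshot = [
    Metric("Data for Analysis", purchased=50, used=49),
    Metric("Reports generated", purchased=1000, used=850),
    Metric("Standard automation runs", purchased=5000, used=1200),
]

print(approaching_capacity(snapshot))
```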

When you need more detail:

The Home page tells you what is being consumed. The monitoring apps tell you who, where, when, and why. Capacity subscriptions should use the Data Capacity Reporting App as the source of truth, while the Qlik Cloud Monitoring apps can be treated as estimated consumption reports, for example:

- For automation consumption details: Use the Automation Analyzer

- For data consumption details: Use the Data Capacity Reporting App and Reload Analyzer

- For report consumption details: Use the Report Analyzer

Use the Home page for daily checks and status awareness. When consumption requires attention or you need to understand trends, drill into the appropriate monitoring app for detailed analysis.

For more information, see Monitoring resource consumption.

Official consumption data: The Data Capacity Reporting App

The Data Capacity Reporting App is your official, billable record of consumption for capacity-based subscriptions. This Qlik-supported application is generated once per day (morning Central European Time) and provides the definitive view of your consumption against your entitlement.

The app tracks eight key value meters across the current and previous two months:

- Data for Analysis: Total data loaded into or created in Qlik Cloud (measured by monthly peak usage)

- Data Moved: Volume of data moved to cloud destinations via Qlik Talend Data Integration

- Third Party Transformations: Datasets used in transformations but not delivered by Qlik Talend Data Integration

- Automation Runs: All automation executions using Qlik Automate

- Reports: Reports distributed using Qlik Reporting Service

- Job Executions: Number of Talend job task runs (Qlik Talend Cloud subscriptions)

- Job Duration: Total duration of Talend job executions (Qlik Talend Cloud subscriptions)
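Because Data for Analysis is measured by monthly peak usage, the figure that counts for a month is the highest value reached, not the average or the month-end value. A minimal sketch of that calculation, assuming hypothetical daily snapshots in GB:

```python
from datetime import date
from typing import Dict


def monthly_peaks(snapshots: Dict[date, float]) -> Dict[str, float]:
    """Return the highest daily value per month, keyed as 'YYYY-MM'."""
    peaks: Dict[str, float] = {}
    for day, value in snapshots.items():
        key = day.strftime("%Y-%m")
        peaks[key] = max(peaks.get(key, value), value)
    return peaks


# Hypothetical daily Data for Analysis readings (GB).
daily_gb = {
    date(2025, 1, 5): 40.0,
    date(2025, 1, 17): 48.5,  # peak for January
    date(2025, 1, 30): 42.0,
    date(2025, 2, 3): 41.0,
    date(2025, 2, 20): 44.0,  # peak for February
}

print(monthly_peaks(daily_gb))
```

A short spike mid-month therefore sets the recorded usage for that whole month, even if data is removed afterwards.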

This app represents your billable consumption record. The data in this app is what Qlik uses for official capacity reporting and billing purposes. When there's any discrepancy between this app and other monitoring sources, the Data Capacity Reporting App is the authoritative source. This app refreshes only once daily, meaning you see yesterday's official position, not real-time consumption. For more frequent monitoring and estimated usage, you'll complement this with the Qlik Cloud Monitoring Apps.

For detailed information, see Monitoring detailed consumption for capacity-based subscriptions.

Automating the deployment of the consumption app

Rather than manually distributing the consumption app from the Administration activity center each day, automate this process using the Capacity consumption app deployer template in Qlik Automate.

Setup steps:

- Create a new automation in Qlik Automate

- Navigate to the App Installer category

- Select "Capacity consumption app deployer" template

- Configure variables (space names, versions to keep)

- Test manually once

- Schedule to run daily around midday CET

This automation creates or uses designated spaces, imports the latest version, publishes it to a managed space, and maintains version history according to your configuration. You now have a single source of truth that updates automatically each day. Create automations or alerts on the published app for automated insights.

For complete details, see the Qlik Community article: Automate deployment of the Capacity consumption app with Qlik Automate.

Getting estimated usage with Qlik Cloud Monitoring Apps