Recent Blog Posts

-

Our Data Products Storylane has been given a revamp!

Our Data Products Storylane has been given a revamp! Take the tour.

Data Products are highly trusted, re-usable, and consumable data assets. Data elements such as raw data, transformations, data quality rules, contracts, access patterns, and infrastructure have been organized into a single cohesive unit to align with specific requirements and objectives of a business to create a Data Product.

Data Products come with the Qlik Trust Score™, a score given to data products based on seven factors: Validity, Completeness, Discoverability, Usage, Timeliness, Accuracy, and Diversity. The Qlik Trust Score gives you confidence in the quality and health of your Data Products, so you can use them throughout your business. You can also view the Data Product Lineage, a flow chart that shows the origin of the Data Product so you can trace it back to its source. Available Data Products can be found in the Data Marketplace, a collection of Data Products that are ready for use.

If you want to learn even more about Data Products, Datasets, Data Quality, and Data Validation Rules, check out Mike Tarallo's video series here: Data Products for Qlik Analytics

-

Upcoming Maintenance for Talend Cloud and Talend Management Console: March, Apri...

Update March 4th, 2026: added link to the article How to get Talend Management Console task schedules and pause and resume during a maintenance window using the API

Updated April 24th, 2026: added impact on APIs (all down) and additional clarification on why tasks must be stopped and the impact on remote engines

Updated May 7th, 2026: added additional information on how to address Remote Engine impact

Updated May 12th, 2026: the anticipated impact for the remaining maintenance window has increased from 30 minutes to 90 minutes

Talend Cloud and Talend Management Console will undergo scheduled maintenance in March, April, and May. This infrastructure modernization is a key step in unifying the Talend ecosystem with Qlik.

The alignment paves the way for a more seamless experience across both platforms. Over the coming months, you will gain access to integrated features that bridge data integration and analytics, enabling unified governance and a streamlined management experience across your entire data lifecycle.

The maintenance windows will occur per region, during off-peak hours, and are expected to have a maximum of 90 minutes of effective downtime.

What is the expected impact?

A full outage of Talend Cloud and Talend Management Console for a duration of up to 90 minutes within a preplanned 4-hour window.

The following applications will not be accessible:

- Talend Management Console (TMC)

- Talend Data Stewardship (TDS)

- Talend Data Preparation (TDP)

- Talend Data Inventory (TDC)

- Talend Pipeline Designer (TPD)

- Talend API Designer and Tester

- Talend Studio

- Talend Cloud Engines

All APIs for Talend Cloud will not be available during the outage. APIs impacted:

In detail:

- Cloud engines will not be available, and executions running on Cloud Engines will be terminated.

- Talend Studio users may be disconnected from their session, and it will not be possible to open a new Talend Studio session except in local mode.

- Executions that are already in progress during the outage will terminate correctly except on cloud engines, but all tasks or plans scheduled to start during those periods will be skipped.

- Skipped executions will not be tagged as failed, since they were never started. For this reason, check the execution status of your tasks and plans to confirm that no important ones were skipped, and start them manually if necessary. See What do I need to do to prepare? further down in this blog post.

- Static IP addresses for Cloud Engines corresponding to Disaster Recovery regions will change during maintenance. See What follow-up actions are required? further down in this blog post.

What do I need to do to prepare?

- It is recommended to pause existing task runs during the maintenance window. Talend Remote Engines will continue processing tasks during the outage if they started before the maintenance window, but as they may have inconsistent statuses, we recommend pausing all tasks beforehand.

This concerns all jobs and plans scheduled to start or run during the maintenance window.

See Checking scheduled task runs against your maintenance timetable on how to identify these plans and jobs.

Looking for information on how to identify, pause, and resume your tasks? See How to get Talend Management Console task schedules and pause and resume during a maintenance window using the API.

- If you are running Remote Engine Gen2, upgrade to the latest 2026-04 release. This prevents RE Gen2 from becoming unavailable after the maintenance. To do so:

- Upgrade your Remote Engine Gen2 to the latest 2026-04 release: Updating the Remote Engine Gen2 when installed using the execution script

- Then re-establish the pairing: Re-establish the pairing of Remote Engine Gen2

What follow-up actions are required?

- After the maintenance window, check and monitor the execution status of tasks and plans, as well as the status of your Remote Engines.

In some instances, Remote Engines might require a restart if marked as unavailable in the Talend Management Console or if tasks cannot be executed as expected.

If restarting the Remote Engine does not resolve the issue, follow the pairing instructions in Pairing Remote Engines using a dedicated web service to reset the key and re-pair the Remote Engine.

If your Remote Engine Gen2 is unavailable or cannot execute tasks, then:

- Upgrade your Remote Engine Gen2 to the latest 2026-04 release: Updating the Remote Engine Gen2 when installed using the execution script

- And re-establish the pairing: Re-establish the pairing of Remote Engine Gen2

- If you use a predefined static IP on Cloud Engine, you will need to allow the new Disaster Recovery Region's IP addresses, which will have changed at this point. While this does not immediately affect production, it will impact any potential Disaster Recovery process.

After the maintenance window, check your static IPs (Disaster Recovery) as documented in Using predefined static IP addresses for execution containers and update your firewalls accordingly.

No change is required for the active region's IP addresses. They will be migrated and will continue to work as they do today, ensuring no production interruption.

When will the maintenance take place?

Each region will undergo maintenance for 4 hours during off-peak hours, with a maximum of 90 minutes of effective downtime.

Region | Maintenance Start | Maintenance End
Talend Cloud - AWS - Asia Pacific (Sydney), au.cloud.talend.com | Wednesday 25 March 2026, 22:00 AEDT (Sydney), UTC: 25/03/26 - 11:00 | Thursday 26 March 2026, 02:00 AEDT (Sydney), UTC: 25/03/26 - 15:00
Talend Cloud - AWS - Asia Pacific (Tokyo), ap.cloud.talend.com | Monday 20 April 2026, 22:00 JST (Tokyo), UTC: 20/04/26 - 13:00 | Tuesday 21 April 2026, 02:00 JST (Tokyo), UTC: 20/04/26 - 17:00
Talend Cloud - AWS - US East (N. Virginia), us.cloud.talend.com | Monday 27 April 2026, 02:00 EDT, UTC: 27/04/26 - 06:00 | Monday 27 April 2026, 06:00 EDT, UTC: 27/04/26 - 10:00
Talend Cloud - AWS - Europe (Frankfurt), eu.cloud.talend.com | Tuesday 26 May 2026, 21:00 CEST, UTC: 26/05/26 - 19:00 | Wednesday 27 May 2026, 01:00 CEST, UTC: 26/05/26 - 23:00
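To check your own schedule against these windows, a small script can compare each planned start time with the UTC window for your region. The following is a minimal sketch, not a Talend tool: the UTC times are copied from the schedule above, while the function name `starts_in_window` and the sample start times are hypothetical.

```python
from datetime import datetime, timedelta, timezone

UTC = timezone.utc

# Maintenance windows in UTC, copied from the schedule in this post.
WINDOWS_UTC = {
    "au.cloud.talend.com": (datetime(2026, 3, 25, 11, 0, tzinfo=UTC),
                            datetime(2026, 3, 25, 15, 0, tzinfo=UTC)),
    "ap.cloud.talend.com": (datetime(2026, 4, 20, 13, 0, tzinfo=UTC),
                            datetime(2026, 4, 20, 17, 0, tzinfo=UTC)),
    "us.cloud.talend.com": (datetime(2026, 4, 27, 6, 0, tzinfo=UTC),
                            datetime(2026, 4, 27, 10, 0, tzinfo=UTC)),
    "eu.cloud.talend.com": (datetime(2026, 5, 26, 19, 0, tzinfo=UTC),
                            datetime(2026, 5, 26, 23, 0, tzinfo=UTC)),
}


def starts_in_window(host: str, scheduled_start: datetime) -> bool:
    """Return True if a timezone-aware start time falls inside the window."""
    if scheduled_start.tzinfo is None:
        raise ValueError("scheduled_start must be timezone-aware")
    start, end = WINDOWS_UTC[host]
    # Aware datetimes compare correctly across different UTC offsets.
    return start <= scheduled_start < end


# Hypothetical start times for an EU tenant, expressed in CEST (UTC+2).
CEST = timezone(timedelta(hours=2))
planned = [
    datetime(2026, 5, 26, 22, 30, tzinfo=CEST),  # 20:30 UTC, inside the window
    datetime(2026, 5, 27, 2, 0, tzinfo=CEST),    # 00:00 UTC, after the window
]
to_pause = [t for t in planned if starts_in_window("eu.cloud.talend.com", t)]
```

This only tests the planned start time. A run that starts before the window and is still executing when it opens would need a separate overlap check.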

To identify which region your tenant is in, see Accessing Talend Cloud applications.

To track further updates during the scheduled Qlik Cloud Maintenance, please visit our Qlik Cloud Status page. This blog post will be updated with additional information where necessary.

Thank you for choosing Qlik,

Qlik Support

-

Upcoming deprecation of Qlik Analytics charts in May 2027

Qlik constantly refines its Analytics, over time replacing old charts with new, modernized alternatives. These deprecations are announced well in advance and come with instructions on how to best replace these old charts, whether that is to use a new one, several new ones, or make use of new settings.

This blog post covers the deprecation of charts in May 2027 and offers you guidance on how to replace them.

What charts are being deprecated and when?

The following visualization bundle charts are up for deprecation in May 2027. Most have already been removed from the asset panel and are no longer a part of recent applications.

- The Bar and Area chart

- The old Bullet chart

- The old Heatmap chart

- The Button for navigation

- The Share button

- The Show/Hide container

- The old Tabbed container

- The Multi KPI

What do I use instead?

Since these are old charts, most are no longer in use. If you happen to still have a very old application and need to replace deprecated charts, see Visualization bundle > Deprecated charts for more information on what to use instead.

How do I find out if my apps are using the deprecated charts?

Qlik recommends reviewing your apps for old charts. Depending on your platform (Qlik Cloud or Client-managed), there are different methods you can deploy.

Qlik Cloud

Qlik Cloud administrators should use the Qlik Cloud Monitoring Apps to track the usage. The App Analyzer has a sheet dedicated to where deprecated charts are being used on a tenant in Qlik Cloud. The App Analyzer is based on usage events rather than scanning every app. Use the App Analyzer to find which apps and sheets have charts that need to be updated to newer and more modern alternatives. The easiest way to install and update the Qlik Cloud Monitoring Apps is to use the automation template. If you already have the App Analyzer, just remove the automation and install a new one to get the latest version of the App Analyzer.

Client-managed

For client-managed installations, use the Monitoring apps. The Content Monitor app has a sheet for tracking deprecated charts. At reload, the Content Monitor app scans every app in the installation in order to list all applications and sheets that are using charts that are being deprecated. It also lists the installed extensions and their deprecation status. The Monitoring apps are bundled with the Qlik Analytics installation. The first version with the new sheet will be included in the May 2026 release. If you want to track usage in prior versions, the deprecated chart usage scanner will also be available on the product download page.

Thank you for choosing Qlik,

Qlik Support

-

Qlik NPrinting - New Security Patches Available Now

A security issue has been identified in Qlik NPrinting, and patches have been made available. Details can be found in the Security Bulletin High Security fix for Qlik NPrinting (CVE-pending).

We've published two releases across the latest versions of Qlik NPrinting to patch the reported issues. All versions of Qlik NPrinting prior to and including these releases are impacted:

- Qlik NPrinting February 2025 SR5

No workarounds can be provided. Customers should upgrade Qlik NPrinting to a version containing fixes for these issues:

- Qlik NPrinting May 2026 IR

- Qlik NPrinting February 2025 SR 6

This issue only impacts Qlik NPrinting. Other Qlik products are NOT impacted.

All Qlik software can be downloaded from our official Qlik Download page (customer login required). Follow best practices when upgrading Qlik Sense.

Qlik provides patches for major releases until the next Initial or Service Release is generally available. See Release Management Policy for Qlik Software. Notwithstanding, additional patches for earlier releases may be made available at Qlik’s discretion.

The information in this post and Security Bulletin High Security fix for Qlik NPrinting (CVE-pending) is disclosed in accordance with our published Security and Vulnerability Policy.

Thank you for choosing Qlik,

Qlik Support

-

Not All Catalogs Are Governance. Not All Governance Is a Catalog

One question I get asked a lot is "We're evaluating Qlik alongside a standalone data governance catalog. How do you compare?"

It's a reasonable question. But the framing inside it, that you're choosing between Qlik and a standalone governance catalog, misses something important about what governance requires. So let me try to untangle it.

The question behind the question

Data intelligence platforms are built to balance two distinct problems, and most organizations have both. The first is context capture: building a unified metadata view to understand and optimize your data integration landscape. That means technical context, operational context, and governance context, all in one place. The second is context delivery: getting trusted context, with clear ownership and accountability, to the analytics, APIs, and AI agents that need it to produce real business outcomes.

Both problems matter. They're related. However, they aren't the same problem, and they don't call for the same solution.

Two Catalogs, One Trusted Foundation

Context capture is where most metadata catalogs live, and the focus is heavily technical: inventorying schemas, tables, and columns across the estate; harvesting metadata from cross-platform sources; tracing column-level lineage from origin to destination; and powering search and discovery so data engineers and platform teams can find the right asset and understand its dependencies. Beyond those core technical jobs, the same catalogs also support regulatory and audit needs with cross-domain lineage, enterprise-wide policy enforcement, and classification. Qlik's Talend Data Catalog is one example of such a catalog solution.

Context delivery requires a knowledge catalog that makes trusted data easy to discover, understand, and consume. In Qlik Talend Cloud, this includes a data product marketplace where users can find and request trusted data products, domain-driven ownership that enables teams to publish and manage data with clear accountability, rich business and technical context that helps both people and AI agents understand data, and usage intelligence that provides visibility into adoption and value. Together, these capabilities transform governed data into a consumable asset for analytics, AI, and business operations.

The two aren't redundant. They serve different roles in the same data organization. Having both is what moves you from "we have a governance program" to "our governance actually produces something useful."

But here's where I think the market conversation often stops too early.

Where Governance Has to Run

A standalone governance catalog typically answers a question about inventory: what data do we have, where did it come from, and what rules apply to it? It documents. It organizes. It certifies. What it can't answer is the question that matters most to data practitioners: is the data trustworthy for a given business use case?

That's where Qlik's approach is different. The knowledge catalog capabilities in Qlik Talend Cloud don't just surface what data products are available. They carry live trust signals with them. The Qlik Trust Score on a data product reflects what happened in the pipeline: accuracy, completeness, and timeliness, computed at runtime. Anomalies get flagged before bad data reaches a consumer. Trustworthiness isn't a label someone applied. It's a measurement the product makes continuously.

The full sequence looks like this. Discover and understand your data through active metadata, lineage, and semantic search. Trust and validate it with Qlik Trust Score for AI, which measures accuracy, completeness, consistency, and timeliness as a live computed signal. Govern and secure it through PII detection and classification, role-based access, and policies enforced at runtime. Steward and operationalize it through quality improvement cycles and continuous remediation. Then activate and deliver it: data products integrated with analytics and AI tools, with usage tracking so you can measure impact.

Context capture builds the foundation. Context delivery makes it useful. A standalone catalog only handles part of that sequence.

The Practical Takeaway

When someone asks whether to use Qlik's knowledge catalog capabilities alongside a standalone governance catalog, my honest answer is: it depends on whether you are solving for context capture, context delivery, or both.

If you're a data engineer or architect and solving for context capture — inventorying the estate, tracing lineage across your entire organizational estate, classifying assets, supporting audit and compliance — a metadata catalog can handle that work. If you're a business data steward or business user solving for context delivery — publishing trusted data products, enforcing contracts and SLAs, routing governed inputs to analytics and AI agents — you need a solution like Qlik Talend Cloud.

And if the real question is whether governance holds when data actually moves — whether SLAs are met, whether the Trust Score reflects what just ran in the pipeline — that's delivery work. No standalone catalog can answer it, and that has to come from the underlying integration and quality substrate that Qlik Talend Cloud provides.

In summary, context capture gives you the picture of your data. Context delivery puts that picture to work for the analytics, APIs, and AI agents that depend on it. Governance should not exist as a separate layer above the data stack. It must be natively integrated into every stage of the data lifecycle. Only then can organizations move beyond governance processes to deliver governance outcomes.

Don't just capture context; deliver it to drive your AI and agentic outcomes. Try Qlik Talend Cloud® free to see this in action.

-

Important update on SAP Data Access Restrictions and your Qlik Integration

Dear Qlik Customers,

In April 2026, SAP published a series of updates that will restrict your ability to extract certain SAP data using Qlik products. We are writing to explain what is changing, what it means for your Qlik integration, what you can do next, and how Qlik is responding.

SAP Announcement

SAP has recently updated SAP Note 3255746 and its API Policy, which together prevent customers from using the ODP-RFC interface to perform bulk data extractions to non-SAP systems. Here is what you need to know.

Impacted Qlik Products:

CDC connectivity via ODP is most at risk from the June 9th SAP security update, as it will be blocked. The main products affected are:

- Qlik Talend Cloud SAP ODP Connector

- Qlik Replicate SAP ODP Endpoint

Other impacted products that also offer ODP functionality are:

- Qlik Analytics (Qlik Sense / QlikView) SAP ODP Connector

- Talend Studio Component tSAPODPInput

The following Qlik Products do not use the ODP-RFC interface and therefore will not be impacted by the June SAP Security update:

- Qlik Gold Client

What's Changing and When:

- June 9, 2026: SAP will release a security patch that will begin blocking “unrestricted” calls from non-SAP applications, including affected Qlik connectors, that rely on the ODP-RFC interface.

- Temporary revert option: In SAP’s Operational Data Provisioning (ODP) Update Public FAQ, it is stated that “SAP provides a time-limited option to revert to unrestricted ODP-RFC calls. This flexibility will expire on December 2026.” This implies that SAP customers may be able to temporarily roll back this restriction, but only until December.

Recommended Next Steps

- Review the SAP Notes: Independently review SAP Note 3255746 (reclassification notice) and SAP Note 3439624 (self-assessment tool) via the SAP Support Portal at support.sap.com. Address your concerns and feedback through the appropriate SAP channels.

- Contract Review: Review your customer agreement and the API Policy, updated in April, 2026, to evaluate SAP’s ability to make unilateral changes to your data access rights, and to require additional purchases and/or charge egress fees.

- Audit your environment: SAP has released a self-assessment tool (SAP Note 3439624, available April 13, 2026) to help you identify all current ODP-RFC usage across your systems.

- The Qlik SAP ODP Connector will show as “unpermitted”, which means it will be blocked by the SAP June Security patch

- The Qlik SAP OData Connector will show as “permitted”, which implies it will continue to work with the SAP June Security patch

- Evaluate Qlik’s Alternative SAP Connectors: Qlik supports several methods for SAP connectors, including OData and SAP Extractors. We continue to enhance our connectors and are looking to release a new SAP connector due later this year, which may prove a viable alternative.

- Work with Qlik: Reach out to your Qlik representative before implementing the SAP June Security patch, so we can partner with you on alternate Qlik solutions and ways to address these SAP restrictions. Together, we can evaluate your specific environment, understand your connectivity needs, and align on the best path forward before the December 2026 deadline.

Our Commitment

You should be in control of your business-critical data, wherever it creates value. Without interoperable architectures, SAP customers like you face higher costs, performance issues, and less freedom to choose, including loss of flexibility in implementing AI.

Qlik already supports alternatives to ODP and is actively developing additional migration paths and solutions to preserve your flexibility through this transition and beyond. We will continue to work on viable alternatives to our ODP endpoints and help you navigate this change on your terms, not SAP's. To learn more about how Qlik can support your specific environment, please reach out to your Qlik representative.

Additional Resources

- SAP’s Operational Data Provisioning (ODP) Update Public FAQ

- SAP Note 3255746 (reclassification notice) - Accessible via the SAP Support Portal at support.sap.com

- SAP Note 3439624 (self-assessment tool) - Accessible via the SAP Support Portal at support.sap.com

- “Open” on Whose Terms? What SAP Sapphire Won't Say. by Matt Hayes – Qlik GM Data Business Unit

This communication reflects Qlik's current interpretation of SAP's restrictions, which are outside our control and subject to change. Customers should consult SAP's official communications and seek independent guidance on operational and legal impact. Roadmap statements are forward-looking and not commitments; disruptions arising from SAP's decisions do not constitute a defect in Qlik's products or services.

-

Qlik and Starburst: Turn Fragmented Enterprise Data Into Governed, AI-Ready Inte...

Qlik recently announced its partnership with Starburst, combining Qlik data integration, replication, and analytics with Starburst federated query, context, and agentic capabilities to give you more choice across hybrid data estates.

For the full press release, see Qlik and Starburst Turn Fragmented Enterprise Data Into Governed, AI-Ready Intelligence.

We've also prepared a set of five articles for you to help get you started:

- Qlik Cloud Analytics and Starburst Galaxy

- Qlik Cloud Analytics and Starburst Enterprise

- Talend Studio and Starburst

- Qlik Sense Enterprise on Windows and Starburst Galaxy

- Qlik Sense Enterprise on Windows and Starburst Enterprise

Thank you for choosing Qlik,

Qlik Support

-

Upcoming changes to how CSS for custom Qlik Analytics themes is handled

Edited 2nd of June, 2026: Updated Qlik Cloud date from August 18th.

Edited 3rd of June, 2026: Added ?features=CLIENT_TLV_1804_LESS_CARDS,TLV_1804_NEW_DEFAULT_THEME URL for reviewing themes, which replaces the previous URL; clarified top level selector warning.

Qlik Cloud will now see the changes on or after August 18th, 2026.

Qlik is introducing changes to how custom themes in Qlik Analytics applications are handled, which may impact your CSS-styled themes if they include unsupported CSS modifications. This also applies to other means of modifying the CSS, such as the sheet input box, the Multi KPI CSS input, or any other 3rd party extension that allows CSS input.

These changes will impact both Qlik Cloud Analytics and Qlik Sense Enterprise on Windows.

For more information about custom themes, see Uploading and managing themes.

Why are these changes being made?

These changes improve how theming works in Qlik Analytics applications, which will enable us to deliver better-looking dashboards and generally enhanced theming.

What is being changed?

We’re restructuring theme settings:

- Removal of base card CSS overrides

- A theme with the _cards:true setting gets the base styles imported as JSON into the theme

- Removal of extra title padding for objects without title in a cards theme

- Replace the following with theme JSON settings:

- padding: applied on object level; the gap between border and object

- margin: applied on sheet level; the gap between objects

And we are adding new themes:

- Foundation (default)

- New Horizon

How can I verify if my themes are affected?

- Open any app that uses your custom theme and append the following to the URL: ?features=CLIENT_TLV_1804_LESS_CARDS,TLV_1804_NEW_DEFAULT_THEME

This will enable the flag to run your themes with the changes before they are released.

- Verify that everything looks as you expect it to

- Review your browser's developer tools for any JavaScript errors
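If you need to review several apps, the preview URL can be built programmatically. This is a minimal sketch using only Python's standard library: the flag names come from this post, while the function name `with_theme_preview_flags` and the example tenant URL are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Feature flags quoted in this post for previewing the theme changes.
FLAGS = "CLIENT_TLV_1804_LESS_CARDS,TLV_1804_NEW_DEFAULT_THEME"


def with_theme_preview_flags(app_url: str) -> str:
    """Return app_url with the features query parameter set to FLAGS."""
    parts = urlsplit(app_url)
    # Keep existing parameters, but replace any earlier features value.
    query = [(key, value)
             for key, value in parse_qsl(parts.query, keep_blank_values=True)
             if key != "features"]
    query.append(("features", FLAGS))
    # safe="," keeps the comma between the two flag names unescaped.
    return urlunsplit(parts._replace(query=urlencode(query, safe=",")))


# Hypothetical app URL; substitute the URL of an app that uses your theme.
preview_url = with_theme_preview_flags("https://tenant.example.com/sense/app/abc123")
```

Note that this sketch overwrites an existing features parameter rather than merging flag lists, which is a simplification.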

Future-proof your themes

We’ve compiled a list of supported styling options for you that can already replace the need for custom CSS. See Obsolete CSS modifications.

Additionally, see the table below for an overview of the new theme properties that you can use in your theme instead of CSS. You will first need to enable the /feature/CLIENT_TLV_1804_LESS_CARDS flag to see them in effect in the application.

Theme Option | Path in theme JSON | Example
Sheet background | sheet.backgroundColor | "sheet": { "backgroundColor": "#f2f2f2" }
Sheet margin | sheet.margin | "sheet": { "margin": "10px" }
Object paddings | object.padding | "object": { "padding": "20px" } // or "10px 10px 5px 10px"
Borders | object.borderWidth | "object": { "borderWidth": "1px" }
Borders | object.borderColor | "object": { "borderColor": "#d9d9d9" }
Borders | object.borderRadius | "object": { "borderRadius": "3px" }
Shadows | object.shadow.boxShadow | "object": { "shadow": { "boxShadow": "0px 4px 10px 0px", "boxShadowColor": "#d9d9d9" } }
Shadows | object.shadow.boxShadowColor | "object": { "shadow": { "boxShadow": "0px 4px 10px 0px", "boxShadowColor": "#d9d9d9" } }

To give you more context: Document Object Model (DOM) selectors in custom CSS are not a supported pattern. While they can be helpful for customizing the look and feel of applications, the DOM is subject to change at any time. To provide your feedback, raise tickets on the Qlik Ideation portal when you need custom CSS customization. This helps prioritize supported customization options to add to the platform.
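As an illustration of replacing CSS with theme settings, the properties from the table above can be combined into a single theme definition. The sketch below is an assumption-laden example, not an official template: the property paths and values are taken from the table, the `borderRadius` value assumes the 3px shown there is meant for that property, and the base theme content and the helper `merge_theme` are hypothetical.

```python
import copy
import json

# Overrides built from the property paths listed in this post.
theme_overrides = {
    "sheet": {"backgroundColor": "#f2f2f2", "margin": "10px"},
    "object": {
        "padding": "20px",
        "borderWidth": "1px",
        "borderColor": "#d9d9d9",
        "borderRadius": "3px",  # assumed value, see the lead-in
        "shadow": {
            "boxShadow": "0px 4px 10px 0px",
            "boxShadowColor": "#d9d9d9",
        },
    },
}


def merge_theme(base: dict, overrides: dict) -> dict:
    """Recursively merge overrides into a copy of base, leaving base unchanged."""
    merged = copy.deepcopy(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_theme(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical existing theme; a real theme file will contain more settings.
base_theme = {"_cards": True, "object": {"padding": "10px"}}
theme = merge_theme(base_theme, theme_overrides)
theme_json = json.dumps(theme, indent=2)
```

Writing `theme_json` to your theme's JSON file is left out here, since the file name and location depend on how the theme is packaged.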

Do not use top-level selectors such as qv-client or qv-card. These selectors are planned for removal in future versions. See CSS best practices for Sense themes for more information.

An example theme selector could be:

.qv-client.qv-card #grid .qv-object-wrapper .qv-object to change the border of all objects on sheets. When the change is made, the top-level .qv-card class will be removed from the <body> tag, which breaks the selector.

It would need to change to: .qv-client #grid .qv-object-wrapper .qv-object to work after the update.

However, it still uses the top-level .qv-client, which, while not removed in this update, will be removed in the future and is not present in certain qlik-embed scenarios. That leaves us with #grid .qv-object-wrapper .qv-object.
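For themes with many rules, this rewrite can be scripted. The following is a minimal sketch that strips the two top-level classes named in this post from a selector string. The function name `strip_top_level_selectors` is hypothetical, and the approach is a plain regular expression rather than a CSS parser, so it only suits simple descendant selectors like the example above.

```python
import re

# Matches .qv-client or .qv-card as whole class names only, so classes
# such as .qv-card-header are left untouched.
TOP_LEVEL = re.compile(r"\.(?:qv-client|qv-card)(?![\w-])")


def strip_top_level_selectors(selector: str) -> str:
    """Remove the top-level classes and normalize the remaining whitespace."""
    cleaned = TOP_LEVEL.sub("", selector)
    return " ".join(cleaned.split())


original = ".qv-client.qv-card #grid .qv-object-wrapper .qv-object"
rewritten = strip_top_level_selectors(original)
# rewritten == "#grid .qv-object-wrapper .qv-object"
```

Selectors that use combinators such as `>` directly after a top-level class would need manual review, since removing the class leaves a dangling combinator.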

In summary, if you absolutely still need to use custom CSS, remove the top-level selectors.

What action do I need to take and when?

Qlik Cloud will see the changes on or after August 18th, 2026, while Qlik Sense Enterprise on Windows will be aligned in the November 2026 release.

While we do not expect your themes to be affected, we recommend testing them as early as possible. See the How can I verify if my themes are affected? section.

If you have any questions, we're happy to assist. Reply to this blog post or take your queries to our Support Chat.

Thank you for choosing Qlik,

Qlik Support

-

Circular Dendrogram

AnyChart

A circular dendrogram lays out a hierarchy as a radial tree of explicit parent-child connections, wide and deep, on a single sheet. See how it makes a large hospital's org structure readable at a glance.

Discoveries

Take in 8 divisions, 42 departments, 84 services, and 214 staff in one view. Each top-level branch gets its own color and passes it down to its descendants, so the structure is easy to follow. Click a node to light up its path to the root, with the selection cross-filtering the whole sheet.

Impact

A hands-on walkthrough of all major features in action: click selections, chain mode, label formats, coloring, grouping options, tooltips, and more.

Audience

Qlik developers and BI consultants looking to visualize org charts, product taxonomies, file structures, and any nested-category data in their dashboards.

Data and advanced analytics

Built with the Circular Dendrogram extension for Qlik Sense, released in May 2026. Based on fictional hospital organization data.

-

From Qualifications to AI: Recent Student Learning Experiences at the University...

Preparing Students for the Qlik Business Analyst Qualification at the University of Worcester

At the University of Worcester, students recently participated in a two-day Qlik Business Analyst Qualification Bootcamp designed to help them build confidence with analytics concepts while preparing for the Qlik Business Analyst Qualification.

The bootcamp was led by Qlik Luminary Nick Seagrave, who generously volunteered his time and expertise to support the students throughout the event. The initiative was also supported by Peter Clews, Lecturer in Web Applications at the University of Worcester, and made possible through the collaboration of Richard Wilkinson, Head of Computing, whose support helped bring the idea from concept to reality.

Throughout the two-day session, students worked through key business analytics concepts, explored data visualization best practices, and gained hands-on experience building dashboards and analyzing data with Qlik.

The highlight came on the second day, when all students who attended the bootcamp successfully passed the Qlik Business Analyst Qualification and earned their qualification badges.

Beyond earning a qualification, the students gained practical analytics skills that can help strengthen their employability and demonstrate their capabilities to future employers. As organizations continue to invest in data-driven decision-making, experience with analytics tools and recognized industry qualifications can provide students with a valuable advantage as they enter the job market.

The bootcamp also highlighted the value of collaboration between academia and industry. By combining the expertise of university educators with support from Qlik professionals and community members, students were able to gain both academic and practical perspectives on modern analytics.

Exploring Advanced Predictive Analytics and AI at AGH University of Krakow

While the Worcester bootcamp focused on business analytics foundations and qualifications, a recent session in Poland took students into some of the more advanced areas of modern analytics and artificial intelligence.

Working with AGH University of Krakow, the Qlik Academic Program delivered an advanced hands-on workshop for master's students interested in predictive analytics and AI.

The session was led by Piter Harb, Senior Technical Trainer at Qlik, and organized in collaboration with Janusz Opiła and his colleagues at AGH University of Krakow. Janusz teaches a variety of courses in business informatics, advanced analytics, and AI, including master's-level modules, and is passionate about connecting students with practical technologies and real-world applications.

During the workshop, students explored advanced analytics concepts through practical exercises and demonstrations using Qlik's latest capabilities. Topics included predictive analytics, artificial intelligence, advanced data visualization techniques, and Model Context Protocol (MCP), providing students with exposure to technologies that are increasingly shaping the future of analytics.

Rather than focusing solely on theory, the session emphasized practical application, allowing students to see how AI-driven analytics can be used to generate insights, support decision-making, and solve complex business challenges.

The feedback from both students and educators was extremely positive, highlighting the value of providing direct access to industry professionals and cutting-edge analytics technologies as part of the learning experience. The university has already expressed interest in exploring additional collaboration opportunities, demonstrating the growing importance of advanced analytics and AI skills within higher education.

Supporting Students Across the Analytics Journey

Although these two sessions focused on very different topics, they shared a common objective: helping students build skills that are relevant in today's increasingly data-driven world.

Whether supporting students preparing for an industry qualification or introducing master's students to advanced predictive analytics and AI concepts, the Qlik Academic Program remains committed to helping educators provide engaging, practical learning experiences that connect academic knowledge with real-world application.

A special thank you to Nick Seagrave, Richard Wilkinson, Peter Clews, Piter Harb, Janusz Opiła, and everyone involved in making these sessions possible.

We look forward to continuing to support educators and students across EMEA through workshops, guest lectures, qualifications, and hands-on learning opportunities throughout the year.

Interested in bringing Qlik into your classroom?

The Qlik Academic Program provides free access to Qlik Cloud Analytics, Qlik Talend Cloud, AI and machine learning capabilities, learning resources, qualifications, workshops, and educator support for accredited universities worldwide.

Learn more and join the program today: qlik.com/academicprogram

-

New Qlik Analytics mobile application release: access controls and Intune integr...

An update is being deployed to Qlik Cloud tenants starting May 28th (today), offering improved governance of the Qlik Analytics mobile app. See Setting permissions for the Qlik Analytics mobile app.

What does the update include?

A new version of the Qlik Analytics mobile app is now available for download (in app stores). In addition, Tenant Administrators can now manage user access using three scopes:

- Allowed (standard mobile app access)

- Not Allowed (mobile app access denied)

- Allowed with Intune (mobile access with mandatory Intune enrollment)

In addition to access controls, these scopes strengthen the Microsoft Intune integration through a new server-side control for MAM deployment, replacing the previously user-controlled MAM toggle.

What does this mean for me?

The update takes effect once users update to the latest version of the mobile app. For most existing users, there will be no impact, as the default scope is set to Allowed, a control point not available in the previous release.

If you use Qlik Analytics and Intune

The following two things need to be taken into consideration:

- The solution now requires Microsoft Edge as the browser for the Qlik Cloud authorization flow.

- If you have deployed the Qlik Analytics app via MDM Intune, you will need to modify the scope for those users to be Allowed with Intune.

Some users may experience authentication issues after updating to the new version of the mobile app if this configuration is not completed by the Tenant Administrator.

Reference the configuration specifications for more information:

- Setting permissions for the Qlik Analytics mobile app

- Securing and configuring the Qlik Analytics mobile app with Microsoft Intune

Thank you for choosing Qlik,

Qlik Support

-

Join Us Live! Q&A with Qlik: Qlik Talend Cloud Data Integration

Don't miss our next Q&A with Qlik! Pull up a chair and chat with our panel of experts to help you get the most out of your Qlik experience.

Not able to make it live? Don't worry! All registrants will get a copy of the recording the following week.

See you there!

Qlik Global Support

-

How Talend Routes Made the Qlik Games Live Data Playground Possible

At Qlik Connect 2026, the Qlik Games turned a conference into a live data playground. Every golf swing, bike sprint, and hockey goal fed real-time leaderboards and AI trading cards on big screens across the venue. At the heart of the solution was a deceptively simple but powerful toolkit: Qlik Talend Routes.

Routing excels in a variety of roles, such as API orchestration and microservices messaging, but one of its most transformative is bridging “tricky” sources that analytics platforms simply can’t reach on their own. That’s exactly what we needed here. Two very different technical headaches, one flexible routing layer, and clean, real-time data flowing into Qlik Open Lakehouse so the rest of the platform could work its magic.

The Bike Challenge: High-Velocity Telemetry Trapped in a Time-Series Database

Bike sensor data from ANT+ devices streamed straight into InfluxDB — a time-series store that no analytics application speaks natively in real time. The Talend Route listened for new events, enriched each one with rider and device context on the fly, and pushed the results forward. In milliseconds, clean, analytics-ready records were landing in Qlik Open Lakehouse via Kinesis, continually populating live leaderboards. What started as raw, high-velocity telemetry became contextualized, queryable data the moment it hit the lakehouse.
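The enrichment step can be pictured with a small sketch. This is not the actual Talend Route; it only illustrates the idea of joining each raw reading with rider and device context before forwarding it. The field names, the `DEVICE_CONTEXT` lookup table, and the newline-delimited JSON output format are all assumptions made for the example.

```python
import json
from typing import Optional

# Hypothetical lookup table: ANT+ device id -> rider and bike context.
DEVICE_CONTEXT = {
    "ant-1001": {"rider": "Rider A", "bike": "Bike 1"},
}


def enrich(event: dict, context: dict) -> Optional[dict]:
    """Attach rider/bike context; return None for unknown devices."""
    extra = context.get(event.get("device_id"))
    if extra is None:
        return None
    return {**event, **extra}


def to_stream_record(event: dict) -> bytes:
    """Serialize one enriched event as a newline-terminated JSON record."""
    return (json.dumps(event, sort_keys=True) + "\n").encode("utf-8")


# A made-up sensor reading, enriched and serialized for a stream.
reading = {"device_id": "ant-1001", "power_w": 312, "cadence_rpm": 96}
enriched = enrich(reading, DEVICE_CONTEXT)
record = to_stream_record(enriched)
```

Doing the join at this point means the downstream tables never need to know about device ids; they only ever see records that already carry the rider.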

The Golf Challenge: The JSON File That Refused to Behave

The GSPro golf simulator wrote every stroke into a single .dat file — a JSON array that was completely overwritten after each swing. It was a moving target, not a clean event stream. The Talend Route watched the file for changes, intelligently split the array into individual strokes, filtered out duplicates with idempotency, and enriched each new record with golfer context. Clean JSON records then landed in dated, timestamped S3 folders for Qlik OLH to ingest. What began as a messy, stateful file became a reliable stream of enriched events the platform could trust.
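The split, de-duplicate, and partition logic described above can be sketched in a few lines. Talend Routes are built on Apache Camel, so a real route would more likely rely on Camel's splitter and idempotent consumer patterns; the Python below is only an illustration of the same logic. The stroke fields, the content-hash idempotency key, and the `golf/dt=...` key layout are assumptions, not details taken from the Qlik Games implementation.

```python
import hashlib
import json
from datetime import datetime, timezone


def stroke_key(stroke: dict) -> str:
    """Stable fingerprint of a stroke, used as the idempotency key."""
    canonical = json.dumps(stroke, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def new_strokes(file_text: str, seen: set, golfer: dict) -> list:
    """Split the rewritten JSON array and return only unseen, enriched strokes."""
    fresh = []
    for stroke in json.loads(file_text):
        key = stroke_key(stroke)
        if key in seen:
            continue  # already forwarded on an earlier rewrite of the file
        seen.add(key)
        fresh.append({**stroke, "golfer": golfer, "stroke_id": key})
    return fresh


def object_key(record: dict, now: datetime) -> str:
    """Build a dated, timestamped object key (the layout is hypothetical)."""
    return f"golf/dt={now:%Y-%m-%d}/{now:%H%M%S}_{record['stroke_id'][:12]}.json"


# Simulate two rewrites of the file: the second repeats the first stroke.
seen = set()
golfer = {"name": "Example Golfer"}
first = new_strokes('[{"hole": 1, "carry": 210}]', seen, golfer)
second = new_strokes(
    '[{"hole": 1, "carry": 210}, {"hole": 1, "carry": 95}]', seen, golfer
)
key = object_key(second[0], datetime(2026, 5, 1, 12, 30, 45, tzinfo=timezone.utc))
```

After the second rewrite only the new stroke comes back, which is the point of the idempotency check: the file can be overwritten as often as the simulator likes without producing duplicate events. One limitation of hashing the content is that two genuinely identical strokes would collapse into one, so a real source-provided stroke id would be a safer key if one exists.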

Two completely different technical problems — one a high-velocity database listener, the other a file-watching, array-splitting challenge. The same routing layer handled both with ease, delivering the exact same outcome: clean, real-time, context-rich data that Qlik Open Lakehouse could immediately turn into live leaderboards, recent-attempt visuals, and AI trading cards.

The Power of Routing

By absorbing most of the source complexity, Talend Routes let the rest of the architecture shine. No custom one-off scripts. No forcing the analytics layer to become an ETL engine. Just flexible integration that made “impossible” sources behave like well-behaved, contextualized events on standard channels.

This matters more than it first appears. In a modern open lakehouse architecture — Apache Iceberg on S3, decoupled storage and compute, spot-instance economics — the routing layer becomes the quiet enabler that lets every other component do what it does best. The bike route turned a time-series database into a streaming source. The golf route turned a constantly-rewritten file into partitioned, idempotent events. Both fed the same downstream system without any special handling on the analytics side. That’s the real leverage: routing doesn’t just move data; it normalizes chaos so the platform can deliver speed, scale, and cost efficiency at the same time.

Conclusion

Key Takeaways

- When real-time sources are inaccessible or awkwardly formatted, routing is often the cleanest bridge.

- Different sources deserve different patterns — the right tool lets you choose without compromising the destination.

- Enrichment at the routing layer keeps downstream systems simple and fast.

- Small capabilities (idempotency, file streaming, dated partitioning) deliver outsized reliability and value.

The Bottom Line

Talend Routes turned sensor data that no analytics application could have consumed directly into the rhythm of Qlik Connect 2026. The same flexible approach scales far beyond conferences — it’s how modern data teams turn messy, real-world sources into governed, analytics-ready pipelines at production scale. Whether you’re dealing with device streams, legacy files, or anything else that feels just out of reach, routing could be the answer.

More about the Qlik Games:

-

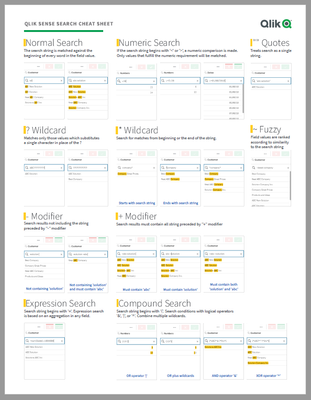

Qlik Sense Cheat Sheet version 2.0

It was back in 2015 when I first published the original Qlik Sense Search Cheat Sheet. Since then, thanks to many individual contributors here in the Community, the Search Cheat Sheet has gone through several transformations to make it more complete and accurate.

@afurtado wrote me an email a few weeks ago because he was interested in getting the document localized for the Brazilian folks out there. In addition to thanking him for his contribution and sending him the file, I decided that it was about time to give the Search Cheat Sheet an update.

Today I want to introduce a new version of the document. I added the compound search section to it (@jayanttibhe thanks for the tip), and I redesigned and rationalized the position of each element for better comprehension.

As an extra, I made the document multilanguage-ready. So, if someone wants to translate the Cheat Sheet into another language (it is currently available in English, Spanish and Portuguese), please let me know in the comments section and I'll gladly tell you how to help us.

Hope you like it, and please share it.

Arturo

Updates:

Feb 28: French version thanks to @arychener

Feb 25: Italian added thanks to @AntonioCostantino. Russian translation updated

Feb 13, 2020: The cheat sheet now includes the ^ wildcard to be consistent with Qlik Help. Russian language added thanks to the contribution of @martynova

Jan 29, 2020: German language added thanks to the contribution of @g_mitschke

-

Action required: Snowflake authentication migration guide for Qlik Analytics fol...

Snowflake is rolling out stronger authentication requirements as part of their platform security initiative. Starting May 2026, new connections using only username and password will no longer be accepted, and all existing password-only connections will stop working between August and October 2026.

For the full rollout schedule, see Snowflake's authentication enforcement timeline.

If your Qlik Sense Cloud Analytics, Qlik Sense Enterprise on Windows, or QlikView environment connects to Snowflake, you will need to update those connections to use either key-pair authentication or OAuth before this change takes effect.

Who does this apply to?

This applies to any Qlik Cloud Analytics, Qlik Sense Enterprise on Windows, and QlikView connection to Snowflake, where the authentication method is set to Username and Password only. Connections that already use key-pair authentication or OAuth do not need to change.

To check your connections, review the Data connections and look for any Snowflake connections using username and password authentication.

What are my migration options?

There are two supported authentication methods you can migrate to.

Key-Pair Authentication

Key-pair authentication uses an RSA private/public key pair assigned to the Snowflake user account. It is the most straightforward migration path and does not require an identity provider. This option is recommended for most customers.

For setup steps, see Snowflake key-pair authentication documentation and the Qlik Snowflake connector guide.

OAuth

OAuth integrates with your existing identity provider (such as Okta or Azure AD) and is well-suited for organisations that want centralised access control and token-based authentication. It requires an OAuth security integration to be configured in Snowflake.

See the Qlik Snowflake connector guide and Snowflake OAuth documentation.

OAuth support for Snowflake was introduced to Qlik Sense Enterprise on Windows with our May 2026 release.

If you have any questions, we are, as always, happy to assist. Reply to this blog post or take your queries to our Support Chat.

Thank you for choosing Qlik,

Qlik Support

-

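[On-Demand] Hints for Improving Public Services Through Data Integration

Disasters intensified by climate change, labor shortages caused by population decline, aging infrastructure, and rising cyber risk: the environment surrounding public administration is changing faster than ever. Faced with these compound social challenges, government staff are expected to respond quickly and accurately with limited personnel.

This web seminar looks at overseas examples, including the San Francisco Municipal Transportation Agency (SFMTA), smart water metering in France (m2ocity, now Veolia), and crisis management at France's national security ministry, and explains how connecting fragmented data improved operational efficiency and service quality. It offers hints that are useful for government digital transformation (DX) in Japan. Note: you can join and watch from anywhere on a PC, tablet, or smartphone.

Watch now

-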

Financial Analysis

As a follow-up to my previous blog post titled Finance Report with Waterfall Chart, I wanted to share an awesome demo that showcases financial reporting visualizations including a profit & loss statement with a waterfall chart. Qlik's Dennis Jaskowiak and Ekaterina Kovalenko, and partner Dawid Marciniak from HighCoordination, created the Financial Analysis demo based off of Jedox data, incorporating many enhancements to the straight table and pivot table. In addition to the waterfall chart, they use inline SVG to create lollipop charts and bar charts in financial statements.

Here is a look at some of the sheets:

The Dashboard provides a high-level overview of profit and loss, cash flow and liquidity. On this sheet, view the use of inline SVG bar charts and lollipop charts.

The P&L sheet provides a more detailed look at profit and loss.

The Cost Center sheet uses the pivot table to show sales costs.

Find a detailed look at cash flow on the Cash Flow sheet.

These are just some of the sheets you will find in the Financial Analysis demo. If you are looking for appealing ways to visualize your financial data while keeping it concise and clean, download the Financial Analysis demo (QVF) here.

Thanks,

Jennell

-

Why Summer Is the Best Time to Learn Analytics and AI

During the school year, students often juggle lectures, exams, projects, part-time jobs, and extracurricular activities. Summer provides breathing room to focus on learning something new without the stress of a full academic workload.

Instead of studying for multiple classes at once, students can spend dedicated time:

- Exploring analytics platforms

- Learning data visualization techniques

- Understanding AI concepts

- Working on hands-on projects

- Experimenting with real-world datasets

This freedom allows students to learn at their own pace while building confidence with technologies that are shaping the future of work.

Self-Paced Learning Builds Real Skills

One of the biggest advantages of learning analytics and AI over the summer is flexibility. Students can choose what interests them most and learn in a way that fits their schedule.

Whether spending a few hours a week or diving into full-scale projects, self-paced learning helps students:

- Develop technical and analytical thinking

- Practice solving real-world problems

- Build dashboards

- Gain experience with cloud-based analytics tools

- Learn modern AI-driven workflows

Hands-on learning is especially important in analytics because employers increasingly value practical experience alongside academic knowledge.

With access to modern cloud platforms like Qlik Cloud Premium Analytics through the Qlik Academic Program, students can gain experience using the same enterprise-level technologies used by organizations worldwide.

Analytics and AI Help Students Differentiate Themselves

In a crowded job market, students are always looking for ways to stand out. Learning analytics and AI demonstrates initiative, curiosity, and adaptability, qualities employers highly value.

Students who spend the summer building these skills can strengthen:

- Resumes

- LinkedIn profiles

- Portfolios

- Internship applications

- Graduate school applications

Projects built during the summer can also become conversation starters during interviews, helping students showcase both technical ability and problem-solving skills.

As AI continues transforming industries, students who understand how to work alongside intelligent technologies will be better positioned for long-term career success.

The Future Belongs to Data-Literate Students

Analytics and AI are no longer niche technical skills reserved for specialists. They are becoming foundational skills across nearly every industry.

Summer provides students with a unique opportunity to explore these technologies in a practical, accessible, and career-focused way. Whether learning data visualization, experimenting with AI-powered analytics, or building a personal project, every step helps prepare students for the future workforce.

The students who invest in learning analytics and AI today will be the ones leading tomorrow’s data-driven world.

If you are interested in learning more about the Qlik Academic Program, feel free to contact me at brittany.fournie@qlik.com. More information about the program, including how to apply, can be found at qlik.com/academicprogram.

-

Qlik Talend Cloud Migration Toolkit (QTCMT)

Migrating a Talend Studio project to the cloud is no small undertaking, but we are here to help.

Brought to you by Savraj Rakkar, Benoit Barranco, and Sébastien Leduc.

The Qlik Talend Cloud Migration Toolkit (QTCMT) is a structured migration solution built for teams running Talend on-premises who are looking to move to Qlik Talend Cloud and upgrade their project code to Talend 8. Whether the destination is the cloud or an on-premises environment, the Qlik Talend Cloud Migration Toolkit provides a clear, automated path to get there.

The Qlik Talend Cloud Migration Toolkit also extends beyond Talend Administration Center (TAC), offering an inventory view of Qlik Enterprise Manager (QEM) and Stitch environments so teams can see their full data integration footprint in one place.

The Core Capabilities

Talend Administration Center

- Register a Talend Administration Center server and browse the complete asset inventory

- Generate assessment reports to understand the migration scope before committing to any changes

- Migrate administrative assets (users, groups, projects, authorizations) and runtime assets (tasks, triggers, plans), including artifact uploads to Nexus 3 or JFrog Artifactory, CVE report generation, and Talend 8 code upgrades

- Automate on-premises upgrades to Talend 8 without a cloud migration

- Run project quality checks to surface best-practice violations and a broad range of code issues, with exportable reports

Qlik Enterprise Manager and Stitch

- View Enterprise Manager and Stitch inventories directly within QTCMT to understand the broader environment before migrating a single asset

Why use the Qlik Talend Cloud Migration Toolkit?

- Automated, phased workflows reduce migration effort significantly, cutting weeks or months off a manual migration timeline in many cases

- Full visibility into Talend Administration Center, Qlik Enterprise Manager, and Stitch environments is available before any assets are touched

- Asset configurations, parameters, authorizations, and project code are preserved throughout the migration process

- The phased approach allows assets to be migrated in stages, reducing risk to production environments and giving teams control over the pace

- Teams not yet ready to migrate can still use Qlik Talend Cloud Migration Toolkit to assess their current environment and plan ahead using the assessment and project quality tools

Why Move to Qlik Talend Cloud?

Moving to Qlik Talend Cloud means less time managing servers, upgrades, and infrastructure, and more time focused on actual work. New features ship monthly, so the platform improves continuously without any effort from the team. Data stays current across connected systems in real time, and the platform scales as needs grow. Teams also benefit from unlimited users and execution engines, backed by a generous initial capacity band.

Getting Started

The best first step is installing the Qlik Talend Cloud Migration Toolkit through the Qlik Help Migration Center and connecting it to an existing Talend Administration Center, Qlik Enterprise Manager, or Stitch environment to get an immediate picture of what is there. Full installation steps are available in the Qlik Talend Cloud Migration Toolkit documentation.

If you have any questions, we're happy to assist. Reply to this blog post or take your queries to our Support Chat.

Thank you for choosing Qlik,

Qlik Support

-

Developing Word and PowerPoint reports with the expanded Microsoft O365 Add-in f...

Qlik's O365 Add-in offering for report developers has expanded with two new add-ins to enable Word document and PowerPoint presentation analytic reports.

Report developers can now:

- Compose document or portrait style reports with data from a Qlik Sense app with an output format of .docx or .PDF (using the new Microsoft O365 Add-in for Word).

- Create PowerPoint presentation analytic reports from a Qlik Sense app with an output of .pptx or .PDF (using the new Microsoft O365 Add-in for PowerPoint).

How do I get started?

Qlik add-ins for Microsoft Office are installed using a manifest file. If you are using an existing manifest, you will need to download and deploy an updated file to access the new add-ins. See the deployment guide Deploying and installing Qlik add-ins for Microsoft Office.

The manifest covers all three productivity tool Add-ins. They cannot be deployed individually.

Notes on the PowerPoint add-in use

Qlik’s integration testing of Microsoft PowerPoint APIs shows that the O365 Add-in for PowerPoint can be unstable or slow at times. Our investigation with Microsoft reveals this to be a known challenge; some APIs on web vs desktop can result in different behaviors.

If your report developers have difficulty with the online PowerPoint Add-in, contact Qlik Support to open a case with us.

While we investigate the integration with Microsoft to determine if a solution is possible, consider developing your reports with the desktop version of PowerPoint.

For Qlik NPrinting Customers

- Qlik NPrinting May 2026 Initial Release added support for exporting compatible Word and PowerPoint report templates in QDOCX and QPPTX formats for import into Qlik Cloud Reporting.

Please note that only single-connection templates can be exported.

See the Release Notes for details.

- Please note that due to Microsoft API limitations, it is not possible to embed data into an Excel table within the PowerPoint template to then produce native PowerPoint visualizations in the report. Refer to the help documentation for any other Word and PowerPoint report authoring limitations. See Qlik Reporting Service specifications and limitations for details.

If you have any questions, we're happy to assist. Reply to this blog post or take your queries to our Support Chat.

Thank you for choosing Qlik,

Qlik Support