Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

Recent Documents

-

How to Import an Excel Template

This guide walks you through importing an existing Excel template using the Spreadsheets extension in Qlik Sense (presented at Qlik Connect 2025).Chec... Show More -

Excel-Style Spreadsheets Inside Qlik Apps: How to Add [Tutorial]

This guide walks you through building a feature-rich spreadsheet that looks and feels like Excel in Qlik Sense using the Spreadsheets extension (first... Show MoreThis guide walks you through building a feature-rich spreadsheet that looks and feels like Excel in Qlik Sense using the Spreadsheets extension (first demoed at Qlik Connect 2025).

Check it out as a video tutorial or keep reading:

Step 1: Add the Spreadsheets Extension

- Open your Qlik app and go to the Assets panel.

- Head to Custom objects > AnyChart 4, and drag AnyChart Spreadsheets onto your app's sheet.

This creates a basic spreadsheet looking as follows:

Step 2: Add Data

- Select the object, open the Properties panel, go to Data, and click Add.

- Choose the new data section, enable Create synced sheet, and click Edit data.

- Add the dimensions and measures you want to use.

This creates one more sheet, titled DataSheet1, populated with your data.

Step 3: Format Your Spreadsheet

- To keep things clean, feel free to delete the empty sheet created by default, titled Sheet1.

- On DataSheet1, select your table area, head to the INSERT tab, and click Table. Confirm with OK.

- Apply formatting as needed, all in a familiar Excel-like interface. For example:

- Resize rows and columns.

- Change alignment and font settings from the HOME tab.

Step 4: Add Charts

You can also add charts within the spreadsheet's sheets, just like in MS Excel.

- Select your data, open the INSERT tab, and choose Insert Chart.

- Select a chart type, click OK, and place the chart where you need it.

Step 5: Save Changes

- Whenever you need to save everything you have done, open the USER tab and click Save to app.

Step 6: Apply Selections & See Your Spreadsheet in Action

- Apply filters or selections in your Qlik app.

- The spreadsheet updates instantly. Charts, tables, and formulas adjust to the selected data in real time.

This works as the spreadsheet is fully connected to Qlik’s associative engine.

Optional: Export to Excel

If you want to work outside of Qlik, such as in Microsoft Excel or Google Sheets, feel free to export the entire spreadsheet as an .xlsx file:

- Go to the FILE tab, choose Export, and select Excel format.

- Click Export Excel File, enter a file name, and confirm with OK.

- The file downloads immediately, ready to open in Excel or another compatible tool.

Here's how the downloaded XLSX file looks when opened in MS Excel:

Done

You now have a reactive spreadsheet, built with live Qlik data, ready to expand, customize, or share.

Helpful Links

- Download the extension

- Explore the demo app (view live or download QVF)

- See the documentation

- Request a personalized live demo

-

Moving QVD Files Between Spaces in Qlik Cloud Using a Script

Moving QVD Files Between Spaces in Qlik Cloud Using a Script In Qlik Cloud, moving QVD files between different spaces is not a straightf... Show More

Moving QVD Files Between Spaces in Qlik Cloud Using a Script

Thank you for the reading.Environment

- Qlik Cloud on the 20th november 2024

-

QCDI Internals for System Administrators

Qlik Cloud Data Integration (QCDI) enables data integration teams to build data integration pipelines rapidly using software automation that leverages... Show MoreQlik Cloud Data Integration (QCDI) enables data integration teams to build data integration pipelines rapidly using software automation that leverages an Extract, Load, and Transform (ELT) design pattern. A central feature of the ELT design pattern is pushdown SQL, where the transformation code generated automatically by the QCDI solution is “pushed down” to be executed on the cloud data warehouse platform, where the extreme scalability of such platforms can be leveraged fully.

One often-overlooked aspect of generating transformation code using QCDI automation is that it requires the QCDI solution to create and manage the schemas and tables against which it will execute the SQL code it generates.

While efficient and extensible, the ELT-based QCDI pipeline automation solution will create multiple schemas, tables, and views. A table that summarizes the schemas, tables, and views created by QCDI object type is included at the end of the paper. The body of this paper is devoted to describing the objects the QCDI pipeline automation creates, and their purpose, to allow your system administrators to better understand the implications to the physical database layer.

Environment

- Qlik Cloud Data Integration

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

No Code Lambda Architecture

Leveraging the power of Qlik and Talend for increased productivity and reduced time to value with automated data pipelines. Lambda architecture is a ... Show MoreLeveraging the power of Qlik and Talend for increased productivity and reduced time to value with automated data pipelines.

- Lambda architecture is a data-processing architecture designed to handle massive quantities of data by taking advantage of both batch and stream-processing methods.

- Design and implementation of a Lambda architecture is typically complex due to the number of technologies needed to implement batch and streaming layers.

- In this white paper, we’ll demonstrate an approach that leverages the combined Qlik and Talend Data Integration stack to achieve a no-code implementation.

-

Non-materialized (Views)

SaaS Development For many developing in the Cloud can be confusing enough. They can't see/feel/touch the physical storage to know if it is really ther... Show MoreSaaS Development

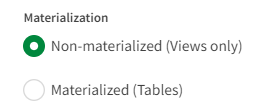

For many developing in the Cloud can be confusing enough. They can't see/feel/touch the physical storage to know if it is really there or not. While you and I are savvy and we understand all of it, imagine just for a minute the person who is already a little apprehensive starting to build a Transformation task in their Qlik Cloud Data Integration project. They go to the settings screen and they are greet with the following major choice:

Well now they are in a quandary. What in the world would it mean in a Cloud to not have their datasets materialized. They want to implement Rules that will transform the data. They want to add new fields. That has to be "materialized" somewhere doesn't it? It can't just be vapor? Can it?

Probably as confusing as hearing my voice in a video, but then finding out it wasn't me. I mean, either I'm there, or I'm not.

For the purposes of this post assume I have 2 Transformation tasks. One that uses the "Non-materialized (Views only) option, and the other uses the Materialized (Tables) option. I have prepared and ran both of those tasks so let's take a look at what each produces in our Snowflake target.

Target

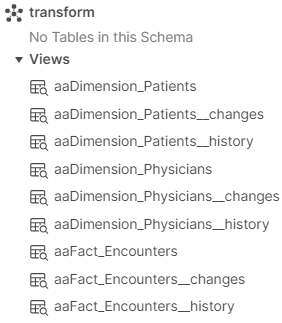

Just as expected the "Non-materialized (Views only)" option has generated SQL Views for each of my 3 datasets. If you have been following along with my series you will already understand what the __changes and __history tables are. If not, be sure to read my previous post What in the word is Type 2 and why isn't there a Type 1 post.

Now let's go ahead an browse the schema that I created for the "Materialized (Tables)" option. Notice that it has created ... give me a second to think about this.

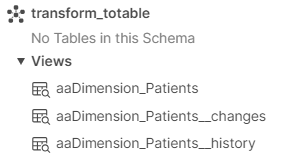

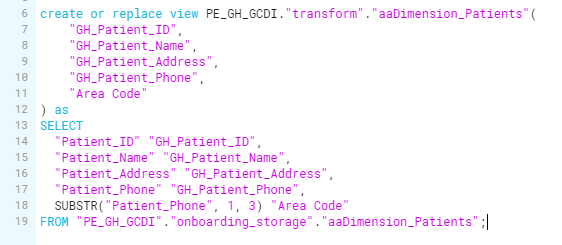

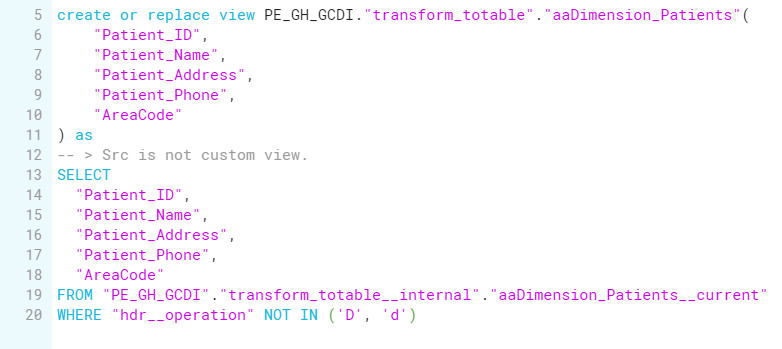

I have to admit that is curious. The option said it would create Tables, but I see views just like I did for the Non-materialized option. Maybe we need to look at the SQL behind the Views to see what they do. The view created for the "Non-materialized (Views only)" option has a Select clause that will read the data from the Storage schema for the project. Looking further we can see that the original fields are being aliased to have "GH_" as a prefix to each of them which is what my rule did. We also see a Substring function being executed to pull the first 3 characters of the Patient_Phone field and then calling that "Area Code."

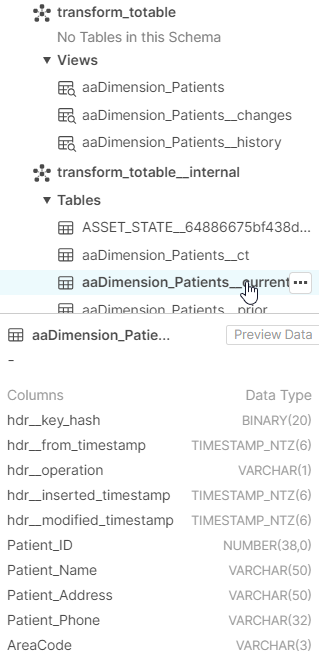

While there is a View created for our "Materialized (Tables)" option, the SQL code generated is quite different. Notice that it is selecting data from the transform_totable__internal schema rather than the storage schema. Note that it is directly reading the "AreaCode" field. (For the materialized task I did NOT put the rule in the for the GH_ prefix just to keep it clear the two were different for you.)

Aha, so there is a physical Table after all. Now we can all breath easier. I mean they can breath easier, we are the savvy ones and had already guessed this was probably what Qlik Cloud Data Integration would do for us when we saw those 2 options.

Why 2 options?

The logical question that "those other people are asking" now, is why in the world are there 2 different options?

The question that we, the savvy ones, would ask them is "How do you intend to read/use the data from the transform tasks?" Because the biggest difference between the two is "when the CPU work is done to apply rules, add new fields etc." Thus at a high level, you could surmise that if your transformation task will be referred to by further transformation tasks/data marts, it might be best to just leave the data in views, and only invoke the CPU when it is really needed. However, if the transformation task is the end of the line and if your applications/users will read it many times, it makes the most sense to materialize the work to a table so that the CPU work only occurs one time and can be read faster.

Another consideration might be that iff you are leveraging a lot of custom SQL and need to track Type 2 history for that custom SQL for use in generating Type 2 dimensions, then it is recommended you materialize to tables so that you can track the changes.

Providing the Non-materialized Views or the Tables allows you to materialize at any point in the Qlik Cloud Data Integration project where you want/need since it will impact performance.

Am I materialized or not?

As I generally do, I created a video to accompany this post. The entire video uses my voice, however, for the demo part I didn't actually record myself reading the script. But it's there. SERIOUSLY!!!!!

You see I had already recorded a completely video. It was awesome, but when I asked my friend David Freriks for some feedback, he thought I needed some images to help people understand which path I was talking about.

But I'm more a do a live demo from the seat of my pants with the cursor flying all over the screen kind of guy. I don't like just narrating images in Power Point.

After pondering for days, my buddy Clever Anjos offered a crazy suggestion. Utilize Play.HT to generate the narration using my voice. I know that seems preposterous, but that's because you are still reading instead of watching the video. Please be sure and leave your comments about the topic or the "voice" and the use of Generative #AI.

Related Content

Qlik Help content on Transformations and info about Non-materialized views.

-

Piping Data Straight into QVD files

Destinations The wonderful thing about Qlik Cloud Data Integration is that you can have your data flowing from so many source to so many Cloud Data Wa... Show MoreDestinations

The wonderful thing about Qlik Cloud Data Integration is that you can have your data flowing from so many source to so many Cloud Data Warehouse platforms. You know like Snowflake. Or Google Big Query. Or Azure Synapse. Or DataBricks. Or Amazon Redshift. Hydrating those targets and keeping them all fresh with Change Data Capture. You gotta love that.

For those Analytical minded folks in the crowd, Qlik Data Integration offers the chance to open up so many new avenues to surface fresh data. Back in November I posted about the simplicity of combining the CDC nature of Qlik Cloud Data Integration directly in your Qlik Sense applications. It showcased the magic of utilize the MERGE function in an application which greatly simplified the Incremental Load process.

I know what some of you were thinking when you read the article... "It can't possibly get any easier than that."

I also know that others were instead thinking ... "It would be so cool if Qlik would just pipe all that data directly to QVD files for me."

Whichever crowd you might have been/be in, you are in luck. One of the destinations where all that sparkling fresh water, I mean data, can flow to is Qlik Cloud.

That's right baby. All of the data, including the changes can be sent directly to QVD files in Qlik Cloud.

To Good to be True

I know that sounds to good to be true, so I'm going to walk you through how to do it, and show you what the result of it is. Obviously, the first step is to choose Qlik Cloud as the Target Platform for your new Qlik Cloud Data Integration project as pictured above.

Once you do, you will be prompted to select where to store the resulting QVD files. As pictured below, you can choose "Qlik managed storage" or "Customer managed storage." Qlik managed storage means that the QVD files will be created in the same Space that your project was created in. Whereas, selecting "Customer managed storage" will prompt you to identify an Amazon S3 bucket where you wish to store the output files.

Regardless of the location you choose to store the QVD files, you will be prompted to identify an Amazon S3 bucket where the initial load and the Change Data Capture (CDC) changes can be written to. You will need to input the Bucket Name, the Access Key and the Secret Key. That connection is like others, so once you create it, you will be able to select it in the future for other projects if you have any.

Once you configure that, you will see a project very similar to all others you have done, or those you have seen in my previous posts about Qlik Cloud Data Integration. You can name them, Prepare, and Run them in the exact same manner as other platforms.

Amazon S3 Staging Area

If you use any type of tool to browse your Amazon S3 bucket you will see folders in much the same structure as you will see in your Cloud Data Warehouses. Each Dataset will be represented in a similar fashion as well with a folder for it's Name, and one for the Changes _ct. Below you will notice that after I ran the full load I can see a CSV file for the Patients dataset. But the change table version has no other structures underneath.

Fifteen minutes after doing the full load I went and made changes to the Source data. The Change Data Capturing read the logs for that source, and voila I began seeing other CSV files show up in my Amazon S3 bucket under that _ct folder. You can tell by the timestamps when they changes were processed.

Pop Quiz

Rather than me telling you, can you guess (or even take educated guess) as to why 2 of the folder structures in the Amazon S3 bucket for the _ct for Patients have multiple files, while 2 have only 1 file? Seriously, look at the image and try to guess.

While you are thinking ... Here is an example of what you will find in those change CSV files.

If you actually took time to think, hopefully, you arrived at the conclusion that it was probably the "Change processing interval" I had scheduled for my project. Just like all of other project types the changes are accumulated in the Landing zone prior to being "batched" together in the Storage zone.

Let's Get to the QVD file part of this post

Important Note: The QVD files generated should be considered to be typical Stage 1 QVD files with the raw values. Within the Qlik Cloud Data Integration project for QVD's, unlike with other Storage Platform targets, you can not transform data in them or build data marts. The "why" is simple ... Qlik Cloud Data Integration is formed around the concept of pushing all work down to the underlying platforms. There is simply no way to graphically let you drag/drop and create all of the variations of how you might want your Qlik Script to work for your environment. Today you might want to concatenate tables, tomorrow you may need to create a linked table. You very well may already have a lot of Qlik Script files (QVS) with subroutines to do the work you need done that it simply wouldn't know about. Long story short, it's a good thing that it focuses on the raw delivery of the data and the incremental changes, and allows you to control the other layers.

If you added your project in a "clean" Space like below it's super easy to see the output QVD files.

If you create the project in your Personal space and happen to have hundreds and hundreds of files like the Qlik Dork, they don't immediately jump out at you.

But that's no reason to rip your bow-tie off, just use the Search feature and voila ... you can now see the file that was created.

Like any QVD in your Catalog you have the ability to Open the Dataset to view the Metadata about it. However, before I press the button to do that, I want you to examine the file name closely. Notice that the friendly name is "aaDimension_Patients".qvd, which matches our Dataset, the name slightly above is the more complete name with a subfolder structure.

The "path" part of the name is configurable via the project settings. Notice that I had accepted the default which included my very long project name, but I could have opted to place the QVD in the "Root" of the Space with no folder structure, or given it another Folder structure. The name also includes the name for the Landing zone, which I had also made very long. All this to say, think carefully about your naming convention

Note: It's not really a path structure, all of the files will appear in your DataFiles connection for the Space, but you can see that the "path" is reflected in the actual name so that should you have different projects, your QVD files won't be overwriting each other. For a dork like me, that's important.

Metadata

Sorry to have taken a side route when hovering over that "Open dataset" button above. Let's go ahead and press it. Voila, as expected, the data from our Qlik Cloud Data Integration was generated right into the QVD file.

What do you need to do in order to handle the Incremental Load changes? Not a thing. At the appropriate Change processing intervals you defined, the changes will automatically be included in the QVD file(s) for every Dataset.

[Disclaimer: This post isn't meant to spark a debate as to whether sending data directly to Qlik QVD files is a good or bad thing. It's not intended to suggest that you don't need a Data Warehouse to surface data via other methods. It is ONLY INTENDED to be used to demonstrate HOW TO take advantage of the functionality if that is your desire.]

-

Visualizing Changes Before they are There

"The answer is NO!" That's what you nearly yell at someone who has been persistently asking you to the see the latest/greatest data values which are f... Show More"The answer is NO!"

That's what you nearly yell at someone who has been persistently asking you to the see the latest/greatest data values which are flowing through your Qlik Cloud Data Integration pipeline prior to the 15 minute cycles in which the project batches them up and moves to the Storage layer from the Landing layer.

[Be sure to turn on that Data Freshness Layer in your projects]

Apparently they have clout and they push for a meeting. During which time you explain that the Landing layer is intended to rapidly record changes, not report them. You even show them that the Patients table shows exactly what was "landed" during the full load. (Notice it does not show the changes you will see in the __ct table.)

And the Patients__ct table shows the changes that have occurred. Clearly neither is suitable for "Mr. Whiny Pants" to query from.

Just as you begin to think the case is closed some Dork sitting in the back row chimes up "Well what if you give them a view that will let them see the latest/greatest values." You explain that while you "could" do that, it would totally defeat the entire reason your team selected Qlik Cloud Data Integration in the first place. That with Qlik Cloud Data Integration everything is automated, you simply design and it automates the creation and application of code. That if you return to hand building views you would be building up a mountain of technical debt again.

Live Views

Admittedly, that idea of a Live view of the data for those occasions where the end user(s) really do need the latest/greatest sounds rather magical. Well start clapping now my friend because Qlik Cloud Data Integration already provides those "live" views for you. I'm not kidding.

The really cool thing about them is that they handle any transformation or rules you might have applied. Say if you defined that all tables in your Storage layer must have their column names prefixed with "GH_."

How clever is that? Notice that indeed it shows you the most recent value for Patient 2 that has been changed, and it also let's you know in the "hdr__store" column if the record is the CURRENT one that exists in the Storage layer, or if it is showing you information from the Change Table (CT.) And will tell you if the value is the same as what was Loaded (L) or it if reflects an Update (U) that occurred at some point. The regular Patients view still only shows what batches are applied during the 15 minute cycles you have defined.

After your predefined batch cycle triggers ... your Patients view will show the latest/greatest values

And notice that the Live view would now reflect that both records are CURRENT for the most recent applied batch of changes, while still showing that Patient 1's values are still what was originally loaded, but Patient 2's values reflect updates that occurred at some point.

Flexibility

In my previous post about Type 2 History I brought up the fact that the functionality was completely controllable and was super flexible. You could turn it on/off as needed by table. Guess what? The same applies for Live views. They default to be on for the Storage layer, but you are free to turn them off.

At the table/Dataset level that setting is inherited, but you are free to override the setting so that Qlik Cloud Data Integration either generates or does not generate the Live view.

Heads up

Be aware, as noted in the Qlik Help for Qlik Cloud Data Integration that Live views require more resources than standard views. So consider when/where they are used, and take their use in context with the batch schedule time to just put the CDC data into the Storage layer.

The Morale of the Story

When and if any persistent end user is begging you to see the latest/greatest values even before your scheduled batches are applied your answer can now be an emphatic Yes. The morale of that story is that story is obviously that you should read more posts from me, prior to walking in to meetings like that. 🤣

For you viewing pleasure

-

What in the world is Type 2 and why isn't there a Type 1?

History Imagine you are starting your first Qlik Cloud Data Integration project. You've seen all the videos, your organization had a demo and the POC ... Show MoreHistory

Imagine you are starting your first Qlik Cloud Data Integration project. You've seen all the videos, your organization had a demo and the POC went great. Now here you are sitting down to begin a real use case you get the connections ok, and then suddenly you are presented with the Settings screen for the Onboarding stage and it defaults the History radio selections to "Data replication with historical data store (Type 2)" or choose "Data replication only."

You may have 2 quick questions:

- What in the world is Type 2

- Why in the world isn't there a choice for Type 1

The point of this post is to help you understand that fundamental question which is upfront as it really defines why you are moving the data and what you intend to use it for down the road. I would really love for the selections to read

- Everyone down the road will only care that the data matches whatever is in the source system

- There will be people who look at the data and will need to know what the values were as of the time transactions were conducted.

So which type of people will consume your data. The first type I mentioned or the second type I mentioned? Type 1 or Type 2? Oh sure you could read a bunch of fancy schmancy authors pontificating on Type 1 or Type 2, but in a nutshell that's what it boils down to.

Let's consider a practical example. Imagine that you have healthcare data. An Encounter table that contains information about a Patient's visit to the Hospital as well as information for the Physician who saw the patient. Imagine that a Patient or Physician changes their name or their phone number. Will anyone ever care to know what the Patient or the Physician information used to be? If for any reason the answer is YES, then you want to be sure that "Data replication with historical data store (Type 2)" is selected. If the answer in an emphatic NO, then you can feel free to change the response to "Data replication only" (because all my use cases are the first type, Type 1, where everyone only wants to know the current values).

Mixed Types

For the rest of this post we will pretend that our team declared that they only ever cared about seeing the current Patient information, but will need to see the historical Physician information. Since some of the data needs to be historical, I chose the Type 2 selection.

Which means that all of my tables will then have a history of changes. In my case, that is really no big deal. I mean after all how many Patients will change there names or phone numbers? Those changes will occur very slowly right? [I wrote that simply because when you read about Type 2 from those fancy schmancy authors pontificating they will refer to Type 2 as "Slowly Changing Dimensions." Which is what that means. You don't expect the data to change rapidly, you know the history will accumulate slowly.

I could simply choose to say "Who cares about a few bytes here and there" or perhaps I might say "Look I don't want any history written for this table no matter what the onboarding settings are." Or even "I know the onboarding settings say not to collect history but I want it for this table." The nice thing about Qlik Cloud Data Integration is that you have the flexibility to mix it up.

To override the Onboarding setting for any Dataset simply open the Storage task and where you see the Datasets listed, click on the Dataset you want to override and choose Settings. In this example I am going to ignore the history for the Patients table.

Then under the General section, I will tell Qlik Cloud Data Integration that I do not want it to maintain Historical Data for the table by selecting Off.

In my real world case, entire hospital system with thousands of tables, is it likely that I have a bunch of dimensions that we would never care about history for? Absolutely! Do you think I want the administrative nightmare of overriding the setting for a lot of tables? Absolutely not!

The great news is that I don't have to. Depending on what that threshold may be for you, you are welcome to create 2 different Onboarding tasks. One that will maintain that Type 2 history, and one that will not maintain the history. Then you can simply choose the appropriate tables for each of the tasks.

Changes

Imagine if you will over the course of Onboarding and Storing data for my Encounters, Patients and Physicians sure enough a few changes were made. Patient ID number 2 had a name change from "Patient Two" to "Patient Deux" and subsequently to "Patient Three." Notice that there are columns identifying which are the CURRENT values and which were the PRIOR values. There are also columns that identify when the value was current from and to.

There was also a change to Physician ID 2 over time and it's name changed from Dr. Two to Dr. Deux.

Use current data?

For the sake of your time I skipped over the Transform task and am jumping right to the Data Mart creation task. I have selected the Encounters table as my Fact. Notice at the bottom of the dialog below it wants to know if I want to "Use current data." Without the benefit of this history lesson (pun intended), and without the benefit of the above image you might think that's a really odd question. "No I don't want you to use my current data, use data from a few years ago for my data mart because analysts love that."

But as you do understand you won't ask that kind of silly question. So let's review our situation. We wish to not worry about changes over time for Patients, but we do wish to track changes over time for the Physicians. Thus, for this Data Mart based on Encounters if Physician 2 was name "Dr. Two" at the time of the Encounter we want to see "Dr. Two." but if the encounter was after the name change we want to see "Dr. Deux." But alas, Encounters (and many of your fact tables) has more than 1 date field. So Qlik Cloud Data Integration let's you (and me) select the one we wish to base our "slowly changing dimensional values" on. In my case, I have said "I want you to consider what the Physicians information was as of the Discharge_Date for the Encounter, not the Admission_Date.

To Denormalize or Not Denormalize? That is the question

Ah yes that famous quote from Shakespeare's "Hamlet." Ok, maybe it was slightly different. But we are dealing with much more important decisions like showing end users historical changes or not, and I want to ensure you get it right.

First you need to know that your FACT tables will always, always, always show the CURRENT values in the table. ALWAYS. If I changed an encounter over time from Physician ID = 1, to a 2, it will ALWAYS show that the Physician ID is 2. It's a FACT table. Our Dimension tables, Physicians and Patients can be either depending on all of the above.

Relational databases are all based on the premise that you don't want data duplicated, you want to maintain relationships. You "normalize" the data by using the relationships, thus we have a Physicians table and a Patients table to begin with. In order to see the Patient/Physician information for an encounter you have to utilize JOIN clauses in your SQL statements. Which aren't always fun.

Denormalizing the data means you want to reverse that process. Below I am basically saying "Look here Qlik Cloud Data Integration, for this Data Mart I want you to pull the Patient information into the Encounters FACT table so that my end users won't need to do any JOINS. I am fully aware there will be more BYTES written, but their ease of use is my primary goal."

I know that may be very childlike for you, but here is why I've put it in here. If you denormalize a Dimension to the Fact table ... the data will ALWAYS show the CURRENT value. It will not matter if you maintained history for the table or not. Because at the point you put it into the FACT table ... it becomes Factual data, not Dimensional data.

In my scenario that's exactly what I wanted anyway right. I know that my end users will never care about Patient history so making their life easy and removing a JOIN for them can go hand in hand. But I do not want that for the Physicians. My users will need to see the appropriate Physician information based on the Discharge Date in the Encounter. Thus as part of my modeling for the Data Mart I need to add the Physicians Dimension.

Results

After preparing and running the Data Mart task I end up with an Encounter (FACT) table that looks like this. Notice that despite the fact that Patient_ID 2 changed over time, the patient Name shows as "Patient Three" for every record. Remember, if it's in the Fact table, it's always the CURRENT value.

If you look closely at the above information for the Physician information you will see several Encounters for Physician_ID 2. The cool thing is that Qlik Cloud Data Integration automatically handles the "slow changes" by creating it's own synthetic key for the Physicians. Thus if the Encounter Discharge_Date was when "Dr. Two" was the active name, it can link to it, and if the Discharge_Date occurred after the name change to "Dr. Deux" it can link to it.

The Physicians table in the Data Mart looks like this. Notice it also generated the synthetic key. Making it the "Primary Key" for the Dimension in our Data Mart. Thus, if you need to associate the two, that hdr__ field is the one to do the association/join on.

Additional Data Marts

At any given time we would only focus on the specific needs for a given Data Mart. Clearly what I just showed was spot on for that Data Mart's needs and the needs I began talking about.

But guess what? In the "real world" there might also be a use case where end users do want to see the Patient information as of the Discharge_Date for the Encounter. If the Patient Data Set was configured to track that Type 2 history, we are in business and can create Data Mart 2 that would end up looking like this. Notice below that there are multiple Encounter records for Patient_ID 2 but hdr_..Patient key values 1, 2 and 4.

Things to Ponder

I rolled that off pretty quickly and there was 1 big assumption. That the Patient history was available in the project. If I had overwritten the Type 2 setting for the Patient table as I showed, or I had brought it in as part of an onboarding where I said to ignore it ... we would have been out of luck. Hence, the reason the Onboarding defaults to Type 2 so that you have the flexibility downstream.

I can't stress enough that your understanding of how Qlik Cloud Data Integrations tracks the historical values for tables is absolutely critical. Hopefully, this post helps gives you a great foundation to work from. The more you understand it, the more you are prepared to support a wide variety of scenarios.

Please feel free to post your comments or questions.

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Related Content

The data that I'm utilizing for this post, and will use in future ones is from a Qlik Community post I shared years ago regarding a mock health system called General Hospital. If you are curious/interested you can find that post here: https://community.qlik.com/t5/Healthcare/General-Hospital/td-p/1466753

If you want to see all of the steps above in action just grab some popcorn and enjoy the first video in my Qlik Cloud Data Integration series:

-

Qlik Data Integration for the Federal Government - Whitepaper

Federal data platforms are reaching such levels of data volume and complexity that traditional integration tools no longer suffice. The scale, speed,... Show More