Recent Documents

The Content Monitor App for Qlik Sense Enterprise on Windows

The Qlik Sense on Windows Content Monitor is intended for Qlik Administrators. Its purpose is to monitor and analyze your Qlik Sense content, including app usage, resource consumption, and data sources. This helps with governance, optimization, and identifying unused content.

Requirements

- Platform: Qlik Sense Enterprise on Windows

- Version: November 2025 onwards

- Availability: Bundled with installation from version November 2025 onwards

- If you are running previous versions of Qlik Sense Enterprise on Windows, the Content Monitor is also available on Qlik's Downloads page here: Content Monitor (download page)

- This must be manually installed, and a weekly reload is recommended.

- The downloaded version is supported from Qlik Sense version Feb 2020.

Core Documentation

All technical details can be found in the two attached documents. These are your primary resources.

Qlik Sense Content Monitor User Guide

- What it covers: A detailed, sheet-by-sheet explanation of the entire app. It describes what every KPI, chart, and table means for sections like "Weekly Summary," "Snapshot," "Applications," "Sessions," "Task Executions," "File Inventory," and "Infrastructure."

- Use Case:

  - Guiding a customer on how to read and interpret the data.

  - Answering customer questions like, "What does the 'Session Concurrency' sheet show?" or "How do I read the 'File Inventory' sheet?"

- See the attached Qlik Sense Content Monitor User Guide

Configuration Guide

- What it covers: This is the primary guide for setup and reload issues. It contains:

  - Detailed definitions for all script parameters (e.g., vCentralNodeHostName, vVirtualProxyPrefix, vServerLogFolder).

  - Performance tuning options (e.g., vFileScanMaxDuration, vAppRetrievalLoop, exclusion lists).

  - A "Trial Mode" section, used for troubleshooting initial reload failures.

  - A "Troubleshooting" section.

- Use Case:

  - New installations.

  - Troubleshooting.

  - Tuning performance for long reloads.

- See the attached Qlik Sense Content Monitor Configuration Guide

Environment

- Qlik Sense Enterprise on Windows

How to Replace the Operations Monitor App

- Take a backup of the app and data associated with the app:

- Export the existing Operations Monitor app via the QMC (Apps > More actions > Export). Backup to a safe location.

- Backup the QVD files in the Central Node Log directory (C:\ProgramData\Qlik\Sense\Log by default)

- Verify you have an Operations Monitor QVF file in the DefaultApps section of the Qlik installation directory (C:\ProgramData\Qlik\Sense\Repository\DefaultApps by default). Ask support for a copy for your version of Qlik Sense if you cannot locate the file.

- Write down any reload tasks associated with the app so that you can restore them later

- Write down the original owner of the app (should be the internal repository user, INTERNAL\sa_repository)

- Remove the Operations Monitor App and associated data files:

- Delete the Operations Monitor app via the QMC (Apps > Delete). This removes both the app and associated reload tasks.

- Delete the QVD files associated with the Operations Monitor App (governance*.qvd) in the Central Node Log directory (C:\ProgramData\Qlik\Sense\Log by default)

- Restart all Qlik Sense services.

- Re-import the Operations Monitor App from the installation directory:

- Locate the default Operations Monitor app in the DefaultApps section of the Qlik installation directory (C:\ProgramData\Qlik\Sense\Repository\DefaultApps by default)

- Import the app via the QMC (Apps > Import)

- Change ownership of the app to the same user as before (should have been the internal repository user, INTERNAL\sa_repository)

- Recreate any reload tasks noted from step 1

- Reload the app, and verify the app works as expected.
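The backup of the governance QVD files can be scripted before they are deleted. The following is a minimal sketch, assuming only the files matching governance*.qvd need to be copied; the function name and the backup folder are hypothetical, and the log path is the default quoted in the steps.

```python
import shutil
from pathlib import Path


def backup_governance_qvds(log_dir: Path, backup_dir: Path) -> list[Path]:
    """Copy governance*.qvd files from log_dir into backup_dir and return the copies."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    copied = []
    for qvd in sorted(log_dir.glob("governance*.qvd")):
        target = backup_dir / qvd.name
        shutil.copy2(qvd, target)  # copy2 also preserves file timestamps
        copied.append(target)
    return copied


if __name__ == "__main__":
    # Default Central Node Log directory; the backup location is an example only.
    log_dir = Path(r"C:\ProgramData\Qlik\Sense\Log")
    for path in backup_governance_qvds(log_dir, Path(r"D:\Backup\OperationsMonitor")):
        print(f"Backed up: {path}")
```

The function only copies files. Deleting the originals remains a separate, manual step, so the backup can be checked first.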

Qlik Sense Enterprise on Windows and Security Vulnerability CVE-2023-26136 (toug...

Is Qlik Sense Enterprise on Windows affected by the security vulnerability CVE-2023-26136 (nvd.nist.gov)?

CVE-2023-26136 is a vulnerability detected in Node.js, a third-party component used by Qlik Sense Enterprise on Windows. Security scans may flag the vulnerability in a Qlik Sense Enterprise on Windows environment.

While Qlik was not directly impacted, the affected third-party component has been updated across supported versions.

Qlik Sense versions up to the following would be flagged by security scans:

- Qlik Sense Enterprise on Windows May 2024 patch 23

- Qlik Sense Enterprise on Windows November 2024 patch 17

- Qlik Sense Enterprise on Windows May 2025 patch 5

Resolution

Upgrade to any of the following (or later) versions:

- Qlik Sense Enterprise on Windows May 2024 patch 24

- Qlik Sense Enterprise on Windows November 2024 patch 18

- Qlik Sense Enterprise on Windows May 2025 patch 6

- Qlik Sense Enterprise on Windows November 2025 IR, and all subsequent versions, such as May 2026 IR and upward

In some instances, the Qlik Sense patch installer updates service binaries but does not remove leftover node_modules folders from previous installations. The old request/node_modules/tough-cookie directories may still exist on disk even though the running service no longer uses them.

If you notice stale folders post-upgrade:

- Run the following PowerShell script as Administrator to clean up stale folders:

    # Qlik Sense - tough-cookie stale folder cleanup
    # Run as Administrator after upgrading to v14.231.26 or later
    # Take a backup or snapshot before running

    Write-Host "Starting tough-cookie cleanup..." -ForegroundColor Cyan

    # Step 1 - Stop Service Dispatcher (this will stop all dependent Qlik Sense services)
    Stop-Service -Name "QlikSenseServiceDispatcher" -Force -ErrorAction SilentlyContinue
    Write-Host "Stopped: QlikSenseServiceDispatcher" -ForegroundColor Yellow
    Start-Sleep -Seconds 5

    # Step 2 - Remove tough-cookie directories
    $paths = @(
        "C:\Program Files\Qlik\Sense\ConverterService\node_modules\tough-cookie",
        "C:\Program Files\Qlik\Sense\DownloadPrepService\node_modules\request\node_modules\tough-cookie",
        "C:\Program Files\Qlik\Sense\MobilityRegistrarService\node_modules\request\node_modules\tough-cookie",
        "C:\Program Files\Qlik\Sense\NotifierService\node_modules\request\node_modules\tough-cookie"
    )

    foreach ($path in $paths) {
        if (Test-Path $path) {
            Remove-Item -Path $path -Recurse -Force
            Write-Host "Removed: $path" -ForegroundColor Green
        } else {
            Write-Host "Not found (already clean): $path" -ForegroundColor Gray
        }
    }

    Write-Host "`nCleanup complete. Restart Service Dispatcher manually to bring all services back online." -ForegroundColor Cyan

This script may need to be re-run after any future Qlik Sense Enterprise on Windows patch or reinstallation, as the installer may restore these directories.

The script is provided as is and to be used at your own risk.

- Restart the Qlik Sense Service Dispatcher service
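To see which tough-cookie folders are present before or after a cleanup, a read-only scan can help. This is a minimal sketch, not part of the Qlik-provided script; the function name is hypothetical and the install root is assumed to be the default location. It only lists folders and cannot tell a stale copy from one still in use, so compare the output against the paths named in the article.

```python
import os
from pathlib import Path


def find_tough_cookie_dirs(install_root: Path) -> list[Path]:
    """Return every 'tough-cookie' directory that sits directly inside a node_modules folder."""
    found = []
    for current, dirnames, _files in os.walk(install_root):
        if Path(current).name == "node_modules" and "tough-cookie" in dirnames:
            found.append(Path(current) / "tough-cookie")
    return sorted(found)


if __name__ == "__main__":
    # Assumed default install root; adjust for custom installations.
    for folder in find_tough_cookie_dirs(Path(r"C:\Program Files\Qlik\Sense")):
        print(folder)
```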

Internal Investigation ID(s)

- SUPPORT-2237

How to Check the Patch Level of your Qlik Talend JobServer

There are two different ways to check the patch version of your Qlik Talend JobServer:

- Log in to your Qlik Talend Administration Center (TAC) and navigate to Conductor (A) > Servers (B)

The patch level can be found below the Server Version (C) column, as shown below.

The version will not be listed for any JobServers that are disconnected from Qlik Talend Administration Center.

- On the server where JobServer is installed, navigate to <JobServer>/agent

  - If the version of Talend JobServer is TPS-6002 (R2025-04) or higher, the patch level for JobServer can be found in the branding.properties file.

  - If the branding.properties file is missing, then a lower version of JobServer is being used. The full version can be found in <JobServer>/agent/lib by reviewing the name of the org.talend.remote file:

Example: org.talend.remote.server-8.0.2.20250129_0823_patch indicates the patch level is R2025-01 (TPS-6002)
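Reading the patch level from the file name can be automated. The sketch below assumes the name always follows the pattern in the example above (version, then a YYYYMMDD_HHMM build stamp, then _patch) and that the monthly label is the year and month of that stamp, as in the single example given; the function name is hypothetical.

```python
import re
from typing import Optional

# Matches names such as org.talend.remote.server-8.0.2.20250129_0823_patch
_BUILD_STAMP = re.compile(
    r"org\.talend\.remote\.server-\d+(?:\.\d+)*\.(\d{4})(\d{2})\d{2}_\d{4}_patch"
)


def patch_label(file_name: str) -> Optional[str]:
    """Return a label like 'R2025-01' derived from the build stamp, or None if absent."""
    match = _BUILD_STAMP.search(file_name)
    if match is None:
        return None
    year, month = match.groups()
    return f"R{year}-{month}"


if __name__ == "__main__":
    print(patch_label("org.talend.remote.server-8.0.2.20250129_0823_patch"))
```

A return value of None means the name did not match the assumed pattern, in which case the file name should be read manually.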

Environment

- Qlik Talend Administration Center

Qlik Enterprise Manager: patchendpoint API Error

QEM API used: PatchEndpoint

Endpoint for patching: SQL Server

The following error occurs when trying to update the endpoint through PatchEndpoint using the prop value shown for the field. The prop value shown for this endpoint is DO.SqlserverSettings.server.

{"error_code":"AEM_PATCH_ENDPOINT_INNER_ERR","error_message":"Failed to patch replication endpoint \"HS_MSSQL_ISOPROD\" from server \"dev\". Error \"SYS-E-HTTPFAIL, Failed to apply json-patch.\", Detailed error: \"SYS,GENERAL_EXCEPTION,Failed to apply json-patch,Failed to apply patch remove/replace for item '/db_settings/DO.SqlserverSettings.server'; item does not exist; Failed to apply json patch\"."}

Resolution

The correct prop value in this instance is 'server' for the server field. The prop value in this endpoint contains the combined value of the parent prop, while other endpoints will separate the parent prop value into its own parameter.

Sample JSON file with the correct prop value:

[
  { "op": "replace", "path": "/db_settings/server", "value": "server_ip_address_here" }
]

Cause

SQL Server endpoint elements did not separate the parent prop value, leading to a misleading element inspection. The last part of the prop value is all that is needed for the JSON file.
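The rule "keep only the last part of the prop value" can be sketched as a small helper that builds the patch body. This is an illustration for the SQL Server case described here, not a general rule for every endpoint type; the function name is hypothetical, and sending the body to QEM is left out.

```python
import json


def build_replace_patch(inspected_prop: str, value: str) -> str:
    """Build a JSON-patch body whose path uses only the last segment of the inspected prop."""
    leaf = inspected_prop.rsplit(".", 1)[-1]  # 'DO.SqlserverSettings.server' -> 'server'
    patch = [{"op": "replace", "path": f"/db_settings/{leaf}", "value": value}]
    return json.dumps(patch, indent=2)


if __name__ == "__main__":
    print(build_replace_patch("DO.SqlserverSettings.server", "server_ip_address_here"))
```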

Environment

- Qlik Enterprise Manager

Windows Digital Signature is no longer verified for Qlik Products: Why is it dis...

Qlik Software Windows executables (such as Qlik Talend Studio, Talend Installers, Qlik NPrinting) come with an embedded digital signature, which signals Windows (and any security software) that the executable has been verified.

The digital signature is typically valid for 2 to 3 years before requiring renewal.

However, when reviewing the signature, it may show that it was revoked by the issuer around April 28th of 2026, even though it should still be valid for another 6-12 months.

Consequently, security software may block any executable that does not have a valid or current digital signature, leading to users being unable to launch the installed Qlik software.

Resolution

Bypassing the signature check is an option, but it is not always a feasible workaround. Qlik is actively replacing the affected installation files with executables that include both the digital signature and a new (valid) countersignature.

Re-downloading the installation package from the Qlik Download page will resolve the issue in most instances.

- Qlik Sense Enterprise on Windows and Desktop May 2026 IR

- Qlik Sense Enterprise on Windows November 2024 Patch 28 (release expected May 27th)

- Qlik Sense Enterprise on Windows May 2025 Patch 20 (release expected May 27th)

- Qlik Sense Enterprise on Windows November 2025 Patch 10 (release expected May 27th)

Not all files have been replaced at this point. Specifically, major releases (IR) are still undergoing processing.

To prevent this from recurring, Qlik has updated its digital signing processes.

If your product is not available on the Qlik Download page (such as Qlik Talend Studio), contact Qlik Support to receive the newly compiled executable (R2024-05 through R2026-05).

Cause

The revocation of the digital signature was due to identifying a countersignature that did not have a timestamp set.

Internal Investigation ID(s)

ITSYS-16864

Environment

- Qlik Products

Qlik Direct Access Gateway: 1.7.13.0 installation stuck. "Microsoft Visual C++ 1...

Installing Qlik Data Gateway v1.7.13.0 on Windows may fail. The installation remains stuck in a loop.

The system first requires a restart of Windows, but will not complete the installation afterwards. Instead, a Microsoft Visual C++ Redistributable Modify Setup prompt will be displayed:

Whatever option is chosen, a new pop-up appears stating, 'Please restart your system before running the Qlik Data Gateway - Direct access installation'. This will restart the loop.

Resolution

Install v1.7.14.0 when available.

If it's necessary to perform a new installation in the meantime, use v1.7.11.0.

Cause

This is a known defect (SUPPORT-8964) affecting v1.7.13.0.

Internal Investigation ID(s)

SUPPORT-8964

Environment

- Qlik Direct Access Gateway

Qlik Replicate: Improving Databricks ODBC resilience with EnableRetryWithoutRetr...

This article documents how to improve Databricks ODBC resilience with the EnableRetryWithoutRetryAfterHeader parameter.

Content

- Why does setting EnableRetryWithoutRetryAfterHeader matter?

- The Default Retry Behavior

- Retry behavior with EnableRetryWithoutRetryAfterHeader

- When to apply EnableRetryWithoutRetryAfterHeader

- How to enable EnableRetryWithoutRetryAfterHeader

- Prerequisites

- Configuration

- Errors addressed by EnableRetryWithoutRetryAfterHeader

- HTTP 503 with No Retry-After Header (SqlState: 08S01)

- Delta Transaction Log Truncation (SqlState: 42K03)

The Databricks ODBC driver parameter EnableRetryWithoutRetryAfterHeader is a connection-level setting that controls how the driver responds to server-side failures when the server does not include a Retry-After HTTP header in its response.

By default, this parameter is disabled (0). When enabled (1), it instructs the driver to retry failed requests, even in the absence of explicit retry guidance from the server. This makes pipelines significantly more resilient to transient service disruptions and recoverable error conditions that would otherwise cause tasks to stall indefinitely.

The EnableRetryWithoutRetryAfterHeader parameter is available with driver version 2.9.1+.

Why does setting EnableRetryWithoutRetryAfterHeader matter?

The Default Retry Behavior

The Databricks ODBC driver includes built-in retry logic to handle transient failures. However, by default, this logic only activates when the server includes a Retry-After header in the error response (the standard HTTP signal that tells a client how long to wait before retrying).

In practice, not all Databricks server error responses include this header. When it is absent:

- The driver has no retry signal to act on

- The failure is treated as terminal rather than transient

- The request is abandoned entirely

- Tables dependent on that connection enter a queued state with no further progress

This gap between intent (retry on recoverable errors) and behavior (skip retry without the header) is the source of a class of pipeline failures that can be difficult to diagnose because the driver produces no additional retry activity. It simply stops.

Retry behavior with EnableRetryWithoutRetryAfterHeader

Setting EnableRetryWithoutRetryAfterHeader=1 closes the retry gap. The driver no longer requires the Retry-After header to be present before retrying. Instead, it applies its retry logic to all eligible failures, using a built-in backoff strategy, regardless of whether the server explicitly instructs it to do so.

The practical benefits are:

- Transient 503 errors are recovered automatically: temporary service unavailability no longer causes immediate task failure

- Recoverable Delta read errors are retried: failures that would succeed on a subsequent attempt are allowed to do so

- Queue buildup is prevented: tables are not left stranded in a queued state due to a single unretried failure

- Pipeline stability improves without changes to data architecture or retention policies
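The difference between the two modes can be illustrated with a small decision function. This is a conceptual model only, not the driver's actual code: the function name, the exponential backoff, and the delay values are assumptions chosen for illustration, and the real driver may treat more conditions than a 503 as eligible.

```python
from typing import Optional


def next_retry_delay(
    status: int,
    retry_after: Optional[float],
    attempt: int,
    retry_without_header: bool,
    base_delay: float = 1.0,   # hypothetical starting delay in seconds
    max_delay: float = 30.0,   # hypothetical upper bound in seconds
) -> Optional[float]:
    """Return the seconds to wait before retrying, or None when the request is abandoned."""
    if status != 503:
        return None  # this sketch models only the 503 case
    if retry_after is not None:
        return retry_after  # the server said how long to wait
    if not retry_without_header:
        return None  # default behavior: no header, no retry
    return min(max_delay, base_delay * 2 ** attempt)  # fall back to a built-in backoff


if __name__ == "__main__":
    print(next_retry_delay(503, None, attempt=2, retry_without_header=False))
    print(next_retry_delay(503, None, attempt=2, retry_without_header=True))
```

With the flag off and no header, the function returns None, which corresponds to the stalled, queued state described above. With the flag on, the same response yields a wait time and the request is attempted again.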

When to apply EnableRetryWithoutRetryAfterHeader

This parameter should be considered a standard configuration for any Databricks ODBC connection where pipeline reliability is a priority, particularly in environments that:

- Process high volumes of tables or run long-duration tasks

- Are subject to Delta Lake log retention policies

- Connect to Databricks endpoints that may return 503 responses during maintenance windows or high-load periods

- Have previously experienced unexplained queued states or stalled tasks

How to enable EnableRetryWithoutRetryAfterHeader

Prerequisites

- Databricks ODBC Driver version 2.9.1 or higher (parameter is not available in earlier versions)

- Recommended: upgrade to version 2.9.2 or later for the most stable behavior

Configuration

Add the following internal parameter to the Databricks Delta endpoint connection:

additionalConnectionProperties=EnableRetryWithoutRetryAfterHeader=1

No other changes to the connection string or data architecture are required.

Errors addressed by EnableRetryWithoutRetryAfterHeader

The following errors are direct indicators that the driver encountered a failure without retry-header guidance. Both have been observed to be resolved by enabling the parameter.

HTTP 503 with No Retry-After Header (SqlState: 08S01)

RetCode: SQL_ERROR

SqlState: 08S01

NativeError: 124

Message: [Simba][Hardy] (124) A 503 response was returned but no Retry-After header was provided. Original error: HTTP Response code 503,

TEMPORARILY_UNAVAILABLE: HTTP Response code: 503 [1022502]

Alternative:

RetCode: SQL_ERROR

SqlState: 08S01

NativeError: 124

Message: [Simba][Hardy] (124) A 503 response was returned but no Retry-After header was provided. Original error: Unknown

What is happening: The Databricks service returned an HTTP 503 Service Unavailable, a standard, transient condition signaling that the server is temporarily unable to handle the request. The correct behavior is to wait briefly and retry.

Why it fails without the parameter: The driver's retry mechanism is waiting for a Retry-After header that was not included in the response. Without it, the driver takes no retry action and the connection is treated as a hard failure.

How the parameter helps: With EnableRetryWithoutRetryAfterHeader=1, the driver retries the request using its internal backoff strategy, allowing the pipeline to recover transparently from the temporary service disruption.

Delta Transaction Log Truncation (SqlState: 42K03)

RetCode: SQL_ERROR

SqlState: 42K03

NativeError: 35

Message: [Simba][Hardy] (35) Error from server: error code: '0'error message: 'org.apache.hive.service.cli.HiveSQLException:Error running query: [DELTA_TRUNCATED_TRANSACTION_LOG]

com.databricks.sql.transaction.tahoe.DeltaFileNotFoundException:[DELTA_TRUNCATED_TRANSACTION_LOG]

Unable to reconstruct state at version 16 as the transaction log has been truncated due to manual deletion or the log retention policy (delta.logRetentionDuration=30 days) and checkpoint retention policy

(delta.checkpointRetentionDuration=2 days)

What is happening: Delta Lake could not reconstruct the table state because the transaction log had been truncated, either through manual deletion or by the log retention policy (delta.logRetentionDuration=30 days) and checkpoint retention policy (delta.checkpointRetentionDuration=2 days). The required log file (00000000000000000000.json) was no longer present, preventing reconstruction of the state at version 16.

Why it fails without the parameter: When the driver encounters a server-side error response without a Retry-After header, it does not retry. Conditions that may be transiently recoverable, such as a momentary log unavailability, are never reattempted, and the task stalls.

How the parameter helps: Enabling retries allows the driver to reattempt the read operation, recovering from transient log access issues that do not persist beyond the initial attempt.

Environment

- Qlik Replicate

Qlik Talend Administration Center: Update jdbc driver jar caused NoSuchFileExcep...

After updating the SQL Server JDBC driver in the Talend Administration Center (TAC), metaservlet calls that associate a task fail at deployment. The system fails because it is still searching for the old driver version, resulting in a NoSuchFileException.

Resolution

To resolve the dependency conflict and refresh the application cache, follow these steps:

- Verify the JAR location and ensure the new JDBC JAR file exists only in the following directory:

/talend/tac/apache-tomcat/webapps/tac/WEB-INF/lib/

Do not keep multiple versions or duplicates in other library folders to avoid classpath conflicts.

- Stop the Qlik Talend Administration Center service and Tomcat instance

- Clear Tomcat Cache

Since Tomcat caches compiled JSP files and session data, manually clear the work and temp directories by renaming the current folders:

- tomcat/work > tomcat/work_bak

- tomcat/temp > tomcat/temp_bak

- Create new, empty work and temp folders

- Start the Qlik Talend Administration Center service

Tomcat will rebuild the cache using the current libraries present in the WEB-INF/lib folder.
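The rename-and-recreate step for the work and temp folders can be sketched as follows. This assumes the services are already stopped and that the script runs with permission to modify the Tomcat directory; the function name and the example Tomcat path are hypothetical.

```python
from pathlib import Path


def reset_tomcat_cache(tomcat_home: Path) -> None:
    """Rename work/temp to work_bak/temp_bak and create fresh, empty replacements."""
    names = ("work", "temp")
    # Check first so that nothing is renamed if an old backup would be overwritten.
    for name in names:
        backup = tomcat_home / f"{name}_bak"
        if backup.exists():
            raise FileExistsError(f"{backup} already exists; move it away first")
    for name in names:
        current = tomcat_home / name
        if current.exists():
            current.rename(tomcat_home / f"{name}_bak")
        current.mkdir()


if __name__ == "__main__":
    # Example location only; use the Tomcat folder of your own installation.
    reset_tomcat_cache(Path("/talend/tac/apache-tomcat"))
```

Renaming rather than deleting keeps the old folders available until the restarted service is confirmed to work.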

Cause

The error indicates that although the old driver was removed from the file system, Qlik Talend Administration Center (or the underlying Tomcat container) still holds a reference to the deleted JAR file. This suggests a caching issue within the Tomcat application server.

Environment

- Qlik Talend Administration Center

Qlik NPrinting tasks executions are blocked without sending specific error messa...

Qlik NPrinting tasks stop progressing at random, even if the Qlik NPrinting services otherwise run without issue. Task executions are blocked, with no specific error messages logged.

The Rabbit logs read the following error:

missed heartbeats from client, timeout: 15s

The problem is similar to the one described in Qlik NPrinting task run but do not progress: stuck on same percentage, with some notable differences:

- It can occur on any Windows Server operating system, while the previous issue is restricted to 2019.

- The error message {{badmatch,{error,eacces}} is not present in the Rabbit logs.

- A missed heartbeats from client error is logged instead.

The problem can start after an upgrade to Qlik NPrinting 2025 or any later releases.

Resolution

- Verify that Qlik NPrinting has been installed on a dedicated server as required; see Unsupported configurations: Installation for details.

- Verify that all Qlik NPrinting Server Engine and Designer Anti Virus Folder Exclusions have been applied correctly

- If the problem persists, increase the timeout window to reduce heartbeat frequency.

This step requires changes to service configuration files. Back up all files before you proceed.

- Locate the configuration files in C:\Program Files\NPrintingServer\NPrinting\[ServiceName] folders

- Stop the Qlik NPrinting Services

- Set the following in the scheduler.config, engine.config, webengine.config, and audit.config (if active):

<add key="rabbitmq-timeout" value="60" />

<add key="rabbitmq-requested-heartbeat-sec" value="30" />

- In the scheduler.config, set:

<add key="engine-heartbeat" value="15" />

<add key="engine-heartbeat-offline-threshold" value="30" />

- Restart the services

NOTE: Changes made to NPrinting config files are NOT retained when upgrading NPrinting. Document any changes you make and reapply them after every Qlik NPrinting upgrade.
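Because the same keys must be set in several files and reapplied after upgrades, it can help to preview the result programmatically. The sketch below assumes the config files hold their keys as add elements inside an appSettings element, which is not confirmed by this article; the function name is hypothetical. Parsing with ElementTree discards XML comments, so treat the output as a preview to compare against the file rather than as a replacement for it.

```python
import xml.etree.ElementTree as ET

# Values taken from the steps above.
HEARTBEAT_SETTINGS = {
    "rabbitmq-timeout": "60",
    "rabbitmq-requested-heartbeat-sec": "30",
}


def apply_settings(config_xml: str, settings: dict[str, str]) -> str:
    """Return config_xml with each key set to the given value, adding keys that are missing."""
    root = ET.fromstring(config_xml)
    app_settings = root if root.tag == "appSettings" else root.find("appSettings")
    if app_settings is None:
        raise ValueError("no <appSettings> element found")
    existing = {node.get("key"): node for node in app_settings.findall("add")}
    for key, value in settings.items():
        if key in existing:
            existing[key].set("value", value)
        else:
            ET.SubElement(app_settings, "add", {"key": key, "value": value})
    return ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    sample = '<appSettings><add key="rabbitmq-timeout" value="15" /></appSettings>'
    print(apply_settings(sample, HEARTBEAT_SETTINGS))
```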

Cause

Qlik NPrinting services send heartbeats to verify that communication between them is open.

Recent versions of Qlik NPrinting are more sensitive to missed heartbeats, meaning a missed communication check can lead to the services being unable to process tasks as expected.

This may be caused by a busy Qlik NPrinting server (such as when the software is run on a shared server, resulting in unsustainably high CPU usage) or by the heartbeats being delayed by security scans.

Related Content

Internal Investigation ID(s)

- SUPPORT-8903

Environment

- Qlik NPrinting

Qlik Automate: Automation ownership changes for Analytics Admins May 2026

Qlik introduced a change in how automation permissions are handled for the Analytics Admin role.

When was the change introduced?

The change is already live as of the 11th of May, 2026.

What does that mean for me?

Analytics Admins can now claim ownership of another user's automation. After claiming ownership, they can make necessary changes to it and enable the automation. However, they can no longer transfer ownership to another user.

How do I claim ownership of an automation?

As an Analytics Admin, to claim ownership of an automation:

- Navigate to the Automations section in the Administration Console

- Locate the automation you want to claim ownership of, and click the Actions menu (...)

- Choose Claim ownership

This behavior change only applies to Analytics Admins. Tenant admins can still transfer ownership to any user with the appropriate access rights in the tenant.

Environment

- Qlik Cloud Analytics

- Qlik Automate

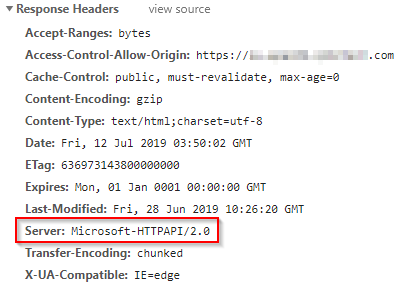

Qlik Analytics: Server Information In HTTP Header - Microsoft-HTTPAPI/2.0

The HTTP response header exposes Microsoft-HTTPAPI/2.0 as the server source. An attacker could use this information to exploit known vulnerabilities for the server source.

Resolution

This header is added to the HTTP response by the .NET Framework, which means it cannot be directly controlled by Qlik software.

The header is only added in Qlik software that runs in a Windows environment, for example Qlik Sense Enterprise on Windows and QlikView Web Server.

There are two main approaches to removing this HTTP header:

- Disable the server header for WCF Services in the Windows registry, as described in https://blogs.msdn.microsoft.com/dsnotes/2017/12/18/wswcf-remove-server-header/

- Suppress the HTTP header through a reverse proxy if one exists in front of Qlik Sense in the current deployment. For details on this option, consult the reverse proxy documentation. If you require assistance implementing the solution, our professional services are happy to be engaged.
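After applying either approach, the response headers can be checked for the banner. This is a minimal sketch; the function name is hypothetical, and it works on any mapping of header names to values, however those headers were captured.

```python
from typing import Mapping, Optional


def leaked_server_banner(headers: Mapping[str, str]) -> Optional[str]:
    """Return the Server header value if it reveals Microsoft-HTTPAPI, otherwise None."""
    for name, value in headers.items():
        # Header names are case-insensitive, so compare in lower case.
        if name.lower() == "server" and "microsoft-httpapi" in value.lower():
            return value
    return None


if __name__ == "__main__":
    print(leaked_server_banner({"Server": "Microsoft-HTTPAPI/2.0", "Content-Type": "text/html"}))
    print(leaked_server_banner({"Content-Type": "text/html"}))
```

The headers could, for example, be taken from the headers attribute of a response returned by urllib.request.urlopen for a URL in your own deployment.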

Environment

Qlik Sense Enterprise on Windows, all versions

QlikView, all versions

Qlik NPrinting, all versions

Internal Investigation IDs:

- QLIK-90522

Qlik Talend Remote Engine 2.13.13 SSL Communication Issue with Talend Studio

After upgrading to Remote Engine 2.13.13, when enabling the option to execute a job from Studio on a remote engine, the process fails due to SSL and PKCS-related errors.

sun.security.validator.ValidatorException: PKIX path building failed: sun.security.provider.certpath.SunCertPathBuilderException: unable to find valid certification path to requested target

Resolution

SSL Configuration for Talend Studio to Connect with Remote Engine

- Retain the Keystore and Truststore Passwords Generated During Installation

During the installation of Talend Remote Engine, SSL credentials are automatically generated. To retrieve the keystore password, execute the following command:

cat /opt/TalendRemoteEngine/bin/sysenv

and locate the line

TMC_ENGINE_JOB_SERVER_SSL_KEY_STORE_PASSWORD=<PASSWORD>

- Required Keystore and Truststore Files

The following files are necessary for secure communication between Talend Studio and the Remote Engine:

/opt/TalendRemoteEngine/etc/keystores/jobserver-client-keystore.p12

/opt/TalendRemoteEngine/etc/keystores/jobserver-client-truststore.p12 (Truststore file added with RE 2.13.13)

Transfer these files to your Talend Studio workstation and store them in a dedicated folder.

- Configure Talend Studio to Use SSL

Edit the Studio startup configuration file, depending on your operating system:

- For Linux (x86_64): studio/Talend-Studio-linux-gtk-x86_64.ini

- For ARM architecture: studio/Talend-Studio-gtk-aarch64.ini

- For Windows: Talend-Studio-win-x86_64.ini

- Add the following JVM options to the configuration file, and adjust the file paths and password values accordingly:

-Dorg.talend.remote.client.ssl.force=true

-Dorg.talend.remote.client.ssl.keyStore=path_to_keystore/jobserver-client-keystore.p12

-Dorg.talend.remote.client.ssl.keyStoreType=PKCS12

-Dorg.talend.remote.client.ssl.keyStorePassword=<password_from_step_1>

-Dorg.talend.remote.client.ssl.keyPassword=<password_from_step_1>

-Dorg.talend.remote.client.ssl.trustStore=path_to_truststore/jobserver-client-truststore.p12

-Dorg.talend.remote.client.ssl.trustStoreType=PKCS12

-Dorg.talend.remote.client.ssl.trustStorePassword=<password_from_step_1>

-Dorg.talend.remote.client.ssl.disablePeerTrust=false

-Dorg.talend.remote.client.ssl.enabled.protocols=TLSv1.2,TLSv1.3
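The JVM options above can be generated from the sysenv file to avoid copy-and-paste mistakes. This is a minimal sketch, assuming sysenv contains plain NAME=value lines (optionally prefixed with export) and that both .p12 files were copied into one folder; the function names and the example folder are hypothetical. The output contains the password in clear text, so handle it accordingly.

```python
def read_keystore_password(sysenv_text: str) -> str:
    """Extract the keystore password from the text of the Remote Engine sysenv file."""
    key = "TMC_ENGINE_JOB_SERVER_SSL_KEY_STORE_PASSWORD"
    for line in sysenv_text.splitlines():
        line = line.strip()
        if line.startswith("export "):
            line = line[len("export "):].lstrip()
        name, sep, value = line.partition("=")
        if sep and name.strip() == key:
            return value.strip().strip('"').strip("'")
    raise KeyError(key)


def studio_ssl_options(store_dir: str, password: str) -> list[str]:
    """Render the JVM options for the Studio .ini file, one option per list entry."""
    prefix = "-Dorg.talend.remote.client.ssl."
    return [
        f"{prefix}force=true",
        f"{prefix}keyStore={store_dir}/jobserver-client-keystore.p12",
        f"{prefix}keyStoreType=PKCS12",
        f"{prefix}keyStorePassword={password}",
        f"{prefix}keyPassword={password}",
        f"{prefix}trustStore={store_dir}/jobserver-client-truststore.p12",
        f"{prefix}trustStoreType=PKCS12",
        f"{prefix}trustStorePassword={password}",
        f"{prefix}disablePeerTrust=false",
        f"{prefix}enabled.protocols=TLSv1.2,TLSv1.3",
    ]


if __name__ == "__main__":
    with open("/opt/TalendRemoteEngine/bin/sysenv", encoding="utf-8") as handle:
        secret = read_keystore_password(handle.read())
    print("\n".join(studio_ssl_options("/home/user/re-ssl", secret)))
```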

Cause

Talend Remote Engine enforces SSL communication by default, ensuring that all interactions with the engine are encrypted. If Studio does not have the appropriate certificates installed, it will be unable to establish a secure connection with the Remote Engine.

Environment

Qlik Talend Cloud: Illegal Argument popup when creating new Snowflake connection

When using Key Pair authentication and creating a new Snowflake connection, you might encounter the following error:

Illegal Argument

Resolution

The provided private key file (.p8) is not in the correct format, or the key file password is invalid.

To get the actual error, install the SnowSQL utility on the Linux machine where the Qlik Data Movement gateway is installed and try to connect to the same account:

snowsql -a <account> -u <username> --private-key-path <path to file/rsa_key.p8>

This will provide the exact error on why the connectivity is failing and assist in identifying which root cause applies.
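A quick look at the key file's first line can also hint at a format problem before running SnowSQL. The sketch below assumes the key is expected in PEM-encoded PKCS#8 form, which is how Snowflake's key-pair documentation generates it; the function name and return labels are hypothetical. It does not validate the key contents or the password, so it complements the SnowSQL check rather than replacing it.

```python
def classify_private_key(pem_text: str) -> str:
    """Classify a private key file by its PEM header line."""
    first = next((line.strip() for line in pem_text.splitlines() if line.strip()), "")
    if first == "-----BEGIN ENCRYPTED PRIVATE KEY-----":
        return "pkcs8-encrypted"  # a key file password is required
    if first == "-----BEGIN PRIVATE KEY-----":
        return "pkcs8"  # unencrypted, no password expected
    if first == "-----BEGIN RSA PRIVATE KEY-----":
        return "pkcs1"  # traditional RSA format, not PKCS#8
    return "unknown"


if __name__ == "__main__":
    # Example path only; point this at your own key file.
    with open("rsa_key.p8", encoding="utf-8") as handle:
        print(classify_private_key(handle.read()))
```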

Cause

Environment

- Qlik Talend Cloud

Top 10 Viz tips - part X - Qlik Connect 2026

At Qlik Connect 2026 I hosted a session called "Fast 15" with my top 10 visualization tips. Here's the app I used with all tips including test data.

Tip titles, more details in app:

- Product Catalogue

How to make a product catalogue. Here's a variant with the new Pivot table using inline SVG images. The product images have been converted to Base64 and inlined within the inline SVG. The text is just positioned on top of the image.

- Table waterfall with subtotal and partial sum

How to make a table waterfall with add bars, and bars for subtotal and retro sum. Spreadsheet for layout, rangesum() and above() for offset and bar, master measures and SVG for waterfall.

- Switches revisited

How to make a switch. Create a variable vSwitch and set its value to 0. Use the button to toggle the variable with the action "set variable".

- Button examples

How to style a button. Examples of button styles.

- Map with 3d bars

How to make a 3d bar map point layer. Use a simple SVG with two rects: the bar and the shadow in Data URI with inline SVG. Added a top and base triangle for a little extra 3d effect.

- Rank box chart

How to make a rank box chart. Use unicode boxes and repeat().

- Filter pane examples

How to place a filter pane. Example of top panel, side panel and drawer style.

- Half Gauge

How to make a multi half gauge. Use the map and two line layers.

- Pie chart value and percentage in the same label

How to customize pie chart labels. Use dual() and number format measure expression.

- Line chart symbols

How to add symbols in the line chart. Use dual and unicode symbols.

- Control chart with combo chart

How to make a control chart with dynamic bounds. Use combo and measures for center, upper and lower bounds.

- Formatting x-axis and keep field

How to format the x-axis. Use dual and aggr.

I want to emphasize that many of the tips were discovered by people other than me. I tried to credit the original author wherever possible.

If you liked it, here's more in the same style:

- 24 days of visualization Season III, II, I

- Top 10 Tips Part X, IX, VIII, VII, VI, V, IV, III, II, I

- Let's make new charts with Qlik Sense

- FT Visual Vocabulary Qlik Sense version

- Similar but for Qlik GeoAnalytics : Part III, II, I

Thanks,

Patric

-

Qlik Replicate: Errors Due to Unsupported SQL Server Versions

Starting from Qlik Replicate versions 2024.5 and 2024.11, Microsoft SQL Server 2012 and 2014 are no longer supported. Supported SQL Server versions include 2016, 2017, 2019, and 2022. For up-to-date information, see Support Source Endpoints for your respective version.

Attempting to connect to unsupported versions, both on-premise and cloud, can result in various errors.

Examples of reported Errors:

- Qlik Replicate 2024.5 accessing Microsoft Azure SQL Server 2014

[SOURCE_CAPTURE ]W: Table 'dbo'.'tableName' has encrypted column(s), but the 'Capture data from Always Encrypted database' option is disabled. The table will be suspended (sqlserver_endpoint_capture.c:157)

- Qlik Replicate 2024.11 accessing Microsoft SQL Server 2014

[SOURCE_UNLOAD ]E: RetCode: SQL_ERROR SqlState: 42S02 NativeError: 208 Message: [Microsoft][ODBC Driver 18 for SQL Server][SQL Server]Invalid object name 'sys.column_encryption_keys'. Line: 1 Column: -1 [1022502] (ar_odbc_stmt.c:4067)

Cause

The system view sys.column_encryption_keys is only available starting from SQL Server 2016. Attempting to query this view on earlier versions results in errors.

Reference: sys.column_encryption_keys (Microsoft Docs)

Resolution

Upgrade your SQL Server instances to a supported version (2016 or later) to ensure compatibility with Qlik Replicate 2024.5 and above.
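To check an instance before upgrading Qlik Replicate, the engine version can be compared against the 2016 cutoff. The sketch below is an illustration under assumptions: the function name sqlserver_supported is hypothetical, and the input is assumed to be the string returned by SELECT SERVERPROPERTY('ProductVersion') on an on-premises or VM-hosted instance. Managed cloud services may report version numbers differently. SQL Server 2012, 2014, and 2016 use major versions 11, 12, and 13 respectively.

```shell
# Hypothetical helper: decide whether a SQL Server product version string
# (for example "12.0.6024.0") is at or above SQL Server 2016 (major 13).
sqlserver_supported() {
  # Keep only the part before the first dot.
  major=${1%%.*}
  case "$major" in
    ''|*[!0-9]*) echo "unknown"; return 2 ;;
  esac
  if [ "$major" -ge 13 ]; then
    echo "supported"
  else
    echo "unsupported"
    return 1
  fi
}
```

For example, sqlserver_supported "12.0.6024.0" prints "unsupported", while sqlserver_supported "13.0.1601.5" prints "supported".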

Internal Investigation ID(s)

00375940, 00376089

Environment

- Qlik Replicate versions 2024.5, 2024.11, and higher

- Microsoft SQL Server 2014, 2012, and lower (unsupported)


-

QlikView server communication interruptions following Microsoft Windows RC4 ciph...

Beginning on April 14, 2026, QlikView customers experienced outages and intermittent disruptions within their QlikView environments. These incidents coincided with the deployment of Microsoft’s April 2026 security patches to Domain Controllers, which affected QlikView Server Service (QVS) communications over port 4747.

The Microsoft patches introduced changes targeting Kerberos authentication and RC4 encryption. As a result, QlikView environments where RC4 remained enabled (such as at the domain account or Windows server level) became unstable or non-functional.

The impact on QlikView may include, but is not limited to:

- Failed QlikView Distribution Server distribution tasks with an error code indicating an Authentication Failure.

- "No Server" message on the QlikView AccessPoint with an error message in the Web server log indicating that the Web server cannot connect to the QlikView Server.

- Failed Qlik NPrinting distributions to QlikView using a QVP Connection.

- Problems accessing the QlikView Server settings from the QlikView Management Console, or failures for the QlikView Server to come online after a restart.

This article documents the steps to reconfigure the environment to comply with the RC4 cipher suite deprecation.

Resolution

Information in this article is based on Microsoft's remediation steps and has been adjusted and expanded to include QlikView-specific instructions. For the original, see Detect and remediate RC4 usage in Kerberos | learn.microsoft.com.

There are three stages; not all may be required. Always start at the first one.

Reset the Computer Account

These steps require you to remove the computer from the domain and then re-add it. Before proceeding, ensure you have a Local Administrator account and its password for each of the QlikView servers.

- Confirm the Domain Controllers and the QlikView server(s) have the Microsoft patch applied.

- Reset the Active Directory Computer Account (not the Service Account) on the Domain Controller’s Active Directory Users and Computers interface.

- Place the QlikView server(s) into a Workgroup:

- Log onto the local computer of the QlikView server

- Open the Server Manager

- Go to Local Server

- Go to Computer Name

- Place the server in a workgroup

- Reboot the QlikView server

- Repeat the step for all QlikView servers if you have additional nodes

- Return the QlikView server(s) to the Domain:

- After the reboot, return to the Server Manager

- Go to Local Server

- Go to Computer Name

- Re-join the Domain and reboot the server

- Repeat the step for all QlikView servers if you have additional nodes

- Test the QlikView environment to confirm this has resolved the interruptions.

Kerberos ticket reset

It may be necessary to clear the Kerberos ticket on the affected QlikView server(s).

- Open PowerShell or a Command Prompt with elevated permissions

- Run the command: klist purge

Reset the Service Account

It may be necessary to reset the Domain password for the QlikView service account.

- Verify that all Domain Controllers and all Windows servers on which QlikView is installed are patched.

- Verify that RC4 encryption is disabled.

- Reset the QlikView service account password.

- Open the Services panel, then reapply the QlikView service account using the new password

- Restart the QlikView services.

If the QlikView service account was used for any data connection(s), they will need to be updated with the new password.

Related Content

Microsoft Patches:

- Windows Server 2022 (DC): KB5082142

- Windows Server 2019 (DC): KB5082123

- Windows Server 2016 (DC): KB508208

Environment

- QlikView

-

Qlik Replicate and DB2 for iSeries source: Check if a receiver has been detached

When using IBM DB2 for iSeries as a source in Qlik Replicate, the task may report a warning if journal receiver numbers are not continuous.

A typical warning message looks like:

[SOURCE_CAPTURE ]W: Journal entry sequence '2026' was read from journal receiver 'APSUPDB.QSQJRN0118'. The previous entry was read from receiver 'APSUPDB.QSQJRN0116'. Check if a receiver has been detached. (db2i_endpoint_capture.c:1836)

Resolution

Qlik Replicate reports this condition as a warning only. There is no impact on task execution or data integrity:

- The task continues to run normally

- No journal entries are lost

- No data inconsistency is introduced

This warning can be safely ignored unless accompanied by other errors or abnormal task behavior.

Cause

On the IBM DB2 for iSeries side, the condition behind 'Check if a receiver has been detached' can occur if, for example, a process is holding or locking the journal. This temporarily prevents the system from creating or attaching the next journal receiver. In such cases, a receiver number may be allocated but never successfully created, resulting in a gap in the receiver numbering.

This behavior is normal on IBM i and does not indicate a defect. The system assigns journal receiver numbers, but sequential continuity is not guaranteed. IBM i only guarantees that receiver numbers increase monotonically, not that every number will exist.
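As a rough illustration of the numbering gap described above, the following shell sketch lists the receiver numbers skipped between the two receiver names quoted in a warning. The function names are hypothetical, and the sketch assumes each receiver name ends in a numeric sequence, as in the example message.

```shell
# Hypothetical helper: print the trailing digits of a receiver name
# (for example "APSUPDB.QSQJRN0118") as a plain decimal number.
receiver_number() {
  digits=$(printf '%s\n' "$1" | sed -n 's/^.*[^0-9]\([0-9][0-9]*\)$/\1/p')
  [ -n "$digits" ] || return 1
  # Strip leading zeros so shell arithmetic does not read the value as octal.
  digits=$(printf '%s\n' "$digits" | sed 's/^0*//')
  printf '%s\n' "${digits:-0}"
}

# Hypothetical helper: list the receiver numbers skipped between the
# previous receiver ($1) and the current receiver ($2), one per line.
missing_receivers() {
  prev=$(receiver_number "$1") || return 1
  curr=$(receiver_number "$2") || return 1
  n=$((prev + 1))
  while [ "$n" -lt "$curr" ]; do
    printf '%s\n' "$n"
    n=$((n + 1))
  done
}
```

With the names from the sample warning, missing_receivers APSUPDB.QSQJRN0116 APSUPDB.QSQJRN0118 prints 117, the receiver number that was allocated but never created. Consecutive receivers produce no output.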

Internal Investigation ID(s)

00420963, 00423959

Environment

- Qlik Replicate

- IBM DB2 for iSeries all versions

-

Qlik Talend Cloud: Data Movement Gateway repagent installation in custom directo...

This article describes the diagnosis and resolution of a Qlik Data Gateway (repagent) service failure when the gateway is installed in a non-default location. The default location is /opt. In this case, the installation used the --prefix option:

QLIK_CUSTOMER_AGREEMENT_ACCEPT=yes rpm -ivh qlik-data-gateway-data-movement.rpm --prefix /data

The service entered a failed state after exhausting its systemd restart limit, caused by a stale process and PID file left over from a previous crash. This article covers root cause analysis, step-by-step resolution, and preventative measures.

The following symptoms were observed when the issue was reported:

- repagent service showing as 'failed' in systemctl status

- Service had restarted 5 times and hit the StartLimitBurst threshold

- No Qlik Replicate tasks were processing

- Journal logs showed: 'Main process exited, code=exited, status=1/FAILURE'

- Subsequent start attempts failed with: 'New main PID does not belong to service, and PID file is not owned by root. Refusing.'

From the systemctl status output:

Active: failed (Result: exit-code) since Wed 2026-04-15 21:16:53 BST

Main PID: 3220 (code=exited, status=1/FAILURE)

repagent.service: Start request repeated too quickly.

Resolution

To restore the service, first verify the SELinux config is set to SELINUX=disabled and SELINUXTYPE=minimum:

vi /etc/selinux/config

# This file controls the state of SELinux on the system.

# SELINUX= can take one of these three values:

# enforcing - SELinux security policy is enforced.

# permissive - SELinux prints warnings instead of enforcing.

# disabled - No SELinux policy is loaded.

SELINUX=disabled

# SELINUXTYPE= can take one of these three values:

# targeted - Targeted processes are protected,

# minimum - Modification of targeted policy. Only selected processes are protected.

# mls - Multi Level Security protection.

SELINUXTYPE=minimum

If not, make the changes shown above and reboot the machine. Then continue as the root user:

- Identify the stale process.

cat /home/qlik/gateway/movement/data/repagent.pid

This file contains the PID of the running process (1234 in this example).

ps -ef | grep 1234

This confirmed PID 1234 (agentctl) was still running as an orphan process under the qlik user.

- Kill the stale process.

sudo kill -9 1234

This force-killed the orphan agentctl process and its child processes.

- Remove the stale PID file.

rm -f /home/qlik/gateway/movement/data/repagent.pid

- Reset the failed service state.

sudo systemctl reset-failed repagent

This cleared the systemd failure counter, allowing the service to be started again.

- Start the service.

sudo systemctl start repagent

sudo systemctl status repagent

- The service returned to an active (running) state successfully.
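The first step above (reading the PID file and checking the process) can be sketched as a small shell function. This is an illustration under assumptions, not part of the product: the function name pidfile_state is hypothetical, and kill -0 only reports reliably for processes the current user may signal, so run it as root as the steps above do.

```shell
# Hypothetical helper: report whether the PID recorded in a PID file
# still refers to a live process. Prints missing, empty, running, or stale.
pidfile_state() {
  pid_file=$1
  if [ ! -f "$pid_file" ]; then
    echo "missing"
    return 0
  fi
  # Read the first line and keep digits only.
  pid=$(head -n 1 "$pid_file" | tr -cd '0-9')
  if [ -z "$pid" ]; then
    echo "empty"
    return 0
  fi
  # kill -0 sends no signal; it only tests whether the process can be signalled.
  if kill -0 "$pid" 2>/dev/null; then
    echo "running"
  else
    echo "stale"
  fi
}
```

For example, pidfile_state /home/qlik/gateway/movement/data/repagent.pid. A result of "running" while systemctl reports the service as failed points to the orphan process described in this article. A result of "stale" means the recorded process is gone and only the PID file needs to be removed.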

Confirmed resolution output:

Active: active (running) since the current date

Main PID: 10391 (agentctl)

Tasks: 93

Cause

There are two root causes.

Stale Orphan Process (Primary Blocker)

When the repagent service originally crashed, it left behind an orphan process (PID 1234 in this example) that continued running independently of systemd. When systemd attempted to restart the service, it detected the stale PID file pointing to that process and refused to adopt it because the PID file was not owned by root. This caused every restart attempt to fail with a 'protocol' error.

| Step | Event | Result |
| --- | --- | --- |
| 1 | repagent crashed | PID 1234 left as orphan |
| 2 | Systemd attempted restart | Detected stale PID 1234 |
| 3 | Systemd refused to adopt PID | Failed with 'protocol' error |
| 4 | Restart counter hit limit (5) | Service permanently stopped |

SQLite Native Library Warning (Secondary)

The journal also showed a secondary warning related to the System.Data.SQLite native library pre-loader failing to check the code base for assemblies loaded from a single-file bundle. This is a known .NET runtime compatibility warning and does not directly cause the service failure, but may indicate a .NET version mismatch worth monitoring.

System.Data.SQLite: Native library pre-loader failed to check code base

System.NotSupportedException: CodeBase is not supported on assemblies

loaded from a single-file bundle.

Environment

- Qlik Talend Cloud

-

Qlik Talend Administration Center: You are using # DI users, but your license al...

After updating the license, connecting to the Talend Administration Center fails with:

You are using # DI users, but your license allows only #, please contact your account manager.

Resolution

Two solutions exist.

Deactivate Unused Users via Security Account

- Log in to Qlik Talend Administration Center using a Security Administrator or the default security account.

For information about the default security account, see Change the default password used to configure the database.

- After logging in, navigate to Settings > Users and deactivate any unused or excess users

Modify Users Directly in Qlik Talend Administration Center Database

- Access the Qlik Talend Administration Center database directly

- Locate the user table and review existing users:

- Identify unused users

- Verify user types against the new license

- Update user types to comply with license constraints (e.g., change DQ to DI) or delete unnecessary users

License downgrade behavior: When downgrading licenses (for example, from DQ seats to DI seats), user configurations must also be updated.

- Users previously assigned as DQ must be manually changed to DI

- Failure to update the user type may cause access issues or license mismatches

Cause

The issue occurs due to a mismatch between the number or type of users defined in the system and those allowed by the updated license.

- The Available Users section reflects:

- The number of users currently created under active licenses

- The total number of users permitted by those licenses

- After a license update:

- If the user type and/or user count exceeds the new license limits, the system triggers the error

- Qlik Talend Administration Center functionality is restricted until the discrepancy is resolved
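The comparison behind the error can be sketched as simple arithmetic. The function name seat_excess is hypothetical, and the counts are assumed to be read manually from the Available Users section. This is an illustration only, not how Qlik Talend Administration Center performs the check.

```shell
# Hypothetical helper: compare the users created for a seat type with the
# number the license allows, and report how many must be deactivated or retyped.
# Arguments: seat type (for example DI), users in use, users allowed.
seat_excess() {
  seat_type=$1
  used=$2
  allowed=$3
  if [ "$used" -gt "$allowed" ]; then
    echo "$seat_type: deactivate or retype $((used - allowed)) user(s)"
    return 1
  fi
  echo "$seat_type: within license"
}
```

For example, seat_excess DI 12 10 prints "DI: deactivate or retype 2 user(s)", which corresponds to an error reporting 12 DI users against a license that allows 10.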

About User Types and License Seats

In Qlik Talend Administration Center, users are assigned different license seat types depending on their access rights and functional domain.

The main user/license types are:

- DI (Data Integration / ESB user)

Used for Data Integration and ESB-related activities.

- DQ (Data Quality / Data Management user)

Used for Data Quality and data stewardship tasks.

- MDM (Master Data Management user)

Used for Master Data Management domain.

- NPA (No Project Access)

Users with no project-level access.

For more information regarding license types and features for users, see What domains can you work in depending on your user type and license.

Environment

- Qlik Talend Administration Center
