
Recent Documents

-

Qlik Cloud Webhooks: Migration to new event formats is coming

The event payloads emitted by the Qlik Cloud webhooks service are changing. Qlik is replacing a legacy event format with a new cloud event format.

Any legacy event (that is, any event not already cloud event compliant) will be updated to a temporary hybrid event containing both legacy and cloud event payloads. This will start on or after November 3, 2025.

Please consider updating your integrations to use the new fields once added.

A formal deprecation with at least a 6-month notice will be provided via the Qlik Developer changelog. After that period, hybrid events will be replaced entirely by cloud events.

Webhook automations in Qlik Automate will not be impacted at this time.

Events impacted by this migration

The webhooks service in Qlik Cloud enables you to subscribe to notifications when your Qlik Cloud tenant generates specific events.

At the time of writing, the following legacy events are available:

| Service | Event name | Event type | When is event generated |
|---|---|---|---|
| API keys | API key validation failed | com.qlik.v1.api-key.validation.failed | The tenant tries to use an API key which is expired or revoked |
| Apps (Analytics apps) | App created | com.qlik.v1.app.created | A new analytics app is created |
| Apps (Analytics apps) | App deleted | com.qlik.v1.app.deleted | An analytics app is deleted |
| Apps (Analytics apps) | App exported | com.qlik.v1.app.exported | An analytics app is exported |
| Apps (Analytics apps) | App reload finished | com.qlik.v1.app.reload.finished | An analytics app has finished refreshing on an analytics engine (note that it may not be saved yet) |
| Apps (Analytics apps) | App published | com.qlik.v1.app.published | An analytics app is published from a personal or shared space to a managed space |
| Apps (Analytics apps) | App data updated | com.qlik.v1.app.data.updated | An analytics app is saved to persistent storage |
| Automations (Automate) | Automation created | com.qlik.v1.automation.created | A new automation is created |
| Automations (Automate) | Automation deleted | com.qlik.v1.automation.deleted | An automation is deleted |
| Automations (Automate) | Automation updated | com.qlik.v1.automation.updated | An automation has been updated and saved to persistent storage |
| Automations (Automate) | Automation run started | com.qlik.v1.automation.run.started | An automation run began execution |
| Automations (Automate) | Automation run failed | com.qlik.v1.automation.run.failed | An automation run failed |
| Automations (Automate) | Automation run ended | com.qlik.v1.automation.run.ended | An automation run finished successfully |
| Reloads (Analytics reloads) | Reload finished | com.qlik.v1.reload.finished | An analytics app has been refreshed and saved |
| Users | User created | com.qlik.v1.user.created | A new user is created |
| Users | User deleted | com.qlik.v1.user.deleted | A user is deleted |

Any events not listed above will remain as-is, as they already adhere to the cloud event format.

How are events changing

Each event will change to a new structure. The details included in the payloads will remain the same, but some attributes will be available in a different location.

The changes being made:

- All new property names will be lowercase, which may cause some duplication of existing properties within the `data` object.

- The following properties or objects will be updated:

  - `cloudEventsVersion` is replaced by `specversion`. For most events this will be from `cloudEventsVersion: 0.1` to `specversion: 1.0+`.

  - `contentType` is replaced by `datacontenttype` to describe the media type of the data object.

  - `eventId` is replaced by `id`.

  - `eventTime` is replaced by `time`.

  - `eventTypeVersion` is not present in the future schema.

  - `eventType` is replaced by `type`.

  - `extensions.actor` is replaced by `authtype` and `authclaims`.

  - `extensions.updates` is replaced by `data._updates`.

  - `extensions.meta`, and any other direct objects on `extensions`, are replaced by equivalents in `data` where relevant.

- The direct properties of the `extensions` object, such as `tenantId`, `userId`, `spaceId`, etc., will be moved to the root and renamed to be lowercase if needed.
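As a rough illustration of the renames listed above, the following Python sketch maps a legacy payload onto the new field names. The function name `legacy_to_cloud`, the default `specversion` value, and the choice to leave out nested `extensions` objects such as `actor` and `meta` (their target shape is not shown in this article) are assumptions made for the example, not Qlik's implementation.

```python
from typing import Any, Dict

# Root-level renames listed in the article (legacy name -> new name).
ROOT_RENAMES = {
    "contentType": "datacontenttype",
    "eventId": "id",
    "eventTime": "time",
    "eventType": "type",
}
# Legacy root fields that are not copied across as-is.
NOT_COPIED = {"cloudEventsVersion", "eventTypeVersion", "extensions"}


def legacy_to_cloud(legacy: Dict[str, Any], specversion: str = "1.0") -> Dict[str, Any]:
    """Return a new dict using the cloud event field names (illustrative only)."""
    cloud: Dict[str, Any] = {"specversion": specversion}  # assumed default value
    for key, value in legacy.items():
        if key not in NOT_COPIED:
            cloud[ROOT_RENAMES.get(key, key)] = value

    data = dict(legacy.get("data") or {})
    for key, value in (legacy.get("extensions") or {}).items():
        if key == "updates":
            data["_updates"] = value      # extensions.updates -> data._updates
        elif isinstance(value, (dict, list)):
            continue                      # actor, meta, etc.: mapping not shown here
        else:
            cloud[key.lower()] = value    # tenantId -> tenantid, userId -> userid
    cloud["data"] = data
    return cloud
```

Calling `legacy_to_cloud` on a legacy automation created payload would yield root-level `id`, `time`, `type`, `tenantid`, `userid`, `ownerid`, and `spaceid` fields alongside the unchanged `data` and `source`.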

Example: Automation created event

This is the current legacy payload of the automation created event:

    {
      "cloudEventsVersion": "0.1",
      "source": "com.qlik/automations",
      "contentType": "application/json",
      "eventId": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "eventTime": "2025-09-01T09:53:17.920Z",
      "eventTypeVersion": "1.0.0",
      "eventType": "com.qlik.v1.automation.created",
      "extensions": {
        "ownerId": "637390ef6541614d3a88d6c3",
        "spaceId": "685a770f2c31b9e482814a4f",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "userId": "637390ef6541614d3a88d6c3"
      },
      "data": {
        "connectorIds": {},
        "containsBillable": null,
        "createdAt": "2025-09-01T09:53:17.000000Z",
        "description": null,
        "endpointIds": {},
        "id": "cae31848-2825-4841-bc88-931be2e3d01a",
        "lastRunAt": null,
        "lastRunStatus": null,
        "name": "hello world",
        "ownerId": "637390ef6541614d3a88d6c3",
        "runMode": "manual",
        "schedules": {},
        "snippetIds": {},
        "spaceId": "685a770f2c31b9e482814a4f",
        "state": "available",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "updatedAt": "2025-09-01T09:53:17.000000Z"
      }
    }

This will be the temporary hybrid event for automation created:

    {
      // cloud event fields
      "id": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "time": "2025-09-01T09:53:17.920Z",
      "type": "com.qlik.v1.automation.created",
      "userid": "637390ef6541614d3a88d6c3",
      "ownerid": "637390ef6541614d3a88d6c3",
      "tenantid": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
      "description": "hello world",
      "datacontenttype": "application/json",
      "specversion": "1.0.2",
      // legacy event fields
      "eventId": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "eventTime": "2025-09-01T09:53:17.920Z",
      "eventType": "com.qlik.v1.automation.created",
      "extensions": {
        "userId": "637390ef6541614d3a88d6c3",
        "spaceId": "685a770f2c31b9e482814a4f",
        "ownerId": "637390ef6541614d3a88d6c3",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo"
      },
      "contentType": "application/json",
      "eventTypeVersion": "1.0.0",
      "cloudEventsVersion": "0.1",
      // unchanged event fields
      "data": {
        "connectorIds": {},
        "containsBillable": null,
        "createdAt": "2025-09-01T09:53:17.000000Z",
        "description": null,
        "endpointIds": {},
        "id": "cae31848-2825-4841-bc88-931be2e3d01a",
        "lastRunAt": null,
        "lastRunStatus": null,
        "name": "hello world",
        "ownerId": "637390ef6541614d3a88d6c3",
        "runMode": "manual",
        "schedules": {},
        "snippetIds": {},
        "spaceId": "685a770f2c31b9e482814a4f",
        "state": "available",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "updatedAt": "2025-09-01T09:53:17.000000Z"
      },
      "source": "com.qlik/automations"
    }

Timeline

- November 3, 2025: Hybrid events start emitting (combined legacy and cloud event payloads).

- At least 6 months after a formal deprecation notice: Legacy fields will be removed, leaving only cloud events.
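While hybrid events are being emitted, an integration may want to read the new fields when they are present and fall back to the legacy ones otherwise. A minimal sketch in Python, assuming the webhook body has already been parsed into a dictionary; the helper names `first_present` and `read_event` are hypothetical:

```python
from typing import Any, Dict, Optional


def first_present(payload: Dict[str, Any], *keys: str) -> Optional[Any]:
    """Return the value of the first key that holds a non-None value."""
    for key in keys:
        value = payload.get(key)
        if value is not None:
            return value
    return None


def read_event(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Read common fields from a legacy, hybrid, or cloud event payload."""
    extensions = payload.get("extensions") or {}
    return {
        "id": first_present(payload, "id", "eventId"),
        "type": first_present(payload, "type", "eventType"),
        "time": first_present(payload, "time", "eventTime"),
        # Legacy payloads keep tenantId under extensions; new ones use root tenantid.
        "tenant_id": payload.get("tenantid") or extensions.get("tenantId"),
        "data": payload.get("data") or {},
    }
```

Preferring the new names first means the same handler keeps working once the legacy fields are removed.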

Environment

- Qlik Cloud

- Qlik Cloud Government


-

How to create NPrinting GET and POST REST connections

NPrinting has a library of APIs that can be used to customize many native NPrinting functions outside the NPrinting Web Console.

Environment:

Two of the more common capabilities available via the NPrinting APIs are:

- Connection reloads

- Publish Task executions

These and many other public NPrinting APIs can be found here: Qlik NPrinting API

In the data load editor of your Qlik Sense app, two REST connections are required. (These two REST connectors must also be configured in the QlikView Desktop application's load script where the APIs are used. See Nprinting Rest API Connection through QlikView desktop.)

- GET

- POST

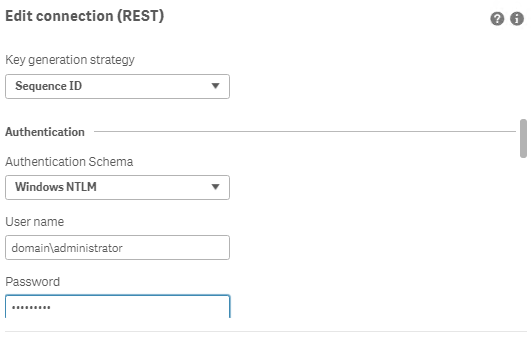

Requirements of REST user account:

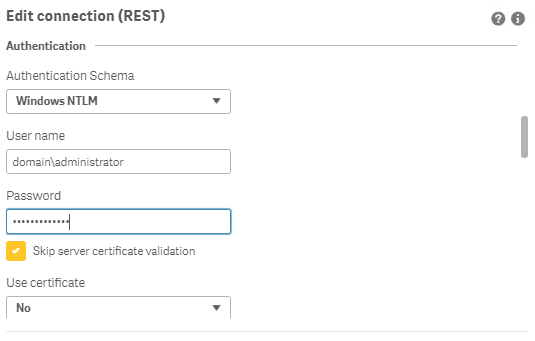

- Windows Authentication is required in both of these connectors. The required user account is the NPrinting service account (which is also RootAdmin on the Qlik Sense server).

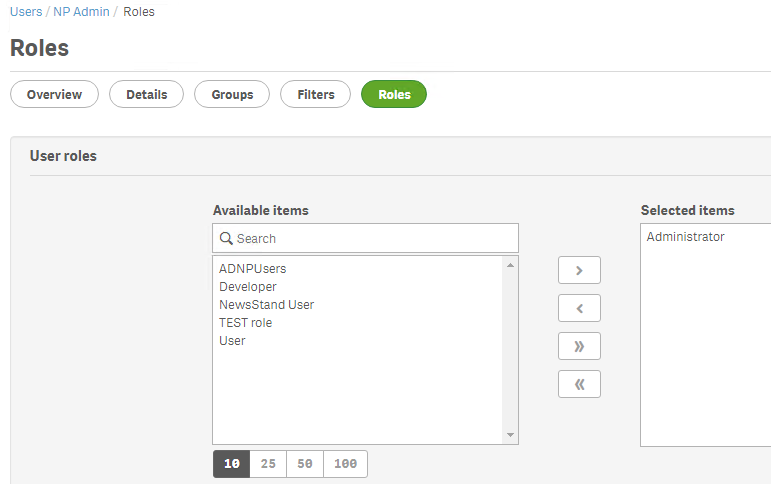

- This user account must also be a member of the NPrinting 'Administrators' Security Role on the NPrinting Server.

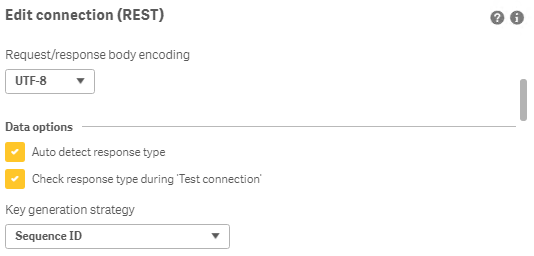

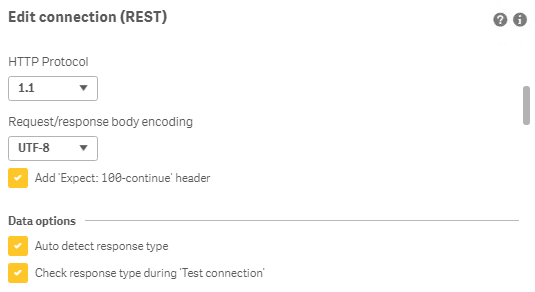

Creating REST "GET" connections

Note: Replace QlikServer3.domain.local with the name and port of your NPrinting Server

NOTE: replace domain\administrator with the domain and user name of your NPrinting service user account

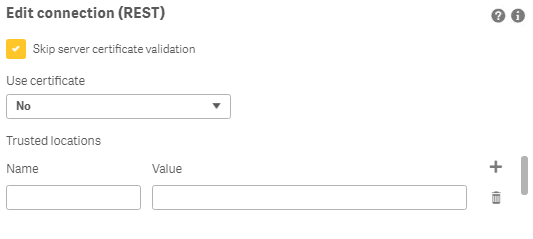

Creating REST "POST" connections

Note: Replace QlikServer3.domain.local with the name and port of your NPrinting Server

NOTE: replace domain\administrator with the domain and user name of your NPrinting service user account

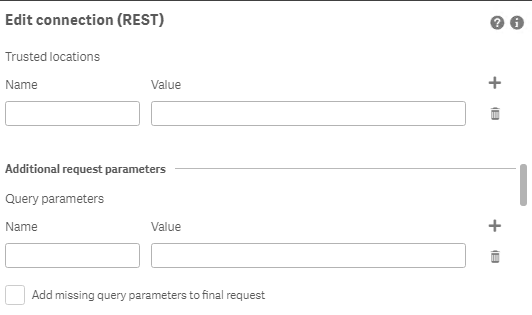

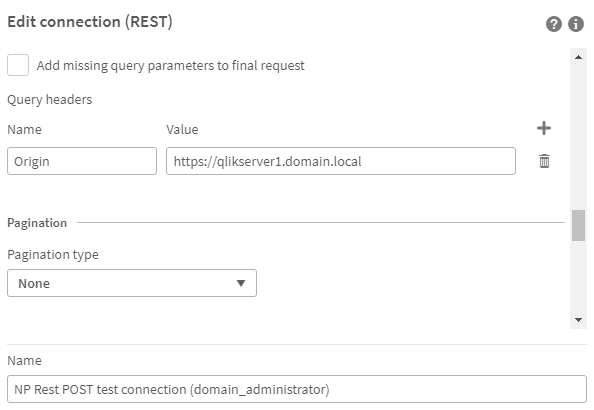

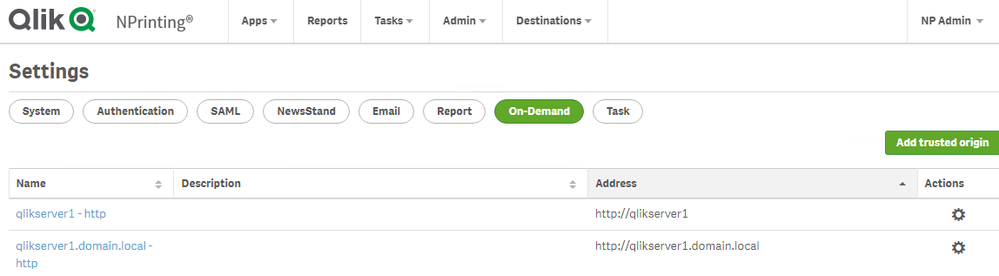

In your POST REST connection only, enter Origin as the 'Name' and the Qlik Sense (or QlikView) server address as the 'Value'.

Replace https://qlikserver1.domain.local with your Qlik Sense (or QlikView) server address.

Ensure that the 'Origin' Qlik Sense or QlikView server is added as a 'Trusted Origin' on the NPrinting Server computer.
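To make the difference between the two connections concrete, here is a small Python sketch that only builds (and does not send) the two kinds of request. The port 4993 and the paths `/api/v1/login/ntlm` and `/api/v1/tasks/{id}/executions` are assumptions based on the public Qlik NPrinting API documentation and should be checked against your version; the function name `nprinting_requests` is hypothetical, and Windows (NTLM) authentication is deliberately not handled here.

```python
import urllib.request
from typing import Tuple


def nprinting_requests(
    server: str, task_id: str, origin: str
) -> Tuple[urllib.request.Request, urllib.request.Request]:
    """Build a GET (login) and a POST (run publish task) request without sending them."""
    base = f"https://{server}/api/v1"  # assumed API base path
    login = urllib.request.Request(f"{base}/login/ntlm", method="GET")
    run_task = urllib.request.Request(
        f"{base}/tasks/{task_id}/executions",
        method="POST",
        # Only the POST connection carries the Origin of the Qlik Sense/QlikView server.
        headers={"Origin": origin},
    )
    return login, run_task


login, run_task = nprinting_requests(
    "QlikServer3.domain.local:4993",      # assumed port
    "00000000-0000-0000-0000-000000000000",  # placeholder task ID
    "https://qlikserver1.domain.local",
)
print(login.get_method(), login.full_url)
print(run_task.get_method(), run_task.full_url, run_task.get_header("Origin"))
```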

Related Content

- Distribute NPrinting reports after reloading a Qlik App

- Extending Qlik NPrinting

- Run a Qlik NPrinting API POST command via QlikView reload script

- Troubleshooting Common NPrinting API Errors

NOTE: The information in this article is provided as-is and to be used at own discretion. NPrinting API usage requires developer expertise and usage therein is significant customization outside the turnkey NPrinting Web Console functionality. Depending on tool(s) used, customization(s), and/or other factors ongoing, support on the solution below may not be provided by Qlik Support.

-

Customizing Qlik Sense Enterprise on Windows Forms Login Page

Ever wanted to brand or customize the default Qlik Sense Login page?

The functionality exists, and it's really as simple as just designing your HTML page and 'POSTing' it into your environment.

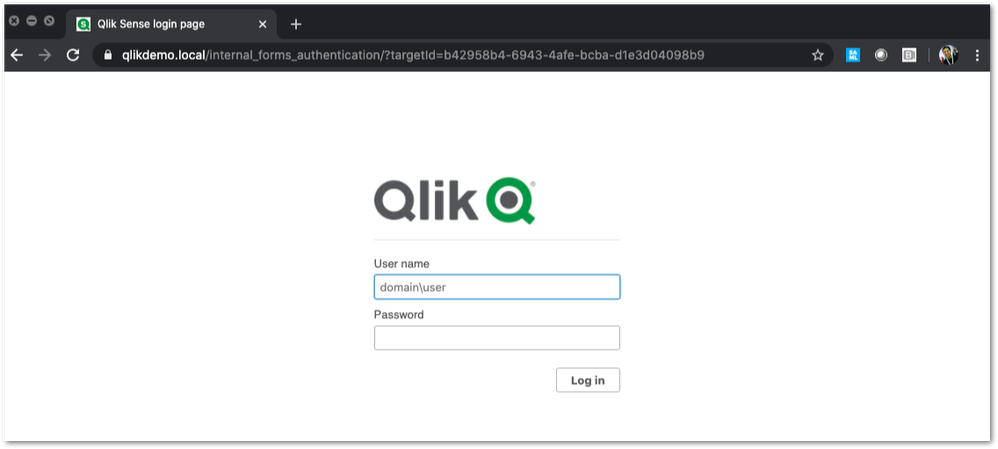

We've all seen the standard Qlik Sense Login page. This article is all about customizing it.

This customization is provided as is. Qlik Support cannot provide continued support of the solution. For assistance, reach out to our Professional Services or engage in our active Integrations forum.

To customize the page:

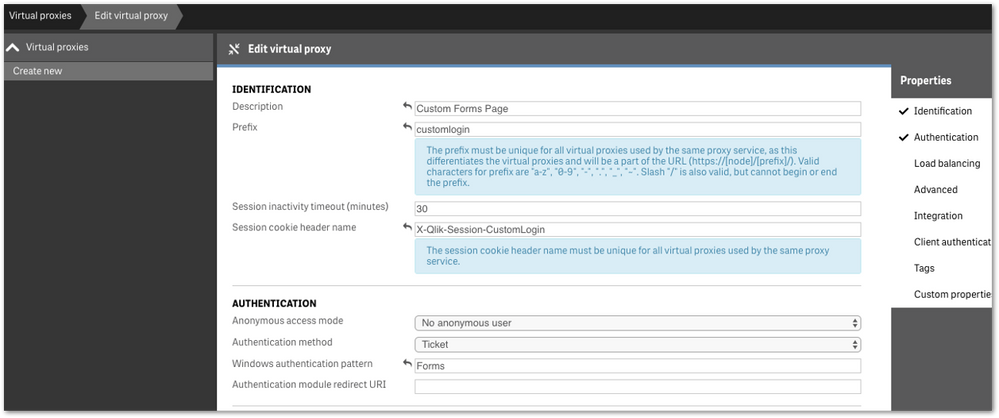

- We highly recommend setting up a new virtual proxy with Forms so you don't impact any users that are using auto-login Windows auth.

  Example setup:

  Description: Custom Forms Page

  Prefix: customlogin

  Session cookie header-name: X-Qlik-Session-CustomLogin

  Windows authentication pattern: Forms

- Once this is done, a good starting point is to download the default login page.

  You can open up your web developer tool of choice, go to the login page, and download the HTML response from the GET http://<server>/customlogin/internal_forms_authentication request. It should be roughly a 273 line .html file.

- Once you have this file, you can more or less customize it as much as you'd like.

  Image files can be inlined, as you'll see in the Qlik default file, or can be referenced as long as they are publicly accessible. The only things that need to exist are the input boxes with appropriate classes and attributes, and the 'Log In' button.

- After building out your custom HTML page so that it looks great, convert it to Base64. There are online tools to do this; openssl also has this functionality.

  Once you have your Base64 encoded HTML file, you will want to PUT it into your Sense environment.

- First, do a GET request on /qrs/proxyservice and find the ID of the proxy service you want this login page to be shown for.

- You will then do a GET request on /qrs/proxyservice/<id> and copy the body of that response. Below is an example of that response.

{ "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "customProperties": [], "settings": { "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "listenPort": 443, "allowHttp": true, "unencryptedListenPort": 80, "authenticationListenPort": 4244, "kerberosAuthentication": false, "unencryptedAuthenticationListenPort": 4248, "sslBrowserCertificateThumbprint": "e6ee6df78f9afb22db8252cbeb8ad1646fa14142", "keepAliveTimeoutSeconds": 10, "maxHeaderSizeBytes": 16384, "maxHeaderLines": 100, "logVerbosity": { "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "logVerbosityAuditActivity": 4, "logVerbosityAuditSecurity": 4, "logVerbosityService": 4, "logVerbosityAudit": 4, "logVerbosityPerformance": 4, "logVerbositySecurity": 4, "logVerbositySystem": 4, "schemaPath": "ProxyService.Settings.LogVerbosity" }, "useWsTrace": false, "performanceLoggingInterval": 5, "restListenPort": 4243, "virtualProxies": [ { "id": "58d03102-656f-4075-a436-056d81144c1f", "prefix": "", "description": "Central Proxy (Default)", "authenticationModuleRedirectUri": "", "sessionModuleBaseUri": "", "loadBalancingModuleBaseUri": "", "useStickyLoadBalancing": false, "loadBalancingServerNodes": [ { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": "37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": 
"b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null } ], "authenticationMethod": 0, "headerAuthenticationMode": 0, "headerAuthenticationHeaderName": "", "headerAuthenticationStaticUserDirectory": "", "headerAuthenticationDynamicUserDirectory": "", "anonymousAccessMode": 0, "windowsAuthenticationEnabledDevicePattern": "Windows", "sessionCookieHeaderName": "X-Qlik-Session", "sessionCookieDomain": "", "additionalResponseHeaders": "", "sessionInactivityTimeout": 30, "extendedSecurityEnvironment": false, "websocketCrossOriginWhiteList": [ "qlikdemo", "qlikdemo.local", "qlikdemo.paris.lan" ], "defaultVirtualProxy": true, "tags": [], "samlMetadataIdP": "", "samlHostUri": "", "samlEntityId": "", "samlAttributeUserId": "", "samlAttributeUserDirectory": "", "samlAttributeSigningAlgorithm": 0, "samlAttributeMap": [], "jwtAttributeUserId": "", "jwtAttributeUserDirectory": "", "jwtAudience": "", "jwtPublicKeyCertificate": "", "jwtAttributeMap": [], "magicLinkHostUri": "", "magicLinkFriendlyName": "", "samlSlo": false, "privileges": null }, { "id": "a8b561ec-f4dc-48a1-8bf1-94772d9aa6cc", "prefix": "header", "description": "header", "authenticationModuleRedirectUri": "", "sessionModuleBaseUri": "", "loadBalancingModuleBaseUri": "", "useStickyLoadBalancing": false, "loadBalancingServerNodes": [ { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": 
"37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": "b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null } ], "authenticationMethod": 1, "headerAuthenticationMode": 1, "headerAuthenticationHeaderName": "userid", "headerAuthenticationStaticUserDirectory": "QLIKDEMO", "headerAuthenticationDynamicUserDirectory": "", "anonymousAccessMode": 0, "windowsAuthenticationEnabledDevicePattern": "Windows", "sessionCookieHeaderName": "X-Qlik-Session-Header", "sessionCookieDomain": "", "additionalResponseHeaders": "", "sessionInactivityTimeout": 30, "extendedSecurityEnvironment": false, "websocketCrossOriginWhiteList": [ "qlikdemo", "qlikdemo.local" ], "defaultVirtualProxy": false, "tags": [], "samlMetadataIdP": "", "samlHostUri": "", "samlEntityId": "", "samlAttributeUserId": "", "samlAttributeUserDirectory": "", "samlAttributeSigningAlgorithm": 0, "samlAttributeMap": [], "jwtAttributeUserId": "", "jwtAttributeUserDirectory": "", "jwtAudience": "", "jwtPublicKeyCertificate": "", "jwtAttributeMap": [], "magicLinkHostUri": "", "magicLinkFriendlyName": "", "samlSlo": false, "privileges": null } ], "formAuthenticationPageTemplate": "", "loggedOutPageTemplate": "", "errorPageTemplate": "", "schemaPath": "ProxyService.Settings" }, "serverNodeConfiguration": { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": 
"37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": "b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null }, "tags": [], "privileges": null, "schemaPath": "ProxyService" }

- In the response, locate the formAuthenticationPageTemplate field.

- You can then take your Base64 encoded HTML file and paste the value into the formAuthenticationPageTemplate field.

Example:

"formAuthenticationPageTemplate": "BASE 64 ENCODED HTML HERE",
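The encode-and-paste steps above can also be scripted. The following Python sketch is one possible way to do it, assuming the proxy service response has been loaded into a dictionary and that `formAuthenticationPageTemplate` sits under `settings`, as in the example response; the function name `embed_login_page` is hypothetical, and no request to the repository is made here.

```python
import base64
import copy
from typing import Any, Dict


def embed_login_page(proxy_body: Dict[str, Any], html: str) -> Dict[str, Any]:
    """Return a copy of the proxy service body with the Base64 encoded page set."""
    updated = copy.deepcopy(proxy_body)  # leave the original response untouched
    encoded = base64.b64encode(html.encode("utf-8")).decode("ascii")
    updated["settings"]["formAuthenticationPageTemplate"] = encoded
    return updated


# Trimmed-down stand-in for the GET /qrs/proxyservice/<id> response.
body = {"settings": {"formAuthenticationPageTemplate": ""}}
new_body = embed_login_page(body, "<html><body>Custom login</body></html>")
print(new_body["settings"]["formAuthenticationPageTemplate"])
```

Passing an empty string as `html` produces an empty template value, which matches the default state of the field.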

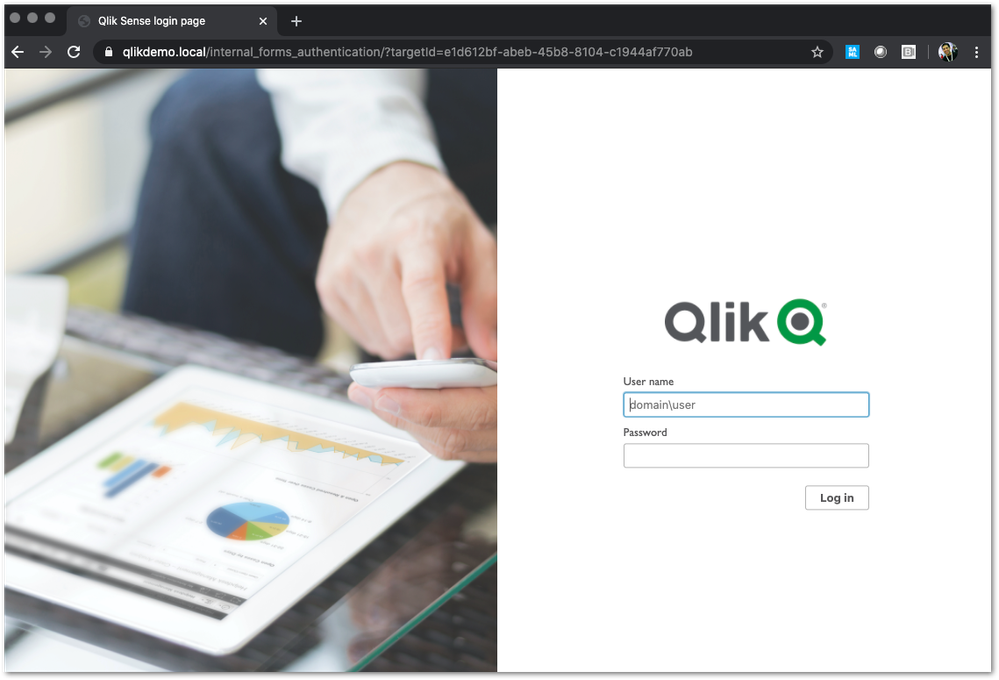

Once you have an updated body, you can use the PUT verb (with an updated modifiedDate) to PUT this body back into the repository. Once this is done, you should be able to go to your virtual proxy and see your new login page (very Qlik branded in this example):

If your login page does not work and you need to revert to the default, do a GET call on your proxy service, set formAuthenticationPageTemplate back to an empty string, and PUT the body back:

"formAuthenticationPageTemplate": ""

Environment:


-

Qlik Cloud: Content restricted or unavailable with Embedded Analytics User role ...

The Embedded Analytics User role is a role available in Qlik Cloud for use cases where your users should not access the Qlik Cloud hub directly. If t...

How to install and start using Qlik-CLI for SaaS editions of Qlik Sense

This video will demonstrate how to install and configure Qlik-CLI for SaaS editions of Qlik Sense.

Content:

- Requirements

- Installation method One

- Installation Method Two

- Enable the Completion Feature (optional, but useful)

- Use Qlik-CLI

- Related Content

- Transcript

Requirements

- The user has access to a Qlik Cloud tenant

- The user has a professional license assigned in the tenant

- The user has the developer role assigned (required for API access)

- An API key can be obtained

Installation method One

- Download Qlik-CLI from GitHub

- Copy the executable to a local directory

- Add the Qlik-CLI as an Environment Variable Path.

- Open your Windows Control Panel

- Navigate to System

- Open Advanced system settings in the leftmost menu

- Click Environment Variables

- Locate Path in the User variables

- Click Edit

- Click New

- Add the path to Qlik-CLI, for example C:\Tools\Qlik-QLI\

- Confirm with OK until all windows are closed

- Confirm the Qlik-CLI location path to verify that the installation completed successfully

- Open PowerShell

- Execute:

    Get-Command qlik

- Close PowerShell
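The same PATH check can be done from a script. This is a minimal Python sketch, assuming the executable is named `qlik` as in the command above; the helper name `find_cli` is hypothetical.

```python
import shutil
from typing import Optional


def find_cli(name: str = "qlik") -> Optional[str]:
    """Return the full path of the executable if it is on PATH, else None."""
    return shutil.which(name)


if __name__ == "__main__":
    location = find_cli()
    if location:
        print(f"qlik found at {location}")
    else:
        print("qlik was not found on PATH; re-check the Environment Variables step")
```

A new terminal session is usually needed before a changed Path value becomes visible to scripts.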

Installation Method Two

- Download and install Chocolatey as documented on chocolatey.org.

- Open PowerShell

- Execute:

    choco install qlik-cli

- This completes the installation.

Enable the Completion Feature (optional, but useful)

- Verify if a PowerShell profile exists or create a new one

- Open PowerShell

- Execute:

if ( -not (Test-Path $PROFILE) ) { echo "" > $PROFILE }

- Open the profile with the command notepad $PROFILE and add the following lines:

    qlik completion ps > "./qlik_completion.ps1" # Create a file containing the PowerShell completion.
    . ./qlik_completion.ps1 # Source the completion.

- Save it.

- Restart PowerShell

Use Qlik-CLI

Advanced and additional instructions as seen in the video can be found at Qlik-CLI on Qlik.Dev. Begin with Get Started.

Related Content

- How to install Qlik-CLI for Qlik Sense on Windows

- Qlik CLI support and compatibility

- Qlik Dev - Qlik-CLI

- Microsoft PowerShell Profile

- Generate your first API key

- Download Qlik-CLI from NuGet

- Download Qlik-CLI from GitHub

- How to use Qlik-CLI to Migrate Apps to Qlik Sense SaaS

Transcript

-

Advanced Qlik Sense System Monitoring

Content

- Chapters

- Environment overview

- Zabbix Server set-up

- Clone the Zabbix docker repository

- Setting up environment variables

- Re-link compose.yaml to our preferred compose file

- Logging in for the first time

- Zabbix Agent installation on Windows Server

- Zabbix Server Configuration

- Adding the first Server

- Importing Qlik Sense Enterprise for Windows templates

- Linking templates to hosts

- Engine Healthcheck Monitoring with HTTP Agent example

- Steps to configure a new HTTP Agent for QSE Health monitoring

- Defining the Virtual Proxy prefix for Zabbix HTTP Agent

- Resources & Links

Chapters

- 01:33 - Why use Zabbix

- 02:35 - Architecture for demo

- 03:41 - Downloading the installer

- 04:36 - Installing Zabbix Server

- 08:37 - Installing the Zabbix agent

- 12:17 - Applying Qlik specific templates

- 14:28 - Reviewing Qlik-specific Dashboards

- 16:49 - Configuration details

- 18:42 - How to create a dashboard

- 20:30 - Q&A: Can Zabbix run on Windows?

- 21:16 - Q&A: Is Zabbix supported by Qlik?

- 21:36 - Q&A: Can this monitor data capacity?

- 22:45 - Q&A: Can the Zabbix agents affect performance?

- 23:20 - Q&A: Can it monitor bookmark size?

- 24:02 - Q&A: Can this monitor amount of data being used?

- 24:19 - Q&A: Can this monitor sheets, and objects in apps?

- 24:49 - Q&A: Is there a similar tool for Cloud?

- 25:36 - Q&A: Would this work with QlikView?

- 26:11 - Q&A: Does this read the app data?

- 26:26 - Q&A: Can this help measure how long to open an app?

The information in this article and video is provided as is. If you need assistance with Zabbix, please engage with Zabbix directly.

Environment overview

The environment being demonstrated in this article consists of one Central Node and Two Worker Nodes. Worker 1 is a Consumption node where both Development and Production apps are allowed. Worker 2 is a dedicated Scheduler Worker node where all reloads will be directed. Central Node is acting as a Scheduler Manager.

Zabbix Server set-up

The Zabbix Monitoring appliance can be downloaded and configured in a number of ways, including direct install on a Linux server, OVF templates and self-hosting via Docker or Kubernetes. In this example we will be using Docker. We assume you have a working docker engine running on a server or your local machine. Docker Desktop is a great way to experiment with these images and evaluate whether Zabbix fits in your organisation.

Clone the Zabbix docker repository

This will include all necessary files to get started, including docker compose stack definitions supporting different base images, features and databases, such as MySQL or PostgreSQL. In our example, we will invoke one of the existing Docker compose files which will use PostgreSQL as our database engine.

Source: https://www.zabbix.com/documentation/current/en/manual/installation/containers#docker-compose

    git clone https://github.com/zabbix/zabbix-docker.git

Setting up environment variables

Here you can modify environment variables as needed, to change things like the Stack / Composition name, default ports and many other settings supported by Zabbix.

    cd ./zabbix-docker/env_vars
    ls -la # to list all hidden files (.dotfiles)
    nano .env_web

In this file, we will change the value for `ZBX_SERVER_NAME` to something else, like "Qlik STT - Monitoring". Save the changes and we are ready to start up Zabbix Server.

Re-link compose.yaml to our preferred compose file

The ./zabbix-docker folder contains many different docker compose templates, either using public images or locally built (latest and local tags).

You can run your chosen base image and database version with:

    docker compose -f compose-file.yaml up -d && docker compose logs -f --since 1m

Or unlink and re-create the symbolic link to compose.yaml, which enables managing the stack without specifying a compose file. Run the following commands inside the `zabbix-docker` folder to use the latest Ubuntu-based image with PostgreSQL database:

    unlink compose.yaml
    ln -s ./docker-compose_v3_ubuntu_pgsql_latest.yaml compose.yaml

- Start the Zabbix stack in detached mode with

    docker compose up -d

If you skip the `-d` flag, the Docker stack will start and your command line will be connected to the log output for all containers. The stack will stop if you exit this mode with CTRL+C or by closing the terminal session. Detached mode will run the stack in the background. You can still connect to the live log output, pull logs from history, manage the stack state or tear it down using `docker compose down`.

Pro tip: you will be using `docker compose` commands often when working with Docker. You can create an alias in most shells to a short-hand, such as "dc = docker compose". This will still accept all following verbs, such as `start|stop|restart|up|down|logs`, and all following flags. `docker compose up -d && docker compose logs -f --since 1m` would become `dc up -d && dc logs -f --since 1m`.

Logging in for the first time

- By default, the Zabbix Web GUI will be exposed on ports 80/443

- Using tools like Portainer makes Docker stack management easier

Use the IP address of your Docker host: http://IPADDRESS or https://IPADDRESS.

The Zabbix server stack can be hosted behind a Reverse Proxy.

The default username is `Admin` and the default password is `zabbix`. They are case sensitive.

Zabbix Agent installation on Windows Server

Download link: https://www.zabbix.com/download_agents. In this case, download the Windows installer MSI.

- Run the installer .msi

- Leave components unchanged

- Hostname = your machine hostname, we will have to use the same hostname when adding a Host in Zabbix Server.

- Zabbix server IP/DNS: IP address or DNS name of your Zabbix Server

- Agent listening port: the same port will be used when adding a Host in Zabbix Server.

- Enable "Add agent location to the PATH" for convenience in the command line

- Finish installation

Zabbix Server Configuration

Adding the first Server

After Agent is installed, in Zabbix go to Data Collection > Hosts and click on Create host in the top right-hand corner. Provide details like hostname and port to connect to the Agent, a display name and adjust any other parameters. You can join clusters with Host groups. This makes navigating Zabbix easier.

Fig 1: Adding a Host

Note: Remember to change how Zabbix Server will connect to the Agent on this node, either with IP address or DNS. Note that the default IP address points to the Zabbix Server.

Importing Qlik Sense Enterprise for Windows templates

In the Zabbix Web GUI, navigate to Data Collection > Templates and click on the Import button in the top right-hand corner. You can find the templates file at the following download link:

LINK to zabbix templates

Linking templates to hosts

Once you have added all your hosts to the Data Collection section, we can link all Qlik Sense servers in a cluster using the same templates. Zabbix will automatically populate metrics where these performance counters are found. From Data Collection > Hosts, select all your Qlik Sense servers and click on "Mass update". In the dialog that comes up, select the "Link templates" checkbox. Here you can link/replace/unlink templates across many servers in bulk.

Select "Link" and click on the "Select" button. This new panel will let us search for Template groups and make linking a bit easier. The Template Group we provided contains 4 individual templates.

Fig 2: Mass update panel

Fig 3: Search for Template Group

Once you Select and Update on the main panel, all selected Hosts will receive all items contained in the templates, and populate all graphs and Dashboards automatically.

To review your data, navigate to Monitoring > Hosts and click on the "Dashboards" or "Graphs" link for any node. Here is the default view when all Qlik Sense templates are linked to a node:

Fig 4: Host Dashboards

Fig 5: Repository Service metrics - Example

Engine Healthcheck Monitoring with HTTP Agent example

We will query the Engine Healthcheck end-point on QlikServer3 (our consumer node) and extract usage metrics by parsing the JSON output.

Steps to configure a new HTTP Agent for QSE Health monitoring

We will be using a new Anonymous Access Virtual Proxy set up on each node. This Virtual Proxy will only load balance to the node it represents, so that we extract meaningful metrics from that node's Engine and are not load-balanced by the Proxy service across multiple nodes. Otherwise, there would be no way to determine which node is responding without looking at DevTools in your browser. You can also use Header or Certificate authentication in the HTTP Agent configuration.

Once the Virtual Proxy is configured with Anonymous Only access, we can use this new prefix to configure our HTTP Agent in Zabbix.

Defining the Virtual Proxy prefix for Zabbix HTTP Agent

In the Zabbix web GUI, go to Data collection > Hosts. Click on any of your hosts. On the tabs at the top of the pop-up, click on Macros and click on the "Inherited and host macros" button. Once the list has loaded, search for the following Macro: {$VP_PREFIX}. This is set by default to "anon". Click on "Change", set the Macro value to your custom Virtual Proxy Prefix for Engine diagnostics, and click Update. The Virtual Proxy prefix will have to be changed on each node for the "Engine Performance via HTTP Agent" item to work. Alternatively, you can modify the Macro value for the Template. This will replicate the changes across all nodes associated with this Template.

Fig 6: Changing Host Macros from Inherited values

To make this change at the Template level, go to Data collection > Templates. Search for the "Engine Performance via HTTP Agent" and click on the Template. Navigate to the Macros tab in the pop-up and add your Virtual Proxy Prefix here to make this the new default for your environment. No further changes to Node configuration are required at this point.

Fig 7: Changing Macros at the Template level

The Zabbix templates provided in this article contain the following Engine metric JSONParsers:

- Memory: Allocated, Committed, Free, Total Physical

- Calls, Selections

- Saturation status (true/false)

- Sessions: Active/Total

- Users: Active/Total

These are the same performance counters that you can see in the Engine Health section in QMC.
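To illustrate what the JSON parsers listed above extract, here is a minimal Python sketch that flattens a healthcheck response into named metrics. The sample payload, its key names (mem, apps, session, users, saturated), the metric names, and the host name are assumptions for illustration and are not copied from the templates; compare them with the response returned by your own node. The URL helper assumes the healthcheck is reachable at engine/healthcheck behind the Virtual Proxy prefix held in {$VP_PREFIX}.

```python
import json

# Illustrative payload only: the key names are assumed, verify them on your node.
SAMPLE_RESPONSE = """
{
  "mem": {"committed": 512.5, "allocated": 300.25, "free": 7000.0, "total_physical": 16384.0},
  "apps": {"calls": 42, "selections": 7},
  "session": {"active": 2, "total": 5},
  "users": {"active": 1, "total": 3},
  "saturated": false
}
"""

# Hypothetical metric name -> sequence of keys to follow inside the JSON document.
METRIC_PATHS = {
    "memory.allocated": ("mem", "allocated"),
    "memory.committed": ("mem", "committed"),
    "memory.free": ("mem", "free"),
    "memory.total_physical": ("mem", "total_physical"),
    "calls": ("apps", "calls"),
    "selections": ("apps", "selections"),
    "saturated": ("saturated",),
    "sessions.active": ("session", "active"),
    "sessions.total": ("session", "total"),
    "users.active": ("users", "active"),
    "users.total": ("users", "total"),
}


def healthcheck_url(host: str, vp_prefix: str) -> str:
    """Join the host and the Virtual Proxy prefix with the healthcheck path."""
    return f"https://{host}/{vp_prefix.strip('/')}/engine/healthcheck"


def extract_metrics(raw_json: str) -> dict:
    """Return one value per metric; a missing key yields None instead of an error."""
    document = json.loads(raw_json)
    metrics = {}
    for name, path in METRIC_PATHS.items():
        value = document
        for key in path:
            value = value.get(key) if isinstance(value, dict) else None
        metrics[name] = value
    return metrics


if __name__ == "__main__":
    # "qlikserver3.domain.local" is a hypothetical host name.
    print(healthcheck_url("qlikserver3.domain.local", "anon"))
    for name, value in extract_metrics(SAMPLE_RESPONSE).items():
        print(f"{name}: {value}")
```

Returning None for missing keys keeps the sketch usable across Engine versions that may not expose every counter.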

Stay tuned for new releases of the Monitoring Templates. Feel free to customise these to your needs and share with the Community.

Resources & Links

- Zabbix home page

- Zabbix Installation from containers documentation

- Zabbix Docker repository on GitHub

- Install Docker Engine on Ubuntu

Environment

- Qlik Sense Enterprise on Windows

-

Qlik NPrinting SAML authentication with Azure

This article explains how to implement SAML for NPrinting with Azure as the IdP.

Environments:

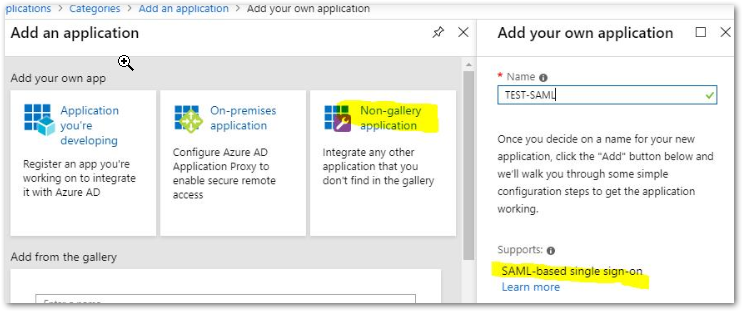

To implement Azure SAML in NPrinting, the following needs to be done:

- Create your own application in Azure from this menu and choose a name for it.

- Generate a Metadata XML file

Federation Metadata XML: Download

- Configure SAML in NPrinting and upload your metadata file

See instructions on the following link: Configuring SAML

Below is an example of a working metadata file with only the needed fields. The simplest approach is to copy the corresponding elements (Azure EntityID, certificate for signing, SingleSignOnService for HTTP-POST and HTTP-Redirect) from the IdP metadata file downloaded from Azure and paste them into the corresponding parts of the code.

Then save the file as .xml and upload it to NPrinting:

<?xml version="1.0"?>
<EntityDescriptor xmlns="urn:oasis:names:tc:SAML:2.0:metadata" entityID="https://sts.windows.net/b26e23cf-787a-40e8-9d17-f0c9f9ad0821/">
  <IDPSSODescriptor xmlns:ds="http://www.w3.org/2000/09/xmldsig#" protocolSupportEnumeration="urn:oasis:names:tc:SAML:2.0:protocol">
    <KeyDescriptor use="signing">
      <ds:KeyInfo xmlns:ds="http://www.w3.org/2000/09/xmldsig#">
        <ds:X509Data>
          <ds:X509Certificate>MIIC8DCCAdigAwIBAgIQFUUu6ZQHg5FJ...Ud8tf9A/4A6+2SZm34gf8gcVPTXT/a</ds:X509Certificate>
        </ds:X509Data>
      </ds:KeyInfo>
    </KeyDescriptor>
    <SingleSignOnService Binding="urn:oasis:names:tc:SAML:2.0:bindings:HTTP-Redirect" Location="https://login.microsoftonline.com/b26e23cf-787a-40e8-9d17-f0c9f9ad0821/saml2"/>
    <SingleSignOnService Binding="urn:oasis:names:tc:SAML:2.0:bindings:HTTP-POST" Location="https://login.microsoftonline.com/b26e23cf-787a-40e8-9d17-f0c9f9ad0821/saml2"/>
  </IDPSSODescriptor>
</EntityDescriptor>
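Copying the elements by hand is error-prone, so the same reduction can be sketched in Python with the standard library. This is only an illustration of the copy-and-paste procedure described above, not a Qlik-provided tool: the function name is hypothetical, and it assumes the downloaded Azure file has a single IDPSSODescriptor with at least one signing KeyDescriptor. It keeps the entityID, the first signing certificate, and the HTTP-Redirect and HTTP-POST SingleSignOnService entries.

```python
import sys
import xml.etree.ElementTree as ET

MD = "urn:oasis:names:tc:SAML:2.0:metadata"
DS = "http://www.w3.org/2000/09/xmldsig#"
NS = {"md": MD, "ds": DS}
WANTED_BINDINGS = (
    "urn:oasis:names:tc:SAML:2.0:bindings:HTTP-Redirect",
    "urn:oasis:names:tc:SAML:2.0:bindings:HTTP-POST",
)

# Serialize with the metadata namespace as default and "ds" for signatures.
ET.register_namespace("", MD)
ET.register_namespace("ds", DS)


def minimal_idp_metadata(full_metadata_xml: str) -> str:
    """Build a reduced metadata document from the full IdP metadata (hypothetical helper)."""
    source = ET.fromstring(full_metadata_xml)
    entity_id = source.get("entityID")
    idp = source.find("md:IDPSSODescriptor", NS)
    if entity_id is None or idp is None:
        raise ValueError("not an IdP metadata document")
    # Keep only the first signing certificate.
    cert = idp.find(
        "md:KeyDescriptor[@use='signing']/ds:KeyInfo/ds:X509Data/ds:X509Certificate", NS
    )
    if cert is None or not cert.text:
        raise ValueError("no signing certificate found")

    root = ET.Element(f"{{{MD}}}EntityDescriptor", {"entityID": entity_id})
    out_idp = ET.SubElement(
        root,
        f"{{{MD}}}IDPSSODescriptor",
        {"protocolSupportEnumeration": "urn:oasis:names:tc:SAML:2.0:protocol"},
    )
    key = ET.SubElement(out_idp, f"{{{MD}}}KeyDescriptor", {"use": "signing"})
    key_info = ET.SubElement(key, f"{{{DS}}}KeyInfo")
    x509_data = ET.SubElement(key_info, f"{{{DS}}}X509Data")
    ET.SubElement(x509_data, f"{{{DS}}}X509Certificate").text = cert.text.strip()

    # Copy only the two sign-on bindings used in the example file.
    for sso in idp.findall("md:SingleSignOnService", NS):
        if sso.get("Binding") in WANTED_BINDINGS:
            ET.SubElement(
                out_idp,
                f"{{{MD}}}SingleSignOnService",
                {"Binding": sso.get("Binding"), "Location": sso.get("Location", "")},
            )
    return '<?xml version="1.0"?>\n' + ET.tostring(root, encoding="unicode")


if __name__ == "__main__":
    # Usage: python reduce_metadata.py <downloaded Azure metadata file>
    with open(sys.argv[1], encoding="utf-8") as handle:
        print(minimal_idp_metadata(handle.read()))
```

Review the output against the example file before uploading it, since NPrinting may still reject elements this sketch does not anticipate.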

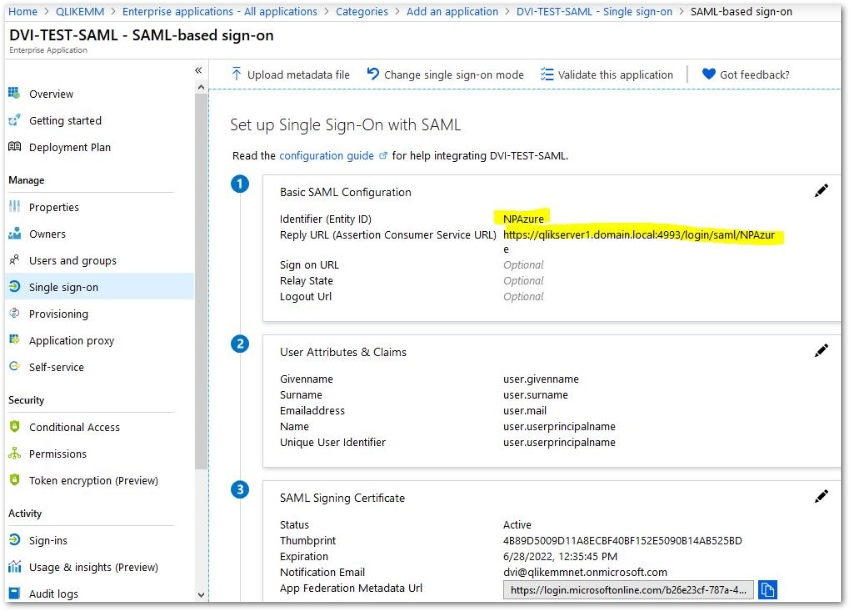

Alternatively, if you upload the Azure metadata file as is to NPrinting, check the Webengine log file to identify the unwanted tags and remove them from the Azure metadata file (as many tags are not supported by NPrinting, this may take some time).

- In Azure, configure the Enterprise application created in step 1

The two fields below must be filled in on the Azure side; the others are optional.

Identifier (Entity ID): The Entity ID set up in NPrinting in step 3

Reply URL (Assertion Consumer Service URL): The correct value can be found in the SP metadata file downloaded from NPrinting

- Make sure you give the correct permissions to the Azure AD users you want to authorize to connect with SAML

The setup is now complete and SAML authentication should work.

Potential Troubleshooting Steps

- Remember that the user who logs in must already exist in NPrinting, identified by either their email address or DOMAIN\Username (based on the settings in step 3).

If the authentication fails, the error will be logged in the web engine logs in C:\Programdata\Nprinting\Logs\.

- If issues are found with the implementation, the following may be related, among other reasons:

- The email address attribute needs to be the full URL path, not just “emailaddress”

- The XML from AD/idp needs to have reference to only one certificate

- Irrelevant information in the XML from the IdP needs to be removed, as NPrinting does not support it and it can cause errors.


-

How to configure Qlik Sense Enterprise on-prem with Azure Entra ID as an Identit...

This article documents the basic steps to configure the SAML integration between Qlik Sense Enterprise on Windows (Client-Managed) and Microsoft Entra ID. By connecting these two platforms, administrators can control which users are allowed to access Qlik Sense directly from Entra ID, provide users with seamless single sign-on using their Microsoft accounts, and manage identities from a centralized location.

If you are looking for instructions for Qlik Cloud Analytics, see How To: Configure Qlik Sense Enterprise SaaS to use Azure AD as an IdP.

Content

- Prerequisites

- Step One: Adding the Qlik Sense Enterprise on Windows app to your ENTRA ID.

- Step Two: Assigning users to your Qlik Sense Enterprise Client-Managed app in ENTRA ID.

- Step Three: Configuring Microsoft Entra SSO

- Step Four: Configuring SSO Qlik Sense Enterprise client-managed virtual proxy

- Step Five: Testing SSO.

Prerequisites

To get started, you need the following items:

- A Microsoft Entra subscription.

- A valid Qlik Sense Enterprise on Windows license.

- Along with Cloud Application Administrator, an Application Administrator can also add or manage applications in Microsoft Entra ID. For more information, see Azure built-in roles.

Step One: Adding the Qlik Sense Enterprise on Windows app to your ENTRA ID.

- Sign in to the Microsoft Entra admin center as at least a Cloud Application Administrator.

- Go to Entra ID.

- Select Enterprise Applications under the Manage part.

- Click New Application.

- In the search box, type Qlik Sense Enterprise Client-Managed and select the result.

- Click Create and wait for the app to be successfully added to your application list.

Step Two: Assigning users to your Qlik Sense Enterprise Client-Managed app in ENTRA ID.

- Open the created Qlik Sense Enterprise Client-Managed app, click on Users and groups on the left side.

- Click + Add user/group.

- Click None Selected under the Users.

- Select the user or multiple users you want to permit to access Qlik Sense, then click Select.

- Click Assign.

- Click Refresh and verify that the target user is added with the Role assigned.

Step Three: Configuring Microsoft Entra SSO

- Open the created Qlik Sense Enterprise Client-Managed app, and click Single sign-on on the left side.

- On the Select a Single Sign-on method page, select SAML.

- On the Set up Single Sign-On with SAML page, select the pencil icon for Basic SAML Configuration to edit the settings.

- In the Identifier (Entity ID) textbox, type a URL using the pattern: https://<Fully Qualified Domain Name>.qliksense.com

This example uses the Qlik Sense Fully Qualified Domain Name https://qlikserver1.domain.local

- In the Reply URL textbox, type a URL using the pattern: https://<Fully Qualified Domain Name>:443{/virtualproxyprefix}/samlauthn/

This example uses https://qlikserver1.domain.local:443/entrasaml/samlauthn/

- In the Sign on URL textbox, type a URL using the pattern: https://<Fully Qualified Domain Name>:443{/virtualproxyprefix}/hub

This example uses https://qlikserver1.domain.local:443/entrasaml/hub

- Click Save.

- Go to the Set up Single Sign-On with SAML page.

- In the SAML Certificate section, find Federation Metadata XML, click Download, and save the file on your computer.
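The Reply URL and Sign on URL patterns from this step can be sketched as a small Python helper, which may be convenient when several virtual proxies need to be registered. The function name and return keys are hypothetical; the patterns themselves are the ones given above.

```python
def entra_saml_urls(fqdn: str, virtual_proxy_prefix: str, port: int = 443) -> dict:
    """Build the Reply URL and Sign on URL for a SAML virtual proxy.

    Pattern: https://<FQDN>:443{/virtualproxyprefix}/samlauthn/ and .../hub
    """
    prefix = virtual_proxy_prefix.strip("/")
    base = f"https://{fqdn}:{port}"
    if prefix:
        base = f"{base}/{prefix}"
    return {
        "reply_url": f"{base}/samlauthn/",
        "sign_on_url": f"{base}/hub",
    }


if __name__ == "__main__":
    # Values taken from the example in this article.
    for name, url in entra_saml_urls("qlikserver1.domain.local", "entrasaml").items():
        print(f"{name}: {url}")
```

Note the trailing slash on the Reply URL, which is part of the pattern and easy to drop when typing the value by hand.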

Step Four: Configuring SSO Qlik Sense Enterprise client-managed virtual proxy

All the following steps are taken in Qlik Sense Enterprise on Windows.

- Open the Qlik Sense Management Console (QMC) as a root admin user or a user who has permission to create a virtual proxy.

- Go to Virtual Proxies and click Create new (See Creating a virtual proxy for details).

- If not already visible, activate the following Properties in the right-side menu: Identification, Authentication, Load Balancing, and Advanced.

- Configure IDENTIFICATION as follows:

- Description: Describe the purpose of the virtual proxy.

- Prefix: Enter a unique name not yet used by any other virtual proxies; this value will be a part of the login URL for Qlik Sense.

- Session inactivity timeout (minutes): Set how long a session can remain idle before it expires; once this limit is reached, the session becomes invalid and the user is automatically signed out.

- Session cookie header name: Set a unique cookie name storing the session identifier after successful authentication.

- Configure AUTHENTICATION as follows:

- Anonymous access mode: This controls whether unauthenticated users can access Qlik Sense through this virtual proxy. The default setting is No anonymous user, which restricts access to authenticated users only. Leave it at the default.

- Authentication method: Select SAML from the dropdown list, which will surface additional settings.

- SAML host URI field: Enter the hostname that users will use to access Qlik Sense through this SAML proxy. This value should match the public URI of your Qlik Sense server.

- SAML entity ID: Use the same value configured in the SAML host URI field.

- SAML IdP metadata: Upload the identity provider metadata file previously downloaded from Microsoft Entra ID. Click Choose File and select the XML metadata file.

- SAML attribute user ID: Enter the attribute name or schema reference for the SAML attribute representing the UserID Microsoft Entra ID sends to the Qlik Sense server. Schema reference information is available in the Azure app screens post configuration. To use the name attribute, enter http://schemas.xmlsoap.org/ws/2005/05/identity/claims/name.

- SAML attribute for user directory: Enter the value for the user directory that's attached to users when they authenticate to Qlik Sense server through Microsoft Entra ID. Hardcoded values must be surrounded by square brackets []. To use an attribute sent in the Microsoft Entra SAML assertion, enter the name of the attribute in this text box without square brackets. In this example, we are using [domain].

- SAML signing algorithm: Defines the certificate signing algorithm used by the service provider (Qlik Sense server). If your server uses a trusted certificate generated with Microsoft Enhanced RSA and AES Cryptographic Provider, consider changing the algorithm to SHA-256. In this example, SHA-1 is used.

- SAML attribute mapping: Allows additional attributes (such as groups) to be passed to Qlik Sense for use in security rules. In this example, no additional attribute mapping is configured.

- Configure LOAD BALANCING as follows:

- Click Add new server node.

- Then select the engine node (or nodes) that Qlik Sense should route user sessions to for load balancing and click Add to confirm your selection.

- Configure ADVANCED as follows:

- Host allow list: Define all hostnames that are permitted when connecting to the Qlik Sense server. Enter the hostname users will use to access the server.

This value should match the SAML host URI, but without the https:// prefix.

- Leave the other settings at their default values


- Click Apply.

The Proxy will be restarted. Click OK to confirm.

- After the restart, locate the Associated items section in the menu and click Proxies.

- Select the proxy node that will handle this virtual proxy connection in Qlik Sense, then click Link. Once the connection is established, the proxy node will appear in the list of associated proxies.

- A Refresh QMC prompt appears. Click to confirm. Once the page reloads, open the Virtual proxies section and locate the newly created SAML virtual proxy in the list.

- Select the entry, and the Download SP metadata button at the bottom of the screen will become active. Click this button to download and save the service provider metadata file for Qlik Sense.

- Open the SP metadata file and review the entityID and AssertionConsumerService entries. These correspond to the Identifier and Reply URL fields in the Microsoft Entra ID application configuration. If the values in Entra ID do not match those in the metadata file, update the Domain and URLs section of the application configuration so they are aligned with the settings from Qlik Sense.
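The comparison in the last step can also be done programmatically. The following Python sketch reads the entityID and the AssertionConsumerService locations from an SP metadata document and reports differences from the values entered in Entra ID. The sample metadata is illustrative and simplified, not an actual Qlik Sense export, and the function names are hypothetical; the element and attribute names are those of standard SAML 2.0 metadata.

```python
import xml.etree.ElementTree as ET

NS = {"md": "urn:oasis:names:tc:SAML:2.0:metadata"}

# Illustrative, simplified SP metadata (not an actual Qlik Sense export).
SAMPLE_SP_METADATA = """<EntityDescriptor xmlns="urn:oasis:names:tc:SAML:2.0:metadata" entityID="https://qlikserver1.domain.local">
  <SPSSODescriptor protocolSupportEnumeration="urn:oasis:names:tc:SAML:2.0:protocol">
    <AssertionConsumerService Binding="urn:oasis:names:tc:SAML:2.0:bindings:HTTP-POST" Location="https://qlikserver1.domain.local:443/entrasaml/samlauthn/" index="0"/>
  </SPSSODescriptor>
</EntityDescriptor>"""


def read_sp_metadata(xml_text: str) -> dict:
    """Return the entityID and all AssertionConsumerService locations."""
    root = ET.fromstring(xml_text)
    locations = [
        element.get("Location")
        for element in root.findall("md:SPSSODescriptor/md:AssertionConsumerService", NS)
    ]
    return {"entity_id": root.get("entityID"), "reply_urls": locations}


def find_mismatches(sp: dict, entra_identifier: str, entra_reply_url: str) -> list:
    """List human-readable differences between the SP metadata and Entra ID."""
    problems = []
    if sp["entity_id"] != entra_identifier:
        problems.append(f"Identifier should be {sp['entity_id']!r}")
    if entra_reply_url not in sp["reply_urls"]:
        problems.append(f"Reply URL should be one of {sp['reply_urls']!r}")
    return problems


if __name__ == "__main__":
    sp = read_sp_metadata(SAMPLE_SP_METADATA)
    issues = find_mismatches(
        sp,
        "https://qlikserver1.domain.local",
        "https://qlikserver1.domain.local:443/entrasaml/samlauthn/",
    )
    print(issues or "Entra ID values match the SP metadata")
```

The comparison is an exact string match on purpose, so that differences such as a missing trailing slash or port are reported rather than hidden.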

Step Five: Testing SSO.

You can test the single sign-on setup either from the Microsoft Entra ID portal by selecting Test, or by navigating directly to the Qlik Sense sign-on URL and starting the login process from there.

-

How to setup Key Pair Authentication in Snowflake and How to configure this enha...

Snowflake supports using key pair authentication for enhanced authentication security as an alternative to basic authentication (i.e. username and password). This article covers end-to-end setup for Key Pair Authentication in Snowflake and Qlik Replicate.

This authentication method requires, at minimum, a 2048-bit RSA key pair. You can generate the Privacy Enhanced Mail (i.e. PEM) private-public key pair using OpenSSL.

Qlik Replicate uses the ODBC driver to connect to Snowflake, and ODBC is one of the supported clients for key pair authentication.

Let's assume you have decided to use key pair authentication for the Snowflake user that Qlik Replicate uses to connect to Snowflake. Follow the process below to convert that user's authentication from basic to key pair.

When creating a Key Pair for Qlik Stitch Snowflake 'Destination' connections, you must set up a nocrypt private key before creating the public key.

Step 1: Generate the Private Key

You can generate either an encrypted version of the private key or an unencrypted version of the private key.

To generate an unencrypted version use the following command in the command prompt:

$ openssl genrsa 2048|openssl pkcs8 -topk8 -inform PEM -out rsa_key.p8 -nocrypt

To generate an encrypted version (which omits -nocrypt) use:

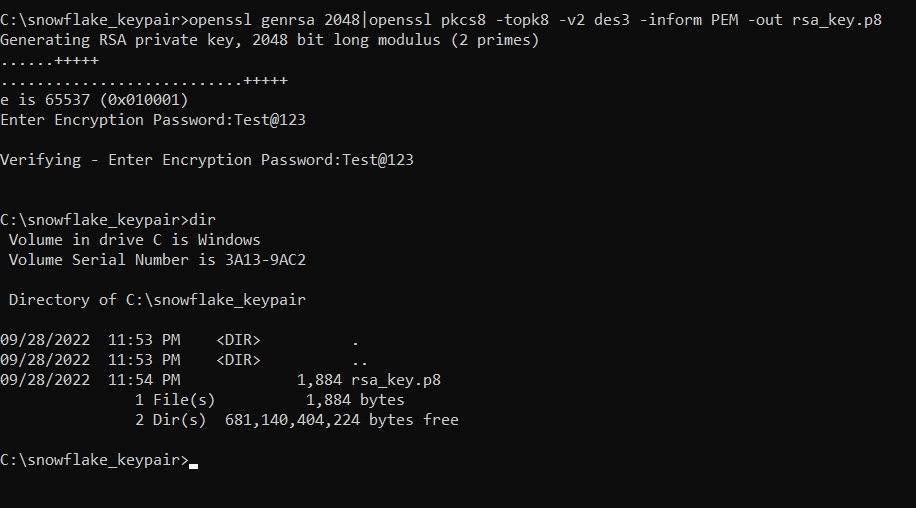

$ openssl genrsa 2048|openssl pkcs8 -topk8 -v2 des3 -inform PEM -out rsa_key.p8

In our example case, we generate an encrypted version of a private key.

We:

- Open a command prompt

- Run $ openssl genrsa 2048|openssl pkcs8 -topk8 -v2 des3 -inform PEM -out rsa_key.p8

- Enter our password

- And store the password to use in Qlik Replicate.

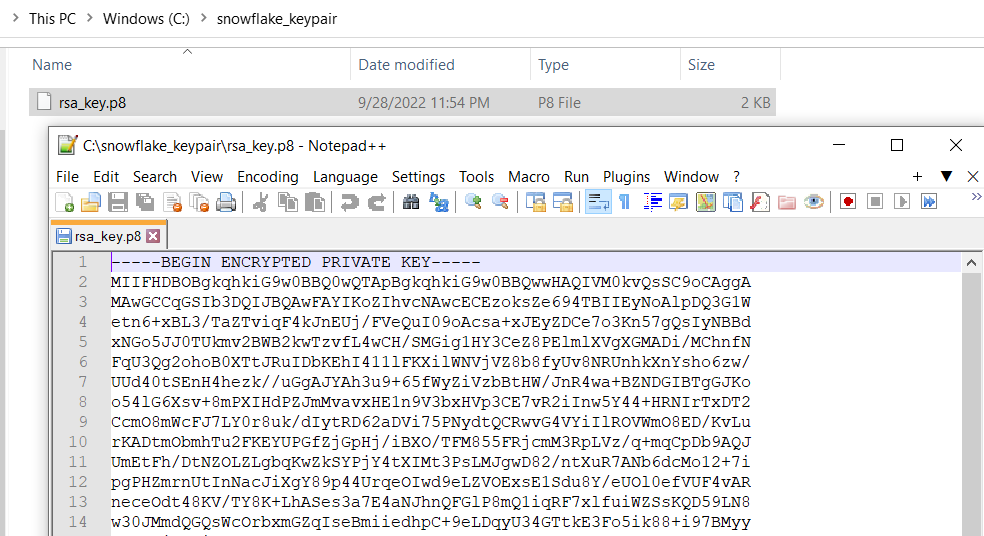

This generates a private key in PEM format:

Step 2: Generate a Public Key

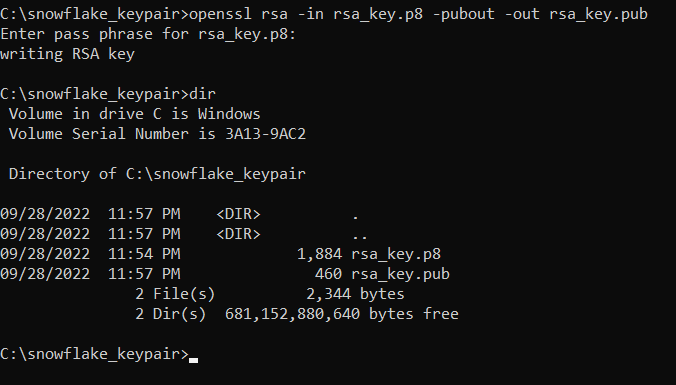

From the command line, we generate the public key by referencing the private key. The following command assumes the private key is encrypted and contained in the file named rsa_key.p8.

When it requests a passphrase, use the same password that we generated in step 1.

openssl rsa -in rsa_key.p8 -pubout -out rsa_key.pub

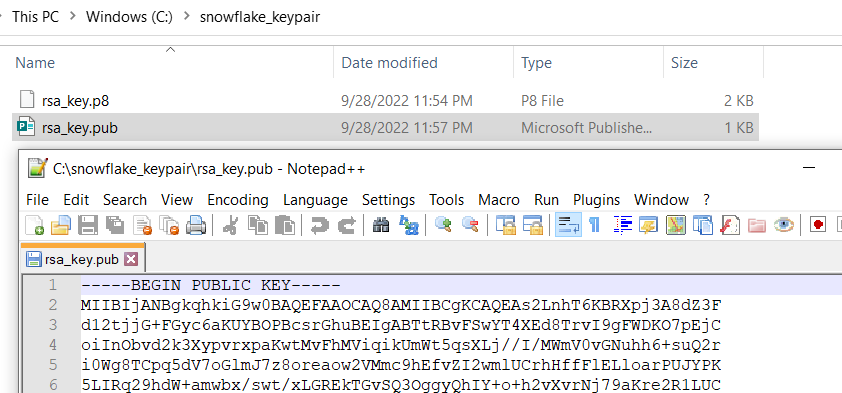

This command generates the public key in PEM format:

Step 3: Store the Private and Public Keys Securely

Copy the public and private key files to a local directory for storage and record the path to the files. Note that the private key is stored using the PKCS#8 (Public Key Cryptography Standards) format and is encrypted using the passphrase you specified in the previous step.

However, the file should still be protected from unauthorized access using the file permission mechanism provided by your operating system. It is your responsibility to secure the file when it is not being used.

Step 4: Assign the Public Key to a Snowflake User

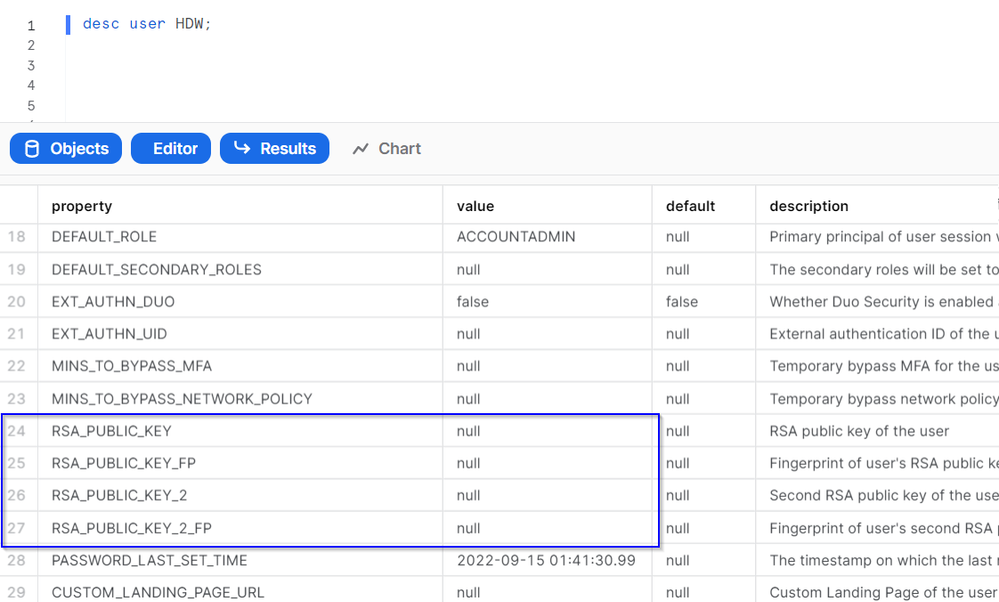

Describe the user to see current information. We can see that there is no public key assigned to the HDW user. Therefore, the user needs to use basic authentication.

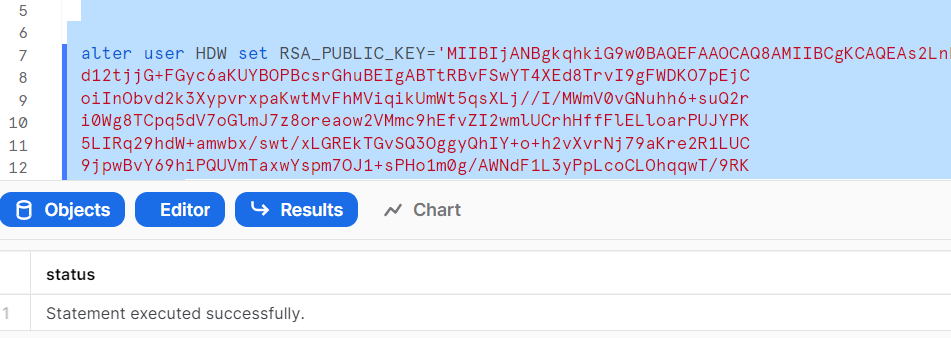

Execute an ALTER USER command to assign the public key to a Snowflake user.
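As a hedged illustration of this step, the Python sketch below turns the contents of rsa_key.pub into an ALTER USER statement. It assumes the statement takes the form ALTER USER <user> SET RSA_PUBLIC_KEY='<key>' with the PEM delimiter lines removed; the helper names are hypothetical, and HDW is the example user from this article. Confirm the exact syntax in the Snowflake documentation before running the generated statement.

```python
def public_key_body(pem_text: str) -> str:
    """Return the base64 body of a PEM file without the BEGIN/END delimiter lines."""
    lines = (line.strip() for line in pem_text.strip().splitlines())
    return "".join(line for line in lines if line and not line.startswith("-----"))


def alter_user_statement(user: str, pem_text: str) -> str:
    """Build the statement that assigns the public key to a user.

    The user name is inserted as-is, so only pass a trusted identifier.
    """
    return f"ALTER USER {user} SET RSA_PUBLIC_KEY='{public_key_body(pem_text)}';"


if __name__ == "__main__":
    # rsa_key.pub is the file generated in Step 2.
    with open("rsa_key.pub", encoding="ascii") as handle:
        print(alter_user_statement("HDW", handle.read()))
```

Printing the statement rather than executing it keeps the sketch independent of any particular Snowflake client.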

Step 5: Verify the User’s Public Key Fingerprint

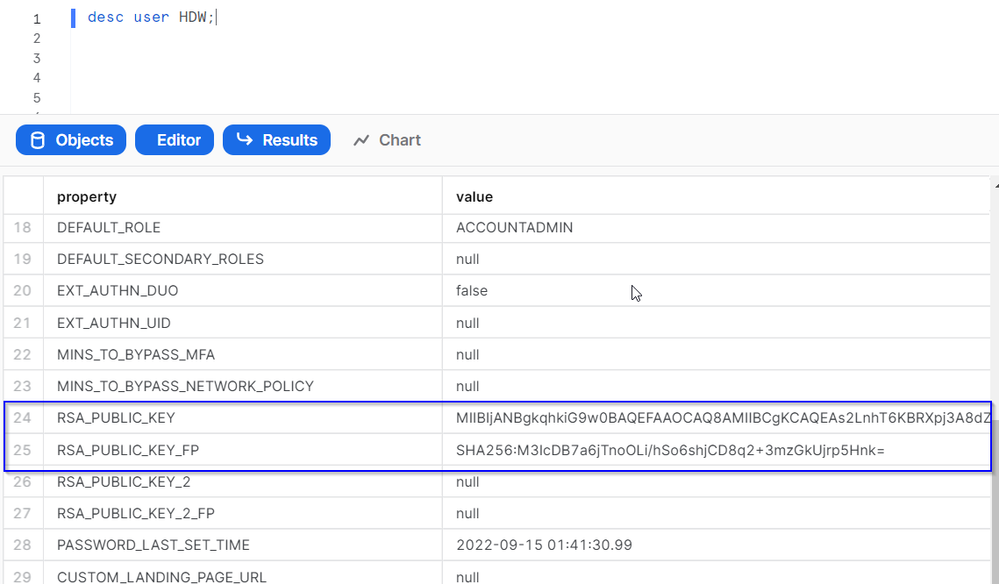

Execute a DESCRIBE USER command to verify the user’s public key.
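To compare the fingerprint reported by DESCRIBE USER with the local key file, the fingerprint can be recomputed locally. The sketch below assumes the fingerprint is the SHA-256 digest of the DER-encoded public key, base64-encoded and prefixed with "SHA256:"; treat that format as an assumption to confirm against your own DESCRIBE USER output. The function name is hypothetical.

```python
import base64
import hashlib


def public_key_fingerprint(pem_text: str) -> str:
    """Compute 'SHA256:<base64 digest>' from a PEM-encoded public key."""
    stripped = (line.strip() for line in pem_text.strip().splitlines())
    body = "".join(line for line in stripped if line and not line.startswith("-----"))
    der_bytes = base64.b64decode(body)  # the PEM body is base64-encoded DER
    digest = hashlib.sha256(der_bytes).digest()
    return "SHA256:" + base64.b64encode(digest).decode("ascii")


if __name__ == "__main__":
    # rsa_key.pub is the file generated in Step 2.
    with open("rsa_key.pub", encoding="ascii") as handle:
        print(public_key_fingerprint(handle.read()))
```

If the printed value differs from the one shown for the user, the public key assigned in Step 4 is probably not the one stored in rsa_key.pub.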

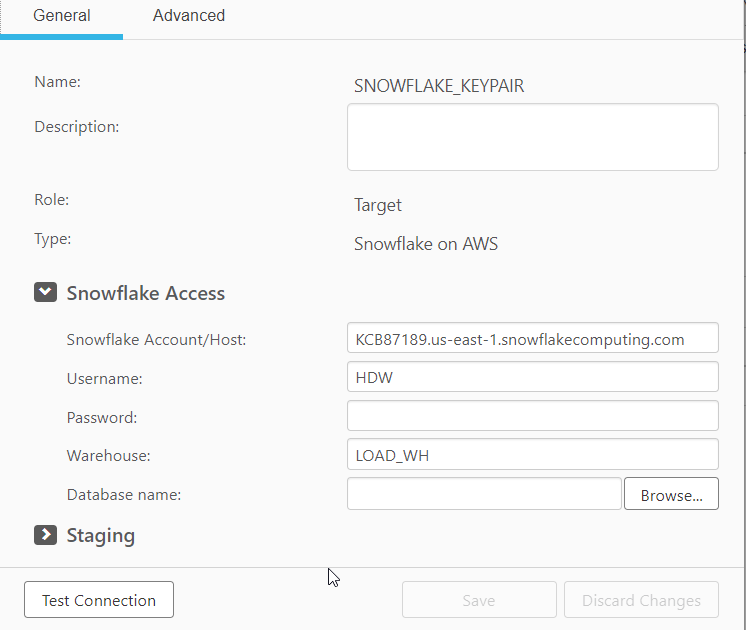

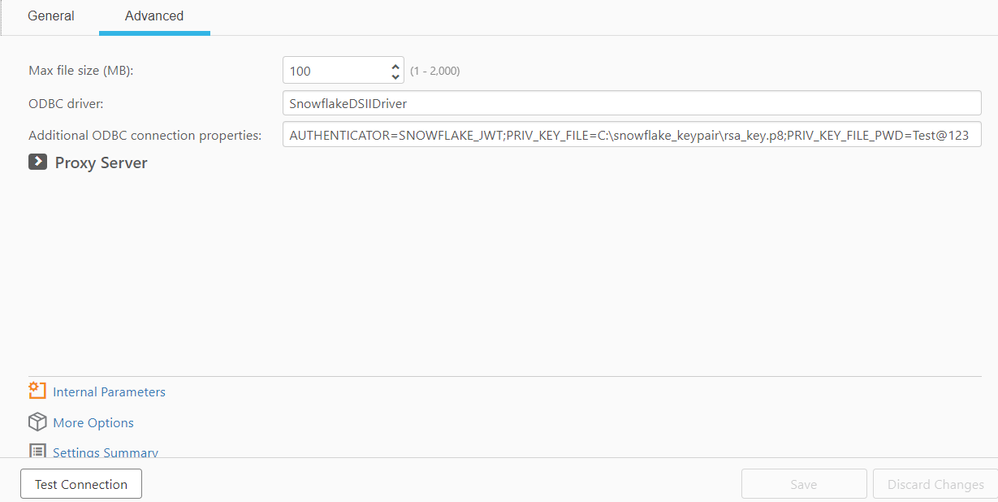

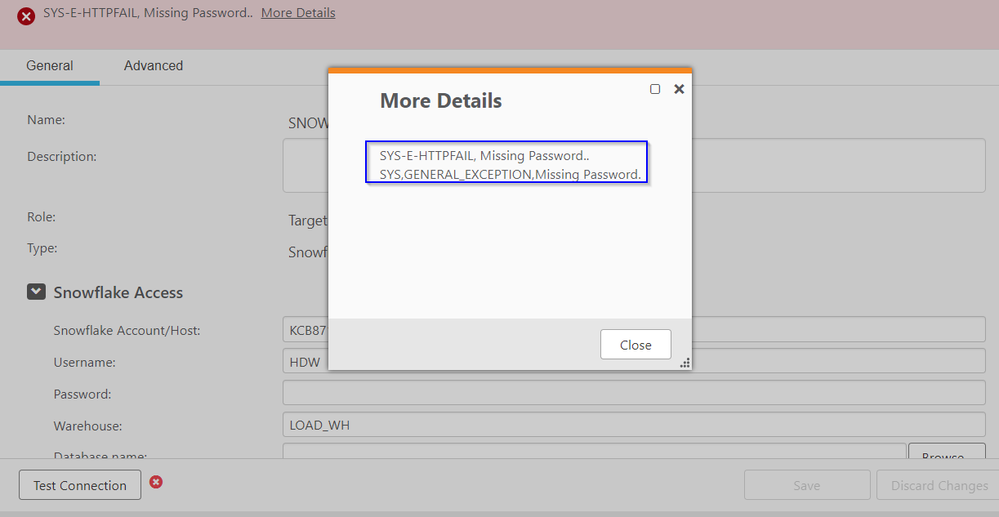

Step 6: Configure Qlik Replicate to Use Snowflake Key Pair Authentication

- Enter the Snowflake server name and the username for which we configured key pair authentication.

- Set the parameters below to use SNOWFLAKE_JWT as the authentication method, passing the private key and password from step 1.

- If you test the connection or browse the database at this point, you will see a missing password error. The password field in the endpoint UI cannot be left empty, yet no real password should be entered, as we are using key pair authentication.

- You can key in a dummy password, such as dummy, dummy123, etc., in the UI to eliminate the missing password error.

Finally, browse the database and do the test connection.
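The parameters referred to in this step are not reproduced in the text, so the following Python sketch is an assumption rather than a copy of the article's settings. It builds a semicolon-separated property string from the Snowflake ODBC driver parameters AUTHENTICATOR, PRIV_KEY_FILE, and PRIV_KEY_FILE_PWD; where this string is entered in the Qlik Replicate endpoint, and the example path, are likewise assumptions to verify in your environment.

```python
def jwt_connection_properties(private_key_path: str, passphrase: str) -> str:
    """Build 'NAME=value;...' ODBC properties for key pair authentication.

    Assumed parameter names (Snowflake ODBC driver): AUTHENTICATOR,
    PRIV_KEY_FILE, PRIV_KEY_FILE_PWD.
    """
    properties = {
        "AUTHENTICATOR": "SNOWFLAKE_JWT",
        "PRIV_KEY_FILE": private_key_path,
        "PRIV_KEY_FILE_PWD": passphrase,
    }
    for name, value in properties.items():
        if ";" in value:
            # A semicolon would be read as a separator and split the value.
            raise ValueError(f"{name} must not contain ';'")
    return ";".join(f"{name}={value}" for name, value in properties.items())


if __name__ == "__main__":
    # Hypothetical path; use the location chosen in Step 3.
    print(jwt_connection_properties(r"C:\keys\rsa_key.p8", "passphrase-from-step-1"))
```

For an unencrypted key generated with -nocrypt, there is no passphrase to pass, so the last property would not apply.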

Environment

Qlik Replicate

Snowflake Target

-

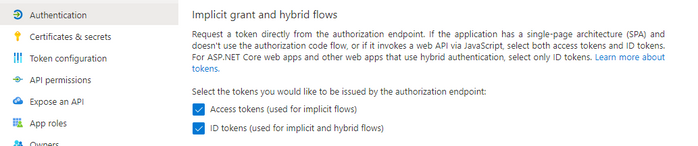

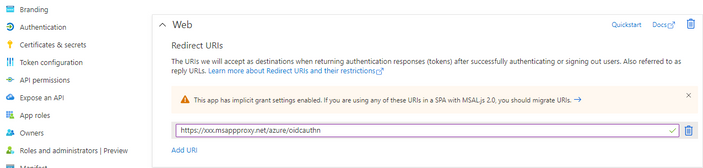

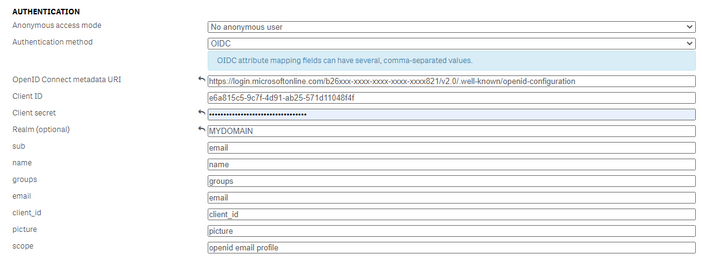

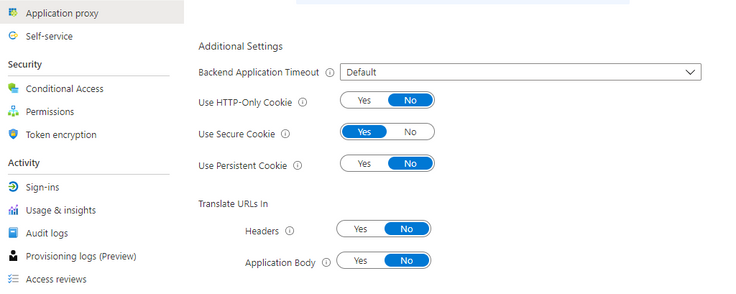

Qlik Sense for Windows: How to configure OIDC with Azure AD

This is a basic guide on how to configure a Qlik Sense virtual proxy with OIDC authentication. This customization is provided as is. Qlik Support ca...

-

Qlik Cloud detailed consumption report app fails to reload with error

After distributing the Consumption Report app from Qlik Cloud Administration > Settings, scheduled reloads of the app fail with the following error:

Error: $(MUST_INCLUDE= [lib://snowflake_external_share:DataFiles/Capacity_Usage_Script_PROD.txt] cannot access the local file system in current script mode. Try including with LIB path.

Environment

- Qlik Cloud Analytics

- Qlik Talend Cloud

Resolution

The Consumption Report app isn't meant to be reloaded. Instead, distribute the app from Qlik Cloud Administration > Settings each day. Refer to Distributing detailed consumption reports for details.

Redistribute the app to obtain the most recent data. Apps stored on your tenant exist as separate instances and are not replaced by newer ones.

On the Talend side, refer to Distributing Data Capacity Reporting App for Talend Management Console for details on how to set up capacity reporting.

To automatically redistribute the app, see Automate deployment of the Capacity consumption app with Qlik Automate.

Cause

The Consumption Report app is meant to be distributed from Qlik Cloud Administration > Settings and not updated by a scheduled reload of the app.

-

Building Streaming Data Pipelines with Qlik Open Lakehouse

This Techspert Talks session covers:

- Streaming data via AWS Kinesis

- Qlik Open Lakehouse capabilities

- From Pipelines to Analytics

Chapters: 01...

-

How to configure Qlik Cloud to use MS365 to send emails

This article documents how to configure a Qlik tenant to send emails using MS365.

The information in this article is provided as-is and will be used at your discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

Prerequisite

An account with an active Office365 license is required for this setup.

MS365 Setup

First, we configure the MS365 tenant to support the configuration.

Once you have an account set up on the MS365 side, let's go to the Microsoft Tenant settings:

- Open the Microsoft Entra admin center (Home - Microsoft Entra admin center)

- Go to Overview

- In the overview screen, you will see your Tenant ID. Copy this value, you will need it to configure your Qlik Cloud Tenant.

- Click Application to register an application

- Click + New registration

- Name your application

- Select the Supported account type that matches your needs

- Click Register to register your application

- Create a Client Credential

- Click Certificates & Secrets

- Click + New client secret

- Describe (name) the client secret and select its expiration value

- Click Add to finish this step

- After creating your client secret, the following is displayed:

- Copy the value and save it in a safe place. You will need it to complete your Qlik Cloud Tenant setup.

- Enabling the right APIs:

- Go to API Permissions

- Click + Add Permission

- Select Microsoft Graph

- Select Application permissions

- In the Select permissions field look for Mail.Send

- Select the Mail.Send permission

Setting the Mail.Send application permission allows the application to send email from any email address in your organization.

- Click Add permission

- Click Grant admin consent for <MS Tenant Name> to finish this step

- Select an Owner for the application

- Click + Add Owner

- Type the name of the User who will be the Owner

- Mark the user and click Select

Qlik Cloud Tenant Setup

- Open your Administration activity center (see Accessing the Administration activity center)

- Go to the Email Provider section

- Select Microsoft 365

- Insert all previously set information:

- Sender email address: Insert an email registered on Microsoft. This can be your email address or another dedicated account created specifically for this purpose.

- Tenant ID: Obtained from the Microsoft Entra Overview section.

- Client ID: The Application (client) ID you previously created.

- Client secret: The Client secret value which you saved in the previous setup steps.

- Save the changes

- Send a test email

If mail.send is blocked by Active Directory group policy, mail delivery will fail. Please consult your AD administrator(s) if mail delivery fails when the above steps are followed.
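If the test email fails, it can help to check the app registration independently of Qlik Cloud. The Python sketch below is not how Qlik Cloud sends mail internally; it is an illustration, under stated assumptions, of the OAuth 2.0 client credentials flow against the Microsoft identity platform followed by a Microsoft Graph sendMail call, using the Tenant ID, Client ID, Client secret, and sender address collected above. The function names, subject, and body text are hypothetical.

```python
import json
import urllib.parse
import urllib.request


def token_request(tenant_id: str, client_id: str, client_secret: str) -> urllib.request.Request:
    """Prepare the client credentials token request for Microsoft Graph."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": "https://graph.microsoft.com/.default",
    }).encode("ascii")
    return urllib.request.Request(url, data=form, method="POST")


def send_mail_request(access_token: str, sender: str, recipient: str) -> urllib.request.Request:
    """Prepare a sendMail call made on behalf of the sender mailbox."""
    url = f"https://graph.microsoft.com/v1.0/users/{urllib.parse.quote(sender, safe='@')}/sendMail"
    payload = {
        "message": {
            "subject": "Mail.Send permission check",
            "body": {"contentType": "Text", "content": "Test message."},
            "toRecipients": [{"emailAddress": {"address": recipient}}],
        },
        "saveToSentItems": False,
    }
    headers = {"Authorization": f"Bearer {access_token}", "Content-Type": "application/json"}
    return urllib.request.Request(
        url, data=json.dumps(payload).encode("utf-8"), headers=headers, method="POST"
    )


def run_check(tenant_id: str, client_id: str, client_secret: str, sender: str, recipient: str) -> int:
    """Perform both calls over the network and return the HTTP status of sendMail."""
    with urllib.request.urlopen(token_request(tenant_id, client_id, client_secret)) as response:
        access_token = json.load(response)["access_token"]
    with urllib.request.urlopen(send_mail_request(access_token, sender, recipient)) as response:
        return response.status
```

Calling run_check with your own values raises urllib.error.HTTPError when the token request or the send is refused, and the error body usually indicates whether the secret, the permission, or admin consent is the cause.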

Environment

- Qlik Cloud Analytics

- Microsoft Office 365


-

Setting Up Knowledge Marts for AI

This Techspert Talks session addresses:

- Synchronizing data in real time

- Connecting to Structured and Unstructured data

- Demonstration of chatbot appl...

-

SAML authentication works in Qlik Sense Enterprise on Windows even if the Token ...

The scenario: A Qlik Sense Enterprise on Windows environment is set up to use Azure SAML (AD FS) for authentication.

On the Azure side, the Token Signing Certificate embedded as the X509Certificate in the SAML IdP metadata within the Qlik virtual proxy configuration expired several weeks ago. A new certificate has not yet been issued.

It is still possible to log in to Qlik Sense Enterprise on Windows.

This behavior may raise the following questions:

- Is this a security concern?

- How long will the expired certificate last?

Resolution

In SAML, the IdP (Azure AD/AD FS) signs the assertion with its private key, and Qlik Sense validates it using the public key embedded in the IdP metadata (the X509Certificate).

The expiry date in the certificate is not actively checked by Qlik Sense during assertion validation.

Qlik only verifies that the signature matches the public key it has stored. So even if the certificate is expired, as long as the key pair hasn’t changed and the signature is valid, authentication succeeds. This is a common behavior and not specific to Qlik Sense.
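The distinction between the signature check and the expiry date can be illustrated with a toy Python model. This is not Qlik Sense code and not real SAML or X.509 handling: it uses textbook RSA with a tiny, insecure key (modulus 3233, public exponent 17, private exponent 2753) and a hypothetical ToyCertificate class, purely to show that verifying a signature needs only the public key, while the expiry date is separate metadata that a verifier may or may not consult.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ToyCertificate:
    """Hypothetical stand-in for a certificate: a public key plus an expiry date."""
    modulus: int
    public_exponent: int
    not_after: datetime


def digest_as_int(message: bytes, modulus: int) -> int:
    """Hash the message and reduce it so it fits the toy modulus."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % modulus


def sign(message: bytes, private_exponent: int, modulus: int) -> int:
    """IdP side: sign the digest with the private key."""
    return pow(digest_as_int(message, modulus), private_exponent, modulus)


def signature_matches(cert: ToyCertificate, message: bytes, signature: int) -> bool:
    """Verifier side: uses only the public key; not_after is never read."""
    recovered = pow(signature, cert.public_exponent, cert.modulus)
    return recovered == digest_as_int(message, cert.modulus)


def is_expired(cert: ToyCertificate, now: datetime) -> bool:
    """Separate check that a verifier would have to perform explicitly."""
    return now > cert.not_after


if __name__ == "__main__":
    cert = ToyCertificate(3233, 17, datetime(2020, 1, 1, tzinfo=timezone.utc))
    assertion = b"<Assertion>user@example.com</Assertion>"
    signature = sign(assertion, 2753, cert.modulus)
    print("expired:", is_expired(cert, datetime.now(timezone.utc)))
    print("signature matches:", signature_matches(cert, assertion, signature))
```

In this model, the signature keeps matching after the expiry date because the key pair is unchanged, which mirrors the behavior described above.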

Is this a security concern?

It is not considered a security issue. The expiry matters for trust and compliance, not for the cryptographic check Qlik performs.

When will the expiry become relevant?

- Azure rotates the signing certificate (such as after expiry or manual rollover). If you haven’t updated Qlik virtual proxy metadata with the new certificate, logins will fail because Qlik can’t validate the signature.

- If your organization enforces certificate validity checks at the proxy or via custom code (rare in standard Qlik deployments).

Do we need to update the certificate?

Even though Qlik doesn’t break immediately, we recommend updating the IdP metadata in Qlik Sense as soon as Azure issues a new signing certificate. This ensures future-proofing and compliance.

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Cloud Analytics: Third-party extensions cannot be exported

Third-party extensions cannot be exported in Qlik Cloud Analytics. We suggest using Qlik native visualizations for any reporting use cases instead. A...

-

Qlik Sense Enterprise on Windows QRS API '/qrs/App/table' returns unexpected res...

In Qlik Sense Enterprise on Windows, using QRS API '/qrs/App/table' by providing a body returns more than expected rows.

Example:

- Using POSTMAN, we perform a POST call including the body:

- With the above body, we expect that the response rows would ONLY contain name, id, and values as defined above in "list":

- However, this POST request returns more than the requested rows, such as id, name, valueType, choiceValues, and privileges:

Resolution

This behavior has been identified as defect SUPPORT-6127.

It is caused by the default value of the parameter HideCustomPropertyDefinition set to true in the Repository.exe.config file. Changing the parameter from true to false resolves it.

To change the value:

- Navigate to C:\Program Files\Qlik\Sense\Repository\ and locate Repository.exe.config

- Open Repository.exe.config in a text editor

- Find HideCustomPropertyDefinition and set its value from true to false

If you are running a multi-node environment, make the change on each node.

- Save the Repository.exe.config file

Cause

Issue related to the default configuration setting of the parameter HideCustomPropertyDefinition in the Repository.exe.config file.

Internal Investigation ID(s)

SUPPORT-6127

Environment

- Qlik Sense Enterprise on Windows


-

Qlik Sense Enterprise on Windows: Extended WebSocket CSRF protection

Beginning with Qlik Sense Enterprise on Windows 2024, Qlik has extended CSRF protection to WebSockets. For reference, see the Release Notes.

In the case of mashups, extensions, and/or other cross-site domain setups, the following two steps are necessary:

- Add additional response headers. These headers help protect against Cross-Site Request Forgery (CSRF) attacks.

- Change the applicable code in your mashup or extension.

Content

Add the Response Headers

The additional response headers are:

Access-Control-Allow-Credentials: true

Access-Control-Expose-Headers: qlik-csrf-token

Localhost and port 8080 are examples. Replace them with the appropriate hostname. Defining the port is optional.

If you have multiple origins, add each to the Host allow list.

Example:

For more information about adding response headers to the Qlik Sense Virtual proxy, see Creating a virtual proxy. Expand the Advanced section to access Additional response headers.

Adapt your Mashup or Extension code

In certain scenarios, the additional headers on the virtual proxy will not be enough and a code change is required. In these cases, you need to request the CSRF token and then send it forward when opening the session on the WebSocket. See Workflow for a visualisation of the process.
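The request-then-forward pattern described above can be sketched as follows. This Python example only illustrates the URL and header handling and does not replace the Enigma.js example referenced in this article. The header name qlik-csrf-token comes from the response headers listed earlier; the token endpoint path and the name of the WebSocket query parameter are assumptions, marked as such in the code, and must be checked against the Qlik documentation.

```python
from typing import Mapping, Optional
from urllib.parse import urlencode

CSRF_HEADER = "qlik-csrf-token"        # response header exposed by the virtual proxy
CSRF_TOKEN_PATH = "qps/csrftoken"      # ASSUMPTION: endpoint that returns the token
CSRF_QUERY_PARAM = "qlik-csrf-token"   # ASSUMPTION: query parameter on the WebSocket URL


def _base(host: str, prefix: str) -> str:
    """Join the host with an optional virtual proxy prefix."""
    prefix = prefix.strip("/")
    return f"{host}/{prefix}" if prefix else host


def csrf_token_url(host: str, prefix: str) -> str:
    """URL to call (with the session cookie) before opening the WebSocket."""
    return f"https://{_base(host, prefix)}/{CSRF_TOKEN_PATH}"


def read_csrf_token(response_headers: Mapping[str, str]) -> Optional[str]:
    """Find the token in the response headers, ignoring header-name case."""
    for name, value in response_headers.items():
        if name.lower() == CSRF_HEADER:
            return value
    return None


def websocket_url(host: str, prefix: str, app_id: str, token: Optional[str]) -> str:
    """Append the token to the WebSocket URL when one was returned."""
    url = f"wss://{_base(host, prefix)}/app/{app_id}"
    if token:
        url += "?" + urlencode({CSRF_QUERY_PARAM: token})
    return url


if __name__ == "__main__":
    # Hypothetical host, prefix, app id, and token value.
    token = read_csrf_token({"Qlik-Csrf-Token": "example-token"})
    print(csrf_token_url("qlikserver1.domain.local", "mashup"))
    print(websocket_url("qlikserver1.domain.local", "mashup", "my-app-id", token))
```

Leaving the query parameter off when no token is returned keeps the same code usable against versions that do not issue one.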

An example written in Enigma.js is available here:

The information and example in this article are provided as-is and are not directly supported by Qlik Support. More assistance can be found on the Qlik Integration forum. Professional Services are available to help where needed.

Workflow


Verification

To verify if the header information is correctly passed on, capture the web traffic in your browser's debug tool.

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Cloud: Login with Service Account Owner (SAO) error: User Allocation Requir...

The client secret for a Single Sign-On solution has expired.

After successfully logging in with your unique tenant url recovery address

https://type_your_tenant_here.eu.qlikcloud.com/login/recover

when using the current tenant Service Account Owner (SAO) account, logging in to the Qlik Cloud Administration Console fails with:

User allocation required You do not have a valid user allocation. Please contact an administrator for more information

Resolution

Once you have successfully logged in to the tenant via the recovery link using the SAO (Service Account Owner) credentials, navigate to the Qlik Cloud Administration Console by using either:

- the Settings icon

- or by directly using https://mytenant.eu.qlikcloud.com/console

This will allow you to access the Qlik Cloud Administration Console and update the client secret for your IDP settings.

Environment

- Qlik Cloud Analytics

NOTE: If you are logging in with credentials that are not your own (for example, a user that has left your organization) and the above steps fail with a responseCode: 401 error, it may be necessary to ask Qlik Customer Support to change the Service Account Owner (SAO) to a currently active user in your directory.

Once the SAO change is complete, follow the above resolution steps.

Related Content

-

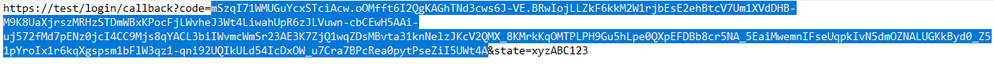

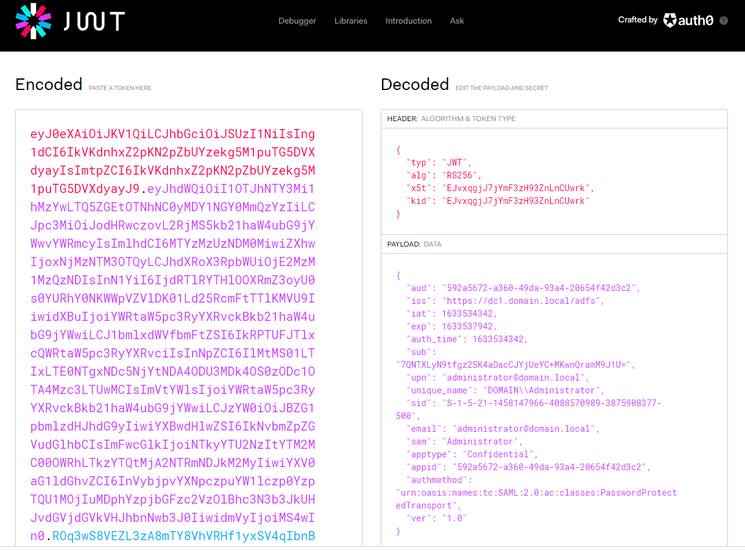

Qlik Sense: How to request an OIDC token manually and check if correct attribute...

This article explains how to request a token manually from your Identity provider token endpoint and verify if the required attributes are included i...