
Featured Content


How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

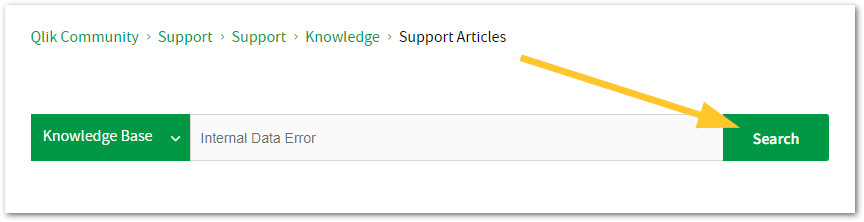

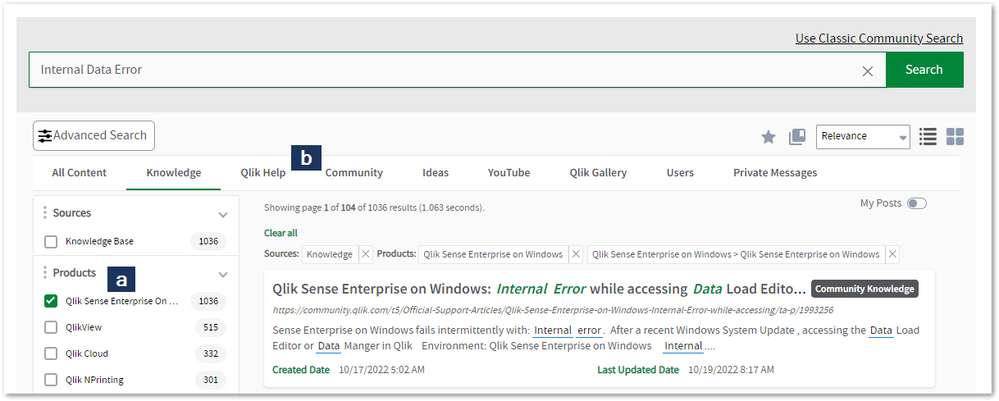

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our YouTube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar that facilitates knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to: qlikid.qlik.com/register

- You must enter your company name exactly as it appears on your license or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat: click Chat Now on any Support page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- For items that need further investigation, create a case on your behalf using step-by-step intake questions.

3. Qlik Support Case Portal

Log in to manage and track your active cases in Manage Cases.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter a problem type and an issue level. The definitions are listed below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not a Severity 1 or Severity 2 issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents


Qlik Talend Products: Java 17 Migration Guide

From R2024-05, Java 17 will become the only supported version to start most Talend modules, enforcing the improved security of Java 17 and eliminating concerns about Java's end-of-support for older versions. In 2025, Java 17 will become the only supported version for all operations in Talend modules.

Starting from v2.13, Talend Remote Engine requires Java 17 to run. If some of your artifacts, such as Big Data Jobs, require other Java versions, see Specifying a Java version to run Jobs or Microservices.

Content

- Prerequisites

- Procedure

- Windows

- Linux

- MAC OS

- Multiple JDK versions

- Studio

- Remote Engine

- ESB - Runtime

- Studio

- Talend Administration Center (TAC)

- CICD

- Windows Users

- Linux Users

- Jenkins Users

- Additional Notes

- Specifying a Java version to run Jobs or Microservices

Prerequisites

Qlik Talend Module: Patch Level and Version

- Studio: Supported from R2023-10 onwards

- Remote Engine: 2.13 or later

- Runtime: 8.0.1-R2023-10 or later

Procedure

Windows

For Windows users, please follow the JDK installation guide (docs.oracle.com).

Linux

For Linux users, please follow the JDK installation guide (docs.oracle.com).

MAC OS

For MAC OS users, please follow the JDK installation guide (docs.oracle.com).

Multiple JDK versions

When working with software that supports multiple versions of Java, it's important to be able to specify the exact Java version you want to use. This ensures compatibility and consistent behavior across your applications. The following sections explain how to specify the Java version for each product on machines where several JDKs are installed (such as build servers, shared application servers, and similar):

Studio

For Studio users who are using multiple JDKs, please follow the appropriate instructions listed above, then complete the following additional steps:

- Back up and edit the <Studio Home>\Talend-Studio-win-x86_64.ini file.

- Prepend the following two lines at the very top of the file, each on its own line and before any -vmargs line:

-vm
<JDK17 HOME>\bin\server\jvm.dll
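If you maintain several Studio installations, the .ini edit can be scripted. The Python sketch below is illustrative only: `prepend_vm` is a hypothetical helper, and it assumes the .ini file is plain text with one launcher argument per line. It removes any existing `-vm` entry (and the path line that follows it) so the file never names two JVMs, then puts `-vm` and the jvm.dll path at the top.

```python
def prepend_vm(ini_text: str, jvm_path: str) -> str:
    """Return ini_text with '-vm' and jvm_path as its first two lines.

    Hypothetical helper: any existing '-vm' line and the path line that
    follows it are dropped first, so only the new JVM is specified.
    """
    kept = []
    skip_next = False
    for line in ini_text.splitlines():
        if skip_next:
            # This is the old JVM path that followed '-vm'; drop it.
            skip_next = False
            continue
        if line.strip() == "-vm":
            skip_next = True
            continue
        kept.append(line)
    # '-vm' and its path go on separate lines, ahead of everything else
    # (including -vmargs).
    return "\n".join(["-vm", jvm_path] + kept) + "\n"
```

For example, read the .ini with `pathlib.Path.read_text()`, pass the text through `prepend_vm` with your `<JDK17 HOME>\bin\server\jvm.dll` path, and write the result back only after making the backup copy.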

Remote Engine

For Remote Engine (RE) users who are using multiple JDKs, please follow the appropriate instructions listed above, then complete the following additional steps.

- Back up and edit the <RE HOME>/etc/talend-remote-engine-wrapper.conf file.

- Modify the set.default.JAVA_HOME= property to point to the <JDK 17 HOME> path.
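When several engines need the same change, the wrapper.conf edit can be scripted. This Python sketch is an illustration, not a Talend tool: `set_wrapper_java_home` is a hypothetical helper that assumes the file uses plain `key=value` lines such as `set.default.JAVA_HOME=`. Commented-out lines are left alone, and if no active line exists, one is appended. The same property appears in the ESB Runtime wrapper.conf described below.

```python
import re

# Matches an active (uncommented) set.default.JAVA_HOME line; group 1 keeps
# the key and '=' so that only the value is replaced.
_JAVA_HOME_LINE = re.compile(
    r"^([ \t]*set\.default\.JAVA_HOME[ \t]*=).*$", re.MULTILINE
)


def set_wrapper_java_home(conf_text: str, jdk_home: str) -> str:
    """Point set.default.JAVA_HOME at jdk_home, appending the line if absent."""
    if _JAVA_HOME_LINE.search(conf_text):
        # A function replacement stops re from treating backslashes in
        # Windows paths as escape sequences.
        return _JAVA_HOME_LINE.sub(
            lambda m: m.group(1) + jdk_home, conf_text, count=1
        )
    body = conf_text.rstrip("\n")
    prefix = body + "\n" if body else ""
    return prefix + "set.default.JAVA_HOME=" + jdk_home + "\n"
```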

Note 1: If Remote Engine is not installed as a service, the JDK path is set in the <RE HOME>/bin/setenv file instead.

Note 2: For running Jobs or Microservices, you can either use the default Java 17 version or configure the engine to use an older Java version (see Specifying a Java version to run Jobs or Microservices).

How to modify?

Check the following configuration files in the <RE HOME>/etc folder and set each property to the installed JDK/JRE path (see Specifying a Java version to run Jobs or Microservices for the expected format):

- org.talend.ipaas.rt.dsrunner.cfg: ms.custom.jre.path

- org.talend.remote.jobserver.server.cfg: org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH

ESB - Runtime

For Runtime users who are using multiple JDKs, please follow the appropriate instructions listed above, then complete the following additional steps.

- Back up and edit the <Runtime home>/etc/<TALEND-8-CONTAINER service>-wrapper.conf file.

- Set set.default.JAVA_HOME to the <JDK 17 HOME> path, e.g. set.default.JAVA_HOME=C:\<JDK 17 HOME>

If Runtime is not running as a service:

- Back up and edit the <Runtime home>/bin/setenv.sh file.

- Set JAVA_HOME to the <JDK 17 HOME> path.

Studio

- Data Integration (DI): After installing the 8.0 R2023-10 Talend Studio monthly update or a later one, if you switch the Java version to 17 and relaunch Talend Studio with Java 17, you must enable Java 17 compatibility in your project settings:

- Go to Studio

- Go to File

- Edit Project properties

- Go to Build

- Go to Java Version

- Activate "Enable Java 17 compatibility"

With the Enable Java 17 compatibility option activated, any Job built by Talend Studio cannot be executed with Java 8. For this reason, verify the Java environment on your Job execution servers before activating the option.

- Big Data Users: Do not enable Java 17 compatibility unless your Spark Cluster supports Java 17.
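Before activating Enable Java 17 compatibility, you need to confirm which Java version each Job execution server runs. The Python sketch below is illustrative: `java_major_version` is a hypothetical helper that parses the banner printed by `java -version`, handling both the legacy `1.8.0_x` scheme (Java 8) and the current `17.0.x` scheme.

```python
import re


def java_major_version(version_output: str) -> int:
    """Extract the Java feature (major) version from `java -version` output."""
    match = re.search(r'version "([^"]+)"', version_output)
    if match is None:
        raise ValueError("no version string found")
    first, _, rest = match.group(1).partition(".")
    if first == "1":
        # Legacy scheme: "1.8.0_381" means Java 8.
        first = rest.partition(".")[0]
    # Strip suffixes such as "-ea" in "21-ea".
    digits = re.match(r"\d+", first)
    if digits is None:
        raise ValueError("unrecognized version: " + match.group(1))
    return int(digits.group())
```

On a server, `java -version` writes its banner to standard error, so you could capture it with `subprocess.run(["java", "-version"], capture_output=True, text=True).stderr` and check that the result is 17 before building Jobs with the option enabled.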

Talend Administration Center (TAC)

To use Talend Administration Center with Java 17, you need to open the <tac_installation_folder>/apache-tomcat/bin/setenv.sh file and add the following commands:

# export modules
export JAVA_OPTS="$JAVA_OPTS --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Windows users: apply the equivalent setting in <tac_installation_folder>\apache-tomcat\bin\setenv.bat.
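If setenv.sh is managed by a provisioning script, appending the lines idempotently avoids duplicating them on every run. This is a minimal Python sketch: `ensure_lines` and `TAC_LINES` are hypothetical names, and the function only appends lines that are not already present verbatim. It does not parse or merge an existing, differently worded JAVA_OPTS line.

```python
# The two lines documented above for
# <tac_installation_folder>/apache-tomcat/bin/setenv.sh.
TAC_LINES = [
    "# export modules",
    'export JAVA_OPTS="$JAVA_OPTS --add-opens=java.base/sun.security.x509=ALL-UNNAMED '
    '--add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"',
]


def ensure_lines(script_text: str, lines: list[str]) -> str:
    """Append each line that is not already present, so reruns change nothing."""
    existing = set(script_text.splitlines())
    missing = [line for line in lines if line not in existing]
    if not missing:
        return script_text
    body = script_text.rstrip("\n")
    prefix = body + "\n" if body else ""
    return prefix + "\n".join(missing) + "\n"
```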

CICD

Windows Users

For Java 17 users, the Talend CI/CD process requires the following Maven options:

- Back up and edit <Maven_home>\bin\mvn.cmd

- Modify the MAVEN_OPTS line to:

set "MAVEN_OPTS=%MAVEN_OPTS% --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Linux Users

For Java 17 users, the Talend CI/CD process requires the following Maven options:

- Back up and edit <Maven_home>/bin/mvn

- Modify the MAVEN_OPTS line to:

export MAVEN_OPTS="$MAVEN_OPTS \
  --add-opens=java.base/java.net=ALL-UNNAMED \
  --add-opens=java.base/sun.security.x509=ALL-UNNAMED \
  --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"
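The same `--add-opens` flags recur in the TAC, Maven, and Jenkins settings, differing only in which packages are listed. Each flag follows the JDK's `--add-opens=<module>/<package>=<target-module>` syntax, where `ALL-UNNAMED` means code on the class path. As a sketch for keeping the lists consistent, a hypothetical helper `add_opens_flags` can generate the option string from a package list:

```python
def add_opens_flags(packages, module="java.base", target="ALL-UNNAMED"):
    """Build one --add-opens=<module>/<package>=<target> flag per package."""
    return " ".join(f"--add-opens={module}/{pkg}={target}" for pkg in packages)


# Packages listed above for the CI/CD Maven options.
CICD_PACKAGES = ["java.net", "sun.security.x509", "sun.security.pkcs"]
```

`add_opens_flags(CICD_PACKAGES)` yields the three flags used for MAVEN_OPTS and TALEND_CI_RUN_CONFIG, while the TAC setting uses only the two `sun.security` packages.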

Jenkins Users

- Back up and edit the jenkins_pipeline_simple.xml file.

- Include the following in the TALEND_CI_RUN_CONFIG parameter:

<name>TALEND_CI_RUN_CONFIG</name>
<description>Define the Maven parameters to be used by the product execution, such as:
- Studio location
- debug flags
These parameters will be put to maven 'mavenOpts'.
If Jenkins is using Java 17, add:
--add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED
</description>

Additional Notes

Specifying a Java version to run Jobs or Microservices

Overview

Enable your Remote Engine to run Jobs or Microservices using a specific Java version.

By default, a Remote Engine uses the Java version of its environment to execute Jobs or Microservices. From Remote Engine v2.13 onwards, Java 17 is mandatory for engine startup. However, you can specify a different Java version for running Jobs or Microservices. This lets a newer engine version run artifacts designed with older Java versions without rebuilding them, such as Big Data Jobs, which rely on Java 8 only.

When developing new Jobs or Microservices that do not rely exclusively on Java 8 (that is, anything other than Big Data Jobs), consider building them with the add-opens option to ensure compatibility with Java 17. This option opens the packages needed for Java 17 compatibility, so your Jobs or Microservices run directly on the newer Remote Engine version without the procedure for defining a specific Java version described in this section. For further information about how to use the add-opens option and its limitations, see Setting up Java in Talend Studio.

Procedure

- Stop the engine.

- Browse to the <RemoteEngineInstallationDirectory>/etc directory.

- Depending on the type of the artifacts you need to run with a specific Java version, do the following:

For both artifact types, use backslashes to escape characters specific to a Windows path, such as colons, whitespace, and directory separators, while keeping in mind that directory separators are also backslashes on Windows.

Example:

c:\\Program\ Files\\Java\\jdk11.0.18_10\\bin\\java.exe

- For Jobs, in the <RemoteEngineInstallationDirectory>/etc/org.talend.remote.jobserver.server.cfg file, add the path to the Java executable file.

Example:

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=c:\\jdks\\jdk11.0.18_10\\bin\\java.exe

- For Microservices, in the <RemoteEngineInstallationDirectory>/etc/org.talend.ipaas.rt.dsrunner.cfg, add the path to the Java executable file.

Example:

ms.custom.jre.path=C\:/Java/jdk/bin

Make this modification before deploying your Microservices to ensure that these changes are correctly taken into account.


- Restart the engine.
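The escaping rule in this procedure is easy to get wrong by hand. Below is a minimal Python sketch, assuming the .cfg files follow Java properties escaping (the Microservices example `C\:/Java/jdk/bin` suggests they do). `escape_cfg_path` and `job_launcher_line` are hypothetical helpers: the first prefixes every backslash, colon, and space with a backslash, and the second builds the Jobs entry for org.talend.remote.jobserver.server.cfg.

```python
def escape_cfg_path(path: str) -> str:
    """Escape a Windows path for use as a .cfg (properties-style) value.

    Follows the rule in the procedure above: backslashes, colons and
    spaces are each preceded by a backslash.
    """
    escaped = []
    for ch in path:
        if ch == "\\":
            escaped.append("\\\\")  # directory separator becomes \\
        elif ch in ": ":
            escaped.append("\\" + ch)  # ':' becomes \:  and ' ' becomes '\ '
        else:
            escaped.append(ch)
    return "".join(escaped)


JOB_LAUNCHER_KEY = (
    "org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH"
)


def job_launcher_line(java_exe: str) -> str:
    """Build the org.talend.remote.jobserver.server.cfg entry for Jobs."""
    return f"{JOB_LAUNCHER_KEY}={escape_cfg_path(java_exe)}"
```

The Jobs examples in this procedure leave the drive-letter colon unescaped (`c:\\...`). In properties values, `c:` and `c\:` should both be read as `c:`, so either form is expected to work.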


Qlik Gold Client Key Split Enhancement Overview

Key Split is a performance tuning option within Qlik Gold Client. Introduced in Qlik Gold Client 8.7.3, it is used to control which tables allow fo...

QMC Reload Failure Despite Successful Script in Qlik Sense Nov 2023 and above

Reload fails in the QMC even though the script part is successful in Qlik Sense Enterprise on Windows November 2023 and above.

When using NetApp-based storage, you might see an error when publishing and replacing an app or when reloading a published app. In the QMC, the script load itself finishes successfully, but the task fails afterwards.

ERROR QlikServer1 System.Engine.Engine 228 43384f67-ce24-47b1-8d12-810fca589657
Domain\serviceuser QF: CopyRename exception:
Rename from \\fileserver\share\Apps\e8d5b2d8-cf7d-4406-903e-a249528b160c.new
to \\fileserver\share\Apps\ae763791-8131-4118-b8df-35650f29e6f6
failed: RenameFile failed in CopyRenameExtendedException: Type '9010' thrown in file
'C:\Jws\engine-common-ws\src\ServerPlugin\Plugins\PluginApiSupport\PluginHelpers.cpp'
in function 'ServerPlugin::PluginHelpers::ConvertAndThrow'
on line '149'. Message: 'Unknown error' and additional debug info:
'Could not replace collection
\\fileserver\share\Apps\8fa5536b-f45f-4262-842a-884936cf119c] with
[\\fileserver\share\Apps\Transactions\Qlikserver1\829A26D1-49D2-413B-AFB1-739261AA1A5E],
(genericException)'
<<< {"jsonrpc":"2.0","id":1578431,"error":{"code":9010,"parameter":
"Object move failed.","message":"Unknown error"}}

ERROR Qlikserver1 06c3ab76-226a-4e25-990f-6655a965c8f3
20240218T040613.891-0500 12.1581.19.0
Command=Doc::DoSave;Result=9010;ResultText=Error: Unknown error
0 0 298317 INTERNAL sa_scheduler b3712cae-ff20-4443-b15b-c3e4d33ec7b4
9c1f1450-3341-4deb-bc9b-92bf9b6861cf Taskname Engine Not available
Doc::DoSave Doc::DoSave 9010 Object move failed.
06c3ab76-226a-4e25-990f-6655a965c8f3

Resolution

Qlik Sense Client Managed version:

- May 2024 Initial Release

- February 2024 Patch 4

- November 2023 Patch 9

Potential workarounds

- Change the storage to a file share on a Windows server

Cause

The most plausible cause currently is that the specific engine version has issues releasing File Lock operations. We are actively investigating the root cause, but there is no fix available yet.

Internal Investigation ID(s)

QB-25096

QB-26125

Environment

- Qlik Sense Enterprise on Windows November 2023 and above
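To check whether reload tasks on a node are hitting this defect, you could scan the engine logs for the 9010 markers shown in the excerpt above (`Result=9010` and `"code":9010`). The Python sketch below is an illustration only. `find_9010_lines` is a hypothetical helper, and the article does not state where the engine log files are located, so pass in whatever lines you have read from them. A match shows the symptom; it does not by itself confirm NetApp storage as the cause.

```python
import re

# Markers of the failure as they appear in the engine log excerpt above.
_ERROR_9010 = re.compile(r'Result=9010\b|"code":\s*9010\b')


def find_9010_lines(log_lines):
    """Return (line_number, text) pairs for log lines reporting error 9010."""
    return [
        (number, line.rstrip("\n"))
        for number, line in enumerate(log_lines, start=1)
        if _ERROR_9010.search(line)
    ]
```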


How to customize the text of a generic Qlik Sense error message

The information in this article is provided as is. Adjustments to error messages cannot be supported by Qlik Support and can lead to difficulties identifying underlying root causes of technical issues experienced later. All changes will be reverted after an upgrade.

The method documented in this article is intended for later versions of Qlik Sense Enterprise on Windows.

The message texts are defined in JavaScript files located by default in C:\Program Files\Qlik\Sense\Client\translate\. There is a subfolder for each supported language, e.g. en-US for English.

To modify a message text, edit the relevant file (e.g. hub.js) in the folder for the language you want to change.

For example, to change the default text of the access denied message, find the following line:

"ProxyError.OnLicenseAccessDenied": "You cannot access Qlik Sense because you have no access pass.",

To change the text of the message, modify the second quoted string, the one after the colon (see example below):

"ProxyError.OnLicenseAccessDenied": "This is a modified message text for access denied events",
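Editing the file by hand, as in the steps below, is the documented approach. If you need to repeat the change on several nodes, a Python sketch like this one can do the same substitution. It is unsupported, like the change itself: `set_message` is a hypothetical helper, and it assumes entries look like the line shown above (`"key": "value"`). `json.dumps` produces a double-quoted, escaped string that is also a valid JavaScript literal.

```python
import json
import re


def set_message(js_text: str, key: str, new_text: str) -> str:
    """Replace the string value of `key` in a translate file such as hub.js."""
    pattern = re.compile(
        '("' + re.escape(key) + r'"\s*:\s*)'  # key, colon and spacing, kept as-is
        r'"(?:[^"\\]|\\.)*"'                  # the current double-quoted value
    )
    updated, count = pattern.subn(
        lambda m: m.group(1) + json.dumps(new_text), js_text, count=1
    )
    if count == 0:
        # As the article notes: if the message cannot be located,
        # it cannot be customized.
        raise KeyError(key)
    return updated
```

Back up the file before writing the result, then restart the Qlik Sense Proxy service and clear browser caches as described in the steps below.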

To modify the error message:

- Navigate to C:\Program Files\Qlik\Sense\Client\translate\

- Open the folder corresponding to the language you wish to change, e.g. en-US for English.

- Open either the hub.js file or the qmc.js file. Older versions of Qlik Sense used a single client.json file.

- Search for the standard error message

- Modify the message text accordingly. If the message you wish to modify cannot be located, then the message cannot be customized.

- Save the file

- Restart the Qlik Sense Proxy service

Users will not be able to see the new message until their browser cache has been cleared.


How to collect Snapshots for high memory usage in Replicate

Description: In order to troubleshoot high memory usage, we need to collect memory snapshots for the Replicate service or the task that's having high...

How to: Getting Started with the Qlik Cloud Data Integration connector in Qlik A...

This article is intended to guide users on how to work with the Qlik Cloud Data Integration connector in Automations.

Streams are not visible or available on the hub in Qlik Sense May 2022

After upgrading or installing Qlik Sense Enterprise on Windows May 2022, users may not see all of their streams.

The streams appear only after an interaction or a click anywhere on the Hub.

This is caused by defect QB-10693, resolved in May 2022 Patch 4.

Environment

Qlik Sense Enterprise on Windows May 2022

Workaround

To work around the issue without a patch:

- Locate and open the file: C:\Program Files\Qlik\Sense\CapabilityService\capabilities.json

- Modify the following line:

{"contentHash":"2ae4a99c9f17ab76e1eeb27bc4211874","originalClassName":"FeatureToggle","flag":"HUB_HIDE_EMPTY_STREAMS","enabled":true}

- Change enabled from true to false:

{"contentHash":"2ae4a99c9f17ab76e1eeb27bc4211874","originalClassName":"FeatureToggle","flag":"HUB_HIDE_EMPTY_STREAMS","enabled":false}

- Save the file.

If you have a multi-node deployment, these changes need to be applied on all nodes.

Note: As these are changes to a configuration file, they will be reverted to default when upgrading. The changes will need to be redone after patching or upgrading Qlik Sense.

- Restart the Qlik Sense Dispatcher service AND the Qlik Sense Proxy Service.

Note: If you have a multi-node deployment, all nodes need to be restarted.
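On a multi-node site, the same edit has to be repeated on every node, which can be scripted. The Python sketch below is illustrative: `disable_feature_flag` is a hypothetical helper. Because the article shows only a single entry and not the file's overall layout, the sketch edits the text in place rather than parsing it as JSON. It assumes each entry is a flat `{...}` object in which `"flag"` comes before `"enabled"`, as in the HUB_HIDE_EMPTY_STREAMS line above.

```python
import re


def disable_feature_flag(capabilities_text: str, flag: str) -> str:
    """Set "enabled" to false for the entry whose "flag" equals `flag`."""
    pattern = re.compile(
        r'(\{[^{}]*"flag"\s*:\s*"' + re.escape(flag)
        + r'"[^{}]*"enabled"\s*:\s*)true\b'
    )
    # \g<1> keeps everything up to the value; only 'true' is replaced.
    updated, count = pattern.subn(r"\g<1>false", capabilities_text)
    if count == 0:
        raise ValueError(f"{flag} not found or already disabled")
    return updated
```

The Dispatcher and Proxy restarts are still required afterwards, and the change is still reverted by an upgrade.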

Fix

A fix is available in May 2022, patch 4.

Streams will show up (after a few seconds) without the need to click or interact with the hub.

Important NOTE about feature HUB_HIDE_EMPTY_STREAMS:

When you activate HUB_HIDE_EMPTY_STREAMS, you will have an expected delay before all streams appear.

To reduce this delay, from Patch 4 you can add HUB_OPTIMIZED_SEARCH (it must be added manually as a new flag). As of now, the HUB_OPTIMIZED_SEARCH flag will be available in the upcoming August 2022 release and is not planned for any patches (yet).

If this delay (a few seconds) is not acceptable, you will need to disable the HUB_HIDE_EMPTY_STREAMS capability.

Cause

This defect was introduced by a new capability service.

Internal Investigation ID(s)

QB-10693


How To: Configure Qlik Sense Enterprise SaaS to use Azure AD as an IdP. Now with...

This article provides step-by-step instructions for implementing Azure AD as an identity provider for Qlik Cloud. We cover configuring an App registration in Azure AD and configuring group support using MS Graph permissions.

It guides the reader through adding the necessary application configuration in Azure AD and Qlik Sense Enterprise SaaS identity provider configuration so that Qlik Sense Enterprise SaaS users may log into a tenant using their Azure AD credentials.

Content:

- Prerequisites

- Helpful vocabulary

- Considerations when using Azure AD with Qlik Sense Enterprise SaaS

- Configure Azure AD

- Create the app registration

- Create the client secret

- Add claims to the token configuration

- Add group claim

- Collect Azure AD configuration information

- Configure Qlik Sense Enterprise SaaS IdP

- Recap

- Addendum

- Related Content (VIDEO)

Prerequisites

- A Microsoft Azure account

- A Microsoft Azure Active Directory instance

- A Qlik Sense Enterprise SaaS tenant

- The BYOIDP feature in your Qlik license is set to YES. Contact customer support to find out if you are entitled to bring your own identity provider to your tenant.

Helpful vocabulary

Throughout this tutorial, some words will be used interchangeably.

- Qlik Sense Enterprise SaaS: Qlik Sense hosted in Qlik’s public cloud

- Microsoft Azure Active Directory: Azure AD

- Tenant: Qlik Sense Enterprise SaaS tenant or instance

- Instance: Microsoft Azure AD

- OIDC: OpenID Connect

- IdP: Identity Provider

Considerations when using Azure AD with Qlik Sense Enterprise SaaS

- Qlik Sense Enterprise SaaS allows customers to bring their own identity provider to authenticate users to the tenant using the OpenID Connect (OIDC) specification (https://openid.net/connect/)

- Given that OIDC is a specification and not a standard, vendors (e.g. Microsoft) may implement the capability in ways that are outside of the core specification. In this case, Microsoft Azure AD OIDC configurations do not send standard OIDC claims like email_verified. Using the Azure AD configuration in Qlik Sense Enterprise SaaS includes an advanced option to set email_verified to true for all users that log into the tenant.

- The Azure AD configuration in Qlik Sense Enterprise SaaS includes special logic for contacting Microsoft Graph API to obtain friendly group names. Whether those groups originate from an on-premises instance of Active Directory and sync to Azure AD through Azure AD Connect or from creation within Azure AD, the friendly group name will be returned from the Graph API and added to Qlik Sense Enterprise SaaS.

Configure Azure AD

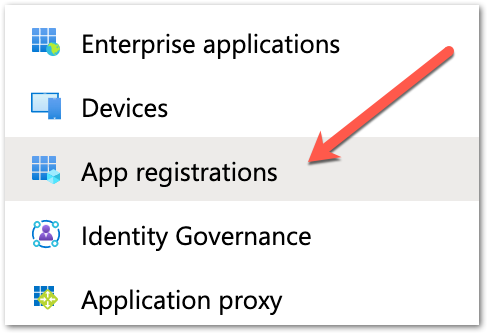

Create the app registration

- Log into Microsoft Azure by going to https://portal.azure.com.

- Click on the Azure Active Directory icon in the browser, or search for "Azure Active Directory" in the search bar at the top. The overview page for the active directory will appear.

- Click the App registrations item in the menu to the left.

- Click the New registration button at the top of the detail window. The application registration page appears.

- Add a name in the Name section to identify the application. In this example, the tenant hostname followed by the word OIDC is used.

- The next section contains radio buttons for selecting the Supported account types. In this example, the default – Accounts in this organizational directory only – is selected.

- The last section is for entering the redirect URI. From the dropdown list on the left select “web” and then enter the callback URL from the tenant. Enter the URI https://<tenant hostname>/login/callback.

The tenant hostname required in this context is the original hostname provided to the Qlik Sense Enterprise SaaS tenant.

Using the alias hostname will cause the IdP handshake to fail.

- Complete the registration by clicking the Register button at the bottom of the page.

- Click on the Authentication menu item on the left side of the screen.

- In the middle of the page, the reference to the callback URI appears. No additional configuration is required on this page.

Create the client secret

- Click on the Certificates and secrets menu item on the left side of the screen.

- In the center of the Certificates and secrets page, there is a section labeled Client secrets with a button labeled New client secret. Click the button.

- In the dialog that appears, enter a description for the client secret and select an expiration time. Click the Add button after entering the information.

- Once a client secret is added, it will appear in the Client secrets section of the page.

Copy the "value of the client secret" and paste it somewhere safe.

After saving the configuration the value will become hidden and unavailable.

Add claims to the token configuration

- Click on the Token configuration menu item on the left side of the screen.

- The Optional claims window appears with two buttons: one for adding optional claims and another for adding group claims. Click the Add optional claim button.

- For optional claims, select the ID token type, and then select the claims to include in the token that will be sent to the Qlik Sense Enterprise SaaS tenant. In this example, ctry, email, tenant_ctry, upn, and verified_primary_email are checked. None of these optional claims are required for the tenant identity provider to work properly, however, they are used later on in this tutorial.

- Some optional claims may require adding OpenID Connect scopes from Microsoft Graph to the application configuration. Click the check mark to enable them, then click Add.

- The claims will appear in the window.

Add group claim

- Click on the API permissions menu item on the left side of the screen.

- Note the permissions that were configured when you added the optional claims.

- Click the Add a permission button and select the Microsoft Graph option in the Request API permissions box that appears. Click on the Microsoft Graph banner.

- Click on Delegated permissions. The Select permissions search box and the OpenId permissions list appear.

In the OpenID permissions section, check email, openid, and profile. In the Users section, check user.read.

- In the Select permissions search, enter the word group. Expand the GroupMember option and select GroupMember.Read.All. This grants permission to read the group memberships of users who log into Qlik Sense Enterprise SaaS through Azure AD.

- After making the selection, click the Add permissions button.

- The added permissions will appear in the list. However, the GroupMember.Read.All permission requires admin consent to work with the app registration. Click the Grant button and accept the message that appears.

Failing to grant consent to GroupMember.Read.All may result in errors authenticating to Qlik using Azure AD. Make sure to complete this step before moving on.

Collect Azure AD configuration information

- Click on the Overview menu item to return to the main App registration screen for the new app. Copy the Application (client) ID unique identifier. This value is needed for the tenant’s IdP configuration.

- Click on the Endpoints button in the horizontal menu of the overview.

- Copy the OpenID Connect metadata document endpoint URI. This is needed for the tenant’s IdP configuration.
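As a cross-check on the values collected above, you can rebuild them yourself. For Azure AD, the OpenID Connect metadata document normally follows the v2.0 pattern `https://login.microsoftonline.com/<directory-id>/v2.0/.well-known/openid-configuration`, where the Directory (tenant) ID is shown on the same Overview page. The redirect URI follows the format from the app registration step. In this Python sketch, the function names are hypothetical, and the values Azure displays always take precedence. `summarize_metadata` picks out standard OIDC discovery fields from a downloaded metadata document.

```python
import json


def redirect_uri(tenant_hostname: str) -> str:
    """Callback URL registered in Azure AD; use the tenant's original hostname, not an alias."""
    return f"https://{tenant_hostname}/login/callback"


def discovery_url(directory_id: str) -> str:
    """Azure AD v2.0 OpenID Connect metadata URL for a directory (tenant) ID."""
    return (
        f"https://login.microsoftonline.com/{directory_id}"
        "/v2.0/.well-known/openid-configuration"
    )


def summarize_metadata(metadata_json: str) -> dict:
    """Pick out standard discovery fields worth checking in the metadata document."""
    doc = json.loads(metadata_json)
    keys = ("issuer", "authorization_endpoint", "token_endpoint", "jwks_uri")
    return {key: doc.get(key) for key in keys}
```

To inspect the live document, you could fetch the copied URL with `urllib.request.urlopen(...).read().decode()` and pass the text to `summarize_metadata`. The issuer should contain your directory ID.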

Configure Qlik Sense Enterprise SaaS IdP

- With the configuration complete and required information in hand, open the tenant’s management console and click on the Identity provider menu item on the left side of the screen.

- Click the Create new button on the upper right side of the main panel.

- Select OIDC from the Type drop-down menu item, and select Microsoft Entra ID (Azure AD) from the Provider drop-down menu item.

- Scroll down to the Application credentials section of the configuration panel and enter the following information:

- ADFS discovery URL: This is the endpoint URI copied from Azure AD.

- Client ID: This is the application (client) id copied from Azure AD.

- Client secret: This is the client secret value you copied to a safe location from the Certificates & secrets section in Azure AD.

- The Realm is an optional value used if you want to enter what is commonly referred to as the Active Directory domain name.

- Scroll down to the Claims mapping section of the configuration panel. There are five textboxes to confirm or alter.

- The sub field is the subject of the token sent from Azure AD. This is normally a unique identifier and will represent the UserID of the user in the tenant. In this example, the value “sub” is left and appid is removed. To use a different claim from the token, replace the default value with the name of the desired attribute value.

- The name field is the “friendly” name of the user to be displayed in the tenant. For Azure AD, change the attribute name from the default value to “name”.

- In this example, the groups, email, and client_id attributes are configured properly, therefore, they do not need to be altered.

In this example, I had to change the email claim to upn to obtain the user's email address from Azure AD. Your results may vary.


- Scroll down to the Advanced options and expand the menu. Slide the Email verified override option ON to ensure Azure AD validation works. Scope does not have to be supplied.

- The Post logout redirect URI is not required for Azure AD because upon logging out the user will be sent to the Azure log out page.

- Click the Save button at the bottom of the configuration to save the configuration. A message will appear confirming intent to create the identity provider. Click the Save button again to start the validation process.

- The validation procedure begins by redirecting the person configuring the IdP to the login page for the IdP.

- After successful authentication, Azure AD will confirm that permission should be granted for this user to the tenant. Click the Accept button.

- If the validation fails, the validation procedure will return an error window.

- If the validation succeeds, the validation procedure will return a mapped claims window. If the validation states it cannot map the user's email address, it is most likely because the email_verified switch has not been turned on. Go ahead and confirm, move through the remaining steps, and update the configuration as per the previous step. Re-run the validation to map the email.

- After confirming the information is correct, you can elevate the account used to validate the IdP to the TenantAdmin role. It is strongly recommended to make sure this box is checked before clicking Continue.

- The next-to-last screen in the configuration asks you to activate the IdP. Activating the Azure AD IdP in the tenant disables any other identity providers configured in the tenant.

- Success.

- Please log out of the tenant and re-authenticate using the new identity provider connection. Once logged in, change the URL in the address bar to point to https://<tenanthostname>/api/v1/diagnose-claims. This will return the JSON of the claims information Azure AD sent to the tenant. Here is a slightly redacted example.

- Verify groups resolve properly by creating a space and adding members. You should see friendly group names to choose from.
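To reason about what the claims mapping step does, it can be modeled as a lookup from tenant fields to token claim names. This is a hypothetical sketch: it assumes the raw claims (for example, read from the diagnose-claims output) are available as a flat dictionary, and CLAIMS_MAPPING, map_claims, and missing_fields are illustrative names, not part of any Qlik API. The mapping mirrors this article's example, where the email field had to be read from upn.

```python
# Hypothetical claims mapping mirroring this article's example:
# tenant field -> name of the claim read from the Azure AD token.
CLAIMS_MAPPING = {
    "sub": "sub",
    "name": "name",
    "email": "upn",  # this example needed upn instead of email
    "groups": "groups",
}


def map_claims(token_claims: dict, mapping: dict = CLAIMS_MAPPING) -> dict:
    """Resolve each tenant-side field from the raw IdP token claims."""
    return {field: token_claims.get(claim) for field, claim in mapping.items()}


def missing_fields(token_claims: dict, mapping: dict = CLAIMS_MAPPING) -> list:
    """List tenant-side fields whose source claim is absent or empty."""
    return [field for field, value in map_claims(token_claims, mapping).items() if not value]


claims = {"sub": "a1b2", "name": "Jane Doe", "upn": "jane@example.com", "groups": ["Sales"]}
print(map_claims(claims)["email"])  # jane@example.com
print(missing_fields({"sub": "a1b2"}))  # ['name', 'email', 'groups']
```

An empty result from missing_fields suggests that every mapped claim is present in the token. A non-empty result points to the textbox in the Claims mapping section that needs attention.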

Recap

While not hard, configuring Azure AD to work with Qlik Sense Enterprise SaaS is not trivial. Most of the legwork to make this authentication scheme work is on the Azure side. However, it's important to note that without making some small tweaks to the IdP configuration in Qlik Sense you may receive a failure or two during the validation process.

Addendum

For many of you, adding Azure AD means you potentially have a bunch of cleanup to do to remove legacy groups. Unfortunately, there is no way to do this in the UI, but there is an API endpoint for deleting groups. See Deleting guid group values from Qlik Sense Enterprise SaaS for a guide on how to delete groups from a Qlik Sense Enterprise SaaS tenant.

Related Content (VIDEO)

Qlik Cloud: Configure Azure Active Directory as an IdP

-

Qlik Replicate: Unable to resolve the conflict between the execute task command(...

[TASK_MANAGER ]E: Unable to resolve the conflict between the execute task command('CDC only') and the task settings('full load only') [1021616] (replicationtask.c:1680)

This error is an example of corrupted metadata for the task. The task settings saved in the SQLite files no longer match and will need a correction.

Resolution

There are two methods to refresh the task's metadata when the task is set to CDC only. Use Method One unless the corruption prevents the task from starting with it.

Method One

Use the Advanced Run Options to start the task from a timestamp.

- Go to the Run Option Drop-down menu

- Click Advanced Run Options

- Choose Tables are already loaded. Start processing changes from: Date and Time:

Method Two

Use the Advanced Run Options to completely reload the metadata of the tables. Note that this option reloads all of your tables.

- Go to the Run Option Drop-down menu

- Click Advanced Run Options

- Choose Metadata only: Recreate all tables and then stop

Environment

-

Qlik Replicate: W: The metadata for source table 'dbo.table' is different than t...

[SOURCE_CAPTURE ]W: The metadata for source table 'dbo.table' is different than the corresponding MS-CDC Change Table. The table will be suspended. (sqlserver_mscdc.c:805)

This warning can occur when the table has hidden columns. Qlik Replicate is not able to identify hidden columns in the SQL Server database, which causes a mismatch between Qlik Replicate's and SQL Server's versions of the metadata.

Resolution

Hidden columns need to be revealed for Qlik Replicate to include them in the metadata. Columns that are hidden will look like the following in your DDL.

[$ValidFromDTM] [datetime2](7) GENERATED ALWAYS AS ROW START HIDDEN NOT NULL,

[$ValidToDTM] [datetime2](7) GENERATED ALWAYS AS ROW END HIDDEN NOT NULL,

Example SQL queries to unhide the hidden columns (replace the table name and the column names, such as [SysStartTime] and [SysEndTime] below, with those of your own table):

ALTER TABLE <the table name> ALTER COLUMN [SysStartTime] DROP HIDDEN;

ALTER TABLE <the table name> ALTER COLUMN [SysEndTime] DROP HIDDEN;

Cause

Qlik Replicate is not able to identify the hidden columns that exist on the SQL Server database. This will cause a mismatch between Replicate and SQL Server's version of the metadata.
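When a table has several hidden period columns, the ALTER statements from the Resolution section can be generated from the table's DDL. This is a hypothetical helper, not a Qlik tool: the regular expression only recognizes bracketed column names declared with GENERATED ALWAYS AS ROW START or END followed by HIDDEN, as in the DDL example in this article. Review the generated statements before running them against SQL Server.

```python
import re

# Hypothetical helper: finds bracketed columns declared with
# GENERATED ALWAYS AS ROW START|END ... HIDDEN on a single DDL line.
HIDDEN_PERIOD_COLUMN = re.compile(
    r"\[([^\]]+)\][^,\n]*?GENERATED\s+ALWAYS\s+AS\s+ROW\s+(?:START|END)\s+HIDDEN",
    re.IGNORECASE,
)


def unhide_statements(table: str, ddl: str) -> list:
    """Build one ALTER TABLE ... DROP HIDDEN statement per hidden period column."""
    return [
        f"ALTER TABLE {table} ALTER COLUMN [{column}] DROP HIDDEN;"
        for column in HIDDEN_PERIOD_COLUMN.findall(ddl)
    ]


ddl = """
[$ValidFromDTM] [datetime2](7) GENERATED ALWAYS AS ROW START HIDDEN NOT NULL,
[$ValidToDTM] [datetime2](7) GENERATED ALWAYS AS ROW END HIDDEN NOT NULL,
"""
for statement in unhide_statements("dbo.table", ddl):
    print(statement)
```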

Internal Investigation ID(s)

QB-25470

Environment

- Qlik Replicate

- SQL Server and SQL-related source endpoints

-

Qlik Sense P&L Pivot table object is blank when adding to the sheet

When you add the P&L Pivot table to the sheet, the table is blank and the object container has no dimensions or measures. When the object is selected, the object properties do not offer a Data menu.

Resolution

- Repair the Qlik Sense installation using the Windows programs list (Repairing an installation)

- Following the repair, restart the Qlik Sense server.

Cause

The Qlik Sense installation files may be corrupted. Repairing Qlik Sense resolves the issue.

Environment

- All Qlik Sense Enterprise on Windows versions

-

Qlik Sense Management Console is not accessible after upgrade: Error Missing par...

After upgrading Qlik Sense Enterprise on Windows, the Qlik Sense Management Console (QMC) may fail to load. The following error is displayed:

InitializationError: Initialization of the Qlik Management Console failed for the following reason: Missing parameter value(s)

Resolution

- Remove the installed Qlik Sense patch.

- Repair the Qlik Sense program in the Windows program list.

- Confirm the issue is fixed after repairing Qlik Sense, and install the Qlik Sense patch again.

Cause

The Qlik Sense version upgrade or patch update did not complete as expected.

Related Content

Installing and uninstalling Qlik Sense Patches

Environment

- All Qlik Sense Enterprise on Windows versions

-

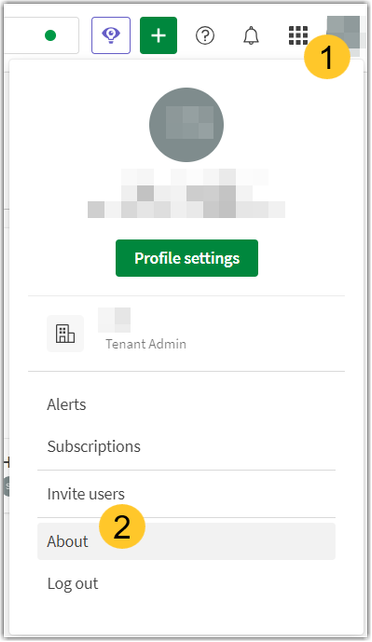

Find your Qlik Cloud Subscription ID and Tenant Hostname and ID

This article shows you how to locate your Qlik Cloud license number (Subscription ID) as well as your Tenant Hostname, your Tenant ID, and your Recovery Address.

Only the Tenant Admin can see the Qlik Cloud Subscription ID and Tenant Hostname / ID.

If you are looking to change your Tenant Alias or Display name, see Assigning a hostname Alias to a Qlik Cloud Tenant and changing the Display Name.

- Log in to your Qlik Cloud Account and hover over your Profile Icon

- Click About

- This will show your:

- Tenant hostname

- Tenant ID

- Subscription ID

- Recovery address

Environment

Related

-

Qlik Talend Integration: How to Delete Elasticsearch Logs Automatically?

Elasticsearch logs are generated in the Logserver/elasticsearch-1.5.2/log directory. If the log files are not moved or deleted, disk space can fill up. Can Elasticsearch log files be purged automatically?

Resolution

Update the Elasticsearch log configuration and use the maxBackupIndex option to set how many backup files are kept before the oldest one is erased.
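To see what maxBackupIndex bounds, the rotation can be simulated in memory. This sketch assumes the rollingFile appender type used by the Log Server's Elasticsearch (1.5.2 and 2.4.0 in this article) behaves like log4j 1.x's RollingFileAppender: the oldest backup is deleted, the remaining backups are renamed up by one, and the active file becomes .1. The function names and the cluster.log file name are hypothetical, and no files on disk are touched. With maxBackupIndex = N there are never more than N + 1 files, so disk usage stays at roughly (N + 1) × maxFileSize. For example, maxBackupIndex 4 with maxFileSize 200KB gives 5 × 200KB = 1000KB.

```python
# Simulation of log4j 1.x RollingFileAppender-style rotation (assumed to be what
# the rollingFile type maps to). Files are modeled as a dict: name -> content.


def roll_over(logs: dict, base: str, max_backup_index: int) -> None:
    """Apply one size-triggered rollover to the in-memory file set."""
    if max_backup_index < 1:
        logs[base] = ""  # no backups kept: the active file simply starts over
        return
    logs.pop(f"{base}.{max_backup_index}", None)  # the oldest backup is erased
    for i in range(max_backup_index - 1, 0, -1):  # base.i -> base.(i+1)
        if f"{base}.{i}" in logs:
            logs[f"{base}.{i + 1}"] = logs.pop(f"{base}.{i}")
    logs[f"{base}.1"] = logs.pop(base, "")  # active file becomes the newest backup
    logs[base] = ""  # a fresh active file is started


def simulate(chunks: list, base: str = "cluster.log", max_backup_index: int = 4) -> dict:
    """Write each chunk as one full file's worth of log, rolling over in between."""
    logs = {base: ""}
    for n, chunk in enumerate(chunks):
        if n:  # the previous chunk reached maxFileSize
            roll_over(logs, base, max_backup_index)
        logs[base] = chunk
    return logs


# Seven files' worth of logs with maxBackupIndex 4: chunk0 and chunk1 are erased.
result = simulate([f"chunk{i}" for i in range(7)])
print(sorted(result.items()))
```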

The Elasticsearch logs configuration can be found in Logserver/elasticsearch-1.5.2/config/logging.yml.

The default configuration is:

file:
  type: dailyRollingFile
  file: ${path.logs}/${cluster.name}.log
  datePattern: "'.'yyyy-MM-dd"
  layout:
    type: pattern
    conversionPattern: "[%d{ISO8601}][%-5p][%-25c] %m%n"

With this configuration, a new file is created every day to hold the previous day's log, and old files are never removed. As a result, the number of log files keeps growing and can eventually fill the disk.

To restrict the number of backup files, set maxBackupIndex:

- Stop these Talend services:

  - Talend Log Server search engine

  - Talend Log Server analytics and visualization platform

- Go to the <Talend_logserv_installation>elasticsearch-2.4.0\config\ folder. The Elasticsearch version in the folder name depends on your Log Server installation.

- Open the logging.yml file and modify the file appender as follows: change the type to rollingFile, remove datePattern, and add maxFileSize and maxBackupIndex:

file:
  type: rollingFile
  file: ${path.logs}/${cluster.name}.log
  maxFileSize: 200KB
  maxBackupIndex: 4
  layout:
    type: pattern
    conversionPattern: "[%d{ISO8601}][%-5p][%-25c] %m%n"

- Save the file and restart the services.

Related Content:

Qlik Talend Product: Reducing the logging threshold for Elasticsearch in Talend Log Server

Environment

-

-

Qlik Talend API: How to call Talend API to do Plan Execution

To manage and browse executions of your Tasks, Plans and Promotions, please use the correct objects in the processing entity.

According to our API documentation at https://api.talend.com/apis/processing/2021-03/ (PlanExecutable section), the step identifier (stepId) must be the step execution ID (stepExecutionID).

For example:

{
  "executable": "b91cf8b2-5dd1-4b18-915b-4c447cee5267",
  "executionPlanId": "0798b8d1-0e12-472f-be02-a0f04e792daa",
  "rerunOnlyFailedTasks": true,
  "stepId": "09043c9f-02d0-41f6-b3cb-0ea53ffde377"
}

If you put the step ID from the plan definition in the stepId field, the API call to execute the plan is likely to fail with the following exception:

"Validation do not allow processing entity"

The solution is to retrieve all steps of the plan execution (ordered by designed execution, steps only, without error handlers) to obtain the stepExecutionID. Issue this API request:

{{apiUrl}}/processing/executions/plans/:planExecutionId/steps
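The flow can be sketched in Python. This sketch only builds the steps URL and the POST body and does not call the API. Its assumptions: api_url stands for the {{apiUrl}} placeholder, the steps endpoint is called with GET, and each StepExecution in its response carries the execution ID in a field named executionId. The article spells that field executionID, so check the exact casing in the API reference. The function names are hypothetical.

```python
import json

# Hypothetical helpers; api_url stands for the {{apiUrl}} placeholder above.


def steps_url(api_url: str, plan_execution_id: str) -> str:
    """Endpoint listing the steps of a plan execution (assumed to be called with GET)."""
    return f"{api_url.rstrip('/')}/processing/executions/plans/{plan_execution_id}/steps"


def rerun_body(executable: str, execution_plan_id: str, step_execution: dict,
               id_field: str = "executionId") -> str:
    """Body for POST /processing/executions/plans.

    stepId is filled from a StepExecution returned by the steps endpoint,
    never from the step ID of the plan definition. The id_field name is an
    assumption; check the StepExecution schema in the API reference.
    """
    return json.dumps({
        "executable": executable,
        "executionPlanId": execution_plan_id,
        "rerunOnlyFailedTasks": True,
        "stepId": step_execution[id_field],
    })


print(steps_url("https://api.example.com", "0798b8d1-0e12-472f-be02-a0f04e792daa"))
```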

In the successful response, select the executionID from the array of StepExecution objects and put it in the stepId field of the POST /processing/executions/plans call.

Environment

Various Talend products

-

Tabular Reporting events in the management console do not appear for all the use...

Issue reported

Tabular Reporting events in the management console are not shown for all users in the tabular reporting recipient list.

Environment

Resolution

When section access is used in a Qlik app, make sure all required recipients/users are added to the section access load script.

For example, the users in the recipient import file should ideally match the users entered in the section access load script of the app.

This generally allows users to view management console details such as 'Events', assuming those users also have the necessary 'view' permissions in the tenant where the app exists.
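Before running a report, recipients who are missing from section access can be spotted with a simple set comparison. This is a hypothetical sketch: it assumes you have already exported the recipient identifiers (for example, email addresses) and the section access user values as plain lists of strings. Comparing without regard to case or surrounding whitespace is a conservative choice for this sketch, not a statement of Qlik's matching rules.

```python
def recipients_without_access(recipients, section_access_users):
    """Return recipients missing from the section access user list.

    Comparison ignores case and surrounding whitespace; this is a
    conservative choice for the sketch, not a statement of Qlik's rules.
    """
    allowed = {user.strip().casefold() for user in section_access_users}
    return sorted(r for r in recipients if r.strip().casefold() not in allowed)


recipients = ["anna@example.com", "Ben@example.com", "carl@example.com"]
section_access = ["ANNA@EXAMPLE.COM", "ben@example.com"]
print(recipients_without_access(recipients, section_access))  # ['carl@example.com']
```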

Cause

If some of the recipients in the tabular reporting recipient list do not have access to the space/app, they are not considered during task execution because they fail the governance check.

That is, recipients/users who are not added to the section access load script can access neither the app nor its associated management console events.

This is expected behavior.

Related Content

- Tabular reporting and section access

- URL to Events in your management console: https://yourtenant.us.qlikcloud.com/console/events

- Reviewing system events

-

DataRobot Predictions from Either Path You Choose

In my recent Getting SaaSsy with DataRobot post, I documented how to use the DataRobot Analytics Connector from within your Qlik Sense applications. I know this will sound crazy, but what if you want to make predictions on data you aren't loading into your application? Maybe you are collecting input parameters in your application from end users to play what-if games. Maybe you will record the predictions, but you also want to take immediate action based on their values (i.e., prescriptive analytics). Well, those sound like perfect use cases for Qlik Application Automation.

It gets better, my friends. Whether you are using a dedicated on-premises DataRobot server or a dedicated tenant, or you are on the leading-edge path with DataRobot's shiny new AI Cloud Manager using Paxata, Qlik Application Automation has you covered, and so do I.

In this post, I will help you identify the right DataRobot Connector Block to use for your path, help you understand how to execute predictions, and help you understand what to do with the output from the predictions.

Choose your Path

You have already chosen your DataRobot path; now it's just a matter of choosing the correct block from the DataRobot Connector. You probably would have guessed from the elaborate way I described the DataRobot choices which Qlik Application Automation block goes with which environment. But to be sure ... If you have a dedicated DataRobot server or a dedicated tenant, you should use the List Predictions from Dedicated Prediction API block. If you are using the DataRobot AI Manager environment with Paxata, you should use the List Predictions block.

Oh no! What's that you are saying? You weren't told which path your organization chose, you were just given credentials and you just log in. Don't sweat it. I can help you with that. Just go to your Deployments within DataRobot, choose the Deployment you are going to execute predictions against, and choose Predictions, Prediction API and Real-time. DataRobot will provide all of the clues we need to choose the right block.

If the API URL contains app2.datarobot.com, like in the first image below, you are working with their AI Cloud and will need to use the List Predictions block. However, if you see a dedicated host in your API URL, such as qlik.orm.datarobot.com (second image), you will need to use the List Predictions from Dedicated Prediction API block.

There are some other clues above as well. Notice that in the first image the rest of the URL path contains api/v2/deployments, while in the second image it contains predApi/v1.0/deployments. It's basically DataRobot telling you which of their APIs you need to use.
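The same URL clue can be expressed as a small check. This is a hypothetical helper, not part of Qlik Application Automation or DataRobot. It assumes the real-time prediction URL shown in your deployment contains either /predApi/v1.0/deployments (dedicated) or /api/v2/deployments (AI Cloud), as described above. The example URLs use a placeholder deployment ID.

```python
from urllib.parse import urlparse


def choose_block(prediction_api_url: str) -> str:
    """Suggest a Qlik Application Automation DataRobot block from the API URL path."""
    path = urlparse(prediction_api_url).path
    if "/predApi/v1.0/deployments" in path:
        return "List Predictions from Dedicated Prediction API"
    if "/api/v2/deployments" in path:
        return "List Predictions"
    raise ValueError(f"Unrecognized DataRobot prediction URL: {prediction_api_url}")


print(choose_block("https://qlik.orm.datarobot.com/predApi/v1.0/deployments/<id>/predictions"))
```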

So how will that help you know for sure? One of the things many people seldom look at in Qlik Application Automation blocks is the Description. Simply drag either or both of the blocks onto your canvas and scroll all the way down in the right panel. If you look at the List Predictions from Dedicated Prediction API description, you will see the following, and notice it clearly indicates predApi/v1.0/deployments.

However, if you press the Show API endpoint link for the List Predictions block it will look like this. Both are dead giveaways as to the block/path you should choose.

Configuring your Connection

Regardless of which block you are using, you will first need to create a Connection for Qlik Application Automation to your DataRobot environment. If you have a Dedicated server your Connection details will look like this. Notice that you will need to copy the api_key value right from the DataRobot Deployment Details:

Your DataRobot AI Cloud connection will look similar, and again you would need to copy your api_key from the deployment details. My DataRobot AI Cloud is just a "trial", hence I chose that region, while my dedicated tenant (above) is in the US region. The biggest difference is in the domain: dedicated connections will be app.datarobot.com and AI Cloud connections will be app2.datarobot.com:

Choosing your Deployment

Once you test/save the connections we are ready to start making predictions. The List Predictions block is the easiest to set up so we will start with that one. Simply click the drop-down in the Deployment Id field, press "Do Lookup" and then choose the specific deployment model you are going to be making predictions against.

Then you simply provide the Input data you want to pass to the deployment to have predictions made for. More about the Prediction Data later, but for now notice that I've simply hard-coded a JSON string with field/value pairs:

The List Predictions from Dedicated Prediction API block requires a few more settings. The first is the Dedicated Prediction Url. Good thing I had you bring up your deployment details, because we will just copy it from there. Notice you do not need the https:// prefix or the /predApi... path, just the host name itself.
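Trimming the copied URL down to the host can be done with the standard library. This is a hypothetical helper: it assumes you paste the full real-time prediction URL from the deployment details, and that the block wants only the host portion, as described above.

```python
from urllib.parse import urlparse


def dedicated_prediction_url(prediction_api_url: str) -> str:
    """Keep only the host part of a full DataRobot prediction API URL."""
    host = urlparse(prediction_api_url).netloc
    if not host:
        raise ValueError(f"Expected an absolute https:// URL, got: {prediction_api_url}")
    return host


print(dedicated_prediction_url(
    "https://qlik.orm.datarobot.com/predApi/v1.0/deployments/<id>/predictions"
))  # qlik.orm.datarobot.com
```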

Next, we again simply click the drop-down for the Deployment ID, click Do Lookup, and then choose our desired deployment.

Next, we copy the DataRobot Key from our deployment details, and then we can insert our JSON block. Again, more about that later, so don't panic thinking I'm suggesting that you hand-code the values you want to predict. It's just to make this section easier to navigate. 😁

Qlik Application Automation provides 4 additional parameters that are part of the DataRobot API specification. You can define the Passthrough Columns, Passthrough Columns Set, turn on Prediction Warnings and set the Decimals Number format.

You can refer directly to the DataRobot API documentation for all of the details you wish. For instance, notice that I have the Prediction Warning Enabled set to "true." Getting warnings sounded like a good idea. But alas, I ended up with an error.

Well, it turns out that in order to utilize the Prediction Warning Enabled there is work that must be done on our Deployment within DataRobot.

I guess I could have saved myself the trouble had I read the documentation. Oh well, I simply changed my default back to false so that the prediction can run.

Choosing your Prediction Input Data

Above, I simply demonstrated the JSON format you need for your Prediction Data with hard-coded values. I've used my DataRobots and have predicted with a 99.99999999% confidence level that your goal in reading this isn't to hard-code 50+ input values each time you want a prediction. Instead, that data will come from somewhere else, which is perfectly OK. Maybe you will be pulling the values from some other system as part of a workflow: when event A triggers this automation, you will go do B and C and then assign the output from those steps to variables that you will use as the Prediction Data. That's a great plan ... simply choose your variables and use them where you need them in your Prediction Data. Notice I have already assigned the MasterPatientID variable and am in the process of choosing the race variable below.

I'm so sorry. You don't like to use variables, and you weren't doing A, B and C; you were actually firing a SQL query live based on input to your workflow, and you wanted to use the data from that query. That is brilliant: pulling the live data whenever the event you have chosen triggers the automation. You should write some posts. No problem, Qlik Application Automation will absolutely allow you to do that.

Or perhaps you are using a writeback solution, like Inphinity Forms, within a Qlik Sense application to capture input parameters and you wish to use those values. Do that.

Or perhaps you are ... You get the point. The Prediction Data simply needs to be a JSON block containing the field/value pairs. How you construct it, or read it from an S3 bucket, or pull it out of thin air doesn't matter. Which is the beauty of working with DataRobot within Qlik Application Automation.
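For example, rows returned by a SQL query can be turned into field/value records before they are handed to the block. This is a hypothetical sketch: it assumes the Prediction Data is accepted as a JSON array with one object per row to score. The column names reuse the MasterPatientID and race fields mentioned above purely for illustration.

```python
import json


def rows_to_prediction_data(columns: list, rows: list) -> str:
    """Serialize tabular rows (e.g. from a SQL query) as a JSON array of
    field/value records, one record per row to score."""
    records = []
    for row in rows:
        if len(row) != len(columns):
            raise ValueError(f"Row has {len(row)} values, expected {len(columns)}")
        records.append(dict(zip(columns, row)))
    return json.dumps(records)


print(rows_to_prediction_data(["MasterPatientID", "race"], [(1001, "Caucasian")]))
# [{"MasterPatientID": 1001, "race": "Caucasian"}]
```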

Working with your Results

Woohoo, you now have a block that will execute a deployment in your DataRobot environment, regardless of which kind, and we are now ready for those wonderful predictions. Perhaps the first thing you noticed about the List Predictions and List Predictions from Dedicated Prediction API blocks was that they start with List as opposed to Get. That's because you may be passing a single row of data as Prediction Data, or you could be passing many items in the JSON block. So these blocks are handled as lists, even if it is just a list of 1 prediction.

The DataRobot Connector for loading data into our applications simply returns the Prediction value, which is 0 in this case (the patient is not predicted to be readmitted). However, notice below that within Qlik Application Automation, either prediction block also returns the Prediction, along with a list of the Prediction values and the scores for each possible value. In my case, 1 (likely to be readmitted) was scored at 0.428973004, whereas 0, the Prediction, was scored at 0.571026996.

Who cares?

Well, maybe you do. As I started this post I mentioned that perhaps we want to take action(s) based on the predictions which might be why you are making the prediction in your Qlik Application Automation workflow instead of just making the prediction in a Qlik Sense Application. If we are writing a flow that is "prescriptive" we might want to check the values. Ooooh .49999999999 vs .500000000001. Maybe that will be Action A, just email someone. While .000000001 vs .999999999 tells us that it's safe to go ahead and take the really expensive Action Z. So we might want to set up a Conditional expression.
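The "check the values" idea could look like the following sketch. Everything in it is hypothetical. It assumes the prediction values arrive as a list of label/score objects, and both the 0.2 margin threshold and the action names are illustrative. In an automation, the same comparison would live in a Condition block.

```python
def choose_action(prediction_values: list, margin_threshold: float = 0.2) -> str:
    """Pick a hypothetical action from per-class scores.

    prediction_values is assumed to be a list like
    [{"label": 1, "value": 0.43}, {"label": 0, "value": 0.57}].
    A narrow margin between the top two scores means a cheap action;
    a wide margin justifies the expensive one.
    """
    scores = sorted((pv["value"] for pv in prediction_values), reverse=True)
    margin = scores[0] - scores[1] if len(scores) > 1 else scores[0]
    return "Action Z" if margin >= margin_threshold else "Action A"


print(choose_action([{"label": 1, "value": 0.49999999999}, {"label": 0, "value": 0.500000000001}]))  # Action A
print(choose_action([{"label": 1, "value": 0.000000001}, {"label": 0, "value": 0.999999999}]))  # Action Z
```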

Regardless of what we do with the values, Qlik Application Automation allows you to simply choose the values right from the block, just like it allowed you to choose Variables or data from another source.

What about Explanations?

If you don't already know me I will bring you up to speed quickly. I have very defined boundaries and am really particular about how things are worded. For example, take the phrase Data Science. Well, Science is explainable. Therefore, if something isn't explainable it isn't science. And if your predictions aren't explainable, then that isn't Data Science, it's just Data. One of the key reasons you are likely using DataRobot is the fact that it can so wonderfully return explanations for its predictions.

The Prediction of 0 above is nice. But knowing what factors led to the prediction may be just as valuable when helping us choose our prescriptive actions. Well, my friends, Qlik Application Automation has you covered for that scenario as well. In fact, you can see from the following image the block is literally raising its hand and begging you to choose it. List Prediction Explanation from Dedicated Prediction API will give you not only the Prediction, and the Prediction values but it will also return the explanations to you.

Wait, something must be wrong. I see a qualitativeStrength of +++, but the second is --. What do those mean? Oh yeah, now I remember ... Qlik Application Automation is just calling the provided DataRobot APIs, so I might as well check the documentation from DataRobot to get a full and complete understanding of the input parameters I can choose for the block and of the output values. Sure enough, it's covered.
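If you want to branch on explanations in a workflow, the qualitativeStrength strings can be turned into numbers. This sketch assumes that the number of + or - signs reflects how strongly a feature pushes the prediction up or down. It deliberately rejects any other form, so check the DataRobot reference linked below for the full set of values and decide how to treat them. The function name is hypothetical.

```python
def strength_to_score(qualitative_strength: str) -> int:
    """Turn a run of '+' or '-' characters into a signed integer (e.g. '+++' -> 3)."""
    s = qualitative_strength.strip()
    if s and set(s) == {"+"}:
        return len(s)
    if s and set(s) == {"-"}:
        return -len(s)
    # Anything else is left for the caller to handle explicitly.
    raise ValueError(f"Unsupported qualitativeStrength value: {qualitative_strength!r}")


print(strength_to_score("+++"), strength_to_score("--"))  # 3 -2
```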

https://docs.datarobot.com/en/docs/api/reference/predapi/pred-ref/dep-predex.html

Bonus Block

I see you out there on the leading edge doing Time Series Predictions in DataRobot. Not an issue, Qlik Application Automation has you covered with a block as well. Simply choose the List Time Series Predictions from Dedicated Prediction API and you will be good to go.

The initial inputs needed are already covered above. However, there are a few additional parameters you will need to input as well.

Of course, DataRobot has you covered with complete documentation at https://docs.datarobot.com/en/docs/api/reference/predapi/pred-ref/time-pred.html

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

Related Content

Getting SaaSsy with Data Robot

Lions and Tigers and Reading and Writing Oh My

Qlik Cloud: The authentication token associated with auto Provisioning is about ...

The Qlik Cloud Management Console displays one of the following warning or error messages, even though you have stopped using SCIM:

The authentication token associated with auto Provisioning is about to expire

Or

The authentication token associated with auto Provisioning has expired

Resolution

Delete the token that was created for SCIM auto-provisioning with Azure.

- Generate a developer API key in your SaaS platform from the profile settings, ensuring that API keys are enabled for the tenant.

- Using a tool like Postman or any other HTTP client, execute the following curl command to retrieve the API key ID associated with SCIM provisioning:

curl "https://<tenanthostname>/api/v1/api-keys?subType=externalClient" \
  -H "Authorization: Bearer <dev-api-key>"

- Replace <tenanthostname> with your actual tenant hostname and <dev-api-key> with your generated developer API key. Execute the command in Postman or a similar tool, and make sure to include the API key in the header for authorization.

Once you have obtained the key ID from the output, copy it for later use in the deletion process.

- To delete the API key associated with SCIM provisioning, execute the following curl command:

curl "https://<tenanthostname>/api/v1/api-keys/<keyID>" \
  -X DELETE \
  -H "Authorization: Bearer <dev-api-key>"

- Again, replace <tenanthostname> with your actual tenant hostname, <keyID> with the key ID you obtained in step 2, and <dev-api-key> with your developer API key. Execute this command in Postman or a similar tool, and make sure the API key is included in the header for authorization.

By following these steps, you can successfully delete the token created for SCIM Auto-provisioning with Azure.
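The same two calls can be scripted with Python's standard library. This is a minimal sketch that mirrors the two curl commands above. The helper names are hypothetical, and key_ids assumes the list response wraps its items in a "data" array with an "id" on each item, so confirm this against your tenant's actual response before relying on it. The functions only build requests. Sending them is left as commented usage so you can review the key IDs before deleting anything.

```python
import json
import urllib.request

# Hypothetical helpers mirroring the two curl commands above. They only build
# requests; pass them to urllib.request.urlopen() to actually send them.


def list_scim_keys_request(tenant: str, dev_api_key: str) -> urllib.request.Request:
    url = f"https://{tenant}/api/v1/api-keys?subType=externalClient"
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {dev_api_key}"})


def delete_key_request(tenant: str, key_id: str, dev_api_key: str) -> urllib.request.Request:
    url = f"https://{tenant}/api/v1/api-keys/{key_id}"
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {dev_api_key}"}, method="DELETE"
    )


def key_ids(response_body: str) -> list:
    """Extract key IDs, assuming the list response wraps items in a "data" array."""
    return [item["id"] for item in json.loads(response_body).get("data", [])]


# Example (sends real requests, so review the IDs before deleting anything):
# with urllib.request.urlopen(list_scim_keys_request(host, token)) as resp:
#     print(key_ids(resp.read().decode("utf-8")))
# urllib.request.urlopen(delete_key_request(host, chosen_key_id, token)).close()
```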

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

Cause

The token was not deleted when the SCIM Auto-Provisioning was disabled or completely removed.

Environment

-

The Qlik Cloud Azure Storage connector only supports MEMBER User Type Account

Using the GUEST user type when creating an Azure storage account in the Azure portal leads to an error after successful authentication from Qlik.

The remote server returned an error: (400) Bad Request.

Resolution

Use a MEMBER account. Authentication with a GUEST user type is not supported for connecting from Qlik Cloud to Azure.

Related Content

Properties of a Microsoft Entra B2B collaboration user

Permission differences between member users and guest users

Configuring Azure Blob Storage in Qlik Cloud

Environment

- Qlik Cloud

- Azure Storage Connector

-

Qlik Sense QRS API using Xrfkey header in PowerShell


Qlik Sense Repository Service API (QRS API) contains all data and configuration information for a Qlik Sense site. The data is normally added and updated using the Qlik Management Console (QMC) or a Qlik Sense client, but it is also possible to communicate directly with the QRS using its API. This enables the automation of a range of tasks, for example:

- Start tasks from an external scheduling tool

- Change license configurations

- Extract data about the system

Using Xrfkey header

A common vulnerability in web clients is cross-site request forgery (XSRF), which lets an attacker impersonate a user when accessing a system. The Xrfkey prevents this: each QRS request carries the same 16-character key both as the xrfkey parameter in the URL and in the X-Qlik-Xrfkey header. Without the Xrfkey, the server sends back the message: XSRF prevention check failed. Possible XSRF discovered.
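In Python, a fresh key and the matching URL and headers can be produced as follows. This is a minimal sketch, assuming the rule shown in the PowerShell examples in this article: the same 16-character alphanumeric value goes into the xrfkey query parameter and the X-Qlik-Xrfkey header. The helper names are hypothetical, and the helper does not handle paths that already carry query parameters.

```python
import secrets
import string
from urllib.parse import urlencode

ALPHANUMERIC = string.ascii_letters + string.digits


def new_xrfkey(length: int = 16) -> str:
    """Random alphanumeric key; the QRS expects 16 characters."""
    return "".join(secrets.choice(ALPHANUMERIC) for _ in range(length))


def qrs_url_and_headers(base_url: str, path: str, xrfkey: str):
    """Return a QRS URL and headers carrying the same xrfkey in both places."""
    url = f"{base_url.rstrip('/')}/qrs/{path.lstrip('/')}?{urlencode({'xrfkey': xrfkey})}"
    return url, {"X-Qlik-Xrfkey": xrfkey}


url, headers = qrs_url_and_headers("https://qlikserver1.domain.local", "about", new_xrfkey())
print(url)
print(headers)
```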

Environments:

Note: This example relates to token-based licenses. If you need to configure Professional and Analyzer licenses, you might need to use the following API calls:

- /qrs/license/professionalaccesstype/full

- /qrs/license/analyzeraccesstype/full

Furthermore, if you combine this with QlikCli to monitor and, more specifically, remove users, the following Community article might be useful: Deallocation of Qlik Sense License

Resolution:

This procedure has been tested in a range of Qlik Sense Enterprise on Windows versions.

- PowerShell 3.0 or higher (installed by default in Windows 8 / Windows Server 2012 and later)

- Make sure the Qlik Repository service is up and running and port 4242 is open on the target server

Method 1: Authenticating through Qlik Proxy Service

- Go to PowerShell ISE and paste the following script

- In this example, we are sending a GET request with the Xrfkey 12345678qwertyui (in both the header and the URL) to the /about endpoint. For more details on all endpoints, please refer to Connecting to the QRS API

$hdrs = @{}
$hdrs.Add("X-Qlik-xrfkey","12345678qwertyui")
$url = "https://qlikserver1.domain.local/qrs/about?xrfkey=12345678qwertyui"
Invoke-RestMethod -Uri $url -Method Get -Headers $hdrs -UseDefaultCredentials

Method 2: Use certificate and send direct request to Repository API

- Open Qlik Management Console and export the certificate. Please refer to Export client certificate and root certificate to make API calls with Postman for procedure.

- Make sure that port 4242 is open between the machine making the API call and the Qlik Sense server.

- Import the certificate on the machine you will use to make API calls. It must be imported into your user's personal certificate store (for example, using MMC). The following PowerShell script automatically fetches the QlikClient certificate from the current user's personal certificate store. You may need to modify the script if the store contains QlikClient certificates imported from different Qlik Sense servers.

- Paste the below script in PowerShell ISE:

$hdrs = @{}
$hdrs.Add("X-Qlik-xrfkey","12345678qwertyui")
$hdrs.Add("X-Qlik-User","UserDirectory=DOMAIN;UserId=Administrator")
$cert = Get-ChildItem -Path "Cert:\CurrentUser\My" | Where-Object {$_.Subject -like '*QlikClient*'}
$url = "https://qlikserver1.domain.local:4242/qrs/about?xrfkey=12345678qwertyui"
Invoke-RestMethod -Uri $url -Method Get -Headers $hdrs -Certificate $cert

Run the script.

A possible response from either of the two scripts above may look like this (note that PowerShell automatically converts the JSON string to a PSCustomObject):

buildVersion      : 23.11.2.0
buildDate         : 9/20/2013 10:09:00 AM
databaseProvider  : Devart.Data.PostgreSql
nodeType          : 1
sharedPersistence : True
requiresBootstrap : False
singleNodeOnly    : False
schemaPath        : About
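For scripts that do not run in PowerShell, Method 2 can be sketched with Python's standard library. This sketch makes several assumptions. It assumes the certificates were exported from the QMC in PEM format as client.pem, client_key.pem, and root.pem. It assumes the server name you connect to matches the name on the Qlik Sense server certificate, otherwise TLS hostname verification fails. Finally, the helper names are hypothetical and the user values are the same placeholders as in the PowerShell script. The network call is left as commented usage.

```python
import ssl
import urllib.request


def qrs_about_request(server: str, xrfkey: str, user_directory: str,
                      user_id: str) -> urllib.request.Request:
    """GET /qrs/about on port 4242 with the xrfkey in the URL and header."""
    url = f"https://{server}:4242/qrs/about?xrfkey={xrfkey}"
    headers = {
        "X-Qlik-Xrfkey": xrfkey,
        "X-Qlik-User": f"UserDirectory={user_directory};UserId={user_id}",
    }
    return urllib.request.Request(url, headers=headers)


def qrs_tls_context(client_pem: str, client_key_pem: str, root_pem: str) -> ssl.SSLContext:
    """TLS context that trusts the Qlik root CA and presents the client certificate."""
    context = ssl.create_default_context(cafile=root_pem)
    context.load_cert_chain(certfile=client_pem, keyfile=client_key_pem)
    return context


# Example (needs the exported PEM files and network access to port 4242):
# ctx = qrs_tls_context("client.pem", "client_key.pem", "root.pem")
# req = qrs_about_request("qlikserver1.domain.local", "12345678qwertyui", "DOMAIN", "Administrator")
# with urllib.request.urlopen(req, context=ctx) as resp:
#     print(resp.read().decode("utf-8"))
```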

Related and advanced Content:

If there are several certificates from different Qlik Sense servers, they cannot be fetched by subject. Several certificates will have the subject QlikClient, so the script returns an array of certificates instead of a single certificate and fails. In that case, fetch the certificate by thumbprint. This requires more PowerShell knowledge, but an example can be found here: How to find certificates by thumbprint or name with powershell