Unlock a world of possibilities! Login now and discover the exclusive benefits awaiting you.

- Qlik Community

- :

- All Forums

- :

- GeoAnalytics

- :

- Dissolve with Custom Polygons

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dissolve with Custom Polygons

Hi all,

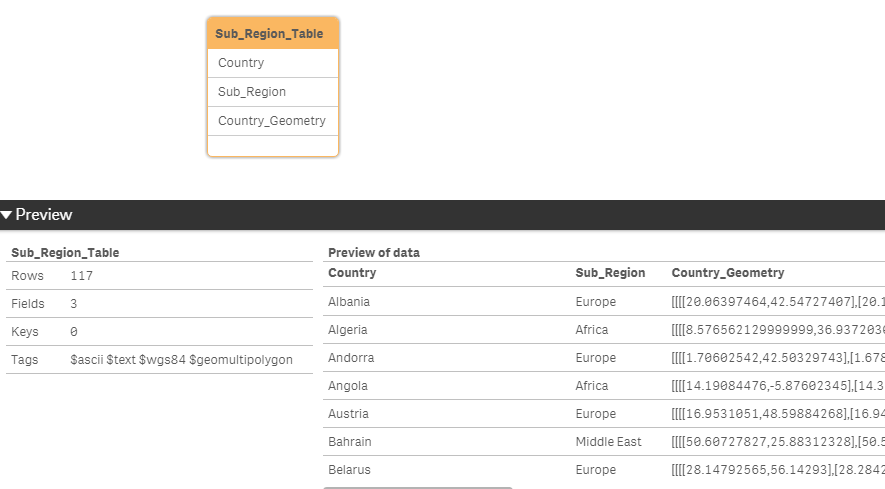

I'm trying to use the Dissolve function with custom polygons. I am trying to dissolve the Country_Geometry to create a Sub_Region_Geometry.

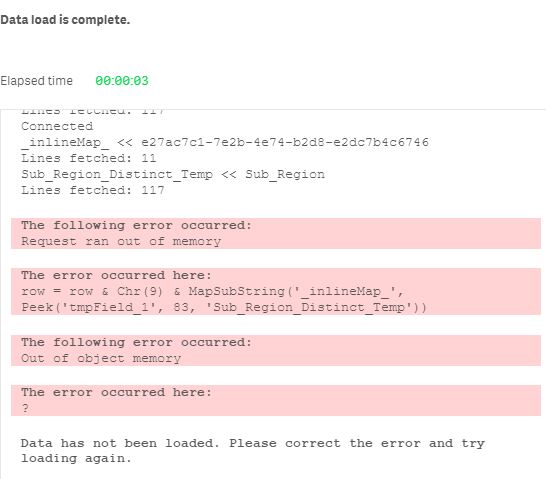

I am using the demo server, but the script is failing before it makes the SQL call.

The input table is fairly simple with 3 columns.

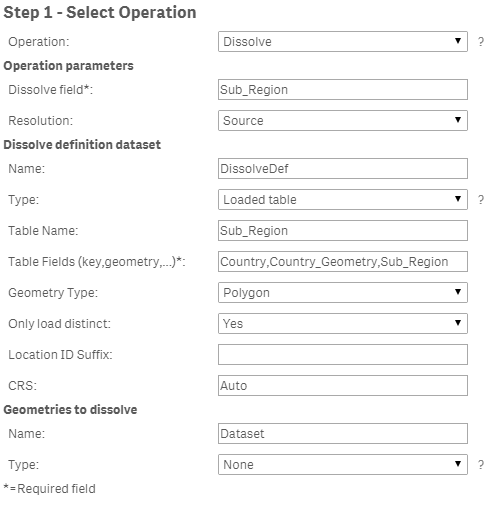

Below are the values I have entered.

This is the error message that pops up.

If I reduce the number of rows I send, the load does complete successfully. I have also tried a two dataset approach

I have seen examples of how to use the Dissolve operation but the all seem to use Location Named Area.

Any ideas where I'm going wrong?

Many thanks

- « Previous Replies

-

- 1

- 2

- Next Replies »

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Hi Ruben,

Good spot! Apologies, the screenshots were taken at different times. The table name is in fact Sub_Region_Table and the field is Sub_Region. This is what I have used in the Geo Connector dialogue.

I've added the code below. If I limit the load the a few countries, the load is successful and the dissolving takes place. Unfortunately the upload functionality on community is complaining about the QVD but I'm happy to share this using another method if any one wants to try.

Sub_Region_Table:

LOAD

Country,

Sub_Region,

Country_Geometry

FROM [lib://Data/Sub_Region_Table.qvd]

(qvd)

//Where Match(Country,'Albania','Andorra','Bahrain')

;

LIB CONNECT TO 'GA';

/* Generated by GeoAnalytics for operation Dissolve ---------------------- */

[_inlineMap_]:

mapping LOAD * inline [

_char_, _utf_

"'", '\u0027'

'"', '\u0022'

"[", '\u005b'

"/", '\u002f'

"*", '\u002a'

";", '\u003b'

"}", '\u007d'

"{", '\u007b'

"`", '\u0060'

"´", '\u00b4'

" ", '\u0009'

];

IF FieldNumber('Country', 'Sub_Region_Table') = 0 THEN

call InvalidInlineData('The field Country in Sub_Region_Table is not available');

END IF

IF FieldNumber('Country_Geometry', 'Sub_Region_Table') = 0 THEN

call InvalidInlineData('The field Country_Geometry in Sub_Region_Table is not available');

END IF

IF FieldNumber('Sub_Region', 'Sub_Region_Table') = 0 THEN

call InvalidInlineData('The field Sub_Region in Sub_Region_Table is not available');

END IF

[Sub_Region_Table_Distinct_Temp]:

LOAD distinct [Country] AS tmpField_0, [Country_Geometry] AS tmpField_1, [Sub_Region] AS tmpField_2 resident Sub_Region_Table;

Let [DissolveDefInlineTable] = 'Country' & Chr(9) & 'Country_Geometry' & Chr(9) & 'Sub_Region';

Let numRows = NoOfRows('Sub_Region_Table_Distinct_Temp');

Let chunkSize = 1000;

Let chunks = numRows/chunkSize;

For n = 0 to chunks

Let chunkText = '';

Let chunk = n*chunkSize;

For i = 0 To chunkSize-1

Let row = '';

Let rowNr = chunk+i;

Exit for when rowNr >= numRows;

For Each f In 'tmpField_0', 'tmpField_1', 'tmpField_2'

row = row & Chr(9) & MapSubString('_inlineMap_', Peek('$(f)', $(rowNr), 'Sub_Region_Table_Distinct_Temp'));

Next

chunkText = chunkText & Chr(10) & Mid('$(row)', 2);

Next

[DissolveDefInlineTable] = [DissolveDefInlineTable] & chunkText;

Next

chunkText=''

DROP tables [Sub_Region_Table_Distinct_Temp];

[DissolveTable]:

SQL SELECT [Sub_Region], [Sub_Region_Geometry] FROM Dissolve(dissolveField='Sub_Region', resolution='source', dissolveDef='DissolveDef')

DATASOURCE DissolveDef INLINE tableName='Sub_Region_Table', tableFields='Country,Country_Geometry,Sub_Region', geometryType='POLYGON', loadDistinct='YES', suffix='', crs='Auto' {$(DissolveDefInlineTable)}

;

tag field [Sub_Region] with '$primarykey';

tag field [Sub_Region_Geometry] with '$geopolygon';

tag field [Sub_Region] with '$geoname';

tag field [Sub_Region_Geometry] with '$relates_Sub_Region';

tag field [Sub_Region] with '$relates_Sub_Region_Geometry';

[DissolveDefInlineTable] = '';

/* End GeoAnalytics operation Dissolve ----------------------------------- */

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Have you checked if there is really a peak in RAM usage?

I would say to use 'auto' instead of 'source' but seems you get the error before that.

There could be a workaround using bucles to merging polygons in different steps but maybe it's better to wait in case other user can give a better solution.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Yes, I think the data length is what is causing the message. My shapes are of a higher resolution then the GA ones.

There is a small spike in both the CPU and RAM but its nowhere near maxing out.

Yes the error happens before the query is sent to the GA server.

The phased approach could work but not sure if it would start to fail as they polygons grew.

Thanks for taking your time to look at this !

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Interestingly, I get a similar error message whilst peaking up to tow 83.

The error occurred here:

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Well, at least is consistent on that. For simplifly an iteration can work reducing datapoints in groups of countries, concatenating them all in one simplified_countries table, and use this table in dissolve.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Dissolve shouldn't be that memory intensive, any chance to see the indata?

Simplify and Dissolve is almost the same unless Resolution is set to Source.

You can try increase memory for the GA server by opening the Java tab in the Configure

Service desktop app. Changes comes into effect after restarting the service

under the General tab.

Thanks,

Patric

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

OK it was down to the source data, the "Russia" polygon has a length of 211,624 characters. If I do a simplify on just the Russian row I get the same out of memory error.

I will reduce the resolution of the shape in the load script and it should work fine.

Thanks for taking a look

- « Previous Replies

-

- 1

- 2

- Next Replies »