
Recent Documents

-

Stitch Migration to Qlik Cloud

This Techspert Talks session will address:

- Understanding the schemas

- Demonstration of the migration process

- Best practices and tips for a smooth transition

Chapters:

- 01:05 - How to learn about Qlik Talend Cloud

- 01:52 - Why migrate to Qlik Talend Cloud

- 02:42 - Feature difference highlights

- 03:08 - Using the Migration Inventory Tool

- 06:58 - First look at Qlik Talend Cloud Data Integration

- 07:33 - Creating the first Project and Space

- 08:21 - Creating the Klaviyo Source connection

- 09:54 - Creating the Target connection

- 10:52 - Choosing the task settings

- 12:59 - Viewing Pipeline tasks in action

- 13:55 - Target table differences

- 15:19 - Creating the MySQL source

- 19:24 - Q&A: Will QTC features be added to Stitch?

- 20:04 - Q&A: What is the QTC warehouse architecture?

- 21:45 - Q&A: How to build incremental loads?

- 22:28 - Q&A: Can QTC load data from Marketo?

- 23:37 - Q&A: Why are the schemas different?

- 24:26 - Q&A: Where is the list of data sources?

- 25:23 - Q&A: Where can I get a test account to try it?

Resources:

- About Qlik Talend Cloud subscriptions

- Technical feature comparison

- Performing an inventory analysis

- Qlik Ideation

- Schema Differences in data loading

-

Installing Drivers for your Qlik Data Gateway

Purpose

The purpose of this post is to help you install the database drivers necessary to allow your Qlik Data Gateway to communicate with your company's servers once you have completed the Qlik Data Gateway installation itself.

Background

If you are anything like me, perhaps you panicked a bit at the thought of installing the Qlik Data Gateway in a Linux environment. I have a lot of experience with .EXE installations in Windows environments. You know: "Next – Next – Next – Finish." But an .RPM file? I had never even seen that extension before. If you were a Linux connoisseur beforehand, you probably guessed that my image for this post is an homage to the Fedora flavor of Linux. Otherwise, you just thought it was an advertisement for the new "Raiders of the Lost Data" movie.

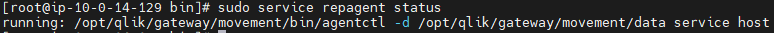

In any event, by now you have created your first Data Gateway, applied the registration key, completed the setup instructions and thankfully the command to check your Data Gateway service shows that it is running.

When you go back to the Data Gateway section of the Management Console and do a refresh, your eyes fill you with happiness because your brand spanking new Data Movement Gateway shows "Connected."

A lesser person would go celebrate right now. But you've decided to try and connect to a source before doing your happy dance. So, you create a new Data Integration project to the destination of your choice. While you will ultimately have many different data sources, let's imagine that you decide to start with a "SQL Server (Log Based)" connection, as your first source test.

You input the server connection details, but for security reasons your SQL Server doesn't use the standard port. Finally, you find information online that you should input your server IP followed by a comma and the port number. As an example, if your server's IP is 39.30.3.1 and your port is 12345, you would input "39.30.3.1,12345". Next, you input the user and password credentials. Your last step is to choose the database. Easy peasy, lemon squeezy. Right?

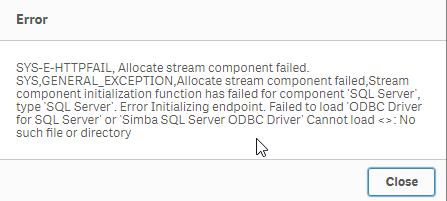

You press the "Load databases" button but suddenly a dialog comes up telling you that the Data Gateway can't connect because it can't find a SQL Server driver.

Driver Installation

Your heart starts beating quickly, but naturally, as a pro, you remain calm on the outside. Eventually you realize that whether on Windows or Linux, applications have always required drivers to communicate with servers. This is nothing new; we just got excited when we saw that "Connected" message and thought we were done. Upon going back to the setup guide, you realize that there is in fact a link labeled "Setting up Data Gateway – Data Movement source connections."

So, you go ahead and click the link, and it takes you to the page that lists the source connections.

Wow, so many sources, and so many additional links to click to ensure the required drivers are in place for the sources your company will need. All the documentation is there, but I know firsthand that it can get a bit overwhelming, especially if Linux isn't your native language, which is the reason for this post.

Obviously, each of you reading this works in an environment that may require different data source connections than the others. Thus, there is no way for me to predict and help with your exact configuration. However, odds are strong that most of you require at least one of SQL Server, Databricks, Snowflake, Postgres, or MySQL, various combinations of them, or perhaps all of them.

As tedious or imposing as it may be, I highly recommend you walk through the documentation for each data source you will need. But thanks to my buddy John Neal, I have attached a Linux shell script that can be executed to configure all 5 of those data sources for you. Given the many flavors, versions, and configurations of Linux, I can't ensure that it will work for everyone, but at least it is a start for those who may want to press an easy button, and for those who, like me, may be somewhat or brand new to Linux.

If you choose to take advantage of it, understand that it is only being offered as help and is not meant to replace the documentation. To utilize it, you will need to do the following. (Please note that in my examples I have changed to the root user. If you are logged in as a normal user account, you may need to use sudo, "super user do.")

- Copy the attached "repldrivers-el7.sh.txt" file to your Linux home directory where you placed the gateway .RPM file.

- Change the file name to simply be "repldrivers-el7.sh" so that it's clear that it is a shell script in case you or others see it in the future.

- Issue the following command from your prompt: "chmod 775 repldrivers-el7.sh" so that the file has the appropriate security allowing it to be executed.

- Issue the following command from your prompt: "./repldrivers-el7.sh"

- Issue the following command from your prompt: "cd /etc"

- Issue the following command from your prompt: "cat odbcinst.ini" to display the driver configuration file.

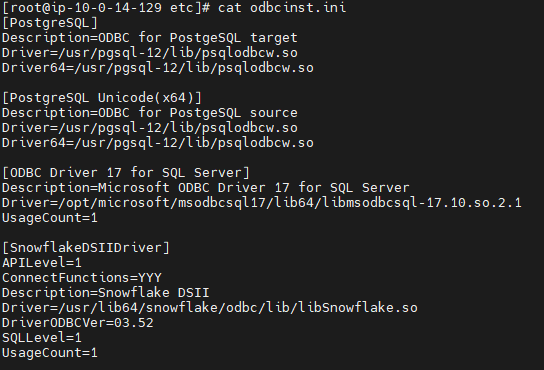

If all went well with the installation, your output should look similar to the image, which shows part of my file.
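If you would rather not eyeball the file, here is a minimal Python sketch that lists the drivers registered in odbcinst.ini. The sample text, driver names, and library paths are assumptions for illustration only; what the script actually writes on your machine will differ.

```python
import configparser

# Hypothetical sample of /etc/odbcinst.ini; your driver names and paths will differ.
SAMPLE_ODBCINST = """\
[ODBC Driver 18 for SQL Server]
Description=Microsoft ODBC Driver 18 for SQL Server
Driver=/opt/microsoft/msodbcsql18/lib64/libmsodbcsql-18.3.so.2.1
UsageCount=1

[MySQL ODBC 8.0 Unicode Driver]
Driver=/usr/lib64/libmyodbc8w.so
UsageCount=1
"""


def list_odbc_drivers(ini_text):
    """Return a {driver name: library path} mapping from odbcinst.ini text."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep key case exactly as written in the file
    parser.read_string(ini_text)
    return {
        section: parser[section].get("Driver", fallback="")
        for section in parser.sections()
        if section != "ODBC Drivers"  # skip the optional summary section
    }


if __name__ == "__main__":
    # To inspect the real file, pass open("/etc/odbcinst.ini").read() instead.
    for name, path in list_odbc_drivers(SAMPLE_ODBCINST).items():
        print(f"{name}: {path}")
```

An empty result for a source you need is a hint that its driver section never made it into the file.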

It's almost time to do our happy dance, but let's hold off until we test. In my starting example, I asked you to assume we wanted to test against a "SQL Server (Log Based)" connection. We left off because we got an error message saying we had no driver while trying to load the list of databases. I will try that again.

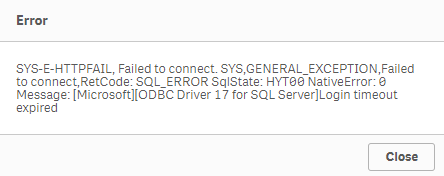

Oh no, the heart rate is going up again.

We have successfully installed the Qlik Data Gateway. We have successfully installed the required drivers. Yet, we are getting this new error message. Let's focus on our breathing and try to digest the situation. What could cause our attempt to connect to our data source to time out? I got it.

It's likely network security. We know what we want to talk to. We know the location. We know the credentials. But our networks aren't always wide open to do the talking. Resolving your connectivity/firewall issues may or may not be within your abilities, and if you are like me, you may need to seek the help of your IT/Networking team.

When I reached out to my friendly IT guru, here within Qlik, he was able to help me get everything in place so that my Linux server could speak with my database servers, including all of the needed ports.

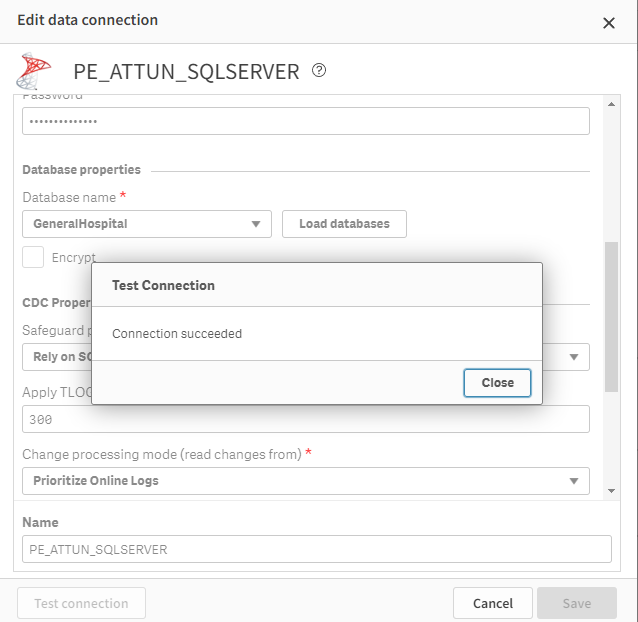

Once those changes were completed, I was able to test, and sure enough, my data connection succeeded.

Whether or not you do a happy dance, as I did, I hope that this post has helped you get to that sweet smell of success. After all, someone has to be known as the amazing person who got your Qlik Data Gateway going so that others in the Data Engineering team could create all of those lights-out Qlik Cloud Data Integration projects that would be feeding data in near real time to all of those wonderful analytics use cases. Hopefully, with the help of the documentation and this post, that person is you, my friends.

Challenge

One of the things I've long admired about the Qlik Community is its members' willingness to help each other through this Community site. If you are a Linux guru and are so inclined, I would love to see you share other versions of the shell script that I have started. Maybe your organization is using another flavor/version of Linux and you needed to make a few tweaks to my file. Maybe your organization needed Oracle added and you can tweak my file. Whatever the reason, I sure hope you will give back to the community by sharing all of those tweaks here. Who knows, your help might be what lets someone else do their happy dance. And we all know the world is a better place when more people do their happy dance.

Related Content

Qlik Data Gateway - Data Movement prerequisites and Limitations - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-prerequisites.htm

Setting up the Data Movement gateway - https://help.qlik.com/en-US/cloud-services/Subsystems/Hub/Content/Sense_Hub/Gateways/dm-gateway-setting-up.htm

PS - I created both of the images here using a generative AI solution called MidJourney. I hope they've added to the fun of this post.

-

SAP Connection to Qlik Talend Cloud Data Integration

This Techspert Talks session covers: Completing connection requirements Setting up SAP connection to Qlik Talend Cloud Data Integration SAP data bes...

-

Troubleshooting Qlik Data Gateway Direct Access

This Techspert Talks session covers: How Data Gateways for Direct Access works What to do if things go wrong Troubleshooting best practices Chap...

-

Requested endpoint could not be provisioned due to failure to acquire a load slo...

A reload fails with the following error:

Requested endpoint could not be provisioned due to failure to acquire a load slot: Your version of Direct Access gateway is no longer supported. Go to 'Data gateways' in the Management Console to download the latest version (DirectAccess-0001)

Resolution

Upgrade Qlik Data Gateway - Direct Access to a supported version.

If you are already on a supported version of Qlik Data Gateway, rerun the reload. Likely, the services were still initializing when the issue occurred.

Cause

This message can occur when the Qlik Data Gateway version is not supported.

It can also occur if the services are still starting when the request is sent, causing the version to be misreported. This is usually an isolated issue with very few reload failures.

Environment

- Data Gateway Direct Access (All versions).

-

Data Gateway Direct Access disconnections (0x80004005): The remote party closed ...

The data connection between Qlik Data Gateway - Direct Access and Qlik Cloud may occasionally fail due to network connectivity issues.

Commonly, the disconnects are short, and the data connection will recover automatically without the Qlik Sense app reload failing.

Longer connection interruptions may lead to a failed Qlik Sense app reload.

The connection failure can be seen in DirectAccess.log at the time of the failed connection, regardless of whether the app reload is successful:

[Service ] [ERROR] Connection failed

System.Net.WebSockets.WebSocketException (0x80004005): The remote party closed the WebSocket connection without completing the close handshake.

Resolution

Install Qlik Data Gateway - Direct Access 1.6.6 or later.

Configure Data Gateway following the recommendations documented in Qlik Help.

Qlik Data Gateway has an automatic reconnect mechanism that restores failed connections and allows the Qlik Sense app reload to continue without failing.

If the Qlik Sense app reload is not failing due to a data gateway connection failure, the "Connection failed" error can be ignored.

If the Qlik Sense app reload fails due to a longer data gateway connection failure, it will typically reload successfully on the next scheduled or manual reload.

For business-critical apps, it is possible to mitigate the impact of data gateway connection failures by implementing app-level reload resilience. This can be accomplished by using Qlik Application Automation for reloads with a retry mechanism, script-level retry for failed load statements, retriggering reload tasks from external tasks through the API, and so on.
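As an illustration of the retry idea, the following Python sketch wraps a reload trigger in a retry loop with exponential backoff. It is not a Qlik API: `trigger_reload` is a hypothetical callable that you would implement yourself (for example, around an API call that starts a reload and waits for the result), and the attempt count and delays are assumptions to tune.

```python
import time


def reload_with_retry(trigger_reload, max_attempts=3, base_delay=30.0, sleep=time.sleep):
    """Call trigger_reload() until it returns True or the attempts run out.

    trigger_reload is a hypothetical callable that starts a reload, waits for it
    to finish, and returns True on success. Returns the number of attempts used.
    """
    for attempt in range(1, max_attempts + 1):
        if trigger_reload():
            return attempt
        if attempt < max_attempts:
            # Exponential backoff (30s, 60s, ...) gives the gateway time to reconnect.
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError(f"reload failed after {max_attempts} attempts")


if __name__ == "__main__":
    outcomes = iter([False, False, True])  # simulate two failures, then success
    used = reload_with_retry(lambda: next(outcomes), sleep=lambda seconds: None)
    print(f"reload succeeded on attempt {used}")
```

Keeping the number of attempts small avoids piling repeated reloads onto a source system during a longer outage.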

Cause

A WebSocket connection is used between Qlik Data Gateway - Direct Access and Qlik Cloud. This persistent connection may fail if any network device in the routed path fails to keep the connection alive through the entire data load.

Data Gateway connects to Qlik Cloud through one or more private networks, the public Internet, and Qlik Cloud infrastructure. A low level of daily connection failures is not unusual in this complex connection path.

Related Content

Qlik Data Gateway - Direct Access. Reloads failing intermittently

Qlik Application Automation: How to automatically rerun a failed automation

Internal Investigation ID(s)

QB-25723

Environment

- Data Gateway Direct Access

-

Exploring Qlik Cloud Data Integration

This Techspert Talks session covers: What Qlik Cloud Data Integrations can do How it can be used Cloud Data Architecture Chapters: 01:10 - Dat...

-

Data Gateway - Direct Access. Initial validation for ODBC crashes

When working with Data Gateway - Direct Access, ODBC drivers can have unexpected behaviors that can lead to crashes.

Note: For more information about how to enable dump file creation and Isolation capability for Data Gateway - Direct Access, please review the links included in the "Related Content" at the end of this article.

One known cause of crashes is a malformed SQL query statement.

Resolution

After configuring the dump file generation and Process Isolation, dump files will be created under the folder configured during the setup.

Once a crash occurs, the dump file name will include the process ID associated with the reload:

dotnet.exe.XXXXX.dmp

where XXXXX is the process ID.

Using that process ID and the date and time when the file was created, it is possible to search the ODBC connector log for the matching ProcessId entry and get the reload ID.

Once the reload ID has been identified, there are two options to get the query:

1. From the QMC, get the reload script associated with the reload ID to verify the query that was triggered.

2. Enable the ODBC debug log level in configuration.properties, located under the C:\ProgramData\Qlik\Gateway\ folder:

ODBC_LOG_LEVEL=DEBUG

The change requires a restart of the DAG service.

This will record the queries in the odbc-connector logs. Search for the reload ID and the last "GetTableMetadata" entry recorded for the given reload. A sample of this entry in the logs would be:

2024-11-15 13:05:15,399 [ 11] [DEBUG] oracle ProcessId=10804 RequestId=bda86421-258b-4d42-868c-bd4108d97a51 ClientSessionId= ReloadId=6737b783fe0a7d476b7ebaf1 Message=gRPC - GetTableMetadata() call... SELECT *

FROM ResidentialData

If the query is executed from a third-party tool (outside of Qlik products) and doesn't work, the problem is a malformed SELECT statement.

If the issue is not related to a malformed SELECT query, collect the dump file and contact Qlik Support for further analysis.
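The lookup from dump file to reload ID can be sketched in Python as follows. This is only an illustration that assumes the log entries follow the format of the sample entry above (ProcessId=... followed later by ReloadId=...); the function and pattern names are hypothetical.

```python
import re

# Matches the ProcessId and ReloadId fields as they appear in the sample log entry.
ENTRY_RE = re.compile(r"ProcessId=(\d+)\b.*?\bReloadId=(\w+)")
# Matches dump file names of the form dotnet.exe.<process id>.dmp.
DUMP_RE = re.compile(r"dotnet\.exe\.(\d+)\.dmp$")


def reload_ids_for_dump(dump_name, log_lines):
    """Return the distinct ReloadId values logged by the process named in dump_name."""
    match = DUMP_RE.search(dump_name)
    if not match:
        raise ValueError(f"unexpected dump file name: {dump_name}")
    pid = match.group(1)
    found = []
    for line in log_lines:
        entry = ENTRY_RE.search(line)
        if entry and entry.group(1) == pid and entry.group(2) not in found:
            found.append(entry.group(2))
    return found


if __name__ == "__main__":
    sample = [
        "2024-11-15 13:05:15,399 [ 11] [DEBUG] oracle ProcessId=10804 "
        "RequestId=bda86421-258b-4d42-868c-bd4108d97a51 ClientSessionId= "
        "ReloadId=6737b783fe0a7d476b7ebaf1 Message=gRPC - GetTableMetadata() call... SELECT *",
    ]
    print(reload_ids_for_dump("dotnet.exe.10804.dmp", sample))
```

Because the operating system can reuse process IDs, the matches should still be narrowed to log lines near the creation time of the dump file.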

Cause

Malformed SQL query statement.

Related Content

Mitigating connector crashes during reload

How to collect dump files for the Qlik Data Gateway - Direct Access ODBC connector crashes.

Environment

- Data Gateway - Direct Access

-

Qlik Data Gateway - Direct Access: Reloads failing intermittently

Qlik Data Gateway (Direct Access) reloads will fail intermittently. Some of the errors that can be seen are:

Requested endpoint could not be provisioned due to failure to acquire a load slot: Command getReloadSlot error for reload <Reload ID>

Some errors that can be seen in the DirectAccessAgent log (usually located at C:\Program Files\Qlik\ConnectorAgent\data\logs):

[Service ] [ERROR] Connection failed

System.Net.WebSockets.WebSocketException (0x80004005): Unable to connect to the remote server

---> System.Net.Http.HttpRequestException: The SSL connection could not be established, see inner exception.

---> System.IO.IOException: Unable to read data from the transport connection: An existing connection was forcibly closed by the remote host..

---> System.Net.Sockets.SocketException (10054): An existing connection was forcibly closed by the remote host.

Environment

- Qlik Data Gateway - Direct Access (All versions)

Resolution

Make sure that both the tenant hostname (for example a12345bcdefghijklm.us.qlikcloud.com) and the tenant alias hostname (for example mytenantqlik.us.qlikcloud.com) are allowed on the machine hosting Data Gateway, and that HTTPS (port 443) and WSS traffic are not being blocked.

If this configuration has been verified and things are still failing, don't hesitate to get in touch with Qlik Support.
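A quick first check from the gateway machine can be sketched in Python as below. It only attempts a plain TCP connection on port 443, so a successful result does not prove that TLS or WSS traffic passes through an inspecting proxy; the function name and the timeout are assumptions.

```python
import socket


def check_hosts(hosts, port=443, timeout=5.0, connect=socket.create_connection):
    """Try a TCP connection to each host and return {host: "ok" or an error text}."""
    results = {}
    for host in hosts:
        try:
            conn = connect((host, port), timeout)
            conn.close()
            results[host] = "ok"
        except OSError as exc:  # covers DNS failures, timeouts, and refused connections
            results[host] = f"failed: {exc}"
    return results


# Example call, using the placeholder hostnames from this article
# (replace them with your own tenant and alias hostnames):
#   check_hosts(["a12345bcdefghijklm.us.qlikcloud.com", "mytenantqlik.us.qlikcloud.com"])
```

If one hostname reports "ok" and the other fails, the allowlist most likely covers only one of the two names.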

Cause

Traffic is blocked leading to intermittent failures.

Related Content

Outbound traffic - allowlisting domain names and IP addresses

-

How to collect dump files for the Qlik Data Gateway - Direct Access ODBC connect...

If the ODBC Connector service bundled with the Qlik Data Gateway - Direct Access crashes intermittently, then any active reloads through the connector during the crash may fail with different errors. In this case, it may become necessary to collect crash dump files to proceed further with the investigation.

An ODBC crash at the time of the issue can be verified by reviewing the DirectAccess log located at C:\Program Files\Qlik\ConnectorAgent\data\logs:

229 2023-07-26 21:00:59 [Manager ] [WARN ] ODBC process (1912) has exited (exit code: 0), going to restart it

Starting in Data Gateway - Direct Access version 1.7.1, all connector start/exit/restart events are recorded in connector_agent_[date].log

A PowerShell script provided by Qlik's development team is attached to the article. It can be used to create the necessary entries in the Registry. Note: It must be executed with admin rights, and you might need to change the execution policy for successful execution (Set-ExecutionPolicy RemoteSigned). To create the entries manually instead:

- Open the Windows Registry

- Go to the following path HKLM\SOFTWARE\Microsoft\Windows\Windows Error Reporting\LocalDumps

- In the LocalDumps folder, create new registry keys for both dotnet.exe and ConnectorAgent.exe

- In each of the folders, add:

- A String value, name it DumpFolder and indicate the folder path to store the dump files

- A DWORD, name it DumpCount and set it to 10

- A DWORD, name it DumpType and set it to 2

- Reboot the server for the registry setting changes to take effect
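As an alternative illustration (this is not the attached PowerShell script), the Python sketch below generates the text of a .reg file containing the entries described above. The folder C:\Dumps is a hypothetical example, and the function name is an assumption; review the output before importing it with admin rights.

```python
LOCAL_DUMPS = r"HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Windows\Windows Error Reporting\LocalDumps"


def build_reg_file(dump_folder, executables=("dotnet.exe", "ConnectorAgent.exe")):
    """Return .reg file text that creates the LocalDumps entries for each executable."""
    escaped = dump_folder.replace("\\", "\\\\")  # .reg string values need doubled backslashes
    lines = ["Windows Registry Editor Version 5.00", ""]
    for exe in executables:
        lines += [
            f"[{LOCAL_DUMPS}\\{exe}]",
            f'"DumpFolder"="{escaped}"',
            '"DumpCount"=dword:0000000a',  # keep up to 10 dump files
            '"DumpType"=dword:00000002',   # 2 = full dump
            "",
        ]
    return "\n".join(lines)


if __name__ == "__main__":
    # C:\Dumps is a hypothetical folder; use the path where dumps should be written.
    print(build_reg_file(r"C:\Dumps"))
```

The generated text can be saved with a .reg extension and imported on the gateway server, followed by the reboot described above.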

Once the dump files are available, attach them to the case, along with any other relevant information.

Related Content

Necessary information for Qlik Data Gateway - Direct Access cases

Environment

Qlik Data Gateway - Direct Access (All versions)

-

Qlik Replicate Oracle Source Redo Log with Sequence not found error when DEST_ID...

Qlik Replicate attempts to retrieve the archived REDO log with sequence 2056016, thread 1, but fails with a REDO log sequence not found error. The archived redo log configuration has the primary Oracle DB as DEST_ID 1 and the standby as DEST_ID 32, both pointing to the correct location.

Environment

Qlik Replicate 2022.11 and previous versions

Resolution

A DEST_ID greater than 31 cannot be used for primary or standby Oracle redo log locations in Replicate 2022.11 and prior releases. Replicate 2023.5 SR03 supports DEST_ID 32.

Cause

Qlik Replicate 2022.11 only supported DEST_ID 0 through 31.

Related Content

2023.5 SR03

https://files.qlik.com/url/QR_2023_5_PR03

Internal Investigation ID(s)

JIRA RECOB-7509