Recent Blog Posts

-

The True Value of Education

"It is not about what you know, it is about what you can do."

For a person using an analytics application as their tool, they too should not have to worry about how to use the tool. For example, we do not want a user to worry about how to display bars side-by-side to show a reference line with the average value. We want them to focus on the important part: the analytical question. In this case it could be "What resources do we need to complete this project on time, and where do we need them?" The "How do I do this in Qlik?" becomes "muscle memory"... it is just like tying your shoes.

People can learn things on their own, but they won’t connect the dots when they don’t know what they don’t know. There are features which may be better for them to use that they are not aware of. By using education, we teach them all of these and it becomes just like tying their shoe, so they free up their mind and are equipped to handle any question that comes at them.

To read the full article visit The True Value of Education | Qlik

-

Accessing user information

For any BI tool to work it needs to understand who is using the tool. If we know who is using the tool (authentication) we also need to understand things about them such as where they work, e-mail address etc. If we know who the user is and what describes them, we can do things like giving them access to the right information and tailoring their experience. We therefore have methods in both QlikView and Qlik Sense to access information describing the users in user directories. Even though we have this capability in both QlikView and Qlik Sense, their approach to doing it is very different.

The most common kind of user directory used by our customers is an LDAP directory. LDAP (Lightweight Directory Access Protocol) is a protocol for querying distributed directory services, which are well suited to maintaining user information. Implementations exist both as open solutions developed by the open source community (such as OpenLDAP) and as closed solutions (such as Microsoft Active Directory).

So what are the differences between QlikView and Qlik Sense?

QlikView is based on the concept of a directory service connector that fetches user information as it is needed. It doesn't replicate any data stored in the LDAP directory, but instead queries the directory every time it needs access to user information, including groups.

Qlik Sense uses a sync approach, which means that it replicates the user information including groups from LDAP into a database and then keeps this in sync. This can be done in different ways (sync all users, sync with a filter, sync only users that access Qlik Sense) depending on your needs and size of directory.

There are two major usages of the user information, each with different needs: for management and for access control/authorization.

For management it is good if the information is current but not absolutely critical, so here the Qlik Sense scheduler is used to update the data.

For security however, it is critical that information is current. Therefore individual user records are updated as the user connects to the Qlik Sense environment.
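The difference between the two approaches can be sketched in a few lines of Python. This is only a conceptual model, not how either product is implemented: the `Directory`, `OnDemandConnector` and `SyncedRepository` classes and their method names are hypothetical, and the "directory" is just an in-memory dictionary standing in for LDAP.

```python
from dataclasses import dataclass, field


@dataclass
class Directory:
    """Hypothetical stand-in for an LDAP directory: user id -> set of groups."""
    users: dict = field(default_factory=dict)
    queries: int = 0  # counts how often the directory is asked

    def lookup(self, user_id):
        self.queries += 1
        return set(self.users.get(user_id, set()))


class OnDemandConnector:
    """QlikView-style model: nothing is replicated, every request asks the directory."""

    def __init__(self, directory):
        self.directory = directory

    def groups_for(self, user_id):
        return self.directory.lookup(user_id)


class SyncedRepository:
    """Qlik Sense-style model: keep a local copy and refresh it."""

    def __init__(self, directory):
        self.directory = directory
        self.local = {}

    def scheduled_sync(self, user_ids):
        # Management use case: bulk refresh, slightly stale data is acceptable.
        for user_id in user_ids:
            self.local[user_id] = self.directory.lookup(user_id)

    def on_connect(self, user_id):
        # Security use case: refresh this one record when the user connects.
        self.local[user_id] = self.directory.lookup(user_id)
        return self.local[user_id]

    def groups_for(self, user_id):
        # Reads are served from the local copy, not from the directory.
        return self.local.get(user_id, set())


directory = Directory(users={"anna": {"Sales"}, "bo": {"Finance"}})
synced = SyncedRepository(directory)
synced.scheduled_sync(["anna", "bo"])

directory.users["anna"] = {"Sales", "Managers"}  # change made in the directory
stale = synced.groups_for("anna")                # local copy not yet updated
fresh = synced.on_connect("anna")                # refreshed at connection time
on_demand = OnDemandConnector(directory).groups_for("bo")
```

In this model the scheduled sync leaves a window where the local copy is stale, which is why the per-user refresh at connection time matters for access control.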

So, are there any similarities in how you configure Qlik Sense and QlikView?

Yes

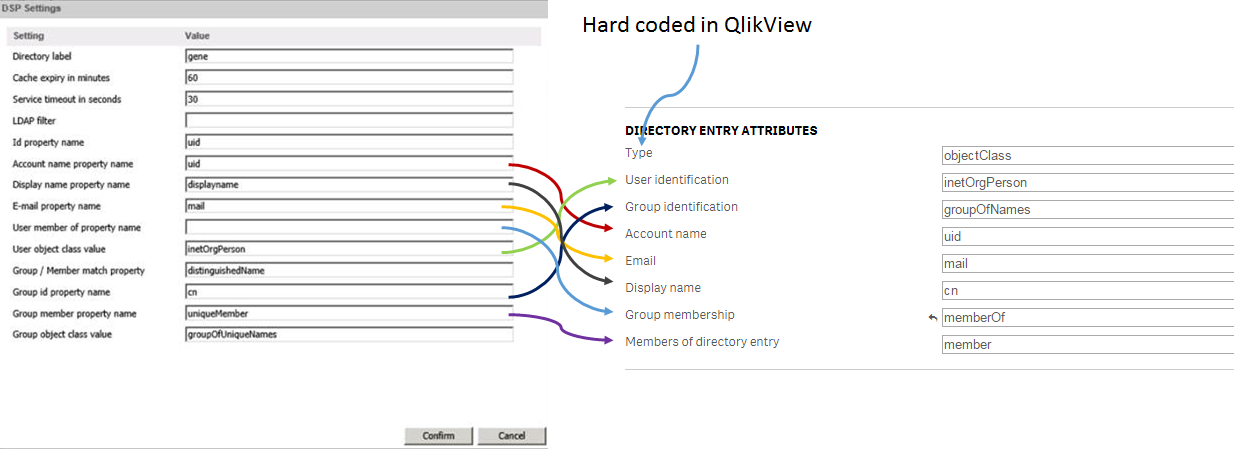

In the picture below I describe the relations between QlikView LDAP configuration and Qlik Sense LDAP Configuration.

I hope that you found these tips on how we access user information helpful. If you have questions about this blog post or ideas about what you want to read in the future, please don’t hesitate to add comments to this post.

-

Contextualize your KPIs

Raw data is useful but data with additional contextual information is better because it helps us do what we already do naturally: compare, contrast, and weigh our options as part of the bigger picture. Contextual data is the supporting information that helps the reader more fully understand the primary data.

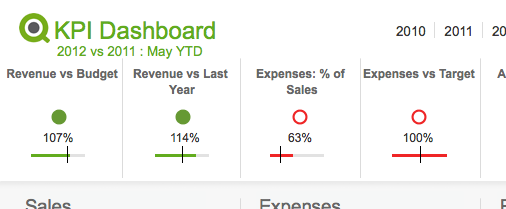

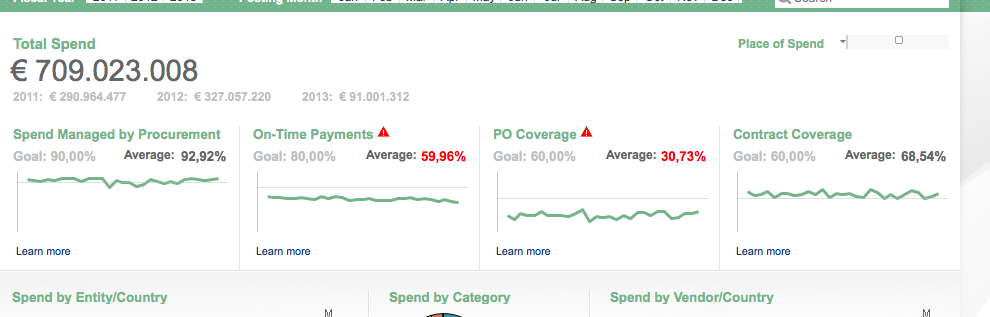

Key performance indicators (KPIs) at the top of a Dashboard page are a great way to give the general summary of how something is functioning, but the very next thought in any reader's mind is about context. Only people who are very familiar with the data can look at a number and place it in the context of the full story. The rest of us need additional information to contextualize this data. There are a variety of simple ways to give readers additional context so they can make smarter decisions.

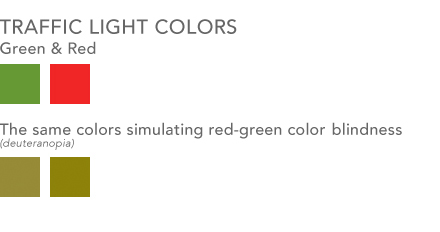

Traffic light

One way most people in BI are familiar with providing additional context is color coding information with traffic light colors. Green is good, yellow is a warning, red is bad. This visual metaphor is so common that most people understand it without any additional information. You might not know why a number is red, but at least you know there is a problem. Something to consider however is using an additional visual cue along with this metaphor for color blind users. Red-green color blindness is the most common form of color blindness which is where red & green colors shift to being shades of yellow and brown. You can use shapes / symbols along with your colors to improve the accessibility of this system. Perhaps you use up or down triangles in addition to coloring them green or red. Perhaps a fully colored circle can be green for good where an empty circle with just a red border can be bad. The shape is an additional indicator to users who have difficulty seeing the differences in your colors as to the context of your data.
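As a rough illustration of pairing color with shape, the following Python sketch returns both a traffic light color and a symbol for a KPI. The function name, the 90% warning threshold and the chosen symbols are assumptions made for this example (and it assumes a positive target where higher is better); they are not taken from any Qlik feature.

```python
def kpi_indicator(value, target, warning_ratio=0.9):
    """Return (color, symbol) for a KPI measured against a positive target.

    The symbol carries the same meaning as the color, so readers who cannot
    tell red from green still get the message.
    """
    if value >= target:
        return ("green", "▲")   # at or above target
    if value >= target * warning_ratio:
        return ("yellow", "■")  # slightly below target: warning
    return ("red", "▼")         # clearly below target


on_target = kpi_indicator(120, 100)
close_call = kpi_indicator(95, 100)
off_target = kpi_indicator(50, 100)
```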

Lines & Bars

Having a simple line or bar chart below a KPI can quickly place a KPI in the context of a larger whole. These are charts with no axes or written values. They are simply there to give context - drilling into the details is done elsewhere.

- A line chart helps show the overall trend. To see a KPI number in green is useful, but more useful is to see that perhaps the overall trend in sales is going down and pretty soon that number might be red.

- A bar/bullet chart is useful for showing, among other things, the completion of goals. Show a goal/reference line and show how well you met or exceeded that goal. This is essentially a more streamlined, quieter, more aesthetically pleasing version of a gauge chart.

This time last …

An additional piece of context you can add is some variation of "This Time Last …" year, quarter, month etc. You communicate to the reader that at some previous point in time the value of this field was something else and you are helping them compare the two values and judge if progress is being made.
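A "This Time Last …" comparison boils down to a simple calculation, sketched here in Python. The helper names and the caption wording are hypothetical; in a real app the two values would come from your own expressions for the current and the earlier period.

```python
def compare_with_previous(current, previous):
    """Return the absolute and relative change against an earlier period."""
    change = current - previous
    # The relative change is undefined when the earlier value is zero.
    pct = None if previous == 0 else change / previous
    return change, pct


def kpi_caption(label, current, previous, period="year"):
    """Build a short caption that puts the current value in context."""
    change, pct = compare_with_previous(current, previous)
    if pct is None:
        return f"{label}: {current:,} (this time last {period}: {previous:,})"
    return f"{label}: {current:,} ({pct:+.1%} vs. this time last {period})"


caption = kpi_caption("Sales", 1200, 1000)
```

The explicit sign in the percentage does part of the work of a traffic light: the reader sees the direction of change without needing any color at all.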

You can employ any/all of these in your designs to help bring context to the data and enable users to make smarter decisions.

-

Counters in the Load

Often when you create scripts, you need to create new fields, based on counters. There are several ways to do this. Some are simple, others not so…

Using RecNo()

The RecNo() function simply counts the input records and returns the number of the current record. Simple, and very useful if you want to create a record ID. However, if you concatenate several input files, or use a While clause, the numbers will not be unique.

Using RowNo()

The RowNo() function is very similar to the RecNo(), but this instead counts the output records and returns the number of the current record. Also simple and useful, especially if you concatenate several input tables. In such a case, the function will return consecutive numbers for the output table.
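The difference between counting input records and counting output records can be modelled in Python. This is a simplified simulation, not Qlik script: the `load` function is hypothetical, each inner list stands for one input file, and the `where` filter plays the role of a Where clause.

```python
def load(files, where=lambda rec: True):
    """Concatenate several inputs, tagging each kept record with both counters."""
    output = []
    for records in files:
        # RecNo-style counter: counts records read from the current input,
        # so it restarts at 1 for every new input.
        for rec_no, rec in enumerate(records, start=1):
            if not where(rec):
                continue  # a skipped input record still uses up a RecNo value
            # RowNo-style counter: counts rows written to the output table.
            output.append({"value": rec, "RecNo": rec_no, "RowNo": len(output) + 1})
    return output


rows = load([["a", "b", "c"], ["d", "e"]], where=lambda r: r != "b")
rec_nos = [r["RecNo"] for r in rows]
row_nos = [r["RowNo"] for r in rows]
```

In this model the input counter repeats the value 1 across the two inputs, while the output counter stays consecutive and unique.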

Using AutoNumber()

The AutoNumber() function is useful if you want to put a number on a specific field value, or on an attribute that is a combination of field values. The attributes will be numbered and their numbers re-used appropriately.

AutoNumber( Product & '|' & Date ) as ID

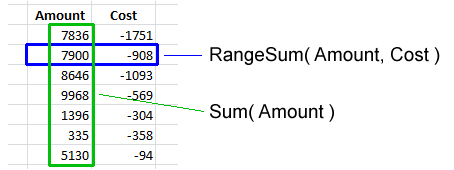
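The numbering behavior described for AutoNumber() can be imitated in Python with a dictionary: each new key gets the next integer, and a key seen before gets its earlier number back. The `AutoNumber` class below is a hypothetical stand-in for illustration only, and the sample rows are invented.

```python
class AutoNumber:
    """Assign consecutive integers to distinct keys and re-use them on repeats."""

    def __init__(self):
        self._ids = {}

    def __call__(self, key):
        # setdefault() stores the next number only if the key is new;
        # otherwise it returns the number assigned earlier.
        return self._ids.setdefault(key, len(self._ids) + 1)


auto_number = AutoNumber()
rows = [("Shoes", "2015-10-01"), ("Hats", "2015-10-01"), ("Shoes", "2015-10-01")]
# Same key construction as in the script expression: Product & '|' & Date
ids = [auto_number(product + "|" + date) for product, date in rows]
```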

Using inline Peek() and RangeSum()

This is the mother of all record counters. Anything can be numbered; it can be done conditionally and anything can be used as condition. The idea is to fetch the counter value from the previous record and increase it only if some condition is fulfilled. Or reset it.

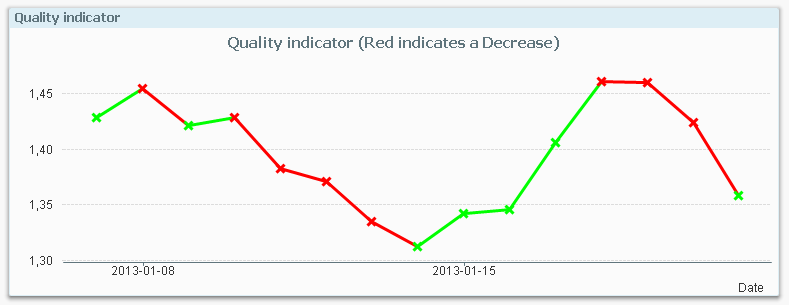

An example: For production quality control, some process indicator is measured and the Quality Manager wants to track trends and trend shifts. Then it is important to see the number of consecutive days that this indicator has increased or decreased. It is also good if the series of consecutive days can get a unique ID that can be used for selections and charts.

The following script creates these two fields; TrendID and DaysWithTrend.

    // Preceding Load: derive the two counters from the Change field
    Load *,
        If( Change * Peek( Change ) > 0,
            Peek( TrendID ),
            RangeSum( 1, Peek( TrendID ))
        ) as TrendID,
        If( Change * Peek( Change ) > 0,
            RangeSum( 1, Peek( DaysWithTrend )),
            0
        ) as DaysWithTrend;
    // Bottom Load: day-over-day change of the indicator
    Load
        Indicator,
        Indicator - Peek( Indicator ) as Change
    Resident Daily_TQM_Measurement
    Order By Date;

First of all, the change of the indicator value is calculated in the bottom Load using the Peek() function. In the preceding Load, the change is used as condition in the If() function. The condition compares current record with the previous record using the last change and the second last change. If the product of the two is greater than zero, the trend has been the same two days in a row, so the TrendID of the previous record is used (the Peek() function) and DaysWithTrend is increased by one.

But if the condition is false, the TrendID is increased by one and the DaysWithTrend is reset to zero.

When a counter is increased, normal addition cannot be used since the Peek() will return NULL for the very first record. Instead the addition is made using the RangeSum() function.
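To make the record-by-record logic easier to follow, here is the same idea simulated in Python. It is a sketch of the logic only, not Qlik script: `None` stands in for NULL, `range_sum` is a hypothetical helper that ignores NULL the way RangeSum() is used above, and the sample indicator values are invented.

```python
def range_sum(*values):
    """Add the values, treating None (NULL) as zero."""
    return sum(v for v in values if v is not None)


def trend_counters(indicators):
    """Return (Change, TrendID, DaysWithTrend) for values already sorted by date."""
    rows = []
    prev_indicator = prev_change = prev_trend_id = prev_days = None
    for indicator in indicators:
        # Bottom Load: change against the previous record (NULL on the first one).
        change = None if prev_indicator is None else indicator - prev_indicator
        # Preceding Load: a NULL on either side makes the condition false.
        same_trend = (
            change is not None
            and prev_change is not None
            and change * prev_change > 0
        )
        if same_trend:
            trend_id = prev_trend_id           # keep the ID of the running trend
            days = range_sum(1, prev_days)     # one more day with the same trend
        else:
            trend_id = range_sum(1, prev_trend_id)  # start a new trend
            days = 0
        rows.append((change, trend_id, days))
        prev_indicator, prev_change = indicator, change
        prev_trend_id, prev_days = trend_id, days
    return rows


result = trend_counters([10, 12, 15, 14, 13, 13, 16])
trend_ids = [r[1] for r in result]
days_with_trend = [r[2] for r in result]
```

Note how the very first record gets TrendID 1 even though there is no previous record: the NULL-tolerant sum turns "1 + NULL" into 1 instead of NULL.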

Summary: You can create any auto-incrementing counter in a QlikView script. Just choose your counter function…

-

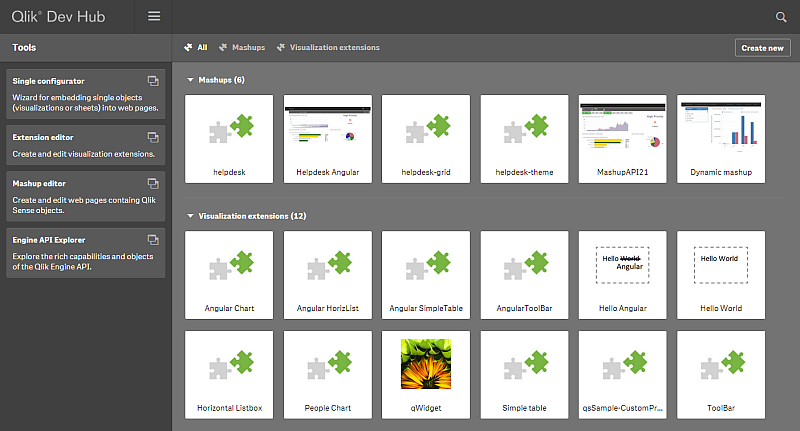

Qlik Dev Hub replaces Qlik Sense Workbench in Qlik Sense 2.1

For Qlik Sense 2.1, the Qlik Dev Hub, accessible at https://<ServerName>/dev-hub/ , replaces the Qlik Sense Workbench, formerly accessed by https://<ServerName>/workbench/ (this will now redirect you to the Dev Hub). In addition to a brand new user interface layout, the Dev Hub has a couple of new features worth mentioning.

The new user interface has a sleek dark color design, and its layout more closely resembles the Qlik Sense client than its predecessor. The top nav bar has a drop-down menu that provides access to an “About” link, which displays information regarding the Qlik Dev Hub, and a “Help” link, which brings up helpful documentation in a new browser window. The top nav bar also includes a new search function that lets you search through your mashups and extensions, which is really useful as their number grows. A second nav bar lets you filter by “Mashups” or “Visualization extensions.” There is also a “Create new” button that lets you jump right into creating a mashup or extension.

A left-aligned nav bar allows you to choose from “Single configurator,” “Extension editor,” “Mashup editor,” or “Engine API Explorer.” The “Single Configurator” was previously not accessible from the Qlik Sense Workbench, and its inclusion in the Qlik Dev Hub is very convenient. The “Engine API Explorer” was previously known as the “Protocol tester” and has a new look to match the Dev Hub.

The “Mashup editor” has a couple of new features. There are now menus on the left and right of the main work area, which can be toggled to make the main work area larger. An added feature I’m very fond of is the ability to create new files for your mashup right from the top nav bar. The “Preview” tab has been improved, and you can now interact with your mashup in addition to dragging and dropping charts. This is a nice improvement because formerly, if you had off-canvas chart areas, there was no way to access them to drag and drop charts.

The Qlik Dev Hub comes with quite a few new templates for both mashups and visualization extensions. I highly suggest you try some of these out; I think you’ll be amazed at how quickly you can put together a decent mashup, even with little to no web development skills. The steps are:

1) Navigate to the Qlik Dev Hub, click on the “Create new” button, give your mashup a name, and select either “Basic single page mashup” or “Slideshow mashup” from the dropdown menu.

2) Click the “Create and edit” button and this will open up your mashup in the “Mashup editor” for you.

3) Select an app from the dropdown menu in the left nav, and start dragging and dropping charts into the chart areas.

4) Customize as much as you want by modifying the auto-generated HTML and JS files.

I’m going to include a few links to documentation below. If you haven’t tried the new Qlik Dev Hub yet, you should definitely check it out.

-

A Myth about the Number of Hops

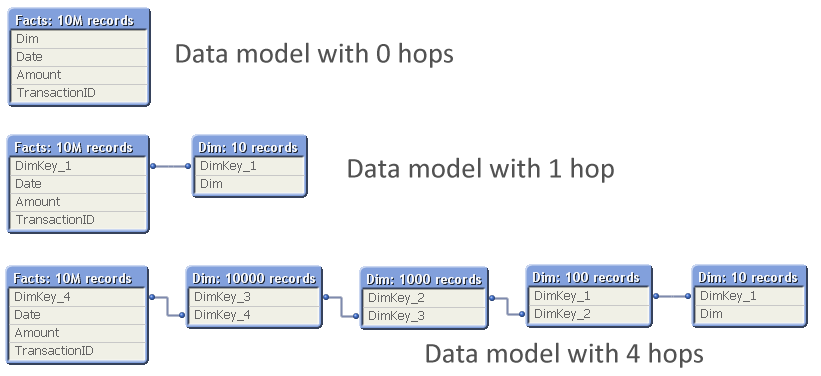

In the QlikCommunity forum I have often seen people claim that you should minimize the number of hops in your Qlik data model in order to get the best performance.

I claim that this recommendation is not (always) correct.

In most cases, you do not need to minimize the number of hops since it affects performance only marginally. This post will try to explain when an additional table will significantly affect performance and when it will not.

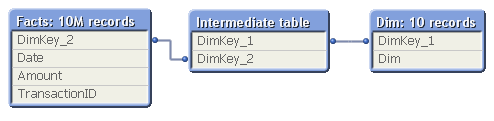

The problem is which data model to choose:

The question is: Should you normalize and have many tables, with several hops between the dimension table and the fact table? Or should you join the tables to remove hops?

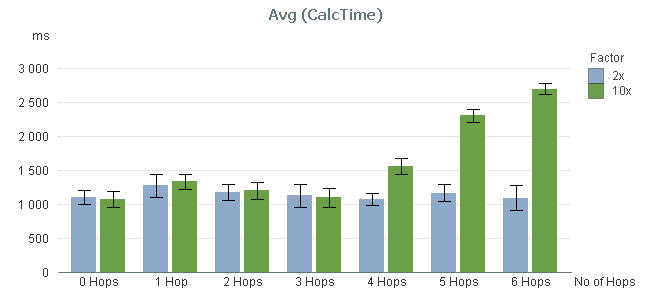

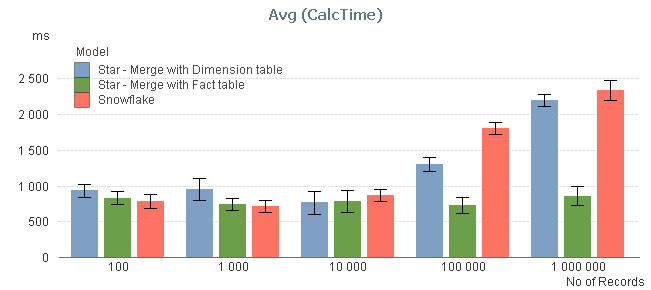

So, I ran a test where I measured the calculation time of a pivot table calculating a simple sum in a large fact table and using a low-cardinality dimension, while varying the number of hops between the two. The graph below shows the result. I ran two series of tests: one where the cardinality of the dimensional tables changed by a factor of 10 for each table, and one where it changed by a factor of 2.

You can clearly see that the performance is not affected at all by the number of hops – at least not between 0 and 3 hops.

By 4 hops, however, the calculation time in the 10x series starts to increase slightly, and by 5 hops it has increased a lot. But this is not due to the number of hops. Instead, it is the result of the primary dimension table (the dim table closest to the fact table) getting large: by 5 hops it has 100,000 records and can no longer be regarded as a small table.

To show this, I made a second test: I measured the calculation time of the same pivot table using a fixed 3-table data model, varying the number of records in the intermediate table, but keeping the sizes of the other tables constant.

In real life, this structure would correspond to a part of a more complex data model, e.g.

- Facts - Products - Product Groups

- Order Lines - Order Headers - Customers

The result of my measurement can be seen in the red bars below:

The graph confirms that the size of the intermediate table is a sensitive point: If it has 10,000 records or fewer, its existence hardly affects performance. But if it is larger, you get a performance hit.

I also measured the calculation times after joining the intermediate table, first to the left with the fact table, and then to the right with the dimension table, to see if the calculation times decreased (blue and green bars). You can see that joining tables with 10,000 records or fewer does not change the performance. But if you have larger tables, a join with the fact table may be a good idea.

Conclusions:

- The number of hops does not always cause significant performance problems in the chart calculation. But a large intermediate table will.

- If you have both a primary and a secondary dimension (e.g. Products and Product Groups), you should probably not join them. Leave the data model as a snowflake.

- If you have the facts in two large tables (e.g. Order Lines and Order Headers), you should probably join them into one common transaction table.

PS. A couple of disclaimers:

- The above study only concerns the chart calculation time - which usually is the main part of the response time.

- If the expression inside your aggregation function contains fields from different tables, none of the above is true.

- Your data is different than mine. You may get slightly different results.

-

Logical Inference and Aggregations

Every time you click, the Qlik engine recalculates everything.

Everything.

A new selection implies a new situation: Other field values than before are possible; other summations need to be made; the charts and the KPIs get other values than before. The state vectors and the objects are invalidated. Everything needs to be recalculated since this is what the user demands.

Well, there is of course a cache also – so that the Qlik engine doesn’t have to recalculate something which has been calculated before. So it isn’t quite true that everything is recalculated: If a calculation has been made before, the result is simply fetched from the cache. But it is true that nothing is pre-calculated. There is no need for that. Everything can be done in real-time.

The Qlik engine is an on-demand calculation engine.

In principle, there are two steps in the recalculation of data: the logical inference in the data model, and the calculation of all objects, including sheet labels and alerts.

The logical inference is done first. The goal is to figure out which field values in the symbol tables are possible and which records in the data tables are possible, given the new selection. There is no number crunching involved - it is a purely logical process. The result is stored in the state vectors.

Think of it as if the selection propagates from one table in the data model to all other tables. Table after table is evaluated and the Qlik engine figures out which values and records are possible, and which are excluded.

When the logical inference is done, the Qlik engine starts to evaluate all exposed objects. List boxes and dimensions in charts must be populated and sorted. All expressions – in charts, in text boxes, in labels, in alerts – must be calculated. Objects that are on other sheets, minimized or hidden, are however not calculated.

The calculations are always aggregations based on the data records that have been marked as possible by the logical inference engine. I.e., the objects do not persist any data on their own.
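The two steps can be illustrated with a toy model in Python. This is a deliberately simplified sketch, not a description of the engine's internals: the two tables, the field names and the functions `infer` and `sum_amount` are invented for the example, and the state vectors are plain lists of booleans.

```python
# Toy data model: a dimension table linked to a fact table through ProductID.
products = [
    {"ProductID": 1, "ProductGroup": "Shoes"},
    {"ProductID": 2, "ProductGroup": "Shoes"},
    {"ProductID": 3, "ProductGroup": "Hats"},
]
order_lines = [
    {"ProductID": 1, "Amount": 100},
    {"ProductID": 2, "Amount": 50},
    {"ProductID": 3, "Amount": 70},
    {"ProductID": 3, "Amount": 30},
]


def infer(selection_field, selected_value):
    """Step 1, purely logical: mark which records are possible in each table."""
    product_state = [p[selection_field] == selected_value for p in products]
    # The selection propagates to the next table through the key field.
    possible_ids = {p["ProductID"] for p, ok in zip(products, product_state) if ok}
    order_state = [o["ProductID"] in possible_ids for o in order_lines]
    return product_state, order_state


def sum_amount(order_state):
    """Step 2, number crunching: aggregate only over the possible records."""
    return sum(o["Amount"] for o, ok in zip(order_lines, order_state) if ok)


product_state, order_state = infer("ProductGroup", "Hats")
total_hats = sum_amount(order_state)
```

The aggregation function never looks at the selection itself; it only sees which records were marked as possible, which mirrors the separation between the two steps described above.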

The calculation phase is usually the phase that takes time – often over 90% of the response time is due to calculations. The calculations are asynchronous and multi-threaded on several levels: First of all, every object is calculated in its own thread. Secondly, in the 64-bit version, many aggregations e.g. Sum() are calculated using several threads, so that a sum in one single object can be calculated quickly using several CPUs.

Finally, when an object has been calculated, it is rendered. Since the calculation is asynchronous and multi-threaded, some objects are rendered long before other objects are ready.

And when an object has been rendered, you can click again. And everything is repeated.

PS. All of the above is of course true for both QlikView and Qlik Sense. Both use the same engine.

If you want to read more about the Qlik engine internals, see

Symbol Tables and Bit-Stuffed Pointers

-

Qlik Continuous Classroom is now available!

The Qlik Continuous Classroom is a new 24/7 self-service distance learning platform for Qlik Sense!

Innovative, customized, role-based curriculum, courses, and delivery accelerate the power of Qlik for customers around the world!

Qlik now provides users with a new way to learn that allows them to completely customize their learning journey based on their individual needs. Users can choose from dozens of modules equivalent to approximately 25 hours of work, with topics ranging from “Foundations of Building Visualizations” to “Build and Play Stories”.

Learner-centric Distance Training Boosts Productivity with Qlik

The Qlik Continuous Classroom education package includes innovative offerings, such as:

- Videos with interactive exercises and quizzes – users decide when to start, stop, and resume as often as needed;

- The ability to interact directly with instructors and peer students, leveraging web conferencing tools and forums to quickly get answers as well as share best practices;

- Virtual office hours for immediate support; and

- One-to-many instructor-led virtual training, allowing whole teams to arrange an instructor-led live Webinar course customized to their needs.

More Information and Availability

Qlik Continuous Classroom is a subscription-based, self-service learning platform for Qlik Sense that can either be used on its own or supplemented by instructor-led courses. Users will have the option to preview videos prior to purchase. Today, customers and partners can purchase the Qlik Sense Business Analyst role, with additional roles to be added in the future. For more information and to try the platform for free, please visit http://qcc.qlik.com.

Check out the Qlik Continuous Classroom Video

-

It’s all Aggregations

I often see incorrect expressions being used in the QlikCommunity forum. Expressions that seem to work correctly – but really don’t…

So, let me make this clear: Calculations in QlikView are aggregations.

It doesn’t matter if it is a measure in a chart, or a calculated object label, or a show condition for an object, or a calculated color, or an expression search – all expressions in the user interface are evaluated as aggregations. (Except calculated dimensions, and some search strings.)

This means that it is correct to use the Sum() function in an expression, since this is an aggregation function: a function that uses several records as input. But if you omit the aggregation function or use only a scalar function, e.g. RangeSum(), you can get unexpected behavior.

Basically, all field references should be wrapped in an aggregation function. The Aggr() function and some constructions using the total qualifier can even have several layers of aggregations.

But if the created expression does not contain an aggregation function, the expression is ill-formed and potentially incorrect.

Examples:

=Sum(Amount)

=Count(OrderID)

These are both correct aggregations. Amount is wrapped in the Sum() function which will sum several records of the field Amount. OrderID is wrapped in the Count() function, which will count the records where OrderID has a value.

=Only(OrderID)

This is also a correct aggregation. OrderID is wrapped in the Only() function, which will return the OrderID if there is only one value, otherwise NULL.

=OrderID

A single field reference is not an aggregation, so this is an ill-formed expression. But QlikView will not throw an error. Instead it will use the Only() function to interpret the field reference. I.e., if there is only one value, this value will be used. But if there are several possible values, NULL will be used. So, it depends on the circumstances whether an expression without aggregation function is correct or not.

=If(Year=Year(Today()), Sum(Amount1), Sum(Amount2))

Here, both the amounts are correctly wrapped in the Sum() function. But the first parameter of the if() function, the condition, is not. Hence, this is an ill-formed expression. If it is used in a place where there are several possible Years, the field reference will evaluate to NULL and the condition will be evaluated as FALSE, which is not what you want. Instead, you probably want to wrap the Year in the Min() or Max() function.

=ProductGroup= 'Shoes'

=IsNull(ProductGroup)

These expressions can both be used as show conditions or as advanced searches. However, since there are no aggregation functions, the expressions are ill-formed. If you want to test whether there exists Shoes or NULL values among the field values, you probably want to use the following instead:

=Count(If(ProductGroup= 'Shoes', ProductGroup))>0

=NullCount(ProductGroup)>0

Conclusions:

- An aggregation function is a function that returns a single value describing some property of several records in the data.

- All UI expressions, except calculated dimensions, are evaluated as aggregations.

- All field references in expressions must be wrapped in an aggregation function. If they aren’t, QlikView will use the Only() function.
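The effect of the implicit Only() can be imitated in Python to show why a bare field reference sometimes works and sometimes does not. This is a sketch of the semantics described above, not QlikView code: `None` stands in for NULL, and the function names are made up for the example.

```python
def only(values):
    """Return the single distinct non-NULL value, or None if there are zero or several."""
    distinct = {v for v in values if v is not None}
    return next(iter(distinct)) if len(distinct) == 1 else None


def naive_condition(possible_years, this_year):
    """Models a bare field reference in a condition: Year = <this year>."""
    year = only(possible_years)  # the implicit Only()
    # A comparison with NULL is treated as false.
    return year is not None and year == this_year


def aggregated_condition(possible_years, this_year):
    """Models the suggested fix: Max(Year) = <this year>."""
    years = [y for y in possible_years if y is not None]
    return bool(years) and max(years) == this_year


one_year = naive_condition([2015, 2015], 2015)
several_years = naive_condition([2014, 2015], 2015)
several_years_fixed = aggregated_condition([2014, 2015], 2015)
```

With a single possible year both versions agree; as soon as several years are possible, only the version with an explicit aggregation gives a meaningful answer.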

-

Introducing Qlik Sense 2.1

Hello Qlik Community! I am pleased to announce the availability of Qlik Sense 2.1, our third and final release in the Qlik Sense product family this year. With this release, Qlik is continuing its commitment to helping organizations use analytics to see the whole story that lives within their data. Version 2.1 provides the most complete Qlik Sense experience to date and allows customers to explore, create and collaborate with greater simplicity by taking advantage of Qlik’s unique associative model.

Allow me to summarize some of the new feature highlights in this release:

- Individuals will benefit from a new visual exploration capability that allows users to explore analytics by changing properties, such as sorting and coloring, without having to enter edit mode or directly change the underlying content

- Groups will benefit from new storytelling features that allow data stories to be directly published to PowerPoint presentations to facilitate sharing and collaboration

- Organizations can take advantage of new variables that empower content creators and developers to build more sophisticated analytics

- Developers will be empowered with a new development hub to more easily take full advantage of the Qlik Analytics Platform for web mashups, custom apps, and extensions

There is of course much more including additional user experience features and even new Qlik DataMarket Topical Packages. These premium subscription packages include 180 currencies and weather data for 2600 cities.

Now....enough reading and get to watching! The below New Feature video summarizes these highlights and then will link you to other videos to demonstrate and briefly show you how to use these new features.

Note:

- Qlik Sense Desktop is available right now http://www.qlik.com/us/explore/products/sense/desktop

- Qlik Sense Enterprise Server and other associated files for our customers and partners will be available on our download site (customer and partner access only) later today.

- You can experience these features immediately by visiting and registering on the Qlik Sense Cloud.

Regards,

Michael Tarallo

Senior Product Marketing Manager

Qlik

@mtarallo

Qlik Sense 2.1 New Features Presentation

Other videos worth watching:

-

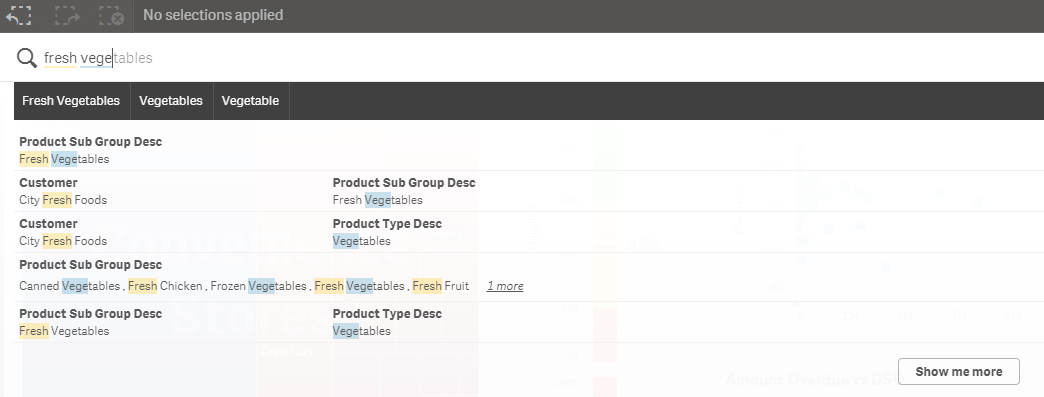

Smart Search

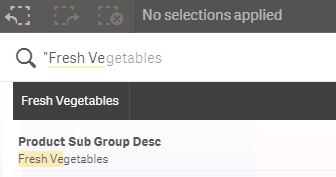

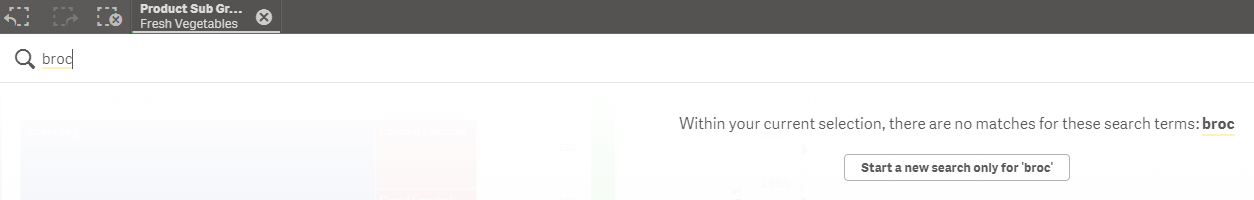

One of the great features in Qlik Sense is the Smart Search capabilities. Smart Search allows you to search the data in your app when you are on a sheet. All you have to do is select the Smart Search icon on the selection bar and type what you want to search for. In the image below I start typing “fresh vegetables” and data that matches “fresh” or “ve” (the start of the word “vegetables”) is displayed. From here, I can select one of the items found if it is what I am looking for. I will select “Fresh Vegetables” in the Product Sub Group Desc field and this selection will be added to the selection bar.

One thing I should note is that I did not use quotes when I searched for “Fresh Vegetables”, so “fresh” and “vegetables” were interpreted as separate search terms. If I use quotes, my search returns fewer results (seen in the image below) because my search term is more specific and is treated as one search term rather than two.
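The difference between quoted and unquoted input can be pictured with a small JavaScript sketch. This is only an illustration of the idea, not Qlik's actual search parser; the function name `splitSearchTerms` is made up for the example.

```javascript
// Illustrative only: split a search string into terms, treating a
// double-quoted phrase as a single term (not Qlik's actual parser).
function splitSearchTerms(query) {
  const terms = [];
  const pattern = /"([^"]+)"|(\S+)/g;
  let match;
  while ((match = pattern.exec(query)) !== null) {
    // match[1] is a quoted phrase, match[2] is a bare word
    terms.push((match[1] !== undefined ? match[1] : match[2]).toLowerCase());
  }
  return terms;
}

console.log(splitSearchTerms('fresh vegetables'));   // two separate terms
console.log(splitSearchTerms('"fresh vegetables"')); // one combined term
```

With two separate terms, anything matching either word can show up; with one quoted term, only values containing the whole phrase qualify, which is why the result list shrinks.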

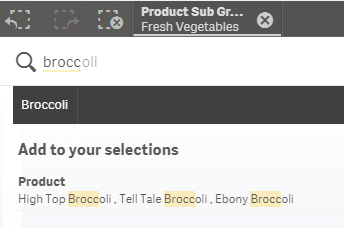

Now if I want to perform another search, I can do so and it will automatically search within my selections which is Fresh Vegetables. If I search for broccoli, all possible data will be displayed within my Fresh Vegetables selection.

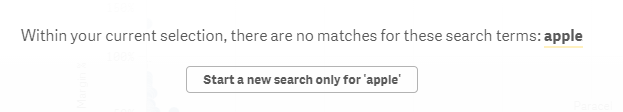

Now say I search for something that is not within my Fresh Vegetables selection, like apple. I will be given a message indicating that no matches were found and will be prompted to start a new search for only apple if I choose. If I select the “Start a new search …” button, then my Fresh Vegetables selection will be de-selected before the search begins.

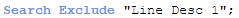

By default, Smart Search searches all fields in the data model but you have the option to set what fields you would like to include or exclude in the Smart Search. This can be done in the script by using the Search Include or Search Exclude statements:

Search Include * fieldlist

Search Exclude * fieldlist

Fieldlist is a comma separated list of the fields that should be included or excluded in the search. The Search Include statement is used to indicate which field(s) should be searched when performing a search. In some apps, there may be several fields that the user may not need to search. In that case, it is smart to use the Search Include to narrow down the list of fields to search. This can help with the performance of the search as well. The Search Exclude statement is used to indicate which field(s) should not be used in the search. This may be used to exclude ID or key fields that were used to build the data model but that the user does not need to search. In both statements, wildcard characters * and ? can be used. Just to show you how this works, if I add the Search Exclude statement below to my script and reload, it will exclude all values in the “Line Desc 1” field when a search is being performed.
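To make the wildcard behaviour concrete, here is a minimal JavaScript sketch of how * and ? patterns could be matched against field names. It is an illustration only and not how the Qlik engine evaluates these statements; the helper names and the ID field names in the sample list are hypothetical.

```javascript
// Convert a pattern using * (any run of characters) and ? (exactly one
// character) into an anchored regular expression.
function wildcardToRegExp(pattern) {
  const escaped = pattern.replace(/[.+^${}()|[\]\\]/g, '\\$&');
  return new RegExp('^' + escaped.replace(/\*/g, '.*').replace(/\?/g, '.') + '$');
}

// Hypothetical helper: keep only the fields that match no exclude pattern.
function searchableFields(fields, excludePatterns) {
  const tests = excludePatterns.map(wildcardToRegExp);
  return fields.filter(function (field) {
    return !tests.some(function (re) { return re.test(field); });
  });
}

// 'CustomerID' and 'OrderID' are invented key fields for the example.
const sampleFields = ['Product Sub Group Desc', 'Line Desc 1', 'CustomerID', 'OrderID'];
console.log(searchableFields(sampleFields, ['Line Desc 1', '*ID']));
```

In this sketch only "Product Sub Group Desc" stays searchable: "Line Desc 1" is excluded by name and the two key fields are caught by the `*ID` pattern.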

In the Master Items, the “Line Desc 1” field is added as a Dimension named Product. When I performed the search earlier, broccoli results were found in the Product dimension. Now watch what happens when I do another search for Broccoli within my Fresh Vegetables selection. Broccoli does not come up in my search results because the exclude statement does not allow a search in the “Line Desc 1” field/Product dimension.

Smart Search provides an easy way for users to find the data they are looking for to filter their data. The Search Include and Search Exclude statements offer the developer a way to control the fields that can be searched. This can improve performance and it can make it easier for the user to focus on the fields that are most relevant. Keep these statements in mind the next time you are developing an app. They will improve the user's search experience.

Thanks,

Jennell

-

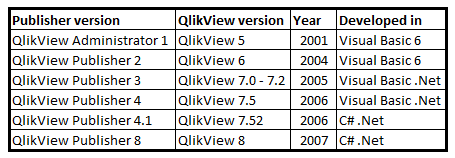

A Historical Odyssey: The Early Days of QlikView Publisher

With QlikView 4 and QlikView 5 we reached a much larger audience than before. We now had large enterprise customers with demands on the product that we didn’t quite satisfactorily fulfill: The demands were around Security, Distribution and Workflow.

As a result, one large Swedish customer developed their own system to administrate QlikView: They developed software that used the QlikView COM Automation interface to update and distribute QlikView documents. They showed it to us and we were impressed – so impressed that we bought the code to develop it further.

As a result, in 2001 we were able to release the QlikView Administrator.

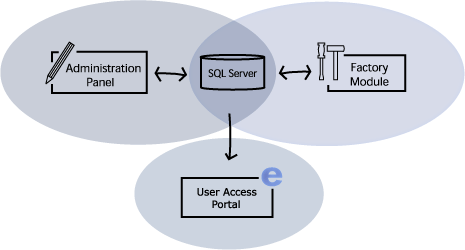

The Administrator had three basic components:

- The Factory – which later became The Distribution service

- The User Access Portal – which later became The Access Point

- The Administration Panel – which later became The Management Console

The Factory’s tasks were to update the QlikView documents and distribute them in a secure way. On the portal, the users could either download the documents for off-line use or connect to the documents using QlikWeb – which was the name of our server at the time. Finally, the administration panel was used to set the rules for how and when the updates should be made and to whom the documents should be distributed.

The Administrator was the basic workflow tool that our enterprise customers demanded. It contained tasks, scheduling, data reduction, document categorization, document distribution and it also set the user rights per document. So it covered all the basic needs.

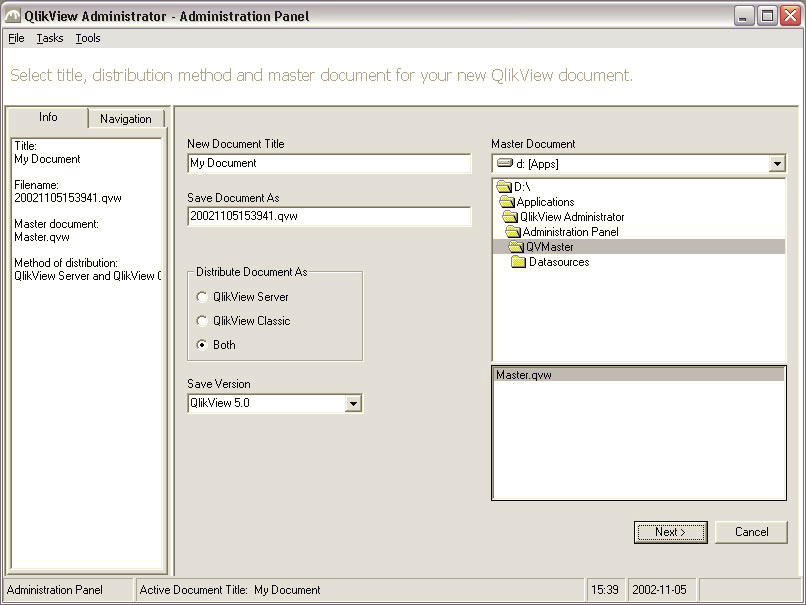

The administration panel for Administrator version 1

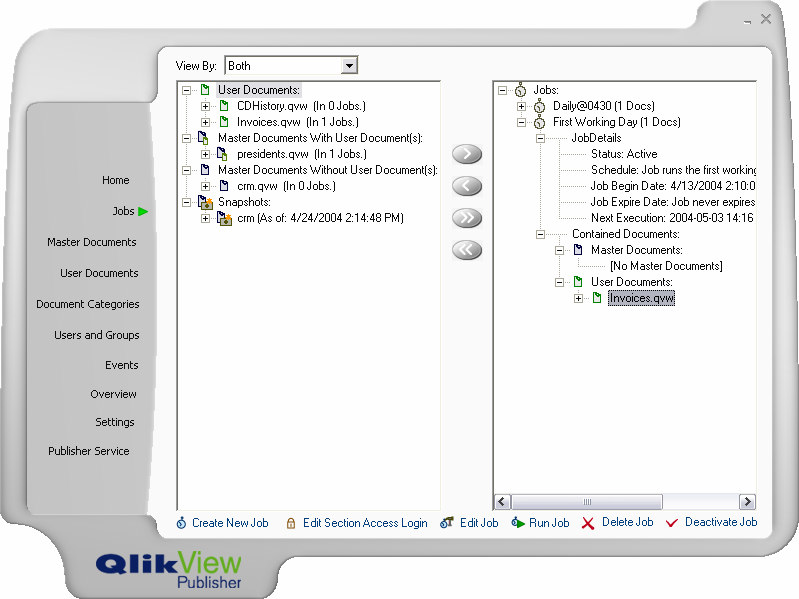

The name was not very good, so we renamed it “QlikView Publisher”. We also improved the UI and the functionality, and when we released version 2 a few years later, it was much richer in features and much more usable.

The administration panel for Publisher version 2

Initially, it was developed in Visual Basic 6, but we were soon looking for a more modern development tool and today it is developed in C#.

The version numbers were not in sync with QlikView until QlikView 8. Before that, the Publisher had its own numbering. For QlikView 9 the QlikView Server and the QlikView Publisher were completely merged, with a common management console and a common installation. This also means that some of the original Publisher features became available also without a Publisher license, e.g. the reload of a document.

Although very much has changed since the first Publisher version, the basic concepts for the Publisher remain the same: Security, Distribution and Workflow.

Today, the Publisher is a mature workflow tool that allows our customers to manage the distribution of information both to off-line and on-line users. It can connect to a large number of directory services; it can be integrated with almost any authentication system and it can use either Windows integrated authorization or the QlikView internal authorization. It can take a master document, refresh it, reduce it so that each user only gets their own data and finally distribute it in any way the administrator wants. It is an absolute necessity for a company with enterprise demands on security and data governance.

-

Qlik Sense Workbench. The visualization and Mash-Up Editor

What’s Qlik Sense Workbench and what’s it for?

Qlik Sense Workbench is a development toolbox used for building solutions with Qlik Sense. It includes JavaScript API libraries used for building Qlik Sense visualizations or for building mashup websites with Qlik Sense content.

Qlik Sense Workbench provides developers with a quick start for creating custom visualizations and mashup websites, including code samples and templates for creating different types of visualizations as well as templates for creating basic mashup websites. Those templates constitute in fact the perfect starting point for your next mashup project.

Qlik Sense Workbench provides three JavaScript APIs for building extensions and mashups: the Extensions API for building visualizations, the Mashups API for building mashup websites, and the Backend API for communicating with the Qlik Sense engine.

How can I access Workbench?

The good news is Workbench doesn't have a separate installation package, so if you already have Qlik Sense Server or Desktop you have Workbench working.

To launch Qlik Sense Workbench, open a web browser and type in the URL https://ServerName/workbench/ . If you are a Qlik Sense Desktop user, make sure it is running, then open a web browser and type in the URL http://localhost:4848/workbench/ et voila!

How does Workbench work?

To answer that question I leave you with a 5 min video that will walk you through the mashup creation process.

Enjoy!

AMZ

PS: Remember you can find lots of useful videos, howtos and tutorials at the Qlik Youtube channel

Check out a step by step tutorial here: Creating a webpage based on the Qlik Sense Desktop Mashup API

-

Data Types in QlikView

There are no data types in QlikView.

This is not a limitation – it is a conscious design decision.

One of the initial requirements of QlikView was that it should be possible to mix data from different sources: We wanted users to be able to have a data model with some data from a database, some from an Excel sheet, and some from a comma delimited text file. Some of these sources have proper data types, others don’t. So relying on the data types of the data source would be difficult. Further, we wanted the internal functions to be able to always return a relevant calculation – there must never be any type of conversion problems. We wanted simplicity for the user.

Enter the Dual format.

The dual format is a brilliantly simple solution to the above requirements: Its core is that every field value has two values – one string that is displayed, and one number that is used for sorting and calculations. The two values are inseparable; they are like the two sides of a single coin. They are both needed to describe the field value properly.

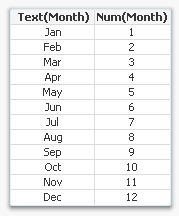

For example, months have the string values ‘Jan’, ‘Feb’ … ‘Dec’, which are displayed. At the same time they have numeric values 1 to 12, which are used for sorting. Similarly, weekdays have the string values ‘Mon’, ‘Tue’ … ‘Sun’ and at the same time the numeric values 0 to 6.

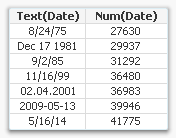

Dates and times have string values that look like dates, e.g. ‘12/31/2011’ or ‘06.06.1944 06:30’ and at the same time they have numeric values corresponding to the number of days since Dec 30 1899. As I write this, the (numeric) time is 41215.6971. How months, weekdays, dates and times should be displayed is defined in the environment variables in the beginning of the script.

This way QlikView can sort months, days and dates numerically, or calculate the difference between two dates. Numbers can be formatted in arbitrary ways. In essence, QlikView can have data that is much more complex than plain strings.
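The "number of days since Dec 30 1899" rule is easy to try out in JavaScript. The sketch below is an illustration outside QlikView: the function names are made up, and it assumes the serial number is interpreted in UTC, which the text above does not specify.

```javascript
// Day 0 of the serial-number calendar described above: Dec 30 1899 (UTC here).
const SERIAL_EPOCH_MS = Date.UTC(1899, 11, 30);
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Whole part = days since the epoch, fractional part = time of day.
function serialToDate(serial) {
  return new Date(SERIAL_EPOCH_MS + Math.round(serial * MS_PER_DAY));
}

function dateToSerial(date) {
  return (date.getTime() - SERIAL_EPOCH_MS) / MS_PER_DAY;
}

console.log(serialToDate(41215.6971).toISOString()); // 2012-11-02T16:43:49.440Z

// The difference between two dates is plain subtraction of the numeric parts.
const start = dateToSerial(new Date(Date.UTC(2011, 0, 1)));
const end = dateToSerial(new Date(Date.UTC(2011, 11, 31)));
console.log(end - start); // 364
```

Under that UTC assumption, the value 41215.6971 quoted above lands on the afternoon of Nov 2 2012.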

When dual values are used as parameters inside QlikView functions, the function always uses just one of the two representations. If a string is expected, as in the first parameter of Left(s,n), the string representation is used. And if a number is expected, as in the Month(date) function, the number representation is used.

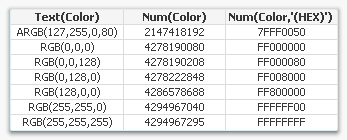

QlikView functions all return dual values, when possible. Even the color functions do, see table. However, string functions, e.g. Left(s,n), are the exception; because they don’t have a number that can be associated with the string, they leave the number part of the dual empty.

Finally, there is of course a function with which you can create your own dual values: Dual(s,n). With it you can create any combination of string and number. Use it if you want to assign a sort order to strings or if you want to assign text to numeric values.
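For readers who think in code, the dual idea can be modelled in a few lines of JavaScript. This is a rough analogy only, not how QlikView stores values internally; `dual` and `sortByNumber` are hypothetical names.

```javascript
// A minimal stand-in for Dual(s, n): one display string, one number.
function dual(text, num) {
  return { text: text, num: num };
}

// Sort on the numeric part, then read off the display strings.
function sortByNumber(values) {
  return values.slice().sort(function (a, b) { return a.num - b.num; });
}

const months = [dual('Mar', 3), dual('Jan', 1), dual('Feb', 2)];
console.log(sortByNumber(months).map(function (m) { return m.text; })); // [ 'Jan', 'Feb', 'Mar' ]

// A string-expecting operation uses .text, a number-expecting one uses .num.
console.log(months[0].text.slice(0, 1), months[0].num + 1); // M 4
```

Sorting the strings alone would give Feb, Jan, Mar; carrying the number alongside the text is what makes the calendar order come out right.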

Here is how one of the top contributors of QlikCommunity uses duals for Booleans: Rob Wunderlich’s Dual flags.

Further reading related to this topic:

-

Is it a string or a number?

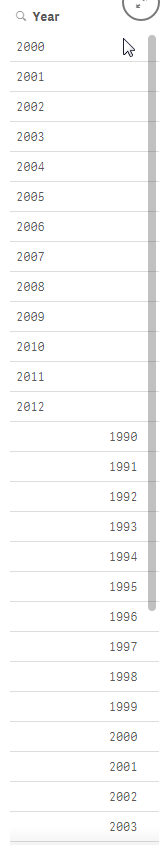

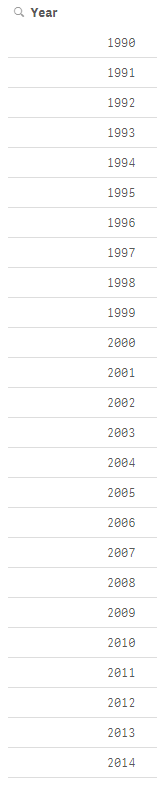

I am sure that I am not the only one who has added a filter pane to their Qlik Sense app and found a combination of strings and numbers when you were expecting all numeric data. This happened to me recently when I was building an app that had many data sources. I was loading a year field from each of the data sources but I soon realized that the data I was loading was not always numeric. So when I added a filter pane to my app and added the dimension year, I ended up with a list like the image below (on the left). Some of the years were left-aligned and some were right-aligned.

One of my data sources had years from 2000 to 2012 which were loading as strings (left-aligned) while my other data source had years from 1990 to 2014 that were right-aligned. My obvious problem is I want each year listed once and to be consistent across all my data sources so that when a user wants to select 2010, they do not have to select both the string and numeric representation of the year.

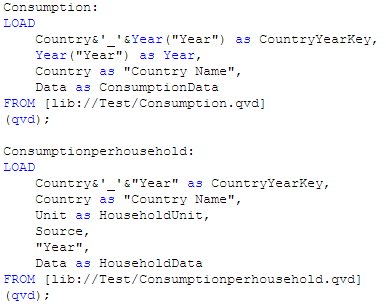

Part of the script that loaded these files looked like this:

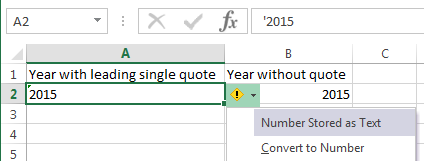

After some testing I realized that the second table shown above, Consumptionhousehold, was where the string year values were coming from. I did not generate the QVD being used for this app but I have found that sometimes numeric values are loaded as strings if there are additional characters in the original field. For instance, in Excel, a year column may be stored as text if there is a leading single quote. If you look at the image below, you can see that the year in column A is stored as text because it starts with a single quote (‘2015). In column B, the year is numeric because it is just numbers. When both these values are loaded into Qlik Sense, they are evaluated differently and can lead to problems.

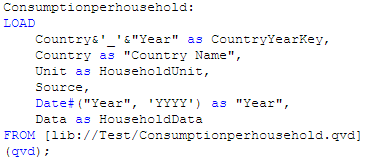

To resolve this issue I modified my script to evaluate the string year as a date by using the Date# function, adding the format parameter (‘YYYY’) to indicate how the year is formatted (see image below). Going forward, I added this modification to my script every time I loaded year just to be on the safe side. You can read more about the Date function in the blog post titled The Date Function.

Once this modification was made I ended up with a list of years from 1990 to 2014 and they were all right-aligned and listed only once.
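The same problem, and the same kind of fix, can be shown outside Qlik Sense. The JavaScript sketch below is only an analogy to interpreting the text years in the load script; the function names and the sample values are made up.

```javascript
// Interpret a year that may arrive as a number, a numeric string, or a
// string with stray characters such as a leading single quote.
function toYear(value) {
  if (typeof value === 'number') return value;
  const digits = String(value).trim().replace(/^'/, '');
  return /^\d{4}$/.test(digits) ? Number(digits) : null; // null: not a year
}

// Distinct, numerically sorted years from mixed input.
function distinctYears(values) {
  const years = values.map(toYear).filter(function (y) { return y !== null; });
  return Array.from(new Set(years)).sort(function (a, b) { return a - b; });
}

console.log(distinctYears([2010, '2010', "'2011", 1990])); // [ 1990, 2010, 2011 ]
```

Before normalizing, 2010 and '2010' count as two different values, which is the duplicate-looking entry seen in the filter pane.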

While this is a small problem, it can cause confusion when you are building an app especially if you do not realize it has happened. I noticed the issue because I added a filter pane to my app but had I not done that and tried to use the year field in a chart or in set analysis, my results would have been incorrect. The lesson I took from this is to always check to make sure that the data I am loading is loading as expected. I can easily test this by adding filter panes to my app or looking at the data model viewer to ensure that everything is loaded as planned. Good luck scripting.

Thanks,

Jennell

-

Qlik Community Home Page Revision

Hello Qlik Community Members,

We have just completed some updates we think you will find useful.

The categories on our Qlik Community Index page have been revised to provide easier navigation. The new categories are:

- Qlik Sense

- QlikView

- General

Under General you can find areas which are not product specific - such as Community Information, Education Services, Technical Blogs and Groups.

We have also added two resource areas on the top right of the home page highlighting:

- Benefits of joining Qlik Community

- Links to popular getting started resources

We will be adding more getting started resources over the coming weeks to help new members learn best practices like where and how to post their questions. We hope you like the updates.

If you have a recommendation for resource materials, please contact sara.leslie@qlik.com.

-The Qlik Community Team

-

Engine API - Displaying Time of the created QVF on different regions

As I was working on an app that displays live data, I was asked to display the time when the QVF was created. In Qlik Sense we have the function ReloadTime(), but how do we call that in our script from the API, and how do we display it based on our region, since our server may very well be in another one?

In an earlier post, I showed how to connect to our app with qsocks and the Engine API: Engine API and qSocks - Connecting and getting a list of available apps

After we establish our connection and create the session, we will create our HyperCube definition, here a simple ListObject.

var obj = {

"qInfo": {

"qId": "LB02",

"qType": "ListObject"

},

"qListObjectDef": {

"qStateName": "$",

"qDef": {

"qFieldDefs": [

"Round"

],

"qFieldLabels": [

"Round"

],

"qSortCriterias": [{

"qSortByExpression": -1,

"qExpression": {

"qv": ""

}

}]

},

"qInitialDataFetch": [{

"qTop": 0,

"qLeft": 0,

"qHeight": 100,

"qWidth": 2

}]

}

};

This only returns one field, in this case "Round". In order to get the time we will need to create an expression and get it as a second field. So we add the following right after "qInitialDataFetch":

,

"qExpressions": [{

"qExpr": "=ReloadTime()"

}]
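Putting the two fragments together, the complete definition might look like the sketch below. It only rearranges the values already shown above into one object literal; nothing new is introduced.

```javascript
var obj = {
  qInfo: { qId: 'LB02', qType: 'ListObject' },
  qListObjectDef: {
    qStateName: '$',
    qDef: {
      qFieldDefs: ['Round'],
      qFieldLabels: ['Round'],
      qSortCriterias: [{ qSortByExpression: -1, qExpression: { qv: '' } }]
    },
    // qWidth is 2 so that both the field and the expression column are fetched.
    qInitialDataFetch: [{ qTop: 0, qLeft: 0, qHeight: 100, qWidth: 2 }],
    // Second column: the reload time, evaluated as an expression.
    qExpressions: [{ qExpr: '=ReloadTime()' }]
  }
};
```

Note that "qExpressions" sits inside "qListObjectDef", as a sibling of "qInitialDataFetch".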

So now we can create our session and grab all of the fields. For now, we will just focus on the time so here we will take it from the array returned and store it in a global variable reloadTime.

qsocks.Connect(app.config).then(function(global) {

global.openDoc(id).then(function(app) {

app.createSessionObject(obj).then(function(list) {

list.getLayout().then(function(layout) {

reloadTime = layout.qListObject.qDataPages[0].qMatrix[0][1].qText;

});

});

});

});
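The long property path in the callback throws if any level is missing, for example when the list comes back empty. A slightly more defensive version could look like this sketch; `extractReloadTime` is a hypothetical helper and the mock layout uses made-up values, shaped like the path used above.

```javascript
// Pull the text of the second column (the ReloadTime() expression) from the
// first row of a list object layout; returns null if the data is missing.
function extractReloadTime(layout) {
  var pages = layout && layout.qListObject && layout.qListObject.qDataPages;
  var row = pages && pages[0] && pages[0].qMatrix && pages[0].qMatrix[0];
  return row && row[1] ? row[1].qText : null;
}

// Mock layout with invented values, only to exercise the helper.
var mockLayout = {
  qListObject: {
    qDataPages: [{ qMatrix: [[{ qText: '1' }, { qText: '2015-10-01 14:30:00' }]] }]
  }
};

console.log(extractReloadTime(mockLayout)); // 2015-10-01 14:30:00
console.log(extractReloadTime({}));         // null
```

Inside the getLayout callback this would be used as `reloadTime = extractReloadTime(layout);`.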

Now we need to display this on our page but based on the timezone of the user.

First we need to turn the returned date into a JavaScript Date so we can work with it:

var lastReloadTime = new Date(reloadTime);

Get the server/user time difference in hours.

var timeDiff = lastReloadTime.getTimezoneOffset() / 60;

Now set the time to the user's time.

lastReloadTime.setHours(lastReloadTime.getHours() - timeDiff);

Finally, display it.

$('#lastReloadTime').html('LAST UPDATE: ' + lastReloadTime.toLocaleTimeString());
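The steps above appear to work when the server reports its reload time in UTC. If the server sits in some other time zone, one alternative is to make the server offset explicit. The sketch below assumes a 'YYYY-MM-DD hh:mm:ss' timestamp and a known server offset; both are assumptions for the example, and `parseServerTime` is a hypothetical helper.

```javascript
// Parse 'YYYY-MM-DD hh:mm:ss' as wall-clock time on a server whose offset
// from UTC (in minutes, east positive) is known, and return the instant.
function parseServerTime(text, serverOffsetMinutes) {
  var m = /^(\d{4})-(\d{2})-(\d{2})[ T](\d{2}):(\d{2}):(\d{2})$/.exec(text);
  if (!m) return null; // unexpected format
  var asUtc = Date.UTC(+m[1], +m[2] - 1, +m[3], +m[4], +m[5], +m[6]);
  return new Date(asUtc - serverOffsetMinutes * 60 * 1000);
}

var instant = parseServerTime('2015-10-01 14:30:00', 120); // server at UTC+2
console.log(instant.toISOString());        // 2015-10-01T12:30:00.000Z
console.log(instant.toLocaleTimeString()); // shown in the viewer's own time zone
```

Once the value is a proper Date for the right instant, toLocaleTimeString() takes care of the user's time zone without any manual hour arithmetic.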

-

Do you Qualify?

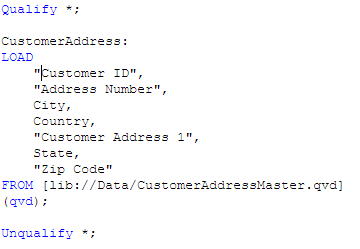

I was recently working on a project where I had several files that I needed to load in order to determine what data I needed and what data I did not need. I started by adding the files that I knew had data that I needed. Then I started to add the additional files to my data model one by one. Occasionally, I came across a file that had the same fields as a file I had already loaded. When I ran into this, I used the Qualify statement to load the new table so that I could compare the data to the data I already loaded. Using the Qualify statement adds the table name as a prefix to the field name. For me this was helpful because I did not want to create synthetic tables in my data model. I simply wanted to compare the data in the two tables to see if they were the same before I added it to my data model.

I used Qualify * to turn on the qualification of the field names. I used the wildcard to qualify all fields loaded after the Qualify statement.

I could have also listed the fields that I wanted to Qualify instead of using the wildcard by doing something like this:

Whenever the qualify statement is used, it will qualify all of the fields loaded after the qualify statement until the end of the script is reached or until the script reaches an Unqualify statement. The Unqualify statement turns the field qualification off. The syntax is similar to the Qualify statement. I can use a wildcard or a list of field names.

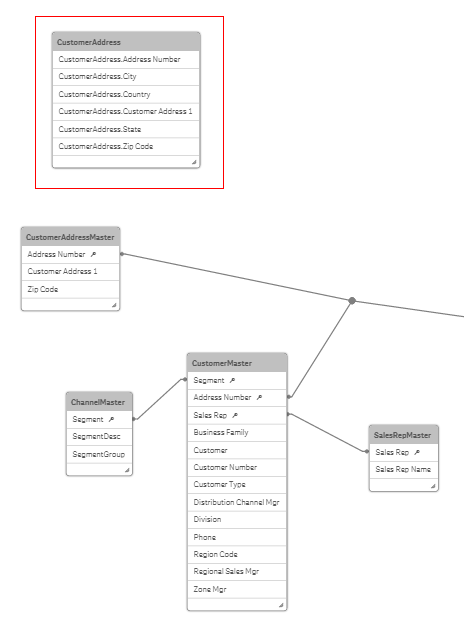

Once the fields are qualified, the field names will look like the image below and it will not be associated to the other tables in the data model since there are no matching field names.

Notice that CustomerAddress which is the table name is the prefix for all the fields in that table. Now that I have this table loaded, I can take a look at the data in this table and the previously loaded customer table to determine if this is the same data set and if I need to load the CustomerAddress table at all. It is a quick way to spot check the data before connecting it to the data model.

Now if I did not use the Qualify statement, my data model would have looked like the image below with a synthetic table because of the three field names that match (Address Number, Customer Address 1 and Zip Code) in the CustomerAddressMaster and CustomerAddress tables. Using Qualify prevented the synthetic table from being created.
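The effect of qualification on field-name matching can be sketched in JavaScript. This is an illustration only: the helper names are hypothetical, and "Customer Number" and "City" are invented fields added so that the two lists are not identical. The prefix is the table name followed by a dot.

```javascript
// Prefix every field name with its table name, as Qualify * does.
function qualify(tableName, fieldNames) {
  return fieldNames.map(function (f) { return tableName + '.' + f; });
}

// Field names two tables have in common; matching names are what associate
// tables, and several matches at once lead to a synthetic table.
function sharedFields(a, b) {
  return a.filter(function (f) { return b.indexOf(f) !== -1; });
}

var master = ['Customer Number', 'Address Number', 'Customer Address 1', 'Zip Code'];
var address = ['Address Number', 'Customer Address 1', 'Zip Code', 'City'];

console.log(sharedFields(master, address));                             // three matching names
console.log(sharedFields(master, qualify('CustomerAddress', address))); // []
```

With no names in common after qualification, the second table loads as an island, which is exactly what makes the side-by-side comparison possible.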

The Qualify and Unqualify statements can be used when building your data model and exploring your data sources. The qualification of the field names eliminates matching field names therefore loading each table as an island unassociated to the other tables in the data model. This provides an easy way to view the data sources before actually building the data model – utilizing Qlik Sense or QlikView to explore the data first. Hopefully, you will find these statements useful.

Thanks,

Jennell

-

Data Literacy

A skill set rarely discussed in the BI narrative is that of data literacy. Much is made of newer & more advanced visualizations, but the ability to understand what you are seeing and make smart decisions from that is incredibly valuable. Only a person with a great degree of data literacy can successfully both read & manipulate complex data to arrive at meaningful insights.

Reading & Writing

Data literacy comes in two parts: the front-end and the back-end. On the front-end a dashboard page requires the lowest degree of data literacy. Most people can read a well designed dashboard page and understand the general status of things. It is when the user advances to pages intended for critical analysis that the bar rises and users begin to drop out. Real analysis requires a data literate audience to get to the root cause of a KPI's status.

Marching hand-in-hand with front-end literacy is back-end literacy. While a great dashboard may not require much data literacy from the users, it requires a greater degree of data literacy from the person(s) who built it. What is the data being measured? Where does it come from? Are there compatibility issues between the data sets in an application? Building new visualizations or manipulating existing ones requires familiarity with the data as well as an understanding of how complex that data source is. Working with a simple data set requires relatively little data literacy, but the more complex the data, the greater the need for a data literate developer. Creating new objects is more than just technical development knowledge - it is understanding what you are measuring and why you want to measure it. Data literacy is often overlooked when it comes to the skill set of a great developer.

Increasing the data literacy of your organization, and yourself, is the key to spreading BI to the masses.

-

Creating a webpage based on the Qlik Sense Desktop Mashup API

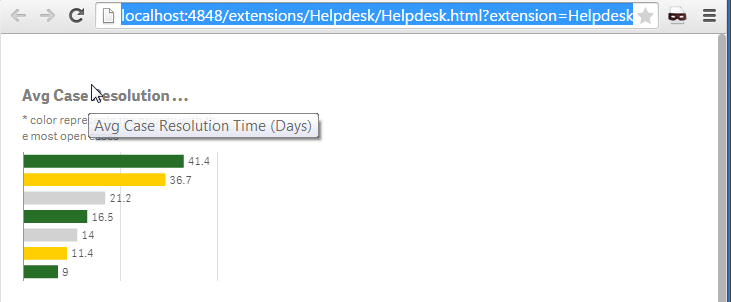

Required: Qlik Sense Desktop, Helpdesk Management.qvf

Files Created: Index.html, index.js

Ok, we created a beautiful visualization that everyone in the company is excited about. One day we are asked to publish this onto our website and we are thinking… is this possible? Yes it is! Qlik Sense comes with a built in API that we can get any object that we have created in our app and put it on our website. In this article I will give you step by step directions on how to do that.

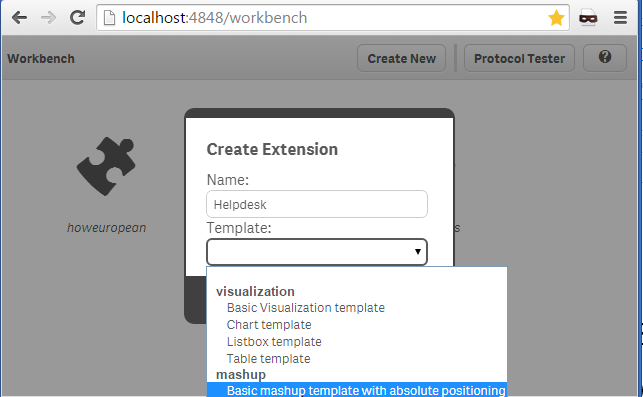

- Make sure that you have your Qlik Sense Desktop open. This will start a local server on port 4848 that you will be able to access your apps from. Name your App "Helpdesk" and from the template drop down select "Basic Mashup ..." and hit "Create"

- Now we need to enter into the app, so click on "Helpdesk" that was just created and from the popup window click on "Edit".

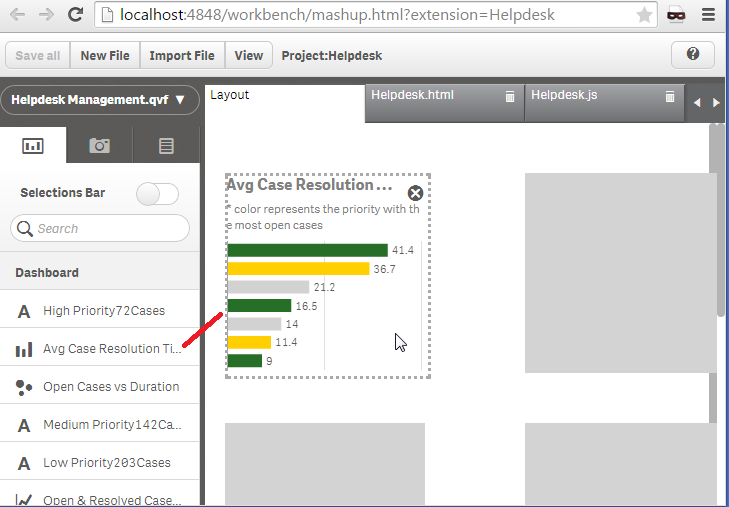

- There are 4 panels. The far left is where we choose our app and the objects that we will use in our webpage. On the right you will see the "Layout" tab. This is the place that we will drag our objects to, along with the 2 pages that are automatically created for us, "Helpdesk.html" and "Helpdesk.js". The workbench will create the basic code for us to get the objects from the Qlik Sense Desktop and the html that will display these objects.

- Now, let's select our app from the left. Click on "Please choose an app" and select the "Helpdesk Management.qvf". All of the objects will be displayed for us to use. Let's select one bar graph to use in our webpage. Click on "Avg Case Resolution Time (Days)" and drag it to the "Layout" panel, onto the first grayed rectangle.

If you click on the "Helpdesk.html", you will see the included libraries. The css is needed for proper visualization and require.js is needed to connect to the Qlik Sense Desktop and get the app,

<link rel="stylesheet" href="/resources/autogenerated/qlikui.css">

<link rel="stylesheet" href="/resources/assets/client/client.css" media="all">

<script src="/resources/assets/external/requirejs/require.js"></script>

our js file

<script src="Helpdesk.js"></script>

and our placeholder for our objects

<div id="QV01" style="position: absolute; top: 50px; left: 20px; width: 200px; height: 200px;" class="qvobject"></div>

- Now, moving on to the generated JavaScript file. This is composed of two parts. The first is the configuration.

var config = {

host: window.location.hostname,

prefix: "/",

port: window.location.port,

isSecure: window.location.protocol === "https:"

};

require.config( {

baseUrl: ( config.isSecure ? "https://" : "http://" ) + config.host + (config.port ? ":" + config.port: "") + config.prefix + "resources"

} );

- and then we have the actual connection code where we get the app and the specific object. The getObject method gets the specified object and displays it in the div with id="QV01".

require( ["js/qlik"], function ( qlik ) {

var app = qlik.openApp('Helpdesk Management.qvf', config);

app.getObject('QV01','a5e0f12c-38f5-4da9-8f3f-0e4566b28398');

} );

<div id="QV01" style="position: absolute; top: 50px; left: 20px; width: 200px; height: 200px;" class="qvobject"></div>

- That's it! We created our first Mashup. Make sure to click on "Save All" and preview by clicking on the "View" button.

- The files are located under:

C:\Users\<username>\Documents\Qlik\Sense\Extensions\Helpdesk

I hope this was helpful enough to get you started with displaying your visualizations on a webpage. If you have this app published on your QMC, change the host in the JavaScript (step #6) to that of your server and the id of the app (step #7) to something like

var app = qlik.openApp('bb4a797c-b483-462b-84d4-53238ce1eeb1', config);
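When switching between Desktop and a server, it can help to check what the baseUrl expression from the configuration step actually evaluates to. The sketch below pulls that same string construction into a function so it can be tried with explicit values instead of window.location; `buildBaseUrl` is a hypothetical name and the sample values are only examples.

```javascript
// Same string construction as the generated require.config call, pulled into
// a function so it can be tried with explicit values.
function buildBaseUrl(config) {
  return (config.isSecure ? 'https://' : 'http://') + config.host +
    (config.port ? ':' + config.port : '') + config.prefix + 'resources';
}

console.log(buildBaseUrl({ host: 'localhost', prefix: '/', port: '4848', isSecure: false }));
// http://localhost:4848/resources
console.log(buildBaseUrl({ host: 'ServerName', prefix: '/', port: '', isSecure: true }));
// https://ServerName/resources
```

An empty port is simply left out, which is why the same generated code works both on port 4848 locally and on a server reached over the default HTTPS port.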

More Info regarding the Qlik Desktop Workbench

- Make sure that you have your Qlik Sense Desktop open. This will start a local server on port 4848 that you will be able to access your apps from. Name your App "Helpdesk" and from the template drop down select "Basic Mashup ..." and hit "Create"