
Search our knowledge base, curated by global Support, for answers ranging from account questions to troubleshooting error messages.

Featured Content

-

How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Phone Numbers

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

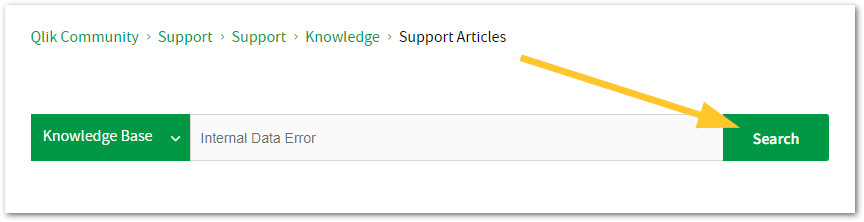

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our Youtube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar to facilitate knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to register.myqlik.qlik.com

If you already have an account, please see How To Reset The Password of a Qlik Account for help using your existing account.

- You must enter your company name exactly as it appears on your license, or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. NOTE, this link will expire after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat, by clicking Chat Now on any Support Page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- With items that will take further investigating, we will create a case on your behalf with step-by-step intake questions.

3. Qlik Support Case Portal

Log in to manage and track your active cases in the Case Portal.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter problem type and issue level. Definitions shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not Severity 1 Error or Severity 2 Issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Phone Numbers

When other Support Channels are down for maintenance, please contact us via phone for high severity production-down concerns.

- Qlik Data Analytics: 1-877-754-5843

- Qlik Data Integration: 1-781-730-4060

- Talend AMER Region: 1-800-810-3065

- Talend UK Region: 44-800-098-8473

- Talend APAC Region: 65-800-492-2269

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents

-

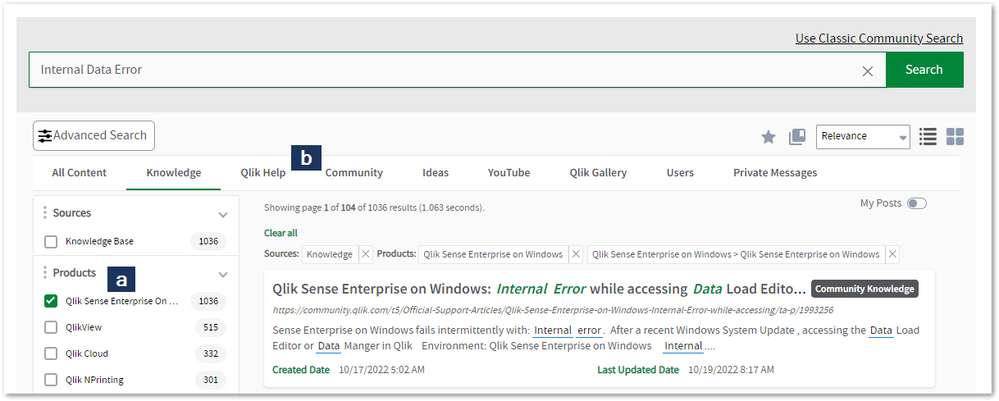

Qlik Sense Desktop hangs at "Initializing Add data"

When opening the Add data wizard or dragging and dropping a data file into Qlik Sense Desktop, the screen hangs and the following message is displayed:

Initializing Add data

Resolution

- Make sure the user running Qlik Sense Desktop has full control to folder C:\Users\<username>\AppData\Local\Programs\Qlik\Sense\DataPrepService

- Run the matching installation file with admin privilege and repair the installation. You may need to download it from the Qlik download site again: https://demo.qlik.com/download/

- Internet Explorer limits the number of WebSockets. It may be necessary to increase them: Increase Maximum Number of Websocket per connections allowed for Internet Explorer (Cannot create connection) - Error Occurred on REST Endpoint Call

In some cases, it was necessary to uninstall and reinstall as follows:

- Uninstall Qlik Sense Desktop from Control Panel / Uninstall programs

- Delete the program folders located in:

C:\Users\<username>\AppData\Local\Programs\Qlik\Sense

C:\Users\<username>\AppData\Local\Programs\Common Files\Qlik\Custom Data

- Install Qlik Sense Desktop by signing in as an Administrator and right-clicking the installer to select Run as Administrator

- Select the Custom Installation option when prompted

- Install Qlik Sense Desktop to a folder to which all users have full access rights

- Select the same folder on the next screen

- Let the installation continue as normal

-

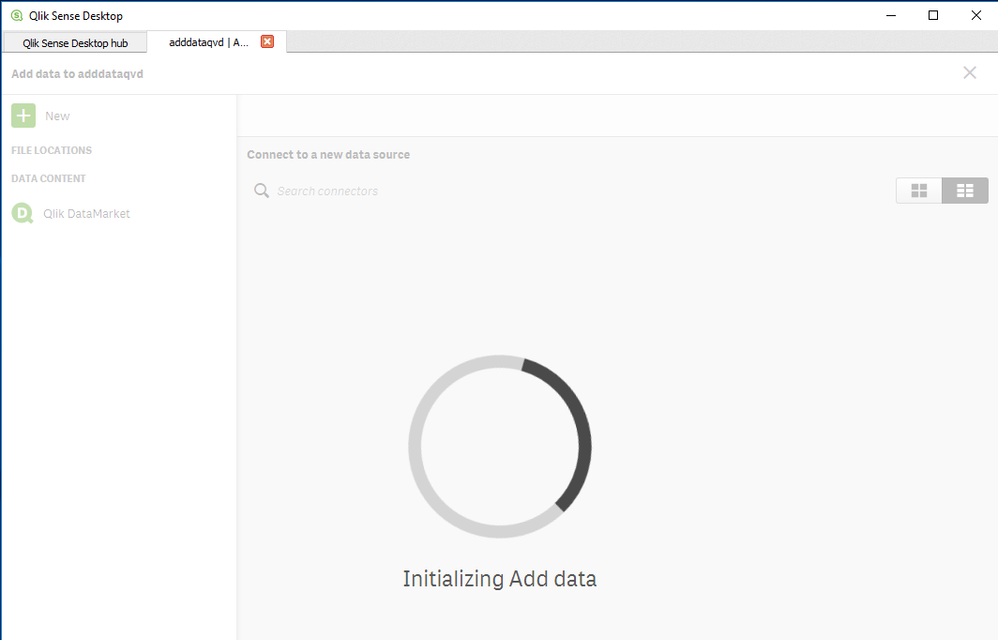

Tasks in the Qlik Sense Management Console don't update to show the correct stat...

After executing or modifying tasks (changing owner, renaming an app) in the Qlik Sense Management Console, refreshing the page does not show the correct task status. The issue affects the Content Admin and Deployment Admin roles.

The behaviour began after an upgrade of Qlik Sense Enterprise on Windows.

Fix version:

This issue can be mitigated beginning with August 2021 by enabling the QMCCachingSupport Security Rule.

Solution for August 2023 and above:

Enable QmcTaskTableCacheDisabled.

To do so:

- Navigate to C:\Program Files\Qlik\Sense\CapabilityService\

- Locate and open the capabilities.json

- Modify or add the QmcTaskTableCacheDisabled flag and set its enabled value to true

{"contentHash":"CONTENTHASHHERE","originalClassName":"FeatureToggle","flag":"QmcTaskTableCacheDisabled","enabled":true}

Where CONTENTHASHHERE matches the number in all other features listed in the capabilities.json.

Example:

This will disable the caching on the tasks table only, leaving the overall QMC Cache intact to gain performance. If you had previously set QmcCacheEnabled, QmcDirtyChecking, and QmcExtendedCaching to false, please set them to true again.

- Restart the Qlik Sense services
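The flag edit described above can be sketched in Python. This is a minimal illustration only: it assumes capabilities.json holds a JSON array of flag objects shaped like the line shown earlier, which you should confirm against your own file. The helper name set_feature_flag and the sample hash value are hypothetical.

```python
import json


def set_feature_flag(entries, flag, enabled):
    """Return a copy of `entries` with `flag` set to `enabled`, adding it if missing.

    Assumption: `entries` is the parsed content of capabilities.json,
    a list of dicts with "contentHash", "originalClassName", "flag", "enabled".
    """
    updated = [dict(entry) for entry in entries]
    for entry in updated:
        if entry.get("flag") == flag:
            entry["enabled"] = enabled
            return updated
    # Flag not present: reuse the contentHash shared by the existing entries.
    content_hash = updated[0]["contentHash"] if updated else "CONTENTHASHHERE"
    updated.append({
        "contentHash": content_hash,
        "originalClassName": "FeatureToggle",
        "flag": flag,
        "enabled": enabled,
    })
    return updated


# Made-up sample standing in for the parsed file content.
sample = [
    {"contentHash": "123", "originalClassName": "FeatureToggle",
     "flag": "QmcCacheEnabled", "enabled": True},
]
result = set_feature_flag(sample, "QmcTaskTableCacheDisabled", True)

# json.dumps writes Python True/False as lowercase true/false,
# which matters because the file is case sensitive.
serialized = json.dumps(result[-1])
print(serialized)
```

Working on a copy and writing the result back only after reviewing it keeps the original file recoverable. Take a backup of capabilities.json before changing it.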

Workaround for earlier versions:

Upgrade to the latest Service Release and disable the caching functionality:

To do so:

- Navigate to C:\Program Files\Qlik\Sense\CapabilityService\

- Locate and open the capabilities.json

- Set the following flags to false

- QmcCacheEnabled

- QmcDirtyChecking

- QmcExtendedCaching

- Restart the Qlik Sense services

NOTE: Make sure to use lower case when setting values to true or false as capabilities.json file is case sensitive.

Should the issue persist after applying the workaround/fix, contact Qlik Support.

Internal Investigation ID(s):

Environment

-

Qlik Data transfer Feb 2021 new prefix behaviour

There's a new behaviour listed in the improvements section of the release notes. In previous versions, extracted QVDs were prefixed with the connec...

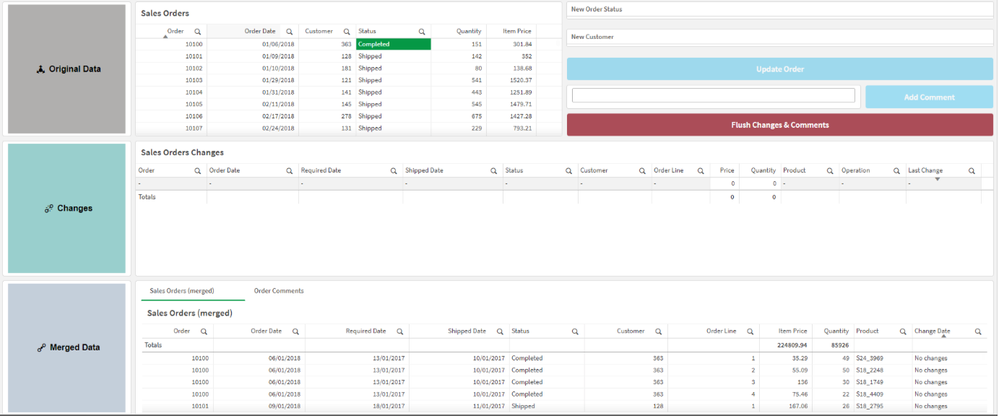

Make your Qlik Sense Sheet interactive with writeback functionality powered by Q...

Table of contents:

- Writeback use case

- Two ways to execute an automation from a Qlik Sense Sheet

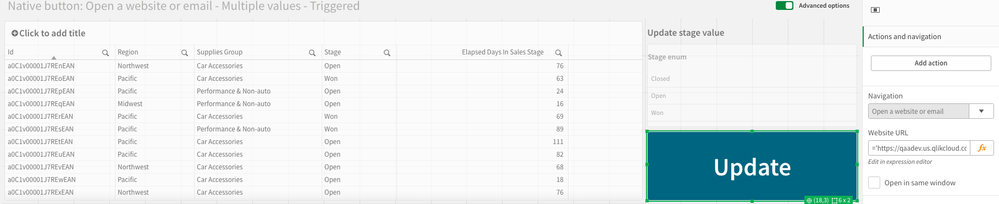

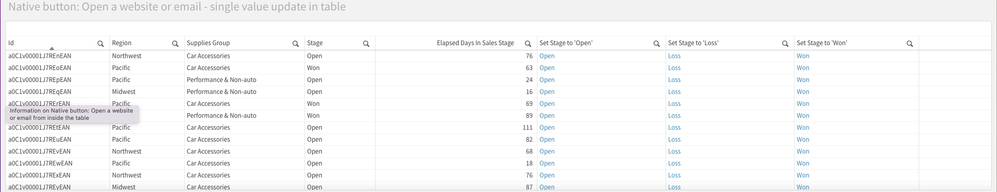

- 1. Native 'execute automation' action in the Qlik button

- 2. Link a triggered automation from your Qlik Sense Sheet.

- Example 1: Open website or URL (button)

- Example 2: Add an action link in your straight table

- Example 2: Extension with input forms

- Additional Notes

- Demo application with automations

-

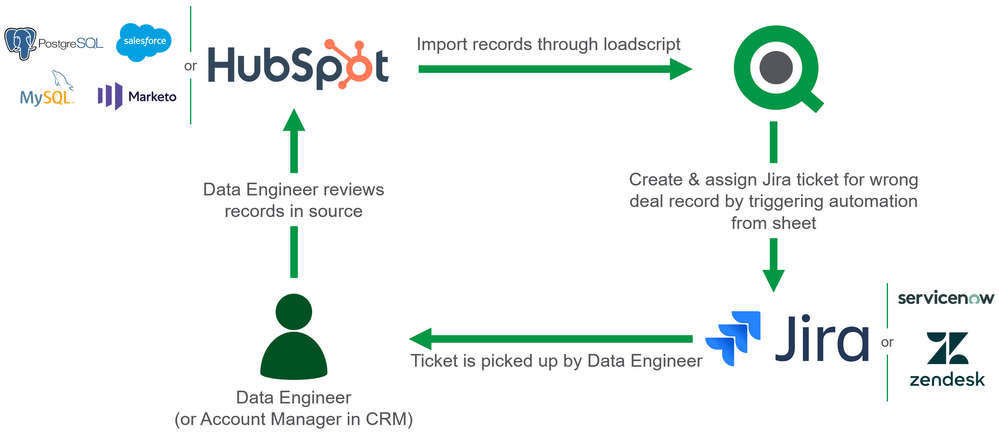

How to build a write back solution with native Qlik Sense components and Qlik Ap...

This article provides a step-by-step guide on building a write back solution with only native Qlik components and automations.

Content:

- Environment

- Write back use cases

- 1. Ticket creation

- 2. Data annotation

- 3. Update records

- Tutorial: ticket creation

- 1. Configuring the button in the app

- 2. Configuring the automation

- Configuring the toast notification

- Reloads

- Limitations

Disclaimer for reporting use cases: this solution could produce inconsistent results in reports produced with automations; when using the button to pass through selections, the intended report composition and associated data reduction for the report may not be achieved. This is due to the fact that the session state of Qlik Application Automation cannot be transferred to the report composition definition that is passed to the Qlik Reporting Service.

Environment

- Qlik Sense app

- Qlik Application Automation

- A business tool that's used as the data source (we'll use HubSpot in this example)

Write back use cases

When analyzing results in a Qlik Sense app, you may spot a mistake in your data or something that seems odd. To address this, you may want someone from your team to investigate, or you may want to update data in your source systems directly without leaving Qlik. Or maybe your data is fine, but you want to add a new record from within Qlik without having to open your business application. These scenarios fit the following use cases:

- Ticket creation: create a ticket to ensure a data review or update task will get followed up by your data team

- Data annotation: add a comment or tag to one or more records in your source data

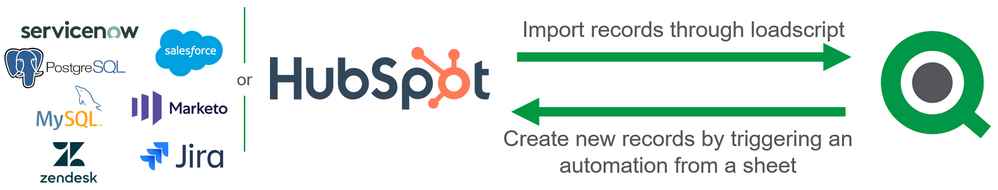

- Data change: add new records or update existing ones

1. Ticket creation

This is the least intrusive form of writing back that delegates the change to someone in your data team. The idea is that you create a ticket in a task management system like Jira or ServiceNow. Someone from your team will then pick up the ticket, investigate your comment, and review the data. The difference with sending an alert or email is that the ticketing system guarantees that the request is tracked.

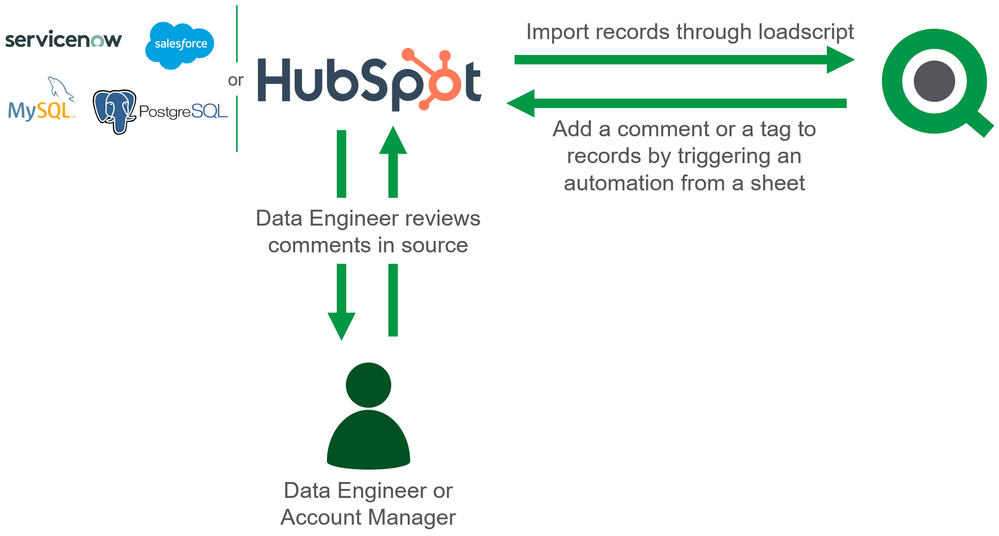

2. Data annotation

Another option to communicate changes is to write a comment or a tag for one or more records directly to the source system. This could be a comment on a deal record in your CRM or it could be stored in a separate database table if you're loading data from a database.

3. Update records

The final use case allows for updating records directly from within the sheet. Make sure you know who has access to the button before setting this up since this will allow users to change records directly.

Tutorial: ticket creation

All the above use cases can be realized in the same way: by configuring a native Qlik Sense button in your sheet to run an automation. Before you start this tutorial, make sure you already have an app and a new, empty automation. The tutorial has 2 parts:

- Configuring the button in the app

- Configuring the automation

1. Configuring the button in the app

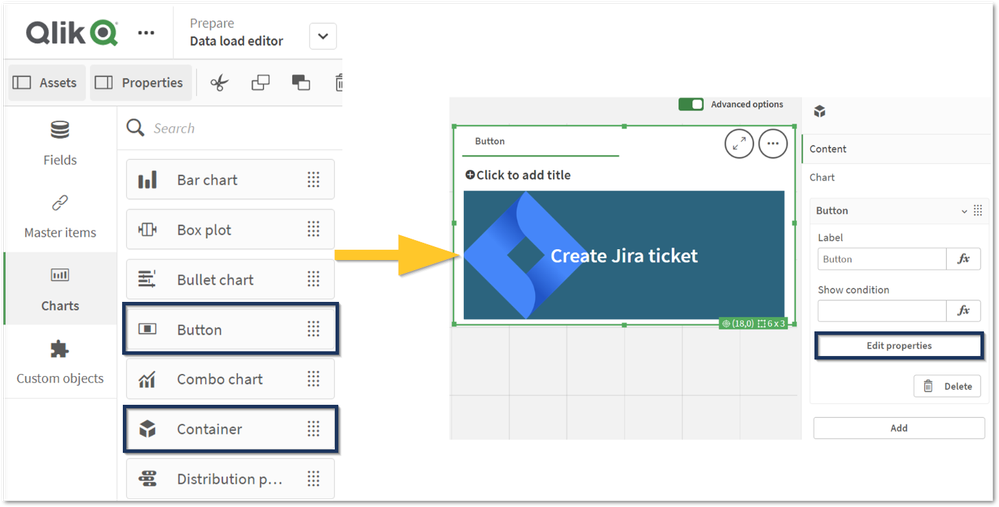

To configure the app, we'll use the following native Qlik Sense components:

- Button: Used to trigger the automation.

- Variable input: Used to allow users to specify parameters to use in the automation (for example, a variable to store a comment).

- Container: Used to group the variable inputs and the button for a better user experience.

- Table: Used to easily select records for the data annotation or ticket creation use case.

Steps:

- Open your app and add the Container component to a sheet that already contains a Table with records. Then add (a) Button to the Container component. To configure the button, click the (b) Container and go to (c) Edit properties on the button. Feel free to give the button a proper label. In this tutorial, we'll use "Create Jira ticket".

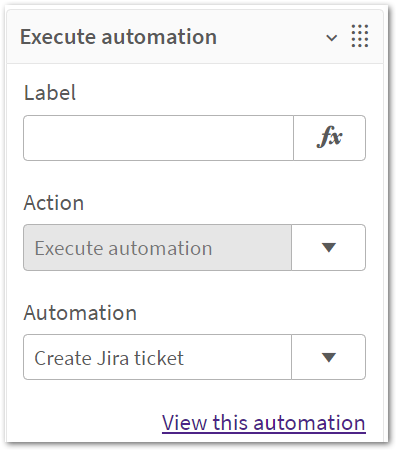

- Configure the button under the 'Actions and navigation' tab by adding a new action and setting it to 'Execute automation' and configure the automation parameter to use the empty automation you created earlier. You can only configure an automation that you own. It is not possible to configure someone else's automation. Tip: you can use the 'View this automation' link to open the automation editor in a new tab.

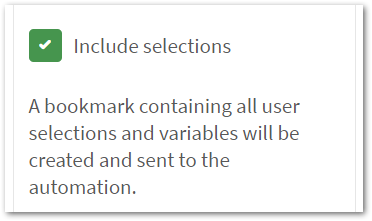

- Check the "Include selections" option. This way, on each click, the button will create a temporary bookmark and send its ID to the automation. The temporary bookmark contains the selections that were active when the button was clicked and also the variable state which contains any values the user has supplied for variables through the Variable Input components.

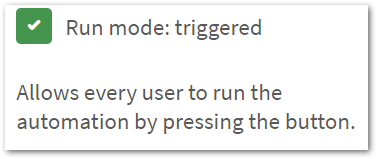

- Check the "Run mode: triggered" option as well. This will allow everyone who has access to the sheet to run the automation by clicking the button. If you don't select this checkbox, the automation will be executed over an API call, and only users with permission to run the automation will be able to run it through the button. Currently, only the automation owner has this permission.

- Enable the "Show notifications" toggle. This will send a toast notification back to the user in the sheet after the automation completes. Feel free to increase the duration.

- Add new variables to your app. Do not use load script variables for this; instead, create the variables through the Edit Sheet page. Create the following variables, giving each one only a name and leaving the other fields blank.

- title_for_jira

- comment_for_jira

- assignee_for_jira

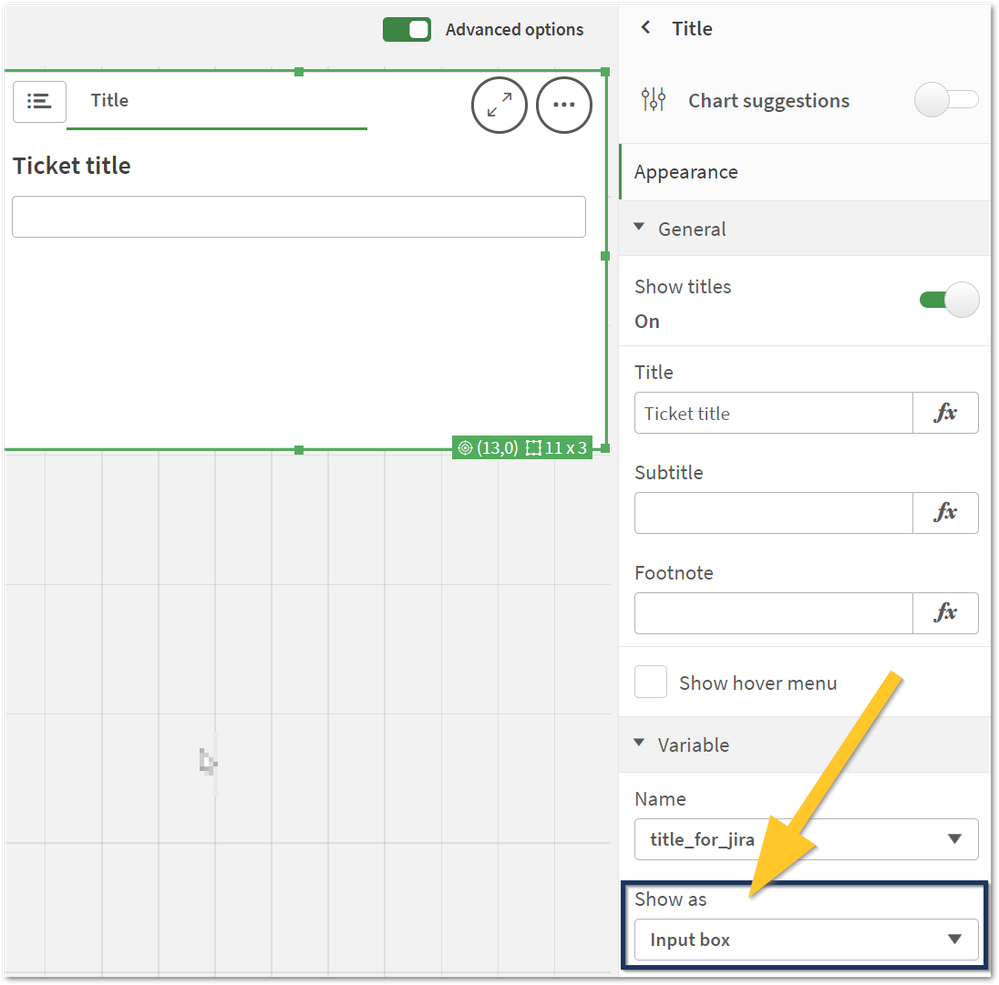

- Add a Variable Input component for each variable to the Container component that already has the Button from earlier. Re-arrange the components in the Container so the Button is last. Set the 'Show as' parameter for the Variable input for the ticket title and comment to the 'Input box'. For the ticket assignee, set it to 'Drop down'.

Note: you'll find the Variable Input under the 'Custom objects' category.

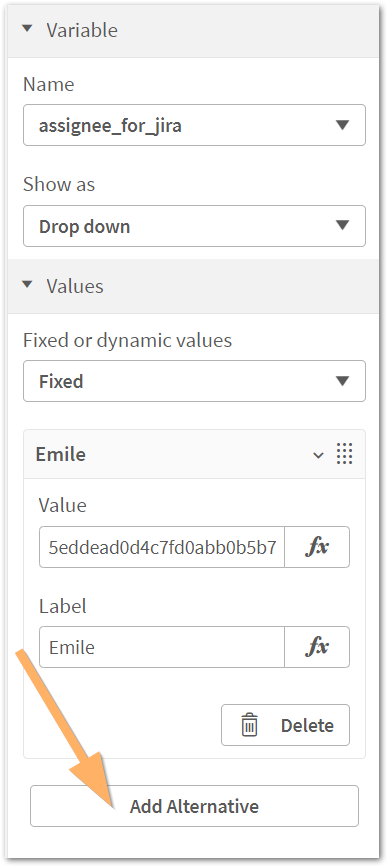

- To configure the dropdown, you'll need the user IDs of the possible assignees for the Jira ticket. One way of getting those is to use the List Users block in the Jira connector in an automation. Once you have the IDs, configure the dropdown 'Values' parameter by adding alternative values. The Value should be the user's ID and the Label should be the user's name.

- Your sheet should now look like this:

Tip: using a Container component will allow your variable inputs & button to scale better for smaller screens.

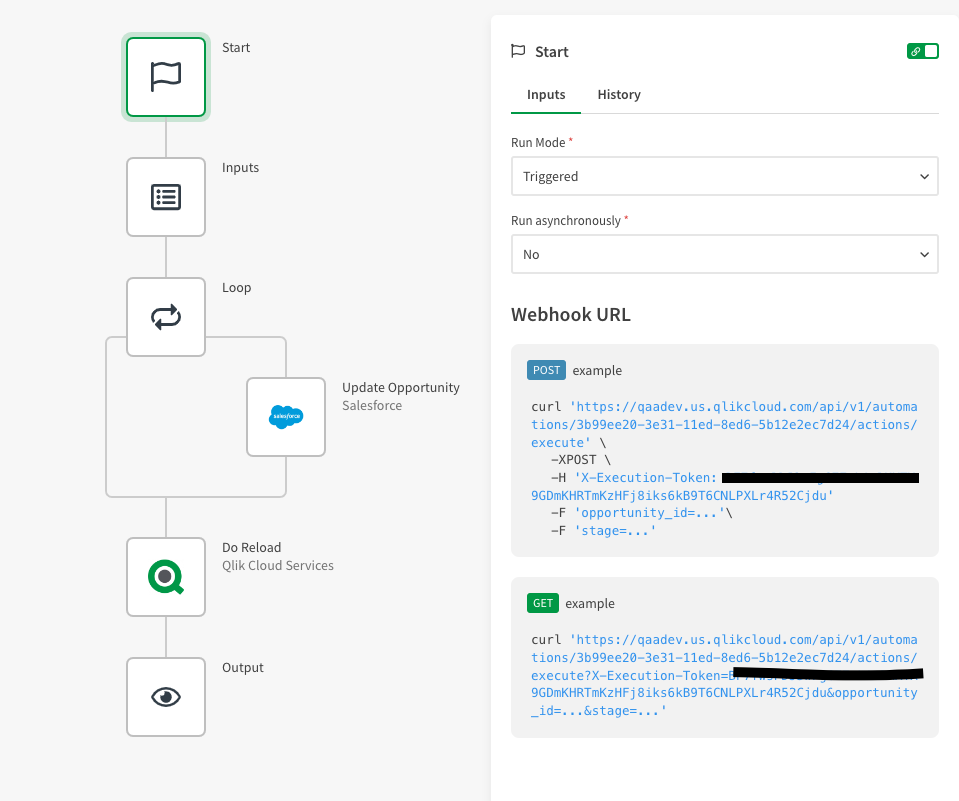

2. Configuring the automation

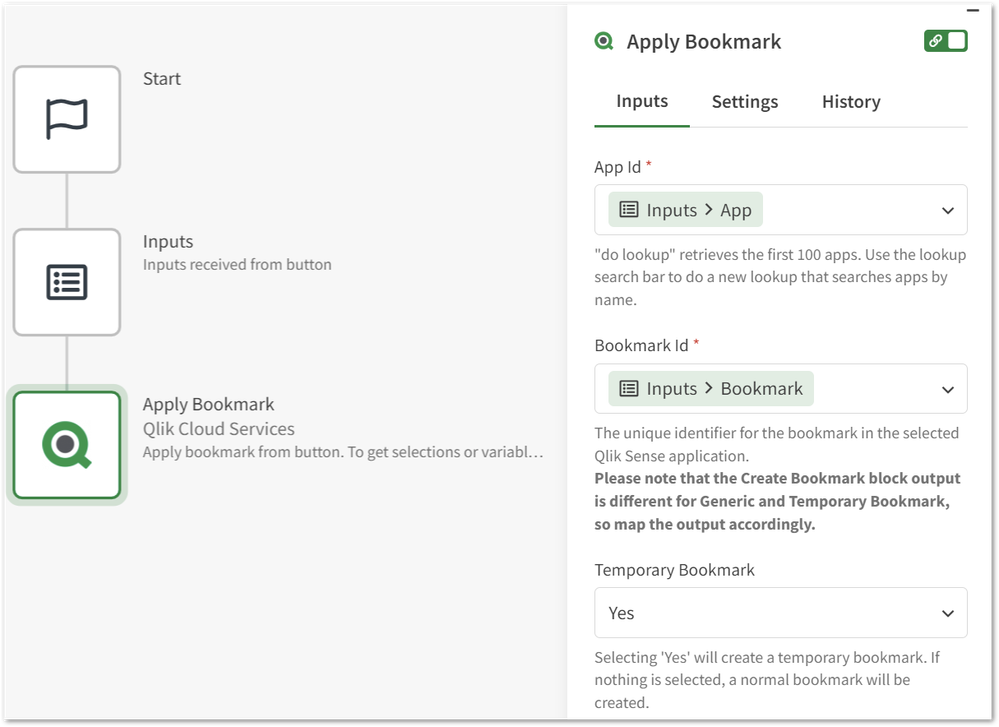

- Click the 'Copy input block' button in the Button's configuration section. This will copy a preconfigured Inputs block and Apply Bookmark block to your clipboard to get you started with the automation. Next, click the 'View this automation' link to open the automation editor in a new tab.

- Right-click in the automation canvas and select 'Paste Block(s)'. Connect the new blocks to the Start block. The Apply Bookmark block will ensure that any selections applied by the user, together with the variables state (whatever the user configured for the variables before clicking the button), are available in the automation session.

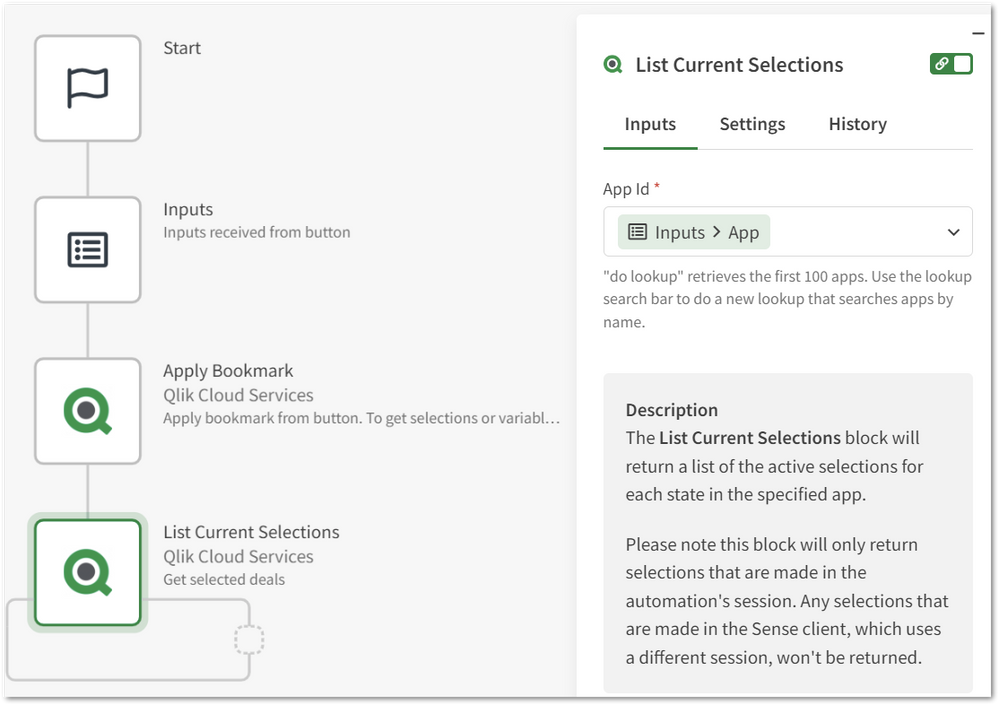

- Add a List Current Selections block, and configure it to use the App parameter provided through the Inputs block. This block will return all records the user selected (because they're sent over through the temporary bookmark).

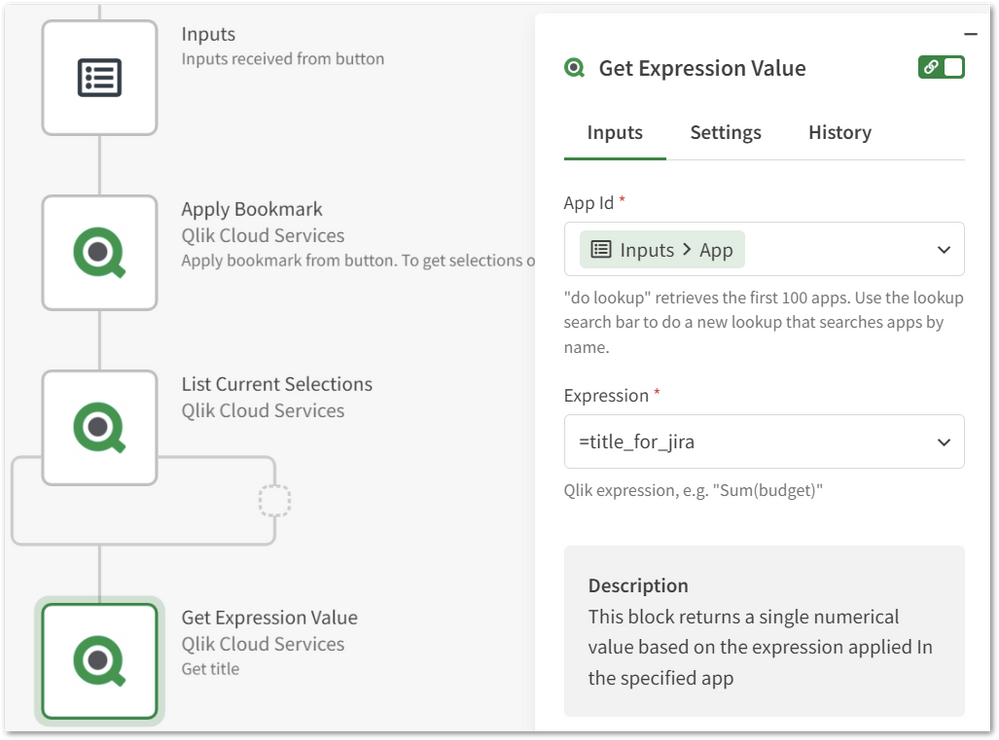

- Add the Get Expression block for each variable. Configure it to use the App parameter provided through the Inputs block. Use the following format for the expression, =variablename. For example: =title_for_jira. Do the same for the comment and assignee variables.

- If you haven't already, this is a good point to make a selection, configure the Variable Inputs and click the button from within the app and make sure that each block is returning the correct selections/variable values. Doing this will make it easier to configure the other blocks.

- Add a Create Issue block from the Jira connector and configure the following parameters by using the do lookup functionality. Project, Issue Type & Priority. These will be hard-coded for each new issue.

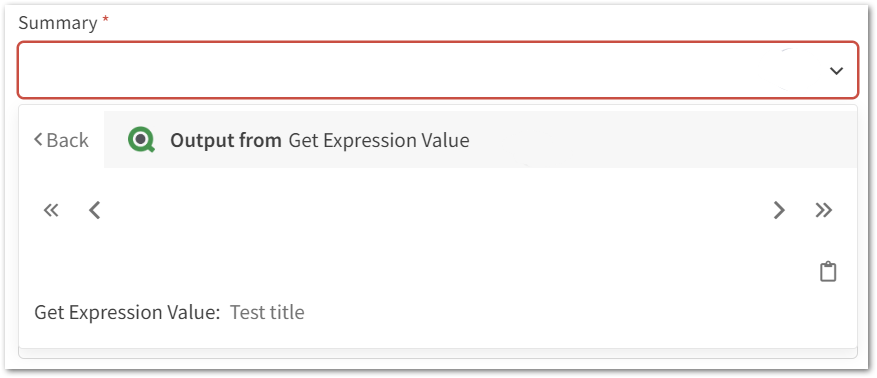

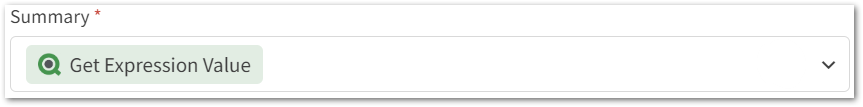

Tip: use a staging or test project in Jira while building the automation.

- Map the expression value for the Summary parameter to the output of the Get Expression Value block that's retrieving the ticket title. Do the same for the assignee and set the Comment variable as the ticket's description.

- The deal IDs should be added as a comma-separated list to the issue's Description:

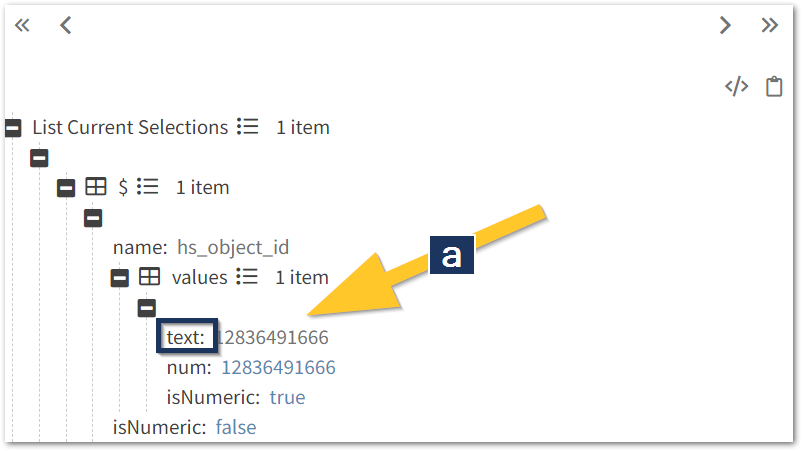

- Click the description input field and select the output from the List Current Selections block. Click the (a) values list and choose the 'Select first item from list' option on the next screen:

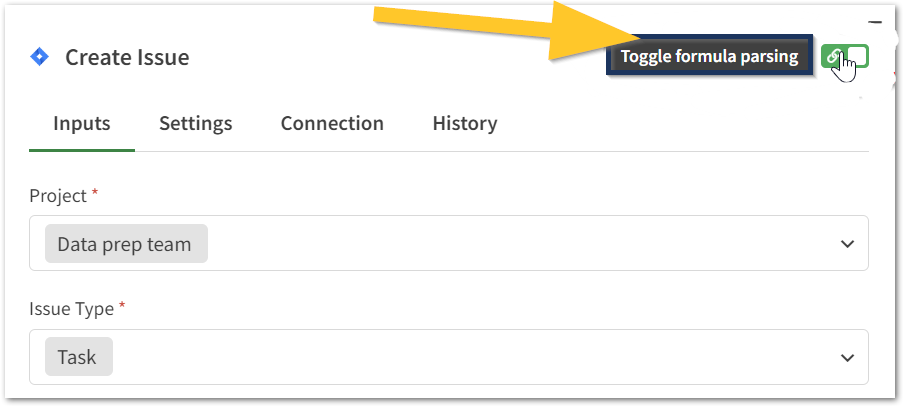

Upon automation run, this will resolve to the first text value selected for the field hs_object_id (which corresponds to the deal ID from HubSpot). To update this to a comma-separated list of IDs, the mapping must first be changed to output a list of all values for hs_object_id. To do this, toggle the formula parsing:

- Change the final 0 in the formula to an asterisk '*', and turn the formula parsing back on.

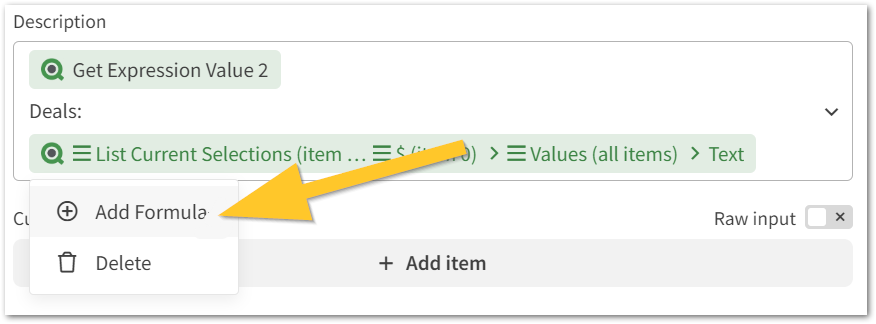

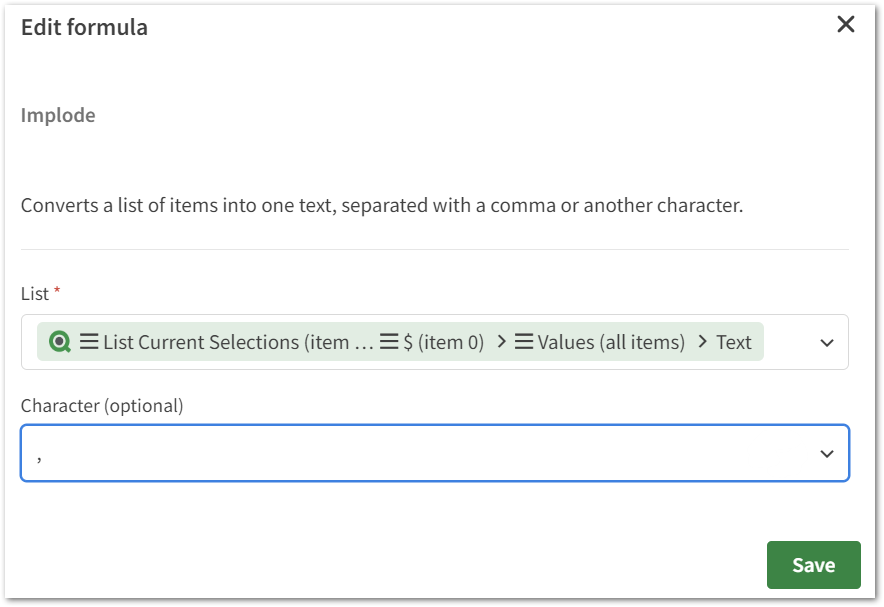

- Click the mapping and choose 'Add formula' from the context menu. Then, search for the Implode formula. This formula will turn a list into a comma-separated list. Configure it to use a comma as a separator:

- Do a new test run by selecting multiple deals in your sheet and then clicking the button. This should create a new ticket in Jira with the deal IDs as a comma-separated list.


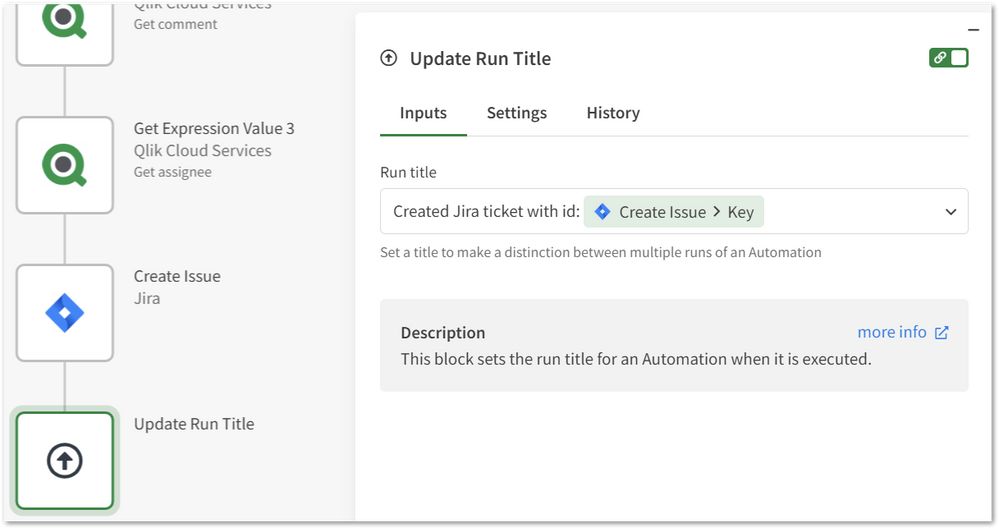

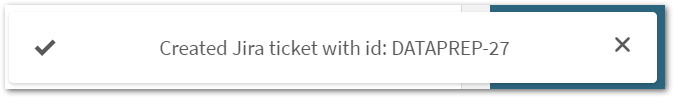

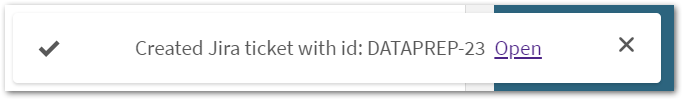

- Add an Update Run Title block to the end of the automation. This will show a toast notification to the user who clicked the button after the automation is completed. Learn more about configuring this and who can see the notification in the next part of this article: "Configuring the toast notification".

The resulting toast notification in the Qlik Sense app:

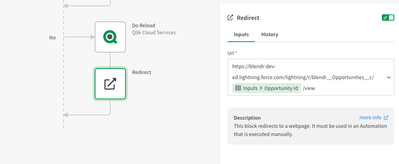
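The difference between the 'first item' mapping and the Implode step used for the ticket description earlier in this tutorial can be pictured with a short Python sketch. It only illustrates the effect on a list of values; it is not how the automation formulas are implemented, and the function names and IDs are made up.

```python
def first_item(values):
    """Mimic a mapping that ends in index 0: only the first selected value."""
    return values[0]


def implode(values, separator=","):
    """Mimic the effect of an Implode formula: join a list into one string."""
    return separator.join(str(value) for value in values)


# Made-up deal IDs standing in for the selected hs_object_id values.
selected_ids = ["1001", "1002", "1003"]

print(first_item(selected_ids))  # prints 1001
print(implode(selected_ids))     # prints 1001,1002,1003
```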

Bonus: add a link to the toast notification

Instead of showing a plain message in the toast notification, it's also possible to include a link to point the user to a certain resource. This can be done by configuring the Update Run Title block with the following snippet:

{"message":"Ticket created", "url": "https://<link to jira ticket>"}

Configuring the toast notification

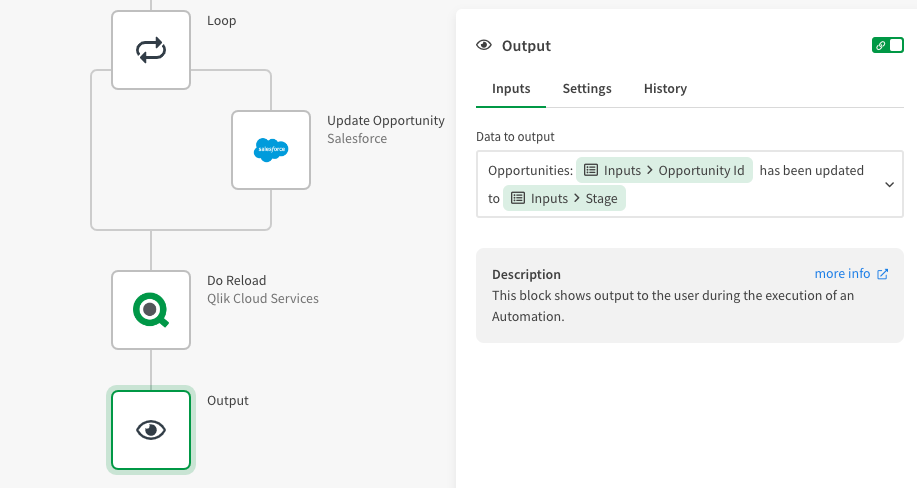

Depending on the button's configuration and the automation run mode, use either the Update Run Title block or the Output block to show the toast notification.

See the below table for each option:

| Run mode configuration in the automation | Run mode in the button | Block for toast notification | Who can see the notification |
| --- | --- | --- | --- |
| Triggered async | Triggered | Update Run Title | Automation owner only |
| Triggered sync | Triggered | Output | Everyone |
| Triggered sync | Not triggered | Update Run Title | Automation owner only |

The run mode in the button can be configured by toggling the 'Include Selections' option in the button's settings:

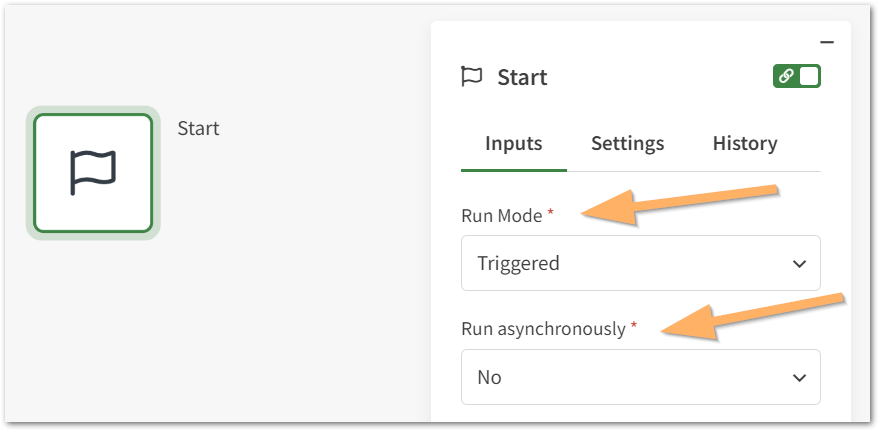

The run mode in the automation can be configured here in the Start block:

Reloads

After writing back to your source systems, you'll want to do a reload to see your changes reflected in the app. Be mindful of the impact of doing these reloads. If multiple people are using this button at the same time, you don't want to do a reload for each update.

Problems:

- Reload concurrency: the same app cannot be reloaded in parallel. If multiple people are using the button at the same time, it won't be possible to do a reload for every click on the button.

Improvements:

- Partial reloads: use partial reloads to only load new data into the app. This way the reload takes less time.

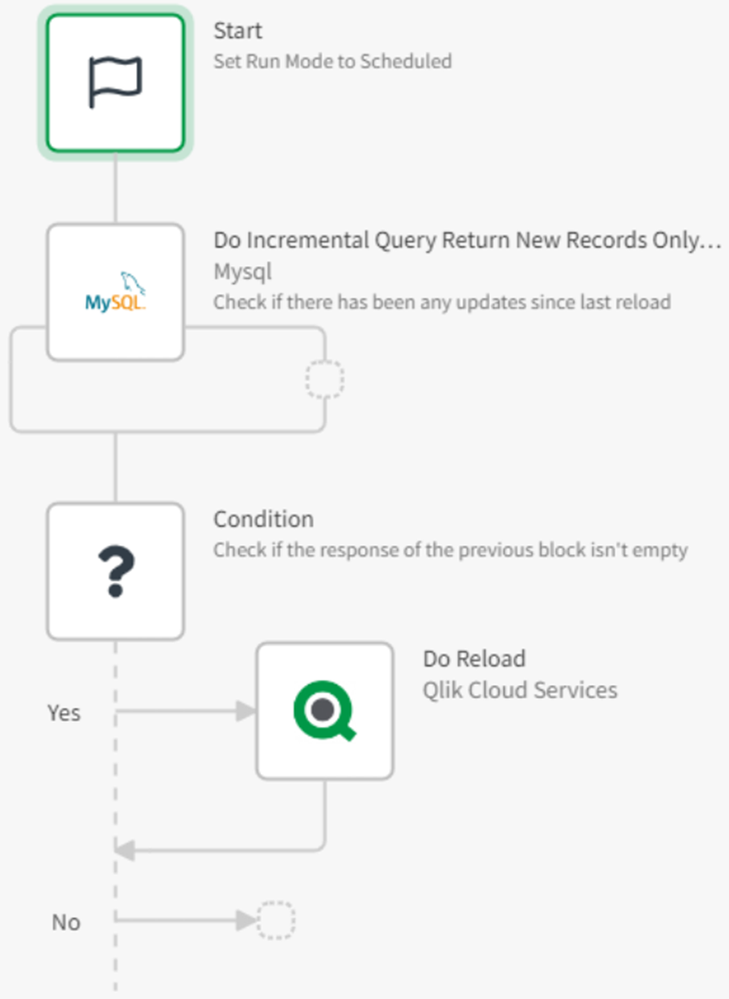

- Separate reloads: do not use the automation triggered by the button for reloads. Instead, use a separate process for this. For example:

- An additional button on the app that triggers reloads.

- A scheduled reload.

- Another automation with a "List Incremental" block that runs on a schedule and reads changes from the source. It will trigger a reload only if new records are available in the source. Note: not every connector in automations has an incremental block.

Limitations

- Public access in triggered mode: when the button is set to run the automation in triggered mode, everyone who can access the sheet and click the button will be able to execute the automation.

- No impersonation: when the automation runs, it will use the automation owner's Qlik account for the Qlik Cloud & Qlik Reporting connectors. It's not possible to impersonate the user who clicked the button. This means that any form of section access is not applied to the user who clicked the button.

- No parallel runs: the automation cannot run in parallel. New runs get queued with a maximum queue size of 200 runs.

- Execution token is visible: when the button is set to run the automation in triggered mode, clicking the button exposes the automation's Execution Token from the network traffic in the developer console. This token can only be used to trigger that automation and has no use for other automations or other APIs. Generating a new token can be done by copying the automation.

- Toast notification timeout: the button will wait on the automation for a maximum of 10 minutes to return the toast notification. If the automation takes longer, it will still run to completion, but no toast notification will be shown.

- General limits: more information on limitations can be found here:

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

Qlik Sense Installation InitDB call failed with exit code -1073741515

The Qlik Sense installation fails at the PostgreSQL installation step. Environment: Qlik Sense May 2021 - May 2023 for a 12.5 PostgreSQL Database ...

Qlik Talend Data Integration: BigQueryException: Connection has closed: javax.ne...

The BigQuery component encountered an error, which reads:

"BigQueryException: Connection has closed: javax.net.ssl.SSLException: Connection reset."

Cause

The error "BigQueryException: Connection has closed: javax.net.ssl.SSLException: Connection reset" typically signifies that the network connection to BigQuery was unexpectedly terminated. This could be due to various network issues, such as transient disruptions or restrictive network configurations like firewalls or proxies that terminate idle connections.

Resolution

Here are some strategies to manage and potentially resolve this issue:

Implement a Retry Mechanism:

Use a retry mechanism (tBigQueryXxx-->onSubjobError-->) with exponential backoff to handle connection resets gracefully. When a connection reset occurs, catch the exception, wait for a progressively increasing interval, and then retry the operation. This is especially useful for transient network issues.
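The backoff logic itself can be illustrated with a minimal Python sketch. In a Talend Job the retry is wired in the job design rather than written like this, so treat the function name, parameters, and defaults below as assumptions chosen for illustration.

```python
import time


def retry_with_backoff(operation, max_attempts=5, base_delay=1.0, factor=2.0,
                       retry_on=(ConnectionError,), sleep=time.sleep):
    """Call `operation` until it succeeds or `max_attempts` is reached.

    After each failure the wait grows: base_delay, base_delay * factor, ...
    `sleep` is injectable so the logic can be exercised without real waiting.
    """
    delay = base_delay
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts:
                raise  # give up and surface the last error
            sleep(delay)
            delay *= factor


# Demo: an operation that fails twice with a connection reset, then succeeds.
calls = {"count": 0}
waits = []


def flaky_query():
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionResetError("Connection reset")
    return "rows"


outcome = retry_with_backoff(flaky_query, sleep=waits.append)
print(outcome, waits)  # prints rows [1.0, 2.0]
```

Capping the number of attempts keeps a persistent outage from stalling the job indefinitely. Adding a small random jitter to each delay is a common refinement when many jobs retry at once.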

Review Firewall and Proxy Configurations:

Ensure that all firewalls and proxy servers within your network path are properly configured to permit long-lived connections necessary for your BigQuery operations. These systems may be closing connections that remain idle for an extended period.

Batch Processing with Pagination:

Instead of attempting to load all results at once, consider breaking your query into smaller chunks. You can modify your query to retrieve subsets of the data and process each subset separately. This approach limits the impact of any single query failure.
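A minimal sketch of the chunking idea, assuming a hypothetical fetch_page(offset, limit) callable that returns one subset of rows per call. It does not use any BigQuery or Talend API; the in-memory list only stands in for a query result.

```python
def iter_pages(fetch_page, page_size):
    """Yield result pages until the source returns a short or empty page."""
    offset = 0
    while True:
        rows = fetch_page(offset, page_size)
        if not rows:
            break
        yield rows
        if len(rows) < page_size:
            break  # last, partially filled page
        offset += page_size


# Stand-in data source: ten rows served four at a time.
data = list(range(10))


def fake_fetch(offset, limit):
    return data[offset:offset + limit]


pages = list(iter_pages(fake_fetch, 4))
print(pages)  # prints [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9]]
```

Because each page is fetched separately, a connection reset only costs the page in flight, which can be retried on its own. For offset-based paging against a real table, the query needs a stable ordering so that pages do not overlap or skip rows.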

Environment

- Talend Data Integration 7.3.1, 8.0.1

-

Qlik REST connector fails in Qlik cloud with error "Failed to connect to server ...

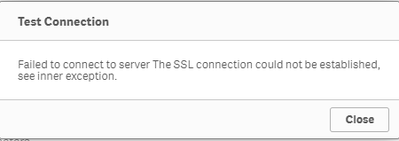

You might encounter the error "Failed to connect to server The SSL connection could not be established, see inner exception." when trying to connect to a REST API endpoint using the Qlik REST connector in Qlik Sense cloud services, even though the same connection may still work when tested on an on-premise Qlik Sense version.

Unexpected connection errors of this kind in Qlik Cloud can be caused by an incompatibility between the ciphers of the REST endpoint and those supported by .NET 5.0 in Qlik Sense cloud.

We therefore recommend checking which ciphers your endpoint requires and comparing them with the default ciphers for .NET 5.0. If they are incompatible, we suggest upgrading the ciphers on the endpoint or using Qlik DataTransfer.
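Once you have both lists, the comparison is a simple set intersection. The Python sketch below uses placeholder cipher suite names: they are not the actual .NET 5.0 defaults or the ciphers of any particular endpoint, so substitute the lists you gather from your endpoint and from Microsoft's documentation.

```python
def cipher_overlap(endpoint_ciphers, client_ciphers):
    """Return the cipher suites offered by both sides, sorted by name."""
    endpoint = {name.strip().upper() for name in endpoint_ciphers}
    client = {name.strip().upper() for name in client_ciphers}
    return sorted(endpoint & client)


# Placeholder lists for illustration only.
endpoint_ciphers = [
    "TLS_RSA_WITH_AES_128_CBC_SHA",
    "TLS_RSA_WITH_3DES_EDE_CBC_SHA",
]
client_ciphers = [
    "TLS_AES_256_GCM_SHA384",
    "TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384",
]

shared = cipher_overlap(endpoint_ciphers, client_ciphers)
if shared:
    print("Shared cipher suites:", ", ".join(shared))
else:
    print("No shared cipher suites: the TLS handshake cannot succeed.")
```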

Environment

- Qlik Sense SAAS

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

Qlik Sense Desktop or Server Install Fails - Failed to Install MSI Package

The Qlik Sense Desktop or Server installation fails with:

INSTALLATION FAILED

AN ERROR HAS OCCURRED

For detailed information see the log file.

The installation logs (How to read the installation logs for Qlik products) will read:

Error 0x80070643: Failed to install MSI package.

Error 0x80070643: Failed to configure per-user MSI package.

Detected failing msi: DemoApps

Error 0x80070643: Failed to install MSI package.

Error 0x80070643: Failed to configure per-user MSI package.

Detected failing msi: SenseDesktop

Applied execute package: SenseDesktop, result: 0x80070643, restart: None

Error 0x80070643: Failed to execute MSI package.

ProgressTypeInstallation

Starting rollback execution of SenseDesktop

CAQuietExec: Entering CAQuietExec in C:\WINDOWS\Installer\MSIE865.tmp, version 3.10.2103.0

CAQuietExec: "powershell" -NoLogo -NonInteractive -InputFormat None

CAQuietExec: Error 0x80070002: Command failed to execute.

CAQuietExec: Error 0x80070002: QuietExec Failed

CAQuietExec: Error 0x80070002: Failed in ExecCommon method

CustomAction CA_ConvertToUTF8 returned actual error code 1603 (note this may not be 100% accurate if translation happened inside sandbox)

MSI (s) (74:54) [10:44:37:941]: Note: 1: 2265 2: 3: -2147287035

MSI (s) (74:54) [10:44:37:942]: User policy value 'DisableRollback' is 0

MSI (s) (74:54) [10:44:37:942]: Machine policy value 'DisableRollback' is 0

Action ended 10:44:37: InstallFinalize. Return value 3.

The "Failed to install MSI package" error can have a number of different root causes.

Install C++ redistributable

Dependencies may be missing. Install the latest C++ redistributable.

.NET Framework update installation error: "0x80070643" or "0x643".

This issue may occur if the MSI software update registration has become corrupted, or if the .NET Framework installation on the computer has become corrupted (source: Microsoft, KB976982).

Repair or reinstall .NET framework.

PowerShell path not set as a system variable

How to troubleshoot:

Option 1

- Start "Advanced System Settings" -> "Advanced" tab -> "Environment Variables"

- Select "Path" from "System variables" and Click Edit

- Check if the PowerShell path is listed

- If it is missing, add "%SYSTEMROOT%\System32\WindowsPowerShell\v1.0\" and save the change

Before adding the path, make sure powershell.exe can be invoked successfully from %SYSTEMROOT%\System32\WindowsPowerShell\v1.0\.

Option 2

- Run the batch file in the article Windows Commands To Retrieve Environment Information v2.14

- Open the result file and find the "Environment Variables" part

********************************************

********* Environment Variables **********

********************************************

.......

Path=C:\WINDOWS\system32;C:\WINDOWS;C:\WINDOWS\System32\Wbem;C:\WINDOWS\System32\WindowsPowerShell\v1.0\;C:\WINDOWS\System32\OpenSSH\;C:\Users\administrator\AppData\Local\Microsoft\WindowsApps;C:\WINDOWS\system32\inetsrv\

- If the PowerShell path is missing, refer to Option 1 to add it to the system variables.
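The check on the Path value can also be scripted. The sketch below is a minimal illustration in Python, assuming you have copied the Path value as a string; the function names and the default system root of C:\WINDOWS are assumptions for the example, not part of any Qlik tool.

```python
import re

# Directory the installer expects to find on the system Path.
POWERSHELL_DIR = r"%SYSTEMROOT%\System32\WindowsPowerShell\v1.0"


def _normalize(entry, system_root):
    """Expand %SYSTEMROOT%, drop trailing backslashes, and lowercase an entry."""
    expanded = re.sub(
        r"%systemroot%", lambda _m: system_root, entry.strip(), flags=re.IGNORECASE
    )
    return expanded.rstrip("\\").lower()


def powershell_on_path(path_value, system_root=r"C:\WINDOWS"):
    """Return True if the PowerShell directory is listed in a Path value."""
    target = _normalize(POWERSHELL_DIR, system_root)
    entries = [e for e in path_value.split(";") if e.strip()]
    return any(_normalize(e, system_root) == target for e in entries)
```

Passing the Path line from the result file (without the leading `Path=`) returns True when the PowerShell directory is present and False when it needs to be added as described in Option 1.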

Web Platform Installer Extensions required

Install the Web Platform Installer Extension that includes the latest components of the Microsoft Web Platform, including Internet Information Services (IIS), SQL Server Express, .NET Framework and Visual Studio.

More information about the tool is available on the Microsoft page.

Windows Updates are pending

Verify if there are pending Windows updates. Complete them and try again.

The Installer is not hosted on a local drive

The installation may fail if the installer is being executed from a network drive. Copy the installer to your local drive.

Environment:

-

Qlik Compose not purging the logs correctly

Qlik Compose does not purge logs as expected. Log retention is set in the log management settings, but is not cleaning up log files from: <INSTALL-DI...

-

Error connecting to the Sense service when connecting to AWS DynamoDB with Qlik ...

The Qlik Cloud DynamoDB connector is not able to process and list tables on the Data Preview screen when DynamoDB tables have secondary indexes added.

Resolution

The issue is caused by an index being applied on non-existent columns or by indexes that are wrongly defined. It cannot be reproduced with correctly applied indexes on valid columns and valid column data types.

A new Qlik Cloud DynamoDB connector will be released with an updated DynamoDB driver, which is intended to fix the issue when setting up a connection between Qlik Cloud and AWS DynamoDB.

Information provided on this defect is given as is at the time of documenting. For up to date information, please review the most recent Release Notes, or contact support with the ID QB-26946 for reference.

Workaround:

Remove any index that is applied on non-existent columns, as well as any indexes that are wrongly defined.

Fix Version:

The Qlik team is actively working with DynamoDB and will release a new DynamoDB connector with an updated DynamoDB driver.

Cause

Product Defect ID: QB-26949

Environment

- Qlik Cloud

-

Qlik Talend Administration Center: could not execute statement database schema m...

While migrating the TAC database from one database to another in the Talend Administration Center using the Metaservlet API (the example given is a migration from H2 to PostgreSQL), you may encounter the following issue:

{error":"Migration failed, please see migraion logs for more details.","returnCode":1}

Upon reviewing the migration logs, you will notice the following error:

Can't create Quartz tables: org.hibernate.exception.SQLGrammarException: could not execute statement

database schema migration failed.

javax.persistence.PersistenceException: org.hibernate.exception.SQLGrammarException: could not execute statement

at org.hibernate.internal.ExceptionConverterImpl.convert(ExceptionConverterImpl.java:154)

at org.talend.migration.quartz.QuartzMigrationUtils.<init>(QuartzMigrationUtils.java:79)

at org.talend.migration.TalendMigrationApplication.call(TalendMigrationApplication.java:320)

at org.hibernate.exception.internal.SQLStateConversionDelegate.convert(SQLStateConversionDelegate.java:103)

org.hibernate.engine.query.spi.NativeSQLQueryPlan.performExecuteUpdate(NativeSQLQueryPlan.java:10)

at org.hibernate.internal.SessionImpl.executeNativeUpdate(SessionImpl.java:1509)

at

Caused by: org.postgresql.util.PSQLException: ERROR: relation "qrtz_job_details" already exists

at org.postgresql.core.v3.QueryExecutorImpl.receiveErrorResponse(QueryExecutorImpl.java:2725)

Cause

- Improper execution of the migration scripts.

- The destination database is not empty or not freshly initialized.

- Multiple executions of the migration scripts: if you have run the same migration script more than once, it might attempt to create the table again.

Note: Quartz can store Job and scheduling information in a relational database, and it can create its tables automatically with initialize-schema.

Resolution

Verify the list of database tables and rename or drop any tables that contain the prefix "qrtz" in their names.
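To illustrate the resolution, here is a small Python sketch that picks out the Quartz tables from a list of table names and builds PostgreSQL rename statements for them. The helper names and the `_old` suffix are hypothetical choices for this example; review any generated statement and back up the database before running it.

```python
def quartz_tables(table_names, prefix="qrtz"):
    """Return the table names that start with the Quartz prefix (case-insensitive)."""
    return [name for name in table_names if name.lower().startswith(prefix.lower())]


def rename_statements(table_names, suffix="_old"):
    """Build one ALTER TABLE ... RENAME TO statement per Quartz table."""
    return [
        f'ALTER TABLE "{name}" RENAME TO "{name}{suffix}";'
        for name in quartz_tables(table_names)
    ]
```

The list of table names would typically come from the destination database catalog; tables without the prefix are left untouched.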

Related Content

Migrating database X to database Y

What does each table for quartz scheduler signify?

Environment

-

Talend Cloud Platform Engines: Cloud Engine, Remote Engine, and Remote Engine Ge...

Talend Cloud platform provides computational capabilities that allow organizations to securely run data integration processes natively from cloud to cloud, on-premises to cloud, or cloud to on-premises environments.

These capabilities are powered by compute resources, commonly known as Engines. This article covers the four basic types.

Content:

- Cloud Engine (CE)

- Remote Engine (RE)

- Remote Engine Gen2 (REG2)

- Cloud Engine for Design (CE4D)

- Cloud Engine versus Remote Engine

- Cloud Engine for Design versus Remote Engine Gen 2

- Need for additional engines

- Cloud Engine - usage considerations

- Remote Engine – recommendations

- Summary

Cloud Engine (CE)

A Cloud Engine is a compute resource managed by Talend in Talend Cloud that executes Job tasks.

- You can allocate Cloud Engines to environments in proportion to the number of concurrent task executions, workloads, and Job designs you plan to run.

- All environments can use unassigned Cloud Engines. If Cloud Engines are not allocated to specific environments, you may not be able to run certain tasks because other tasks might keep all the unassigned Cloud Engines occupied.

- Cloud Engines can handle parallel execution of three tasks. That means a maximum of three different tasks can run in parallel on a single Cloud Engine. (A task cannot run more than once concurrently on a single Cloud Engine.) So, if three different tasks are already running on a Cloud Engine or if the same task is already running on that engine, another Cloud Engine is selected to execute the task.

- If you run your task in Cloud Exclusive mode, you cannot execute other tasks on that Cloud Engine. You can only use Cloud Exclusive engines in environments that do not have Cloud Engines assigned to them.

- Cloud Engines have limited system resources – memory usage: 8 GB, disk usage: 200 GB.

- Only standard Data Integration Batch Jobs can run on Cloud Engines.

- You cannot group Cloud Engines together to form clusters.

- Cloud Engines are hosted on AWS or Azure Cloud.

- Talend manages Cloud Engines.

- Cloud Engines employ TCP communication.
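The parallel execution rule above (at most three different tasks per Cloud Engine, and never the same task twice on one engine) can be modeled in a few lines of Python. This is only a sketch of the rule as described in this article, with hypothetical function and engine names; it does not represent how Talend Cloud actually schedules tasks internally.

```python
MAX_PARALLEL_TASKS = 3  # limit per Cloud Engine stated in this article


def can_accept(running_tasks, task):
    """True if an engine running `running_tasks` can also start `task`."""
    return task not in running_tasks and len(running_tasks) < MAX_PARALLEL_TASKS


def pick_engine(engines, task):
    """Return the name of the first engine that can accept `task`, or None.

    `engines` maps an engine name to the set of tasks currently running on it.
    """
    for name, running in engines.items():
        if can_accept(running, task):
            return name
    return None
```

For example, with one engine already running three tasks and a second engine running one, a new task goes to the second engine, while a task that is already running there cannot be placed at all.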

Remote Engine (RE)

A Remote Engine is a capability in Talend Cloud platform that allows you to securely run data integration Jobs natively from cloud to cloud, on-premises to cloud, or cloud to on-premises environments. Jobs run completely within your environment for enhanced performance and security, without transferring the data through the Cloud Engines in Talend Cloud platform.

It is a Java-based runtime (similar to a Cloud Engine) that executes Talend Jobs on-premises or on another cloud platform that you control.

- Remote Engines allow you to run Jobs, Routes, and Data Service tasks.

- Data Service and Route Microservice tasks can only be deployed on Remote Engines. OSGi type deployments require that Talend Runtime version 7.1.1 or higher is installed and running on the same machine as the Talend Remote Engine.

- Remote Engines support configurable max parallel execution: by default, a maximum of three different tasks can run in parallel on the same Remote Engine. However, this is a modifiable configuration.

- Remote Engines can be grouped to form clusters called Remote Engine Cluster. Remote Engines added to a cluster cannot be used to execute tasks directly from Talend Studio.

- Remote Engines are hosted on-premises or on the cloud.

- You manage Remote Engines.

- Remote Engines employ HTTPS communication.

Remote Engine Gen2 (REG2)

A Remote Engine Gen2 is a secure execution engine on which you can safely execute data pipelines (that is, data flows designed using Talend Pipeline Designer). It allows you to have control over your execution environment and resources because you can create and configure the engine in your own environment (Virtual Private Cloud or on-premises). Previously referred to as Remote Engines for Pipelines, this engine was renamed Remote Engine Gen2 during H1/2020. It is a Docker-based runtime to execute data pipelines on-premises or on another cloud platform that you control.

A Remote Engine Gen2 ensures:

- Data processing in a safe and secure environment, because Talend never has access to your pipelines' data and resources

- Optimal performance and security by increasing the data locality instead of moving large data to computation

Cloud Engine for Design (CE4D)

Cloud Engine for Design is a built-in runner that allows you to easily design pipelines without setting up any processing engines. With this engine you can run two pipelines in parallel. For advanced processing of data, Talend recommends installing the secure Remote Engine Gen2.

- CE4Ds have limited system resources – memory usage: 8 GB

- CE4Ds support a maximum of two pipelines that can run in parallel on a single CE4D

- CE4Ds should be used only for design purposes; that is, you shouldn’t use them to execute data pipelines in a Production environment

Cloud Engine versus Remote Engine

The following table compares the two engines:

Cloud Engine (CE) | Remote Engine (RE)

Consumes 45,000 engine tokens | Consumes 9,000 engine tokens

Runs within Talend Cloud platform – no download required | Downloadable software from Talend Cloud platform

Managed by Talend, run on-demand as needed to execute Jobs | Managed by the customer

No customer resources required | Customer can run on Windows, Linux, or OS X

Set physical specifications (Memory, CPU, Temp Disk Space) | Unlimited Memory, CPU, and Temp Space

Requires data sources/targets to be visible through the internet to the Cloud Engine | Hybrid cloud or on-premises data sources

Restricted to three concurrent Jobs | Unlimited concurrent Jobs (default three)

Available within Talend Cloud portal | Available in AWS and Azure Marketplace

Runs natively within Talend Cloud iPaaS infrastructure | Uses HTTPS calls to Talend Cloud service to get configuration information and Job definition and schedules

Cloud Engine for Design versus Remote Engine Gen 2

Cloud Engine for Design (CE4D) | Remote Engine Gen 2 (REG2)

Consumes zero engine tokens | Consumes 9,000 engine tokens

Built upon a Docker-compose stack | Built upon a Docker-compose stack

Available as Cloud Image and instantiated in Talend Cloud platform on behalf of the customer | Available as an AMI Cloud Formation Template (for AWS) and Azure Image (for Azure)

Not available as downloadable software as this type of engine is only suitable for design using Pipeline Designer in Talend Cloud portal | Available as .zip or .tar.gz (for local deployment)

A Cloud Engine for Design is included with Talend Cloud platform, to offer a serverless experience during design and testing. However, it is not meant for production (that is, not for running pipelines in non-development environments). It won’t scale for prod-size volumes and long-running pipelines. It should be used by design teams to get a preview working and to test execution during development. | It is used to run artifacts, tasks, preparations, and pipelines in the cloud, as well as creating connections and fetching data samples.

Static IPs cannot be enabled for CE4D within Talend Management Console | Not applicable as REG2 runs outside Talend Management Console (that is, in Customer Data Center)

Need for additional engines

Additional engines (CE or RE) may be required if you have one or more of the following use cases:

- Continuous delivery – for example, Dev and QA separate from UAT and Production environments

- Data access - data is in two different private locations where an engine is needed in each site (or a mix of Cloud and Remote Engines)

- Scalability - concurrent Job volume requires additional engines, Jobs are complex and require significant memory or CPU

These use cases depend on the deployment architecture in the specific customer environment and layout of the Remote Engine at the environment or workspace level configurations. This would need proper capacity planning and automatic horizontal and vertical scaling of the compute Engines.

Cloud Engine - usage considerations

Question | Guideline

How much data must be transferred per hour? | Each Cloud Engine can transfer 225 GB per hour.

How many separate flows can run in parallel? | Each Cloud Engine can run up to three flows in parallel.

How much temporary disk space is needed? | Each Cloud Engine has 200 GB of temp space.

How CPU and memory intensive are the flows? | Each Cloud Engine provides 8 GB of memory and two vCPU. This is shared among any concurrent flows.

Are separate execution environments required? | Many users desire separate execution for QA/Test/Development and Production. If this is needed, additional Cloud Engines should be added as required.
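As a worked example of the first two guidelines, the Python sketch below estimates a lower bound on the number of Cloud Engines from the hourly data volume and the number of parallel flows. The function name is hypothetical, and the estimate deliberately ignores memory, CPU, temp space, and separate environments, all of which can raise the real requirement.

```python
import math

GB_PER_HOUR_PER_ENGINE = 225  # transfer guideline from this article
FLOWS_PER_ENGINE = 3          # parallel flow guideline from this article


def cloud_engines_needed(gb_per_hour, parallel_flows):
    """Estimate the minimum number of Cloud Engines for a workload."""
    by_throughput = math.ceil(gb_per_hour / GB_PER_HOUR_PER_ENGINE)
    by_flows = math.ceil(parallel_flows / FLOWS_PER_ENGINE)
    # At least one engine is always needed to run anything at all.
    return max(1, by_throughput, by_flows)
```

For instance, 500 GB per hour with four parallel flows needs three engines by throughput (500 / 225, rounded up) and two by flow count, so the estimate is three.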

Remote Engine – recommendations

If a source or target system is not accessible through the internet:

If one of the systems is not accessible using the internet, then a Remote Engine is needed.

When single flow requirements exceed the capacity of a Talend Cloud Engine:

If the Cloud Engine is too small (for example, because of the maximum memory of 5.25 GB, temporary space of 200 GB, two vCPU, or the maximum of 225 GB per hour), then a Remote Engine is needed.

If a native driver is required:

If the solution requires a native driver that is not part of the Talend action or Job generated code, then a Remote Engine is needed. Typical cases are SAP with the JCO v3 library and MS SQL Server Windows Authentication.

Data jurisdiction, security, or compliance reasons:

It may be desirable or required to retain data in a particular region or country for data privacy reasons. The data being processed may be subject to regulations such as PCI or HIPAA, or it may be more efficient to process the data within a single data center or public cloud location. These are all valid reasons to use a Remote Engine.

Summary

Cloud Engine (CE) | Remote Engine (RE) | Remote Engine Gen 2 (REG2)

Cloud Engines allow you to run batch tasks that use on-premises or cloud applications and datasets (sources, targets) | Remote Engines allow you to run batch tasks or microservices (APIs or Routes) that use on-premises or cloud applications and datasets (sources, targets) | The Remote Engine Gen2 is used to run artifacts, tasks, preparations, and pipelines in the cloud, as well as creating connections and fetching data samples

Consumes 45,000 engine tokens | Consumes 9,000 engine tokens | Consumes 9,000 engine tokens

No download required - Runs within Talend Cloud platform | Downloadable software from Talend Cloud platform | Downloadable software from Talend Cloud platform

Managed by Talend, run on-demand as needed to execute Jobs | Managed by the customer | Managed by the customer

No customer resources required | Can run on Windows, Linux, or OS X | Requires compatible Docker and Docker Compose versions for Linux, Mac, and Windows

Set physical specifications (Memory, CPU, and Temp Disk Space) | Unlimited Memory, CPU, and Temp Space | Unlimited Memory, CPU, and Temp Space

Requires data sources/targets to be visible through the internet to the Cloud Engine | Hybrid cloud or on-premises data sources | Hybrid cloud or on-premises data sources

Restricted to three concurrent Jobs | Unlimited concurrent Jobs (default three) | Unlimited concurrent pipelines (configurable)

Available within Talend Cloud portal | Available in AWS and Azure Marketplace | Available as an AMI Cloud Formation Template (for AWS) and Azure Image (for Azure)

Runs natively within Talend Cloud iPaaS infrastructure | Uses HTTPS calls to Talend Cloud service to get configuration information and Job definition and schedules | Uses HTTPS calls to Talend Cloud service to get configuration information and pipeline definition and schedules

References

Talend Help Center documentation:

-

Qlik Sense - "Setting up connection to LDAP root node failed. Check log file"

When synchronizing Qlik Sense with Active Directory, you may encounter an error message saying "the User Directory Connector (UDC) is not configured, because the following error occurred: Setting up connection to LDAP root node failed. Check log file"

This often indicates a logon failure, that is, the username and/or password is wrong.

Cause:

A common cause for this is wrong username and/or password.

Resolution:

- Attempt to set up the UDC and receive the error message

- Go to the following location to get the user management log file: %ProgramData%\Qlik\Sense\Log\Repository\Trace

- Locate the QLIKSERVER_UserManagement_Repository_<Date/timestamp>.txt file and open it

- Scroll to the bottom of the file

- Read the content of the line marked by ERROR

- If the error message is related to the username and password, move to the next steps; otherwise, file a support case with us

- Verify that the user account actually exists in the domain and that the password is correct

- In the username field, enter the domain name followed by a backslash and the username. It should look like this: domain_name\<username>

- Enter the password normally.

-

Security Rule - HubSection_Home explained

The HubSection_Home resource filter in Qlik Sense refers to the button which allows a user to navigate back to the Hub from inside of an application.

Default ruleset:

Resolution:

If an administrator wants to disable this functionality for their users (for example, because the application is embedded into another page), they can disable the default rule named HubSections.

The result with this rule disabled is as follows for the end user:

This change disables the functionality for all users. If an administrator wants to provide this functionality to a select set of users, the administrator can create a new rule using this schema:

- Resource filter: HubSection_*

- Actions: Read

- Conditions: Some User Condition

- Example: user.roles like "*Admin*"

- This will show the Open Hub button for any user who has an Admin role assigned to their user account.

- Context: Both Hub and QMC

-

Qlik Replicate and Salesforce source endpoint: UPDATE operations treated as INSE...

Using a Salesforce source endpoint, especially while using the Incremental Load source endpoint, all UPDATE operations are treated as INSERT operations for the table "UserRole". This leads to duplicate IDs found from the target table in the CDC processing stage.

Environment

- Qlik Replicate, all versions

- Salesforce source, all versions

Resolution

Set the task to UPSERT mode with Apply Conflicts set to Update the existing target record and Insert the missing target record. For more information see Apply Conflicts.

Note: This workaround applies to Apply Changes mode. If Store Changes is enabled, duplicate IDs will still be present in the __ct table.

Cause

71 objects are missing the "CreatedDate" system field (including tables "AccountShare", "UserLogin", "UserRole", and similar). This is why Qlik Replicate can't identify if the change is inserted or updated, leading to both INSERT and UPDATE being converted to INSERT operation for these tables.

Internal Investigation ID(s)

00294581

-

Qlik Talend Data Integration: Task execution was blocked/suspended due to high f...

A task containing tMysqlOutput component, which performs insert/update operations, has been blocked/suspended due to a PAGEIOLATCH_SH wait type status and has been pending for several hours.

Cause

The PAGEIOLATCH_SH wait type usually comes up as the result of a fragmented or unoptimized index.

Common reasons for an excessive PAGEIOLATCH_SH wait type are:

- I/O subsystem has a problem or is misconfigured

- Overloaded I/O subsystem by other processes that are producing the high I/O activity

- Bad index management

- Logical or physical drive misconception

- Network issues/latency

- Memory pressure

- Synchronous Mirroring and AlwaysOn AG

To resolve the issue of high PAGEIOLATCH_SH wait type, you can check the following:

- SQL Server, queries and indexes, as very often this could be found as a root cause of the excessive PAGEIOLATCH_SH wait types

- For memory pressure before jumping into any I/O subsystem troubleshooting

Always keep in mind that in case of high safety Mirroring or synchronous-commit availability in AlwaysOn AG, increased/excessive PAGEIOLATCH_SH can be expected.

In this case, the following SQL query showed avg_fragmentation_in_percent above 90%, which means the index was badly maintained.

USE DBName;

GO

-- Find the average fragmentation percentage of all indexes

-- in the dbo.TableName table.

SELECT a.index_id, name, avg_fragmentation_in_percent

FROM sys.dm_db_index_physical_stats (DB_ID(N'DBName'),

OBJECT_ID(N'dbo.TableName'), NULL, NULL, NULL) AS a

JOIN sys.indexes AS b

ON a.object_id = b.object_id AND a.index_id = b.index_id;

GO

Detecting Fragmentation

The first step in deciding which defragmentation method to use is to analyze the index to determine the degree of fragmentation. By using the system function sys.dm_db_index_physical_stats, you can detect fragmentation in a specific index, all indexes on a table or indexed view, all indexes in a database, or all indexes in all databases. For partitioned indexes, sys.dm_db_index_physical_stats also provides fragmentation information for each partition.

The result set returned by the sys.dm_db_index_physical_stats function includes the following columns.

Column | Description

avg_fragmentation_in_percent | The percent of logical fragmentation (out-of-order pages in the index)

fragment_count | The number of fragments (physically consecutive leaf pages) in the index

avg_fragment_size_in_pages | Average number of pages in one fragment in an index

Resolution

After the degree of fragmentation is known, refer to the table below to determine the most effective method to correct the fragmentation.

avg_fragmentation_in_percent value | Corrective statement

> 5% and <= 30% | ALTER INDEX REORGANIZE

> 30% | ALTER INDEX REBUILD WITH (ONLINE = ON)*

* Rebuilding an index can be executed online or offline. Reorganizing an index is always executed online. To achieve availability similar to the reorganize option, you should rebuild indexes online.

These values provide a rough guideline for determining the point at which you should switch between ALTER INDEX REORGANIZE and ALTER INDEX REBUILD. However, the actual values may vary from case to case. It is important that you experiment to determine the best threshold for your environment. Very low levels of fragmentation (less than 5 percent) should not be addressed by either of these commands because the benefit from removing such a small amount of fragmentation is almost always vastly outweighed by the cost of reorganizing or rebuilding the index.
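The thresholds can be expressed as a small decision function. The Python sketch below is only an illustration of the rough guideline above; the function name is hypothetical, the returned strings are the statement forms from the table rather than complete T-SQL (an index and table name still have to be supplied), and the cut-off values should be tuned for your environment.

```python
def corrective_statement(avg_fragmentation_in_percent):
    """Map a fragmentation percentage to the suggested corrective statement.

    Returns None for fragmentation of 5 percent or less, where neither
    command is recommended.
    """
    if avg_fragmentation_in_percent > 30:
        return "ALTER INDEX REBUILD WITH (ONLINE = ON)"
    if avg_fragmentation_in_percent > 5:
        return "ALTER INDEX REORGANIZE"
    return None
```

With the value of more than 90% observed in this case, the function returns the rebuild statement.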

In general, fragmentation on small indexes is often not controllable. The pages of small indexes are sometimes stored on mixed extents. Mixed extents are shared by up to eight objects, so the fragmentation in a small index might not be reduced after reorganizing or rebuilding the index.

Environment

- Talend Data Integration 7.3.1, 8.0.1

-

Qlik Replicate ODBC Task failure: CREATE SCHEMA "CLILIBF"

A task using ODBC as a target fails after an upgrade to 2023.5 or later versions:

Errors 00001888: 2024-09-09T10:31:56:332057 [TARGET_LOAD ]E: Failed (retcode -1) to execute statement: CREATE SCHEMA "CLILIBF" [1022502] (ar_odbc_stmt.c:5082)

00001888: 2024-09-09T10:31:56:332057 [TARGET_LOAD ]E: RetCode: SQL_ERROR SqlState: 42000 NativeError: -552 Message: [IBM][System i Access ODBC Driver][DB2 for i5/OS]SQL0552 - Not authorized to CREATE DATABASE. [1022502] (ar_odbc_stmt.c:5090)

Before the upgrade, the tasks may have encountered the same error but could be run using a Full Load.

Environment

- Qlik Replicate

- ODBC

- DB2 iSERIES as target

Resolution

To resolve the issue, modify an Internal Parameter in the endpoint settings and set a provider syntax.

- Add the Internal Parameter:

- Go to the Endpoint connection

- Switch to the Advanced tab

- Click Internal Parameters

- Modify the Parameter: additionalConnectionProperties

The old value should be DSN=TGT_PPCPROD

If no parameter exists, create it.

- Add the new value: DSN=TGT_PPCPROD; DBQ=CLILIBF

This tells the ODBC driver that any references to table names without an explicit schema name should be resolved in schema CLILIBF.

- Add the provider syntax

- Go to the Endpoint connection

- Switch to the Advanced tab

- Locate Provider Syntax and add the value GenericWithoutSchema

This indicates to the endpoint to disregard schema information, which is the cause of the error.

Cause

Replicate Upgrade. Oracle to ODBC(iSeries) on 2023.5 will not do a Full Load.

Internal Investigation ID(s)

QB-29117

-

Qlik Talend Studio Q&A: Talend Cloud Remote Engine for AWS

Question I

What are the compatible operating systems?

The compatible operating systems can be found in the article: compatible-operating-systems.

Please check the section: Talend Remote Engine.

Question II

What are the compatible Java environments?

Java 8, Java 11, Java 17 can be used for task executions. By default, Talend Remote Engine uses Java 17 to run tasks. The compatible Java environments can be found in the article: launching-talend-cloud-remote-engine-for-aws-via-cloudformation.

Please check the section: Procedure ⇒ Step 10.

Furthermore, there are no differences whether Talend Cloud Remote Engine for AWS is launched using Cloud Formation or AMI.

Question III

Where is the Remote Engine installed?

It is installed in the following directory.

/opt/talend/ipaas/remote-engine-client/

Question IV

Where can I find the settings file to make parameter changes?

The settings file is located in the following directory.

/opt/talend/ipaas/remote-engine-client/etc/

Question V

Where can I find further details on Talend Cloud Remote Engine for AWS?

Please refer to Qlik Talend Documentation: talend-cloud-remote-engine-for-aws.

Environment

-

Troubleshooting Qlik Replicate latency issues using the log files

For general advice on how to troubleshoot Qlik Replicate latency issues, see Troubleshooting Qlik Replicate Latency and Performance Issues.

If your task shows latency issues, one of the first things to do is to set the PERFORMANCE logging component to Trace, run the task for five to ten minutes, and review the resulting task log.

We advise you to:

- Open the task log in a text editor (such as Notepad++),

- then search source latency

- and choose Find all in current document.

This will list all available latency information. We can now identify a trend.

Remember, Target latency = Source latency + Handling latency.

No latency

[PERFORMANCE ]T: Source latency 0.00 seconds, Target latency 0.00 seconds, Handling latency 0.00 seconds (replicationtask.c:3703)

The source, target, and handling latency are all at 0 seconds.

Source latency

[PERFORMANCE ]T: Source latency 7634.89 seconds, Target latency 7634.89 seconds, Handling latency 0.00 seconds (replicationtask.c:3793)

[PERFORMANCE ]T: Source latency 7663.00 seconds, Target latency 7663.00 seconds, Handling latency 0.00 seconds (replicationtask.c:3793)

[PERFORMANCE ]T: Source latency 7690.12 seconds, Target latency 7693.12 seconds, Handling latency 3.00 seconds (replicationtask.c:3793)

[PERFORMANCE ]T: Source latency 7710.25 seconds, Target latency 7723.25 seconds, Handling latency 13.00 seconds (replicationtask.c:3793)

The source latency is higher than the handling latency. The key point is to look at the handling latency: for the problem to be source latency, it must be lower than the source latency.

Cause:

- Source database busy

- Lack of network bandwidth to the source

- There are a lot of CDC changes to capture (the task has stopped for some time and only just resumed)

- There are a lot of CDC changes to capture (the source database has maintenance or a sudden influx of DML operations occurred)

If the source latency decreases while you monitor the task, it is a good sign that the latency will recover. If it increases, review the causes listed above and resolve any outstanding source issues. You may also want to consider reloading the task.
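Whether the source latency is recovering can be read from the first and last values collected during the monitoring window. This is a rough sketch only; source_latency_trend is a hypothetical helper, and comparing just the two endpoints is a simplifying assumption that ignores fluctuations in between.

```python
def source_latency_trend(source_latencies):
    """Classify a series of source latency values (seconds) by comparing
    the first and last sample of the monitoring window."""
    if len(source_latencies) < 2:
        return "unknown"
    first, last = source_latencies[0], source_latencies[-1]
    if last < first:
        return "decreasing"
    if last > first:
        return "increasing"
    return "flat"


# Source latency values from the log excerpt in this section.
print(source_latency_trend([7634.89, 7663.00, 7690.12, 7710.25]))  # prints "increasing"
```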

Target latency

[PERFORMANCE ]T: Source latency 2.05 seconds, Target latency 7116.05 seconds, Handling latency 7114.00 seconds (replicationtask.c:3793)

[PERFORMANCE ]T: Source latency 2.77 seconds, Target latency 7150.77 seconds, Handling latency 7148.00 seconds (replicationtask.c:3793)

[PERFORMANCE ]T: Source latency 2.16 seconds, Target latency 7182.16 seconds, Handling latency 7180.00 seconds (replicationtask.c:3793)

The target latency is much higher than the source latency, and almost all of it is reported as handling latency.

Cause:

- Target is busy

- Lack of network bandwidth to the target

- A bottleneck in the target database computing power, especially for cloud endpoints

If the target latency continues to increase, consider reloading the task.
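The patterns shown so far can be told apart with a simple comparison of the three values. The sketch below is illustrative only: classify_latency and its negligible threshold of 1 second are assumptions, not Qlik guidance. It deliberately does not try to separate target latency from handling latency, because the log values alone are not enough for that.

```python
def classify_latency(source, target, handling, negligible=1.0):
    """Roughly classify one PERFORMANCE sample (all values in seconds).

    negligible is a hypothetical threshold below which the total
    (target) latency is treated as no latency at all.
    """
    if target <= negligible:
        return "none"
    if source > handling:
        # Most of the delay is already present when changes are read.
        return "source"
    # Handling latency dominates; further checks on the Replicate
    # server are needed to tell target from handling latency.
    return "target or handling"


print(classify_latency(0.00, 0.00, 0.00))        # prints "none"
print(classify_latency(7710.25, 7723.25, 13.00))  # prints "source"
print(classify_latency(2.16, 7182.16, 7180.00))   # prints "target or handling"
```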

Handling latency

Telling handling latency apart from target latency can be tricky. When the task has target latency, the queue is blocked, so the handling latency will automatically be higher as well (remember: Target latency = Source latency + Handling latency).

To decide whether it is handling latency, check whether a large number of swap files have been saved in the sorter folder inside the task folder on the Qlik Replicate server.
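Counting the files in the sorter folder can be done with a few lines of script. This is a minimal sketch: summarize_sorter_folder is a hypothetical helper, the folder path must be supplied by you because it depends on your installation, and the script counts every file in the folder since the naming of swap files is not specified here.

```python
import sys
from pathlib import Path


def summarize_sorter_folder(sorter_dir):
    """Return (file_count, total_bytes) for the files directly in sorter_dir.

    Returns (0, 0) if the folder does not exist.
    """
    folder = Path(sorter_dir)
    if not folder.is_dir():
        return (0, 0)
    files = [p for p in folder.iterdir() if p.is_file()]
    return (len(files), sum(p.stat().st_size for p in files))


# Usage: python check_sorter.py <path to the task's sorter folder>
if __name__ == "__main__" and len(sys.argv) > 1:
    count, size = summarize_sorter_folder(sys.argv[1])
    print(f"{count} files, {size} bytes")
```

What counts as "a lot" of files is a judgment call; comparing the result with a healthy task on the same server gives a useful baseline.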

In addition, the task log may show that, when the task is resumed, the handling latency jumps from 0 seconds (or a low number) to a much higher value in a very short time. This pattern clearly identifies handling latency:

2023-05-10T08:21:02:537595 [PERFORMANCE ]T: Source latency 5.54 seconds, Target latency 5.54 seconds, Handling latency 0.00 seconds (replicationtask.c:3788)

2023-05-10T08:21:32:610230 [PERFORMANCE ]T: Source latency 4.61 seconds, Target latency 55363.61 seconds, Handling latency 55359.00 seconds (replicationtask.c:3788)

This log shows that handling latency increased from 0 seconds to 55359 seconds within only 30 seconds of the task's runtime. This is because Qlik Replicate reads all the swap files into memory when the task is resumed. In this situation, you need to reload the task or resume the task from a timestamp or stream position.
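A jump like this can be detected automatically by comparing how much the handling latency grew with how much time passed between two log lines. The sketch below assumes the timestamp format shown in the excerpt above; find_handling_jumps and its factor of 10 are hypothetical choices for illustration, not values documented by Qlik.

```python
import re
from datetime import datetime

# Timestamped PERFORMANCE line, as in the excerpt above.
LINE_RE = re.compile(
    r"^(?P<ts>\d{4}-\d{2}-\d{2}T\d{2}:\d{2}:\d{2}:\d{1,6}) .*"
    r"Handling latency (?P<handling>\d+(?:\.\d+)?) seconds"
)


def find_handling_jumps(lines, factor=10.0):
    """Return (timestamp, elapsed_seconds, growth_seconds) for each sample
    whose handling latency grew more than factor times the elapsed time
    since the previous sample."""
    jumps = []
    previous = None  # (timestamp, handling latency) of the previous sample
    for line in lines:
        match = LINE_RE.search(line.strip())
        if not match:
            continue
        ts = datetime.strptime(match.group("ts"), "%Y-%m-%dT%H:%M:%S:%f")
        handling = float(match.group("handling"))
        if previous is not None:
            elapsed = (ts - previous[0]).total_seconds()
            growth = handling - previous[1]
            if elapsed > 0 and growth > factor * elapsed:
                jumps.append((ts, elapsed, growth))
        previous = (ts, handling)
    return jumps


log = [
    "2023-05-10T08:21:02:537595 [PERFORMANCE ]T: Source latency 5.54 seconds, "
    "Target latency 5.54 seconds, Handling latency 0.00 seconds (replicationtask.c:3788)",
    "2023-05-10T08:21:32:610230 [PERFORMANCE ]T: Source latency 4.61 seconds, "
    "Target latency 55363.61 seconds, Handling latency 55359.00 seconds (replicationtask.c:3788)",
]

for ts, elapsed, growth in find_handling_jumps(log):
    print(f"{ts}: handling latency grew {growth:.0f}s in {elapsed:.0f}s")
```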

Environment

- Qlik Replicate