Search our knowledge base, curated by global Support, for answers ranging from account questions to troubleshooting error messages.

Recent Documents

-

Qlik Replicate: How to convert a Direct Task into a Log Stream Task without relo...

This article documents how to convert a Direct Task into a Log Stream Task without reloading the target.

Resolution

- Stop the Direct task (Oracle-SQLServer)

- Create a new Log Stream (Parent Task)

In our example, the task is called Logstream_new

In the Parent Task (a), add the same source (b) and the same table(s) (c) as the ones used in the Direct Task, and run the task

- Create a Dummy Replication (Child) task

In our example, the task is called Child_Task_new

- Open Qlik Enterprise Manager

- Select the Direct Task (Oracle-SQL Server)

- Click Export Task > With Endpoints Export

This exports the Direct Task JSON

- Repeat the export for the Child Task, exporting the JSON With Endpoints

- Open the Child Task JSON and copy the Source name

In our example, this is Duplicate_Oracle_Src

- Open the Direct task (Oracle-SQLServer) JSON and replace all instances of the source name with the Child Task source name

In our example, this is Duplicate_Oracle_Src

- Import the Direct Task JSON (Oracle-SQLServer).

- Go to Qlik Replicate Server, open the Oracle-Sqlserver Task, and click on the Source endpoint

- Enable Read changes from log stream staging folder and select your Staging directory in Log stream staging task

Re-enter the Password

- Start the task using the Advanced Run (a) option and select (b) Source change position: 0
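The source-name replacement in the exported Direct Task JSON can also be scripted. The following is a minimal sketch, not an official tool: it assumes the source name appears only as whole JSON string values, and the function name `rename_source` and the key names in the sample (`task`, `source_names`, `endpoints`, `name`) are hypothetical and may not match the layout of a real export.

```python
import json


def rename_source(task_json_text: str, old_name: str, new_name: str) -> str:
    """Return the JSON text with every string value equal to old_name replaced."""

    def walk(node):
        # Recurse through objects and arrays; only string values are compared.
        if isinstance(node, dict):
            return {key: walk(value) for key, value in node.items()}
        if isinstance(node, list):
            return [walk(item) for item in node]
        if isinstance(node, str) and node == old_name:
            return new_name
        return node

    return json.dumps(walk(json.loads(task_json_text)), indent=2)


# Hypothetical, heavily simplified stand-in for an exported task file.
sample = json.dumps({
    "task": {"name": "Oracle-SQLServer", "source_names": ["Oracle_Src"]},
    "endpoints": [{"name": "Oracle_Src", "role": "SOURCE"}],
})
print(rename_source(sample, "Oracle_Src", "Duplicate_Oracle_Src"))
```

Object keys are left untouched on purpose. Review the result before importing it, and keep a copy of the original export.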

Environment

-

Choice of Endpoint Driver for use with Qlik Replicate

Qlik Support advises using the driver version for your endpoints that was certified for the version of Qlik Replicate you are using. The use of a driver running a different version than what was certified can lead to unpredictable results.

Before troubleshooting a potential defect, always ensure a supported driver is in use.

However, it is acceptable in most cases to use a later patch for the driver so long as it is the same major version. For example:

The current release of Qlik Replicate is May 2024. It requires ODBC driver version 18.3 for Microsoft SQL Server source and target. The other currently supported Qlik Replicate releases, November 2023, May 2023, and November 2022, all were certified with driver version 18.1.

However, the driver version 18.3 can be used.

Generally speaking, if the version of the driver is the same and has only been patched (as is the case between driver 18.1 and 18.3) we do not anticipate any issues using it with November 2023, May 2023 and November 2022 versions. If an issue is encountered on these versions with ODBC driver version 18.3, please open a support case.

This is generally true with using a patched version of the certified driver for other databases/endpoints as well, but there might be exceptions. Some endpoints mandate only specific versions for the driver. If there is a doubt, please open a support case.
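The rule of thumb above (same major version, equal or later patch) can be written as a small check. This is only a sketch of the general guidance: the function name `driver_compat` and its return strings are made up for illustration, and it does not know about endpoints that mandate one specific driver version.

```python
def driver_compat(certified: str, installed: str) -> str:
    """Compare an installed driver version with the certified one."""
    cert = tuple(int(part) for part in certified.split("."))
    inst = tuple(int(part) for part in installed.split("."))
    if inst == cert:
        return "certified"
    # Same major version and a later patch level: usually acceptable.
    if inst[0] == cert[0] and inst > cert:
        return "later patch, same major version"
    return "not certified"


print(driver_compat("18.1", "18.3"))
```

For the SQL Server example in this article, a release certified with 18.1 and a machine running 18.3 falls into the "later patch, same major version" case.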

Environment

- Qlik Replicate 2023.11, 2023.5 & 2022.11

-

Qlik Talend Products: Java 17 Migration Guide

From R2024-05, Java 17 will become the only supported version to start most Talend modules, enforcing the improved security of Java 17 and eliminating concerns about Java's end-of-support for older versions. In 2025, Java 17 will become the only supported version for all operations in Talend modules.

Starting from v2.13, Talend Remote Engine requires Java 17 to run. If some of your artifacts, such as Big Data Jobs, require other Java versions, see Specifying a Java version to run Jobs or Microservices.

Content

- Prerequisites

- Procedure

- Windows

- Linux

- MAC OS

- Multiple JDK versions

- Studio

- Remote Engine

- ESB - Runtime

- Studio

- Talend Administration Center (TAC)

- Talend JobServer

- CICD

- Windows Users

- Linux Users

- Jenkins Users

- Additional Notes

- Specifying a Java version to run Jobs or Microservices

Prerequisites

| Qlik Talend Module | Patch Level and Version |
| --- | --- |
| Studio | Supported from R2023-10 onwards |
| Remote Engine | 2.13 or later |
| Runtime | 8.0.1-R2023-10 or later |

Procedure

Windows

For Windows users, please follow the JDK installation guide (docs.oracle.com).

Linux

For Linux users, please follow the JDK installation guide (docs.oracle.com).

MAC OS

For MAC OS users, please follow the JDK installation guide (docs.oracle.com).

Multiple JDK versions

When working with software that supports multiple versions of Java, it's important to be able to specify the exact Java version you want to use. This ensures compatibility and consistent behavior across your applications. Here is how you can specify a specific Java version on the following products (such as build servers, shared application server, and similar):

Studio

For Studio users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps:

- Backup and edit the <Studio Home>\Talend-Studio-win-x86_64.ini file

- Prepend:

-vm

<JDK17 HOME>\bin\server\jvm.dll

Remote Engine

For Remote Engine (RE) users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps.

- Backup and edit the <RE HOME>/etc/talend-remote-engine-wrapper.conf file

- Modify the set.default.JAVA_HOME= property to point to the <JDK 17 HOME> path.

Note 1: If Remote Engine is not installed as a service, the JDK file will be set in the <RE HOME>/bin/setenv file.

Note 2: When it comes to running Jobs or Microservices, you retain the flexibility to either use the default Java 17 version or choose older Java versions, through straightforward configuration of the engine.

How to modify?

Check the following configuration files in the etc folder and change the listed properties to the installed JDK/JRE path:

{

org.talend.ipaas.rt.dsrunner.cfg--> ms.custom.jre.path

org.talend.remote.jobserver.server.cfg--> org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH

}

ESB - Runtime

For Runtime users who are using multiple JDKs, please follow the appropriate instructions listed above and then the following additional steps.

- Backup and edit the <Runtime home>/etc/<TALEND-8-CONTAINER service>-wrapper.conf

- Modify the set.default.JAVA_HOME=C:\<JDK 17 HOME> path

If Runtime is not running as a service:

- Backup and edit the <Runtime home>/bin/setenv.sh

- Modify the SET JAVA_HOME= <JDK 17 HOME> path

Studio

- Data Integration (DI): After installing the 8.0 R2023-10 Talend Studio monthly update or a later one, if you switch the Java version to 17 and relaunch your Talend Studio with Java 17, you must enable your project settings for Java 17 compatibility.

- Go to Studio

- Go to File

- Edit Project properties

- Go to Build

- Go to Java Version

- Activate "Enable Java 17 compatibility"

With the Enable Java 17 compatibility option activated, any Job built by Talend Studio cannot be executed with Java 8. For this reason, verify the Java environment on your Job execution servers before activating the option.

- Big Data Users: Do not enable Java 17 compatibility unless your Spark Cluster supports Java 17.

Talend Administration Center (TAC)

To use Talend Administration Center with Java 17, you need to open the <tac_installation_folder>/apache-tomcat/bin/setenv.sh file and add the following commands:

# export modules
export JAVA_OPTS="$JAVA_OPTS --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Windows users use <tac_installation_folder>\apache-tomcat\bin\setenv.bat

Talend JobServer

Follow the steps below to configure the JobServer to use the new Java version.

- Navigate to the JobServer Configuration:

Go to the <JobServerRootDir>\conf directory, where <JobServerRootDir> is the path to your Talend JobServer installation.

- Open the Configuration File for Editing:

Locate the TalendJobServer.properties file and open it with a text editor of your choice.

- Set the Java 17 Executable Path:

Find the line dedicated to the Job launcher path within the file. You will modify this line to point to the Java 17 executable.

- For Windows, if your Java installation path contains spaces, make sure to enclose the path in quotes.

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH="C:\\Program Files\\Java\\jdk-17\\bin\\java.exe"

- For Linux or Mac OS, the path doesn’t require quotes.

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=/usr/lib/jvm/java-17-openjdk/bin/java

Replace the example paths with the actual path where Java 17 is installed on your system. Make sure to point directly to the Java executable within the bin directory of your JDK installation.

- Save Your Changes:

After editing, save the TalendJobServer.properties file.

- Restart Talend JobServer:

For the changes to take effect, restart your Talend JobServer.

After completing these steps, Talend JobServer will utilize Java 17 for executing Jobs, ensuring compatibility with the latest Java version supported by Talend modules.
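To illustrate the quoting rule for the Job launcher path, here is a small sketch that formats the property line the same way as the two examples above: backslashes are doubled, and the value is wrapped in quotes only when it contains a space. The helper name `launcher_line` is hypothetical and the paths are examples only.

```python
KEY = ("org.talend.remote.jobserver.commons.config."
       "JobServerConfiguration.JOB_LAUNCHER_PATH")


def launcher_line(java_path: str) -> str:
    """Format the JOB_LAUNCHER_PATH line for TalendJobServer.properties."""
    value = java_path.replace("\\", "\\\\")  # double each backslash
    if " " in value:
        value = f'"{value}"'  # quote paths that contain spaces
    return f"{KEY}={value}"


print(launcher_line(r"C:\Program Files\Java\jdk-17\bin\java.exe"))
print(launcher_line("/usr/lib/jvm/java-17-openjdk/bin/java"))
```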

CICD

Windows Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Backup and edit <Maven_home>\bin\mvn.cmd

- Modify to:

set "MAVEN_OPTS=%MAVEN_OPTS% --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Linux Users

For Java 17 users, Talend CICD process requires the following Maven options:

- Backup and edit <Maven_home>/bin/mvn

- Modify to:

export MAVEN_OPTS="$MAVEN_OPTS \
--add-opens=java.base/java.net=ALL-UNNAMED \
--add-opens=java.base/sun.security.x509=ALL-UNNAMED \
--add-opens=java.base/sun.security.pkcs=ALL-UNNAMED"

Jenkins Users

- Backup and edit the jenkins_pipeline_simple.xml

- Include the following in the Talend_CI_RUN_CONFIG parameter:

<name>TALEND_CI_RUN_CONFIG</name>
<description>Define the Maven parameters to be used by the product execution, such as:
- Studio location
- debug flags
These parameters will be put to maven 'mavenOpts'.
If Jenkins is using Java 17, add:
--add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/sun.security.x509=ALL-UNNAMED --add-opens=java.base/sun.security.pkcs=ALL-UNNAMED
</description>

Additional Notes

Specifying a Java version to run Jobs or Microservices

Overview

Enable your Remote Engine to run Jobs or Microservices using a specific Java version.

By default, a Remote Engine uses the Java version of its environment to execute Jobs or Microservices. With Remote Engine v2.13 and onwards, Java 17 is mandatory for engine startup. However, when it comes to running Jobs or Microservices, you can specify a different Java version. This feature allows you to use a newer engine version to run artifacts designed with older Java versions, without the need to rebuild these artifacts, such as Big Data Jobs, which rely on Java 8 only.

When developing new Jobs or Microservices that do not exclusively rely on Java 8, that is to say, they are not Big Data Jobs, consider building them with the add-opens option to ensure compatibility with Java 17. This option opens the necessary packages for Java 17 compatibility, making your Jobs or Microservices directly runnable on the newer Remote Engine version, without having to go through the procedure explained in this section for defining a specific Java version. For further information about how to use this add-opens option and its limitation, see Setting up Java in Talend Studio.

Procedure

- Stop the engine.

- Browse to the <RemoteEngineInstallationDirectory>/etc directory.

- Depending on the type of the artifacts you need to run with a specific Java version, do the following:

For both artifact types, use backslashes to escape characters specific to a Windows path, such as colons, whitespace, and directory separators, while keeping in mind that directory separators are also backslashes on Windows.

Example:

c:\\Program\ Files\\Java\\jdk11.0.18_10\\bin\\java.exe

- For Jobs, in the <RemoteEngineInstallationDirectory>/etc/org.talend.remote.jobserver.server.cfg file, add the path to the Java executable file.

Example:

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=c:\\jdks\\jdk11.0.18_10\\bin\\java.exe

- For Microservices, in the <RemoteEngineInstallationDirectory>/etc/org.talend.ipaas.rt.dsrunner.cfg, add the path to the Java executable file.

Example:

ms.custom.jre.path=C\:/Java/jdk/bin

Make this modification before deploying your Microservices to ensure that these changes are correctly taken into account.

- Restart the engine.

For the Java option --add-opens, whether to use a SPACE or = as the separator depends on the OS, the JDK version, or the place where you set it. There are 3 cases:

1. support both SPACE and =

2. support SPACE only

3. support = only

Example:

--add-opens=java.base/java.net=ALL-UNNAMED

--add-opens java.base/java.net=ALL-UNNAMED

-

Qlik Talend ESB: Activity monitoring with routes

Overview This article expands on the Talend Knowledge Base (KB) article, Improve Camel Flow Monitoring, by demonstrating how to use the monitoring s...

-

Qlik Talend Administration Center: How to resolve RepoProjectRefresher - Error G...

The following error message appears repeatedly in the logs.

2024-01-20 08:50:10 ERROR RepoProjectRefresher -

2024-01-10 16:58:47 ERROR GC - D:\Talend\7.3.1\tac\apache-tomcat\temp\_git\

Cause

These log entries appear because the RepoProjectRefresher runs out of memory. By default, TAC automatically caches (checks out) the project source code into the tac\apache-tomcat\temp folder. When a large amount of source code accumulates, the RepoProjectRefresher module consumes a lot of memory. When the maximum is reached, a GC error occurs and a GC error log entry is written.

Note: The RepoRefresher cache functionality has been deprecated in the latest version of TAC v8.0.1

Resolution

Disable the Git caching mechanism for the TAC project by following these steps:

- Update whitelist setting for TAC DB t731 using query.

update t731.configuration set value='true' where configuration.key='git.whiteListBranches.enable';

update t731.configuration set value='\"Technical labels of project\",\"Active branch name\"' where configuration.key='git.whiteListBranches.list';

- Delete the previous Git whitelist cached setting file: talend\tomcat\webapps\tac\WEB-INF\classes\active_git_branches.csv

- Restart TAC.

Environment

-

Qlik Talend Data Integration: Implementing password vaults

Problem Description Currently, Talend does not have a built-in feature allowing the integration of password vaults. Root Cause Password vaults is a ...

-

Tabular On-Demand Reporting: There was an error downloading the report

Downloading a Tabular Reporting On-Demand report fails with:

There was an error downloading the report

Troubleshooting

- Enable web developer tools and start recording har file

- Attempt to download the report again

- Open the har file in a text editor

- Search for Chart not found

[{\"error\":{\"code\":404,\"message\":\"chart not found\"},\"exportErrors\":[{\"code\":\"REP-404002\",\"detail\":\"the chart does not exist or it is not available\",\"meta\":{\"appErrors\":[{\"appId\":\"c09eab27-2fca-49ff-8fae-7acf286c1f8b\",\"method\":\"GetObject\",\"parameters\":{\"chartID\":\"KpEJwyY\"}}]}

- In this case chart ID KpEJwyY is not found when attempting to download the report

- Why? The chart object exists, but it is currently on a private sheet.
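Instead of searching the HAR file by hand, the lookup can be scripted. The sketch below assumes the standard HAR layout (`log.entries[].response.content.text`) and that the response body is stored as plain text rather than base64; the function name `missing_chart_ids` is hypothetical.

```python
import json
import re


def missing_chart_ids(har_text: str) -> list:
    """Collect chart IDs from responses that report 'chart not found'."""
    har = json.loads(har_text)
    found = []
    for entry in har.get("log", {}).get("entries", []):
        body = entry.get("response", {}).get("content", {}).get("text") or ""
        if "chart not found" not in body.lower():
            continue
        # The error payload names the object in a "chartID" field.
        for chart_id in re.findall(r'"chartID"\s*:\s*"([^"]+)"', body):
            if chart_id not in found:
                found.append(chart_id)
    return found
```

Usage: read the saved file with `open("export.har", encoding="utf-8").read()` (the file name is an example) and pass the text to `missing_chart_ids`. Each returned ID can then be located in the app to check whether it sits on a private sheet.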

Resolution

For tabular reporting to be able to use a chart, the chart must be on a public sheet.

- Locate the private sheet

- Right click on it

- Click Make Public

If the object is already in a public sheet, Tabular Reporting may not recognize the Qlik Sense theme used. To resolve this, change the currently used Qlik Sense Theme to, for example, the Horizon or Sense Classic theme and try again.

Cause

- Charts used in Tabular Reporting must be available in a public Qlik Sense app sheet.

- In rare cases, Tabular Reporting might not recognize the Sense theme used with the App

Related Content

Environment

- Tabular Reporting

- Qlik Cloud

Investigation ID

- QB-28182 (for QCS Sense Theme issue)

-

Migrating Qlik Sense Client-Managed To Qlik Cloud

This Techspert Talks session covers:

- What to plan for

- Migration Pathways

- Cloud Best Practices

Chapters:

- 01:20 - Cloud Tenant Locations

- 01:47 - Qlik Cloud Architecture

- 03:08 - Planning the migration

- 03:50 - Migration Journey

- 05:13 - Licensing Guardrails

- 06:39 - Migrating users, groups, and permissions

- 11:11 - Migrating apps

- 14:44 - Migrating the data connections

- 21:07 - Completed Migration

- 21:35 - 5 Key Take Aways

- 22:48 - Q&A: How to license sync with hybrid?

- 23:59 - Q&A: Best practices for 1 app migration?

- 24:47 - Q&A: What's included with license cost?

- 26:03 - Q&A: How to customize the UI?

- 26:45 - Q&A: How safe is the data in Cloud?

- 27:37 - Q&A: What are common stumbling blocks?

- 28:41 - Q&A: How are reloads monitored?

- 29:28 - Q&A: What is the data size cap?

- 30:15 - Q&A: What cannot be migrated?

Resources:

- Qlik Cloud Best Practices

- Troubleshooting Qlik Data Gateway Direct Access

- Qlik Cloud Migration Center

- Qlik Professional Services

- Space-aware data source syntax examples

- Trust and Security at Qlik

- The Reload Analyzer for Qlik Cloud Customers

- Qlik Licensing Service Reference Guide

- Migrating NPrinting to Qlik Cloud Reporting

-

Talend 7.3 and prior will not validate after Talend Cloud License Renewal

After a Talend Cloud license renewal, the license for Talend 7.3 and earlier versions is shown as active on the Talend Cloud subscription page, but it still shows as expired when signing in to Talend Studio 7.3 or prior versions.

Resolution

- Close Talend Studio.

- Delete the existing license file in the Talend Studio directory.

- Open Talend Studio and refetch the license using your credentials.

You can use your login username and password or username & personal access token to fetch the license in Talend Studio. Please select the correct Server URL based on your region (AWS-region or Azure).

Cause

The old license file from before the renewal is still located in the Talend Studio directory. The license file may also be corrupted because of its size.

Related Content

https://help.talend.com/en-US/studio-user-guide/7.3/launching-talend-studio?ver=5

Environment

- Talend Studio 7.3 & prior

-

Top 10 Viz tips - part VIII - Qlik Connect 2024

At Qlik Connect 2024 I hosted a session called "Top 10 Visualization tips". Here's the app I used with all tips including test data.

Tip titles, more details in app:

- New, recurring and lost

- Phasor diagram

- Common 0 axis

- Force bar clipped axis

- Color by expression legend

- Combo line labels off

- Gantt table

- Dynamic classes

- Unicode symbols

- Hide fields

- KPI cards revisited

- Sankey as decomposition chart

- Image to map revisited

I want to emphasize that many of the tips were discovered by others; I tried to credit the original author wherever possible.

If you liked it, here's more in the same style:

- 24 days of visualization Season III, II, I

- Top 10 Tips Part VIII, VII, VI, V, IV, III, II , I

- Let's make new charts with Qlik Sense

- FT Visual Vocabulary Qlik Sense version

- Similar but for Qlik GeoAnalytics : Part III, II, I

Thanks,

Patric

-

Qlik Sense Enterprise on Windows: Engine service does not start with TLS 1.3 is ...

After installing Qlik Sense Enterprise on Windows and adding the scheduler functionality, the engine service stops right after start with no logs written in the Engine logs.

This applies to Windows Server 2022.

Resolution

Verify if TLS 1.3 has been enabled.

Neither the listed versions of Windows, nor Qlik Sense Enterprise on Windows support TLS 1.3. See Supported TLS and SSL Protocols and Ciphers.

Always take backups of your Windows Registry before proceeding. Manage the change with your Windows System Administrator if needed.

Once verified, disable TLS 1.3:

- Open the Windows Registry

- Navigate to [HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\SecurityProviders\SCHANNEL\Protocols\TLS 1.3\Client]

- Set the Enabled DWORD to 00000000

- Navigate to [HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\SecurityProviders\SCHANNEL\Protocols\TLS 1.3\Server]

- Set the Enabled DWORD to 00000000

For more detailed instructions, refer to Microsoft.

Alternatively:

- Open the Windows Registry

- Navigate to [HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\SecurityProviders\SCHANNEL\Protocols\TLS 1.3]

- Delete the entire TLS 1.3 directory

Cause

TLS 1.3 is supported in Windows 11 and Windows Server 2022, but it is not yet fully supported in Qlik Sense Enterprise on Windows. As a result, the service fails on startup.

Environment

- Qlik Sense Enterprise on Windows all versions

- OS: Windows Server 2022

-

Tabular Reporting events in the management console do not appear for all the use...

Issue reported

Tabular Reporting events in the management console not showing for all the users in the tabular reporting recipient list

Environment

Resolution

When section access is used in a Qlik App, make sure to add all required recipients/users to the section access load script.

For example, users in the Recipient import file should ideally match the users entered in the Section Access load script of the app.

This generally permits users to view management console details such as 'Events', assuming those users also have the necessary 'view' permissions in the tenant in which the app exists.

Cause

If some of the recipients in the tabular reporting recipient list do not have access to the Space/App, they are not considered in the task execution because they fail governance.

That is, recipients/users who are not added to the load script will have access to neither the app nor the associated management console events.

This is expected behavior.
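A quick way to spot recipients who will be skipped is to compare the recipient list with the users in the Section Access table. The sketch below works on two plain lists of user identifiers; how you export those lists is up to you, the function name is hypothetical, and the case-insensitive comparison is an assumption.

```python
def recipients_missing_from_section_access(recipients, section_access_users):
    """Return recipients that do not appear among the Section Access users."""
    allowed = {user.strip().lower() for user in section_access_users}
    wanted = {user.strip().lower() for user in recipients}
    return sorted(wanted - allowed)


# Example identifiers only.
print(recipients_missing_from_section_access(
    ["anna@example.com", "ben@example.com"],
    ["ANNA@EXAMPLE.COM"],
))
```

Any user in the returned list would need to be added to the Section Access load script before events for that user can appear.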

Related Content

- Tabular reporting and section access

- URL to Events in your management console: https://yourtenant.us.qlikcloud.com/console/events

- Reviewing system events

-

Why do I get an Invalid Visualization error in my Qlik Apps?

A Qlik app may show an Incomplete Visualization or Invalid Visualization error. This article covers the most common root causes.

Resolution

- Check for and fix incomplete chart dimensions or measures and also check for NULL values.

- Update your visualization bundles:

- These bundles must be the same version as the installed Qlik Sense version. A bundle can be copied from a different Qlik Sense server as long as that server runs the same Qlik Sense version and patch as the target server whose bundle needs updating

- If you use supported third-party bundles, check with your vendor and upload a supported, current bundle version

- Use supported Native Qlik Bundles

Cause

Incomplete Visualizations:

- The chart may have invalid dimensions or measures due to recent changes by a developer

Invalid Visualizations:

- Wrong bundle version uploaded to the current Qlik Sense server, perhaps from an older Qlik Sense server

- Unsupported or outdated 3rd party bundles

- Incorrectly developed custom visualization bundle

Related Content

- Visualization bundles

- Dashboard bundles

- Installing, importing and exporting your visualizations

- Creating and editing visualizations

- Troubleshooting - Creating visualizations

- Null values in visualizations

The information in this article is provided as-is and will be used at your discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

-

Failed to open document Errors in NPrinting Engine or Scheduler log

Issue

Verifying NPrinting connections shows all green check boxes.

However, when executing publish reports tasks, the task fails and we see failed to open document errors in NPrinting Engine or Scheduler log.

Environment

- Qlik NPrinting

Resolution

- Triggers. Remove any sheet triggers or any other unsupported items in the document (triggers not supported). See Related Content section below

- Note: the NPrinting connection verification process does not check for 'triggers' nor 'alternate states'. These must be checked manually in the connected document. Verification will pass even if these exist in the document.

- Section access. If the identity used does not have access to the document then it won't be able to use the document in the QS connection. See Related Content section below

- Note: If section access check box is selected but there is no section access in the document, make sure to uncheck the section check box on the NP connection configuration page

- In the NP Connection identity field, use an Identity that has access to the document. See Related Content section below

Related Content

-

Qlik Replicate Global Rules: Filter is case sensitive

Limitation

The column name text box in Qlik Replicate's Global Rule Filter is case-sensitive. If you do not match the case of the column name when running the task, the filter will not be applied to the target results.

There will be no error in the task log and no error presented onscreen. Instead, reviewing the resulting records will show that the filter was not applied and that all records are present.

Resolution

Always case-match the column name in the source.
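Because a mismatch produces no error, it can help to compare the filter's column name with the source column list before running the task. This is a minimal sketch; the function name and the sample column names are hypothetical, and the column list must be obtained from your source separately.

```python
def check_filter_column(filter_column: str, source_columns: list) -> str:
    """Report whether a filter column name matches a source column exactly."""
    if filter_column in source_columns:
        return "exact match"
    for column in source_columns:
        # Same letters but different case: the filter would silently not apply.
        if column.lower() == filter_column.lower():
            return f"case mismatch: use '{column}'"
    return "no such column"


print(check_filter_column("status", ["ID", "STATUS"]))
```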

Related Content

Data (global transformations only)

Environment

-

Understanding Set Analysis

This Techspert Talks session covers: How Set Analysis works Possible and Excluded selections Using Advanced Expressions Chapters: 01:02 - What...

-

How to disable Task Log Data Encryption in Qlik Replicate

Environment

- Qlik Replicate May 2023 (Version 2023.5 and later)

What is Replicate log file encryption?

Starting from version "November 2021" (Version 2021.11), Qlik Replicate introduced support for log data encryption to safeguard customer data snippets from being exposed in verbose task log files. However, this enhancement also presented its own challenges: the logs became very difficult to read, troubleshooting became more complicated, and the steps for Decrypting Qlik Replicate Verbose Task Log Files take time.

How to disable Task Log Data Encryption?

In response to customer feedback and feature requests, we implemented a new feature allowing users the flexibility to disable log encryption in Qlik Replicate. This enhancement was rolled out with the release of Qlik Replicate version 2023.5 GA. This article serves as a guide on how to effectively disable Task Log Data Encryption.

Steps

- Stop the Replicate Server services.

- Open <REPLICATE_INSTALL_DIR>\bin\repctl.cfg and add one line disable_log_encryption.

{
"port": 3552,
"plugins_load_list": "repui",
...
...
"enable_data_logging": true,
"disable_log_encryption": true
}

- Save the repctl.cfg file and start the Replicate Server services.

- Now the task log files display database data, sample lines like:

00029680: 2024-03-01T18:30:26:105005 [TARGET_LOAD ]V: Column name: ID value: 44
1260d008490: 2600012C000000000000000000000000 | &..,............
1260d0084a0: 000000 | ... (sqlserver_endpoint_imp.c:2787)
00029680: 2024-03-01T18:30:26:105005 [TARGET_LOAD ]V: Column name: NAME value: trx222
1260d0084a8: 747278323232 | trx222 (sqlserver_endpoint_imp.c:2787)

Here, the database table's column "ID" has the value "44" and the column "NAME" has the value "trx222"; both appear in plain text as well as in hexadecimal format.
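The configuration change can also be applied with a short script. This sketch assumes repctl.cfg is a single JSON object, as the snippet in the steps suggests; the function name `disable_log_encryption` is hypothetical. Stop the Replicate Server services and back up the file first.

```python
import json


def disable_log_encryption(cfg_text: str) -> str:
    """Return the repctl.cfg content with disable_log_encryption set to true."""
    cfg = json.loads(cfg_text)
    cfg["disable_log_encryption"] = True  # existing keys are kept as they are
    return json.dumps(cfg, indent=4)


# Shortened example content; a real file contains more settings.
print(disable_log_encryption('{"port": 3552, "enable_data_logging": true}'))
```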

Important Note:

Please exercise caution as verbose task logs may contain sensitive end-user data, and disabling encryption could potentially lead to data leakage. Always ensure appropriate measures are taken to protect sensitive information.

Related Content

How to Decrypt Qlik Replicate Verbose Task Log Files

-

Qlik Replicate: Join different tables in source database and filter records in F...

Sometimes we need to join different tables in the source database and filter records according to values in another table. In this article, we use an Oracle source endpoint to demonstrate how to build such a task in Qlik Replicate.

In the sample task below, the table testfilter is replicated from the Oracle source database to the SQL Server target database. During replication, records whose status in the table testfiltercondition is INACTIVE are filtered out and ignored in both the Full Load and CDC stages. The two tables are joined on the same primary key column "id".

Detailed information:

- In Oracle database prepare 2 tables

create table testfilter (id integer not null primary key, name char(20), notes char(200));

insert into testfilter values (2,'John','ACTIVE will pass the filter');

insert into testfilter values (3,'Sybase','INACTIVE will be ignored');

insert into testfilter values (5,'Hana','ACTIVE will pass the filter');

create table testfiltercondition (id integer not null primary key, status char(20));

insert into testfiltercondition values (2,'ACTIVE');

insert into testfiltercondition values (3,'INACTIVE');

insert into testfiltercondition values (5,'ACTIVE');

- In Qlik Replicate the table testfilter is included in a Full Load and Apply Changes enabled task.

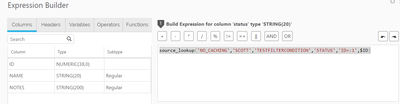

- In Table Settings --> Transformation, add an additional column named "status" whose computed expression is:

source_lookup('NO_CACHING','SCOTT','TESTFILTERCONDITION','STATUS','ID=:1',$ID)

In the above expression, the 2 tables testfilter and testfiltercondition are joined by the PK column "id" in the function source_lookup.

- In Table Settings --> Filter, add a filter on the column "status" that keeps rows whose value equals "ACTIVE"

- In the target-side table, only rows with "status" equal to "ACTIVE" are replicated. Other rows are filtered out. The filter applies in both the Full Load and CDC stages.
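To preview which rows should reach the target, the lookup and filter can be emulated as an ordinary join. The sketch below uses an in-memory SQLite database purely as a stand-in for the Oracle source, loaded with the sample rows from this article; it does not call Qlik Replicate and only mirrors the logic of the source_lookup expression plus the filter.

```python
import sqlite3


def replicated_rows():
    """Return the testfilter rows whose looked-up status is ACTIVE."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        create table testfilter (id integer not null primary key, name char(20), notes char(200));
        insert into testfilter values (2,'John','ACTIVE will pass the filter');
        insert into testfilter values (3,'Sybase','INACTIVE will be ignored');
        insert into testfilter values (5,'Hana','ACTIVE will pass the filter');
        create table testfiltercondition (id integer not null primary key, status char(20));
        insert into testfiltercondition values (2,'ACTIVE');
        insert into testfiltercondition values (3,'INACTIVE');
        insert into testfiltercondition values (5,'ACTIVE');
    """)
    # Join on the shared primary key, then keep only ACTIVE rows.
    rows = conn.execute("""
        select t.id, t.name, c.status
        from testfilter t
        join testfiltercondition c on c.id = t.id
        where c.status = 'ACTIVE'
        order by t.id
    """).fetchall()
    conn.close()
    return rows


print(replicated_rows())  # [(2, 'John', 'ACTIVE'), (5, 'Hana', 'ACTIVE')]
```

Rows 2 and 5 pass and row 3 is dropped, which matches the expected target content described above.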

Internal Investigation ID(s):

#00145952

Related Content

QnA with Qlik: Qlik Replicate Tips

Environment:

- Qlik Replicate All versions

- Oracle All versions

-

Qlik Replicate tasks don't stop after running out of disk space

By default, Qlik Replicate tasks do not automatically stop when disk space or memory has been completely used up. Resource Control must first be set up.

The tasks will log errors that disk space or memory is insufficient, but endpoints that write data to physical disk space can lead to missing data if there is no space to write the data files.

Resolution

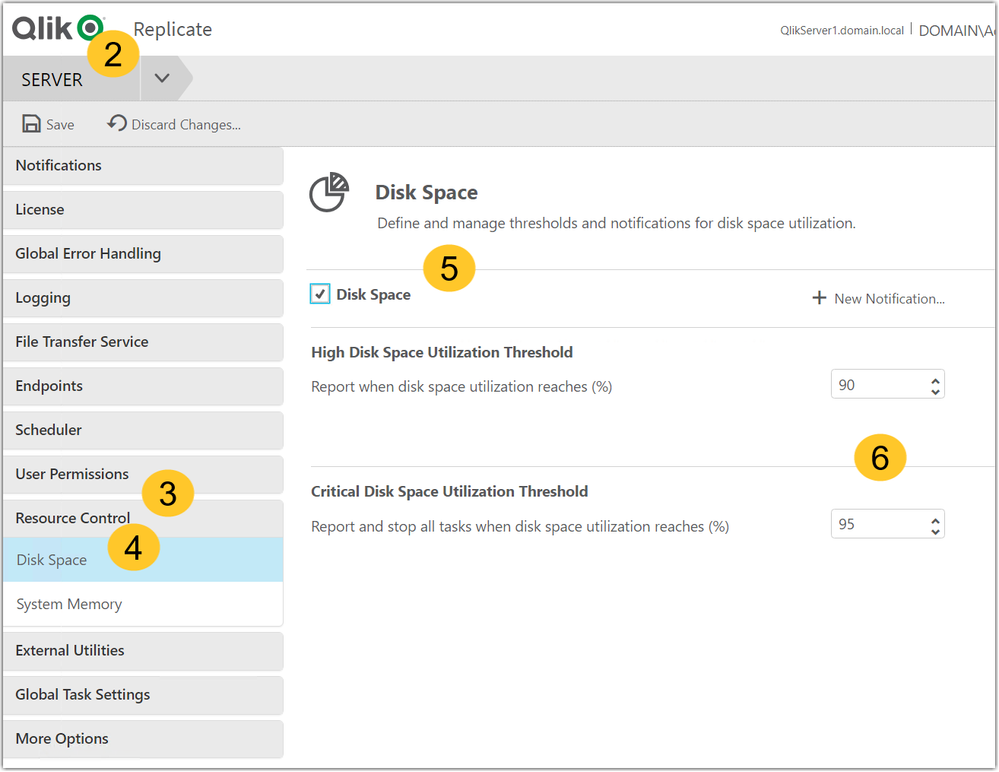

Set up Resource Control.

- Open the Qlik Replicate Management Console

- Go to the Server tab

- Open Resource Control

- Open Disk Space

- Check Disk Space

- Set up the Disk Space Utilization Threshold and Critical Disk Space Utilization Threshold
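When choosing the two thresholds, it helps to know the current disk utilization of the drive that holds the Replicate data. The sketch below is independent of Qlik Replicate: it reads utilization with Python's standard library and classifies it against two percentages. The default values 80 and 90 and both function names are placeholders for illustration, not recommended or default settings.

```python
import shutil


def used_percent(path: str) -> float:
    """Return the used share of the disk that holds path, in percent."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total


def disk_state(percent: float, threshold: float = 80.0, critical: float = 90.0) -> str:
    """Classify a utilization value against two example thresholds."""
    if percent >= critical:
        return "critical"
    if percent >= threshold:
        return "high"
    return "ok"


current = used_percent(".")
print(f"{current:.1f}% used: {disk_state(current)}")
```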

Related Content

Qlik Replicate Resource Control

Internal Investigation ID(s)

QB-24385

Environment

-

Troubleshooting Qlik Data Gateway Direct Access

This Techspert Talks session covers: How Data Gateways for Direct Access works What to do if things go wrong Troubleshooting best practices Chap...