

Featured Content


How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services: who to contact when

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Phone Numbers

- Resources

Support and Professional Services: who to contact when

We're happy to help! Here's a breakdown of resources for each type of need.

Support: Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*): Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) Reach out to your Account Manager or Customer Success Manager.

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

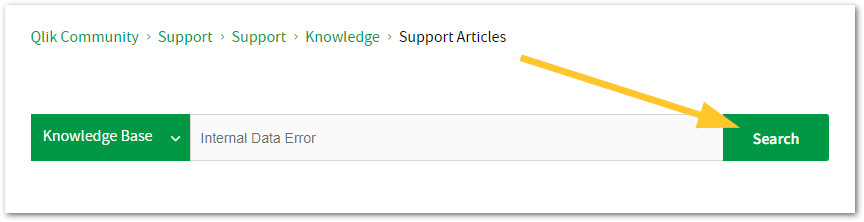

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

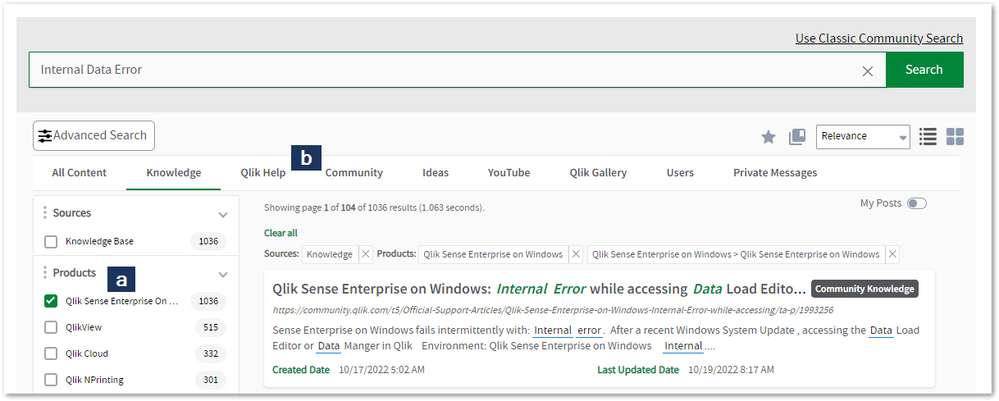

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our YouTube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about Qlik products and solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar that facilitates knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to register.myqlik.qlik.com

If you already have an account, please see How To Reset The Password of a Qlik Account for help using your existing account.

- You must enter your company name exactly as it appears on your license, or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat: click Chat Now on any Support page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- For items that require further investigation, we will create a case on your behalf, guided by step-by-step intake questions.

3. Qlik Support Case Portal

Log in to manage and track your active cases in the Case Portal.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter the problem type and issue level. Definitions are shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical to daily operations.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not a Severity 1 Error or a Severity 2 Issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Phone Numbers

When other Support channels are down for maintenance, please contact us by phone for high-severity, production-down concerns.

- Qlik Data Analytics: 1-877-754-5843

- Qlik Data Integration: 1-781-730-4060

- Talend AMER Region: 1-800-810-3065

- Talend UK Region: 44-800-098-8473

- Talend APAC Region: 65-800-492-2269

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents


Qlik Replicate and Qlik Enterprise Manager: How to identify who made transformat...

It may be necessary to identify who made changes to a particular table. Example: A transform change was done on a task with CDC enabled. What user h...

User Directory Connector (Active Directory) not functional after deleting LDAP f...

An existing User Directory Connector (UDC) stopped working.

The following error is displayed:

The User Directory Connector (UDC) is not configured because the following error occurred. Setting up connection to LDAP root node failed. Check log file

Configuring an identical connector with the same parameters on another server is working fine.

When starting a sync, the following exception is thrown in the User Repository logs:

Repository.UserDirectoryConnectors.LDAP.GenericLDAP.GenerateUserQuery(String[] toFilterOn)
   at Repository.UserDirectoryConnectors.LDAP.GenericLDAP.SyncUsers(String[] usersToFilterOn)
   at System.Runtime.Remoting.Messaging.StackBuilderSink._PrivateProcessMessage(IntPtr md, Object[] args, Object server, Object[]& outArgs)
   at System.Runtime.Remoting.Messaging.StackBuilderSink.AsyncProcessMessage(IMessage msg, IMessageSink replySink)
Exception rethrown at [0]:
   at System.Runtime.Remoting.Proxies.RealProxy.EndInvokeHelper(Message reqMsg, Boolean bProxyCase)
   at System.Runtime.Remoting.Proxies.RemotingProxy.Invoke(Object NotUsed,

Cause:

The UDC's LDAP filter string is stored in the database. If the LDAP filter is removed directly from the database (using pgAdmin, for example) and the value is left as NULL rather than an empty string, the Qlik Sense services can no longer interact correctly with the UDC.

Resolution:

- Open the Qlik Sense Management Console

- Navigate to User Directory Connectors

- Open the UDC showing the symptoms

- In the empty LDAP filter field, enter any value

- Confirm the changes


Qlik Sense Enterprise on Windows: Adding alternative measure or dimension is not...

Adding alternative measures or dimensions to a chart is expected to immediately update the chart. However, Qlik Sense May 2024 requires a page refresh before any changes are reflected correctly.

Earlier versions of Qlik Sense are not affected.

Resolution

This is caused by QB-26786.

Workaround:

Refresh the page.

Fix Version:

Upgrade to Qlik Sense Enterprise on Windows November 2024 or any later version.

Information provided on this defect is given as-is at the time of documenting. For up-to-date information, please review the most recent Release Notes, or contact support with the ID QB-26786 for reference.

Environment

- Qlik Sense Enterprise on Windows May 2024, all patches


Qlik Replicate and Qlik Enterprise Manager: HSTS not enabled as per a security s...

A third-party security scan detects HSTS as not enabled in Qlik Replicate or Qlik Enterprise Manager, even when it has been correctly set up.

Example of software that returned a false failure: VAM Vulnerability Assessment/CUB

Resolution

Manually verify if HSTS is set up:

- Open your browser's debug menu (For example in Google Chrome: press F12, or right-click and select Inspect)

- Open the Network tab

- Reload the page to capture network requests

- Open the Headers tab

- With a valid page selected, locate the Response Headers section

- Find the Strict-Transport-Security header

Example:

application-message:OK
application-status:200
cache-control:no-cache, no-store
content-length:12
content-type:application/json; charset=utf-8
date:Tue, 13 Aug 2024 01:33:36 GMT
server:Microsoft-HTTPAPI/2.0
strict-transport-security:max-age=31536000; includeSubDomains
x-content-type-options:nosniff
x-xss-protection:1; mode=block
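The manual browser check above can also be scripted. This minimal sketch uses only the Python standard library (`urllib.request`) to request a page and read its Strict-Transport-Security response header. `parse_hsts` and `fetch_hsts` are hypothetical helper names. The URL you pass in is an assumption about your own deployment. The sketch also assumes the server's TLS certificate is trusted by the machine running it; otherwise `urlopen` raises an SSL error.

```python
import urllib.request
from typing import Dict, Optional, Union

Directive = Union[int, str, bool]


def parse_hsts(value: str) -> Dict[str, Directive]:
    """Split a Strict-Transport-Security value into its directives.

    Directive names are case-insensitive, so they are lower-cased here.
    max-age is converted to an int; valueless directives such as
    includeSubDomains map to True.
    """
    directives: Dict[str, Directive] = {}
    for part in value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, arg = part.partition("=")
        name = name.strip().lower()
        arg = arg.strip().strip('"')  # max-age may be quoted
        if name == "max-age":
            directives[name] = int(arg)
        else:
            directives[name] = arg if arg else True
    return directives


def fetch_hsts(url: str, timeout: float = 10.0) -> Optional[Dict[str, Directive]]:
    """Request `url` and return its parsed HSTS directives, or None if the header is absent."""
    # urlopen verifies the TLS certificate; an untrusted self-signed
    # certificate causes an SSL error instead of a result.
    with urllib.request.urlopen(url, timeout=timeout) as response:
        header = response.headers.get("Strict-Transport-Security")
    return parse_hsts(header) if header is not None else None
```

Suppose a server sends the header shown in the example above. In that case, `fetch_hsts("https://replicate.example.com/")` (a placeholder host) would return `{"max-age": 31536000, "includesubdomains": True}`. A return value of `None` means the header was not sent.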

Cause

As Qlik Replicate and Qlik Enterprise Manager do not use IIS, some third-party tools cannot successfully verify HSTS.

Related Content

Internal Investigation ID(s)

00298442

Environment

- Qlik Replicate

- Qlik Enterprise Manager


REST API task is failing Intermittently with General Script Error in statement h...

"RestConnectorMasterTable" General Script Error in statement handling

RestConnectorMasterTable:

20200826T102106.344+0000 0088 SQL SELECT
20200826T102106.344+0000 0089 "name",
20200826T102106.344+0000 0090 "value"
20200826T102106.344+0000 0091 FROM JSON (wrap off) "contactCustomData"
20200826T102106.344+0000 0092 WITH CONNECTION (
20200826T102106.344+0000 0093 URL " ",
20200826T102106.344+0000 0094 HTTPHEADER "Authorization" "**Token removed for security purpose**"
20200826T102106.344+0000 0095 )
20200826T102106.967+0000 General Script Error in statement handling
20200826T102106.982+0000 Execution Failed
20200826T102106.986+0000 Execution finished.

Environment

- Qlik Sense Enterprise on Windows February 2021

Resolution

To catch the exact error and mitigate the issue, apply our recommended best practices for error handling in Qlik scripting using the error variables:

Error variables

Script control statements

Set ErrorMode=0 to ignore any errors and continue with the script. You can then use an IF statement to retry the connection, or switch to another connection, for a few attempts. After that, set ErrorMode back to 1 so that the script fails, or simply disconnect.

A sample script is available here. Further options can be added using the Help links above.

Qlik-Sense-fail-and-retry-connection-sample-script

Note: These functions behave the same in QlikView and Qlik Sense scripting unless otherwise stated, and the links contain some very helpful items.

Best-Practice-Error-Handling-in-Script

Cause

An error occurred while fetching the token with the REST call. If the number of rows in a table does not match, or is lower than expected, make the script throw an error and load the table again. If the count is still off, loop until the correct number of rows is returned.
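Qlik script syntax is not reproduced here. As an illustration, the following Python sketch shows the same retry-and-validate pattern:

- Catch the error, which is the role ErrorMode=0 plays.
- Retry a few times.
- Treat a too-low row count as a failure.
- Finally fail loudly, which is the role of switching back to ErrorMode=1.

`fetch`, `load_with_retry`, and `expected_min_rows` are hypothetical names, and `fetch` stands in for the REST connector call. This is an analogy, not Qlik's implementation.

```python
import time
from typing import Callable, Optional, Sequence, TypeVar

T = TypeVar("T")


def load_with_retry(fetch: Callable[[], Sequence[T]], expected_min_rows: int,
                    max_attempts: int = 3, delay_seconds: float = 5.0) -> Sequence[T]:
    """Call `fetch` until it returns at least `expected_min_rows` rows.

    Errors are caught and retried (the role ErrorMode=0 plays in a Qlik
    script); after `max_attempts` failed attempts a RuntimeError is raised,
    chained to the last problem (the role of switching back to ErrorMode=1).
    """
    last_error: Optional[Exception] = None
    for attempt in range(1, max_attempts + 1):
        try:
            rows = fetch()
            if len(rows) >= expected_min_rows:
                return rows
            # Too few rows: record it like an error so the load is retried.
            last_error = ValueError(
                f"attempt {attempt}: got {len(rows)} rows, "
                f"expected at least {expected_min_rows}")
        except Exception as exc:  # e.g. the token fetch failing
            last_error = exc
        if attempt < max_attempts:
            time.sleep(delay_seconds)
    raise RuntimeError(f"load failed after {max_attempts} attempts") from last_error
```

Comparing against a minimum row count rather than an exact one tolerates a source that legitimately grows between attempts. Use an equality check instead if the expected count is fixed.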

Internal Investigation ID(s)

QB-3164


Unable to start Talend Studio

Talend Studio does not start and displays an error message:

An error has occurred. see the log file /studio/configuration/xxxxxxxx.log

The log files read:

!MESSAGE Application error
!STACK 1
java.lang.IllegalStateException: Unable to acquire application service. Ensure that the org.eclipse.core.runtime bundle is resolved and started (see config.ini).
   at org.eclipse.core.runtime.internal.adaptor.EclipseAppLauncher.start(EclipseAppLauncher.java:81)
   at org.eclipse.core.runtime.adaptor.EclipseStarter.run(EclipseStarter.java:400)
   at org.eclipse.core.runtime.adaptor.EclipseStarter.run(EclipseStarter.java:255)
   at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
   at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
   at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
   at java.lang.reflect.Method.invoke(Method.java:498)
   at org.eclipse.equinox.launcher.Main.invokeFramework(Main.java:659)
   at org.eclipse.equinox.launcher.Main.basicRun(Main.java:595)
   at org.eclipse.equinox.launcher.Main.run(Main.java:1501)

Cause

Stale cached values in the Studio configuration folder.

Resolution

To clear cached values and recreate certain configurations:

- Close Studio and go to the Studio installation directory.

- Open the configuration folder and delete all folders whose names begin with org., except org.talend.configurator.

- Restart Studio.

If the issue persists, create a backup of your workspace, then switch to a new empty workspace and try to pull your projects again.
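The folder cleanup in the resolution steps can be scripted. This Python sketch assumes the folder is named `configuration`, as in the log path of the error message, and that Studio is already closed. `clear_studio_cache` is a hypothetical helper. It defaults to a dry run that only lists what would be deleted.

```python
import shutil
from pathlib import Path
from typing import List

# Folder that must survive the cleanup, per the resolution steps.
KEEP = {"org.talend.configurator"}


def clear_studio_cache(studio_home: str, dry_run: bool = True) -> List[str]:
    """Delete org.* folders (except those in KEEP) under <studio_home>/configuration.

    With dry_run=True (the default) nothing is deleted; the function only
    returns the names of the folders it would remove. Files such as
    config.ini and folders not starting with "org." are never touched.
    """
    config_dir = Path(studio_home) / "configuration"
    targets = sorted(
        p for p in config_dir.iterdir()
        if p.is_dir() and p.name.startswith("org.") and p.name not in KEEP
    )
    if not dry_run:
        for folder in targets:
            shutil.rmtree(folder)
    return [p.name for p in targets]
```

Run it once with the default `dry_run=True` to review the list. Then call `clear_studio_cache(studio_home, dry_run=False)` to delete the folders.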


Qlik Talend Data Catalog: The required edition for HA mode in Talend Data Catalo...

The documentation of Talend Data Catalog v8.1 states that:

In fact, HA deployment is only available on the MM most advanced edition as the license stored in the shared database must be enabled to support both the Active and Passive servers (obviously on different host ids).

To be more precise, only the "Advanced Plus" version (the Data Governance version in v8.1) supports HA mode.

Environment

- Talend Data Catalog 8.1

Related Content

Talend Data Catalog High Availability Considerations


How to set Qlik Replicate control tables as UPPERCASE in Snowflake

By default, Qlik Replicate control tables such as attrep_apply_exceptions or attrep_status are created in lower case. If you want these tables to be created in UPPERCASE on a Snowflake target, see below.

Starting with version 2021.5 Service Pack 02, there is an internal parameter called setIgnoreCaseFlag. When it is checked (set to true), it sets the Snowflake parameter QUOTED_IDENTIFIERS_IGNORE_CASE to true.

Thus, letters in double-quoted identifiers are stored and resolved as uppercase letters.

For reference: https://docs.snowflake.com/en/sql-reference/parameters.html#quoted-identifiers-ignore-case

You can search for setIgnoreCaseFlag in the Advanced tab -> Internal Parameters for the Snowflake Target endpoint.
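The following simplified Python model illustrates what the parameter changes. It follows Snowflake's documented identifier rules:

- Unquoted identifiers are stored and resolved as uppercase.
- Double-quoted identifiers keep their exact case, unless QUOTED_IDENTIFIERS_IGNORE_CASE is true.

The model assumes that Replicate creates its control tables with double-quoted, lower-case names. This article does not confirm that assumption, but it would explain the lower-case default. `resolve_identifier` is a hypothetical helper, and it does not validate identifier syntax.

```python
def resolve_identifier(identifier: str, quoted_identifiers_ignore_case: bool = False) -> str:
    """Simplified model of how Snowflake stores and resolves an identifier.

    - Unquoted identifiers are stored and resolved as uppercase.
    - Double-quoted identifiers keep their exact case, unless
      QUOTED_IDENTIFIERS_IGNORE_CASE is TRUE, in which case their letters
      are stored and resolved as uppercase too.
    """
    if len(identifier) >= 2 and identifier[0] == '"' and identifier[-1] == '"':
        # Strip the surrounding quotes; "" inside a quoted identifier is an escaped quote.
        name = identifier[1:-1].replace('""', '"')
        return name.upper() if quoted_identifiers_ignore_case else name
    return identifier.upper()
```

With the parameter off, `resolve_identifier('"attrep_status"')` keeps `attrep_status`. With `quoted_identifiers_ignore_case=True`, the same call yields `ATTREP_STATUS`.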

Environment

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support for the solution below may not be provided by Qlik Support.


Qlik Data Movement Gateway: Schema creation in Snowflake fails due to Japanese K...

Preparing a storage task fails with the following error:

Failed to prepare Data Task

Job finished with error. SQL compilation error: Object already exists as TABLE

The error only occurs if a task name includes Japanese Katakana characters. It does not occur when English is the preferred language, only when Japanese is used.

Environment

- Qlik Data Gateway version 2023.11.11

Resolution

This issue was caused by RECOB-8643 and has been resolved in Qlik Data Gateway version 2024.5.7 and later releases.

See the Release Notes for details.

Internal Investigation ID(s)

RECOB-8643


Qlik Sense Enterprise on Windows upgrade fails with error: Could not find file

Upgrading Qlik Sense Enterprise on Windows (on-prem) fails. Reviewing the installer log files (see How To Collect Qlik Sense Installation Log File) shows:

Error! Validation of: C:\Program Files\Qlik\Sense\\Client\1130.68f106588cb51d5970a9.js.LICENSE.txt failed: Could not find file 'C:\Program Files\Qlik\Sense\Client\1130.68f106588cb51d5970a9.js.LICENSE.txt'.

The environment previously used the Qlik PostgreSQL Installer to upgrade its bundled PostgreSQL database.

Resolution

Verify whether old Qlik Sense patches are still installed:

- Open the Windows Control Panel

- Open Programs & Features

- Open View Installed Updates (menu on the left)

- Locate an old Qlik Sense patch and click Uninstall

If the Uninstall option does not work, manually uninstall it instead:

- Open a Windows Explorer and navigate to C:\ProgramData\Package Cache

- Open a command prompt (as an administrator) and move to the same path using the command: "cd C:\ProgramData\Package Cache"

- Run the command "dir /s Qlik_Sense_update.exe"

- The output shows the folder that contains the file. Open that folder in Windows Explorer.

- Verify the folder includes Qlik_Sense_update.exe

- Rename the folder, for example to "9b21fde3-1245-4536-891f-e1d3965f3d0f_aaa"

- Go back to the list of Installed Updates and click Uninstall on the patch

- Confirm the message with Yes

- Upgrade Qlik Sense.
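The `dir /s Qlik_Sense_update.exe` search can also be done with a short Python sketch using `pathlib`. `find_patch_folders` is a hypothetical helper. It only locates candidate folders and does not rename anything, so the rename and uninstall steps above stay manual.

```python
from pathlib import Path
from typing import List

DEFAULT_CACHE = r"C:\ProgramData\Package Cache"


def find_patch_folders(cache_dir: str = DEFAULT_CACHE,
                       file_name: str = "Qlik_Sense_update.exe") -> List[Path]:
    """Return every folder under cache_dir that contains file_name (like `dir /s`)."""
    # rglob walks all subfolders recursively; a set removes duplicate parents.
    return sorted({match.parent for match in Path(cache_dir).rglob(file_name)})
```

Run it from an elevated prompt, since `C:\ProgramData\Package Cache` may not be readable otherwise, and print the result to see which folder to rename.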

Cause

A defect was observed when the Qlik PostgreSQL Installer (QPI) had previously been used and old patches were not correctly removed by the installer. See the May 2023 Release Notes for QPI.

The error message appears because the installer looks for a file that no longer exists in the new version of Qlik Sense.

Related Content

Upgrading Qlik Sense Repository Database using the Qlik PostgreSQL Installer

Environment

Qlik Sense Enterprise on Windows with Qlik PostgreSQL Installer used to upgrade PostgreSQL

Example: QPI was used to upgrade PostgreSQL on Qlik Sense November 2022, which was then upgraded to February 2023.

Internal Investigation ID(s)

QB-17862


Qlik Talend Data Integration: Best practice for Talend Runtime dependency jar fi...

When the runtime network environment is strictly restricted and a firewall exception cannot be opened for repo1.maven.org, jar dependencies cannot be resolved. This causes service deployment to fail and generates "Unable to resolve xxx.jar" errors in <Talend Runtime Home>/log/tesb.log.

Resolution

Quick workaround

Download the jar locally and upload it to the correct folder, following the Maven 2 repository layout. Talend Runtime uses the local Maven cache to copy the required jars into the <Talend Runtime Home>/system folder.

- mkdir -p /home/talenduser/.m2/repository

- Download the missing jar from https://repo1.maven.org to a local machine and upload it to the Talend Runtime server.

- Create the maven2 folder structure and copy the jar to the newly created folder.

- In the Talend Runtime console, run bundle:install mvn:<group-id>/<artifact-id>/<version>

- Redeploy the failed service.
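To work out where the jar belongs under the local repository, apply the Maven 2 layout. The groupId's dots become directories, followed by the artifactId, the version, and the file `<artifactId>-<version>.jar`. The Python sketch below computes that relative path and the matching console command. `maven2_path` and `bundle_install_command` are hypothetical helpers. The `mvn:` URL form is the Apache Karaf / Pax URL syntax used by Talend Runtime, a Karaf-based container configured through org.ops4j.pax.url.mvn.cfg.

```python
from pathlib import PurePosixPath


def maven2_path(group_id: str, artifact_id: str, version: str,
                packaging: str = "jar") -> PurePosixPath:
    """Relative location of an artifact in the Maven 2 repository layout.

    The groupId's dots become directories, followed by the artifactId,
    the version, and the file name <artifactId>-<version>.<packaging>.
    """
    return PurePosixPath(*group_id.split("."), artifact_id, version,
                         f"{artifact_id}-{version}.{packaging}")


def bundle_install_command(group_id: str, artifact_id: str, version: str) -> str:
    """Console command that installs the bundle through a mvn: URL."""
    return f"bundle:install mvn:{group_id}/{artifact_id}/{version}"
```

For example, `maven2_path("org.apache.commons", "commons-lang3", "3.12.0")` gives `org/apache/commons/commons-lang3/3.12.0/commons-lang3-3.12.0.jar`, to be placed under `/home/talenduser/.m2/repository`. The artifact coordinates are only an illustration.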

Long-term Solution

Use centralized network control for every jar download:

- Set up a Nexus repository, such as "maven-central", that mirrors https://repo1.maven.org. This Nexus server must be allowlisted for access to https://repo1.maven.org.

- Add "http://admin:Talend123@nexusHost:8081/repository/maven-central, \" to org.ops4j.pax.url.mvn.cfg.

vi Runtime/etc/org.ops4j.pax.url.mvn.cfg

org.ops4j.pax.url.mvn.repositories= \
    http://admin:Talend123@nexusHost:8081/repository/releases@id=tesb.release, \
    http://admin:Talend123@nexusHost:8081/repository/snapshots@snapshots@id=tesb.snapshot, \
    http://admin:Talend123@nexusHost:8081/repository/maven-central, \
    https://repo1.maven.org/maven2@id=central

Then, Talend Runtime can use this mirror repo to access dependency jars.

Cause

Strict network controls on the Talend Runtime server.

Environment

- Talend Data Integration 7.3.1, 8.0.1


Using Data Preparation as part of a Data Ingestion Pipeline

Introduction: This article describes how to use Talend Data Preparation (Data Prep) as part of a data ingestion pipeline. This is a common busines...

Qlik Talend Cloud: TMC task is running under a different Java version than what ...

The task was published from Talend Studio using Java 17. The project properties within Talend Studio are set to Java 17 compatibility mode, and the Remote Engine also uses Java 17. Nevertheless, the execution summary of the task indicates that Java 8 is being used.

Cause

The JAVA_HOME environment variable on the Remote Engine host points to a Java 8 home path. Some servers require multiple Java environments and set the Remote Engine's JDK in the <Remote Engine Installation Home>/bin/setenv or the <Remote Engine Installation Home>/etc/wrapper file. The Job launcher, however, takes its lead from JAVA_HOME, which causes the Job to execute with Java 8.

Resolution

- Back up and edit the <Remote Engine Installation Home>/etc/org.talend.remote.jobserver.server.cfg file.

- Set the Java executable path in org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH, for example:

org.talend.remote.jobserver.commons.config.JobServerConfiguration.JOB_LAUNCHER_PATH=/<JDK HOME>/bin/java
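The backup-and-edit step can also be scripted. This Python sketch first copies the file to a `.bak` file. It then sets the property, replacing an existing uncommented line or appending a new one. `set_cfg_property` is a hypothetical helper, and it handles only the `key=value` form. The JDK path in the usage note is a placeholder.

```python
import shutil
from pathlib import Path

# Property name taken from the resolution above.
JOB_LAUNCHER_KEY = ("org.talend.remote.jobserver.commons.config."
                    "JobServerConfiguration.JOB_LAUNCHER_PATH")


def set_cfg_property(cfg_file: str, key: str, value: str) -> Path:
    """Back up `cfg_file`, then set `key=value` in it; return the backup path.

    An existing uncommented `key=...` line is replaced; otherwise the line
    is appended. Only the `key=value` form is handled (not `key: value`).
    """
    path = Path(cfg_file)
    backup = path.with_name(path.name + ".bak")
    shutil.copy2(path, backup)  # keep the original before editing
    lines = path.read_text(encoding="utf-8").splitlines()
    new_line = f"{key}={value}"
    found = False
    for i, line in enumerate(lines):
        stripped = line.lstrip()
        if stripped.startswith("#"):
            continue  # leave comments untouched
        if stripped.split("=", 1)[0].strip() == key:
            lines[i] = new_line
            found = True
    if not found:
        lines.append(new_line)
    path.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return backup
```

Example call, substituting the real paths: `set_cfg_property("<Remote Engine Installation Home>/etc/org.talend.remote.jobserver.server.cfg", JOB_LAUNCHER_KEY, "/opt/jdk-17/bin/java")`.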

Environment

- Talend Data Integration 8.0.1


The Qlik Sense Monitoring Applications for Cloud and On Premise

Qlik Sense offers a range of Monitoring Applications that come pre-installed with the product. This article explains where to find information about them and where to download them.

Content:

- Qlik Cloud

- Entitlement Analyzer for Qlik Cloud

- App Analyzer for Qlik Cloud

- Reload Analyzer for Qlik Cloud

- Access Evaluator for Qlik Cloud

- How to automate your Qlik Cloud Monitoring Apps

- Other Qlik Cloud Monitoring Apps

- Qlik Application Automation Monitoring App

- OEM Dashboard for Qlik Cloud

- Monitoring Apps for Qlik Sense Enterprise on Windows

- Operations Monitor and License Monitor

- App Metadata Analyzer

- The Monitoring & Administration Topic Group

- Other Apps

Qlik Cloud

Entitlement Analyzer for Qlik Cloud

The Entitlement Analyzer is a Qlik Sense application built for Qlik Cloud, which provides an overview of entitlement usage for your Qlik Cloud tenant.

The app provides:

- Which users are accessing which apps

- Consumption of Professional, Analyzer and Analyzer Capacity entitlements

- Whether you have the correct entitlements assigned to each of your users

- Where your Analyzer Capacity entitlements are being consumed, and forecasted usage

For more information and to download the app and usage instructions, see The Entitlement Analyzer for Qlik Cloud.

App Analyzer for Qlik Cloud

The App Analyzer is a Qlik Sense application built for Qlik Cloud, which helps you to analyze and monitor Qlik Sense applications in your tenant.

The app provides:

- App, Table and Field memory footprints

- Synthetic keys and island tables to help improve app development

- Threshold analysis for fields, tables, rows and more

- Reload times and peak RAM utilization by app

For more information and to download the app and usage instructions, see Qlik Cloud App Analyzer.

Reload Analyzer for Qlik Cloud

The Reload Analyzer is a Qlik Sense application built for Qlik Cloud, which provides an overview of data refreshes for your Qlik Cloud tenant.

The app provides:

- The number of reloads by type (Scheduled, Hub, In App, API) and by user

- Data connections and used files of each app’s most recent reload

- Reload concurrency and peak reload RAM

- Reload tasks and their respective statuses

For more information and to download the app and usage instructions, see Qlik Cloud Reload Analyzer.

Access Evaluator for Qlik Cloud

The Access Evaluator is a Qlik Sense application built for Qlik Cloud, which helps you to analyze user roles, access, and permissions across a tenant.

The app provides:

- User and group access to spaces

- User, group, and share access to apps

- User roles and associated role permissions

- Group assignments to roles

For more information and to download the app and usage instructions, see Qlik Cloud Access Evaluator.

How to automate your Qlik Cloud Monitoring Apps

Do you want to automate the installation, upgrade, and management of your Qlik Cloud Monitoring apps? With the Qlik Cloud Monitoring Apps Workflow, made possible through Qlik's Application Automation, you can:

- Install/update the apps with a fully guided, click-through installer using an out-of-the-box Qlik Application Automation template.

- Programmatically rotate the API key that is required for the data connection on a schedule using an out-of-the-box Qlik Application Automation template. This ensures that the data connection is always operational.

- Get alerted whenever a new version of a monitoring app is available using Qlik Data Alerts.

For more information and usage instructions, see Qlik Cloud Monitoring Apps Workflow Guide.

Other Qlik Cloud Monitoring Apps

Qlik Application Automation Monitoring App

This article shows how to use the Qlik Application Automation Monitoring App. It explains how to set up the load script and how to use the app for monitoring Qlik Application Automation usage statistics for a cloud tenant.

For more information and to download the app and usage instructions, see Qlik Application Automation monitoring app.

OEM Dashboard for Qlik Cloud

The OEM Dashboard is a Qlik Sense application for Qlik Cloud designed for OEM partners to centrally monitor usage data across their customers’ tenants. It provides a single pane to review numerous dimensions and measures, compare trends, and quickly spot issues across many different areas.

Although this dashboard is designed for OEMs, it can also be used by partners and customers who manage more than one tenant in Qlik Cloud.

For more information and to download the app and usage instructions, see Qlik Cloud OEM Dashboard & Console Settings Collector.

The Qlik Cloud monitoring applications are provided as-is and are not supported by Qlik. Over time, the APIs and metrics used by the apps may change, so it is advised to monitor each repository for updates and to update the apps promptly when new versions are available.

If you have issues while using these apps, support is provided on a best-efforts basis by contributors to the repositories on GitHub.

Monitoring Apps for Qlik Sense Enterprise on Windows

Operations Monitor and License Monitor

The Operations Monitor loads service logs to populate charts covering performance history of hardware utilization, active users, app sessions, results of reload tasks, and errors and warnings. It also tracks changes made in the QMC that affect the Operations Monitor.

The License Monitor loads service logs to populate charts and tables covering token allocation, usage of login and user passes, and errors and warnings.

For a more detailed description of the sheets and visualizations in both apps, visit the story About the License Monitor or About the Operations Monitor that is available from the app overview page, under Stories.

Basic information can be found here:

The License Monitor

The Operations Monitor

Both apps come pre-installed with Qlik Sense.

If a direct download is required: Sense License Monitor | Sense Operations Monitor. Note that Support can only be provided for apps pre-installed with your latest version of Qlik Sense Enterprise on Windows.

App Metadata Analyzer

The App Metadata Analyzer app provides a dashboard to analyze Qlik Sense application metadata across your Qlik Sense Enterprise deployment. It gives you a holistic view of all your Qlik Sense apps, including granular level detail of an app's data model and its resource utilization.

Basic information can be found here:

App Metadata Analyzer (help.qlik.com)

For more details and best practices, see:

App Metadata Analyzer (Admin Playbook)

The app comes pre-installed with Qlik Sense.

The Monitoring & Administration Topic Group

Looking to discuss the Monitoring Applications? In this group, we share key versions of the Sense Monitor Apps and the latest QV Governance Dashboard, discuss best practices, and post video tutorials, and you can ask questions.

Other Apps

LogAnalysis App: The Qlik Sense app for troubleshooting Qlik Sense Enterprise on Windows logs

Sessions Monitor, Reloads-Monitor, Log-Monitor

Connectors Log Analyzer

All other apps are provided as-is, and no ongoing support will be provided by Qlik Support.

-

Qlik Replicate: What does the Internal Parameter skipMscdcJobFitnessCheck do?

The name of the internal parameter skipMscdcJobFitnessCheck suggests that, when enabled, it prevents (skips) the Fitness Check from running. This is incorrect.

skipMscdcJobFitnessCheck controls the cdcJob portion of the Fitness Check. The Fitness Check itself will always run and cannot be stopped. Only the cdcJob check is skipped.

This means that task log entries such as "Failed in MS-CDC fitness check" will not be addressed by enabling skipMscdcJobFitnessCheck.

Environment

Qlik Replicate

SQL Server MS-CDC

Related Content:

Qlik Replicate: MSSQL-CDC source endpoint to Snowflake. We are encountering the following error: Failed in MS-CDC fitness check

Qlik Replicate: How to set Internal Parameters and what are they for?

-

Qlik Sense Migration Tools: Error operation unsupported for server-type "cloud" ...

Executing the Qlik Migration Tools leads to the following error on line 185 of 7_migrateapps.ps1:

Error: operation unsupported for server-type "cloud" (Qlik Cloud). Supported server-types are: ["windows"]

Example:

qlik context use $QlikWindowsContext | out-null
qlik qrs app export create $appid --skipdata $SS --exportScope all --output-file $exportAppFile

Resolution

Verify you have followed the required step-by-step configuration documented in Using qlik-cli with Qlik Sense Enterprise client-managed Repository API (QRS).

Specifically, make sure to run the following command to add the Qlik Sense Enterprise on Windows server to qlik-cli as a context. See Configure a context in qlik-cli for details.

Example:

qlik context create QSEoW --server <server name> --server-type windows --api-key <JWT Token>
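To make the --server-type windows requirement harder to miss when scripting the setup, the command above can be assembled as an argument list. This is a minimal Python sketch: build_context_create_args is a hypothetical helper, the server URL and token are placeholders, and only the qlik-cli flags shown in this article are used. The sketch does not run qlik-cli itself; passing the returned list to subprocess.run would, assuming qlik-cli is installed and on the PATH.

```python
from typing import List


def build_context_create_args(name: str, server: str, api_key: str,
                              server_type: str = "windows") -> List[str]:
    """Hypothetical helper: build the 'qlik context create' command from this article.

    Only the flags shown in the article are used: --server, --server-type, --api-key.
    """
    # The Migration Tools' QRS calls need a Windows (client-managed) context;
    # a "cloud" context leads to: operation unsupported for server-type "cloud".
    if server_type != "windows":
        raise ValueError(
            f'server-type must be "windows" for QRS calls, got "{server_type}"')
    if not server or not api_key:
        raise ValueError("server and api_key are required")
    return [
        "qlik", "context", "create", name,
        "--server", server,
        "--server-type", server_type,
        "--api-key", api_key,
    ]


if __name__ == "__main__":
    # Placeholder values only; substitute your own server address and JWT.
    args = build_context_create_args("QSEoW", "https://qseow.example.com", "<JWT Token>")
    print(" ".join(args))
```

Raising early on any other server type mirrors the failure described in this article, so a wrong context is caught before the export step on line 185 runs.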

Cause

Possible causes:

- The --server-type windows flag was not specified when the context was created

- The command was executed against a Qlik Cloud context instead of a Qlik Sense Enterprise on Windows context

Lines 184 and 185 of the script test the connection, as documented in Qlik-CLI: Test the connection. An error at this point indicates a misconfigured context.

Environment

- Qlik Cloud

- Qlik Sense Enterprise on Windows

-

Qlik Cloud: "Unable to connect to the Qlik Sense engine" error when opening or a...

When accessing a tenant on one of the Qlik Sense SaaS editions, you may get the following error after trying to open an app:

"Unable to connect to the Qlik Sense engine. Possible causes: too many connections, the service is offline, or networking issues."

Environment

- Qlik Sense Enterprise SaaS

- Qlik Sense Business

Resolution

In the most common scenario, the error is related to an idle session timeout. If the error occurs after 20 minutes of idle time, the only required action is to click "Refresh" to reconnect the client to Qlik Sense.

For this scenario, a more user-friendly message is planned for future Qlik Cloud releases.

If the error occurs immediately, or sooner than the idle session timeout, try to establish whether a network device between the browser and the Internet is causing the connection error:

- Contact your local IT helpdesk for troubleshooting and advice.

- Confirm that network components support WebSocket connections to your tenant <tenantname>.<region>.qlikcloud.com

- Check whether the device uses a proxy for Internet access

- Disable VPN if feasible

- Connect to the Qlik Cloud tenant from a different device or network

- Connect with a non-corporate device to quickly identify whether corporate policy is causing the issue

- If you are not allowed to access the tenant from another device, access the Qlik Download page as a test instead

- Check the browser developer tools for more specific error messages

- Search the Qlik Support Knowledge Base for related articles and known issues

- Open a Qlik Support ticket if the error cannot be resolved and your local IT cannot find a root cause

Cause

Qlik Sense uses WebSockets for communication between the web browser and the Qlik Sense server. A Qlik Sense app's visualization objects and data are delivered to the browser over this connection.

The same technology is used for web integration, where Qlik Sense objects are embedded in other pages.

The root cause of this error is that the browser's WebSocket connection has been terminated. This can happen for several reasons:

- The most common scenario is that the session has been idle for 20 minutes and the session timeout in the Qlik Sense engine has been reached. In that case, the session is restored by clicking "Refresh" in the error dialog.

- The WebSocket connection may be terminated prematurely or blocked by a network device.

- If the error occurs immediately when an app is accessed, it may indicate that WebSocket traffic is not allowed to pass through from the browser to the Internet. It may also indicate that one of the network devices between the browser and the Internet does not allow multiple concurrent WebSocket connections.

- If the error occurs a few seconds after the app has been successfully opened, it may indicate that the network devices between the browser and the Internet do not allow the WebSocket connection to be persistent. Qlik Sense requires persistent WebSocket connections and also sends TCP keep-alive packets every ~30 seconds to keep the connection open even when a user is not actively interacting with a Qlik Sense app. This keep-alive mechanism will not work if a network device has a connection timeout lower than 30 seconds.

- WebSocket connections use a different protocol (WSS) than normal (HTTPS) web traffic. Network devices between the browser and the Internet must support the WebSocket protocol.
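The timing-based reasoning above can be sketched as a small triage helper. This is an illustrative Python sketch, not a Qlik tool: the function names are hypothetical, the 20-minute idle timeout and ~30-second keep-alive values come from this article, and the 1-second and 60-second thresholds separating "immediately" from "a few seconds after" are assumptions chosen for illustration.

```python
# Values taken from this article; thresholds marked ASSUMPTION are illustrative only.
IDLE_SESSION_TIMEOUT_S = 20 * 60  # engine idle session timeout (20 minutes)
KEEPALIVE_INTERVAL_S = 30         # approximate keep-alive interval (~30 seconds)
IMMEDIATE_S = 1                   # ASSUMPTION: "immediately when an app is accessed"
SHORTLY_AFTER_S = 60              # ASSUMPTION: "a few seconds after" the app opened


def likely_cause(seconds_after_open: float, idle_seconds: float) -> str:
    """Map when the error appeared to the probable cause described in this article."""
    if idle_seconds >= IDLE_SESSION_TIMEOUT_S:
        return "idle session timeout: click Refresh to reconnect"
    if seconds_after_open <= IMMEDIATE_S:
        return "blocked: WebSocket traffic or concurrent WebSocket connections not allowed"
    if seconds_after_open <= SHORTLY_AFTER_S:
        return "not persistent: a network device closes the WebSocket connection"
    return "other: check browser developer tools and the network path"


def keepalive_survives(device_idle_timeout_s: float,
                       keepalive_s: float = KEEPALIVE_INTERVAL_S) -> bool:
    """True if a device's idle timeout is long enough for keep-alives to hold the connection.

    Equality is treated as failing because the keep-alive interval is only approximate.
    """
    return device_idle_timeout_s > keepalive_s


if __name__ == "__main__":
    print(likely_cause(seconds_after_open=0, idle_seconds=0))
    print(keepalive_survives(device_idle_timeout_s=15))  # False: shorter than ~30 s
```

The idle-timeout check runs first because, per this article, an error after 20 minutes of inactivity only needs a Refresh, regardless of how long the app had been open.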

Related Content

-

How to load a file from SharePoint 365 using Qlik Web Connectors


In this example, we load data from an Excel file hosted on SharePoint 365 using the Qlik Office 365 Sharepoint connector.

Environment:

- Qlik Web Connectors: Qlik Office 365 SharePoint Connector

- Qlik Sense Enterprise on Windows, all versions

For our example, we are using Qlik Sense Enterprise on Windows and the installed Qlik Web Connector for Office 365 SharePoint. For more information on the Office 365 SharePoint Qlik Web Connector and for installation instructions, see: Office 365 Sharepoint and Installation Web Connectors.

Step by Step Instructions (as seen in the video)

The links in these steps are placeholders, not real links.

- Using a supported web browser, open the Qlik Web Connectors Console:

YourWebConnectorServer:5555/web

- Locate and click the Qlik Office 365 SharePoint Connector

- Select the CanAuthenticate table in the available Tables list on the left.

- Click Parameters

- Copy your Sharepoint base URL (where the file is located) into the Base URL field

https://<yourO365TenantID>.sharepoint.com

- Click Authenticate

- This will open a new browser window with your authentication code. Copy the code to the clipboard.

- Return to the previous browser window and paste the code into the Authentication Code field. Click Save.

- Now click Save Inputs & Run Table

The Data Preview tab confirms success by showing true in the authenticated row.

- Locate the ListFolders table in the available Tables list on the left.

- Click Parameters

- Provide the Base URL and Sub Site Path

- Base URL: https://<yourO365TenantID>.sharepoint.com

- Sub site Path: /sites/<yoursubsiteDirectoryPath>

- Click Save Inputs and Run Table

- In the resulting list, locate the folder that contains your file.

- Copy the path and paste it into the Folder field.

- /sites/<yoursubsiteDirectoryPath>/Shared Documents/Functional Area - Development

- Repeat this step if needed to continue listing nested folders until you locate the folder storing the file.

- Click Save Inputs and Run Table

- Copy the folder ServerRelativeUrl

- Locate the ListFiles table in the available Tables list on the left. This will allow us to identify the ID of the Excel file.

- Click Parameters

- Paste the ServerRelativeUrl into the Folder field

- Click Save Inputs and Run Table

- The Data Preview tab now shows the UniqueId of the .xlsx file you are interested in. In our example: cf079b74-1bc8-4a5a-971d-07321081a6ac

- Copy the ID

- Go back and choose the GetFile table

- Set the Sub Site Path to the same subsite you used previously

- Paste the UniqueId into the UniqueId For File field.

- Click Save Inputs and Run Table

- The Connector will now attempt to preview the data

- Click the Qlik Sense (Standard Mode) tab

- Copy the URL provided:

http://localhost:5555/data?connectorID=Office365Connector&table=GetFile&subSite=%2fsites%2f<yoursubsiteDirectoryPath>&fileId=cf079b74-1bc8-4a5a-971d-07321081a6ac&appID=

- Go to your app in Qlik Sense

- Create a web file data connection using the URL you copied.

- Reload the data in your Qlik Sense app

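The final URL from the Qlik Sense (Standard Mode) tab can also be assembled in a script, for example when loading several files. This is a minimal Python sketch that assumes the connector accepts exactly the query parameters seen in the example URL above and runs on localhost:5555; the function name getfile_url is hypothetical.

```python
import uuid
from urllib.parse import urlencode


def getfile_url(sub_site: str, file_id: str,
                base: str = "http://localhost:5555/data") -> str:
    """Build a GetFile URL shaped like the Qlik Sense (Standard Mode) example.

    ASSUMPTION: the connector accepts exactly the query parameters seen in the
    example URL; the function name and defaults are illustrative.
    """
    # Fail early (ValueError) if the UniqueId copied from ListFiles is not a valid GUID.
    uuid.UUID(file_id)
    params = {
        "connectorID": "Office365Connector",
        "table": "GetFile",
        "subSite": sub_site,  # e.g. /sites/<yoursubsiteDirectoryPath>
        "fileId": file_id,
        "appID": "",          # left empty, as in the example URL
    }
    # urlencode percent-encodes "/" as %2F; the example shows lowercase %2f,
    # and both forms are equivalent.
    return f"{base}?{urlencode(params)}"


if __name__ == "__main__":
    print(getfile_url("/sites/MySite", "cf079b74-1bc8-4a5a-971d-07321081a6ac"))
```

By default, urlencode encodes spaces as "+"; whether the connector accepts that form for a path such as "Shared Documents" is untested here, and passing quote_via=urllib.parse.quote would produce %20 instead.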

-

Qlik Talend Data Integration: Talend Installer failed with an error: /bin/sh: /...

When running the Talend Installer as the root user with sudo permissions, the installation failed with the following error:

Error: There has been an error.

Error running /bin/sh -c "/tmp/create_user_4ce8b0db.sh" : /bin/sh: /tmp/create_user_4ce8b0db.sh: Permission denied

Resolution

Work with your Linux administrator to temporarily remove the noexec option from the /tmp entry in /etc/fstab (the change only takes effect after /tmp is remounted). Once the installation is complete, you may re-enable the noexec option for security reasons.

Cause

The /tmp folder was mounted with the noexec option, which prevents files in it from being executed. By default, the Talend Installer extracts its temporary installer files to /tmp and executes them from there.

To verify this, run:

cat /etc/fstab

and look for the /tmp entry, for example:

/dev/mapper/vgroot-lvtmp /tmp xfs nodev,nosuid,noexec 0 0
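The same check can be scripted instead of reading the file by eye. This is a minimal Python sketch assuming the standard whitespace-separated fstab column layout (device, mount point, filesystem type, options, dump, pass); the function names are hypothetical. Because /proc/mounts uses the same first four columns, the same parser also shows whether noexec is in effect right now, which matters because editing /etc/fstab does not change an existing mount until /tmp is remounted.

```python
import os
from typing import List, Optional


def mount_options(table_text: str, mount_point: str = "/tmp") -> Optional[List[str]]:
    """Return the mount options for mount_point from fstab-style text, or None if absent."""
    found: Optional[List[str]] = None
    for raw in table_text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):  # skip blank lines and comments
            continue
        fields = line.split()
        # Columns: device, mount point, filesystem type, options, dump, pass
        if len(fields) >= 4 and fields[1] == mount_point:
            # Keep the last match: in /proc/mounts the last entry is the visible mount.
            found = fields[3].split(",")
    return found


def is_noexec(table_text: str, mount_point: str = "/tmp") -> bool:
    opts = mount_options(table_text, mount_point)
    return opts is not None and "noexec" in opts


if __name__ == "__main__":
    # /etc/fstab shows the configured options; /proc/mounts shows what is mounted now.
    for path in ("/etc/fstab", "/proc/mounts"):
        if os.path.exists(path):
            with open(path) as f:
                print(path, "noexec on /tmp:", is_noexec(f.read()))
```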

Environment

- Talend Data Integration 7.3.1

-

Qlik Sense Chart-level scripts, ADD LOAD ..... RESIDENT HC1 does not work

The conclusions in this article were reached in collaboration with cjgorrin from the European Commission's Joint Research Centre Qlik Sense team.

Chart-level scripts, a newer Qlik Sense feature, use the Add prefix with LOAD to append values to the HC1 table, which represents the hypercube computed by the Qlik associative engine.

Based on this documentation, and using the attached CHART LEVEL SCRIPT_ADD RESIDENT Load.qvf, we would expect the table to have 12 entries (6 original and 6 new).

Example: because the hypercube already has 6 entries, we would expect 6 new entries to be added when using the Add prefix.

ADD LOAD MyDim1 as MyDim1, MyDim2 as MyDim2, MyMeasure1*100 as MyMeasure1, MyMeasure2*1000 as MyMeasure2 RESIDENT HC1;

The result shows only 6 rows, and only one of them is modified.

Resolution

This is working as designed.

ADD LOAD ... RESIDENT attempts to merge duplicate rows, and the test cases expect this behavior.

Workaround

To work around this specific use case, append a blank space to the values of one of the dimensions, as shown below:

ADD LOAD MyDim1&' ' as MyDim1, MyDim2 as MyDim2, MyMeasure1*100 as MyMeasure1, MyMeasure2*1000 as MyMeasure2 RESIDENT HC1;

Internal Investigation ID(s):

QB-27903

Environment

- Qlik Sense May 2024 and above

- Qlik Sense Cloud