
Featured Content

-

How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Phone Numbers

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

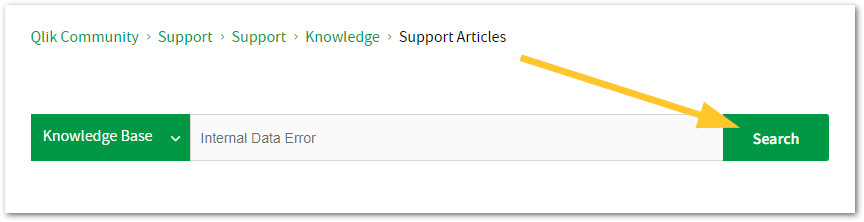

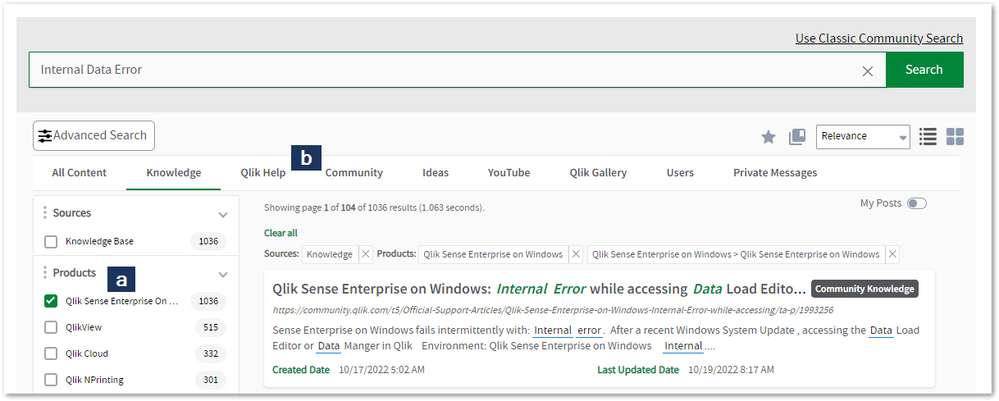

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our Youtube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar to facilitate knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums

- Local language groups available

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to register.myqlik.qlik.com

If you already have an account, please see How To Reset The Password of a Qlik Account for help using your existing account.

- You must enter your company name exactly as it appears on your license or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat, by clicking Chat Now on any Support Page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- Create a case on your behalf, using step-by-step intake questions, for items that need further investigation

3. Qlik Support Case Portal

Log in to manage and track your active cases in the Case Portal.

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter problem type and issue level. Definitions shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not Severity 1 Error or Severity 2 Issue. For more information, visit our Qlik Support Policy.
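The severity definitions above can be read as a simple decision order. The following is only an illustrative sketch of that reading, not an official Qlik tool; the function name and its parameters are hypothetical and exist only for this example.

```python
def classify_severity(production_down: bool,
                      scheduled_maintenance: bool,
                      major_function_broken: bool,
                      degradation_blocks_critical_work: bool) -> int:
    """Return 1, 2, or 3 following the order of the definitions above.

    All parameter names are hypothetical; they are not fields of the case form.
    """
    # Severity 1: production software is down, and not because of
    # scheduled maintenance and/or upgrades.
    if production_down and not scheduled_maintenance:
        return 1
    # Severity 2: major functionality deviates from the documentation, or
    # performance degradation prevents critical business operations.
    if major_function_broken or degradation_blocks_critical_work:
        return 2
    # Severity 3: anything that is neither Severity 1 nor Severity 2.
    return 3


print(classify_severity(True, False, False, False))
```

The Qlik Support Policy remains the authoritative source for how a case is classified.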

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Phone Numbers

When other Support Channels are down for maintenance, please contact us via phone for high severity production-down concerns.

- Qlik Data Analytics: 1-877-754-5843

- Qlik Data Integration: 1-781-730-4060

- Talend AMER Region: 1-800-810-3065

- Talend UK Region: 44-800-098-8473

- Talend APAC Region: 65-800-492-2269

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents

-

Qlik Talend Administration Center Error Migrating Nexus OrientDB to PostgreSQL D...

For Talend Administration Center configured with Nexus, when attempting to migrate Sonatype Nexus Repository Manager OSS (versions prior to 3.77.0) from the embedded OrientDB database to an external PostgreSQL database, the Database Migrator utility may encounter the following error:

com.sonatype.nexus.db.migrator.exception.WrongNxrmEditionException: Migration to an external database requires Nexus Repository Manager Pro and is not supported for Nexus Repository Manager OSS instances.

Resolution

To successfully migrate from OrientDB to PostgreSQL, follow the migration path recommended by Sonatype, in this order:

Migrating from OrientDB to H2 database (temporary step)

Use the Database Migrator utility to move your data from OrientDB into the embedded H2 database.

Documentation: Migrating from OrientDB to H2 database

Upgrade to Nexus Repository Community Edition (≥ 3.77.0)

PostgreSQL support is available only in Community Edition starting with version 3.77.0.

Documentation: Community Edition Onboarding

Migrating from H2 database to PostgreSQL database

Once upgraded, use the Database Migrator utility again to move from H2 into PostgreSQL.

Documentation: Migrating from H2 database to PostgreSQL database

Important Notes:

- Migration is one‑way: once you move to PostgreSQL, you cannot revert back to OrientDB.

- Ensure you have backups before starting any migration.

- The migration process is managed by Sonatype’s Database Migrator utility and Qlik does not maintain or support this tool.

For issues with the migrator or Nexus Repository itself, contact Sonatype Support directly.

Cause

Nexus Repository OSS (3.70.4-02) does not support migration directly from OrientDB to PostgreSQL.

PostgreSQL support was introduced in the Community Edition starting with version 3.77.0.

Attempting to run the Database Migrator against OSS versions 3.70.4-02 results in the WrongNxrmEditionException.
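As a rough illustration of the version threshold described above, the sketch below compares a Nexus Repository version string against 3.77.0. It is a minimal example, not part of any Sonatype or Qlik tooling; the function names are hypothetical, and the check looks at the version number only, not at the installed edition.

```python
import re

# First version in which, per this article, Community Edition supports PostgreSQL.
POSTGRES_MIN_VERSION = (3, 77, 0)


def parse_version(version: str) -> tuple:
    """Extract (major, minor, patch) from strings such as '3.70.4-02'."""
    match = re.match(r"(\d+)\.(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"Unrecognized version string: {version!r}")
    return tuple(int(part) for part in match.groups())


def version_allows_postgresql(version: str) -> bool:
    """True if the version number is at or above the 3.77.0 threshold."""
    return parse_version(version) >= POSTGRES_MIN_VERSION


print(version_allows_postgresql("3.70.4-02"))
print(version_allows_postgresql("3.77.0"))
```

A version that fails this check needs the intermediate H2 step and the upgrade before the PostgreSQL migration can be attempted.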

Related Content

migrating-to-a-new-database | help.sonatype.com

Environment

-

SAML authentication works in Qlik Sense Enterprise on Windows even if the Token ...

The scenario: A Qlik Sense Enterprise on Windows environment is set up to use Azure SAML (AD FS) for authentication.

On the Azure side, the Token Signing Certificate embedded as the X509Certificate in the SAML IdP metadata within the Qlik virtual proxy configuration expired several weeks ago. A new certificate has not yet been issued.

It is still possible to log in to Qlik Sense Enterprise on Windows.

This behavior may raise the questions of:

- Is this a security concern?

- How long will the expired certificate last?

Resolution

In SAML, the IdP (Azure AD/AD FS) signs the assertion with its private key, and Qlik Sense validates it using the public key embedded in the IdP metadata (the X509Certificate).

The expiry date in the certificate is not actively checked by Qlik Sense during assertion validation.

Qlik only verifies that the signature matches the public key it has stored. So even if the certificate is expired, as long as the key pair hasn’t changed and the signature is valid, authentication succeeds. This is a common behavior and not specific to Qlik Sense.

Is this a security concern?

It is not considered a security issue. The expiry matters for trust and compliance, not for the cryptographic check Qlik performs.

When will the expiry become relevant?

- Azure rotates the signing certificate (such as after expiry or manual rollover). If you haven’t updated Qlik virtual proxy metadata with the new certificate, logins will fail because Qlik can’t validate the signature.

- If your organization enforces certificate validity checks at the proxy or via custom code (rare in standard Qlik deployments).

Do we need to update the certificate?

Even though Qlik doesn’t break immediately, we recommend updating the IdP metadata in Qlik Sense as soon as Azure issues a new signing certificate. This ensures future-proofing and compliance.
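The behavior described in this article can be modeled as two independent checks: whether the signature matches the stored public key, and whether the certificate is still within its validity period. The sketch below is a simplified model for illustration only; it is not Qlik Sense code, and the function name and the `enforce_validity_period` switch are hypothetical.

```python
def login_succeeds(signature_valid: bool,
                   certificate_expired: bool,
                   enforce_validity_period: bool = False) -> bool:
    """Simplified model of the validation outcome described above."""
    # The signature must always match the public key stored in the IdP metadata.
    if not signature_valid:
        return False
    # The expiry date only matters when something (for example a proxy or
    # custom code) explicitly enforces it.
    if enforce_validity_period and certificate_expired:
        return False
    return True


# Expired certificate, unchanged key pair, no expiry enforcement.
print(login_succeeds(signature_valid=True, certificate_expired=True))
```

In this model, a rotated signing certificate corresponds to `signature_valid=False`, which is the case where logins start to fail until the metadata is updated.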

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Talend Studio: Salesforce Connection Issue - SOAP API login() is disabled b...

While creating a metadata connection to Salesforce in Talend Studio, the connection test fails with the following error message:

"SOAP API login() is disabled by default in this org.

exceptionCode='INVALID_OPERATION'"

Resolution

To resolve this issue, a Salesforce administrator must explicitly enable SOAP API login access in the org before it can be used.

Even when enabled, login() remains unavailable in API version 65.0 and later.

Cause

This error occurs when Talend Studio attempts to authenticate with Salesforce using the SOAP API, but SOAP-based login access is disabled at the Salesforce organization level. As a result, Talend is unable to establish the metadata connection and retrieve Salesforce objects.

The issue is typically related to Salesforce security or API access settings, such as disabled SOAP API access.

Related Content

SOAP API login() Call is Disabled by Default in New Orgs | help.salesforce.com

Environment

-

ERR_SSL_KEY_USAGE_INCOMPATIBLE Error After Qlik Replicate Windows Patch/Upgrade

After upgrading from Windows 10 to Windows 11, or applying certain patches to Windows Server 2016, Qlik Replicate may not load in the browser.

The following error is displayed:

ERR_SSL_KEY_USAGE_INCOMPATIBLE

Data Integration Products November 2021.11 or lower require a manual generation of SSL certificates for use. See Step 5 (options one and two) in the solution provided below.

Environment

Qlik Replicate 2022.11 (may happen with other versions)

Qlik Compose 2022.5 (may happen with other versions)

Qlik Enterprise Manager 2022.11 (may happen with other versions)

Use of the self-signed SSL certificate

Windows 11

Windows Server 2016

Resolution

This issue is caused by the self-signed SSL certificate. Deleting the existing certificate and allowing Qlik Replicate/Compose/Enterprise Manager to regenerate it resolves the issue.

- Stop the services for all affected software. Example: AttunityReplicateUIConsole, Qlik Compose or Qlik Enterprise Manager.

- (This step only applies to Qlik Replicate) Rename the data folder under the SSL folder (\Program Files\Attunity\Replicate\data\ssl\data)

- Delete self-signed certificates in the management console

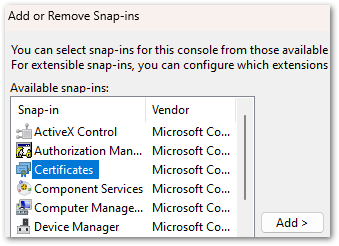

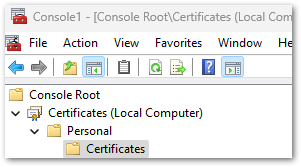

- Open a command prompt and enter MMC to open the console (on Windows Server, open "Manage computer certificates")

- Click the File drop-down menu and select Add/Remove snap-in

- Select Certificates and click Add

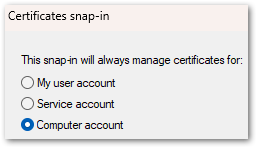

- Select Computer account. Click Next, then click Finish.

- Click OK to close the Add or Remove Snap-ins window

- Open Certificates and navigate to Local Computer, then Personal, then Certificates

- In the list of certificates shown, select the certificates where the "Issued To" value and the "Issued By" value equal your computer name and delete them.

- Close the Management Console.

- Next we will remove the reference to the self-signed certificate in Qlik Replicate:

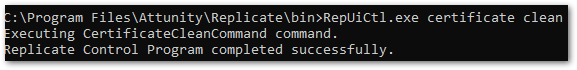

- Open a command prompt As Administrator

For Qlik Replicate:

Navigate to the ..\Attunity\Replicate\bin directory and then run the following command:

RepUiCtl.exe certificate clean

For Qlik Compose:

Navigate to the ..\Qlik\Compose\bin directory and then run the following command:

ComposeCtl.exe certificate clean

For Qlik Enterprise Manager:

Navigate to the ..\Attunity\Enterprise Manager\bin directory and then run the following command:

aemctl.exe certificate clean

- Run the command: netsh http delete sslcert ipport=0.0.0.0:443

Note that you may get a message that the command failed if no certificates are bound to the port. This message can be ignored.

- Open a command prompt As Administrator

- Add either a self-signed Certificate or a trusted and signed Organization Certificate.

Option One: Trusted and Signed Certificate. Generate an organization certificate that must be a correctly configured SSL PFX file containing both the private key and the certificate.

Option Two: Self-Signed Certificate. Generate a self-signed certificate on the server to replace the existing self-signed certificate. The provided PowerShell examples generate an SSL certificate for 10 years.

Run the following in PowerShell:

$cert = New-SelfSignedCertificate -certstorelocation cert:\localmachine\my -dnsname <FQDN> -NotAfter (Get-Date).AddYears(10)

- Once the certificates have been obtained or generated, follow the instructions for the individual product section on how to import a certificate:

- Start the services.

- You may need to clear the browser cache and cookies before you can access the web page outside of incognito mode.

Qlik Replicate will now allow you to open the user interface once again.

If users still cannot log in and are returned to the log in prompt at each attempt, see How to change the Qlik Replicate URL on a Windows host.

It is recommended to use your organization certificates
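For reference, the per-product cleanup commands from the steps above can be collected in a small lookup. This is only a convenience sketch that prints the commands; it does not run them. The directory paths are copied as they appear in this article, and the names `CLEAN_COMMANDS` and `cleanup_steps` are hypothetical.

```python
# Product name -> (bin directory as written in this article, cleanup command).
CLEAN_COMMANDS = {
    "Qlik Replicate": (r"..\Attunity\Replicate\bin",
                       "RepUiCtl.exe certificate clean"),
    "Qlik Compose": (r"..\Qlik\Compose\bin",
                     "ComposeCtl.exe certificate clean"),
    "Qlik Enterprise Manager": (r"..\Attunity\Enterprise Manager\bin",
                                "aemctl.exe certificate clean"),
}

# Removes the certificate binding on port 443; may report a failure
# if nothing is bound, which the article says can be ignored.
UNBIND_COMMAND = "netsh http delete sslcert ipport=0.0.0.0:443"


def cleanup_steps(product: str) -> list:
    """Return the commands to type in an elevated command prompt."""
    directory, command = CLEAN_COMMANDS[product]
    return [f'cd "{directory}"', command, UNBIND_COMMAND]


for step in cleanup_steps("Qlik Compose"):
    print(step)
```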

Related Content:

How to change the Qlik Replicate URL on a Windows host

-

Request license offline approval - April 2020 and onwards

Long term offline capability for the releases April 2020 and onwards requires a change to the license (addition of a further attribute), which can only be added after a special approval from the Customer Success Organization.

See below for how to submit the request for approval and proceed.

- Contact the Qlik Support team using Chat or the Qlik Support Portal.

- You will need to sign an offline addendum and share the signed addendum with Qlik Support. Choose between one of the two addenda attached to this article; one for banking and one for non-banking customers.

- Provide the License key that needs to be offline enabled, as well as the current Product version details, and the reason why the connection to Qlik's license back end (LBE) cannot be established.

- Request the support team to update the temporary offline feature (Delayed sync) and activate the product via an SLD key first, providing temporary offline access until the permanent offline feature is enabled. Review: How to activate Qlik Sense, QlikView, and Qlik NPrinting without Internet Access

- Once the license is processed and fulfilled for permanent offline activation, here is how the license activation process for long-term offline is done:

As part of the agreement for granting a customer offline license usage, the customer is required to regularly upload User Assignment log files. See Offline User Assignment logs.

This process is not required for OEM customers; OEM customers can contact support directly through chat or by creating a case using the Support portal to have the offline parameters enabled.

Related Content

Activate Qlik Products without Internet access - April 2020 and onwards

Long term offline use for Qlik Sense Signed Licenses

How to send User Assignment log files to Qlik? (Long term offline license activation)

Qlik Write Table FAQ

This document contains frequently asked questions for the Qlik Write Table. Content: Data and metadata. Q: What happens to changes after 90 days? Q: Whic...

Http listening port already in use by an external service in the system - Unable...

When installing Qlik Sense February 2022 and later, it is possible that you may run into the following error: This issue is caused when another servic...

Changing the IP address of the Qlik Sense host

Changing the IP address of the Qlik Sense host does not require as much consideration as changing the host name. Some external components might need to be taken into account though.

Using an IP address as the hostname while installing Sense is not recommended and can lead to problems with service communication. Refer to While accessing Hub receive error "The service did not respond or could not process the request" .

Environment: Qlik Sense Enterprise on Windows, all versions

Resolution:

Things to consider:

- In the PostgreSQL database, a listen_addresses value might have been set up during the installation. See PostgreSQL: postgres.conf and pg_hba.conf explained for details.

- Outside configuration steps might need to be taken, such as updating DNS records or firewall rules.

- SAP connector considerations: Ensure that the SAP server is contactable by the new Sense server IP address and the SAP server allows connections from the new Sense IP address.

- Other outside connectors may also operate based on IP allowlist.

Related content:

-

Qlik Replicate: Using bindDateAsBinary Leads to Data Inconsistencies

A Qlik Replicate task may fail or encounter a warning caused by Data Inconsistencies when using Oracle as a source.

Example Warning:

]W: Invalid timestamp value '0000-00-00 00:00:00.000000000' in table 'TABLE NAME' column 'COLUMN NAME'. The value will be set to default '1753-01-01 00:00:00.000000000' (ar_odbc_stmt.c:457)

]W: Invalid timestamp value '0000-00-00 00:00:00.000000000' in table 'CTABLE NAME' column 'COLUMN NAME'. The value will be set to default '1753-01-01 00:00:00.000000000' (ar_odbc_stmt.c:457)

Example Error:

]E: Failed (retcode -1) to execute statement: 'COPY INTO "********"."********" FROM @"SFAAP"."********"."ATTREP_IS_SFAAP_0bb05b1d_456c_44e3_8e29_c5b00018399a"/11/ files=('LOAD00000001.csv.gz')' [1022502] (ar_odbc_stmt.c:4888) 00023950: 2021-08-31T18:04:23 [TARGET_LOAD ]E: RetCode: SQL_ERROR SqlState: 22007 NativeError: 100035 Message: Timestamp '0000-00-00 00:00:00' is not recognized File '11/LOAD00000001.csv.gz', line 1, character 181 Row 1, column "********"["MAIL_DATE_DROP_DATE":15] If you would like to continue loading when an error is encountered, use other values such as 'SKIP_FILE' or 'CONTINUE' for the ON_ERROR option. For more information on loading options, please run 'info loading_data' in a SQL client. [1022502] (ar_odbc_stmt.c:4895)

Resolution

The bindDateAsBinary attribute must be removed or set on both endpoints.

Cause

The parameter bindDateAsBinary means the date column is captured in binary format instead of date format.

If bindDateAsBinary is not configured on both tasks, the logstream staging and replication task may not parse the record in the same way.
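The rule above (the attribute is either absent from both sides or set identically on both) can be expressed as a small consistency check. This is a hedged sketch: the dictionaries stand in for each side's internal parameters and are hypothetical, as is the function name; Qlik Replicate does not expose its settings in this form.

```python
def bind_date_settings_match(staging_params: dict,
                             replication_params: dict) -> bool:
    """True if bindDateAsBinary is missing on both sides or equal on both."""
    key = "bindDateAsBinary"
    # dict.get returns None when the attribute has been removed,
    # so "removed on both" also counts as a match.
    return staging_params.get(key) == replication_params.get(key)


# Attribute set only on the staging side: the two tasks may parse
# the same record differently.
print(bind_date_settings_match({"bindDateAsBinary": True}, {}))
```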

Environment

- Qlik Replicate

-

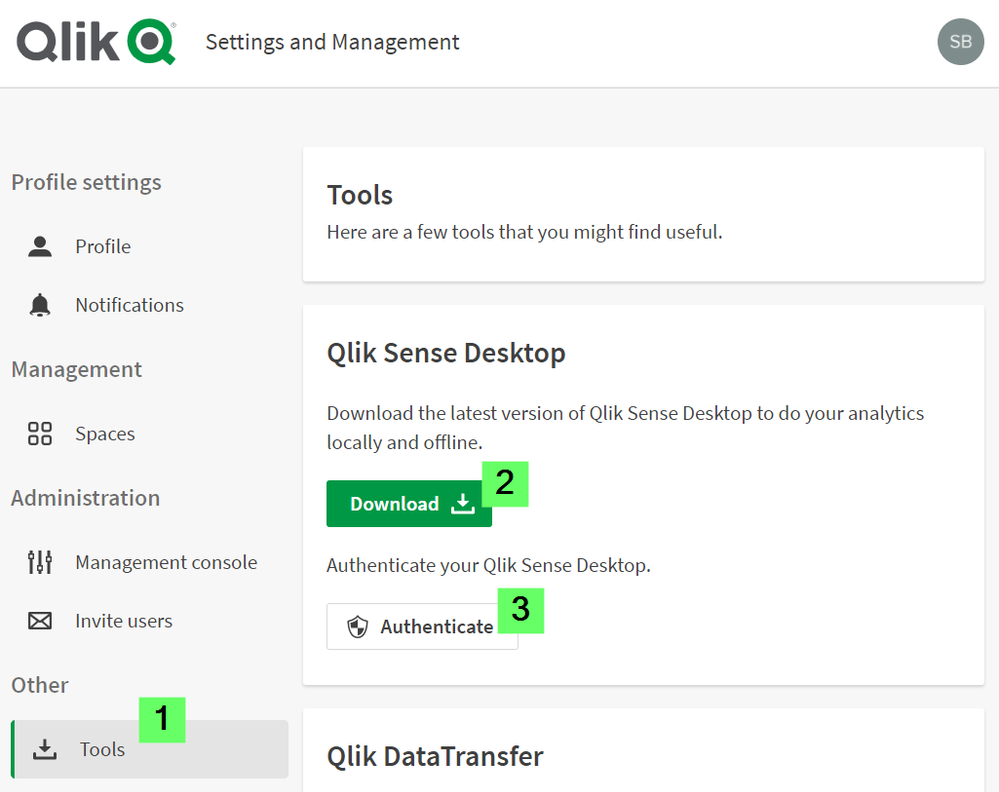

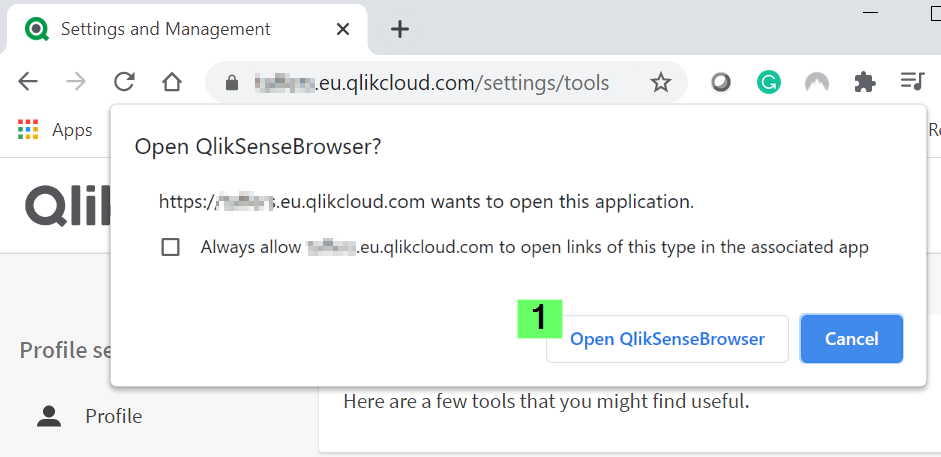

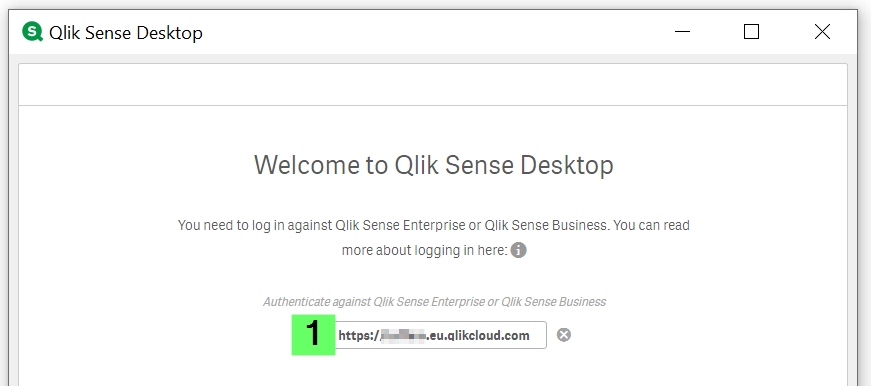

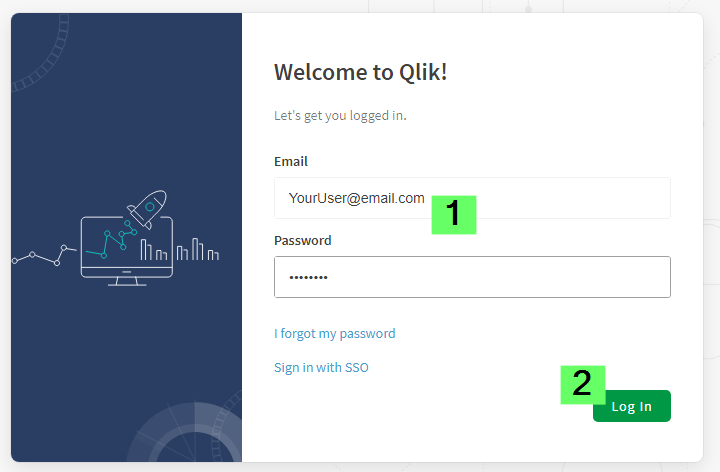

How to authenticate Qlik Sense Desktop against SaaS editions

From the June 2020 release, Qlik Sense Desktop can be authenticated against Qlik SaaS editions Qlik Sense Busines...

Environment

- Qlik Sense Desktop, June 2020

- Qlik Sense Business

- Qlik Sense Enterprise SaaS

-

Qlik Sense Enterprise on Windows May 2025: Slow Data Load Editor

The Data Load Editor in Qlik Sense Enterprise on Windows 2025 experiences noticeable performance issues.

Resolution

Fix Version

Qlik Sense May 2025 SR 6 and higher releases.

Workaround

A workaround is available. It is viable as long as the Qlik SAP Connector is not in use.

- In a Windows file browser, navigate to C:\Program Files\Common Files\Qlik\Custom Data\

- Move the QvSapConnectorPackage directory to a different location

No service restart is required.

Internal Investigation ID(s)

SUPPORT-6006

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Replicate: Dataset Unload Failure Due to LocalDate and String Type Mismatch...

Unloading data from a specific SAP Extractor may fail with the error:

[sourceunload ] [VERBOSE] [] Retrieved new data from Extractor 'ZDSGDZ037', Job 'GCDEX67T70NNYN27EH6U4AJ6BRTI4L' row '38932'

[sourceunload ] [VERBOSE] [] Failed to convert value at row 38933 for column 'DATE_FROM' of type 'DATS' value = '~{AQAAALKr+HRru6Zm/c2sIw6hKyA=}~'

[sourceunload ] [VERBOSE] [] value 'JAPAN' replaced by the invalid date placeholder '1970-01-01'

[sourceunload ] [VERBOSE] [] Retrieved new data from Extractor 'ZDSGDZ037', Job 'GCDEX67T70NNYN27EH6U4AJ6BRTI4L' row '38933'

[sourceunload ] [TRACE ] [] Removing existing extraction job for 'ZDSGDZ037' while aborting load data

[sourceunload ] [ERROR ] [] An error occurred unloading dataset: .ZDSGDZ037

Resolution

The issue has been resolved by addressing the problematic field in the SAP extractor ZDSGDZ037.

The DATE_FROM field has a short description of “Valid-from date – in current release only 00010101 possible”, and it was determined that this specific SAP date format is not supported by Qlik Replicate.

Change it to a valid value or exclude the DATE_FROM field if it's not necessary.

Cause

A data issue in the SAP extractor caused the failure.

Up to row 38932, all values were processed normally. The failure started at row 38933, where the following field could not be parsed as a valid SAP date:

- Column: DATE_FROM

- Type: DATS

- Value: ~{AQAAALKr+HRru6Zm/c2sIw6hKyA=}~

Qlik Replicate attempted to replace the invalid value with the fallback placeholder 1970-01-01, but the replacement failed with a ClassCastException: the program expected the value to be a String but received a LocalDate object instead.

The logs show the replaced value "JAPAN" being interpreted as an invalid date, which further confirms that the field contains non-date strings inside a DATS-type SAP field.

This indicates a data format inconsistency in the SAP source extractor, where a date field is populated with non-date content.
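To find such rows before running the task, the values of a DATS column can be checked for the eight-digit YYYYMMDD layout. The sketch below is a generic calendar check written for illustration; it does not reproduce Qlik Replicate's own parsing rules, so a value that passes here (for example a very early date) may still be unsupported by Replicate. The function name is hypothetical.

```python
from datetime import datetime


def is_calendar_dats(value: str) -> bool:
    """True if value is eight ASCII digits forming a real YYYYMMDD date."""
    if len(value) != 8 or not (value.isascii() and value.isdigit()):
        return False
    try:
        datetime.strptime(value, "%Y%m%d")
    except ValueError:
        # Digits only, but not a real calendar date (for example 20240230).
        return False
    return True


for candidate in ("20240131", "JAPAN", "20240230"):
    print(candidate, is_calendar_dats(candidate))
```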

Environment

- Qlik Replicate

-

Qlik Replicate task failure caused by future-dated LogStream data

Changing the system time to a future date while a LogStream task is running leads to task errors.

Example errors:

Staging task

[TASK_MANAGER ]I: Task 'staging task' running CDC only in fresh start mode

[TARGET_APPLY ]I: Timeline is 20251211134943253089

[TARGET_LOAD ]I: Timeline is 20251231100205831444

Replication task

[TASK_MANAGER ]I: Task 'replication task' running full load and CDC in fresh start mode

[STREAM_COMPONEN ]I: Timeline is 20251212174220223963 (ar_cdc_channel.c:552)

[SERVER ]I: The process stopped (server.c:2589)

[TASK_MANAGER ]W: Task 'replication task' encountered a recoverable error

[SERVER ]I: Stop server request received internally

Resolution

The creation of LogStream data with a future date is caused by manual intervention. This scenario is outside the scope of Qlik Replicate’s intended test scenarios and is not covered under support.

The best action would be to reload the parent staging tasks and reload the child replication task.

Cause

After the system time was changed to a future date and the task was executed, a future-dated directory was created. When the system time was restored and the task was reloaded, the future-dated directory remained and appeared to have caused the replication task error.
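The Timeline values in the example logs look like a timestamp written as YYYYMMDDhhmmss followed by six fractional digits. That layout is an assumption made for this sketch, not something documented here. Under that assumption, a future-dated timeline can be spotted by comparing it with a reference time; both function names are hypothetical.

```python
from datetime import datetime


def parse_timeline(value: str) -> datetime:
    """Parse a 20-digit Timeline value (assumed layout, see lead-in)."""
    return datetime.strptime(value, "%Y%m%d%H%M%S%f")


def is_future_dated(value: str, reference: datetime) -> bool:
    """True if the timeline lies after the given reference time."""
    return parse_timeline(value) > reference


# Compare the TARGET_LOAD timeline from the staging task log
# with an example reference time.
reference = datetime(2025, 12, 12, 18, 0, 0)
print(is_future_dated("20251231100205831444", reference))
```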

Environment

- Qlik Replicate

-

Qlik Replicate: Task fails with error Alternate backup file XXX does not exists

A Qlik Replicate task fails with the error:

Alternate backup file XXX does not exist

Resolution

This is frequently caused by the Qlik Replicate server and one of its endpoints running in a different time zone than the one used when the backup logs are written.

Example:

The SQL Server and Qlik Replicate server are on PST hours, while the rebuic backup logs are logged using UTC. This leads to backup errors.

Align the backup file time zone to resolve the error.
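To see why the mismatch matters, the sketch below converts a PST wall-clock time to UTC. It assumes that the time written for a backup is compared across the two zones, which is an illustration of the example above rather than a description of Replicate internals. A fixed UTC-8 offset is used for simplicity, so daylight saving time is ignored.

```python
from datetime import datetime, timedelta, timezone

# Fixed offset for Pacific Standard Time (no daylight saving handling).
PST = timezone(timedelta(hours=-8), "PST")


def pst_to_utc(local_wall_time: datetime) -> datetime:
    """Interpret a naive datetime as PST and convert it to UTC."""
    return local_wall_time.replace(tzinfo=PST).astimezone(timezone.utc)


# 20:00 on 10 January in PST is already 11 January in UTC.
print(pst_to_utc(datetime(2025, 1, 10, 20, 0)))
```

The same instant carries a different clock time, and sometimes a different date, in each zone, which is why the time zones need to be aligned.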

Environment

- Qlik Replicate

-

How to automatically make multiple selections on sheet open with Qlik Analytics

Qlik allows you to automatically make multiple selections when opening an app sheet. This is configured in the Sheet Properties using an Action:

- Open the Sheet you want to configure with an action

- Click Edit Sheet to enter edit mode

- Click Properties

- Expand Actions and click Add action

If Properties does not show the Actions tab, but instead lists Chart suggestions and other data display options, deselect the currently selected chart.

- Click the drop-down arrow and choose Select values in a field

- In the Field input, either search for or select the field from the drop-down

- In the Value input, enter the values you want to select, separating them with semicolons (example: A;B or value1;value2)

- Exit the Edit Sheet mode; this saves your changes

The defined selections will now apply whenever the sheet is opened.
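If the list of values comes from another system, the semicolon-separated string for the Value input can be built programmatically. This is a minimal helper for illustration; the function name is hypothetical, and rejecting values that contain a semicolon is a precaution chosen for this sketch.

```python
def build_value_input(values: list) -> str:
    """Join field values into the semicolon-separated Value input string."""
    cleaned = [value.strip() for value in values if value.strip()]
    for value in cleaned:
        # A semicolon inside a value would be read as a separator.
        if ";" in value:
            raise ValueError(f"Value contains a semicolon: {value!r}")
    return ";".join(cleaned)


print(build_value_input(["A", " B "]))
```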

Environment

- Qlik Sense Enterprise on Windows

- Qlik Cloud

-

Minimal rights for Oracle Source replication

This can serve as a more curated list of minimal permissions required for an Oracle source to work with Replicate. SELECT ANY TRANSACTION, SELECT on V_$A...

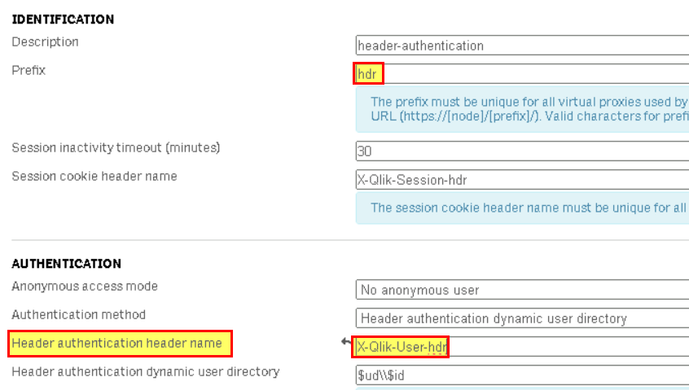

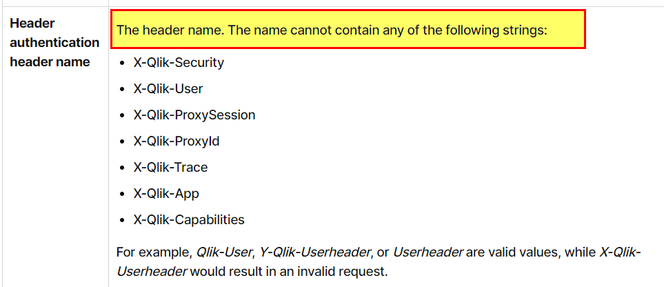

QS Header Authentication: Error when using “X-Qlik-User-hdr" as "header authenti...

When using the "Header authentication" method, after upgrading to the latest Qlik Sense Enterprise version or patch, you may encounter an error like "400 bad request Invalid header in the request".

From the image above, notice that the request header is “X-Qlik-User-hdr = Domain\administrator" (in this example). Meaning that, in Qlik Sense virtual proxy settings, the "header authentication header name” was set to “X-Qlik-User-hdr".

Resolution

This is working as designed (WAD).

R&D confirmed that a security fix made in August-September 2023 disallows header authentication with header names that include "X-Qlik-User" in the "header authentication header name" setting.

Thus, if the "Header Authentication" setting was working before the upgrade and then the error "400 bad request Invalid header in the request" occurs after upgrading to latest version of Qlik Sense Enterprise or after installing a patch, please ensure that in the related virtual proxy, "header authentication header name” is not set to something like "X-Qlik-User-*" (Check for example QS Feb 2024 header name restrictions).
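A quick way to review existing virtual proxy settings is to check each configured header name for the restricted fragment. The sketch below is only an illustration of the rule described above, not the proxy's actual validation logic; the function name is hypothetical, and the case-insensitive comparison is an assumption based on HTTP header names being case-insensitive.

```python
RESTRICTED_FRAGMENT = "X-Qlik-User"


def header_name_allowed(header_name: str) -> bool:
    """True if the name does not contain the restricted fragment."""
    # Case-insensitive comparison is an assumption of this sketch.
    return RESTRICTED_FRAGMENT.lower() not in header_name.lower()


for name in ("X-Qlik-User-hdr", "hdr-user"):
    print(name, header_name_allowed(name))
```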

Information provided on this defect is given as is at the time of documenting. For up to date information, please review the most recent Release Notes, or contact support with the ID QB-25945 or QB-21731 for reference.

Cause

Product Defect ID: QB-25945, QB-21731 and HLP-15641

Environment

- Qlik Sense Enterprise on Windows

-

Qlik Talend Cloud Release R2025-11 new Firewall Allow list requirement due to st...

The Talend Management Console Release Notes R2025-11 (Qlik Talend Documentation) announce a new storage service, which is not yet available for the EU and US regions.

"New storage service endpoint for unified access to artifacts and execution logs: This storage service is planned to be deployed to AWS EU and AWS US in January 2026. Update your proxy and firewall settings when it is available."

Questions and Answers

- Is this addition to the firewall allowlist optional or is it mandatory?

- This change is mandatory for customers using firewall rules: you must add the new endpoint to the allowlist. Without this adjustment, you will face multiple issues when running Jobs, uploading logs, and so on.

- Will our Jobs and artifacts still be executable even if we do not make this adjustment?

- The new URL must be added to the firewall allowlist to ensure that tasks run. If firewall or proxy rules block connections to or from the service, task executions on Remote Engines and the publishing of new artifacts will run into multiple issues.

- When will the update be released for EU region? The notes only say “January 2026.”

- The switch from MinIO to the new storage endpoints on the EU and US stacks will be completed by January 14th, so the firewall allowlist addition must be in place before this date.

- The allowlist contains URLs, but we would like to add IPs rather than URLs to our proxy and firewall allowlist. Where are these documented?

- We can only provide DNS names, because IPs can change. It is common for cloud providers such as Amazon or Azure to change host IPs dynamically when necessary, so DNS is the best basis for such configurations.
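Because the allowlist is DNS-based, it can help to reduce the documented URLs to their hostnames before handing them to a firewall team. The sketch below uses Python's standard `urllib.parse` for this. The URLs are placeholders under `example.com`, not the real Qlik Talend Cloud endpoints, and the function name is made up for this example.

```python
from urllib.parse import urlsplit


def allowlist_hostnames(urls):
    """Return the unique DNS hostnames from a list of URLs, in first-seen order."""
    seen = []
    for url in urls:
        host = urlsplit(url).hostname  # lower-cased host without port, or None
        if host and host not in seen:
            seen.append(host)
    return seen


# Placeholder URLs: take the real endpoints from the Qlik Talend documentation.
example_urls = [
    "https://storage.example.com/artifacts",
    "https://storage.example.com:443/logs",
    "https://api.example.com/",
]
print(allowlist_hostnames(example_urls))
# → ['storage.example.com', 'api.example.com']
```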

Internal Investigation ID(s)

Support-7691

Environment


-

Qlik Talend Administration Center (TAC) License Expiration Warning Message

The Talend Administration Center (TAC) displays the following warning:

One of your licenses will expire in X days. Go to the license page and update your license file.

This message is a license expiration warning, generated when at least one installed license is approaching its expiration date. It is first displayed at the 20-day mark.

Does this warning impact platform functionality?

The warning is informational only and is displayed regardless of whether:

- The expiring license is currently in use, or

- Other valid licenses remain active in the environment.

It has no impact on functionality, such as:

- Job execution

- Qlik Talend Studio availability

- Qlik Talend Studio usage

- Runtime operations

The platform will continue to operate as expected as long as at least one valid active license covers your required features.
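The relationship between the warning and platform availability can be sketched in a few lines of Python. This is an illustrative model only, not how TAC is implemented. It assumes a license is valid through its expiry date and ignores which features each license covers. All names are hypothetical.

```python
from datetime import date

WARNING_WINDOW_DAYS = 20  # from the article: the warning first displays at the 20-day mark


def days_until(expiry: date, today: date) -> int:
    """Number of whole days from today until the expiry date."""
    return (expiry - today).days


def warning_shown(expiry_dates, today):
    """True if at least one unexpired license is within the warning window."""
    return any(0 <= days_until(d, today) <= WARNING_WINDOW_DAYS for d in expiry_dates)


def has_valid_license(expiry_dates, today):
    """True if at least one license has not expired (assumed valid through its expiry date)."""
    return any(days_until(d, today) >= 0 for d in expiry_dates)


today = date(2025, 1, 1)
licenses = [date(2025, 1, 15), date(2026, 1, 1)]  # made-up expiry dates
print(warning_shown(licenses, today), has_valid_license(licenses, today))
# → True True
```

In this example the warning is displayed because one license expires in 14 days, yet a second license remains valid, which corresponds to the case where the platform keeps operating.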

Steps to address the warning

Scenario One: Only One License Exists

If the environment has only one license and it is approaching expiration:

- Renew the license before its expiration date

- Upload the new license file to the Qlik Talend Administration Center once you receive it

Scenario Two: Multiple Licenses Exist

As long as there are other active and valid licenses, the warning can be safely ignored.

While no immediate action is required, you may choose to deactivate the expiring license if it is no longer needed. This will remove the warning message.

To deactivate the license:

- Log in to the Qlik Talend Administration Center (TAC)

- Navigate to Settings

- Go to Licenses

- Right-click on the license approaching expiration

- Select Deactivate

Only deactivate your license if it is no longer required.

Recommendations

- Review active licenses periodically

- Ensure at least one valid license covers your required Qlik Talend features

- Contact your Qlik account representative for license renewal if needed

Environment

- Qlik Talend Administration Center

-

Qlik Talend Studio: Uncommitted files exist, need to commit or clear them first

When attempting to open a remote project in Talend Studio, you may encounter the following error, even if no modifications have been made to the code.

org.talend.commons.exception.PersistenceException: java.lang.Exception: Uncommitted files exist, need to commit or clear them first.

at org.talend.repository.gitprovider.core.GitBaseRepositoryFactory.initProjectRepository(GitBaseRepositoryFactory.java:276)

at org.talend.repository.remoteprovider.RemoteRepositoryFactory.initProjectRepository(RemoteRepositoryFactory.java:1722)

Resolution

a) To clear the Talend Studio cache, follow these steps:

- Stop Talend Studio.

- Clear the Talend Studio cache by deleting the org.eclipse.core.runtime and org.eclipse.equinox folders located in Talend Studio/configuration/ (Note: create a backup before deleting these folders.)

- Restart Talend Studio.
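The backup-and-delete step can be scripted. The Python sketch below moves the two named folders into a timestamped backup directory, which backs them up and removes them from the configuration folder in one operation. It is a minimal sketch, assuming the folder names match exactly as written in the article. The function name and the backup directory naming are choices made for this example. Run it only while Talend Studio is stopped.

```python
import shutil
import time
from pathlib import Path

# Folder names as given in the article; only exact matches are touched.
CACHE_DIR_NAMES = ("org.eclipse.core.runtime", "org.eclipse.equinox")


def move_cache_dirs(configuration_dir, backup_root):
    """Move the cache folders out of configuration_dir into a timestamped backup.

    Returns the backup directory path and the list of folder names moved.
    """
    configuration_dir = Path(configuration_dir)
    stamp = time.strftime("%Y%m%d-%H%M%S")
    backup_dir = Path(backup_root) / f"studio-cache-backup-{stamp}"
    moved = []
    for name in CACHE_DIR_NAMES:
        source = configuration_dir / name
        if source.is_dir():
            backup_dir.mkdir(parents=True, exist_ok=True)
            shutil.move(str(source), str(backup_dir / name))
            moved.append(name)
    return backup_dir, moved
```

A call might look like `move_cache_dirs("/path/to/Talend-Studio/configuration", "/path/to/backups")`, where both paths are placeholders for your own installation and backup location.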

b) Alternatively, you can log in to Talend Studio by creating a new workspace.

Cause

This error appears to stem from inconsistencies in the Git Repository that require cleanup prior to launching Talend Studio.

Environment