
Featured Content

-

How to contact Qlik Support

Qlik offers a wide range of channels to assist you in troubleshooting, answering frequently asked questions, and getting in touch with our technical experts. In this article, we guide you through all available avenues to secure your best possible experience.

For details on our terms and conditions, review the Qlik Support Policy.

Index:

- Support and Professional Services; who to contact when.

- Qlik Support: How to access the support you need

- 1. Qlik Community, Forums & Knowledge Base

- The Knowledge Base

- Blogs

- Our Support programs:

- The Qlik Forums

- Ideation

- How to create a Qlik ID

- 2. Chat

- 3. Qlik Support Case Portal

- Escalate a Support Case

- Phone Numbers

- Resources

Support and Professional Services; who to contact when.

We're happy to help! Here's a breakdown of resources for each type of need.

Support

Reactively fixes technical issues as well as answers narrowly defined specific questions. Handles administrative issues to keep the product up-to-date and functioning.

- Error messages

- Task crashes

- Latency issues (due to errors or 1-1 mode)

- Performance degradation without config changes

- Specific questions

- Licensing requests

- Bug Report / Hotfixes

- Not functioning as designed or documented

- Software regression

Professional Services (*)

Proactively accelerates projects, reduces risk, and achieves optimal configurations. Delivers expert help for training, planning, implementation, and performance improvement.

- Deployment Implementation

- Setting up new endpoints

- Performance Tuning

- Architecture design or optimization

- Automation

- Customization

- Environment Migration

- Health Check

- New functionality walkthrough

- Realtime upgrade assistance

(*) reach out to your Account Manager or Customer Success Manager

Qlik Support: How to access the support you need

1. Qlik Community, Forums & Knowledge Base

Your first line of support: https://community.qlik.com/

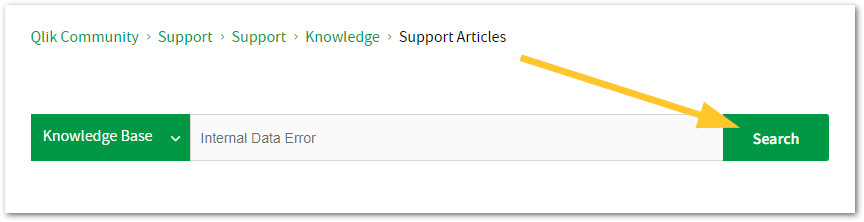

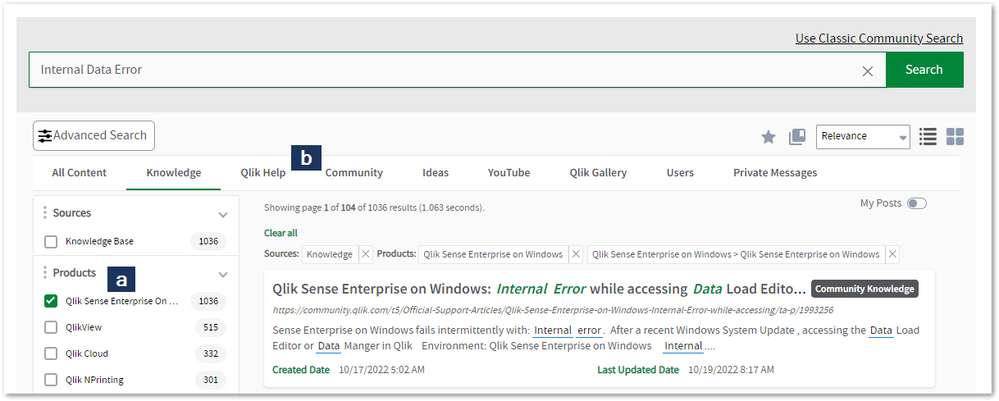

Looking for content? Type your question into our global search bar:

The Knowledge Base

Leverage the enhanced and continuously updated Knowledge Base to find solutions to your questions and best practice guides. Bookmark this page for quick access!

- Go to the Official Support Articles Knowledge base

- Type your question into our Search Engine

- Need more filters?

- Filter by Product

- Or switch tabs to browse content in the global community, on our Help Site, or even on our Youtube channel

Blogs

Subscribe to maximize your Qlik experience!

The Support Updates Blog

The Support Updates blog delivers important and useful Qlik Support information about end-of-product support, new service releases, and general support topics.

The Qlik Design Blog

The Design blog is all about product and Qlik solutions, such as scripting, data modelling, visual design, extensions, best practices, and more!

The Product Innovation Blog

By reading the Product Innovation blog, you will learn about what's new across all of the products in our growing Qlik product portfolio.

Our Support programs:

Q&A with Qlik

Live sessions with Qlik Experts in which we focus on your questions.

Techspert Talks

Techspert Talks is a free monthly webinar to facilitate knowledge sharing.

Technical Adoption Workshops

Our in-depth, hands-on workshops allow new Qlik Cloud Admins to build alongside Qlik Experts.

Qlik Fix

Qlik Fix is a series of short videos with helpful solutions for Qlik customers and partners.

The Qlik Forums

- Quick, convenient, 24/7 availability

- Monitored by Qlik Experts

- New releases publicly announced within Qlik Community forums (click)

- Local language groups available (click)

Ideation

Suggest an idea, and influence the next generation of Qlik features!

Search & Submit Ideas

Ideation Guidelines

How to create a Qlik ID

Get the full value of the community.

Register a Qlik ID:

- Go to register.myqlik.qlik.com

If you already have an account, please see How To Reset The Password of a Qlik Account for help using your existing account.

- You must enter your company name exactly as it appears on your license, or there will be significant delays in getting access.

- You will receive a system-generated email with an activation link for your new account. Note: this link expires after 24 hours.

If you need additional details, see: Additional guidance on registering for a Qlik account

If you encounter problems with your Qlik ID, contact us through Live Chat!

2. Chat

Incidents are supported through our Chat, by clicking Chat Now on any Support Page across Qlik Community.

To raise a new issue, all you need to do is chat with us. With this, we can:

- Answer common questions instantly through our chatbot

- Have a live agent troubleshoot in real time

- Create a case on your behalf, with step-by-step intake questions, for items that need further investigation.

3. Qlik Support Case Portal

Log in to manage and track your active cases in the Case Portal. (click)

Please note: to create a new case, it is easiest to do so via our chat (see above). Our chat will log your case through a series of guided intake questions.

Your advantages:

- Self-service access to all incidents so that you can track progress

- Option to upload documentation and troubleshooting files

- Option to include additional stakeholders and watchers to view active cases

- Follow-up conversations

When creating a case, you will be prompted to enter problem type and issue level. Definitions shared below:

Problem Type

Select Account Related for issues with your account, licenses, downloads, or payment.

Select Product Related for technical issues with Qlik products and platforms.

Priority

If your issue is account related, you will be asked to select a Priority level:

Select Medium/Low if the system is accessible, but there are some functional limitations that are not critical in the daily operation.

Select High if there are significant impacts on normal work or performance.

Select Urgent if there are major impacts on business-critical work or performance.

Severity

If your issue is product related, you will be asked to select a Severity level:

Severity 1: Qlik production software is down or not available, but not because of scheduled maintenance and/or upgrades.

Severity 2: Major functionality is not working in accordance with the technical specifications in documentation or significant performance degradation is experienced so that critical business operations cannot be performed.

Severity 3: Any error that is not Severity 1 Error or Severity 2 Issue. For more information, visit our Qlik Support Policy.

Escalate a Support Case

If you require a support case escalation, you have two options:

- Request to escalate within the case, mentioning the business reasons.

To escalate a support incident successfully, mention your intention to escalate in the open support case. This will begin the escalation process.

- Contact your Regional Support Manager

If more attention is required, contact your regional support manager. You can find a full list of regional support managers in the How to escalate a support case article.

Phone Numbers

When other Support Channels are down for maintenance, please contact us via phone for high severity production-down concerns.

- Qlik Data Analytics: 1-877-754-5843

- Qlik Data Integration: 1-781-730-4060

- Talend AMER Region: 1-800-810-3065

- Talend UK Region: 44-800-098-8473

- Talend APAC Region: 65-800-492-2269

Resources

A collection of useful links.

Qlik Cloud Status Page

Keep up to date with Qlik Cloud's status.

Support Policy

Review our Service Level Agreements and License Agreements.

Live Chat and Case Portal

Your one stop to contact us.

Recent Documents

-

Qlik Sense Enterprise on Windows and Node.js

Node.js comes bundled with Qlik Sense Enterprise on Windows. Its version depends on the Qlik Sense release currently installed.

How do I know which version of node.js is used by Qlik Sense?

You can verify the version of any of the third-party integrations Qlik Sense makes use of by:

- Using the Qlik Sense API and calling About Service API: thirdParty: Get, which returns details including information about copyright, version, licensing, and legal notices.

- Accessing the below URL in a browser, where qlikserver.domain.local is replaced with your Qlik Sense server hostname:

https://qlikserver.domain.local/api/about/v1/thirdParty
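The same check can be scripted. The following is a minimal Python sketch, not an official Qlik client: the function names are hypothetical, the response is assumed to be JSON, and authentication is not handled (depending on your virtual proxy, an unauthenticated request may be redirected to a login page).

```python
import json
import ssl
import urllib.request


def third_party_url(hostname: str) -> str:
    """Build the About Service URL quoted above for a given Qlik Sense server."""
    return f"https://{hostname}/api/about/v1/thirdParty"


def fetch_third_party(hostname: str, verify_tls: bool = True):
    """Fetch and decode the thirdParty listing (assumes a JSON response body).

    No authentication is performed here; that part is left to the caller.
    """
    context = ssl.create_default_context()
    if not verify_tls:
        # Only sensible for lab servers that use self-signed certificates.
        context.check_hostname = False
        context.verify_mode = ssl.CERT_NONE
    with urllib.request.urlopen(third_party_url(hostname), context=context) as resp:
        return json.load(resp)
```

Calling `fetch_third_party("qlikserver.domain.local")` from a machine that can reach the server would return the decoded listing, in which the node.js entry can be looked up.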

How do I upgrade the version of node.js used by Qlik Sense?

You may want to upgrade node.js, specifically in response to a security vulnerability. To do so, upgrade Qlik Sense Enterprise on Windows. When upgrading Qlik Sense, the currently installed node.exe is replaced with the version bundled with the new release.

Can I install or upgrade a separately installed instance of node.js?

Installing Qlik Sense installs node.exe side-by-side in the following location: C:\Program Files\Qlik\Sense\ServiceDispatcher\Node.

If you install node.js manually it will typically be installed in C:\Program Files\nodejs and the Windows environment variable will point to this location by default (i.e. running node -v to get the version will result in providing the version of node found in C:\Program Files\nodejs).

As Qlik Sense will not register any Windows environment variable for node.js, it will not tamper with any settings affecting already installed node.js instances. Therefore it is safe to upgrade your separate instance of Node.js.

Environment

- Qlik Sense Enterprise all versions

Related Content

Third-party and middleware software integrated with Qlik Sense Enterprise on Windows

-

Qlik Direct Access Gateway (DAG) shown as disconnected while server time is unal...

Qlik Direct Access Gateway (DAG) is shown as disconnected.

The Windows server's time lags behind the Qlik Cloud tenant's timezone.

Resolution

Address the time difference by aligning the Windows server's clock. If necessary, align it to a standard NTP service.

Cause

Misaligned time. Without alignment, Qlik Direct Access Gateway will disconnect.
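To see how far a server's clock has drifted before realigning it, the offset against an NTP server can be measured. The sketch below is a bare-bones SNTP query using only the Python standard library; the server name `pool.ntp.org` and all function names are assumptions for illustration, and the article does not state how much drift the gateway tolerates.

```python
import socket
import struct
import time

NTP_EPOCH_OFFSET = 2208988800  # seconds between 1900-01-01 and 1970-01-01


def ntp_to_unix(seconds: int, fraction: int) -> float:
    """Convert a 64-bit NTP timestamp (two 32-bit words) to Unix time."""
    return seconds - NTP_EPOCH_OFFSET + fraction / 2**32


def clock_offset(t1: float, t2: float, t3: float, t4: float) -> float:
    """Standard NTP offset: t1 client send, t2 server receive,
    t3 server transmit, t4 client receive. Positive means the local
    clock is behind the server."""
    return ((t2 - t1) + (t3 - t4)) / 2


def query_offset(server: str = "pool.ntp.org", timeout: float = 5.0) -> float:
    """Send one SNTP request and return the estimated local clock offset in seconds."""
    packet = b"\x1b" + 47 * b"\0"  # LI=0, VN=3, Mode=3 (client)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        t1 = time.time()
        sock.sendto(packet, (server, 123))
        data, _ = sock.recvfrom(512)
        t4 = time.time()
    words = struct.unpack("!12I", data[:48])
    t2 = ntp_to_unix(words[8], words[9])    # receive timestamp
    t3 = ntp_to_unix(words[10], words[11])  # transmit timestamp
    return clock_offset(t1, t2, t3, t4)
```

Running `query_offset()` on the Windows server hosting the gateway gives a quick indication of whether the clock is lagging.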

Environment

- Qlik Direct Access Gateway

- Qlik Cloud Analytics

-

Qlik Sense Enterprise on Windows and changing the Active Directory Domain name

In this article, we detail the 12 steps necessary to successfully configure Qlik Sense Enterprise on Windows after an Active Directory Domain name change or moving Qlik Sense to a new domain.

Depending on how you have configured your platform, not all the steps might be applicable. Before updating any hostname in Qlik Sense, make sure that the original value is using the old domain name. In some cases, you may have configured “localhost” or the server name without any reference to the domain, so there is no need to perform any update.

Environment

Scenario

In this scenario we are updating a three-node environment running Qlik Sense February 2021:

The domain has been changed from DOMAIN.local to DOMAIN2.local

The servers mentioned below are already part of the new domain name DOMAIN2.local and their Fully Qualified Domain Name (FQDN) is already updated as follows:

- QlikServer1.domain.local to QlikServer1.domain2.local: Central node

- QlikServer2.domain.local to QlikServer2.domain2.local: Rim node

- QlikServer3.domain.local to QlikServer3.domain2.local: Dedicated Postgres and hosting the Qlik shared folder

All Qlik Sense services have been stopped on every node.

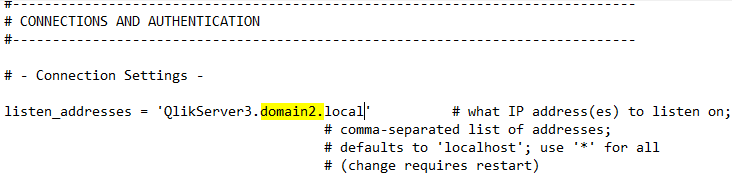

Step 1: Update the Postgres Configuration File

The first step is to update the postgresql.conf file to listen on the correct hostname

- Open postgresql.conf (make a copy first) under

- C:\ProgramData\Qlik\Sense\Repository\PostgreSQL\<version> (for an environment running the native Qlik Sense Repository Database)

- C:\Program Files\PostgreSQL\<version>\data (for an environment running a dedicated PostgreSQL database)

- Search for the parameter listen_addresses and update the hostname value if required

- Save the file, close it, and start the Qlik Sense Repository Database or PostgreSQL service, depending on whether you are using the native PostgreSQL or a dedicated instance
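Whether the listen_addresses value still references the old domain can be checked with a short script before editing. This is a sketch under stated assumptions: the helper names are hypothetical, and the value is assumed to be a single-quoted, comma-separated list on one line.

```python
import re


def listen_addresses(conf_text: str) -> list:
    """Return the hosts from the last active listen_addresses line, or [] if unset."""
    hosts = []
    for line in conf_text.splitlines():
        active = line.split("#", 1)[0].strip()  # ignore comments
        match = re.match(r"listen_addresses\s*=\s*'([^']*)'", active)
        if match:
            # A later occurrence overrides an earlier one.
            hosts = [h.strip() for h in match.group(1).split(",") if h.strip()]
    return hosts


def hosts_needing_update(conf_text: str, old_domain: str) -> list:
    """List the configured hosts that still end with the old domain name."""
    suffix = "." + old_domain.lower()
    return [h for h in listen_addresses(conf_text) if h.lower().endswith(suffix)]
```

For example, with the scenario's names, a line such as `listen_addresses = 'QlikServer3.domain.local'` would be reported for `old_domain="domain.local"`, while `'*'` or `'localhost'` would not.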

Step 2: Backup your Qlik Sense site

Before doing any further changes, it is important to take a backup of your Qlik Sense Platform

Step 3: Rename Server Node in QSR database

Now we update the node names in the database with the new Fully Qualified Domain Name.

- Connect to the Qlik Sense database with PGAdmin (see Installing and Configuring PGAdmin4 to access the PostgreSQL)

- Open the QSR Database and navigate through Schemas -> public ->Tables

- Right-click on ServerNodeConfiguration -> View/Edit Data -> All Rows

- Modify the hostname of each node if required

- Save by clicking on the below icon available in the toolbar

- Close PGAdmin

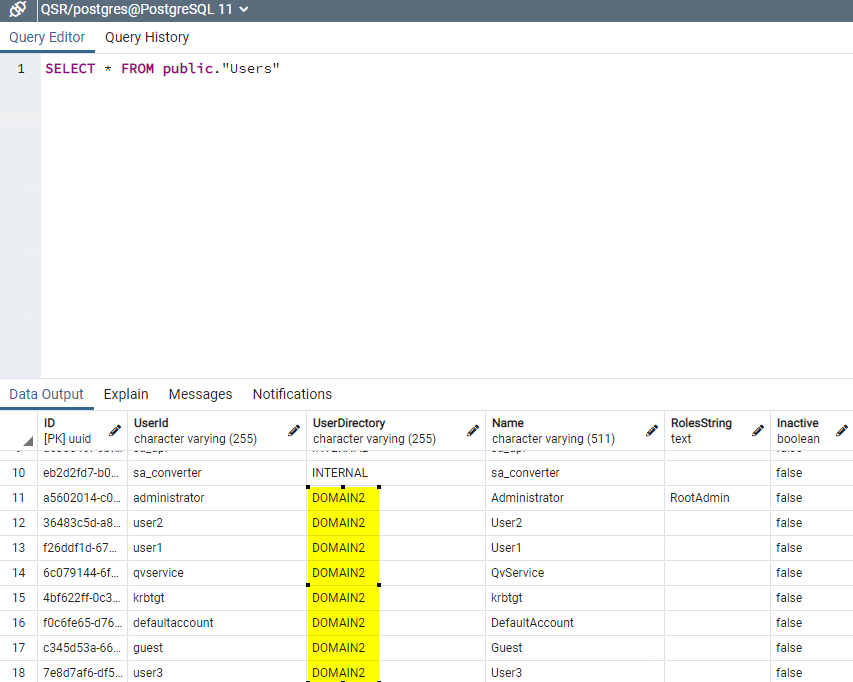

Step 4: Rename User Directory for existing users

- Connect to the Qlik Sense database with PGAdmin (see Installing and Configuring PGAdmin4 to access the PostgreSQL)

- Right-click on the QSR Database and select “Query Tool”

- Run the following query

UPDATE public."Users" SET "UserDirectory" = 'DOMAIN2' WHERE "UserDirectory" = 'DOMAIN';

- Validate the change by running the following query

SELECT * FROM public."Users"

The User Directory should be updated to the new domain:

- Close PGAdmin
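If you want to rehearse the effect of the UPDATE before touching the repository, the statement can be tried against a throwaway table. The sketch below uses Python's built-in sqlite3 module purely as a stand-in for PostgreSQL: the table is a simplified, hypothetical version of "Users" with only two columns, and the `public.` schema prefix is dropped because SQLite has no such schema.

```python
import sqlite3


def rehearse_user_directory_rename(rows, old, new):
    """Apply the rename to an in-memory copy and report what changed.

    rows: iterable of (user_id, user_directory) tuples (sample data only).
    Returns (number_of_rows_changed, resulting_rows).
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute('CREATE TABLE "Users" ("UserId" TEXT, "UserDirectory" TEXT)')
        conn.executemany('INSERT INTO "Users" VALUES (?, ?)', rows)
        cursor = conn.execute(
            'UPDATE "Users" SET "UserDirectory" = ? WHERE "UserDirectory" = ?',
            (new, old),
        )
        changed = cursor.rowcount
        result = conn.execute(
            'SELECT "UserId", "UserDirectory" FROM "Users" ORDER BY "UserId"'
        ).fetchall()
        return changed, result
    finally:
        conn.close()
```

The point of the rehearsal is that only rows whose directory equals the old domain are touched; internal accounts in other directories stay as they are.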

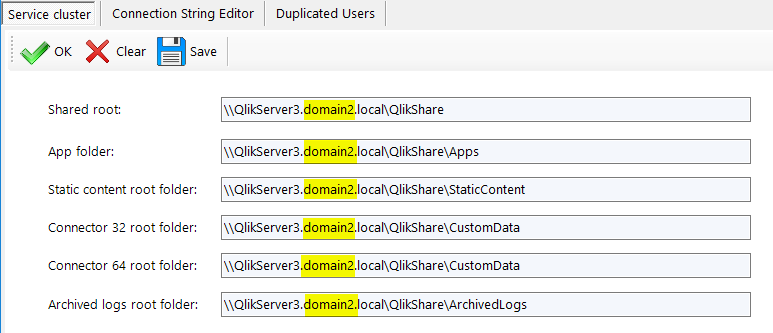

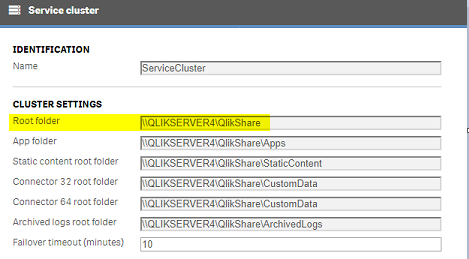

Step 5: Update Service Cluster configuration

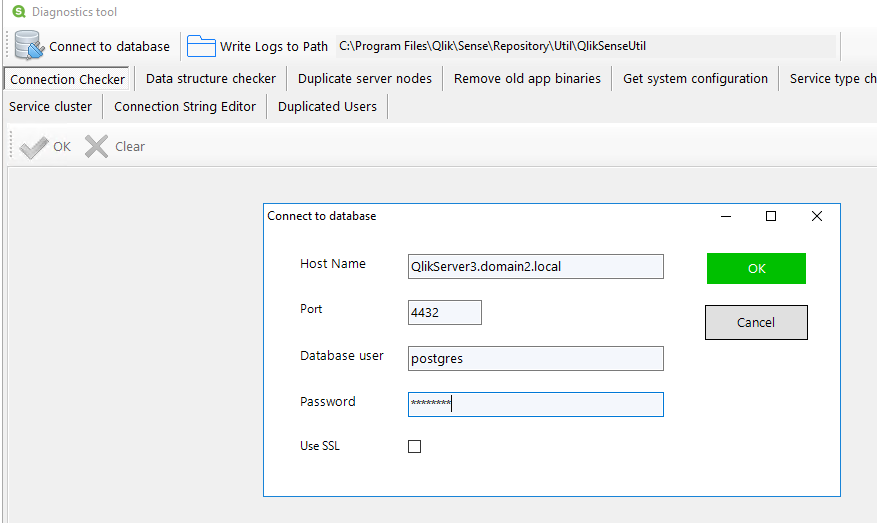

- On the central node run the program C:\Program Files\Qlik\Sense\Repository\Util\QlikSenseUtil\QlikSenseUtil.exe and Connect to Database

- Once connected, click on Service cluster and then OK

- Update the FQDN with the new domain if required and press Save

- Close QlikSenseUtil.exe

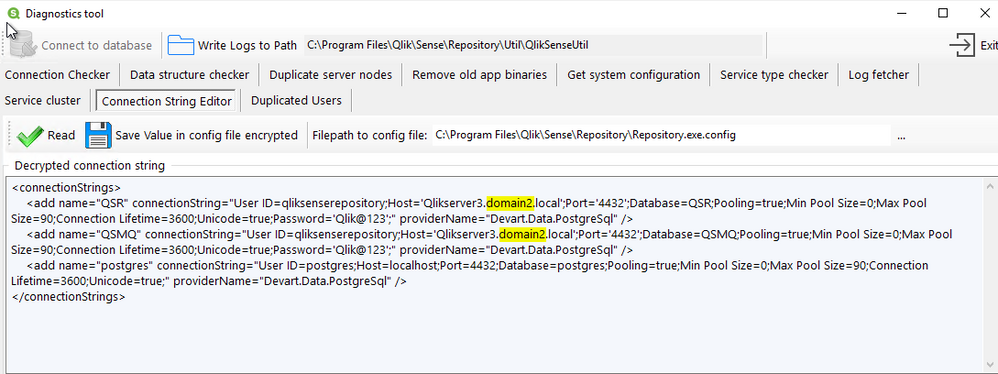

Step 6: Update the repository Connection Strings

- On the central node run the program C:\Program Files\Qlik\Sense\Repository\Util\QlikSenseUtil\QlikSenseUtil.exe

- Select Connection String Editor and click on Read

- Update the FQDN with the new domain if required and press Save Value in config file encrypted

- Close QlikSenseUtil.exe

- Repeat the above steps on every rim node

Step 7: Update the dispatcher services connection strings

Verify if a hostname update is required

- On the central node, open File Explorer and navigate to C:\Program Files\Qlik\Sense\Licenses and open the appsetting.json

- Check if the parameter host contains the old domain name. If it does then you need to follow the below steps to update it otherwise you can jump to the next section
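This verification can also be scripted. The exact layout of the settings file is not shown in this article, so the sketch below makes no assumption about where the host parameter sits: it walks the whole JSON document and reports every string that still contains the old domain. The function name and the sample layout in the usage note are hypothetical.

```python
import json


def strings_with_domain(node, old_domain, path=""):
    """Return (path, value) pairs for every string containing old_domain."""
    hits = []
    if isinstance(node, dict):
        for key, value in node.items():
            hits += strings_with_domain(value, old_domain, f"{path}/{key}")
    elif isinstance(node, list):
        for index, value in enumerate(node):
            hits += strings_with_domain(value, old_domain, f"{path}/{index}")
    elif isinstance(node, str) and old_domain.lower() in node.lower():
        hits.append((path, node))
    return hits


def check_settings_text(json_text, old_domain):
    """Parse the file content and list the values that still need updating."""
    return strings_with_domain(json.loads(json_text), old_domain)
```

For a hypothetical file content of `{"db": {"host": "QlikServer3.domain.local"}}`, calling `check_settings_text(text, "domain.local")` reports the `/db/host` entry; an empty list means no update is needed on that node.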

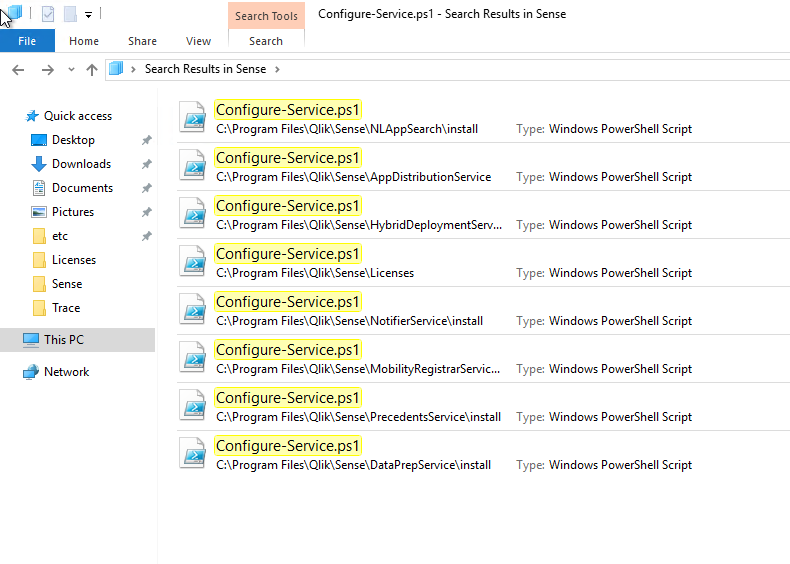

Update hostname on all Qlik Sense Dispatcher subservices

- Search for the file called Configure-Service.ps1

In Qlik Sense February 2021, there are 8 of these files, placed in different Qlik Sense Dispatcher sub-services. Each of these files must be run with specific arguments. In earlier or later versions of Qlik Sense, the list presented below may need to be adapted.

- Run the following commands in PowerShell ISE as Administrator

cd "C:\Program Files\Qlik\Sense\NLAppSearch\install"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\AppDistributionService"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\HybridDeploymentService"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\Licenses"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\NotifierService\install"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\MobilityRegistrarService\install"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\PrecedentsService\install"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>
cd "C:\Program Files\Qlik\Sense\DataPrepService\install"
.\Configure-Service.ps1 QlikServer3.domain2.local 4432 qliksenserepository <qliksenserepository_password>

- Repeat the above steps on every node (including the verification)
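Because the same command is repeated for each sub-service, the command list can be generated rather than typed. The following Python sketch only builds the text of the commands for review; it does not run anything. The folder list mirrors the eight locations named above for this release, the function name is hypothetical, and the password placeholder is deliberately left as a placeholder.

```python
# Sub-service folders as listed in this article for the scenario's release;
# other releases may need a different list.
SERVICE_DIRS = [
    r"NLAppSearch\install",
    "AppDistributionService",
    "HybridDeploymentService",
    "Licenses",
    r"NotifierService\install",
    r"MobilityRegistrarService\install",
    r"PrecedentsService\install",
    r"DataPrepService\install",
]


def configure_commands(host, port=4432, user="qliksenserepository",
                       install_root=r"C:\Program Files\Qlik\Sense"):
    """Return the PowerShell lines to run, two per sub-service."""
    commands = []
    for sub in SERVICE_DIRS:
        commands.append(f'cd "{install_root}\\{sub}"')
        commands.append(
            f".\\Configure-Service.ps1 {host} {port} {user} "
            "<qliksenserepository_password>"
        )
    return commands
```

Printing `"\n".join(configure_commands("QlikServer3.domain2.local"))` reproduces the sixteen lines used in this scenario, which makes it easier to adapt the host name or installation path consistently.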

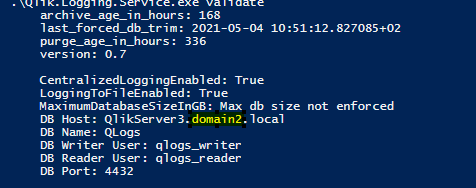

Step 8: Update the Qlik Logging Service

If you are using the Qlik Logging Service;

- Run the following command in Command Prompt:

cd "C:\Program Files\Qlik\Sense\Logging"
Qlik.Logging.Service.exe validate

- If you get something like:

Failed to validate logging database. Database does not exist or is an invalid version.

then it probably means that the hostname needs to be updated.

- If so, run the following command in Command Prompt:

cd "C:\Program Files\Qlik\Sense\Logging"
Qlik.Logging.Service.exe update -h QlikServer3.domain2.local

- Once done, run the validate command again to verify that the connectivity is working

- Repeat the above steps on every rim node

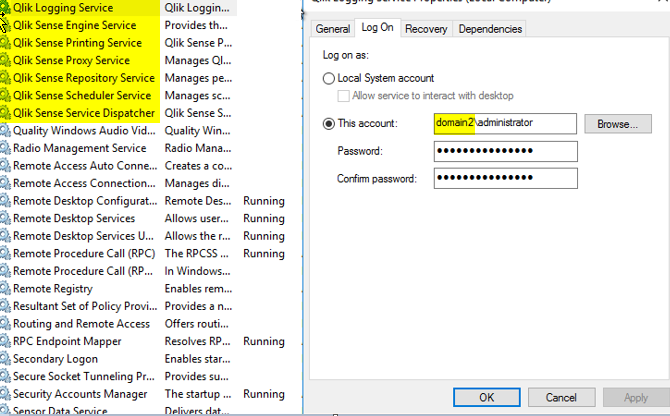

Step 9: Update the service account running the Qlik Sense services

- On the central node, open the Windows Service Console and update each Qlik Sense service with the new domain (DO NOT START THE SERVICE AT THIS POINT)

- Repeat the above step on every rim node

Step 10: Update the host.cfg and remove the certificate

- On the central node login as the service account

- Backup the Qlik Sense certificate following Backing up certificates

- Remove the certificate you have previously backed up from the MMC

- Make a copy of %ProgramData%\Qlik\Sense\Host.cfg and rename the copy to Host.cfg.old

- Host.cfg contains the hostname encoded in base64. You can generate this string for the new hostname using a site such as https://www.base64encode.org/ (you may want to decode the original string to see if it contains the old domain name).

- Open Host.cfg and replace the content with the new encoded hostname.

- Run Windows Command Prompt as Administrator and execute the following command:

"C:\Program Files\Qlik\Sense\Repository\Repository.exe" -bootstrap -iscentral -restorehostname

- In parallel, start the Qlik Sense Dispatcher services

- When the command has completed successfully you should see the following message in command prompt:

Bootstrap mode has terminated. Press ENTER to exit..

- Start every Qlik Sense service on the central node and try to access the QMC with https://localhost/qmc
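The Base64 string for Host.cfg mentioned in this step can also be produced locally instead of through a website. A minimal Python sketch, assuming the hostname is stored as plain UTF-8 text (the function names are hypothetical):

```python
import base64


def encode_hostname(hostname: str) -> str:
    """Return the Base64 form of a hostname, suitable for pasting into Host.cfg."""
    return base64.b64encode(hostname.encode("utf-8")).decode("ascii")


def decode_hostname(encoded: str) -> str:
    """Decode an existing Host.cfg value to see which hostname it holds."""
    return base64.b64decode(encoded.strip()).decode("utf-8")
```

Decoding the current content of Host.cfg first with `decode_hostname(...)` shows whether it contains the old domain at all; if it does, `encode_hostname("QlikServer1.domain2.local")` gives the replacement value for the central node in this scenario.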

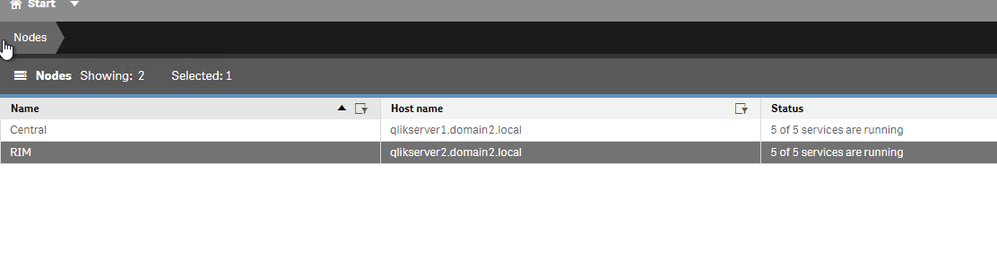

Step 11: Distribute the certificate on the rim node(s)

- On the rim node(s) login as the service account

- Backup the Qlik Sense certificate following Backing up certificates

- Remove the certificate you have previously backed up from the MMC

- Start the Qlik Sense services

- On the central node, open the QMC and go to Nodes

- After a few minutes the rim node should have the status The certificates has not been installed

- Click on Redistribute and follow the instructions to redistribute the certificates on the rim node(s)

- After a few minutes, the services should be running

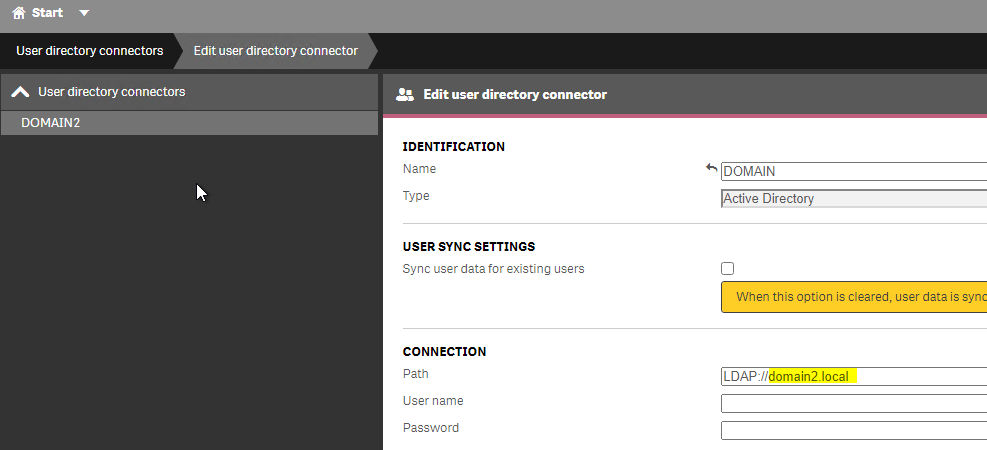

Step 12: Additional updates

User Directory Connector

If you are syncing your users with a User Directory Connector you will need to change the LDAP path to point to the new domain.

- In the QMC -> User Directory Connector

- Edit your existing User Directory Connector, check the LDAP Path and update if necessary

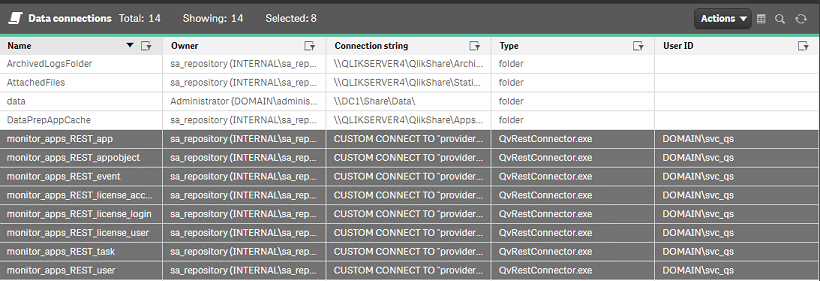

Monitoring Application

You will need to update the monitoring apps and their associated data connection to use the new Fully Qualified Domain Name

Data Connections password

- As part of the steps followed earlier, the certificates had to be recreated. As a result, the passwords need to be re-typed and saved for all Data Connections and User Directory Connectors that include password information.

Security Rules and Licenses rules

It is possible that you have created rules based on the User Directory. As you have changed the domain name, the user directory has also changed in Qlik Sense so you will need to update those to reflect that.

The information in this article is provided as-is and to be used at own discretion. Depending on tool(s) used, customization(s), and/or other factors ongoing support on the solution below may not be provided by Qlik Support.

-

Qlik Replicate: Create Unique ID Column Header for Kafka Target

Scenario: A task with Sybase ASE as the Source and Confluent Kafka as the Destination.

Requirement: Create a new column with a unique identifier "UUID" to identify the message in Confluent Kafka.

Is there any customization in Qlik Replicate that allows the creation of a unique sequencer for each replicated record per table?

Resolution

The following transformation is provided as is. For more detailed customization assistance, post your requirement in the Qlik Replicate forum, where your knowledgeable Qlik peers and our active Support Agents can help. If you need direct assistance, contact Qlik's Consulting Services.

You can add a new column and hard-code it to a unique (random) value by using the Transform tab in Table Settings.

Example Expression Value:

substr(lower(hex(randomblob(16))),1,8) || '-' ||
substr(lower(hex(randomblob(16))),9,4) || '-4' ||
substr(lower(hex(randomblob(16))),13,3) || '-' ||
substr('89ab', abs(random()) % 4 + 1, 1) ||
substr(lower(hex(randomblob(16))),17,3) || '-' ||
substr(lower(hex(randomblob(16))),21,12)

For more information on transformations, see Defining transformations for a single table/view.
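The expression relies only on SQLite-style functions (randomblob, hex, lower, substr, abs, random), so its output can be previewed outside Qlik Replicate. The sketch below evaluates it with Python's built-in sqlite3 module and checks that each value has the shape of a version 4 UUID; the helper names are hypothetical, and this is a local preview rather than a test of the Replicate task itself.

```python
import re
import sqlite3

# The expression from this article, unchanged apart from line breaks.
UUID_EXPRESSION = """
substr(lower(hex(randomblob(16))),1,8) || '-' ||
substr(lower(hex(randomblob(16))),9,4) || '-4' ||
substr(lower(hex(randomblob(16))),13,3) || '-' ||
substr('89ab', abs(random()) % 4 + 1, 1) ||
substr(lower(hex(randomblob(16))),17,3) || '-' ||
substr(lower(hex(randomblob(16))),21,12)
"""

# 8-4-4-4-12 hex digits, version nibble 4, variant nibble 8, 9, a or b.
UUID_V4 = re.compile(
    r"^[0-9a-f]{8}-[0-9a-f]{4}-4[0-9a-f]{3}-[89ab][0-9a-f]{3}-[0-9a-f]{12}$"
)


def sample_ids(count=5):
    """Evaluate the expression `count` times in an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    try:
        return [
            conn.execute("SELECT " + UUID_EXPRESSION).fetchone()[0]
            for _ in range(count)
        ]
    finally:
        conn.close()
```

Each call to randomblob produces fresh random bytes, so every evaluated row receives a different identifier, which is what makes the value usable as a per-message key.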

Environment

- Qlik Replicate

-

Qlik Talend Cloud: Cannot enable lineage collection for Job tasks in Talend Mana...

In the Processing step, under Lineage, you may be unable to select the "Allow Lineage collection of this task" check box when creating or editing your task in Talend Management Console.

Resolution

To verify your Qlik Talend Cloud License Type

- Open the Qlik Talend Management Console

- Go to Subscriptions Tab

- Go to License Type

Cause

The "Allow Lineage collection of this task" option is unavailable when creating tasks in Qlik Talend Management Console because of the type of license currently in use. The Lineage feature is only available with the Qlik Talend Cloud Enterprise Edition or Qlik Talend Cloud Premium Edition licenses.

Related Content

For more details please find the links below

Environment

-

Qlik Sense Enterprise on Windows: Exporting Audit Results creates an empty file

Qlik Sense Enterprise on Windows facilitates the export of an Audit from the Qlik Sense Management Console. See Exporting audit query results.

This export works as expected unless the Audit result is filtered by one app and one user. If only one user and one app are used in the filter, the resulting Excel file is empty.

Resolution

This is a known defect (QCB-32416), which is expected to be fixed in a future release. Review the Qlik Sense Enterprise on Windows Release Notes for details.

Workaround:

Filter by more than one app and one user.

Internal Investigation ID(s)

Product Defect ID: QCB-32416

Environment

- Qlik Sense Enterprise on Windows May 2025 up to Patch 4

- Qlik Sense Enterprise on Windows November 2024 up to Patch 16

-

How to disable 'Base memory size' info and 'StaticByteSize' calls in Qlik Sens E...

After upgrading to Qlik Sense Enterprise on Windows May 2023 (or later), the Qlik Sense Repository Service may cause CPU usage spikes on the central node. In addition, the central Engine node may show an increased average RAM consumption while a high volume of reloads is being executed.

The Qlik Sense System_Repository log file will read:

"API call to Engine service was successful: The retrieved 'StaticByteSize' value for app 'App-GUID' is: 'Size in bytes' bytes."

Default location of log: C:\ProgramData\Qlik\Sense\Log\Repository\Trace\[Server_Name]_System_Repository.txt

This activity is associated with the ability to see the Base memory size in the Qlik Sense Enterprise Management Console. See Show base memory size of apps in QMC.

Resolution

The feature to see Base memory size can be disabled. This may require an upgrade and will require downtime as configuration files need to be changed.

Take a backup of your environment before proceeding.

- Verify the version you are using and verify that you are at least on one of the versions (and patches) listed:

- May 2023 Patch 13

- August 2023 Patch 10

- November 2023 Patch 4

- February 2024 IR

- Back up the following files:

- C:\Program Files\Qlik\Sense\CapabilityService\capabilities.json

- C:\Program Files\Qlik\Sense\Repository\Repository.exe.config

*where C:\Program Files can be replaced by your custom installation path.

- Stop the Qlik Sense Repository Service and the Qlik Sense Dispatcher Service

- Open and edit the capabilities.json with a text editor (using elevated permissions)

- Open C:\Program Files\Qlik\Sense\CapabilityService\capabilities.json

- Locate: "enabled": true,"flag": "QMCShowAppSize"

- Change this to: "enabled": false,"flag": "QMCShowAppSize"

- Save the file

- Open and edit the repository.exe.config with a text editor (using elevated permissions)

- Open C:\Program Files\Qlik\Sense\Repository\Repository.exe.config

- Locate: </appSettings>

- Before the </appSettings> closing statement, add:

<add key="AppStaticByteSizeUpdate.Enable" value="false"/>

- Save

- Start all Qlik Sense services

- Verify you can access the Management Console
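The two file edits above can be scripted once the services are stopped and the backups are taken. The sketch below is illustrative only: the function names are hypothetical, the JSON helper assumes nothing about the file beyond objects carrying "flag" and "enabled" keys, and the XML helper assumes `<appSettings>` is a direct child of the root element. Note that Python's ElementTree does not preserve comments or the original formatting, so compare the result with the backup before using it.

```python
import xml.etree.ElementTree as ET


def disable_flag(node, flag_name="QMCShowAppSize"):
    """Set "enabled" to False on every object whose "flag" equals flag_name.

    Works on the parsed content of capabilities.json; returns the number of
    matching objects found.
    """
    matched = 0
    if isinstance(node, dict):
        if node.get("flag") == flag_name and "enabled" in node:
            node["enabled"] = False
            matched += 1
        for value in node.values():
            matched += disable_flag(value, flag_name)
    elif isinstance(node, list):
        for item in node:
            matched += disable_flag(item, flag_name)
    return matched


def add_app_setting(config_xml, key="AppStaticByteSizeUpdate.Enable", value="false"):
    """Add (or update) an <add key=... value=.../> entry inside <appSettings>."""
    root = ET.fromstring(config_xml)
    settings = root.find("appSettings")
    if settings is None:
        raise ValueError("no <appSettings> element found")
    for entry in settings.findall("add"):
        if entry.get("key") == key:
            entry.set("value", value)  # already present: just set the value
            break
    else:
        ET.SubElement(settings, "add", {"key": key, "value": value})
    return ET.tostring(root, encoding="unicode")
```

A return value of 0 from `disable_flag` means the flag was not found, which is a cue to check the installed version against the list above rather than to add the flag by hand.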

If any issues occur, revert the changes by restoring the backed up files and open a ticket with Support providing the changed versions of repository.exe.config, capabilities.json mentioning this article.

Related Content

Show base memory size of apps in QMC

Internal Investigation:

QB-22795

QB-24301

Environment

Qlik Sense Enterprise on Windows May 2023, August 2023, November 2023, February 2024.


-

Customizing Qlik Sense Enterprise on Windows Forms Login Page

Ever wanted to brand or customize the default Qlik Sense Login page?

The functionality exists, and it's really as simple as just designing your HTML page and 'POSTing' it into your environment.

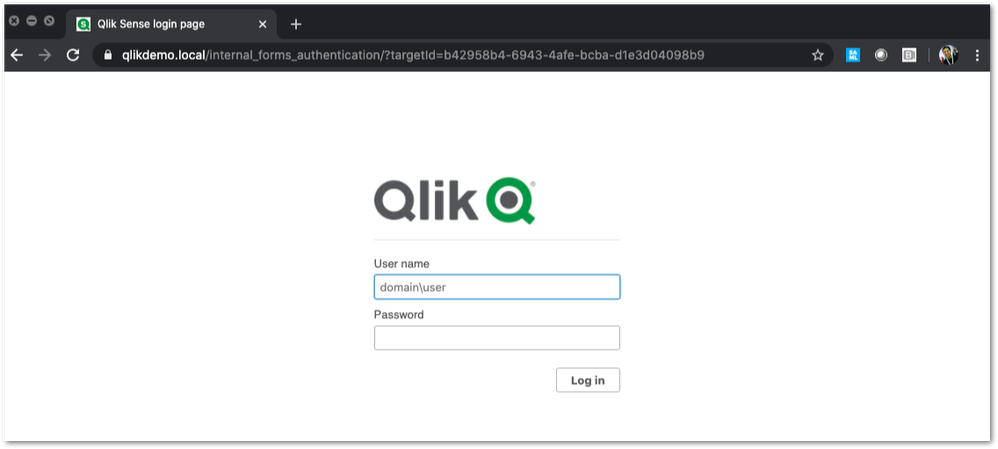

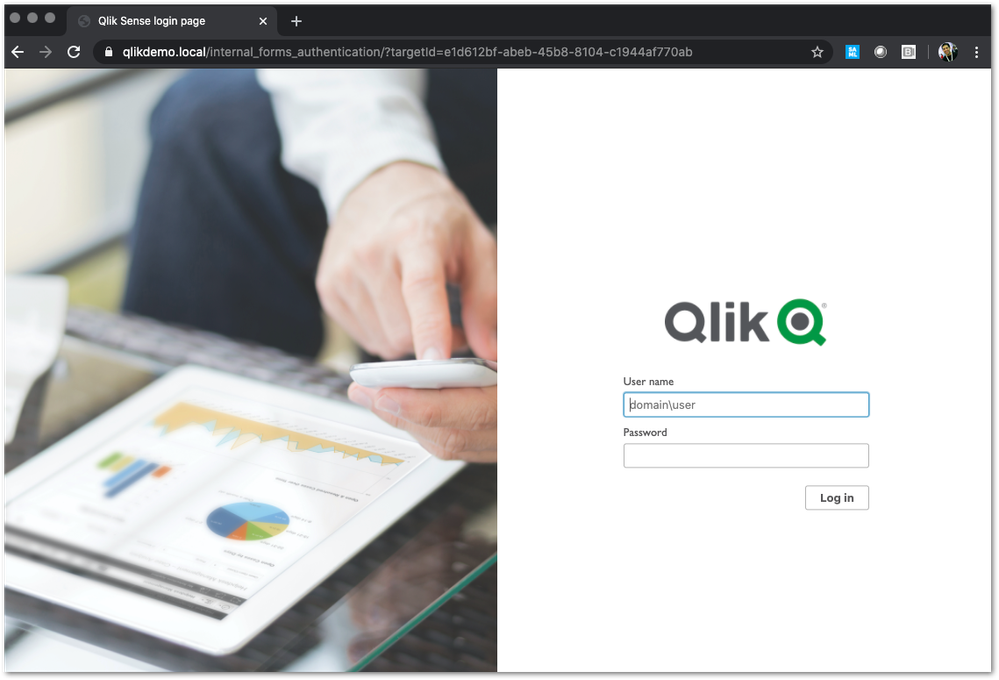

We've all seen the standard Qlik Sense Login page. This article is all about customizing this page.

This customization is provided as is. Qlik Support cannot provide continued support of the solution. For assistance, reach out to our Professional Services or engage in our active Integrations forum.

To customize the page:

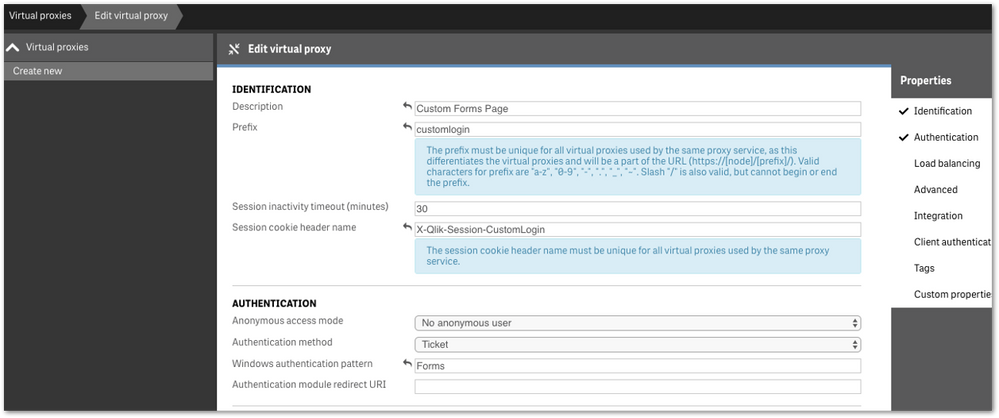

- We highly recommend setting up a new virtual proxy with Forms so you don't impact any users that are using auto-login Windows auth.

Example setup:

Description: Custom Forms Page

Prefix: customlogin

Session cookie header-name: X-Qlik-Session-CustomLogin

Windows authentication pattern: Forms

- Once this is done, a good starting point is to download the default login page.

You can open up your web developer tool of choice, go to the login page, and download the HTML response from the GET http://<server>/customlogin/internal_forms_authentication request. It should be roughly a 273 line .html file.

- Once you have this file, you can more or less customize it as much as you'd like.

Image files can be inlined, as you'll see in the Qlik default file, or can be referenced as long as they are publicly accessible. The only things that need to exist are the input boxes with appropriate classes and attributes, and the 'Log In' button.

- Once your custom HTML page is built and looks the way you want, it needs to be converted to Base64. There are online tools to do this; openssl also has this functionality.

Once you have your Base64 encoded HTML file, you will want to PUT it into your Qlik Sense environment.

- First, do a GET request on /qrs/proxyservice and find the ID of the proxy service you want this login page to be shown for.

- You will then do a GET request on /qrs/proxyservice/<id> and copy the body of that response. Below is an example of that response.

{ "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "customProperties": [], "settings": { "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "listenPort": 443, "allowHttp": true, "unencryptedListenPort": 80, "authenticationListenPort": 4244, "kerberosAuthentication": false, "unencryptedAuthenticationListenPort": 4248, "sslBrowserCertificateThumbprint": "e6ee6df78f9afb22db8252cbeb8ad1646fa14142", "keepAliveTimeoutSeconds": 10, "maxHeaderSizeBytes": 16384, "maxHeaderLines": 100, "logVerbosity": { "id": "8817d7ab-e9b2-4816-8332-f8cb869b27c2", "createdDate": "2020-03-23T15:39:33.540Z", "modifiedDate": "2020-05-20T18:46:13.995Z", "modifiedByUserName": "INTERNAL\\sa_api", "logVerbosityAuditActivity": 4, "logVerbosityAuditSecurity": 4, "logVerbosityService": 4, "logVerbosityAudit": 4, "logVerbosityPerformance": 4, "logVerbositySecurity": 4, "logVerbositySystem": 4, "schemaPath": "ProxyService.Settings.LogVerbosity" }, "useWsTrace": false, "performanceLoggingInterval": 5, "restListenPort": 4243, "virtualProxies": [ { "id": "58d03102-656f-4075-a436-056d81144c1f", "prefix": "", "description": "Central Proxy (Default)", "authenticationModuleRedirectUri": "", "sessionModuleBaseUri": "", "loadBalancingModuleBaseUri": "", "useStickyLoadBalancing": false, "loadBalancingServerNodes": [ { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": "37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": 
"b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null } ], "authenticationMethod": 0, "headerAuthenticationMode": 0, "headerAuthenticationHeaderName": "", "headerAuthenticationStaticUserDirectory": "", "headerAuthenticationDynamicUserDirectory": "", "anonymousAccessMode": 0, "windowsAuthenticationEnabledDevicePattern": "Windows", "sessionCookieHeaderName": "X-Qlik-Session", "sessionCookieDomain": "", "additionalResponseHeaders": "", "sessionInactivityTimeout": 30, "extendedSecurityEnvironment": false, "websocketCrossOriginWhiteList": [ "qlikdemo", "qlikdemo.local", "qlikdemo.paris.lan" ], "defaultVirtualProxy": true, "tags": [], "samlMetadataIdP": "", "samlHostUri": "", "samlEntityId": "", "samlAttributeUserId": "", "samlAttributeUserDirectory": "", "samlAttributeSigningAlgorithm": 0, "samlAttributeMap": [], "jwtAttributeUserId": "", "jwtAttributeUserDirectory": "", "jwtAudience": "", "jwtPublicKeyCertificate": "", "jwtAttributeMap": [], "magicLinkHostUri": "", "magicLinkFriendlyName": "", "samlSlo": false, "privileges": null }, { "id": "a8b561ec-f4dc-48a1-8bf1-94772d9aa6cc", "prefix": "header", "description": "header", "authenticationModuleRedirectUri": "", "sessionModuleBaseUri": "", "loadBalancingModuleBaseUri": "", "useStickyLoadBalancing": false, "loadBalancingServerNodes": [ { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": 
"37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": "b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null } ], "authenticationMethod": 1, "headerAuthenticationMode": 1, "headerAuthenticationHeaderName": "userid", "headerAuthenticationStaticUserDirectory": "QLIKDEMO", "headerAuthenticationDynamicUserDirectory": "", "anonymousAccessMode": 0, "windowsAuthenticationEnabledDevicePattern": "Windows", "sessionCookieHeaderName": "X-Qlik-Session-Header", "sessionCookieDomain": "", "additionalResponseHeaders": "", "sessionInactivityTimeout": 30, "extendedSecurityEnvironment": false, "websocketCrossOriginWhiteList": [ "qlikdemo", "qlikdemo.local" ], "defaultVirtualProxy": false, "tags": [], "samlMetadataIdP": "", "samlHostUri": "", "samlEntityId": "", "samlAttributeUserId": "", "samlAttributeUserDirectory": "", "samlAttributeSigningAlgorithm": 0, "samlAttributeMap": [], "jwtAttributeUserId": "", "jwtAttributeUserDirectory": "", "jwtAudience": "", "jwtPublicKeyCertificate": "", "jwtAttributeMap": [], "magicLinkHostUri": "", "magicLinkFriendlyName": "", "samlSlo": false, "privileges": null } ], "formAuthenticationPageTemplate": "", "loggedOutPageTemplate": "", "errorPageTemplate": "", "schemaPath": "ProxyService.Settings" }, "serverNodeConfiguration": { "id": "f1d26a45-b0dd-4be1-91d0-34c698e18047", "name": "Central", "hostName": "qlikdemo", "temporaryfilepath": "C:\\Users\\qservice\\AppData\\Local\\Temp\\", "roles": [ { "id": "2a6a0d52-9bb4-4e74-b2b2-b597fa4e4470", "definition": 0, "privileges": null }, { "id": "d2c56b7b-43fd-44ad-a12f-59e778ce575a", "definition": 1, "privileges": null }, { "id": 
"37244424-96ae-4fe5-9522-088a0e9679e3", "definition": 2, "privileges": null }, { "id": "b770516e-fe8a-43a8-a7a4-318984ee4bd6", "definition": 3, "privileges": null }, { "id": "998b7df8-195f-4382-af18-4e0c023e7f1c", "definition": 4, "privileges": null }, { "id": "2a5325f4-649b-4147-b0b1-f568be1988aa", "definition": 5, "privileges": null } ], "serviceCluster": { "id": "b07fc5f2-f09e-4676-9de6-7d73f637b962", "name": "ServiceCluster", "privileges": null }, "privileges": null }, "tags": [], "privileges": null, "schemaPath": "ProxyService" }

- In the response, locate the formAuthenticationPageTemplate field

- Take your base64-encoded HTML file and paste the encoded value into the formAuthenticationPageTemplate field.

Example:

"formAuthenticationPageTemplate": "BASE 64 ENCODED HTML HERE",

Once you have an updated body, use the PUT verb (with an updated modifiedDate) to PUT this body back into the repository. Once this is done, go to your virtual proxy and you should see your new login page (very Qlik branded in this example).

If your login page does not work and you need to revert to the default, do a GET call on your proxy service and set formAuthenticationPageTemplate back to an empty string:

"formAuthenticationPageTemplate": ""

Environment:

- We highly recommend setting up a new virtual proxy with Forms so you don't impact any users that are using auto-login Windows auth.
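The GET, edit, and PUT cycle described above can be sketched in Python. This is a minimal illustration rather than Qlik's own tooling: the helper name `set_login_template` is hypothetical, it only prepares the body (sending it back is left to your HTTP client), and refreshing the top-level modifiedDate is an assumption based on the "updated modifiedDate" note above.

```python
import base64
import copy
from datetime import datetime, timezone


def set_login_template(proxy_service: dict, html: str) -> dict:
    """Return a copy of the proxy service body with a new login page template.

    `proxy_service` is the JSON body returned by the GET call, parsed into a dict.
    Passing an empty string for `html` reverts to the default login page.
    """
    updated = copy.deepcopy(proxy_service)
    # The template is stored as base64-encoded HTML; an empty string means "default".
    encoded = base64.b64encode(html.encode("utf-8")).decode("ascii") if html else ""
    updated["settings"]["formAuthenticationPageTemplate"] = encoded
    # Assumption: the top-level modifiedDate is the one that must be refreshed.
    now = datetime.now(timezone.utc)
    updated["modifiedDate"] = now.strftime("%Y-%m-%dT%H:%M:%S.%f")[:-3] + "Z"
    return updated
```

The original dict is left untouched, so the body from the GET call can be kept as a backup in case the new page has to be rolled back.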

-

How to use Postman to make API calls and use/verify JSON output in Qlik Sense En...

The following article describes how to use Postman to make GET and PUT requests and verify the JSON output.

Prerequisites

- In this example, Postman resides outside the Qlik Sense servers, so the certificates need to be exported to make the API calls. Export the certificates, selecting "Platform independent PEM-format". The following article can be used as a reference; just make sure to select "Platform independent PEM-format": Export client certificate and root certificate to make API calls

- As a best practice, the user in this example is not the Service Account.

- Create a new tag in the QMC so that it can later be added to an existing object.

- Download Postman

- This exercise was implemented using Qlik Sense April 2020 (13.72.3)

Environment:

- Qlik Sense Enterprise on Windows

The information in this article is provided as-is and is to be used at your own discretion. Depending on the tool(s) used, customization(s), and/or other factors, ongoing support on the solution below may not be provided by Qlik Support.

Resolution

Steps

-

Copy the Certificates to the server/computer where Postman is used.

-

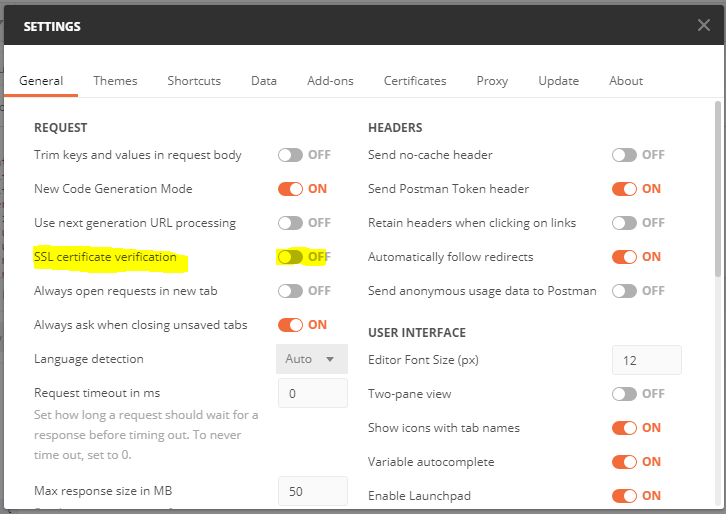

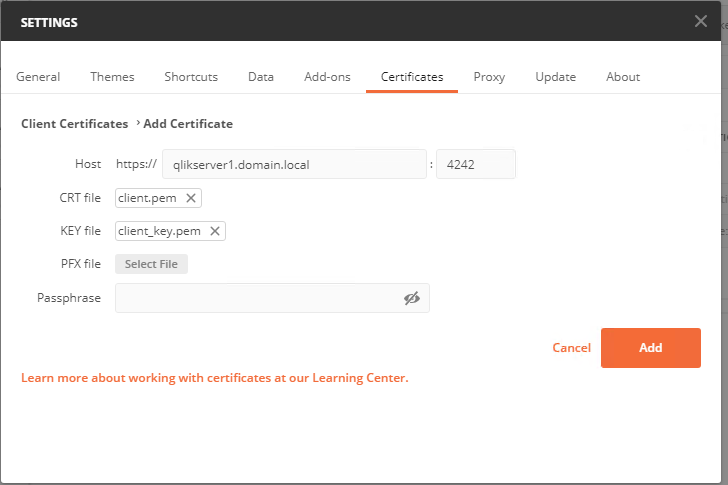

Open Postman and go to the "Settings" menu. In the General tab, set "SSL certificate verification" to Off. In the Certificates tab, select "Add Certificate" and set the paths to the exported certificates: the CRT file path is the path to client.pem, and the Key file path is the path to client_key.pem.

Postman is now ready to be used. This article describes how to make a GET and a PUT request to add a tag to a sheet.

We suggest visiting our Help site to check the API documentation. In this case we will use:

get /app/object/{id}

put /app/object/{id}

The URL would be

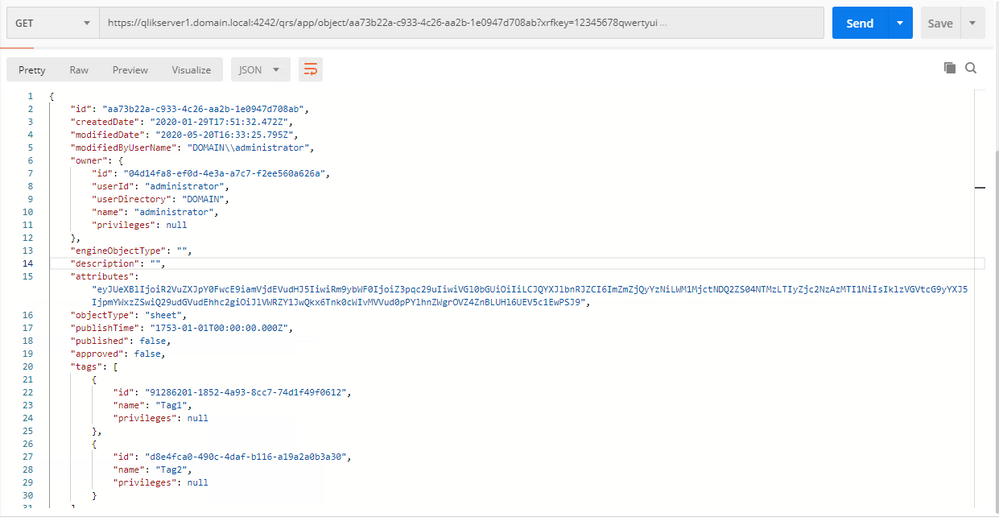

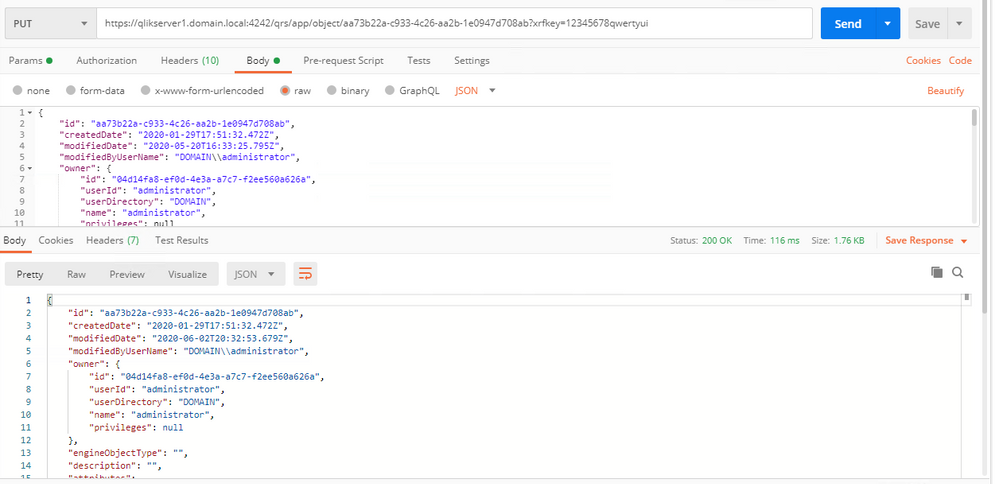

https://qlikserver1.domain.local:4242/qrs/app/object/aa73b22a-c933-4c26-aa2b-1e0947d708ab?xrfkey=12345678qwertyui

[Server] + [API end point] + [xrfkey]

The necessary headers would be :

X-Qlik-xrfkey = 12345678qwertyui

(this value needs to be the same as the xrfkey provided in the URL). For questions about xrfkey headers, refer to: Using Xrfkey headers

X-Qlik-User = UserDirectory=DOMAIN;UserId=Administrator (this value would be the user to make the API calls)

Note that Postman will include xrfkey in the parameters section once the URL is pasted into the GET request.

Please refer to the following pictures to verify the configurations are correct.

GET

Postman Headers

Once it is configured, click the "Send" button. The response will look like this:

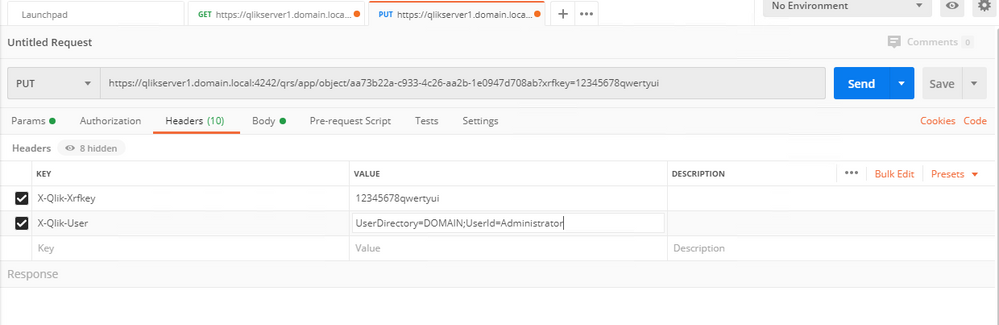

PUT

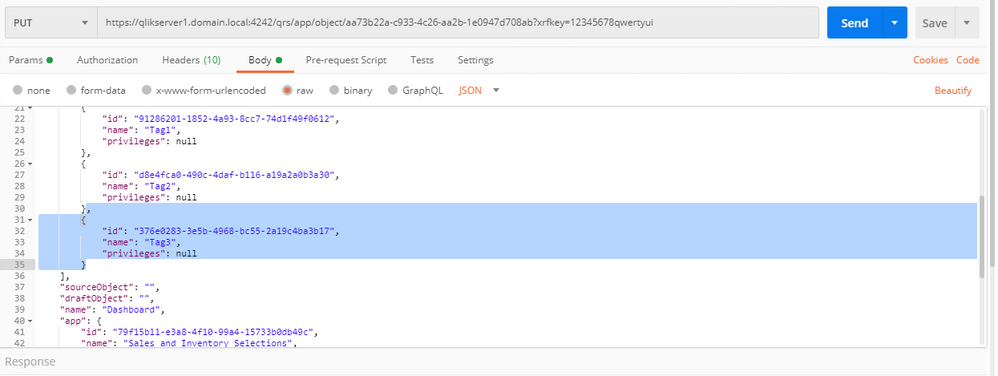

To send the PUT request, copy the JSON response from the previous GET call and modify the necessary information. This needs to be done carefully; otherwise the request will return an error. The details can be found on our Qlik site under the "Updating an entry" section.

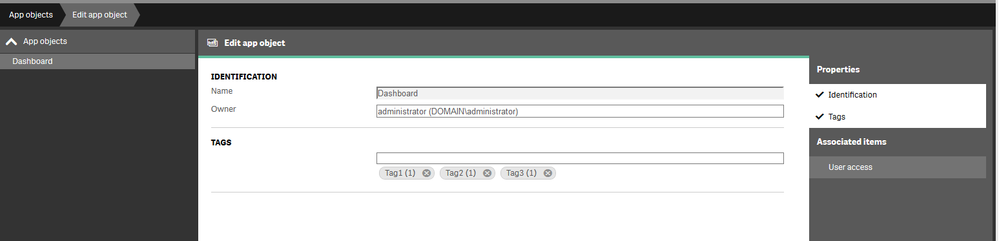

Sample (include a comma if tags are already associated with the object):

{ "id": "376e0283-3e5b-4968-bc55-2a19c4ba3b17", "name": "Tag3", "privileges": null }

Please refer to the following pictures to verify that the configurations are correct.

PUT Header

PUT Body

Once the information is correct, select the "Send" button; you should get a 200 OK status. You can verify the changes in the Qlik Sense Management Console: the tag now shows 1 occurrence and the sheet shows the new tag.
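For readers who prefer scripting over Postman, the same request can be prepared in Python. The sketch below only builds the URL, headers, and PUT body from the values shown above; the function names `build_request` and `add_tag` are hypothetical, and actually sending the request (with the exported client certificate) is left to the HTTP client of your choice.

```python
import copy
from urllib.parse import urlencode

XRFKEY = "12345678qwertyui"  # 16 characters, same value in the URL and the header


def build_request(server: str, object_id: str, user_directory: str, user_id: str,
                  xrfkey: str = XRFKEY):
    """Build the URL and headers: [Server] + [API end point] + [xrfkey]."""
    url = f"https://{server}:4242/qrs/app/object/{object_id}?" + urlencode({"xrfkey": xrfkey})
    headers = {
        "X-Qlik-xrfkey": xrfkey,
        "X-Qlik-User": f"UserDirectory={user_directory};UserId={user_id}",
    }
    return url, headers


def add_tag(app_object: dict, tag: dict) -> dict:
    """Return a copy of the GET response with `tag` appended to its tags list."""
    updated = copy.deepcopy(app_object)
    tags = updated.setdefault("tags", [])
    # Skip the append if the tag is already associated with the object.
    if all(existing.get("id") != tag["id"] for existing in tags):
        tags.append(tag)
    return updated
```

Working on the parsed JSON avoids the manual comma handling that is needed when the body is edited by hand.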

-

Qlik Talend Administration Center: License Token Validation without Internet Con...

When using Talend Administration Center, you may encounter the following message:

Error: License token will expire in xx days

This message refers to the license token expiring, not the license itself.

Resolution

Talend Administration Center has Internet access

- TAC automatically validates the license token.

- No manual action required.

Talend Administration Center has no Internet access

If TAC does not have Internet connectivity, follow these steps:

- Go to the Talend Administration Center page and click:

  - Validate your license manually OR

  - Generate Validation Request (button name depends on TAC version).

- A popup window will display a validation link (e.g. https://www.talend.com/api/get_tis_validation_token_form.php).

- Copy this link into a text file.

- On a machine with Internet access:

  - Paste the link into a browser.

  - Retrieve the validation token (e.g. 5xEfMp1ozPyiDTTUqzUHI8K3Xuvqx/iipc+).

- Return to TAC on the offline system.

- In the popup window:

  - Paste the validation token.

  - Click Enter validation token.

Your license token is now validated manually.

Cause

Talend Administration Center (TAC) requires an Internet connection during license token validation and the license token must be periodically validated.

- If TAC has Internet access, the token is validated automatically.

- If TAC does not have Internet access, you must manually validate the license token.

Related Content

- Refer to generating-validation-request-without-internet-connection for more details.

- You can also configure notifications to alert administrators before the license token expiration date; see managing-notifications.

Summary

If TAC has Internet access, license token validation is automatic. If not, use the manual validation process by generating a request, retrieving the validation token from another machine, and pasting it back into TAC.

Environment

-

Replicate using Oracle source fails with missing redo log

Environment

- Qlik Replicate

A Replicate task fails with one of the following errors:

00008696: 2021-01-20T10:06:02:964052 [SOURCE_CAPTURE ]E: Cannot find archived REDO log with sequence '7204', thread '1' (oracdc_reader.c:412)

OR

00005624: 2020-06-02T17:05:23:665747 [SOURCE_CAPTURE ]E: Oracle CDC stopped [1022301] (oracdc_merger.c:1178)

Replicate always reads V$archived_log to get the archived redo log information. The redo log must have an active/available status in V$archived_log.

Cause

Replicate cannot find the archived redo log file. Either:

1. the Replicate Oracle Source Endpoint should be using a different Oracle DEST_ID;

2. the file does not exist on disk; or

3. the file exists, but the internal Oracle metadata in the v$archived_log view says that it is not available.

The simplest explanation is that the redo log was deleted before Replicate was able to process it.

Resolution

We recommend keeping archived redo logs for at least 72 hours to cover unexpected weekend issues.

If you encounter this issue, you can also restore the Oracle redo log and then resume the task.

If the redo log cannot be restored, you need to reload the entire task.
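To check which of the three causes applies, it helps to pull the sequence and thread out of the task log and look them up in v$archived_log. The following is a rough sketch, not part of Replicate: the helper name `parse_missing_redo` is hypothetical, and the query is only a suggested starting point for your DBA, with bind variable names chosen for illustration.

```python
import re
from typing import NamedTuple, Optional


class MissingRedo(NamedTuple):
    sequence: int
    thread: int


_PATTERN = re.compile(
    r"Cannot find archived REDO log with sequence '(\d+)', thread '(\d+)'"
)


def parse_missing_redo(log_line: str) -> Optional[MissingRedo]:
    """Extract the redo log sequence and thread from a Replicate error line."""
    match = _PATTERN.search(log_line)
    if match is None:
        return None
    return MissingRedo(sequence=int(match.group(1)), thread=int(match.group(2)))


# Suggested lookup (an assumption, to be run by a DBA): shows the DEST_ID,
# status, and deletion flag Oracle records for the archived log in question.
ARCHIVED_LOG_QUERY = (
    "SELECT name, dest_id, status, deleted "
    "FROM v$archived_log "
    "WHERE sequence# = :sequence AND thread# = :thread"
)
```

A row with a different DEST_ID than the endpoint expects points to the first cause; a row flagged as deleted or unavailable points to the second or third.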

-

Qlik Cloud Webhooks: Migration to new event formats is coming

The event payloads emitted by the Qlik Cloud webhooks service are changing. Qlik is replacing a legacy event format with a new cloud event format.

Any legacy events (that is, any events not already cloud event compliant) will be updated to a temporary hybrid event containing both legacy and cloud event payloads. This will start on or after November 3, 2025.

Please consider updating your integrations to use the new fields once added.

A formal deprecation with at least a 6-month notice will be provided via the Qlik Developer changelog. After that period, hybrid events will be replaced entirely by cloud events.

Webhook automations in Qlik Automate will not be impacted at this time.

Events impacted by this migration

The webhooks service in Qlik Cloud enables you to subscribe to notifications when your Qlik Cloud tenant generates specific events.

At the time of writing, the following legacy events are available:

| Service | Event name | Event type | When is event generated |
| --- | --- | --- | --- |
| API keys | API key validation failed | com.qlik.v1.api-key.validation.failed | The tenant tries to use an API key which is expired or revoked |
| Apps (Analytics apps) | App created | com.qlik.v1.app.created | A new analytics app is created |
| Apps (Analytics apps) | App deleted | com.qlik.v1.app.deleted | An analytics app is deleted |
| Apps (Analytics apps) | App exported | com.qlik.v1.app.exported | An analytics app is exported |
| Apps (Analytics apps) | App reload finished | com.qlik.v1.app.reload.finished | An analytics app has finished refreshing on an analytics engine (note: it may not be saved yet) |
| Apps (Analytics apps) | App published | com.qlik.v1.app.published | An analytics app is published from a personal or shared space to a managed space |
| Apps (Analytics apps) | App data updated | com.qlik.v1.app.data.updated | An analytics app is saved to persistent storage |
| Automations (Automate) | Automation created | com.qlik.v1.automation.created | A new automation is created |
| Automations (Automate) | Automation deleted | com.qlik.v1.automation.deleted | An automation is deleted |
| Automations (Automate) | Automation updated | com.qlik.v1.automation.updated | An automation has been updated and saved to persistent storage |
| Automations (Automate) | Automation run started | com.qlik.v1.automation.run.started | An automation run began execution |
| Automations (Automate) | Automation run failed | com.qlik.v1.automation.run.failed | An automation run failed |
| Automations (Automate) | Automation run ended | com.qlik.v1.automation.run.ended | An automation run finished successfully |
| Reloads (Analytics reloads) | Reload finished | com.qlik.v1.reload.finished | An analytics app has been refreshed and saved |
| Users | User created | com.qlik.v1.user.created | A new user is created |
| Users | User deleted | com.qlik.v1.user.deleted | A user is deleted |

Any events not listed above will remain as-is, as they already adhere to the cloud event format.

How are events changing

Each event will change to a new structure. The details included in the payloads will remain the same, but some attributes will be available in a different location.

The changes being made:

- All new property names will be lowercase, which may cause some duplication of existing properties within the data object.

- The following properties or objects will be updated:

  - cloudEventsVersion is replaced by specversion. For most events this will be from cloudEventsVersion: 0.1 to specversion: 1.0+.

  - contentType is replaced by datacontenttype to describe the media type of the data object.

  - eventId is replaced by id.

  - eventTime is replaced by time.

  - eventTypeVersion is not present in the future schema.

  - eventType is replaced by type.

  - extensions.actor is replaced by authtype and authclaims.

  - extensions.updates is replaced by data._updates.

  - extensions.meta, and any other direct objects on extensions, are replaced by equivalents in data where relevant.

  - The direct properties of the extensions object will be moved to the root and renamed to be lowercase if needed, such as tenantId, userId, spaceId, etc.

Example: Automation created event

This is the current legacy payload of the automation created event:

    {
      "cloudEventsVersion": "0.1",
      "source": "com.qlik/automations",
      "contentType": "application/json",
      "eventId": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "eventTime": "2025-09-01T09:53:17.920Z",
      "eventTypeVersion": "1.0.0",
      "eventType": "com.qlik.v1.automation.created",
      "extensions": {
        "ownerId": "637390ef6541614d3a88d6c3",
        "spaceId": "685a770f2c31b9e482814a4f",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "userId": "637390ef6541614d3a88d6c3"
      },
      "data": {
        "connectorIds": {},
        "containsBillable": null,
        "createdAt": "2025-09-01T09:53:17.000000Z",
        "description": null,
        "endpointIds": {},
        "id": "cae31848-2825-4841-bc88-931be2e3d01a",
        "lastRunAt": null,
        "lastRunStatus": null,
        "name": "hello world",
        "ownerId": "637390ef6541614d3a88d6c3",
        "runMode": "manual",
        "schedules": {},
        "snippetIds": {},
        "spaceId": "685a770f2c31b9e482814a4f",
        "state": "available",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "updatedAt": "2025-09-01T09:53:17.000000Z"
      }
    }

This will be the temporary hybrid event for automation created:

    {
      // cloud event fields
      "id": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "time": "2025-09-01T09:53:17.920Z",
      "type": "com.qlik.v1.automation.created",
      "userid": "637390ef6541614d3a88d6c3",
      "ownerid": "637390ef6541614d3a88d6c3",
      "tenantid": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
      "description": "hello world",
      "datacontenttype": "application/json",
      "specversion": "1.0.2",
      // legacy event fields
      "eventId": "f4c26f04-18a4-4032-974b-6c7c39a59816",
      "eventTime": "2025-09-01T09:53:17.920Z",
      "eventType": "com.qlik.v1.automation.created",
      "extensions": {
        "userId": "637390ef6541614d3a88d6c3",
        "spaceId": "685a770f2c31b9e482814a4f",
        "ownerId": "637390ef6541614d3a88d6c3",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo"
      },
      "contentType": "application/json",
      "eventTypeVersion": "1.0.0",
      "cloudEventsVersion": "0.1",
      // unchanged event fields
      "data": {
        "connectorIds": {},
        "containsBillable": null,
        "createdAt": "2025-09-01T09:53:17.000000Z",
        "description": null,
        "endpointIds": {},
        "id": "cae31848-2825-4841-bc88-931be2e3d01a",
        "lastRunAt": null,
        "lastRunStatus": null,
        "name": "hello world",
        "ownerId": "637390ef6541614d3a88d6c3",
        "runMode": "manual",
        "schedules": {},
        "snippetIds": {},
        "spaceId": "685a770f2c31b9e482814a4f",
        "state": "available",
        "tenantId": "BL4tTJ4S7xrHTcq0zQxQrJ5qB1_Q6cSo",
        "updatedAt": "2025-09-01T09:53:17.000000Z"
      },
      "source": "com.qlik/automations"
    }

Timeline

- November 3, 2025: Hybrid events start emitting (combined legacy and cloud event payloads).

- At least 6 months after a formal deprecation notice: Legacy fields will be removed, leaving fully cloud events only.
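An integration can be made tolerant of all three phases (legacy, hybrid, and cloud event only) by preferring the new fields and falling back to the legacy ones. The sketch below is one possible approach, not an official Qlik client; the function name `normalize_event` and the choice of returned keys are illustrative, and only fields shown in the example payloads above are read.

```python
from typing import Any, Dict


def normalize_event(payload: Dict[str, Any]) -> Dict[str, Any]:
    """Read a webhook payload in legacy, hybrid, or cloud event form."""
    extensions = payload.get("extensions") or {}

    def pick(new_key: str, legacy_value: Any) -> Any:
        # Prefer the cloud event field; fall back to the legacy value.
        value = payload.get(new_key)
        return value if value is not None else legacy_value

    return {
        "id": pick("id", payload.get("eventId")),
        "time": pick("time", payload.get("eventTime")),
        "type": pick("type", payload.get("eventType")),
        "tenantid": pick("tenantid", extensions.get("tenantId")),
        "userid": pick("userid", extensions.get("userId")),
        # The data object is unchanged between formats.
        "data": payload.get("data", {}),
    }
```

Because the new fields win whenever they are present, the same handler keeps working once the legacy fields are removed.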

Environment

- Qlik Cloud

- Qlik Cloud Government

-

Qlik Talend Cloud: How to Change the Value of Context Parameters and Propagate t...

For the Qlik Talend on-premises solution, when global context values are used in all jobs and you want to change the file path values (for example, replacing d:/ with t:/), you can propagate the change to all jobs in the Studio.

How about Qlik Talend Cloud and Hybrid Solutions? This article provides a brief introduction to changing the value of context parameters and propagating the change to all Tasks in Talend Cloud.

How To

You can use this API to update artifact parameters:

PUT https://api.eu.cloud.talend.com/orchestration/executables/tasks/{taskid}

In the body, set the parameters you want to update.

Example: change ContextFilePath from "C:/Franking/in.csv" to "h:/Franking/in.csv":

{ "name": "contextpath", "description": "CC", "workspaceId": "61167bef18d7d656bfae071d", "artifact": { "id": "689b5d04febbe74489779c31", "version": "0.1.0.20251208032554" }, "parameters": { "ContextFilePath": "h:/Franking/in.csv" } }

To maintain context values better in the long run for multiple jobs, create a Connection in Talend Cloud. This way, all context in the job can be updated by updating the file name in the Qlik Talend Management Console Connection, as opposed to running the API multiple times. For information on how to set up a connection, see Managing connections.
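If the API call has to be repeated for several tasks, the PUT request shown above can be prepared with Python's standard library. This is a sketch under stated assumptions: the function name `build_task_update` is hypothetical, and passing a personal access token as a Bearer header is an assumption about your authentication setup. The request is only constructed here, not sent.

```python
import json
import urllib.request


def build_task_update(task_id: str, body: dict, token: str,
                      base_url: str = "https://api.eu.cloud.talend.com") -> urllib.request.Request:
    """Build the PUT request that updates a task's artifact parameters."""
    url = f"{base_url}/orchestration/executables/tasks/{task_id}"
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={
            # Assumption: the API accepts a personal access token as a Bearer token.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="PUT",
    )
```

Passing the returned object to `urllib.request.urlopen` would send it; looping over a list of task IDs with the same parameters block propagates the change to every task.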

Environment

-

Qlik Cloud: MS Access data not loaded using Data Manager and ODBC

Loading MS Access DB data via Qlik Data Gateway fails when using the Data Manager. The following error is thrown:

Failed to add data

Data could not be added to Data manager. Please verify that all data sources connected to the app are working and try adding the data again.

Loading data through the Data Load Editor works as expected.

Reviewing the ODBC log for additional details highlights the error:

System.Exception: ODBC Wrapper: Unable to execute SQLForeignKeys: [Microsoft][ODBC Driver Manager] Driver does not support this function

Resolution

This is a driver-related issue and a known limitation.

Use the Data Load Editor instead of the Data Manager.

Considerations of using MS Access with Qlik Sense:

MS Access is a legacy technology dating back to the 1990s; loading data from a file-based Access database via ODBC into a modern BI tool is considered an outdated solution. Moreover, with larger files, this approach is likely to result in significant performance degradation during data loading.

Cause

The MS Access ODBC driver does not support the SQLForeignKeys ODBC function.

Environment

- Qlik Cloud Analytics

-

Migrating To Qlik Cloud Analytics

This Techspert Talks session covers: Demonstration of the Qlik Cloud Analytics Migration tool Advantages of Qlik Cloud SaaS Readiness Chapters...

-

Qlik Talend Products: Unable to Connect to Azure SQL Database after enabling TLS...

You may be unable to connect to an Azure SQL Database after enabling TLS v1.2 on the database side. The following error is returned when executing the task in Talend Management Console:

java.sql.SQLException: Reason: Login failed due to client TLS version being less than minimal TLS version allowed by the server.

Resolution

When using the MSSQL components in Talend Studio, you must explicitly choose one of the two supported drivers: the open source jTDS driver or the official Microsoft JDBC driver.

We recommend using the official MSSQL driver to ensure greater compatibility. The jTDS driver is not recommended and has “deprecated” status, but it remains available for users who want to use it and for whom it remains compatible with their databases.

There are two solutions for this issue:

Solution 1

The jTDS driver prior to 1.2 does not support TLS v1.2. Use the official JDBC driver from Microsoft instead to ensure greater compatibility.

- https://learn.microsoft.com/en-us/sql/connect/jdbc/download-microsoft-jdbc-driver-for-sql-server

- ways-to-install-external-modules-into-Talend-Studio

Solution 2

Add ssl=require to the end of the JDBC connection URL (for example: jdbc:jtds:sqlserver://my-example-instance.c656df8582985.database.windows.net:1433;ssl=require), or to the "Additional JDBC parameters" field of the MSSQL components. Then re-publish the job and run it on Talend Management Console.
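When many connection URLs need the same change, the edit described in Solution 2 can be applied programmatically. The helper below is a small illustrative sketch (the name `with_ssl_require` is hypothetical); it assumes the jTDS URL style shown above, where properties follow the host and port as semicolon-separated key=value pairs.

```python
def with_ssl_require(jdbc_url: str) -> str:
    """Append ssl=require to a jTDS JDBC URL unless an ssl property is already set."""
    # Everything after the first ";" is a list of key=value properties.
    properties = jdbc_url.split(";")[1:]
    if any(prop.strip().lower().startswith("ssl=") for prop in properties):
        return jdbc_url
    return jdbc_url.rstrip(";") + ";ssl=require"
```

URLs that already carry an ssl setting are returned unchanged, so the helper can be run repeatedly without duplicating the parameter.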

Cause

The open source jTDS driver, specifically earlier versions like 1.2, does not natively support TLS 1.2. This can lead to connection failures when connecting to SQL Server instances that enforce TLS 1.2, such as Azure SQL Managed Instances or on-premises servers configured to require TLS 1.2 for security reasons.

Related Content

Environment

-

Qlik Insight Advisor Performance Slow

Qlik Insight Advisor performs slowly. No errors are logged.

Resolution

To optimize performance,

- Review what fields you have available for analysis: Enabling a custom logical model

- Review the business logic to improve performance using the following guide: What is Insight Advisor and business logic?

For additional performance or optimization questions, head over to the Qlik App Development forum, where your knowledgeable Qlik peers and our active Support Agents can better assist you.

Environment

- Qlik Insight Advisor

-

QlikView: Unable to Lease License with Custom User

Leasing a license to the QlikView Desktop client with a custom user fails.

Resolution

To set up QlikView to lease a license to a custom user, allow license leasing for DMS mode:

- On the Windows Host running QlikView Desktop, browse to C:\Users\USERNAME\AppData\Roaming\QlikTech\QlikView

- Open the settings.ini in a text editor of your choice (as administrator)

- Add the following parameter and value:

SupportLeaseLicenseForDMSMode=1

See How to change the settings.ini in QlikView Desktop for details.

- Open the QlikView AccessPoint using QlikView Desktop and lease a license

See How to lease a license in QlikView with DMS mode enabled for reference.
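If the setting has to be rolled out to several desktops, the settings.ini edit can be scripted. The sketch below works on the file's text only and is not a Qlik utility: the function name `enable_dms_lease` is hypothetical, and the section header it inserts under ("[Settings 7]") is an assumption that should be checked against your own settings.ini before use.

```python
def enable_dms_lease(ini_text: str, section: str = "[Settings 7]") -> str:
    """Return settings.ini text with SupportLeaseLicenseForDMSMode=1 set."""
    key = "SupportLeaseLicenseForDMSMode"
    lines = ini_text.splitlines()

    # If the parameter already exists, overwrite its value in place.
    for index, line in enumerate(lines):
        if line.strip().lower().startswith(key.lower() + "="):
            lines[index] = f"{key}=1"
            return "\n".join(lines) + "\n"

    # Otherwise add it directly below the section header (assumed name).
    for index, line in enumerate(lines):
        if line.strip() == section:
            lines.insert(index + 1, f"{key}=1")
            return "\n".join(lines) + "\n"

    raise ValueError(f"section {section} not found in settings.ini")
```

Reading and writing the file itself is left out on purpose, since the encoding of settings.ini should be preserved and a backup copy taken first.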

If adding SupportLeaseLicenseForDMSMode=1 does not work:

- On the QlikView server, browse to /ProgramData/QlikTech/

- Rename the LicenseService folder to, for example, LicenseService_old

- Open the QlikView Management Console

- Navigate to System > License > Choose your QlikView Server's license

- Click Apply License

- Open the QlikView AccessPoint using QlikView Desktop and lease a license

See How to lease a license in QlikView with DMS mode enabled for reference.

Internal Investigation ID(s)

QV-24037

Environment

- QlikView

-

Qlik Sense Service Account requirements and how to change the account

This article will outline how to successfully change the service account running Qlik Sense.

Contents:

- Account Requirements: What the account needs access to.

- Prep work

- Changing Qlik Sense dependencies

- Change the service account

- External Dependencies

- Video Demonstration

- Related Content

Account Requirements: What the account needs access to.

- Certificates

  - Access to the certificate(s) for the site

- Files and file shares

  - Access to the installation path for Qlik Sense

  - Access to %ProgramData%

  - Access to C:\Program Files\Qlik

  - Access to the Service Cluster share

- Access to external systems as data sources, e.g.

  - Databases

  - UNC shares to QVDs, CSVs, etc

Note: Many of the file level permissions would ordinarily be inherited from membership to the Local Administrators group. For information on non-Administrative accounts running Qlik Sense Services see Changing the user account type to run the Qlik Sense services on an existing site.

Prep work

Record the Share Path. Navigate in the Qlik Management Console (QMC) to Service Cluster and record the Root Folder.

Changing Qlik Sense dependencies

- Stop all Qlik Sense services

- Ensure permissions on the Program Files path (this should be provided by Local Administrator rights):

  - Navigate to the installation path (default: C:\Program Files\Qlik)

  - Select the Sense folder > Right Click > Properties > Security > Edit > Add

  - Lookup the new service account

  - Ensure that the account has Full control over this folder

- Ensure permissions on the %ProgramData% path (this should be provided by Local Administrator rights):

  - Navigate to the installation path (default: C:\ProgramData\Qlik)

  - Select the Sense folder > Right Click > Properties > Security > Edit > Add

  - Lookup the new service account

  - Ensure that the account has Full control over this folder

- Ensure access to the certificates used by Qlik Sense

  - Start > MMC > File > Add/Remove Snap-In > Certificates > Computer Account > Finish

  - Go into Certificates (Local Computer) > Personal > Certificates

  - For the Qlik CA server certificate (under Certificates (Local Computer) > Personal > Certificates)

    - Right Click on the Server Certificate > All Tasks > Manage Private Keys > Ensure that the new service account has control

  - If using a third party certificate, do the same

- Ensure access to the Service Cluster path used by Qlik Sense

  - Start > Computer Management > Shared Folders > Shares > Select the Share path

  - Right click on the Share Path > Properties > Share Permissions > Add the new service account to have full control

  - Open Windows File Explorer and navigate to the folder (e.g. C:\Share) > Right click on the folder > Security > Edit > Add the new service account to have full control

- Ensure membership in the Local Groups that Qlik Sense requires:

  - Start > Computer Management

  - Navigate to Local Users and Groups > Local Groups

  - Add the new service account as a member of:

    - Administrators (if using this configuration option)

    - Performance Monitor Users

    - Qlik Sense Service Users

Change the service account

- Swap the account for all Qlik Services except the Qlik Sense Repository Database Service.

  - Open the Windows Services Console

  - Locate the services

  - One by one, open the Properties of each service and change the account over

- Start all Qlik Sense Services

- Access the QMC to validate functionality, preferably as a previously configured RootAdmin

- Access the Data Connections section of the QMC

  - Toggle the User ID field and change the data connections used by the License and Operations Monitor apps to use the new user ID and password.

- Add the RootAdmin role to the new service account*

  - QMC > Users

  - Filter on the new UserID > Edit

  - Add RootAdmin role

*If this account does not exist yet in Qlik Sense, first connect to the Hub/QMC with the new account so that it appears in QMC > Users.

- Execute the License Monitor reload task

- Inspect the configured User Directory Connectors and change the User ID and password combination if previously configured.
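On sites with many nodes, the account swap in the Windows Services console can be repetitive. As an alternative to clicking through each service, the commands for the built-in Windows `sc.exe config` tool can be generated from a list of service names. This is only a sketch: the function name `build_sc_commands` is hypothetical, and the service names must be taken from your own Services console, since none are listed in this article.

```python
from typing import Iterable, List


def build_sc_commands(service_names: Iterable[str], account: str, password: str,
                      excluded: Iterable[str]) -> List[List[str]]:
    """Build one `sc.exe config` command per service, skipping excluded ones.

    `excluded` should contain the service name of the Qlik Sense Repository
    Database Service, which keeps its existing account.
    """
    excluded_names = {name.lower() for name in excluded}
    commands = []
    for name in service_names:
        if name.lower() in excluded_names:
            continue
        # sc.exe expects "obj=" and "password=" followed by their values.
        commands.append(["sc.exe", "config", name, "obj=", account, "password=", password])
    return commands
```

Each returned command can be passed to `subprocess.run(command, check=True)` from an elevated prompt while the services are stopped; the remaining steps above (starting services, data connections, RootAdmin role) still apply.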

External Dependencies

- Go into the QMC > Data Connections section and inspect all Folder data connections to determine all network shares that the service account needs access to. Either change them yourself or alert the necessary teams to provide both Share and NT level access to these shares.

- Inspect all Data Connections and ensure that none use the old Service account and password. Follow up with necessary teams to provide access to data sources that used the old credentials.

Video Demonstration

Related Content

How to change the share path in Qlik Sense (Service Cluster)

-

Does Microsoft's End of Life for Microsoft SSIS CDC Components Impact Replicate?

Microsoft will deprecate Change Data Capture (CDC) components by Attunity. See SQL Server Integration Services (SSIS) Change Data Capture Attunity feature deprecations | microsoft.com for details.

Will this affect Qlik Replicate?

Resolution

This announcement does not affect Qlik Replicate. It is only relevant to the product "Change Data Capture (CDC) components by Attunity".

Microsoft distributes and provides primary support for this product. Qlik Replicate's functionality will remain the same.

Environment

- Qlik Replicate